AMD Radeon HD 7970 GHz Edition Review: Give Me Back That Crown!

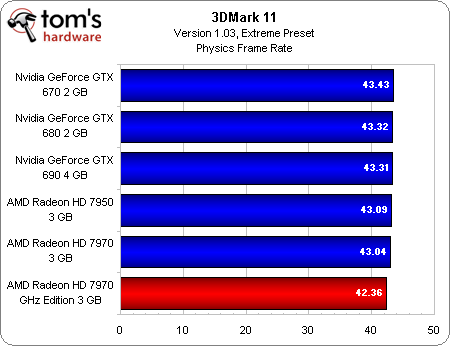

Benchmark Results: 3DMark 11

We don’t make any recommendations based on synthetic results; if you can’t play it, you wouldn’t buy a graphics card for it.

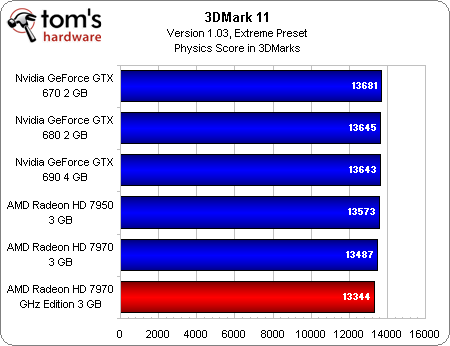

In theory, though, 3DMark should allow us to compare AMD’s technology to Nvidia’s without the influence of developer bias resulting from the help that both companies provide in certain titles. If Futuremark is doing its job, the variation you see from one game to another should be largely mitigated here.

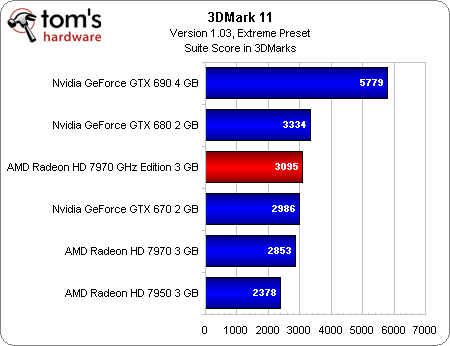

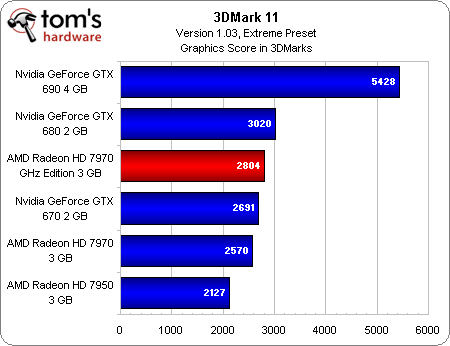

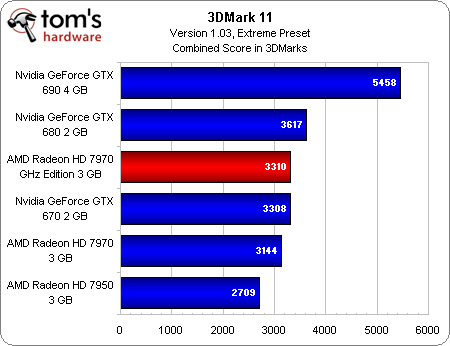

Whether or not that’s actually the case, AMD’s Radeon HD 7970 GHz Edition leapfrogs the GeForce GTX 670 and settles in just behind the GeForce GTX 680.

I don’t want to spoil the rest of the results, but you’re going to see that 3DMark doesn’t reflect the majority of our real-world benchmarks. In fact, there’s a trend that I’ll zero in on as we flip through three different resolutions: the 7970 does increasingly well as you ask it to render more pixels and turn up quality settings. Considering that our 3DMark 11 benchmark employs the Extreme preset, I really would have expected it to place AMD’s latest ahead of Nvidia’s GeForce GTX 680.




Darkerson My only complaint with the "new" card is the price. Otherwise it looks like a nice card. Better than the original version, at any rate, not that the original was a bad card to begin with.

wasabiman321 Great I just ordered a gtx 670 ftw... Grrr I hope performance gets better for nvidia drivers too :D

mayankleoboy1 nice show AMD !

with Winzip that does not use GPU, VCE that slows down video encoding and a card that gives lower min FPS..... EPIC FAIL.

or before releasing your products, try to ensure S/W compatibility.

vmem, quoting jrharbort: "To me, increasing the memory speed was a pointless move. Nvidia realized that all of the bandwidth provided by GDDR5 and a 384bit bus is almost never utilized. The drop back to a 256bit bus on their GTX 680 allowed them to cut cost and power usage without causing a drop in performance. High end AMD cards see the most improvement from an increased core clock. Memory... Not so much. Then again, Nvidia pretty much cheated on this generation as well. Cutting out nearly 80% of the GPGPU logic, something Nvidia had been trying to market for YEARS, allowed them to even further drop production costs and power usage. AMD now has the lead in this market, but at the cost of higher power consumption and production cost. This quick fix by AMD will work for now, but they obviously need to rethink their future designs a bit."

the issue is them rethinking their future designs scares me... Nvidia has started a HORRIBLE trend in the business that I hope to dear god AMD does not follow suit. True, Nvidia is able to produce more gaming performance for less, but this is pushing anyone who wants GPU compute to get an overpriced professional card. Now, before you say "well if you're making a living out of it, fork out the cash and go Quadro", let me remind you that a lot of innovators in various fields actually do use GPU compute to ultimately make progress (especially in academic sciences) to ultimately bring us better tech AND new directions in tech development... and I for one know a lot of government funded labs that can't afford to buy a stack of Quadro cards

DataGrave, quoting vmem: "Nvidia has started a HORRIBLE trend in the business that I hope to dear god AMD does not follow suit."

100% acknowledge

And for the gamers: take a look at the new UT4 engine! Without excellent GPGPU performance this will be a disaster for each graphics card. See you, Nvidia.

cangelini, quoting mayankleoboy1: "Thanks for putting my name in teh review now if only you could bold it ;-)"

Excellent tip. Told you I'd look into it!