SanDisk Extreme II SSD Review: Striking At The Heavy-Hitters

SanDisk is looking for a rise to prominence in the SSD segment with a new Marvell 88SS9187-based drive. The Extreme II packs 19 nm Toggle-mode NAND (from SanDisk, naturally), specialized firmware, and intriguing performance potential. How does it compare?

Results: Random Performance

Iometer is still our synthetic metric of choice for testing 4 KB random performance. Technically, "random" describes a subsequent access that lands more than one sector away from the previous one. On a mechanical hard disk, this can lead to significant latencies that hammer performance. Spinning media simply handles sequential accesses much better than random ones, since the heads don't have to be physically repositioned. With SSDs, the random/sequential distinction is much less relevant. The controller can place data wherever it wants, so when the operating system sees one piece of information sitting next to another, that adjacency is mostly an illusion.
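To make that definition concrete, here is a minimal Python sketch that labels each request in a trace as sequential or random. It assumes requests are given as hypothetical (starting LBA, length in sectors) pairs and that "sequential" means a request begins at the sector immediately after the previous one ended; neither the function nor the trace format comes from Iometer.

```python
def classify_accesses(requests):
    """Label each (start_lba, sectors) request as 'sequential' or 'random'.

    A request counts as sequential when it begins at the sector right after
    the previous request ended; anything else is treated as random.
    The first request has no predecessor, so it is labeled 'random'.
    """
    labels = []
    next_expected = None  # first sector following the previous request
    for start_lba, sectors in requests:
        if next_expected is not None and start_lba == next_expected:
            labels.append("sequential")
        else:
            labels.append("random")
        next_expected = start_lba + sectors
    return labels


# Hypothetical trace: 4 KB requests on a 512-byte-sector device span 8 sectors each.
trace = [(0, 8), (8, 8), (16, 8), (4096, 8), (4104, 8), (1024, 8)]
print(classify_accesses(trace))
```

On a hard disk, every "random" label above implies a head seek; on an SSD, the controller's mapping table makes the label far less meaningful.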

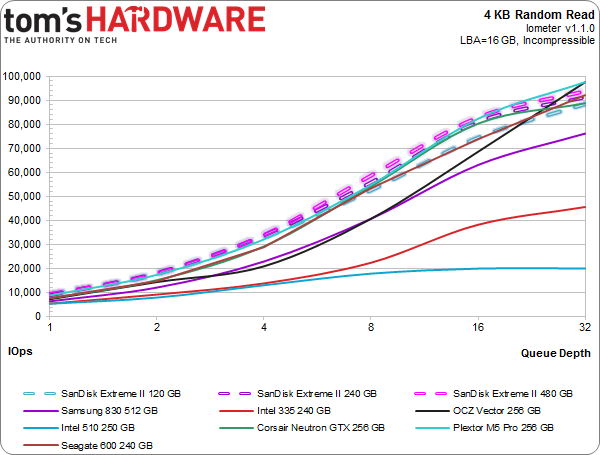

4 KB Random Read

Plextor's M5 Pro and the SanDisk drives offer similar performance. Throughout the capacity range, the Extreme IIs are competitive. The 120 GB model isn't as strong, but it's almost exactly as fast as the 240 GB Seagate 600.

The 240 GB and 480 GB Extreme IIs don't quite hit 100,000 IOPS, but there's no shame in 94,000 and 91,000 IOPS, either.
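For a sense of scale, random IOPS convert to throughput by multiplying by the transfer size. The short Python check below assumes 4 KB means 4,096 bytes and reports decimal megabytes (10^6 bytes); the helper name is illustrative.

```python
TRANSFER_BYTES = 4096  # one 4 KB random transfer


def iops_to_mb_per_s(iops, transfer_bytes=TRANSFER_BYTES):
    """Convert an IOPS figure to throughput in decimal MB/s."""
    return iops * transfer_bytes / 1_000_000


for label, iops in [("240 GB Extreme II", 94_000), ("480 GB Extreme II", 91_000)]:
    print(f"{label}: {iops:,} IOPS = {iops_to_mb_per_s(iops):.0f} MB/s")
```

That works out to roughly 385 MB/s and 373 MB/s of 4 KB random reads, respectively.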

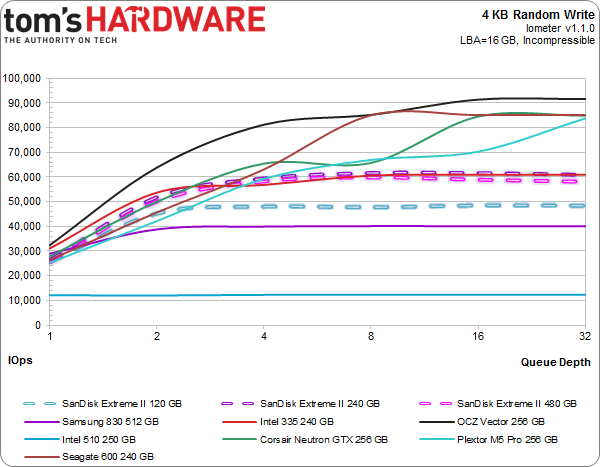

4 KB Random Write

And then things seem to go pear-shaped. A glance at the above chart makes it clear that SanDisk's drives aren't living up to their specifications. Shouldn't they be hitting 80,000 IOPS or so?

The explanation is relatively simple. We test with random data over a 16 GB LBA space. Industry-wide, most consumer-oriented SSD tests are limited to 8 GB. Now, this doesn't matter most of the time. Hard drives are especially sensitive to active LBA ranges, since spinning platters and floating heads need more time to move when the data you request is physically farther away. Solid-state storage obviously isn't subject to the same limitation, though some SSDs are more sensitive to changes in LBA range than others. The difference just isn't usually this profound.
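Confining a random-write test to a "16 GB LBA space" simply means drawing aligned 4 KB offsets from the first 16 GB of the drive instead of the whole device. The Python sketch below illustrates the idea under stated assumptions: 1 GB is treated as 2^30 bytes, and the helper `random_offsets` and its use of Python's `random` module are hypothetical, not how Iometer works internally.

```python
import random

GIB = 1024 ** 3  # assumption: "GB" here means 2**30 bytes
BLOCK = 4096     # 4 KB transfer size


def random_offsets(span_bytes, count, block=BLOCK, seed=None):
    """Return `count` block-aligned byte offsets drawn uniformly from [0, span_bytes)."""
    rng = random.Random(seed)
    blocks_in_span = span_bytes // block
    return [rng.randrange(blocks_in_span) * block for _ in range(count)]


# Doubling the span doubles the number of distinct 4 KB targets the drive must track.
for span_gib in (8, 16):
    blocks = span_gib * GIB // BLOCK
    print(f"{span_gib} GB span: {blocks:,} distinct 4 KB targets")

print(random_offsets(16 * GIB, 5, seed=1))
```

Moving from 8 GB to 16 GB doubles the pool of possible write targets, from about 2.1 million to about 4.2 million 4 KB blocks.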


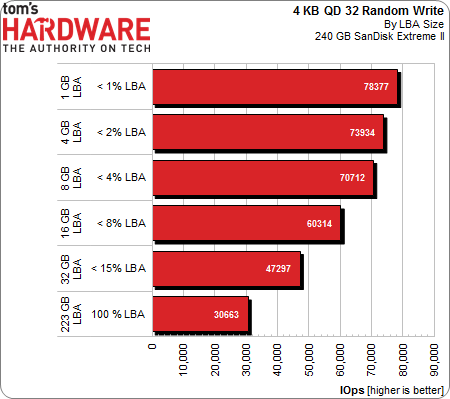

Using the 240 GB Extreme II, we can demonstrate this idiosyncrasy. Starting with 1 GB of sectors and graduating to the entire LBA range, the drop in performance is substantial by the time we get to 16 GB. There are technical reasons why this might happen to a lesser degree with other SSDs, but it looks like the implementation of nCache can result in slower random writes at high queue depths over a large number of LBAs. It's possible that a tradeoff exists between writes that can be cached and writes that exceed the cache's capacity, hurting performance when the cache is full and improving speed when nCache can effectively handle smaller random writes.
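One way to lay out a sweep like this is to double the tested span from 1 GB until it reaches the drive's capacity, ending with a pass over the full LBA range. The doubling schedule and the `span_schedule` helper are hypothetical; the article doesn't say which intermediate spans were tested.

```python
def span_schedule(capacity_gb, start_gb=1):
    """Return test spans in GB, doubling from start_gb and ending at full capacity."""
    spans = []
    span = start_gb
    while span < capacity_gb:
        spans.append(span)
        span *= 2
    spans.append(capacity_gb)  # final pass covers the entire LBA range
    return spans


print(span_schedule(240))
```

Each span in the list would then be fed to a random-write run, with the resulting IOPS plotted against span size.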

Is this a problem? In a word, no.

Typically, random workloads bombarding the entire drive are considered enterprise-oriented. Consumer usage just doesn't match that profile. Random writes are more typically limited to smaller areas, and the amount of writing is exceptionally light. The fact of the matter is that SanDisk's Extreme II was designed for desktop workloads. Wringing the last few drops of performance from an interface-limited SSD means taking steps to improve one area at the expense of others.

The trade-off seems fair. The Extreme II is less suited to some enterprise applications, but it's better as a boot and desktop application drive. We can live with that.

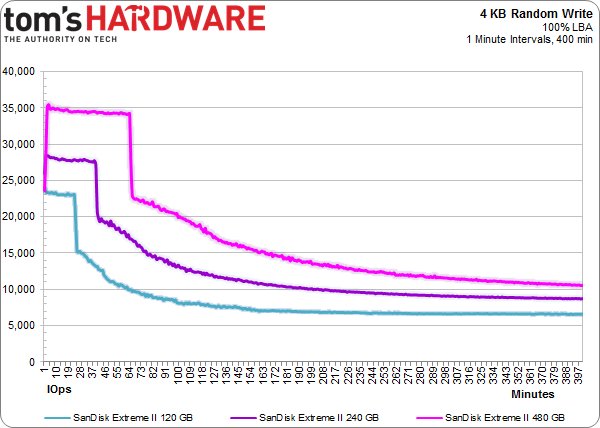

Besides, the Extreme IIs aren't even as bad off as they might appear. Consider the above 4 KB write saturation test at a queue depth of 32. Sure, the drives start well off their highs. But after the SSDs are filled and garbage collection is in full swing, SanDisk's solutions aren't any worse than competing models. In some cases, they're even better. Performance levels off and ranges from 7,000 to 10,000 IOPS, depending on capacity. If nothing else, that's competitive. Regardless, there's just no reason you'd ever write like this on a gaming rig.
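Deciding where performance "levels off" in a saturation log can be automated. The Python sketch below uses one simple, hypothetical criterion: the first window of samples in which every value stays within 10% of that window's mean. The window size, tolerance, and sample data are all assumptions for illustration, not the method used to produce the chart above.

```python
from statistics import mean


def steady_state_start(samples, window=5, tolerance=0.10):
    """Return the index where IOPS first settles, or None if it never does.

    A window is treated as steady when every sample lies within
    `tolerance` (as a fraction) of that window's mean.
    """
    for i in range(len(samples) - window + 1):
        chunk = samples[i:i + window]
        avg = mean(chunk)
        if all(abs(s - avg) <= tolerance * avg for s in chunk):
            return i
    return None


# Synthetic IOPS log invented for illustration; not measured data.
iops_log = [80_000, 62_000, 41_000, 20_000, 11_000,
            9_200, 8_900, 9_100, 9_000, 8_800, 9_050]
start = steady_state_start(iops_log)
print(start, round(mean(iops_log[start:])))
```

A tighter tolerance or longer window makes the detector more conservative about declaring steady state.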


Someone Somewhere: Where's the 840/840 Pro?

Also, you appear to have put one of the labels back on the wrong way round.

boulbox: I have always been a fan of SanDisk SSDs. Can't wait to try this out in someone else's build, as they usually price their products within reach of most budgets.

slomo4sho: I am also curious about the selection of comparison models. Having the original Extreme (not the II) in the charts to compare the two generations would have been a welcome addition, along with the 840 series.

flong777: I know a lot of people have already pointed this out, but can't Tom's Hardware afford a damn 256 GB 840 Pro? I mean, come on, it is the fastest SSD on the planet right now.

raidtarded: Seriously, what is the point of this article? Even a Yugo is the fastest car in the world if you don't test it against a Lamborghini.

teh_gerbil: Why do two of your most recent SSD reviews lack the Samsung 840/Pro? Are you being paid by the respective companies to leave them out? Other reviews I have read show the 840 Pro cr@pping all over both drives, but because you left it out, they both top your benchmarks! Very, very bad benchmarking.

http://www.tomshardware.com/reviews/vertex-450-256gb-review,3517.html

merikafyeah: Want an 840 Pro comparison and a far more in-depth review?

See here: http://www.anandtech.com/show/7006/sandisk-extreme-ii-review-480gb

It's Anand's new favorite SSD, and based on the results, I'm inclined to agree.

Its peak performance is right up there with the 840 Pro's, but what's really extreme is the drive's consistency. Its performance when the drive is close to full is unmatched.

There are no high peaks accompanied by low valleys in performance when it comes to the Extreme II. It's pretty much smooth, fast sailing all the time, which, in my book, places the Extreme II a step above the 840 Pro. The 840 Pro would have to be at least $30 cheaper than the Extreme II for me to even consider it instead.

JPNpower: Why is the 840 Pro the fastest SSD on the planet? It has its share of drawbacks, and it's slower than the OCZ Vector and the Plextor M5 Pro Xtreme in many benchmarks. Don't make broad statements that aren't always true.