
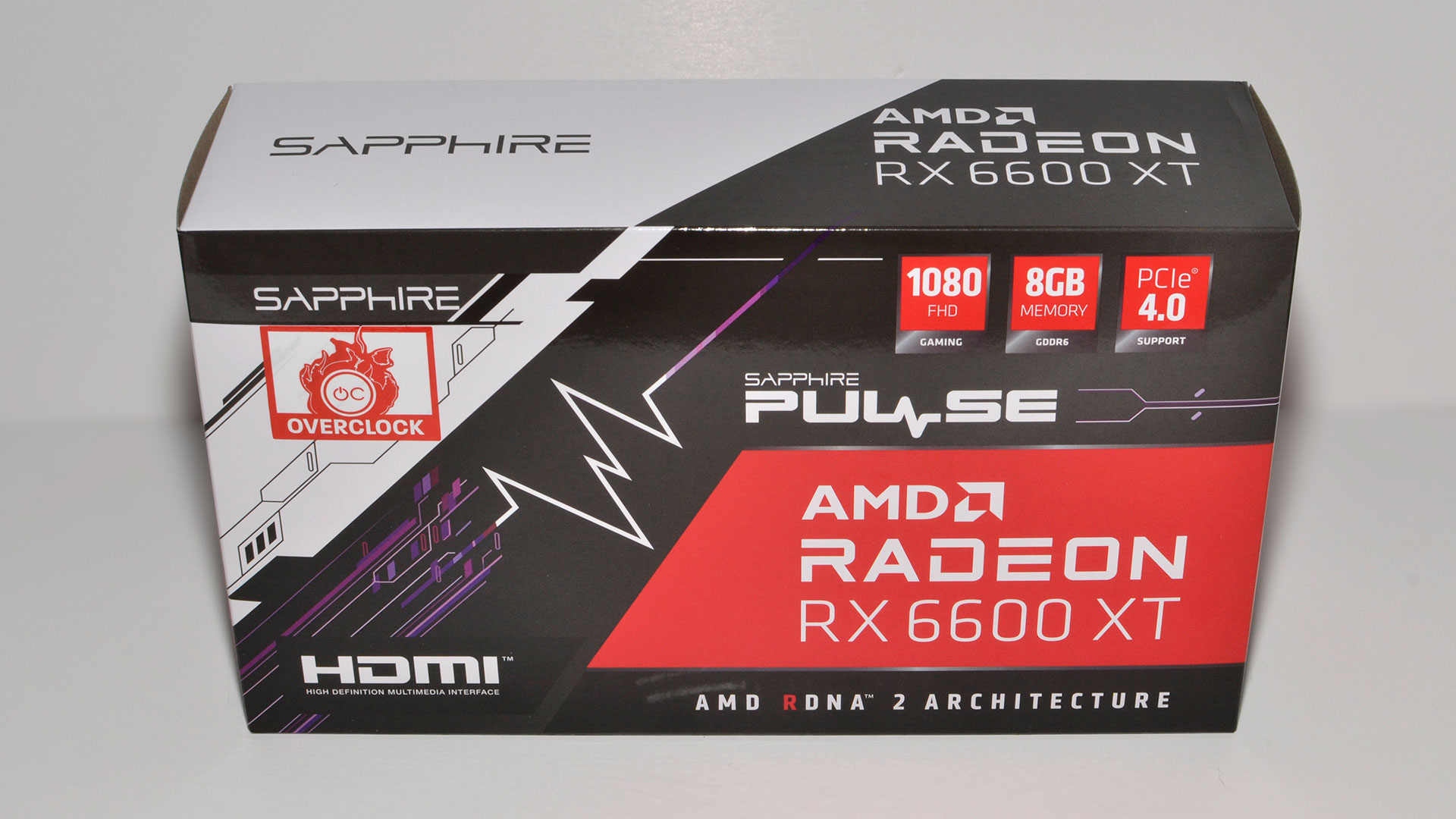

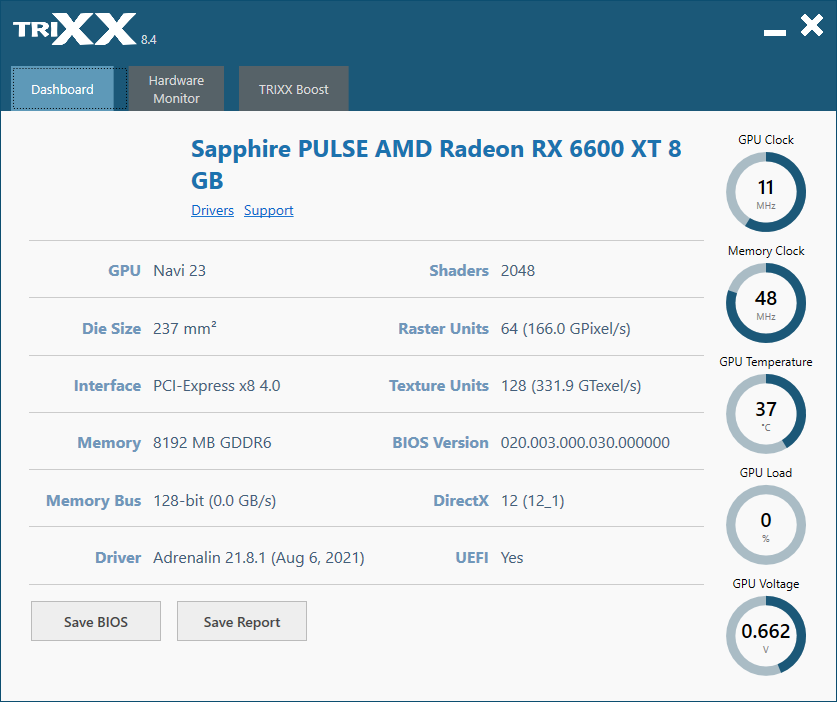

There's no question this is more of a 'budget' RX 6600 XT when you look at the Sapphire design and box. The packaging is about as no-frills as you can get: the box is relatively small to begin with, and inside the outer sleeve is a plain brown cardboard box. That's not a bad thing, and if you're interested in building a smaller PC, the dual-fan cooler and relatively compact design are just what you'll need.

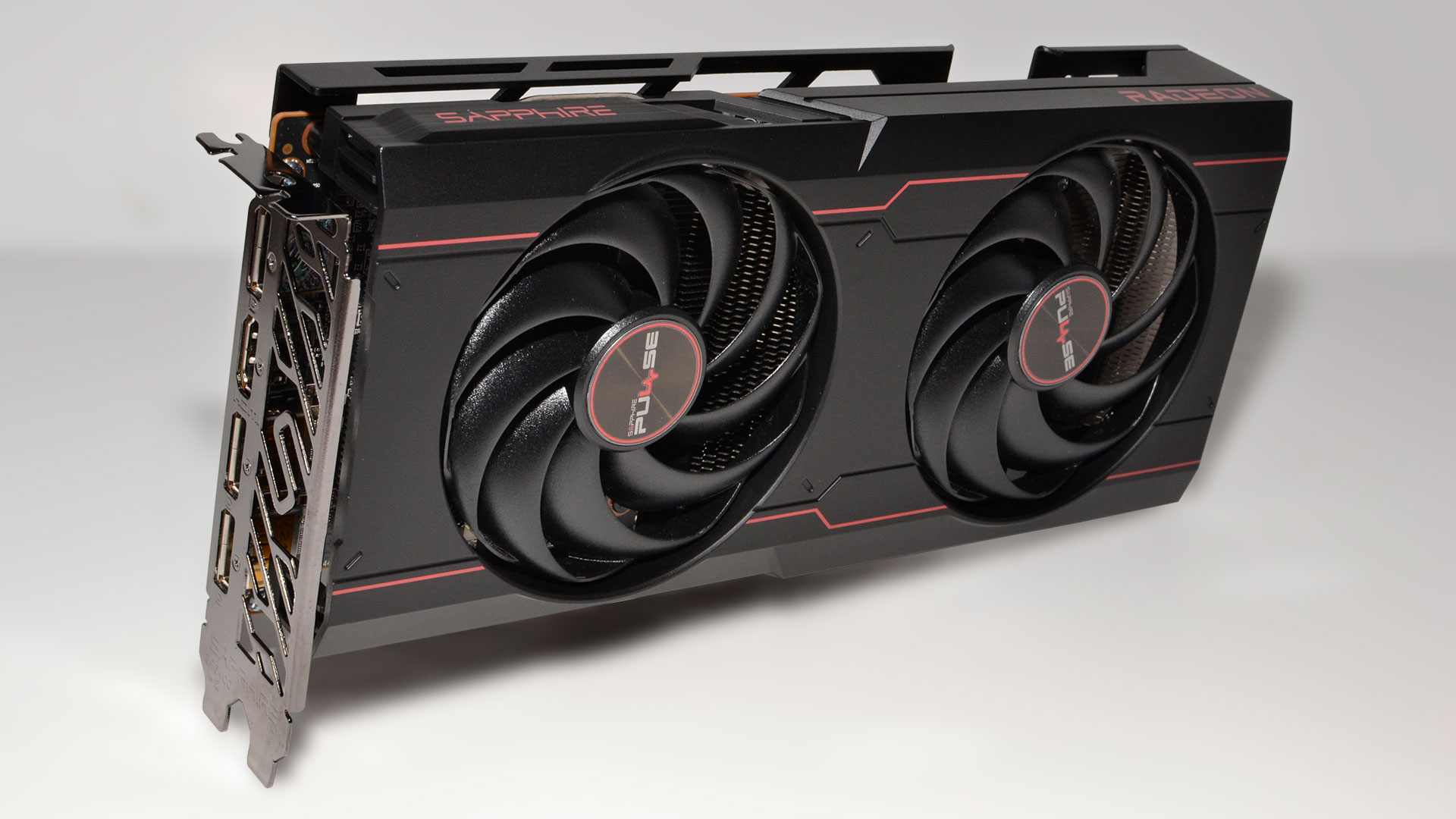

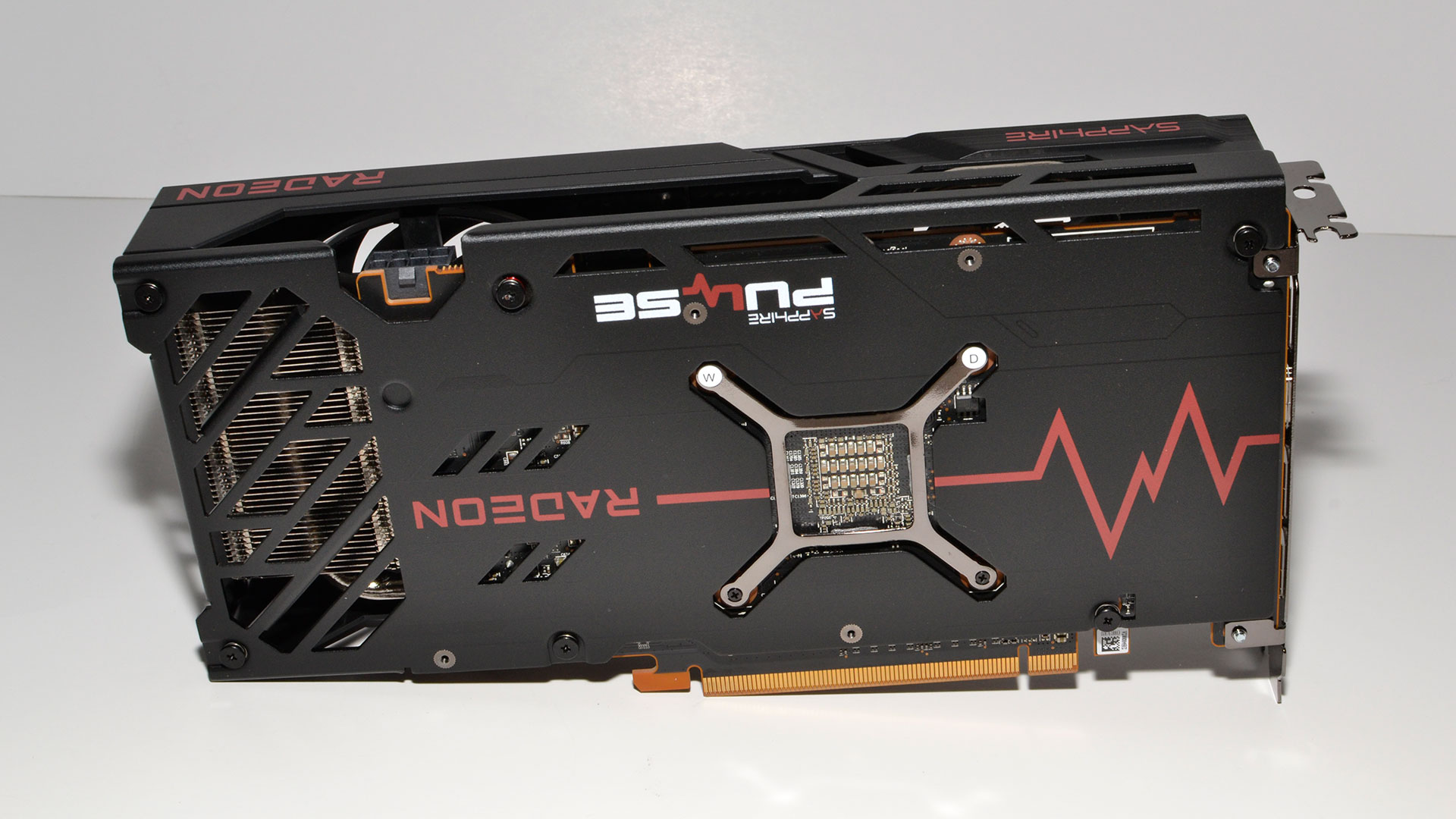

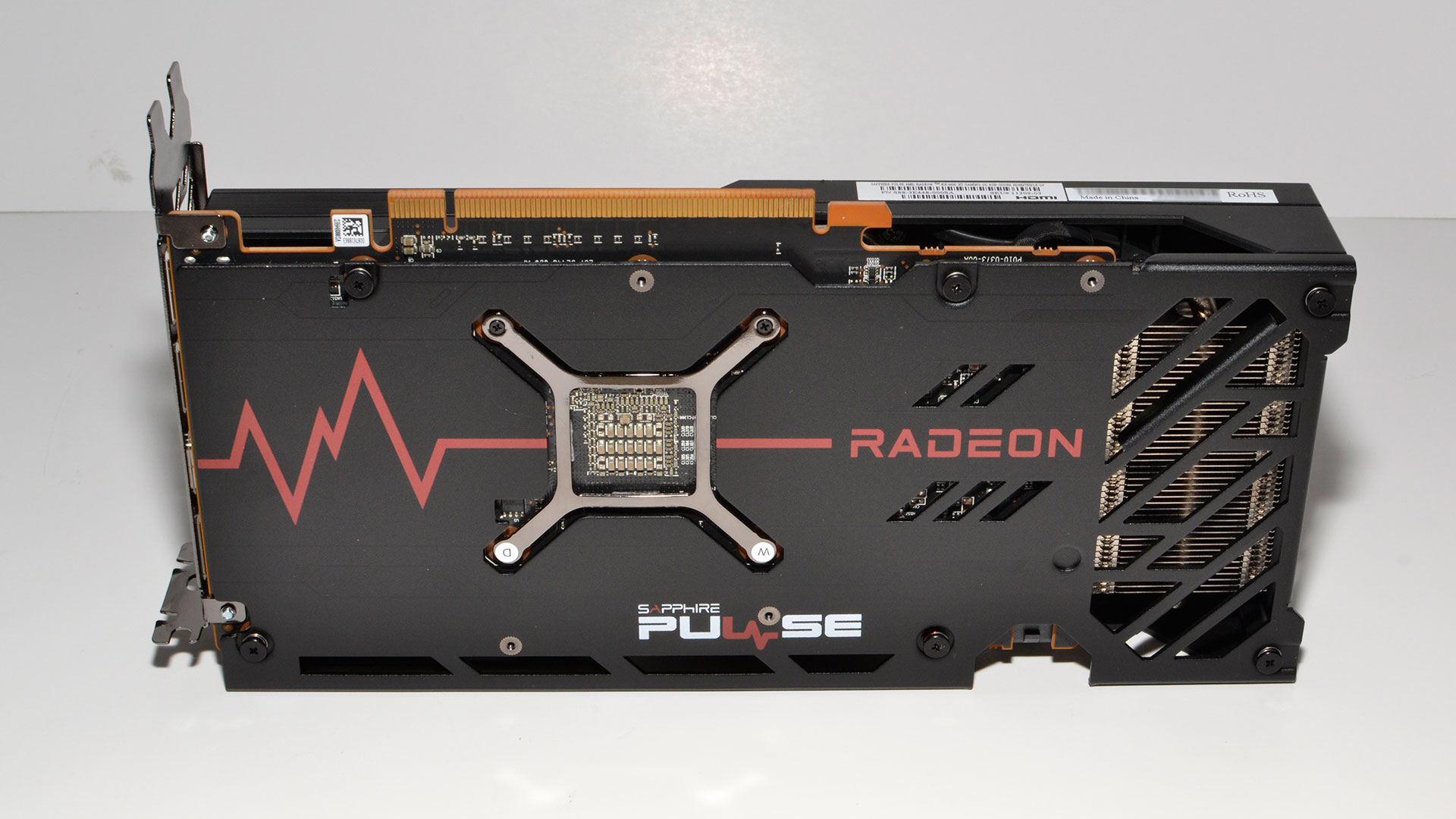

The Pulse measures 240x118x43mm by our measurements (the official size is 240x119.85x44.75mm) and weighs just 611g. By comparison, the ASRock Phantom weighs 898g and measures 306x131x47mm, while the Gigabyte Eagle measures 289x112x38mm and tips the scales at 674g. The Sapphire is just a bit thicker than a standard 2-slot card, though it can probably still be classified as one. Regardless, we recommend avoiding putting any expansion card in the adjacent slot, as that can impede airflow and lead to substantially higher temperatures.
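To put those numbers side by side, here is a small sketch that computes each card's bounding-box volume and expresses its thickness in expansion-slot widths. It assumes the standard ATX slot pitch of 0.8 inch (20.32mm) and treats thickness divided by pitch as a rough slot count, which ignores bracket and PCB offsets.

```python
# Card dimensions (length x height x thickness, mm) and weights (g),
# taken from the measurements quoted in the review.
CARDS = {
    "Sapphire Pulse": ((240, 118, 43), 611),
    "ASRock Phantom": ((306, 131, 47), 898),
    "Gigabyte Eagle": ((289, 112, 38), 674),
}

# Assumption: standard ATX expansion-slot pitch of 0.8 inch (20.32 mm).
SLOT_PITCH_MM = 20.32


def volume_litres(dims):
    """Bounding-box volume in litres (1 L = 1,000,000 mm^3)."""
    length, height, thickness = dims
    return length * height * thickness / 1_000_000


def slots_occupied(thickness_mm):
    """Rough thickness in slot widths (ignores bracket/PCB offset)."""
    return thickness_mm / SLOT_PITCH_MM


for name, (dims, grams) in CARDS.items():
    print(f"{name}: {volume_litres(dims):.2f} L, {grams} g, "
          f"{slots_occupied(dims[2]):.2f} slots")
```

By this rough measure the Pulse comes out at about 2.1 slot widths, in line with "a bit more than a standard 2-slot width." The longer Eagle ends up with nearly the same bounding-box volume because it is thinner, though the Pulse is still the lightest of the three.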

Sapphire uses two custom-sized 88mm fans, with integrated rims that help improve the static air pressure and cooling. We'll see the effects of that design choice when we get to the power and cooling tests later, but potential buyers shouldn't have anything to worry about. Like most other RX 6600 XT cards, it also includes a single 8-pin power connector, and unless you plan on radical overclocks with LN2, that should be more than sufficient.
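A quick way to sanity-check the single 8-pin connector is to add up the in-spec PCIe power limits: 75W from the x16 slot plus 150W per 8-pin connector. The sketch compares that budget with a board power of 160W, which is an assumption here (AMD's reference figure for the RX 6600 XT); Sapphire's own rating for the Pulse may differ.

```python
# PCIe power limits: an x16 slot supplies up to 75 W and
# each 8-pin PCIe power connector up to 150 W.
SLOT_WATTS = 75
EIGHT_PIN_WATTS = 150

# Assumption: AMD's reference total board power for the RX 6600 XT.
# The Pulse's own rating is not stated in the review.
BOARD_POWER_WATTS = 160


def power_budget(eight_pin_connectors: int) -> int:
    """Maximum in-spec power available to the card, in watts."""
    return SLOT_WATTS + eight_pin_connectors * EIGHT_PIN_WATTS


budget = power_budget(1)
headroom = budget - BOARD_POWER_WATTS
print(f"In-spec budget: {budget} W, headroom over {BOARD_POWER_WATTS} W: "
      f"{headroom} W ({headroom / BOARD_POWER_WATTS:.0%})")
```

Under that assumption there is roughly 40% headroom before exceeding the connector and slot ratings, which is why only extreme overclocking would make a second connector worthwhile.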

Aesthetically, there's zero lighting on the Pulse, RGB or otherwise. Some people will appreciate that, as it means you can put the card in a PC in your bedroom and not have to deal with the technicolor light show. Of course, you'd still need a case and motherboard that don't have glowing lights everywhere, but we'll leave that as an exercise for the PC builder. On the other hand, if you're a fan of RGB lighting, you'll probably want to look elsewhere.

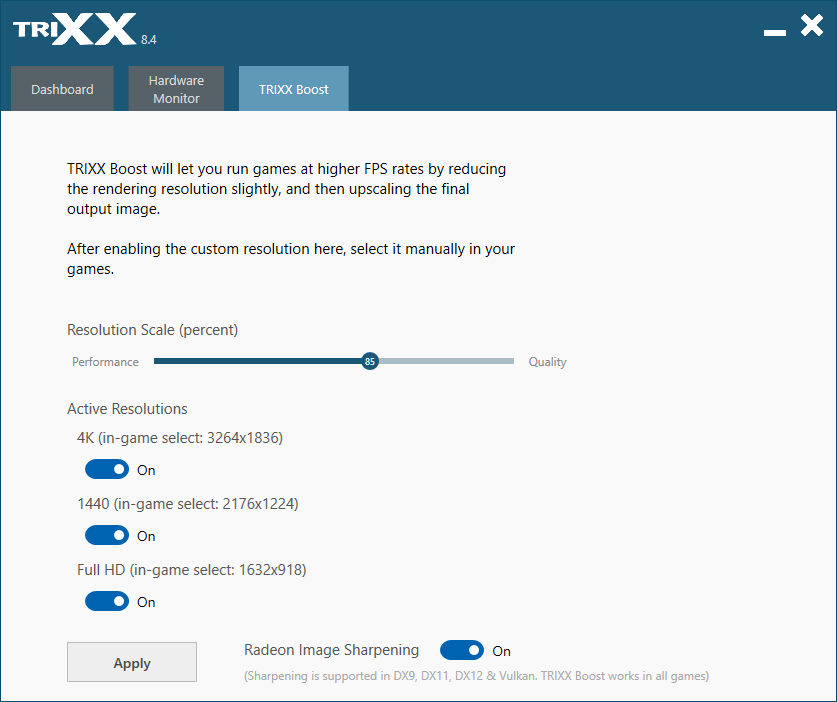

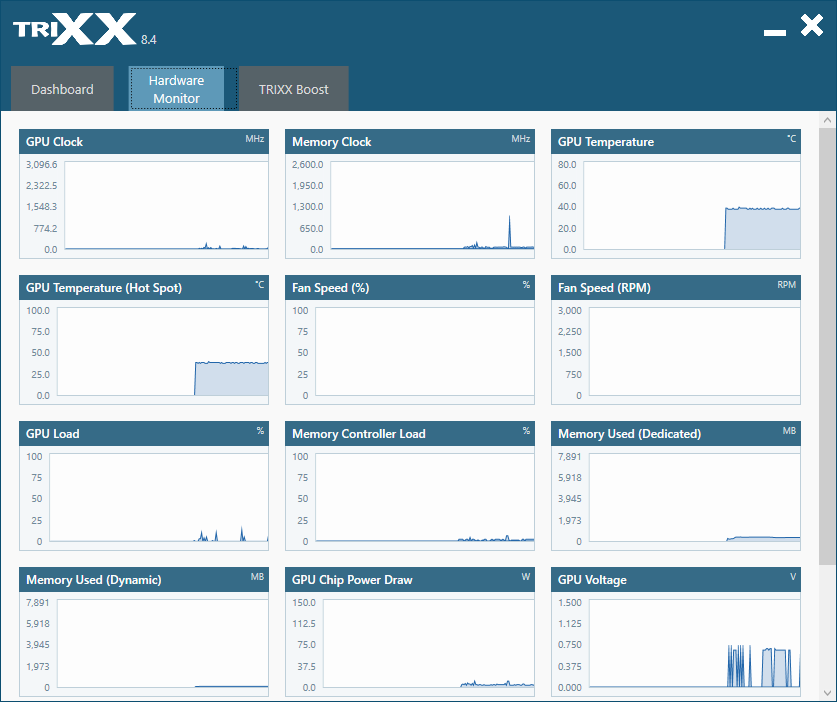

The one noteworthy extra Sapphire includes with its graphics cards is its Trixx software. Most graphics card manufacturers offer software of some form, for overclocking, hardware monitoring, or both. Sapphire's Trixx doesn't provide overclocking, but it does offer hardware monitoring if you want it. More importantly, it has Trixx Boost, which lets you select a lower render resolution and then uses AMD's Radeon Image Sharpening (RIS) as everything is scaled up to your normal resolution. The default 85% setting is a reasonable option: at 1440p, for example, it renders at 2176x1224 and upscales to 2560x1440. We included some benchmarks at 1440p with Trixx Boost enabled, and it delivers about 25% better performance than native 1440p.
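The 85% setting applies to each axis, so the pixel count drops by the square of the scale factor. This sketch (the function names are illustrative, not part of Trixx) reproduces the 2176x1224 figure and the theoretical speedup ceiling if frame time scaled perfectly with pixel count.

```python
def boost_resolution(native_w, native_h, scale):
    """Render resolution for a given per-axis scale factor."""
    return round(native_w * scale), round(native_h * scale)


def pixel_fraction(scale):
    """Scaling both axes by `scale` scales the pixel count by scale**2."""
    return scale ** 2


w, h = boost_resolution(2560, 1440, 0.85)
frac = pixel_fraction(0.85)
print(f"Render at {w}x{h}: {frac:.1%} of native pixels")

# Upper bound only: assumes every bit of GPU work scales with pixel count.
print(f"Theoretical ceiling: {1 / frac - 1:.0%} faster")
```

Rendering about 72% of the pixels would allow at most roughly 38% more performance. The measured gain of about 25% sits below that, likely because some work (CPU time, geometry, fixed per-frame passes, and the upscale itself) doesn't shrink with resolution.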

What about image quality? There's a bit of a tradeoff, mostly noticeable on high-contrast text. Subjectively, though, most people likely wouldn't notice the difference unless they were specifically told to look for it. I gave it a shot: I had someone else set the in-game resolution to either 2560x1440 or 2176x1224 while I was out of the room, then came back and tried to determine whether Trixx Boost was enabled. I managed about 75% accuracy; considering random guessing would get me 50%, that's not too bad.
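How much 75% accuracy says depends on how many rounds were played, which isn't stated here. As a hedged illustration, this sketch runs a one-sided binomial test against 50% guessing for a few hypothetical trial counts.

```python
from math import comb


def p_value_at_least(correct: int, trials: int, p: float = 0.5) -> float:
    """One-sided binomial tail: P(X >= correct) when each guess succeeds with p."""
    return sum(
        comb(trials, k) * p**k * (1 - p) ** (trials - k)
        for k in range(correct, trials + 1)
    )


# Hypothetical trial counts: the number of rounds actually played is unknown.
for trials in (4, 8, 20):
    correct = round(0.75 * trials)
    print(f"{correct}/{trials} correct: p = {p_value_at_least(correct, trials):.3f}")
```

With only a handful of rounds, 75% is easily explained by luck (3 of 4 happens by chance about 31% of the time); it would take something like 15 of 20 before the difference is clearly detectable.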

Sapphire certainly makes a case for Trixx Boost being more practical than end-user overclocking. If you custom-tune your GPU, adjusting clocks and increasing the fan speed, you might get a 10% increase in overall performance. However, that generally comes after an hour or so of tweaking and tuning, and it's still not guaranteed to be 100% stable. Trixx Boost, on the other hand, can easily get you 20% more performance in about 30 seconds, for a minor drop in image quality and no reduction in stability (that I noticed). It's sort of like AMD's FidelityFX Super Resolution (AMD FSR), only it was already available a year ago and works in all games. We have to wonder whether Sapphire will update Trixx Boost to use FSR instead of RIS, given both are open source and FSR reportedly offers better overall image quality.
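The general idea behind this kind of boost (render fewer pixels, stretch them to the output size, then sharpen to recover some edge contrast) can be sketched in a few lines. This is a generic illustration using bilinear interpolation and a basic unsharp mask on a grayscale image; it is not AMD's RIS (which is built on Contrast Adaptive Sharpening) and not Sapphire's actual implementation, whose scaling path isn't documented here.

```python
def bilinear_upscale(img, out_w, out_h):
    """Resize a 2D grayscale image (list of rows, values 0..1) bilinearly."""
    in_h, in_w = len(img), len(img[0])
    out = []
    for y in range(out_h):
        # Map the output pixel centre back into input coordinates.
        sy = min(max((y + 0.5) * in_h / out_h - 0.5, 0), in_h - 1)
        y0 = int(sy)
        y1 = min(y0 + 1, in_h - 1)
        fy = sy - y0
        row = []
        for x in range(out_w):
            sx = min(max((x + 0.5) * in_w / out_w - 0.5, 0), in_w - 1)
            x0 = int(sx)
            x1 = min(x0 + 1, in_w - 1)
            fx = sx - x0
            top = img[y0][x0] * (1 - fx) + img[y0][x1] * fx
            bottom = img[y1][x0] * (1 - fx) + img[y1][x1] * fx
            row.append(top * (1 - fy) + bottom * fy)
        out.append(row)
    return out


def sharpen(img, amount=0.5):
    """Unsharp mask: push each pixel away from its 4-neighbour average."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(h):
        row = []
        for x in range(w):
            neighbours = [
                img[max(y - 1, 0)][x], img[min(y + 1, h - 1)][x],
                img[y][max(x - 1, 0)], img[y][min(x + 1, w - 1)],
            ]
            blur = sum(neighbours) / 4
            value = img[y][x] + amount * (img[y][x] - blur)
            row.append(min(max(value, 0.0), 1.0))  # clamp to [0, 1]
        out.append(row)
    return out


low = [[0.2, 0.2, 0.8, 0.8] for _ in range(2)]  # 4x2 frame with one hard edge
upscaled = bilinear_upscale(low, 5, 2)          # stretch to 5x2
frame = sharpen(upscaled)                       # restore some edge contrast
```

The upscale softens the edge into a gradient; the sharpening pass darkens the dark side and brightens the bright side of that edge, which is also why high-contrast text is where artifacts tend to show.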


Current page: Sapphire Radeon RX 6600 XT Pulse Design and Features


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


logainofhades No bling and few extras isn't exactly a con, nor is its performance at higher resolutions, as it was marketed as a 1080p card.

-Fran- One super important point about Sapphire cards: they have upsampling built into TRIXX. It may not be FSR or DLSS, but you can upscale ANY game you want through it using the GPU. I have no idea how it does it, but you can.

Other than that, this card looks clean and tidy. Not the best-looking Sapphire in history, as that title goes to the original RX 480 Nitro+ IMO. What a gorgeous design it was. I wish they'd use it for everything and get rid of the fake-glossy plastic garbo they've been using as of late.

Regards.

JarredWaltonGPU

Wow! It's almost like you... didn't read the review. LOL

I talk quite a bit about Trixx Boost and even ran benchmarks with it enabled at 1440p, FYI.

-Fran-

I did read it; I must have omitted it from my mind :P

Apologies.

TheAlmightyProo

IIRC, that Sapphire RX 480 Nitro (did they do this on the 580 too?) is the one with all the little holes in it and otherwise straight lines, etc. I also seem to recall swappable fans, but I could be wrong...

But yeah, it was a beaut, my fave design at the time, and I'd definitely have gone for one if I hadn't decided on a 1070 (Gigabyte Xtreme Gaming) as a safer bet for holding 2560x1080 longer before needing to drop to 1080p. That said, I've always liked Sapphire cards and eventually got one this year (a Sapphire 6800 XT Nitro+ SE at 3440x1440), and I'm absolutely not disappointed in it or in my first full AMD CPU in 16 years (a 5800X). Sure, it's not so great at RT, and FSR needs to catch up and catch on, but I have maybe 2-3 games out of 50 I'd play that will make use of either, so it's no great loss until they become more refined and ubiquitous, IMO. It runs like a dream, and cool too. Assuming AMD keeps up with or overtakes the competition in the generation after next, I'd be happy to buy Sapphire again.

TheAlmightyProo

Trixx looks like a damn good app, tbh. Having a Sapphire 6800 XT Nitro+ SE, I could be using it but haven't... I dunno, maybe because it's already good enough at 3440x1440?

However, I do have a good gaming UHD 120Hz TV (Samsung Q80T) waiting for couch gaming after an upcoming house move, and it might benefit from a little boost going forward, as I'm not even thinking of upgrading for at least 3-5 years, and not until the first iterations of the 'big new things' have been refined somewhat.

So thanks for spending some time on that info and testing with it on. I might've ignored or forgotten it, but it's good to know it's there as a tried-and-tested option.

JarredWaltonGPU

FWIW, you can just create a custom resolution in the AMD or Nvidia control panel as an alternative if you don't have a Sapphire card. It's difficult to judge image quality, and in some cases I think it does make a difference. However, I'm not quite sure how Trixx Boost outputs a different resolution via RIS. If you do a screen capture, it's still at the Trixx Boost resolution, as though it's simply rendering at a lower resolution and using the display scaler to stretch the output. Potentially it happens internally at the card's output, so the 85% scaling gets bumped up to native for the DisplayPort signal, but then how does that use RIS, since that would be a hardware/firmware feature?

Bottom line: rendering fewer pixels requires less GPU effort. How you stretch those pixels to the desired output is the question. DLSS and FSR definitely scale to the desired resolution, so Windows+PrtScrn captures images at the native resolution. Trixx Boost doesn't seem to work the same way. ¯\_(ツ)_/¯

InvalidError

Performance at higher resolutions is definitely a con, since in a healthy GPU market nobody would be willing to pay anywhere near $400 for a "1080p" gaming GPU with a gimped PCIe 4.0 x8 interface and a 128-bit VRAM bus. This is the sort of penny-pinching you'd only expect to see on sub-$150 GPUs. On Nvidia's side, you don't see the PCIe interface get cut down until you get into sub-$100 SKUs like the GT 1030.

As some techtubers put it, all GPUs are turd sandwiches. The 6600 XT isn't good for the price; it's just the least-bad turd sandwich at the moment if you absolutely must buy a GPU now.
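The interface complaint is easiest to see as link bandwidth. PCIe 3.0 and 4.0 both use 128b/130b encoding at 8 and 16 GT/s per lane, so a 4.0 x8 link matches a 3.0 x16 link, but in a PCIe 3.0 slot the card falls back to 3.0 x8 and half that bandwidth. The memory figure below assumes 16 Gbps GDDR6 (AMD's reference spec for the RX 6600 XT), which the thread doesn't state.

```python
# Per-lane transfer rates (GT/s) by PCIe generation.
# PCIe 3.0 and 4.0 both use 128b/130b line encoding.
PCIE_GT_PER_S = {3: 8.0, 4: 16.0}
ENCODING = 128 / 130


def pcie_bandwidth_gbs(gen: int, lanes: int) -> float:
    """One-direction link bandwidth in GB/s after encoding overhead."""
    return PCIE_GT_PER_S[gen] * ENCODING * lanes / 8


def vram_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth: bus width x per-pin data rate / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


for gen, lanes in ((4, 8), (3, 16), (3, 8)):
    print(f"PCIe {gen}.0 x{lanes}: {pcie_bandwidth_gbs(gen, lanes):.2f} GB/s")

# Assumption: 16 Gbps GDDR6 on a 128-bit bus (AMD reference spec).
print(f"128-bit @ 16 Gbps: {vram_bandwidth_gbs(128, 16):.0f} GB/s")
```

That works out to about 15.75 GB/s for PCIe 4.0 x8 (or 3.0 x16), about 7.88 GB/s for 3.0 x8, and 256 GB/s of peak VRAM bandwidth under the stated memory-speed assumption.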

logainofhades Price aside, the card was advertised as a 1080p card, and the 6600 XT does 1080p quite well. I don't understand the gimped interface either, but AMD promised 1080p and delivered. Prices are stupid and will be for quite some time; many are saying this chip shortage won't end until 2023.

InvalidError

$400 GPUs have been doing "1080p quite well" with contemporary titles for over a decade. I personally find it insulting that AMD would brag about that in 2021.