Second-Gen SandForce: Seven 120 GB SSDs Rounded Up

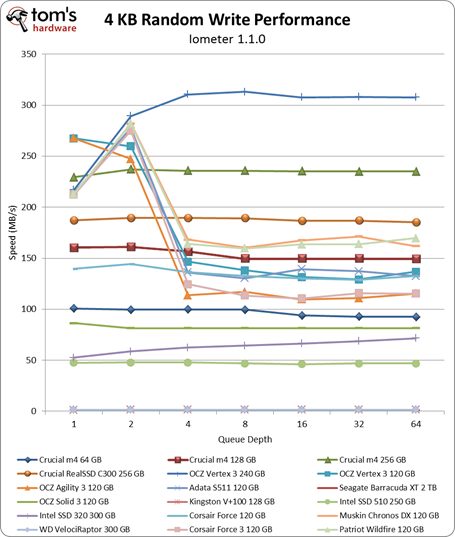

Benchmark Results: 4 KB Random Performance (Throughput)

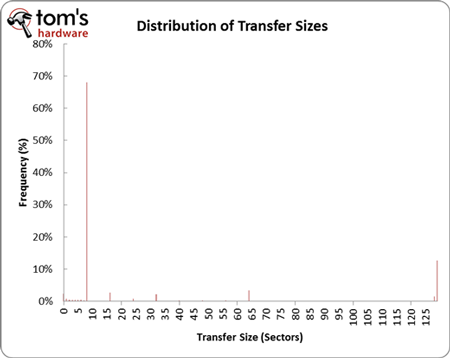

Our Storage Bench v1.0 mixes random and sequential operations. However, it's still important to isolate 4 KB random performance, because such accesses make up a large portion of what you're doing on a day-to-day basis. Right after Storage Bench v1.0, we subject the drives to Iometer to test random 4 KB performance. But why specifically 4 KB?

When you open Firefox, browse multiple Web pages, and write a few documents, you're mostly performing small random read and write operations. The chart above comes from analyzing Storage Bench v1.0, but it epitomizes what you'll see when you analyze any trace from a desktop computer. Notice that close to 70% of all of our accesses are eight sectors in size (512 bytes per sector, thus 4 KB).
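To make the trace analysis concrete, here's a minimal Python sketch of how an access-size breakdown like the one in the chart could be tallied. It assumes you already have each access's transfer length in 512-byte sectors. The `transfer_size_share` helper and the short trace list are hypothetical, made up for illustration, and are not Storage Bench v1.0 data.

```python
from collections import Counter

SECTOR_BYTES = 512  # sector size used in the article's math (8 sectors = 4 KB)


def transfer_size_share(lengths_in_sectors):
    """Return {transfer size in bytes: fraction of all accesses}."""
    counts = Counter(lengths_in_sectors)
    total = sum(counts.values())
    return {n * SECTOR_BYTES: c / total for n, c in sorted(counts.items())}


# Hypothetical trace excerpt: per-access transfer lengths in sectors.
# Illustrative values only, not measured Storage Bench v1.0 data.
trace = [8, 8, 8, 16, 8, 1, 8, 8, 128, 8]

shares = transfer_size_share(trace)
for size, frac in shares.items():
    print(f"{size:>6} bytes: {frac:.0%}")
```

A real desktop trace would contain many thousands of entries, but the tally works the same way: count accesses per size, then divide by the total.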

We're restricting Iometer to test an LBA space of 16 GB because a fresh install of a 64-bit version of Windows 7 takes up nearly that amount of space. In a way, this examines the performance that you would see from accessing various scattered file dependencies, caches, and temporary files.
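For a sense of scale, the sketch below works out what a 16 GB test region means in sectors and in 4 KB targets, and picks random 4 KB-aligned starting LBAs inside it. It assumes 512-byte sectors and treats "16 GB" as 16 GiB; `random_aligned_lba` is a hypothetical helper and is not how Iometer itself generates addresses.

```python
import random

SECTOR_BYTES = 512
BLOCK_BYTES = 4096                 # 4 KB transfer size
SPAN_BYTES = 16 * 1024**3          # assumption: "16 GB" taken as 16 GiB

span_sectors = SPAN_BYTES // SECTOR_BYTES         # sectors in the test region
sectors_per_block = BLOCK_BYTES // SECTOR_BYTES   # 8 sectors per 4 KB access
aligned_blocks = SPAN_BYTES // BLOCK_BYTES        # distinct 4 KB-aligned targets


def random_aligned_lba(rng):
    """Pick a random starting LBA for a 4 KB access, aligned to 8 sectors,
    so the whole access stays inside the first 16 GB of the drive."""
    return rng.randrange(aligned_blocks) * sectors_per_block


rng = random.Random(42)  # fixed seed so the sample is repeatable
lbas = [random_aligned_lba(rng) for _ in range(5)]
print(span_sectors, aligned_blocks, lbas)
```

Confining the addresses this way is what keeps the test focused on the region an OS install would actually occupy.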

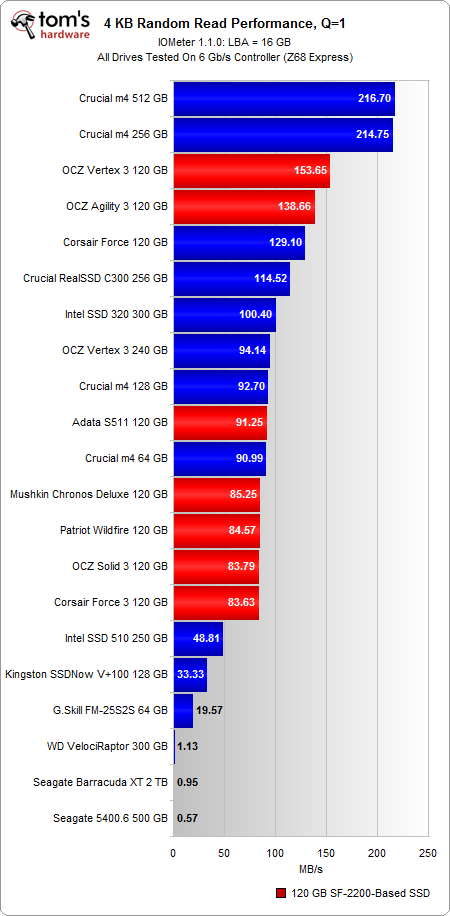

If you're a typical PC user, it's important to examine performance at a queue depth of one, because this is where the majority of your accesses are going to fall on a machine that isn't being hammered by I/O commands.

Before we get to the numbers, note that we're presenting random performance in MB/s instead of IOPS. There is a direct relationship between these two units: average transfer size × IOPS = MB/s. Most workloads tend to be a mixture of different transfer sizes, which is why the networking ninjas in IT prefer IOPS, since it reflects the number of transactions that occur per second. Because we're only testing with a single transfer size, it's more relevant to look at MB/s (it's also more intuitive for "the rest of us"). If you want to convert back to IOPS, just divide the MB/s figure by .004096 MB (remember your units), the size of a 4 KB transfer.
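The conversion is simple enough to script. This minimal sketch assumes decimal megabytes (1 MB = 1,000,000 bytes), which is what the .004096 MB divisor implies; the function names are our own, and the inputs are the queue depth one random read figures quoted on this page.

```python
TRANSFER_MB = 4096 / 1_000_000  # 4 KB = 4,096 bytes = 0.004096 MB (decimal MB)


def mbps_to_iops(mb_per_s, transfer_mb=TRANSFER_MB):
    # average transfer size * IOPS = MB/s, so IOPS = MB/s / transfer size
    return mb_per_s / transfer_mb


def iops_to_mbps(iops, transfer_mb=TRANSFER_MB):
    # The same relationship, solved for MB/s
    return iops * transfer_mb


# Queue depth one random read results quoted on this page
for name, mbps in [("120 GB Vertex 3", 153), ("120 GB Agility 3", 138)]:
    print(f"{name}: {mbps} MB/s ~= {mbps_to_iops(mbps):,.0f} IOPS")
```

The same helpers work for other block sizes by passing a different `transfer_mb`, but only when every request in the test has that one size.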

At a queue depth of one, the 512 GB and 256 GB m4s reign supreme in random reads; both push past 200 MB/s. The closest contender is OCZ's 120 GB Vertex 3, but it falls behind by 25% with a random read rate of 153 MB/s. The Agility 3 follows closely at 138 MB/s, but all of the other drives fall behind by a noticeable margin. The S511, Force 3, Solid 3, Wildfire, and Chronos Deluxe all run about 50% slower, with speeds hovering around 90 MB/s.
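Percent gaps are easy to misread, because "X% slower" and "Y% faster" use different baselines. The sketch below assumes roughly 204 MB/s for the m4s, a value back-solved from the stated 25% gap rather than a measured result (the chart only tells us both m4s push past 200 MB/s). The helper names are our own.

```python
def pct_slower(leader, other):
    """How much slower `other` is, as a fraction of the leader's score."""
    return (leader - other) / leader


def pct_faster(leader, other):
    """How much faster the leader is, as a fraction of the slower score."""
    return (leader - other) / other


# Assumption: ~204 MB/s for the m4, back-solved from the 25% gap quoted above.
m4_read, vertex3_read = 204, 153
print(f"Vertex 3 is {pct_slower(m4_read, vertex3_read):.0%} slower")
print(f"m4 is {pct_faster(m4_read, vertex3_read):.0%} faster")
```

Put differently, a drive that trails by 25% means the leader is about 33% faster, so keep an eye on which drive a percentage is measured against.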

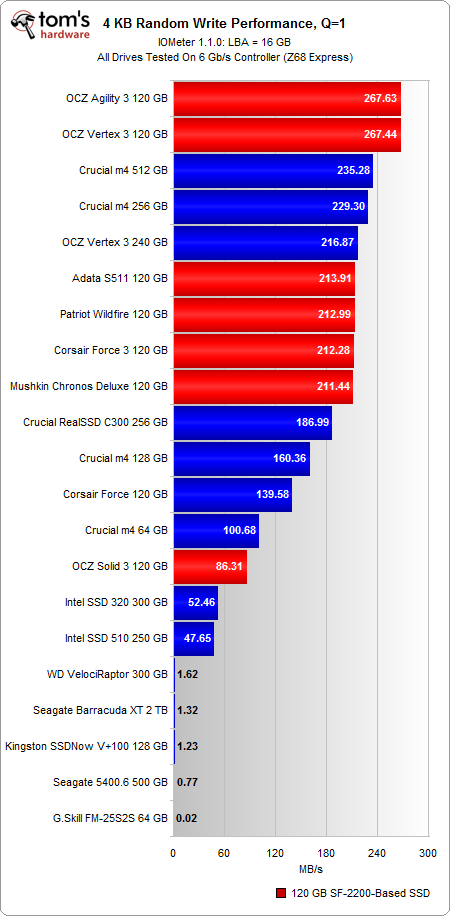

In random writes, the story changes. This time, Crucial's 256 GB and 512 GB m4s drop behind OCZ's 120 GB Vertex 3 and Agility 3, albeit by a smaller margin than in the random read test (the 120 GB Agility 3 runs only 13% faster than the 256 GB m4). The Force 3, Wildfire, Chronos Deluxe, and S511 also perform much better here, with speeds around 210 MB/s.


Notice how far back the Solid 3 slides, though. With a random write speed of 86 MB/s, the 120 GB Solid 3 is outperformed even by Crucial's 64 GB m4. The explanation comes down to firmware. The Solid 3 is a more budget-oriented version of the Agility 3. While both SSDs use the same 25 nm ONFi 1.0-based flash, the Solid 3's firmware restricts its performance more. OCZ eventually plans to use less expensive NAND configurations to lower cost (and, in turn, price) while maintaining the same lower-rated performance spec.

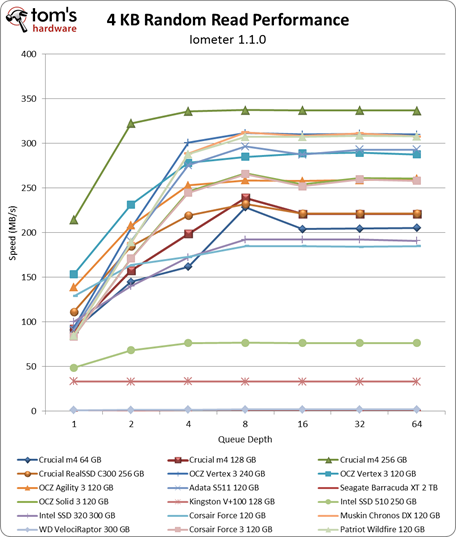

Perhaps you're also wondering why the 240 GB Vertex 3 runs slower than the 120 GB version at a queue depth of one. When you're not hammering the drive with higher queue depths, accesses are limited by the speed at which cache hits and misses occur in the metadata lookup table. Thus, a large-capacity SSD with a larger lookup table experiences lower throughput, as it must search through more metadata.

As we start looking at queue depths higher than four, we finally see the 240 GB Vertex 3 overtake its 120 GB counterpart, because performance is no longer bound by the same lookup table bottleneck. When outstanding I/Os stack up, there are enough accesses that the SSD controller can fully saturate the table with multiple queries. The 240 GB Vertex 3 maintains a clear lead in random writes, where it finishes in first place.

However, in random reads, that honor goes to the 256 GB and 512 GB m4s.
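The queue depth behavior above lines up with Little's law, which says the number of outstanding I/Os equals IOPS multiplied by average latency. At a queue depth of one, throughput is therefore just the inverse of per-command latency, which is why a slower metadata lookup shows up directly in the QD1 results. This is a back-of-the-envelope Python sketch using the 120 GB Vertex 3's 153 MB/s QD1 random read result; the helper names are hypothetical, and the model ignores everything except average latency.

```python
TRANSFER_BYTES = 4096  # 4 KB per command


def iops_from_littles_law(queue_depth, avg_latency_s):
    # Little's law: outstanding I/Os = IOPS * average latency
    return queue_depth / avg_latency_s


def implied_latency_us(mb_per_s, queue_depth=1, transfer_bytes=TRANSFER_BYTES):
    # Convert MB/s (decimal MB) to IOPS, then solve Little's law for latency
    iops = mb_per_s * 1_000_000 / transfer_bytes
    return queue_depth / iops * 1e6


# At QD1 the drive has one command in flight, so latency alone sets throughput.
lat_us = implied_latency_us(153)
print(f"{lat_us:.1f} us per 4 KB read")
print(f"{iops_from_littles_law(1, lat_us / 1e6):,.0f} IOPS at QD1")
```

At higher queue depths, the same law says IOPS keeps climbing only while latency grows more slowly than the number of commands in flight, which is the regime where the 240 GB Vertex 3 overtakes the 120 GB model.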


dauthus: The Corsair Force Series 3 drives should be instantly disqualified due to BSoDs, etc. Go look at their reviews on Newegg; it is horrifying.

garage1217: Nice review. However, you left out the Corsair Force GT 120 GB, which other sites have scored on par with the Vertex. Also, I own one, and it ROCKS.

On the Force 3, it got horrible reviews because of a production issue. Corsair issued a full recall, and the issues with that particular drive have since been cleared up, which is why it was not disqualified. Very old news.

dauthus (replying to garage1217 about the Force 3 recall):

You are wrong, sir.

gregzeng: Googling told me that SSDs are almost impossible to use with Linux (EXT4). My netbook and notebook drives are in MS NTFS-compressed partitions (not Linux NTFS-3G, because it has no compression). MS claims compression has a 'negligible' speed cost. Is that true, for roughly twice the storage space?

mayankleoboy1: Why not include the Max IOPS editions?

Anand's benchmarks showed that the 120 GB Vertex 3 Max IOPS roughly equals the 256 GB Vertex 3, for quite a bit less money.

Hellbound: This article mentions installing the OS and applications on the SSD, and the rest (movies, music) on conventional HDDs. But I'm not sure how to do that. I've Googled it, and there are many suggestions on how to do it. I would like to know the best way to go about this.

whysobluepandabear (replying to Hellbound): WTF?

Step 1.) Install SSD.

Step 2.) Install OS on SSD and everything you want to access and run quickly.

Step 3.) Install HDD.

Step 4.) Send files to E:, F:, G:, H:, I:, J:, or whatever drive letter the HDD is. Performance-oriented apps go to C:, or whatever drive letter your SSD is.

It's literally no different than if you were to plug in an external HDD via USB. You direct files and applications accordingly.

We'll set aside Z68, which lets you use a small SSD to boost your normal HDD; if your SSD is large enough, that's actually a worse route, and you should just use the SSD instead.

flong: This is a superb review because it deals with real-world performance. I commend Tom's for providing a thorough review, one of the most thorough that I have read on any computer site. Tom's is right: the 120 GB SSD is the sweet spot in performance vs. cost.

If you read similar reviews on other sites, the Patriot Wildfire, the Corsair Force 3 GT, and possibly the OCZ Vertex 3 are the top performers among 120 GB drives. The Wildfire uses 32 nm Toshiba toggle flash memory, which is the best. The Force 3 GT uses 25 nm memory but somehow manages to keep up with the Wildfire. Note this is not the Corsair Force 3 listed in this review; it is the Corsair Force 3 GT (emphasis on the GT). The GT and the Wildfire are the two fastest 120 GB drives available right now, based on real-world performance benchmarks.

The really important benchmarks to watch for are the real-world benchmarks at the end of each review. These really are the only ones that count. The other benchmarks are synthetic, and they are not very accurate. The OCZ drives win all of the synthetic benchmarks, but their real-world performance falls behind the Force GT and the Wildfire.

Another critical factor is the "fill-rate" performance of the drives, that is, how they perform as they fill. Again, the Wildfire and the Force GT rise to the top, with the Vertex 3 coming in third place.

This review lists the Mushkin as a top performer, but it is not listed in many reviews (none that I have read), so I have not included it in my comments. It is possible that this is a top performer also, but I would like to read other reviews about it to confirm.

Same thing with OCZ, to be honest. They have an error rate of 33% over at Newegg. Honestly, I won't buy a single drive from them, no matter how fast, until they've fixed the issues that have lasted for two bloody generations.

Crucial m4 for performance and Intel 320 for value are the best.

compton (replying to Hellbound):

Besides just manually managing your files on the HDD, there is another method you can use. It's more complicated to set up, but if you can google and follow directions, you'll find it may be easier.

With Windows 7, you can basically take your "My Documents" folder (the \Users\ stuff) and symbolically link the folders to the mechanical HDD. Every time an application saves to one of your document folders, files that would otherwise land on your system drive (in this case an SSD) will just end up on the HDD. From a file management perspective, you may find it easier.

I do it manually: I just install Windows, Office Pro 2010, Pantone, Google Chrome, iTunes, etc. to the SSD. All of my music, movies, and backups of my SSD (I'm only using about 22GB of my Intel 510's 111GB) end up on the HDD. My Steam folder is about 200GB as well, so it goes on the HDD.

You just have to do things like change the iTunes folder in the advanced options to a folder on the HDD. It's really easy to do. That way, when I want to use another SSD, I have all the Steam games and media on the HDD. Fresh installs are really easy this way.

I tried installing some of my games on a few of the SSDs I own. Some games can really benefit, but mostly the increase in speed over a fast HDD isn't worth it.

I bought an original WD Raptor 36GB drive in 2003 that I used for many years, so I was completely comfortable trying to manage the stuff that ends up on my HDD. I ended up moving from a 60GB SSD to a faster 120GB SSD, but I just can't bring myself to put much on it.
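compton's symbolic-link approach can be sketched in Python as a rough illustration. `redirect_folder` and `demo` are hypothetical helpers written for this example, and the demo runs in a throwaway temp directory rather than touching real user folders. On Windows, the built-in `mklink /D` command does the same job; creating symbolic links typically requires administrator rights, and the original folder's contents must be moved to the HDD before the link can take its place.

```python
import os
import tempfile
from pathlib import Path


def redirect_folder(link_path, target_dir):
    """Make link_path (a folder location on the SSD) a directory symbolic
    link that points at target_dir on the HDD."""
    link = Path(link_path)
    target = Path(target_dir)
    target.mkdir(parents=True, exist_ok=True)  # make sure the HDD folder exists
    if link.exists() or link.is_symlink():
        # Refuse to clobber a real folder; its contents must be moved first
        raise FileExistsError(f"{link} already exists; move its contents first")
    os.symlink(target, link, target_is_directory=True)
    return link


def demo():
    """Try the redirect in a temp directory instead of real user folders."""
    with tempfile.TemporaryDirectory() as tmp:
        hdd_music = Path(tmp, "hdd", "Music")  # stands in for a folder on the HDD
        ssd_music = Path(tmp, "ssd", "Music")  # stands in for a folder under \Users\
        ssd_music.parent.mkdir(parents=True)
        link = redirect_folder(ssd_music, hdd_music)
        # A file saved through the SSD path actually lands on the "HDD"
        (link / "song.txt").write_text("saved via the SSD path")
        return link.is_symlink(), (hdd_music / "song.txt").exists()


if __name__ == "__main__":
    print(demo())
```

Applications keep using the familiar path, while the data itself lives on the larger mechanical drive.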