Three Xeon E5 Server Systems From Intel, Tyan, And Supermicro

After taking a first look at Intel's Xeon E5 processors, we wanted to round up a handful of dual-socket barebones platforms to see which vendor sells the best match for Sandy Bridge-EP-based chips. Intel, Supermicro, and Tyan are here to represent.

Supermicro 6027R-N3RF4+: Layout And Overview, Continued

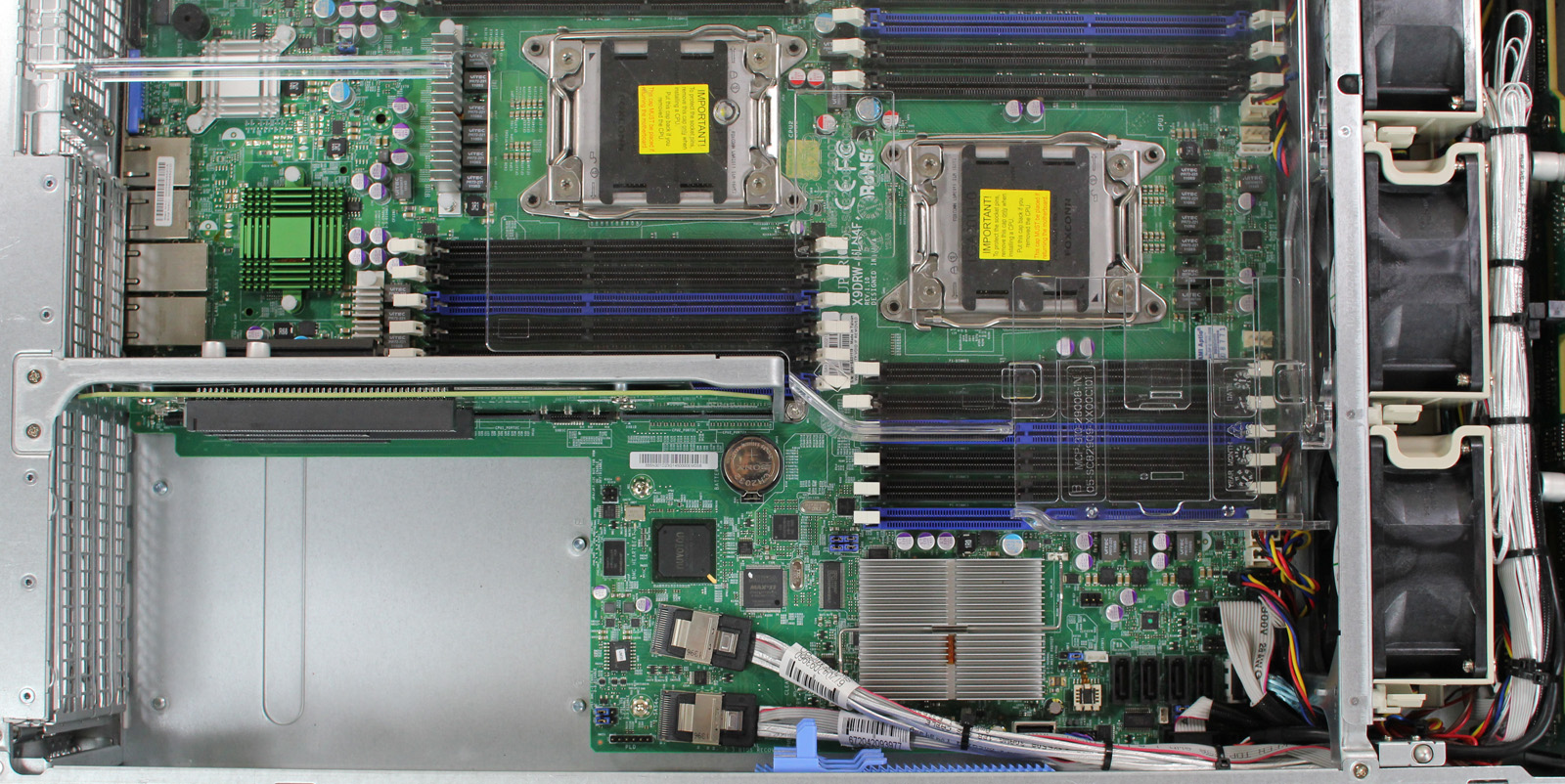

You'll also find four full-height expansion slots around back, along with three half-height slots. As we're about to see, only two of the three half-height bays can be used at a time. Otherwise, the motherboard's memory interferes with the third.

Supermicro's chassis uses fixed cut-outs matched to the motherboards it accepts, rather than a larger opening for a swappable I/O shield. Because this is a proprietary design, it's unlikely that you'd ever put another motherboard in this chassis, so the lack of flexibility here is both acceptable and fairly standard. Much like the servers designed by Dell, HP, Sun, IBM, and Fujitsu, these enclosures are engineered to optimize expandability and airflow, not to adhere to standard motherboard form factors.

A quick look at the available ports reveals a legacy PS/2 keyboard and mouse combo port, a serial port, VGA output, and four USB ports. There are five 8P8C (RJ45) jacks in total: one above a pair of USB ports, and four in a row to the right. The one above the USB ports is driven by a Realtek RTL8201N and is dedicated to IPMI 2.0 remote management. Some server vendors share a single network port between the host and remote management, but I personally prefer a dedicated port, because I set up separate management LANs that I reach over VPN. In environments like this, installing new servers is easy: you know that the cable from the management NIC goes to the management network. The other four ports are serviced by an on-board Intel I350-AM4 controller, which is becoming standard on the newest generation of servers.

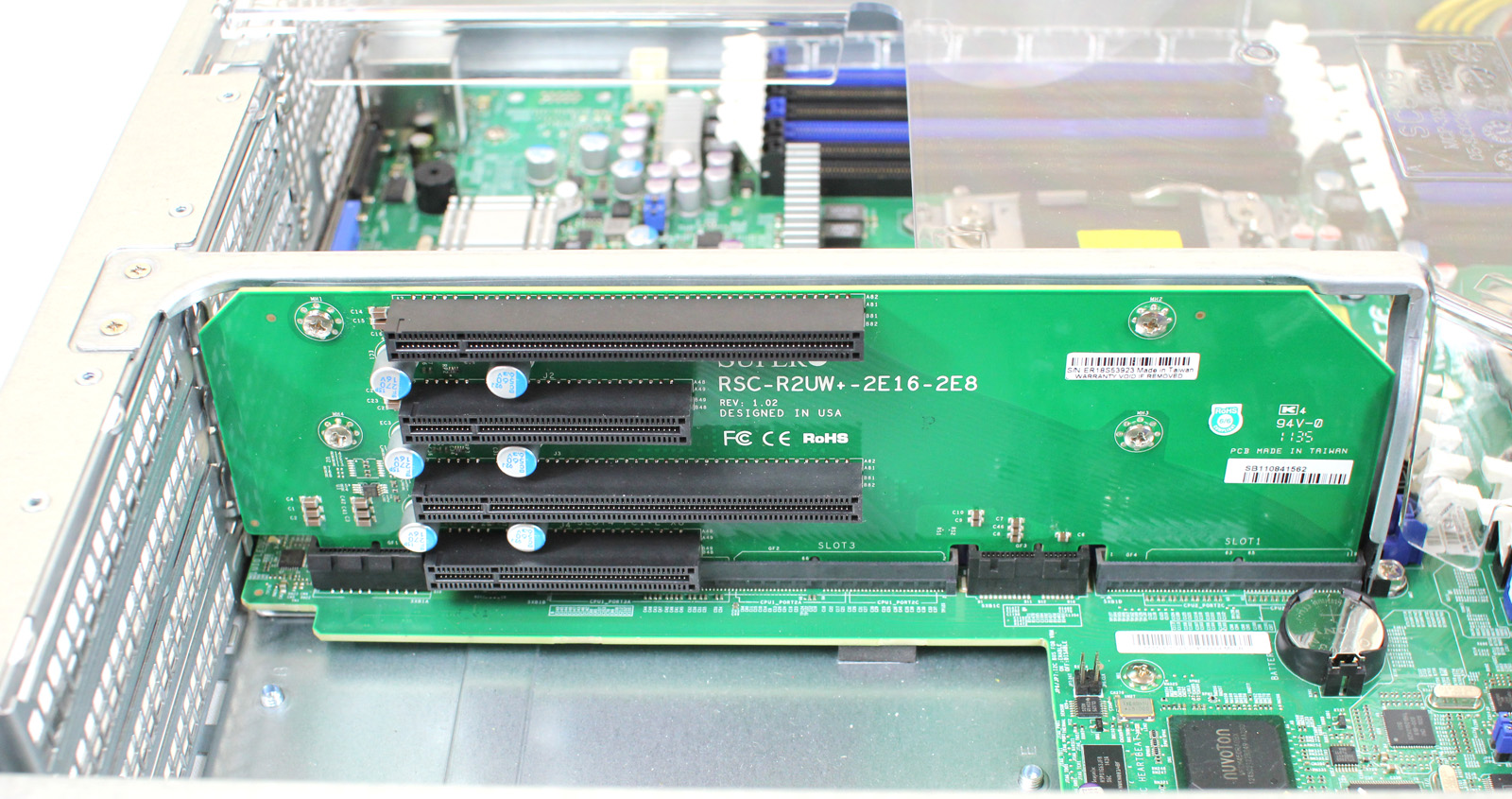

As mentioned, the Supermicro X9DRW-3LN4F+ supports up to six add-in cards. The sextet is PCI Express 3.0-compliant, and exposed through risers. Two are full-height, full-length x16 slots, one is a full-height, full-length x8 slot, one is a full-height, half-length x8 slot, and two are low-profile slots.

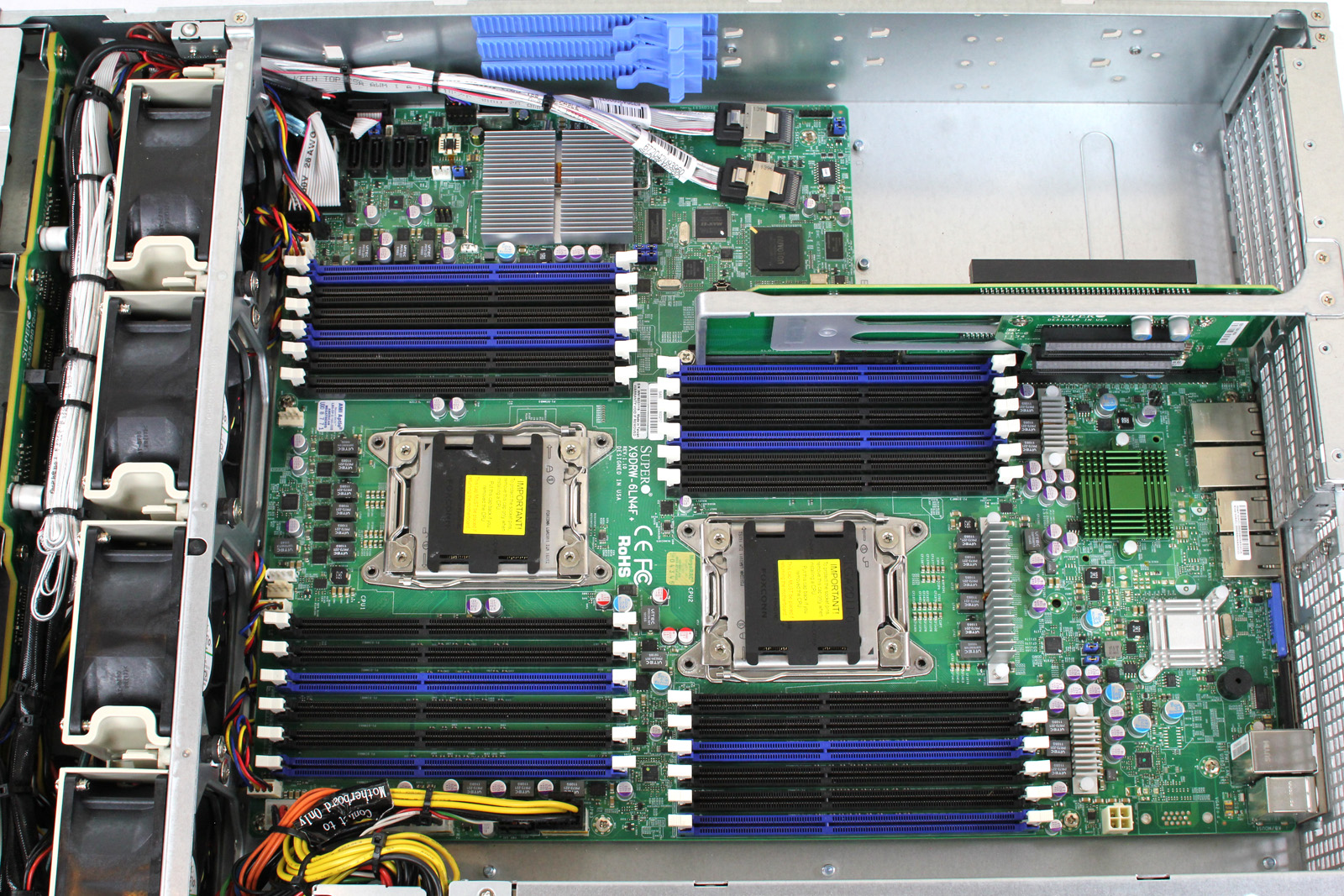

Supermicro uses Intel's C606 PCH in its design, which gives the platform two SATA 6 Gb/s ports, two SATA 3 Gb/s ports, and eight 3 Gb/s SAS ports directly from the PCH. This is a big departure from previous generations, where an engineer would typically dedicate PCIe connectivity to an on-board SAS host bus adapter or RAID controller.

The C606's SAS ports are really targeted at the 3.5" high-capacity storage market, where 3 Gb/s is generally enough throughput. Intel is not being as aggressive as expected: it was originally believed that the PCH's storage controller unit (SCU) would feature 6 Gb/s SAS connectivity (though, after all, LSI is one of Intel's partners). For this generation, at least, all three participating vendors offer LSI-based controllers as upgrade options. We just aren't seeing them, since we asked the manufacturers to leave them off.
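To put the 3 Gb/s figure in context, the short sketch below converts a SAS line rate into usable payload bandwidth. It assumes 8b/10b line coding (which 3 Gb/s and 6 Gb/s SAS links use) and deliberately ignores SAS framing and protocol overhead, so real-world throughput will be somewhat lower. The function names are illustrative only.

```python
# Sketch only: assumes 8b/10b line coding (used by 3 Gb/s and 6 Gb/s SAS)
# and ignores SAS framing/protocol overhead, so real throughput is lower.

ENCODING_DATA_BITS = 8    # payload bits carried per encoded symbol
ENCODING_LINE_BITS = 10   # bits actually transmitted per symbol


def payload_mb_per_s(line_rate_mbps: int) -> float:
    """Per-port payload bandwidth in MB/s (1 MB = 10**6 bytes) for an 8b/10b link."""
    payload_mbps = line_rate_mbps * ENCODING_DATA_BITS / ENCODING_LINE_BITS
    return payload_mbps / 8  # 8 bits per byte


def aggregate_mb_per_s(ports: int, line_rate_mbps: int) -> float:
    """Combined payload bandwidth if every port streams at the same time."""
    return ports * payload_mb_per_s(line_rate_mbps)


if __name__ == "__main__":
    # Figures from the article: eight 3 Gb/s SAS ports from the C606 PCH.
    print(f"Per 3 Gb/s SAS port: {payload_mb_per_s(3000):.0f} MB/s")
    print(f"Eight ports combined: {aggregate_mb_per_s(8, 3000):.0f} MB/s")
    # For comparison, a 6 Gb/s link under the same assumptions.
    print(f"Per 6 Gb/s port: {payload_mb_per_s(6000):.0f} MB/s")
```

Under these assumptions, each 3 Gb/s port carries roughly 300 MB/s of payload, half of what a 6 Gb/s port would.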

One thing you do see on the board, as a direct result of this integrated chassis/motherboard package, is that one of the two SFF-8087 SAS connectors is angled to ease cable management for the four-lane cables.


Each CPU and its associated memory is placed in-line, but slightly offset. That's a standard configuration for dual-socket server motherboards. Supermicro manages to fit 12 DDR3 DIMM slots per CPU, yielding a maximum memory configuration of 192 GB using unbuffered ECC DDR3 memory or 768 GB of registered ECC DDR3 memory.
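The maximum-capacity figures follow from simple multiplication across both sockets. This sketch assumes the totals come from filling all 24 slots with identical modules, which implies 8 GB unbuffered or 32 GB registered DIMMs; those per-module sizes are inferred from the totals above, not taken from a Supermicro spec sheet, and the names are hypothetical.

```python
# Hypothetical sketch: reproduce the article's maximum-memory figures for a
# dual-socket board with 12 DIMM slots per CPU.
SOCKETS = 2
SLOTS_PER_CPU = 12

# Assumed per-module sizes, inferred from the article's totals:
# 192 GB / 24 slots = 8 GB unbuffered ECC DIMMs,
# 768 GB / 24 slots = 32 GB registered ECC DIMMs.
UDIMM_GB = 8
RDIMM_GB = 32


def max_memory_gb(dimm_gb: int, sockets: int = SOCKETS,
                  slots_per_cpu: int = SLOTS_PER_CPU) -> int:
    """Total capacity when every DIMM slot holds a module of dimm_gb gigabytes."""
    return sockets * slots_per_cpu * dimm_gb


if __name__ == "__main__":
    print(f"Total slots: {SOCKETS * SLOTS_PER_CPU}")
    print(f"Unbuffered ECC maximum: {max_memory_gb(UDIMM_GB)} GB")
    print(f"Registered ECC maximum: {max_memory_gb(RDIMM_GB)} GB")
```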

Those 12 slots per CPU mean that Supermicro's enclosure can only hold two cards across its three low-profile expansion slots. They also mean that Supermicro's cooling solution is not compatible with the standard 91.5 x 91.5 mm LGA 2011 footprint. Instead, it uses a narrower mounting orientation (look for an "S" in the suffix of Supermicro's heat sink SKUs to make sure you have the slim version). To fit this narrow footprint, Supermicro provides two SNK-P0048PSC passive 2U heat sinks (note the "S" in PSC, for slim LGA 2011) for our 135 W Xeons.


EzioAs
> 9532821 said: the charts are looking strange. they need to be reduced in size a bit....

I agree. Just reduce it a little bit but don't make it too hard to see.

willard
> TheBigTroll: no comparison needed. intel usually wins

Usually? The E5s absolutely crush AMD's best offerings. AMD's top-of-the-line server chips are about equal in performance to Intel's last generation of chips, which are now more than two years old. It's even more lopsided than Sandy Bridge vs. Bulldozer.

Malovane
> dogman_1234: Cool. Now, can we compare these to Opteron systems?

As an AMD fan, I wish we could. But while Magny-Cours was competitive with the last gen Xeons, AMD doesn't really have anything that stacks up against the E5. In pretty much every workload, E5 dominates the 62xx or the 61xx series by 30-50%. The E5 is even price competitive at this point.

We'll just have to see how Piledriver does.


jaquith
Hmm... in comparison, my vote is the Dell PowerEdge R720 (http://www.dell.com/us/business/p/poweredge-r720/pd?oc=bectj3&model_id=poweredge-r720); it's better across the board, i.e. no comparison. None of this 'testing' is applicable to these servers.

lilcinw
Finally we have some F@H benches!! Thank you!

Having said that, I would suggest you include expected PPD for the given TPF, since that is what folders look at when deciding on hardware. Or you could just devote 48 hours from each machine to generate actual results for F@H and donate those points to your F@H team (yes, Tom's has a team, and visibility is our biggest problem).

dogman_1234
> lilcinw: Finally we have some F@H benches!! Thank you! Having said that, I would suggest you include expected PPD for the given TPF...

The issue is that other tech sites promote their teams. We do not have a promotive site. Even while mentioning F@H, some people do not agree with it or will never want to participate. It is a mentality. However, it is a choice!