Nvidia Tegra K1 In-Depth: The Power Of An Xbox In A Mobile SoC?

Nvidia gave us an early look at its Tegra K1 SoC at its headquarters in Santa Clara. By far the most noteworthy change is a shift away from Tegra 4's separate programmable vertex and pixel shaders to the company's unified Kepler architecture, enabling exciting new graphics capabilities.

Putting Kepler Into Tegra: It’s All About Graphics

Alright, so, we have revised Cortex-A15 cores, Nvidia's own Denver cores coming sometime later, and a more appropriate variation of the 28 nm manufacturing process that improves Tegra K1's performance-per-watt compared to its predecessor. That story could turn out to be very good, but we suspect it isn't strong enough on its own to coax partners away from entrenched incumbents.

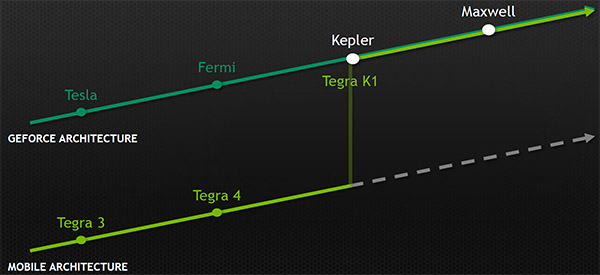

Nvidia is instead making a move a lot of enthusiasts expected in the Tegra 4 timeframe: it's converging Tegra's graphics technology with the same design pervasive through the HPC, workstation, desktop, and mobile markets. Gone are the programmable vertex and pixel shaders (which Nvidia previously told me were the right choice for Tegra 4 at the time), replaced by the company's Kepler architecture.

What Nvidia's reps couldn't tell me back when "Wayne" launched was that they had designed Kepler to scale from the 250 W discrete GPUs we see in workstations down to the sub-2 W version that shows up in Tegra K1. Kepler was the missing piece Nvidia needed to phase out its older mobile architecture.

Moving forward, every GPU architecture Nvidia develops will be mobile-first. That was a decision management made during Kepler's design and then applied to Maxwell from the start. It doesn't mean Maxwell will show up in Tegra first (in fact, the first Maxwell-powered discrete GPUs are expected in the next few weeks). Rather, the architecture was approached with its mobile configuration and power characteristics in mind and then scaled up from there. Sounds like a gamble for a company so reliant on the success of its big GPUs, right? Nvidia says the principles it applied to getting mobile right are what will help it maximize the efficiency of its discrete products going forward, and we'll have the hardware to put those claims to the test once GeForce GTX 750 materializes.

Here's the other side of that coin from a group of guys who do nearly all of their gaming on PCs: do smartphones and tablets really need capabilities similar to those of desktops? We sit down to a mouse and keyboard for story-driven content or all-night multiplayer marathons, and kick back on the couch for more casual console gaming. Given small screens and interface limitations, is there a real reason to make powerful graphics the front-and-center priority? According to Nvidia's data, yes. A majority of revenue earned through Google Play comes from games. A majority of time spent on tablets is spent gaming. And a majority of game developers are targeting mobile devices (slightly more than are targeting PCs, even). Although we joke that tablet gaming happens in the bathroom, leaving little time for an immersive experience, perhaps ample graphics horsepower and the right API support are what will push this segment to the next level.

Naturally, Nvidia needs gaming to remain the dominant tablet workload; graphics is its wheelhouse, after all. With its old mobile architecture set aside, the company can push that agenda forward using the same software tools developers leverage in the desktop and professional spaces. Whether ISVs bring massively successful games like GTA over from previous-gen consoles to Android is a business decision, but Nvidia makes it possible in hardware and, on the software side, through its various development environments. The individual tools aren't particularly relevant to enthusiasts. What they mean, however, is that titles written for DirectX 11, OpenGL, any version of OpenGL ES, CUDA, and OpenCL 1.1 can be brought over, with development supported on Windows, Linux, and OS X.

It's hard not to notice that all of this is a sharp contrast to last year's message, which was that Tegra 4 lacked OpenGL, OpenGL ES 3.0, CUDA, and DirectX 11 support but was optimized for available apps, making its feature set perfectly suitable. We much prefer Tegra's prospects going into 2014, with the onus on Android and Windows RT developers to exploit Nvidia's hardware. If this were a story about any other hardware vendor, it might seem far-fetched to expect titles tuned for a specific platform. But Nvidia tends to play the relationship game well; there is already plenty of content with Tegra-oriented optimizations.


As if to drive its point home, Nvidia showed off a demonstration of Unreal Engine 4's feature set (which includes several line items that go beyond OpenGL ES 3.0) running on a Tegra K1 reference tablet in the Tegra Note 7's chassis. Nvidia took the engine, ported it to Android, implemented an OpenGL 4.4 renderer, and now UE4-based content runs on Tegra K1. Also on display were Serious Sam 3 and Trine 2, both 2011-era titles that looked great on Nvidia's samples.


RupertJr: "Approx. Comparison To Both Last-Gen Consoles"

Why don't you update the graph title to "Prev-Gen Consoles"? The last-gen consoles have 1.23 TFLOPS (Xbox One) and 1.84 TFLOPS (PS4), so the performance difference versus this K1 chip is huge. It looks like Nvidia will lose another battle...

renz496, replying to another reader: "Where is the Maxwell stuff? Did they not even address it yesterday? WTF"

Most likely they will talk about it when the actual GPU is close to release.

Wisecracker: "At least in theory, Tegra K1 could be on par with those previous-generation systems..."

AMD does it. Intel, too. But ...

When it comes to over-the-top hype, embellishment and hyperbole, nVidia is the king.


ZolaIII: @RupertJr

Well, multiply it by 3x, add higher memory bandwidth, and you are there.

1.5x from Maxwell and 2x the cores (28 nm to 16/14 nm).

Hybrid Memory Cube.

All this in 2015.

RupertJr: @ZolaIII

But what you are assuming doesn't exist yet, and may not for years...

Where will the other chips be by then?

dragonsqrrl: "in fact, the first Maxwell-powered discrete GPUs are expected in the next few weeks"

Excellent.

InvalidError, replying to RupertJr: "It looks like Nvidia will lose another battle..."

Well, they are right on at least one thing: 2014's SoCs are now roughly on par with high-end components from ~7 years ago or mid-range gaming PCs from ~5 years ago, and they manage it on a 2-3 W power budget, which is rightfully impressive IMO.

If SoCs continue improving at this pace while PCs remain mostly stagnant, the performance gap between SoCs and mainstream PCs will be mostly gone by the end of 2015.

Shankovich: I'm assuming this SoC will run at around 15 watts. With 192 CUDA cores and LPDDR3, your max output will probably be somewhere around 180 GFLOPS. Yes, yes, it CAN do the same effects as consoles and PCs, so what? If the GameCube had a DX 11.2 API running on it, it could as well, but obviously it couldn't put much on the screen. This will be the same deal with the K1.

It's cool, I like it, don't get me wrong, but the stuff they're saying, though technically correct, is so misleading to about 90% of the market. People are going to think they have PS4s in their hands when in reality they have half an Xbox 360 with up-to-date APIs running on it.