Nvidia Tegra K1 In-Depth: The Power Of An Xbox In A Mobile SoC?

Nvidia gave us an early look at its Tegra K1 SoC at its headquarters in Santa Clara. By far the most noteworthy change is the GPU’s shift from Tegra 4’s separate programmable vertex and pixel shaders to the company’s unified Kepler architecture, enabling new graphics and compute capabilities.

Chimera 2: Putting An Emphasis On Imaging

Nvidia was clearly bullish on imaging when it introduced Tegra 4. Given the company’s strength in graphics, it made sense that it’d extend that expertise to photography and video as well. Disappointingly, we waited almost an entire year before the first manifestation of the company’s Computational Photography Engine surfaced, enabling always-on HDR and video stabilization. Then again, given the dearth of devices sporting Tegra 4, a modest software ecosystem isn’t altogether surprising. Should Tegra K1 enjoy faster adoption, we’d hope to see hardware manufacturers doing more with the SoC’s imaging capabilities.

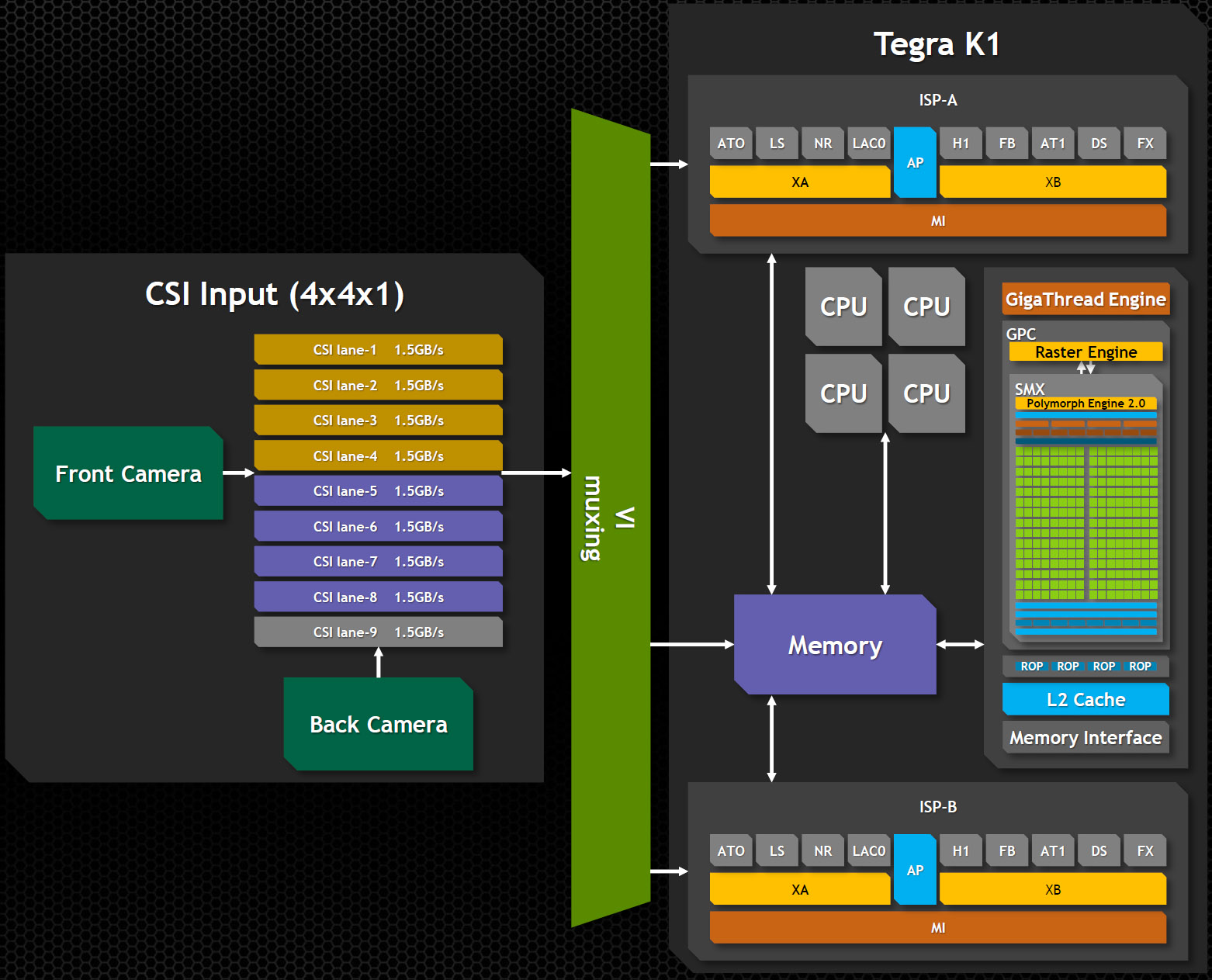

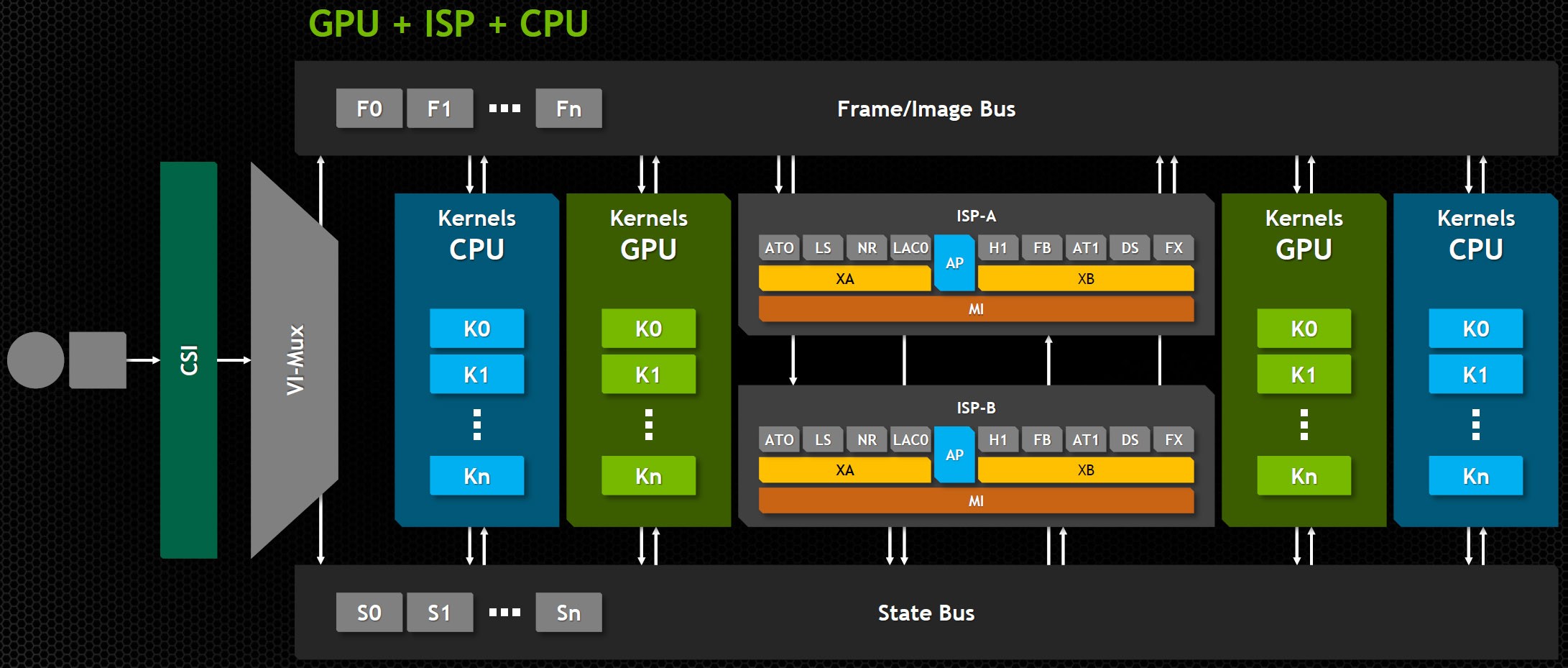

The potential balloons with Tegra K1. Last generation, Tegra 4’s ISP was rated for up to 350 megapixels/s of throughput. This time around, dual ISPs offer 600 megapixels/s each, or 1.2 gigapixels/s combined (enough for two 20 MP streams at 30 Hz). Having a pair of ISPs makes it possible to capture images from one source while data from another is being processed, whether that second source is another camera or memory. A crossbar fabric connects both ISPs to memory, through which they’re able to communicate with the CPU and GPU.
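The throughput figures in the paragraph above follow from simple multiplication: pixel rate equals frame size times frame rate. This Python sketch reproduces that arithmetic using only the numbers quoted in the text. The helper names are made up for illustration, and the math deliberately ignores blanking intervals, bit depth, and processing overhead, so treat it as a back-of-the-envelope check rather than a model of how the ISPs actually schedule work.

```python
# Back-of-the-envelope ISP throughput math. Ignores blanking intervals,
# bit depth, and processing overhead; helper names are hypothetical.

def stream_rate_mp_per_s(megapixels: float, fps: float) -> float:
    """Pixel rate (megapixels per second) one sensor stream requires."""
    return megapixels * fps

def max_fps(isp_rate_mp_per_s: float, megapixels: float) -> float:
    """Highest frame rate an ISP of the given rate could sustain for one stream."""
    return isp_rate_mp_per_s / megapixels

TEGRA4_ISP = 350.0    # MP/s, Tegra 4's single ISP (figure from the text)
TEGRA_K1_ISP = 600.0  # MP/s per ISP on Tegra K1 (figure from the text)
K1_ISP_COUNT = 2

combined = TEGRA_K1_ISP * K1_ISP_COUNT         # total across both ISPs
per_stream = stream_rate_mp_per_s(20.0, 30.0)  # one 20 MP stream at 30 Hz

print(f"20 MP @ 30 Hz needs {per_stream:.0f} MP/s")
print(f"Tegra K1 combined: {combined:.0f} MP/s")
print(f"Ratio vs. Tegra 4: {combined / TEGRA4_ISP:.2f}x")
print(f"Max fps per K1 ISP at 20 MP: {max_fps(TEGRA_K1_ISP, 20.0):.0f}")
```

Each 20 MP/30 Hz stream consumes exactly one ISP’s rated 600 MP/s, which is why the two-stream figure equals the combined 1.2 gigapixels/s, roughly 3.4 times what Tegra 4’s single ISP was rated for.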

There’s a lot of forward-looking enablement going on. At least on paper, the hardware supports up to 4096 focus points, 14-bit input, 100 MP sensors, interoperability with general-purpose compute, and quality-enhancing features that minimize noise, correct bad pixels, and downscale. Some of these features exist simply to improve image quality, while others may pave the way for new imaging applications.
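Bad-pixel correction is one of the quality features listed above. Nvidia doesn’t describe how its ISP implements it, so what follows is a generic, minimal sketch of one common approach: replacing each known-defective pixel with the median of its healthy neighbors. It assumes a single-channel frame and a precomputed defect map, and the function name is hypothetical. A real raw pipeline would also have to respect the Bayer mosaic, drawing on same-color neighbors two pixels away rather than the immediate 8-neighborhood used here. With 14-bit input, pixel values span 0 to 16383.

```python
from statistics import median_low

def correct_bad_pixels(frame, bad_pixels):
    """Return a copy of `frame` with each flagged pixel replaced by the
    low median of its valid 8-connected neighbors.

    frame: list of rows of ints (single channel, e.g. 0..16383 for 14-bit)
    bad_pixels: set of (row, col) coordinates from a defect map
    """
    height, width = len(frame), len(frame[0])
    out = [row[:] for row in frame]  # never modify the input frame
    for r, c in bad_pixels:
        neighbors = [
            frame[rr][cc]
            for rr in range(r - 1, r + 2)
            for cc in range(c - 1, c + 2)
            if (rr, cc) != (r, c)
            and 0 <= rr < height and 0 <= cc < width
            and (rr, cc) not in bad_pixels  # skip other defective pixels
        ]
        if neighbors:  # leave the pixel alone if every neighbor is bad too
            # median_low returns an actual neighbor value, so pixels stay ints
            out[r][c] = median_low(neighbors)
    return out

# A 14-bit "hot pixel" stuck at full scale in an otherwise flat patch.
frame = [
    [1000, 1002, 998],
    [1001, 16383, 999],
    [1003, 1000, 1002],
]
fixed = correct_bad_pixels(frame, {(1, 1)})
print(fixed[1][1])
```

Using the median rather than the mean keeps a single outlier neighbor from dragging the replacement value around, which is why median filters are a common default for this kind of cleanup.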

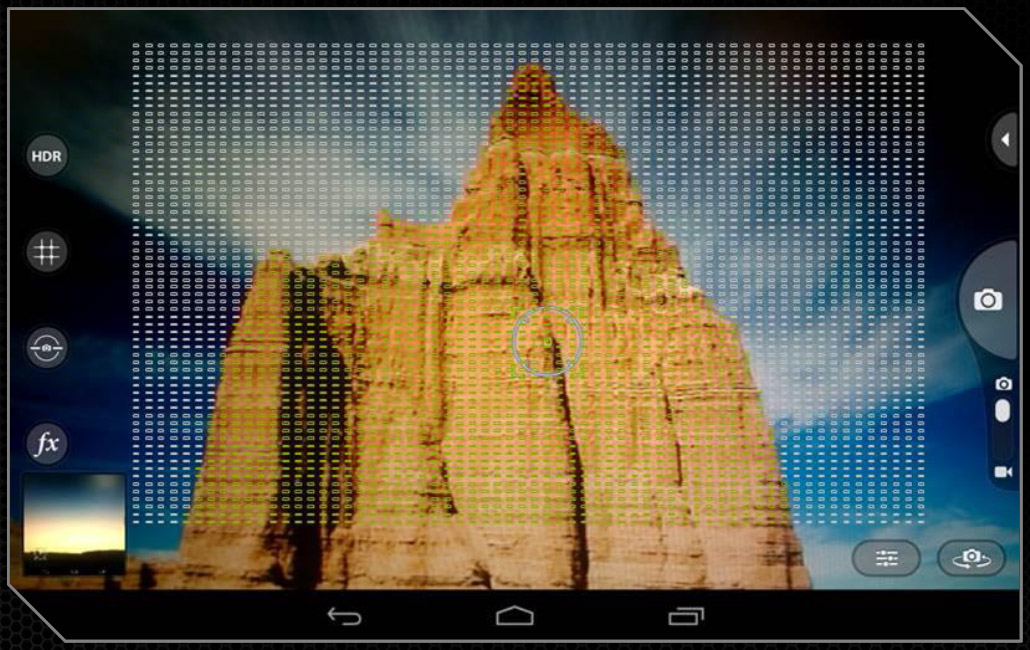

For instance, more than 4000 focus points might seem like overkill. Nvidia counters that they’re useful for tracking multiple objects, and indeed we’ve seen demos of moving content in which the camera detects and tracks the subject, keeping it in focus while the rest of the scene changes. In the same vein, the graphics engine’s newfound compute capability lets effects be applied to the ISP’s output in real time. Deep images, in which each pixel can store any number of samples per channel (instead of just one), become viable. So does creating panoramic shots by “painting” with a camera sensor.
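To make the deep-image idea concrete: instead of one value per pixel and channel, each location keeps a variable-length list of samples that can later be collapsed back into an ordinary image. The class here is a hypothetical illustration (`DeepImage` and its methods are invented names, not an Nvidia or Android API). It flattens with a simple mean by default, which is only one of many possible ways to reduce deep data.

```python
from collections import defaultdict
from statistics import fmean

class DeepImage:
    """Hypothetical deep-image container: each (x, y, channel) location holds
    any number of samples instead of exactly one value."""

    def __init__(self, width: int, height: int, channels: int = 3):
        self.width, self.height, self.channels = width, height, channels
        self._samples = defaultdict(list)  # (x, y, ch) -> list of floats

    def add_sample(self, x: int, y: int, ch: int, value: float) -> None:
        if not (0 <= x < self.width and 0 <= y < self.height and 0 <= ch < self.channels):
            raise IndexError("sample outside image bounds")
        self._samples[(x, y, ch)].append(value)

    def sample_count(self, x: int, y: int, ch: int) -> int:
        # .get() avoids creating empty entries in the defaultdict
        return len(self._samples.get((x, y, ch), ()))

    def flatten(self, reducer=fmean, empty: float = 0.0):
        """Collapse every location to one value; returns rows[y][x][ch].
        Locations with no samples get `empty`."""
        return [
            [
                [
                    reducer(self._samples[(x, y, ch)]) if self.sample_count(x, y, ch) else empty
                    for ch in range(self.channels)
                ]
                for x in range(self.width)
            ]
            for y in range(self.height)
        ]

# Usage: two samples land on the same pixel; its neighbor gets none.
img = DeepImage(width=2, height=1, channels=1)
img.add_sample(0, 0, 0, 1.0)
img.add_sample(0, 0, 0, 3.0)
print(img.sample_count(0, 0, 0))
print(img.flatten())
print(img.flatten(reducer=max))
```

Keeping the raw samples around means the reduction can be chosen after capture: averaging suppresses noise, while something like `max` or a custom blend produces a different look from the same data.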

Powerful ISPs backed by a capable GPU open the door to new forms of artistic expression, and Nvidia’s team clearly has the vision to drive innovation forward in that space. As mentioned, though, computational photography features have been slow to reach shipping products. By taking the reins and introducing its own Tegra Note 7, Nvidia put itself in a position to roll out new software features; the first OTA update appeared mere weeks ago. Hopefully, that cadence speeds up with Tegra K1. We don’t want to wait another year before the company’s latest demos become tangible.

When Nvidia introduced Chimera last year, it presented a slide very similar to this one, except that there was only one ISP and the GPU wasn’t Kepler-based.

Get Tom's Hardware's best news and in-depth reviews, straight to your inbox.


RupertJr: "Approx. Comparison To Both Last-Gen Consoles"

Why don't you change the graph title to "Prev-Gen Consoles"? The last-gen consoles have 1.23 TFLOPS (Xbox One) and 1.84 TFLOPS (PS4); the performance difference compared to this K1 chip is huge. It looks like Nvidia will lose another battle...

renz496, quoting another comment: "Where is the Maxwell stuff? Did they not even address it yesterday? WTF"

Most likely they will talk about it when the actual GPU is close to release.

Wisecracker: "At least in theory, Tegra K1 could be on par with those previous-generation systems..."

AMD does it. Intel, too. But ...

When it comes to over-the-top hype, embellishment and hyperbole, nVidia is the king.


ZolaIII, @RupertJr:

Well, multiply it by 3x, add higher memory bandwidth, and you are there.

1.5x from Maxwell & 2x the cores (28nm to 16-14nm).

Hybrid Memory Cube.

All this in 2015.

RupertJr, @ZolaIII:

But what you are assuming doesn't exist yet, and may not for years...

Where will other chips be by then?

dragonsqrrl: "in fact, the first Maxwell-powered discrete GPUs are expected in the next few weeks"

Excellent.

InvalidError, quoting another comment: "It looks like Nvidia will lose another battle..."

Well, they are right on at least one thing: 2014's SoCs are now roughly on par with high-end components from about seven years ago, or mid-range gaming PCs from about five years ago, and they manage it on a 2-3 W power budget, which is rightfully impressive IMO.

If SoCs continue improving at this pace while PCs remain mostly stagnant, the performance gap between SoCs and mainstream PCs will be mostly gone by the time 2015 is over.

Shankovich: I'm assuming this SoC will run at around 15 watts. With 192 CUDA cores and LPDDR3, your max output will probably be somewhere around 180 GFLOPS. Yes, yes, it CAN do the same effects as consoles and PCs, so what? If the GameCube had a DX 11.2 API running on it, it could as well, but obviously it couldn't put much on the screen. This will be the same deal with the K1.

It's cool, I like it, don't get me wrong, but the stuff they're saying, though technically correct, is very misleading to about 90% of the market. People are going to think they have PS4s in their hands when in reality they have half an Xbox 360 with up-to-date APIs running on it.
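The GFLOPS and TFLOPS figures traded in the comments above all come from the same theoretical formula: shader cores × FLOPs per core per clock × clock speed, where 2 FLOPs per clock assumes one fused multiply-add per core. This sketch applies it. The shader counts and clocks are assumptions supplied for illustration, not figures from the article: 768 and 1152 shaders at 800 MHz for Xbox One and PS4, and Tegra K1's 192 CUDA cores at an assumed 950 MHz. Peak numbers say nothing about sustained performance, memory bandwidth, or power limits.

```python
def peak_gflops(shader_cores: int, clock_mhz: float, flops_per_clock: int = 2) -> float:
    """Theoretical peak single-precision GFLOPS.

    flops_per_clock=2 assumes every core retires one fused multiply-add
    (a multiply plus an add) per clock.
    """
    return shader_cores * flops_per_clock * clock_mhz / 1000.0

def clock_for_target(target_gflops: float, shader_cores: int, flops_per_clock: int = 2) -> float:
    """Clock (MHz) needed to reach a given peak GFLOPS figure."""
    return target_gflops * 1000.0 / (shader_cores * flops_per_clock)

# Assumed specs, for illustration only.
chips = {
    "Xbox One (768 shaders @ 800 MHz)": peak_gflops(768, 800),
    "PS4 (1152 shaders @ 800 MHz)": peak_gflops(1152, 800),
    "Tegra K1 (192 cores @ 950 MHz, assumed)": peak_gflops(192, 950),
}

for name, gflops in chips.items():
    print(f"{name}: {gflops:.1f} GFLOPS")

print(f"Clock implied by ~180 GFLOPS on 192 cores: {clock_for_target(180, 192):.0f} MHz")
```

Under these assumptions the formula reproduces the 1.23 and 1.84 TFLOPS console figures quoted in the thread (the Xbox One's GPU ultimately shipped at 853 MHz, about 1.31 TFLOPS), while the ~180 GFLOPS estimate for K1 corresponds to a GPU clock of roughly 470 MHz.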