Elon Musk implies that Tesla's procuring AMD's Instinct MI300 for AI

Modern cars need big AI GPUs.

Elon Musk, CEO of Tesla, said his company was procuring artificial intelligence (AI) processors worth billions of dollars for its AI training workloads. Nvidia's AI GPUs are at the top of Musk's list, followed by Tesla's own Dojo processors and hardware from AMD, which already supplies Tesla with system-on-chips for infotainment.

"A Dojo Supercomputer [is worth] $500 [million], while a large sum of money, [it] is only equivalent to a 100,000-unit H100 system from Nvidia," Elon Musk, chief executive of Twitter, said in an X post.

A data center full of Tesla's Dojos costing $500 million is an impressive development, considering they will be used for computer vision, video recognition processing, and machine learning. But the company is spending more than $500 million on Nvidia's hardware, presumably H100 and H200 GPUs now and then Blackwell-based B100 late this year. It is noteworthy that Tesla procured only 15,000 H100 GPUs last year, according to Omdia, so the company is apparently accelerating its Nvidia-powered efforts.

"Tesla will spend more than that on Nvidia hardware this year," Musk wrote. "The table stakes for being competitive in AI are at least several billion dollars per year at this point."

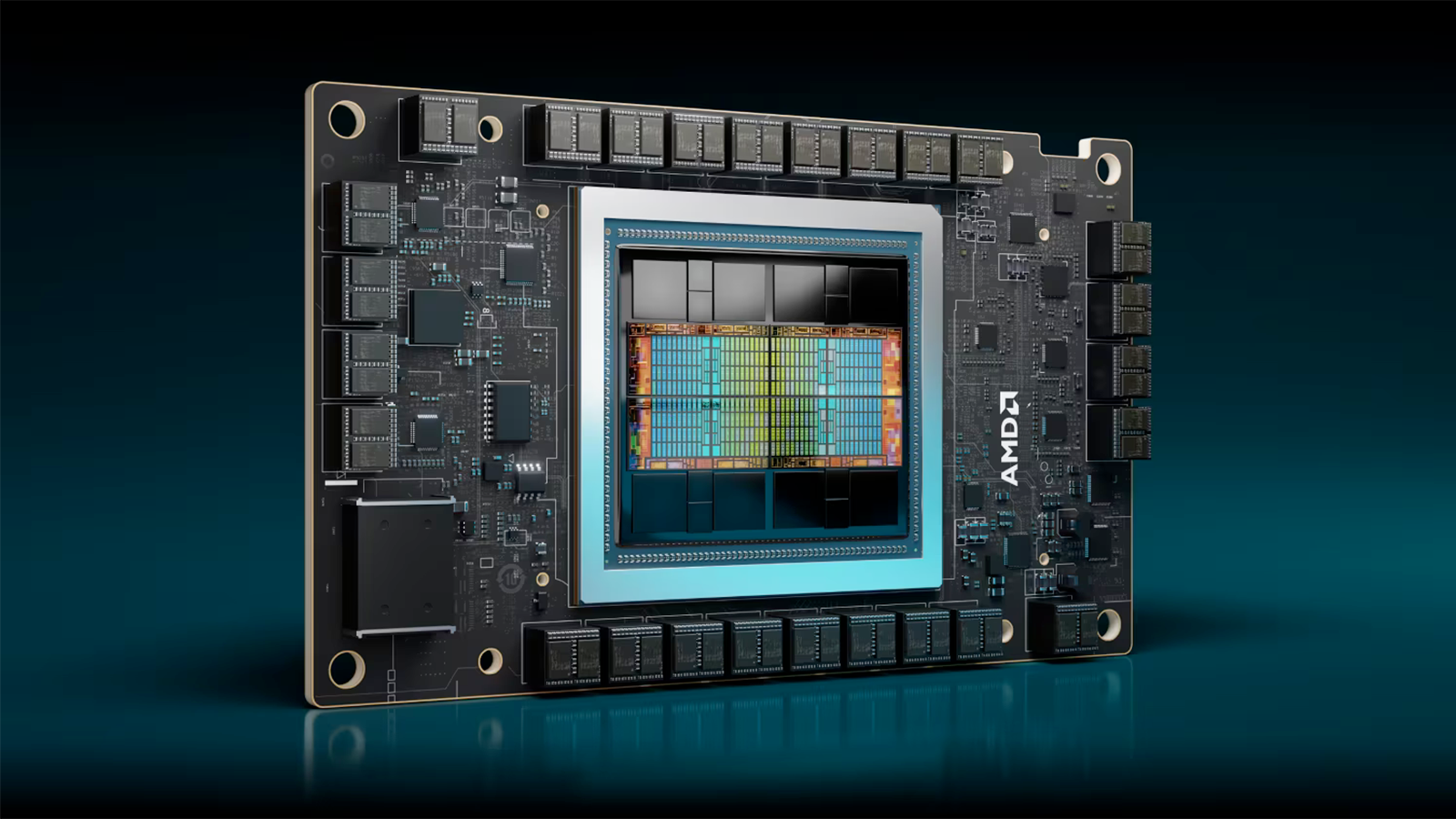

What is intriguing is that Musk answered positively (albeit laconically) when asked whether Tesla was procuring AI hardware from AMD. At present, AMD has multiple offerings for AI, including its Instinct MI200, Instinct MI250, and Instinct MI300X accelerators, as well as the Instinct MI300A accelerated processing unit, which combines Zen 4 x86 general-purpose cores with CDNA 3-based clusters (or rather chiplets) for computing.

According to AMD's reported performance numbers, the Instinct MI300X beats Nvidia's H100 80GB in AI and HPC performance. Meanwhile, the H100 80GB is currently widely used by major hyperscalers such as Google, Meta (formerly Facebook), and Microsoft. Workloads that run on H100 will be scaled out to other H100s, so AMD's MI300X does not exactly have to compete against the H100, at least for existing customers and workloads. Still, the performance numbers demonstrated by AMD could indicate that the Instinct MI300X will be a strong rival for Nvidia's upcoming H200 141GB GPU.


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.