AMD Ryzen Threadripper 1950X Game Mode, Benchmarked

Testing Ryzen's Infinity Fabric & Memory Subsystem

Infinity Fabric Latency And Bandwidth

The 256-bit Infinity Fabric crossbar ties together the resources inside a Zeppelin die. Adding a second Zeppelin die to create Threadripper introduces another layer of fabric, though. Cache accesses remain local to each CCX, but a large amount of memory, I/O, and thread-to-thread traffic still flows across that second layer.

It didn't take long for enthusiasts to figure out that AMD's Infinity Fabric is tied into the same frequency domain as the memory controller, so a memory overclock reduces latency and increases bandwidth through the crossbar. Performance in latency-sensitive applications (like games) consequently improves.
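To put rough numbers on that relationship, here is a back-of-envelope sketch. It assumes the fabric runs at the memory clock (half the DDR transfer rate) and moves one full 256-bit transfer per fabric clock in each direction; both are simplifying assumptions, and the function names are ours. The two memory speeds are the ones used in our testing.

```python
# Back-of-envelope sketch; the constants below are assumptions, not measurements.
FABRIC_WIDTH_BITS = 256  # crossbar width quoted in the article


def fabric_clock_mhz(ddr_rate_mts: float) -> float:
    """Assumed fabric clock: the memory clock, i.e. half the DDR transfer rate."""
    return ddr_rate_mts / 2


def peak_fabric_gbps(ddr_rate_mts: float) -> float:
    """Theoretical one-direction peak in GB/s, assuming one full-width transfer per clock."""
    bytes_per_clock = FABRIC_WIDTH_BITS // 8
    return bytes_per_clock * fabric_clock_mhz(ddr_rate_mts) * 1e6 / 1e9


for rate in (2666, 3200):
    print(f"DDR4-{rate}: fabric ~{fabric_clock_mhz(rate):.0f} MHz, "
          f"peak ~{peak_fabric_gbps(rate):.1f} GB/s")
```

Under these assumptions, moving from DDR4-2666 to DDR4-3200 raises the fabric clock by about 20 percent, and the theoretical peak scales by the same ratio. Measured bandwidth will be lower than these ceilings.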

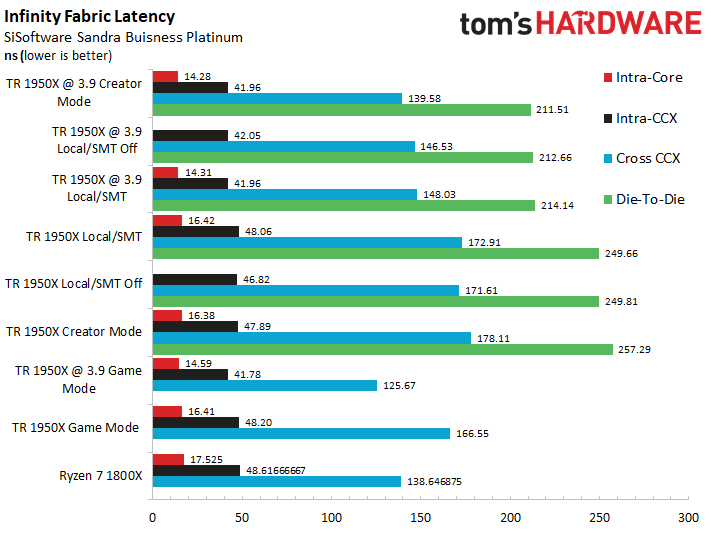

SiSoftware Sandra's Processor Multi-Core Efficiency test helps us illustrate the Infinity Fabric's performance. We use the Multi-Threaded metric with the "best pair match" setting (lowest latency). The utility measures ping times between threads to quantify fabric latency in every possible configuration.

The intra-core latency measurements represent communication between two logical threads resident on the same physical core, and as we can see, disabling SMT eliminates that measurement entirely. For the remaining setups, tuning reduces latency by a few nanoseconds, but that is attributable to higher core clock rates rather than faster memory. As we've seen in the past, increased memory frequencies have little effect on intra-core latency.

Intra-CCX measurements quantify latency between threads on the same CCX that are not resident on the same core. Increasing the clock rate yields a larger latency reduction of roughly 6ns.

Cross-CCX quantifies the latency between threads located on two separate CCXes, and we see a similar reduction thanks to overclocking. Notably, the Ryzen 7 1800X features much lower Cross-CCX latency than the stock Threadripper and most overclocked configurations. This is likely due to some form of provisioning, possibly in the scheduling algorithms, for Threadripper's extra layer of fabric.

As we can see, the overclocked Threadripper CPU in Game mode, which doesn't have an active fabric link to the other die, has the lowest Cross-CCX latency.

Die-To-Die measures communication between the two separate Zeppelin dies. Game mode effectively disables the second Zeppelin die at the operating system level, eliminating die-to-die latency entirely. The second die's uncore remains active, though, so that its I/O and memory controllers stay accessible.

Creator mode suffers the worst die-to-die latency, but tuning reduces it considerably. The two SMT options (on and off) receive large reductions from our overclocking efforts as well.
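The four latency categories follow directly from where two threads sit in the topology. The sketch below is our own illustration, not how Sandra is implemented: it models the 1950X as 2 dies x 2 CCXes x 4 cores x 2 SMT threads, labels every thread pair, and counts the pairs in each category. The tuple layout and function names are hypothetical.

```python
from collections import Counter
from itertools import combinations, product


def build_threads(dies=2, ccx_per_die=2, cores_per_ccx=4, smt=2):
    """Enumerate hardware threads as (die, ccx, core, smt_slot) tuples."""
    return list(product(range(dies), range(ccx_per_die),
                        range(cores_per_ccx), range(smt)))


def classify(a, b):
    """Name the fabric tier that a message between threads a and b crosses."""
    if a[0] != b[0]:
        return "die-to-die"
    if a[1] != b[1]:
        return "cross-CCX"
    if a[2] != b[2]:
        return "intra-CCX"
    return "intra-core"


def pair_counts(threads):
    """Count thread pairs per latency category."""
    return Counter(classify(a, b) for a, b in combinations(threads, 2))


creator = pair_counts(build_threads())        # both dies, SMT on
game = pair_counts(build_threads(dies=1))     # one die visible to the OS
smt_off = pair_counts(build_threads(smt=1))   # both dies, SMT off

for name, counts in [("Creator", creator), ("Game", game), ("SMT off", smt_off)]:
    print(name, dict(counts))
```

In this model, the one-die configuration has no die-to-die pairs and the SMT-off configuration has no intra-core pairs, which is why those measurements vanish from the corresponding results.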

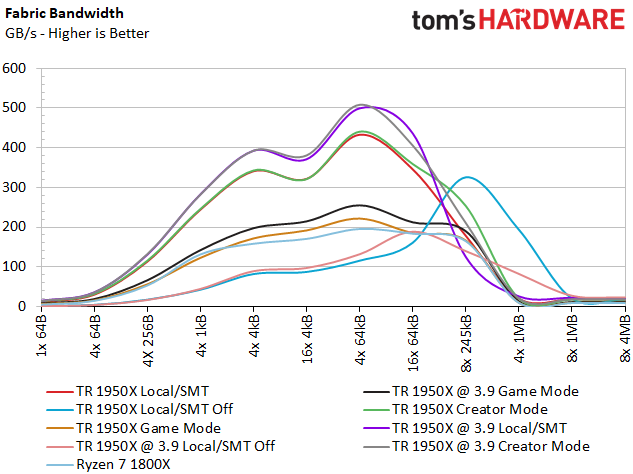

The utility measures fabric bandwidth too, which is critical for performance since data fetches from the remote memory also flow across the fabric. As such, AMD over-provisions the fabric and memory subsystem to optimize the distributed memory architecture.

The Creator mode and Local/SMT configurations offer the best fabric bandwidth, and both enjoy big boosts from overclocking. The Ryzen 7 1800X falls into the middle of the chart alongside Threadripper's Game mode, which is logical considering they are both effectively 8C/16T processors. Disabling SMT but leaving both dies active (Local/SMT off) yields a unique profile that provides higher performance with larger accesses and lower performance with smaller accesses.

Cache And Memory Latency

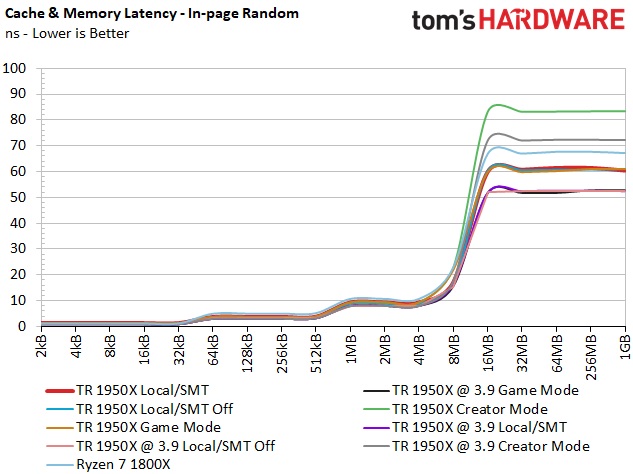

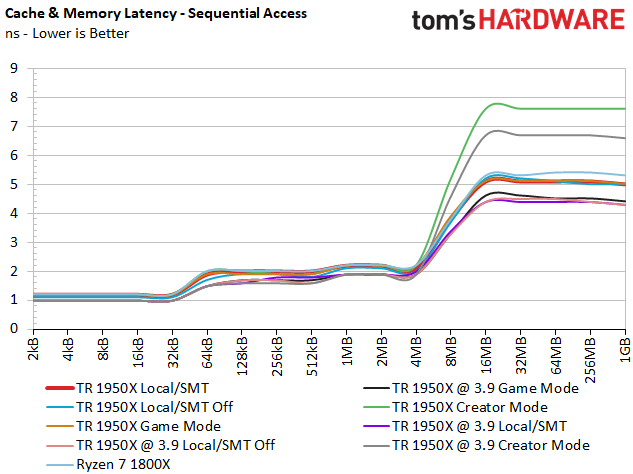

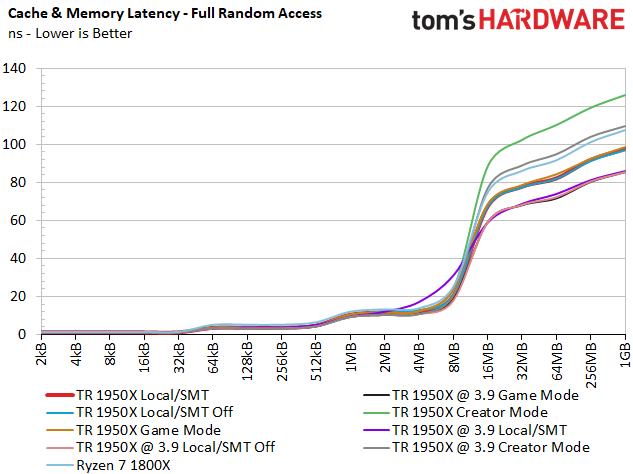

We tested with DDR4-2666 memory at stock settings and increased to DDR4-3200 for our overclocked configurations.

The Translation Lookaside Buffer (TLB) is a cache that reduces access times by storing recently used virtual-to-physical address translations. Like all caches, the TLB has a limited capacity, so address requests that land in the TLB are "hits," while requests that land outside of the cache are "misses." Of course, hits are more desirable, and solid prefetcher performance yields higher hit rates.

Sequential access patterns are almost entirely prefetched into the TLB, so the sequential test is a good measure of prefetcher performance. The in-page random test measures random accesses within the same memory page. It also measures TLB performance and represents best-case random performance (this is the measurement vendors use for official spec sheets). The full random test features a mix of TLB hits and misses, with a strong likelihood of misses, so it quantifies worst-case latency.
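The three access patterns differ only in the order in which a buffer's cache lines are visited. The sketch below generates each ordering and counts how often consecutive accesses change page, a rough proxy for TLB pressure. It assumes 4KB pages and 64-byte cache lines, and it is our own illustration rather than Sandra's implementation; a real latency test would chase pointers in native code.

```python
import random

PAGE_BYTES = 4096   # assumed page size
LINE_BYTES = 64     # assumed cache-line size
LINES_PER_PAGE = PAGE_BYTES // LINE_BYTES


def sequential(n_lines):
    """Visit cache lines in address order."""
    return list(range(n_lines))


def in_page_random(n_lines, rng):
    """Visit pages in order, but shuffle the lines inside each page."""
    order = []
    for start in range(0, n_lines, LINES_PER_PAGE):
        page = list(range(start, min(start + LINES_PER_PAGE, n_lines)))
        rng.shuffle(page)
        order.extend(page)
    return order


def full_random(n_lines, rng):
    """Shuffle every line in the buffer, so consecutive accesses usually change page."""
    order = list(range(n_lines))
    rng.shuffle(order)
    return order


def page_switches(order):
    """Count consecutive accesses that land in different pages."""
    pages = [i // LINES_PER_PAGE for i in order]
    return sum(p != q for p, q in zip(pages, pages[1:]))


rng = random.Random(0)
n = 64 * LINES_PER_PAGE  # 64 pages, i.e. a 256KB buffer
for name, order in [("sequential", sequential(n)),
                    ("in-page random", in_page_random(n, rng)),
                    ("full random", full_random(n, rng))]:
    print(f"{name}: {page_switches(order)} page switches")
```

The sequential and in-page orderings change page only at page boundaries, while the full random ordering changes page on almost every access, which is what makes it the worst case.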

Regardless of the memory access pattern, the smallest data chunks fit into the L1 cache. And as the size of the data increases, it populates the larger caches.

|  | L1 | L2 | L3 | Main Memory |
|---|---|---|---|---|
| Range | 2KB - 32KB | 32KB - 512KB | 512KB - 8MB | 8MB - 1GB |
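Read as a lookup, the table maps a test buffer's size to the tier it exercises. The sketch below encodes that mapping. Because adjacent ranges share their boundary values in the table, treating each upper bound as inclusive is our assumption, as is the function name.

```python
KB, MB, GB = 1024, 1024**2, 1024**3

# Upper bound of each test range from the table; bounds treated as inclusive (an assumption).
TIERS = [("L1", 32 * KB), ("L2", 512 * KB), ("L3", 8 * MB), ("Main Memory", 1 * GB)]


def tier_for(size_bytes):
    """Return the tier whose test range a buffer of this size falls into."""
    if size_bytes < 2 * KB:
        raise ValueError("below the smallest tested size (2KB)")
    for name, upper in TIERS:
        if size_bytes <= upper:
            return name
    raise ValueError("above the largest tested size (1GB)")


for size in (16 * KB, 256 * KB, 4 * MB, 64 * MB):
    print(f"{size // KB}KB -> {tier_for(size)}")
```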

Threadripper 1950X features better L2 and L3 latency than the Ryzen 7 1800X with every type of access pattern. Also, we spot notable latency reductions via overclocking for Threadripper's L1, L2, and L3 caches.

That changes as the workload flows out to main memory. Threadripper's Creator mode (the default setting) has the highest latency with every access pattern. This is a direct result of memory accesses landing in the remote memory. Our in-page measurements mirror AMD's 86.9ns specification, but worst-case full random access exceeds 120ns. Overclocking the processor and memory lowers latency, but Creator mode still doesn't overtake any of the configurations we compare it to.

Switching into NUMA mode with the Local setting improves main memory access dramatically for the other configurations. We measure ~60ns for in-page near memory access, again in line with AMD's specifications, while worst-case latency weighs in at 100ns.

Cache Bandwidth

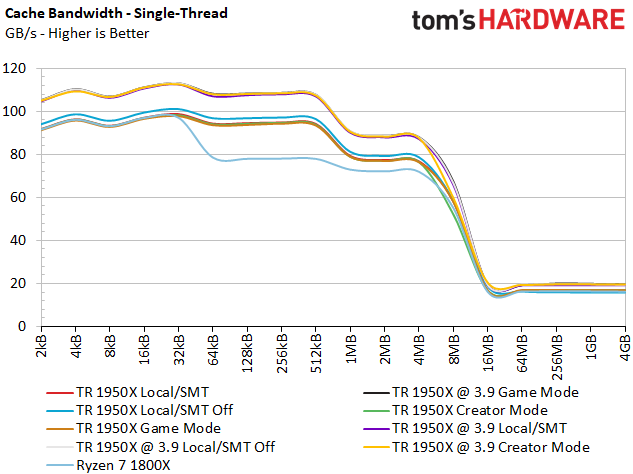

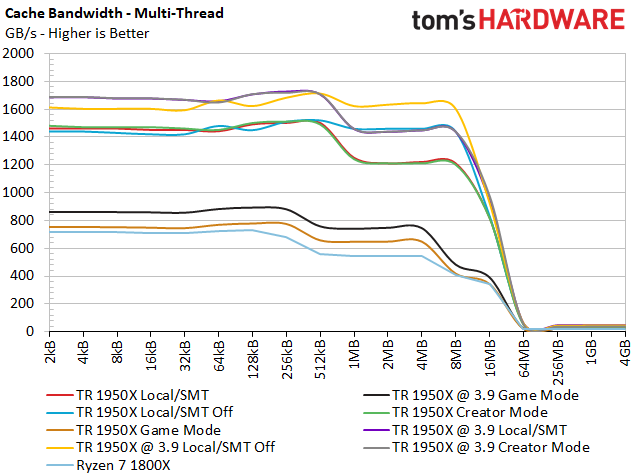

Each CCX has its own caches, so a Threadripper CPU features four distinct clusters of L1, L2, and L3 memory. Our bandwidth benchmark illustrates the aggregate performance of these tiers.

During the single-threaded test, Ryzen 7 1800X demonstrates lower throughput than the Threadripper processors. The other configurations clump together in familiar stock and overclocked groups.

The multi-threaded tests are more interesting; we see Ryzen 7 1800X and the two Threadripper Game modes fall to the bottom of the chart. Because Game mode disables the cores on one die, it effectively takes the corresponding cache out of commission.
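The arithmetic behind that drop is simple. The sketch below totals cache capacity for one and two active dies, assuming Zen's 32KB L1 data cache and 512KB L2 per core plus 8MB of L3 per CCX, with two four-core CCXes per die. The function name is ours, and the model ignores the L1 instruction cache.

```python
KB, MB = 1024, 1024**2


def aggregate_cache(active_dies, ccx_per_die=2, cores_per_ccx=4):
    """Total cache capacity in bytes across all active cores and CCXes."""
    ccxes = active_dies * ccx_per_die
    cores = ccxes * cores_per_ccx
    return {
        "cores": cores,
        "L1D": cores * 32 * KB,   # per-core L1 data cache
        "L2": cores * 512 * KB,   # per-core L2
        "L3": ccxes * 8 * MB,     # per-CCX L3
    }


creator_mode = aggregate_cache(active_dies=2)
game_mode = aggregate_cache(active_dies=1)

for name, caches in [("Creator mode", creator_mode), ("Game mode", game_mode)]:
    print(f"{name}: {caches['cores']} cores, "
          f"L2 {caches['L2'] // MB}MB, L3 {caches['L3'] // MB}MB")
```

With one die's cores disabled, every tier's aggregate capacity halves, leaving Game mode with the same totals as a single-die Ryzen 7 1800X in this model.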

Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.

-

beshonk "extra cores could enable more performance in the future as software evolves to utilize them better"

I can't believe we're still saying this in 2017. Developers suck at their job. -

sztepa82 "AMD aims Threadripper at content creators, heavy multitaskers, and gamers who stream simultaneously. It also says the processors are ideal for gaming at high resolutions. Ryzen Threadripper 1950X isn't intended for playing around at low resolutions, particularly in older, lightly-threaded titles. ____Still, we tested at 1920x1080____ ...."

Thank you for being out there for us, Tom's, no other website has ever done that. The only other thing we can hope for is that you'll also do a 2S Epyc 7601 review playing Project Cars in 320x240. -

shrapnel_indie "Each change requires a reboot, chewing up precious time as you save open projects, halt conversations, and try to remember which web browser tabs to relaunch."

not if you're running the right browser with the right options active. Firefox can remember the last tabs you had open and reopen them upon startup... of course this is within the last Firefox window closed, and you have to properly exit. (no killing the thread(s).) -

-Fran- Since this CPU (and Intel's X and XE line) are aimed for big spenders, when are you guys going to test multi GPU in these CPUs?

Also, you mentioned streaming as part of the big CPU charisma, but there was no actual test with it. Why not just run OBS with the same software encoding settings for each platform and run a game? It's not that hard to do, is it?

Cheers! -

Dyseman Quote- 'When I go to pause the video (ad) your site takes me to another tab. Bye, bye.'

It's easy enough to disable the JW Player with ublock. Those videos are not considered ads by adblockers, but you can tell it to block anything that uses JW Player, then whitelist any other site that needs to use it for NON-ADs. -

rhysiam Thanks for this investigation Toms, really thorough and interesting article.

It's interesting and a little disappointing that an OC to 3.9Ghz seems to pretty consistently achieve a small but measurable bump in gaming. The 1950X can use XFR to get to 4.2Ghz on lightly threaded workloads. Obviously in well-threaded games the CPU isn't going to be able to sustain 4.2Ghz, but it's a bit disappointing it can't manage 3.9-4ghz across the 4-6 cores used in gaming workloads. In fact, judging from the results it seems to be sitting around 3.7-3.8Ghz or so in most games. That seems low to me. There should be plenty of thermal and power headroom available to to get 4-6 cores up to nice high clocks, which should be enough cores for pretty much every game in the suite (except perhaps AOTS). If that was happening we'd see the OC making no difference, or even perhaps causing a slight performance regression in games (like it does in synthetic single-threaded tests). But clearly that's not the case.

It seems to me that AMD's power management implementation is resulting in some pretty conservative clock speeds in the 4-6 core workload range. That has implications outside of gaming as well, because 4-6 thread workloads are quite common even in the productivity and content creation space. It's hardly a deal breaker (we're only looking a couple of hundred mhz), but I'm curious whether others think AMD is giving up a little more performance than they should be here? Or am I missing something? -

jdwii 20187640 said: Thanks for this investigation Toms, really thorough and interesting article.

It's interesting and a little disappointing that an OC to 3.9Ghz seems to pretty consistently achieve a small but measurable bump in gaming. The 1950X can use XFR to get to 4.2Ghz on lightly threaded workloads. Obviously in well-threaded games the CPU isn't going to be able to sustain 4.2Ghz, but it's a bit disappointing it can't manage 3.9-4ghz across the 4-6 cores used in gaming workloads. In fact, judging from the results it seems to be sitting around 3.7-3.8Ghz or so in most games. That seems low to me. There should be plenty of thermal and power headroom available to to get 4-6 cores up to nice high clocks, which should be enough cores for pretty much every game in the suite (except perhaps AOTS). If that was happening we'd see the OC making no difference, or even perhaps causing a slight performance regression in games (like it does in synthetic single-threaded tests). But clearly that's not the case.

It seems to me that AMD's power management implementation is resulting in some pretty conservative clock speeds in the 4-6 core workload range. That has implications outside of gaming as well, because 4-6 thread workloads are quite common even in the productivity and content creation space. It's hardly a deal breaker (we're only looking a couple of hundred mhz), but I'm curious whether others think AMD is giving up a little more performance than they should be here? Or am I missing something?

Ryzen hits a certain point in return pretty darn fast for example CPU might only use 1.15V to get 3.6ghz stable but 3.9ghz needs like 1.3V way to much. -

papality 20185985 said: "extra cores could enable more performance in the future as software evolves to utilize them better"

I can't believe we're still saying this in 2017. Developers suck at their job.

Intel's billions had a lot to say in this.