JMicron Returns: The JMF667H Controller On Four Reference SSDs

It's rare that we get the chance to test SSDs before they hit production. But after waltzing with Silicon Motion's SM2246EN platform last year, JMicron offered us a handful of reference drives with different types of flash, all driven by the new JMF667H.

Results: Random Performance

We turn to Iometer as our synthetic metric of choice for testing 4 KB random performance. Technically, "random" translates to a consecutive access that occurs more than one sector away. On a mechanical hard disk, this can lead to significant latencies that hammer performance. Spinning media simply handles sequential accesses much better than random ones, since the heads don't have to be physically repositioned between sequential requests. With SSDs, the random/sequential access distinction is much less relevant. Data is put wherever the controller wants it, so the idea that the operating system sees one piece of information next to another is mostly just an illusion.
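To make that definition concrete, here is a minimal Python sketch that labels each access in a trace as sequential or random. The trace format (a start LBA plus a length in sectors), the function name `classify_accesses`, and the 512-byte sector size are all assumptions for illustration; none of this reflects how Iometer works internally.

```python
def classify_accesses(accesses):
    """Label every access after the first as 'sequential' or 'random'.

    accesses: list of (start_lba, sector_count) tuples (hypothetical format).
    An access counts as sequential only if it starts at the sector right
    after the previous access ended; anything else is treated as random.
    """
    labels = []
    for (prev_lba, prev_len), (lba, _) in zip(accesses, accesses[1:]):
        if lba == prev_lba + prev_len:
            labels.append("sequential")
        else:
            labels.append("random")
    return labels


# Each access is 8 sectors; with an assumed 512-byte sector that is 4 KB.
trace = [(0, 8), (8, 8), (16, 8), (4096, 8), (4104, 8), (24, 8)]
print(classify_accesses(trace))
```

On a hard disk, every "random" label in a trace like this implies a head seek. On an SSD, the logical addresses say little about where the data physically sits in flash.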

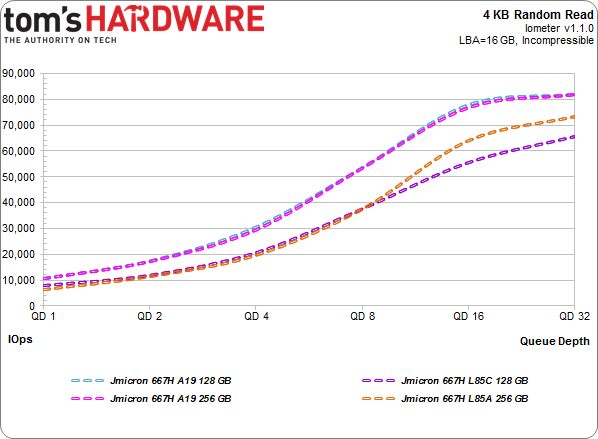

4 KB Random Reads

Testing the performance of SSDs often emphasizes 4 KB random reads, and for good reason. Most system accesses are both small and random. Moreover, read performance is arguably more important than writes when you're talking about typical client workloads.

The controller is "only" good for about 80,000 4 KB IOPS with the fastest flash around. The ONFi-equipped L85 variants appear under that peak, while the A19-equipped configurations are even from a queue depth of one through 32. The low-queue-depth results are the most relevant to desktop users, and they're all clustered around 10,000 IOPS.

Follow the lines upward, though, and it's plain that Toshiba's A19 flash offers a big performance bump at every step. The two Toggle-mode-equipped drives plateau at a queue depth of 16, but that could be the controller running out of steam. After all, Plextor's M6S (also with A19 NAND, but a four-channel Marvell processor) gets close to 100,000 in this same workload.

Granted, pushing peaks for the sake of spec sheets doesn't make much sense when nobody in a desktop environment will ever see them outside of freakishly improbable circumstance...
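For a sense of scale, the IOPS figures quoted above can be converted into throughput and average latency. The sketch below is plain arithmetic rather than measured data: it assumes 4,096-byte transfers and decimal megabytes, and it uses Little's Law (outstanding requests = throughput × latency) to estimate mean latency. The function names are made up for this example.

```python
def iops_to_mb_per_s(iops, block_bytes=4096):
    """Convert an IOPS figure to MB/s (decimal megabytes, 4 KB blocks assumed)."""
    return iops * block_bytes / 1_000_000


def mean_latency_us(iops, queue_depth):
    """Estimate mean per-request latency in microseconds via Little's Law."""
    return queue_depth / iops * 1_000_000


# IOPS figures quoted in the text; the labels are only for display.
for label, iops, qd in [
    ("QD1 cluster", 10_000, 1),
    ("JMF667H peak", 80_000, 32),
]:
    print(f"{label}: {iops_to_mb_per_s(iops):.2f} MB/s, "
          f"~{mean_latency_us(iops, qd):.0f} us per request at QD{qd}")
```

By this estimate, the 10,000 IOPS seen at a queue depth of one corresponds to roughly 100 microseconds per read, which is the number a desktop user actually feels.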

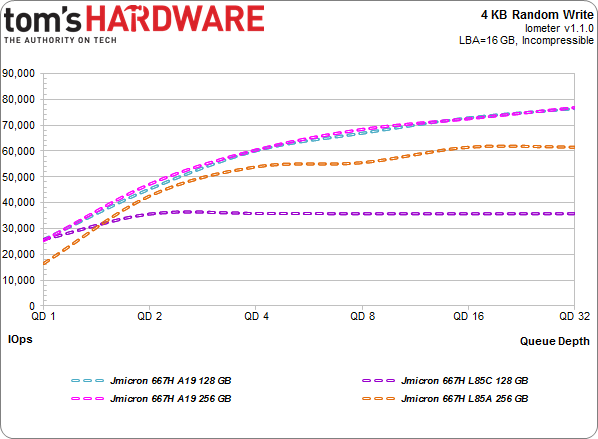

4 KB Random Writes


Again, the A19-based models track closely, reaching 80,000 IOPS. This is because the 128 GB sample employs the same number of dies as the 256 GB model. JMicron's bigger reference SSD simply uses denser 128 Gb dies.
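The die-count point is simple arithmetic, sketched below. One assumption is not stated in the text: that the 128 GB sample uses 64 Gb dies, which is inferred from the "same number of dies" remark. The four-channel figure comes from the controller's design; `die_count` is a hypothetical helper, and over-provisioning and spare area are ignored.

```python
def die_count(capacity_gb, die_density_gbit):
    """Number of NAND dies needed for a raw capacity (GB) at a given die density (Gb)."""
    return capacity_gb * 8 // die_density_gbit


CHANNELS = 4  # the JMF667H is a four-channel controller

# 64 Gb for the 128 GB drive is an ASSUMPTION inferred from the text.
for capacity_gb, density_gbit in [(128, 64), (256, 128)]:
    dies = die_count(capacity_gb, density_gbit)
    print(f"{capacity_gb} GB with {density_gbit} Gb dies: "
          f"{dies} dies, {dies // CHANNELS} per channel")
```

With equal die counts, both drives offer the controller the same amount of parallelism, which is consistent with their near-identical write curves.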

The 256 GB model armed with L85A doesn't make it much past 60,000 IOPS, while the L85C-based drive never even sees 40,000. It does, however, fare much better at a queue depth of one than the L85A-based version. The four-channel design does appear to extract maximum performance from each respective NAND interface.

But we're not going to use theoretical corner cases (the sequential and random 4 KB benchmarks we just ran) to crown one configuration a winner and another a loser. Really, the trace-based metrics that follow are better for formulating conclusions. And doubly so for these JMF667H platforms, since you can't go out and buy any of them.



Snipergod87 Page 6: "For every 1 GB the host asked to be written, Mushkin's drive is forced to write 1.05 GB."

Mushkin drive? Too much copy-paste.

koolkei guys. please take a look at this

http://www.tweaktown.com/reviews/6052/kingfast-c-drive-f8-series-240gb-ssd-review-cheapest-tested-240gb-drive-so-far/index.html

that's an actual SSD using this controller, and the price is........ a little more than surprising...

pjmelect I remember their USB to IDE SATA chip. It caused data corruption every 4 GB or so when transferring data via the IDE interface. I have always been wary of their products since then.

tripleX "But we're not going to use theoretical corner cases (the sequential and random 4 KB benchmarks we just ran) to crown one configuration a winner and another a loser."

A corner case is not sequential and random benchmarks. It is an engineering term that means, according to Wiki:

A corner case (or pathological case) is a problem or situation that occurs only outside of normal operating parameters—specifically one that manifests itself when multiple environmental variables or conditions are simultaneously at extreme levels, even though each parameter is within the specified range for that parameter.


g00ey JMicron has always made pretty shitty products so I won't buy any of these anytime soon...

2Be_or_Not2Be I, too, find it hard to want to purchase a drive from a manufacturer with such a lackluster history.

One part of this article that also doesn't make sense: "Why four channels and not eight? Efficiency is one key motivator. Fewer channels facilitate a smaller ASIC, which can, in turn, be more power-friendly." Compare the size of the PCB to one like the Samsung 850 Pro. They aren't saving much in real estate (they are actually bigger than the Samsung boards), so it makes it hard to believe they're saving much in power here.