Samsung Galaxy S5 Smartphone Review

Will the gravity well from Galaxy S5 capture your interest, or will you streak past with escape velocity?


Camera: Hardware

When the Galaxy S5 launched as Samsung's flagship device at the beginning of 2014, it came with a couple of key advancements, including Samsung's own ISOCELL camera sensor and phase detection autofocus (PDAF). In the months that followed, several other flagship phones appeared, each with their own camera improvements. So, can the S5's camera still compete with these newer devices?

Samsung Galaxy S5 Camera Specs

The Galaxy S5 still has one of the highest megapixel counts, the newer Xperia Z3 being one of only a few phones to have more. However, packing more pixels into the same sensor area results in smaller pixels that capture less light.

Balancing resolution with pixel size is challenging, but as you’ll see later in our image quality section, Samsung's ISOCELL sensor is reasonably successful at combining lots of detail with respectable low-light performance. Even so, the cameras in the HTC One (M8) and iPhone 6 still outperform the S5 in challenging light thanks to their sensors' much larger pixels.
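To put the pixel-size trade-off in rough numbers, here is a minimal Python sketch comparing light-gathering area per pixel, assuming that area scales with the square of the pixel pitch. The 1.12µm figure for the S5 is quoted later in this review; the 1.5µm (iPhone 6) and 2.0µm (HTC One (M8)) values are commonly cited figures used here as assumptions, and the function name is purely illustrative.

```python
# Pixel pitch in micrometres. The S5 value comes from this review;
# the other two are assumed, commonly cited figures.
PIXEL_PITCH_UM = {
    "Galaxy S5": 1.12,
    "iPhone 6": 1.5,
    "HTC One (M8)": 2.0,
}


def relative_pixel_area(pitch_um, reference_um):
    """Area of one pixel relative to a reference pixel (area ~ pitch squared)."""
    return (pitch_um / reference_um) ** 2


if __name__ == "__main__":
    reference = PIXEL_PITCH_UM["Galaxy S5"]
    for phone, pitch in PIXEL_PITCH_UM.items():
        ratio = relative_pixel_area(pitch, reference)
        print(f"{phone}: {ratio:.2f}x the per-pixel area of the Galaxy S5")
```

Under these assumptions, each iPhone 6 pixel has roughly 1.8 times the area of an S5 pixel, and each One (M8) pixel roughly 3.2 times. This ignores sensor design differences such as ISOCELL, so treat it as a first-order estimate only.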

As for the optics in front of the sensor, you can see that all of the phones being compared have similar specifications, apart from the Z3, whose lens combines a wide aperture with a wide-angle focal length, allowing you to fit more of the scene in the frame. Other than the new sensor, the S5’s camera specs match those of its predecessor, the S4.

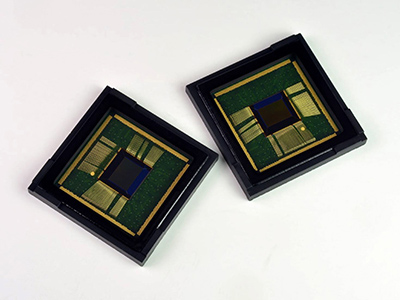

Samsung's ISOCELL Sensor and Phase Detection AF

In previous Galaxy phones, Samsung relied on image sensors from other vendors, namely Sony. The Galaxy S4 used the 13MP IMX091PQ, and the Note 3 used the 13MP Sony IMX135 Exmor RS, which added 4K video (the Note 3 being one of the first phones to record in UHD). For the S5, Samsung decided to change things up and use one of its own ISOCELL sensors, announced back in 2013.

Samsung claims that its ISOCELL technology “substantially increases light sensitivity” and allows for “higher color fidelity even in poor lighting conditions.” This is despite the fact that the 1/2.6” sensor’s pixels still measure a small 1.12µm, the same as in the S4 and many other phones using a 13MP Sony sensor. ISOCELL achieves this by adding physical barriers between adjacent cells, which reduces the crosstalk between them.

It's interesting to note that the Galaxy S5’s sensor shoots natively at 16:9. At the time of its release, HTC's One M7 and M8 were the only other phones that shot in this aspect ratio. When the Note 4 was released later in the year, its native aspect ratio was also 16:9, despite it using a different sensor. It appears that Samsung will be adopting this standard for the foreseeable future.


The other innovation that Samsung introduced with the Galaxy S5 is phase detection autofocus (PDAF), with the S5 being the first smartphone to implement this technology. PDAF works alongside the traditional contrast AF system (found in most other smartphones) to give the S5 much faster focusing times. Contrast AF uses the SoC’s ISP to compare the contrast of nearby pixels at different focus positions and settles on the position with the highest contrast. This system is not processor-intensive, but it is relatively slow because the lens has to step through several positions. PDAF is a lot faster (Samsung claims the S5 can focus on its subject in as little as 300ms). It works by measuring the offset, or phase difference, between light arriving from opposite sides of the lens, as sampled by dedicated pixels beneath microlenses on the sensor; that offset indicates how far, and in which direction, the lens needs to move. Samsung's implementation works well, and seems just as fast as the PDAF system used by the iPhone 6.
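The difference between the two approaches can be illustrated with a toy one-dimensional model in Python. This is only a sketch under stated assumptions, not Samsung's implementation: the scene, the blur model, the lens positions and every function name are hypothetical. Contrast AF is modeled as trying each lens position and keeping the sharpest capture, while PDAF is modeled as finding the pixel offset that best aligns two views of the same edge.

```python
# Toy 1D model of two autofocus strategies. All names and numbers are illustrative.

SCENE = [0] * 16 + [100] * 16  # one row of pixels containing a single sharp edge
IN_FOCUS_POSITION = 7          # hypothetical lens position at which SCENE is sharp


def box_blur(row, radius):
    """Blur a row with a box filter, replicating the edge pixels."""
    n = len(row)
    blurred = []
    for i in range(n):
        window = [row[min(max(j, 0), n - 1)] for j in range(i - radius, i + radius + 1)]
        blurred.append(sum(window) / len(window))
    return blurred


def capture_row(lens_position):
    """Simulate a capture: blur grows with distance from the in-focus position."""
    return box_blur(SCENE, abs(lens_position - IN_FOCUS_POSITION))


def contrast_score(row):
    """Sum of squared differences between neighbouring pixels (higher is sharper)."""
    return sum((b - a) ** 2 for a, b in zip(row, row[1:]))


def contrast_af(capture, lens_positions):
    """Contrast AF stand-in: capture at every position and keep the sharpest."""
    return max(lens_positions, key=lambda position: contrast_score(capture(position)))


def shift_row(row, offset):
    """Shift a row right by offset pixels (negative shifts left), replicating edges."""
    n = len(row)
    return [row[min(max(i - offset, 0), n - 1)] for i in range(n)]


def phase_shift(left, right, max_shift):
    """PDAF stand-in: find the offset that best aligns the right view with the left."""
    best_shift, best_error = 0, float("inf")
    for shift in range(-max_shift, max_shift + 1):
        pairs = [(left[i], right[i + shift])
                 for i in range(len(left)) if 0 <= i + shift < len(right)]
        error = sum((a - b) ** 2 for a, b in pairs) / len(pairs)
        if error < best_error:
            best_shift, best_error = shift, error
    return best_shift


if __name__ == "__main__":
    # Contrast AF needs one capture per candidate lens position (15 here).
    print("contrast AF picks lens position", contrast_af(capture_row, range(15)))
    # PDAF needs a single pair of views; the sign of the offset gives the direction.
    print("phase offset in pixels", phase_shift(SCENE, shift_row(SCENE, 3), max_shift=5))
```

In this model, contrast AF has to evaluate every candidate lens position before it can choose one, whereas the phase comparison yields both the size and the sign of the correction from a single pair of views. That is the intuition behind PDAF's speed advantage.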


grumpigeek: My Galaxy S5 is in an Urban Armor Gear case that looks great and protects the phone, so I don't really care what it looks like.

The device is 100% reliable and I have found the battery life to be excellent - way better than any smartphone I have had previously.


firefoxx04: I have an s5. This review would have been welcomed a year ago.

The phone is top notch. I've known this for a while.

implantedcaries: Guys you are reviewing a mobile which was released a year back and then calling it average compared to competition?? Seriously? Yes I agree S5 is not the most exciting prospect out there for new mobile buyers now, but it wasn't so in 2014 when it was actually launched. Also it's one of the very few mobiles already receiving Lollipop updates.. No mention of that.. Any hidden agenda against Samsung?

FritzEiv: Folks, you're right. This review is quite late. We began testing the S5 a long time ago, but we've had a bit of a backlog of smartphones to review since Matt (our senior mobile editor) started on staff and we're just catching up. We aren't trying to pretend it's a new phone, thus we haven't put it up in our main feature carousel; but we did want to publish this and others just to have them for archival and future referral and comparison purposes. We are working on other smartphones that are a little more current and then we hope to be "on time" as new ones arrive. Hence, for example, Matt's performance preview of Qualcomm's Snapdragon 810 earlier this week. We've been a bit more timely on devices like the OnePlus and the iPhone reviews as well. But hey, continue your sarcasm, because we probably deserve it. Just want you to know why we are doing this, that we're not trying to fool anyone, and that we'll be caught up in short order. Thanks for your patience.

- Fritz (Editor-in-chief)

Mac266: One thing in this review irritated me: the whole "it's ugly" thing. It might not suit you, but lots of people like the way it looks. Aesthetics are purely subjective, and should definitely not be judged a con on one man's opinion.

peterf28: I am not buying a smartphone again where the chipset drivers are not open source. Like what Samsung did with the S3, it is stuck on Android 4.3, and there is nothing you can do. All the custom roms are unstable crap because there are no up to date drivers for current kernels. It is like buying a PC without the possibility to update the OS. Would you buy that?

jdrch: FYI phone speakers are placed on the back of phones to take advantage of acoustics when the phone is lying on a surface. The surface spreads and reflects the sounds back to the user much better than the speaker itself would. Try it yourself.

![Phase Detection Autofocus [Image Source: Wikipedia]](https://cdn.mos.cms.futurecdn.net/y7iMXbSAXGpZzMBT3sEjs3.png)