The five best Nvidia GPUs of all time: Looking back at over 20 years of Nvidia history

The best gaming GPUs from team green.

Founded in 1993, Nvidia has seldom known defeat. It has bankrupted and forced many of its competitors out of the market, and it's largely thanks to high quality products. It makes many of the best graphics cards, and has been a primary pusher of the hardware that's enabling deep learning and AI. Still, there are shortcomings, like the high-priced RTX 40-series GPUs that power its latest cards, with some questionable features like Frame Generation.

But let's get out of the somewhat depressing present and look back at Nvidia's exciting past. It's extraordinary how many great GPUs Nvidia has made over the 24 years since the GeForce brand was established, and most of them were able to go head-to-head with AMD's best. Here are — in our opinion — Nvidia's five best-ever gaming GPUs, starting from the bottom to the top, and considering both individual cards and families as a whole.

5 — GeForce RTX 3060 (12GB)

On paper, the RTX 30-series sounded pretty good in 2020. It featured a more fleshed out architecture with improved ray tracing and tensor cores, it offered tons more raw performance, and it even returned to an attractive pricing structure. In practice, retail pricing was nowhere near where it should have been. It was hard to find a 30-series GPU at anything close to MSRP — or any graphics card of the time, for that matter.

Nevertheless, in the months following the launch of the 30-series in late 2020, Nvidia continued to add new models to the lineup, working its way down the performance stack. The midrange was particularly important, as the RTX 2060 and 2060 Super from the prior RTX 20-series weren't exactly amazing follow-ups to the GTX 1060. Given that buyers would snap up any GPU with a pulse in 2021, Nvidia didn't really have to put much effort into a new GPU, but it surprised everyone with the RTX 3060.

Nvidia doesn't always nail its midrange offerings, but the RTX 3060 was a great exception. It was a significant step up from the RTX 2060 in performance, and most notably had double the VRAM at 12GB. That was more VRAM than even the regular RTX 3080 with its 10GB (though there was a 3080 12GB that wasn't really available). Such a big improvement gen-on-gen was pretty remarkable for Nvidia. (We should also note that we're not including the gimped RTX 3060 8GB in this discussion.)

What was even more remarkable was how AMD's competing midrange cards, which were normally quite potent, weren't all that powerful. The RX 6600 and 6600 XT had decent horsepower but only 8GB of VRAM, had to rely on inferior FSR 1.0 upscaling instead of DLSS, and came out months later. AMD competed pretty well against the rest of the 30-series and usually had a VRAM capacity advantage, but the Navi 23 cards were the exception.

Of course, the GPU shortage was a thing, and the RTX 3060 wasn't immune — even with Nvidia's first "self-owned" attempt at locking out Ethereum mining. Eventually the shortage subsided in 2022, and that made the 3060 one of the most affordable GPUs. The 3060 never quite reached its MSRP of $329, while the 6600 and 6600 XT both fell well below $300 in time. Additionally, FSR 2.0 offered quality and performance improvements that made it more competitive with DLSS, further reducing the 3060's advantage.

Still, the RTX 3060 stands as one of Nvidia's best midrange GPUs ever. Beyond pricing and availability issues, it was a great card that certainly gave AMD a run for the money. It was also uniquely good among the rest of the 30-series, which was almost always way too expensive and/or paired with not nearly enough VRAM to make much sense. It's a pity the RTX 4060 threw most of that progress away.

4 — GeForce GTX 680

It's rare for Nvidia to make a serious mistake, but one of its worst was the Fermi architecture. First featured in the GTX 480 in mid-2010, Fermi was not what Nvidia needed as it only offered a modest performance boost over the 200-series while consuming tons of power. Things were so bad that Nvidia rushed out a second version of Fermi and the GTX 500-series before 2010 ended, which thankfully resulted in a more efficient product.

Fermi doubtlessly caused Nvidia to do a little soul searching, and the company rethought its traditional strategy. For most of the 2000s, Nvidia lagged behind Radeon (owned first by ATI and then AMD) when it came to nodes. While newer nodes offered better efficiency, performance, and density, they were also much more expensive to use, and there were often "teething pains." By the mid 2000s, Nvidia's main strategy was to make big GPUs on older nodes, which often was enough to put GeForce in first place.

The experience with Fermi was so traumatic for Nvidia that the company decided that it would get to the 28nm node right alongside AMD in early 2012. Kepler, Nvidia's first 28nm GPU, was a different chip than Fermi and prior Nvidia architectures. It used the latest process, its biggest version was relatively lean at just under 300mm2, and it offered great efficiency. The contest between the rival flagships from Nvidia and AMD was set to be very different in 2012.

Although AMD fired the first shot with its HD 7970, Nvidia countered three months later with its Kepler-powered GTX 680. Not only was the 680 faster than the 7970, it was more efficient and smaller, which were the very areas where AMD excelled with the HD 4000- and 5000-series GPUs. Granted, Nvidia only had a thin lead in these metrics, but it was rare — maybe even unprecedented — that Nvidia was ahead in all three.

Nvidia didn't keep the performance crown for long with the arrival of the HD 7970 GHz Edition and better performing AMD drivers, but Nvidia still held the edge in power efficiency and area efficiency. Kepler continued to give AMD trouble, as a second revision powered the GTX 700-series and forced the launch of a very hot and power hungry Radeon R9 290X. True, the R9 290X did beat the GTX 780, but it was very Fermi-like, and the GTX 780 Ti took back the crown anyway.

Although not particularly well-remembered today, the GTX 680 probably should be. Nvidia achieved a very impressive improvement over the disappointing Fermi architecture using AMD's own playbook. If the 680 has faded from memory, that might be because it got overshadowed by a later GPU that did the same thing but even better.

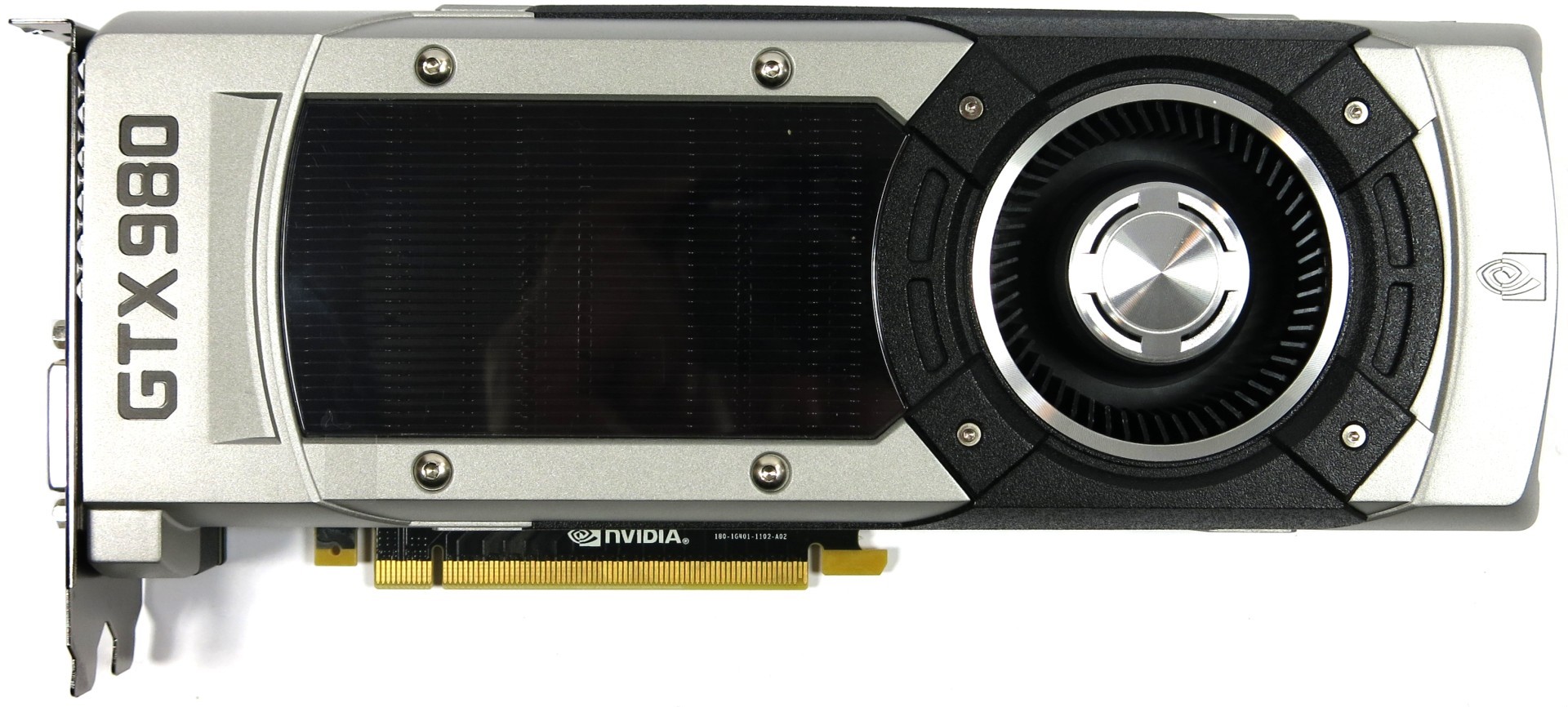

3 — GeForce GTX 980

From the emergence of the modern Nvidia versus AMD/ATI rivalry in the early 2000s to the early 2010s, both GeForce and Radeon traded blows generation after generation. Sure, Nvidia won most of the time, but usually ATI (and later AMD) weren't far behind; the only time where one side was totally beaten was actually when ATI's Radeon 9700 Pro decimated Nvidia's GeForce 4 Ti 4600. However, Nvidia came pretty close to replicating this scenario a couple of times.

By the mid-2010s, the stars must have aligned for Nvidia. Semiconductor foundries around the world were having serious issues getting beyond the 28nm node, including Nvidia's and AMD's GPU manufacturing partner TSMC. This meant that Nvidia could get comfortable with its old strategy of making big GPUs on old nodes without worrying about AMD countering with a brand-new node. Additionally, as AMD was risking bankruptcy, it effectively had no resources to compete with a wealthy Nvidia.

These two factors coinciding at the exact same time made for the perfect storm. Nvidia had already done a very respectable job with the Kepler architecture with the GTX 600- and 700-series, but the brand-new Maxwell architecture for the GTX 900-series (and the GTX 750 Ti) was something else. It squeezed even more performance, power efficiency, and density out of the aging 28nm node.

The flagship GTX 980 wiped the floor with both AMD's R9 290X and Nvidia's own last-gen GTX 780 Ti. The GTX 980 was faster, more efficient, and smaller, but unlike the 680, the 980's leads were absolutely massive in these regards. The 980 was nearly twice as efficient as the 290X, performed about 15% or so faster, and shaved off nearly 40mm2 in die area. Compared to the 780 Ti, the 980 was almost 40% more efficient, about 10% faster, and had a die size over 160mm2 smaller.

This wasn't a victory quite on the level of the Radeon 9700 Pro, but it was massive all the same. It was essentially on par with what AMD did to Nvidia with the HD 5870. Except, instead of responding with a bad GPU, AMD had nothing to throw back at Nvidia. All AMD could do in 2014 was hang on for dear life with its aging Radeon 200-series.

In 2015, AMD tried its best to compete again, but only at the high-end. It decided to refresh the Radeon 200-series as the Radeon 300-series from the low-end to the upper midrange, and then use its brand-new Fury lineup for the top-end. Nvidia however had an even bigger Maxwell GPU waiting in the wings to cut AMD's hopeful R9 Fury X off, and the GTX 980 Ti did exactly that. With 6GB of memory, the 980 Ti became the obvious choice over the 4GB-equipped Fury X (which was actually a decent card otherwise).

Though a great victory for Nvidia, the GTX 900-series permanently altered the landscape of gaming graphics cards. The Fury X was AMD's last competitive flagship until the RX 6900 XT in 2020, and that was largely because AMD stopped making them every generation. AMD is back to regularly making flagship GPUs (knock on wood), but Maxwell really mauled Radeon and it didn't recover for many years.

2 — GeForce 8800 GTX

The early 2000s saw the emergence of modern graphics cards as both Nvidia and ATI made progress in crucial areas. Nvidia's GeForce 256 introduced hardware accelerated transform and lighting visuals, while ATI's Radeon 9700 Pro revealed that GPUs should pack more computational hardware and could be really big. When Nvidia took a big loss at the hands of the 9700 Pro in 2002, it really took that lesson to heart and began making bigger and better GPUs.

Although ATI had started the arms race, Nvidia was dead set on winning it. Both Nvidia and ATI had made GPUs as large as 300mm2 or so by late 2006, but Nvidia's Tesla architecture went up to nearly 500mm2 with the flagship G80 chip. Today, that's a pretty typical size for a flagship GPU, but back then it was literally never-before-seen.

Tesla debuted with the GeForce 8800 GTX in late 2006, and it delivered a blow to AMD not far off of what the Radeon 9700 Pro did to Nvidia just four years prior. Size was the deciding factor between the 8800 GTX and ATI's flagship Radeon X1950 XTX, which was almost 150mm2 smaller. The 8800 GTX was super fast, as well as pretty power hungry for the time, so you also have the 8800 GTX to thank for normalizing GPUs with 150+ watt TDPs — even if that seems pretty quaint nowadays.

Although ATI was the one to invent the BFGPU, it couldn't keep up with the 8800 GTX. The HD 2000-series, which only got as large as 420mm2, couldn't catch up to the G80 chip and Tesla architecture. ATI instead changed tactics and began to focus on making smaller, more efficient GPUs with greater performance density. The HD 3000-series flagship HD 3870 was surprisingly small at just under 200mm2, and the following HD 4000- and 5000-series would follow with similarly small die sizes.

More recently, Nvidia tends to follow up powerful GPUs with even more powerful GPUs to remind AMD who's boss, but back then Nvidia wasn't quite like that. The Tesla architecture was so good that Nvidia decided to use it again for the GeForce 9000-series, which was pretty much the GeForce 8000-series with a slight performance bump. Granted, the 9800 GTX was almost half the price of the 8800 GTX, but it still made for a boring GPU.

Although the 8800 GTX is pretty old now, it's remarkable how modern it is in other ways. It had a die size consistent with today's high-end GPUs, it used a cooler with aluminum fins, and it had two 6-pin power connectors. It only supported up to DirectX 10, which didn't really go anywhere, so it can't really be used for modern gaming, but otherwise it's very recognizable as a modern GPU.

1 — GeForce GTX 1080 Ti

Nvidia was on an incredible streak with its 28nm GPUs: the GTX 600-, 700-, and 900-series. They had all successfully beaten back AMD's competing cards, with each victory greater than the last. AMD essentially went on a break from making flagship GPUs after the Fury X, which left Nvidia as the exclusive maker of high-end GPUs going into the next generation.

Ironically for AMD, it threw in the towel just as TSMC's brand-new 16nm node was getting ready for volume production. AMD couldn't afford to produce graphics cards at TSMC, instead relying on its old CPU fabbing partner GlobalFoundries, which had licensed Samsung's 14nm. But don't get confused: TSMC's 16nm was definitely the better node.

For its part, Nvidia already had a great architecture from the GTX 900-series, and it decided to go from 28nm to 16nm. By opting for TSMC's 16nm, Nvidia for the first time in a very long time was ahead of AMD when it came to process nodes, and it was a substantial lead at that. The 16nm Pascal architecture was in many ways just a shrunk down Maxwell, and the few new features it introduced were mostly for VR, which didn't take off as Nvidia (and AMD) expected. There was also VXAO, Voxel Ambient Occlusion, which was used in precisely one game ever: Rise of the Tomb Raider, though it did pave the way for ray tracing in some ways.

Being a mere shrink didn't make the GTX 10-series any less potent. The GTX 1080 as the flagship GPU in 2016 made the GTX 980's improvements over the GTX 700-series look small. Compared to the previous flagship 980 Ti, the 1080 was roughly 30% faster, nearly twice as efficient, and close to half the size. Maxwell was clearly held back by the 28nm node, and the 16nm shrink with Pascal fixed that.

As in 2014, AMD had no new flagships ready to meet the GTX 1080 and 1070, and had to rely on the old R9 Fury X and regular R9 Fury, plus the compact R9 Nano. What AMD launched instead was its lower-end to midrange RX 400-series, which was inaugurated by the RX 480. Though the 480 was a good card in its own right, Nvidia's competing GTX 1060 was also quite good, featuring the same great efficiency that the 1080 displayed. Driver updates and the 480's 2GB extra VRAM helped AMD stay competitive in the midrange GPU sector, which became the company's bread and butter at this point.

With the GTX 1080, Nvidia didn't just win in 2016 but also 2017 when AMD's RX Vega flagship GPUs finally came out. AMD could only barely manage to match the 1080 and 1070 in performance, but lagged behind substantially in efficiency. Of course, Nvidia preempted AMD with an even larger Pascal GPU, the GTX 1080 Ti, and did so by three months. The Fury X could at least claim to mostly tie the 980 Ti, but the Vega 64 couldn't even touch the 1080 Ti.

Today, the GTX 10-series is fondly remembered for its great performance, efficiency, and pricing. It's arguably Nvidia's last great series of graphics cards, as none of the RTX 20-, 30-, or 40-series has really replicated the 10-series' wide and diverse product stack. Nvidia had the time to not only put out $700 flagships like the GTX 1080 but also a $100 GTX 1050, which was pretty good for the time. The GTX 10-series coincided with what was in many ways one of the best times to be a PC gamer.

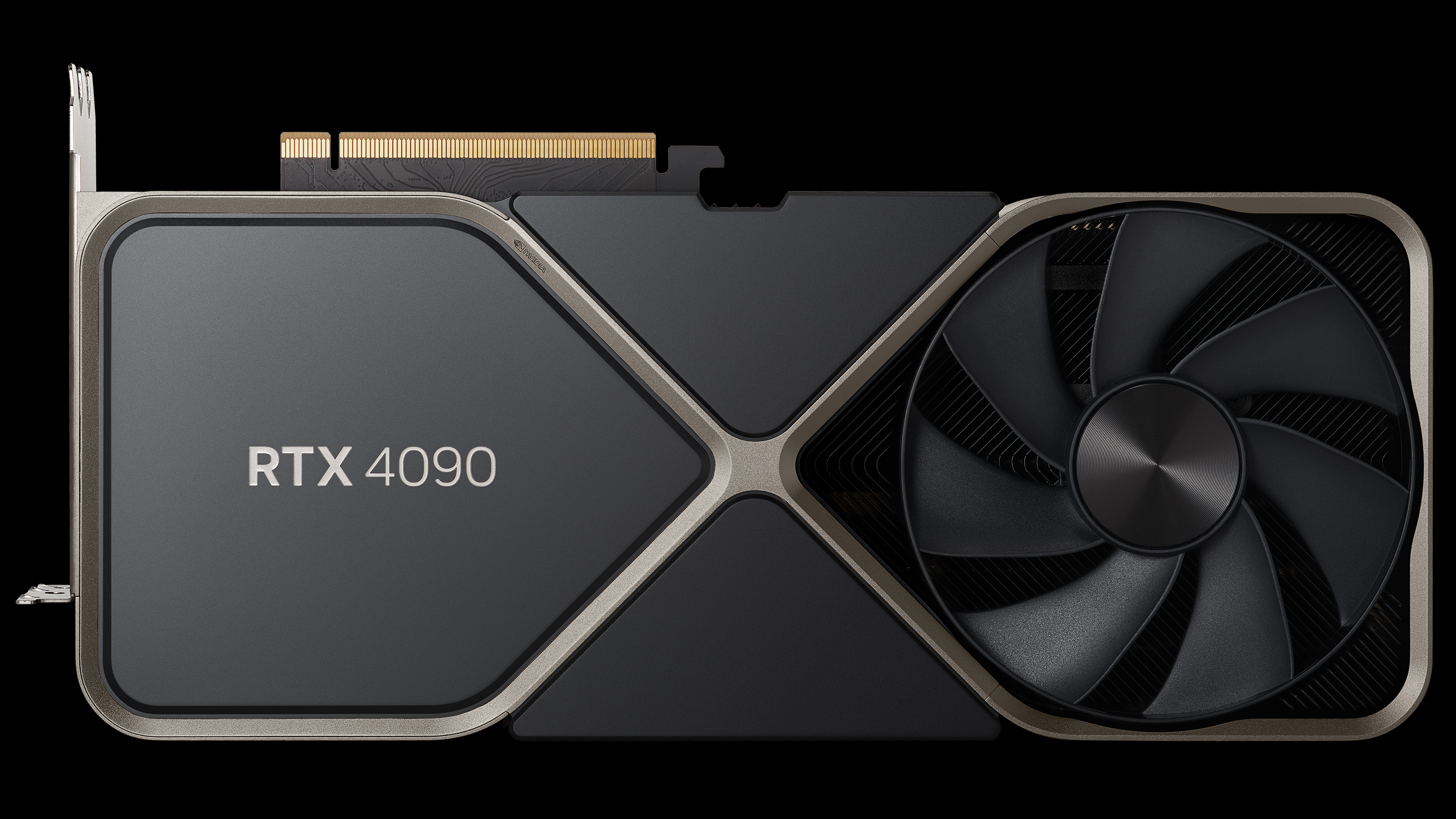

Honorable and Dishonorable Mention — GeForce RTX 4090

The RTX 4090 is conspicuously missing from this list, and there was lots of discussion behind the scenes on whether to include it or not. It's definitely a fast GPU with great hardware behind it, but it's also really complicated. We ended up deciding that it earns an honorable/dishonorable mention to wrap things up. It was the best of GPUs, it was the worst of GPUs...

The 4090 isn't unlike the 980 Ti and the 1080 Ti from way back when, as it's currently the fastest GPU for gaming by a decent margin. AMD's competing RX 7900 XTX isn't that far behind in raw horsepower, but the 4090 gains a massive lead in ray tracing, and DLSS is a decent bit better than FSR. Though the 4090's performance lead is far from the blowout we saw with the 1080 Ti, it's still just about in a class of its own.

That's all counteracted by two highly negative attributes of the 4090 that are really hard to ignore. The whole power situation with the 4090 is unpleasant at best, with the 4090 consuming well over 400 watts and using the problematic 12VHPWR connector. Although the power plug is now discontinued and has been revised, that doesn't change anything for the many 4090 cards out there that were made prior to the redesign. The 4090 could probably be called a disaster for this alone, as even Fermi didn't combust.

The 4090 is also emblematic of just how expensive PC gaming has gotten in the past five years. With an MSRP of $1,600, it costs as much as a whole PC with some decent gaming hardware. Of course, the 4090 is only following the lead of the 3090 when it comes to pricing, but the 3090 was originally envisioned more as a Titan-class, luxury GPU. The RTX 3080 performed just behind the RTX 3090; the RTX 4080 is much slower than the RTX 4090.

It's really hard to say that the 4090 is a direct line successor to the 980 Ti and 1080 Ti when it's just so expensive. The 980 Ti and 1080 Ti were praised for how cheap they were relative to their performance, with the former costing $649 and the latter $699 at launch. Today, you can't even find a 4090 for its already ludicrous MSRP of $1,599 — the cheapest units tend to cost $2,000 or more.

It's already pretty obvious that the RTX 4090, though great in many ways, can't be one of Nvidia's greatest GPUs of all time. It just doesn't measure up in quite the same way that its forerunners did, and that's kind of a shame. If it didn't have a connector liable to melt and was priced at even $1,000, it could have been the next GTX 1080 Ti. Instead, we're still waiting for a new champion.

Matthew Connatser is a freelancing writer for Tom's Hardware US. He writes articles about CPUs, GPUs, SSDs, and computers in general.

-

evdjj3j

Did nVidia pay for this or can we expect an AMD version?

Here is the AMD version

https://www.tomshardware.com/features/best-amd-gpus-of-all-time

-

ryanlionrah

https://www.tomshardware.com/features/best-amd-gpus-of-all-time

Try not to act like such a dumb fanboy mmmk?

-

cknobman

Still rock my 1080ti graphics card today!

Truly the GOAT.

Giving the 4090 an honorable mention seems a little shill IMHO.

Regardless of how powerful it is the price should keep it off this list and any honorable mentions.

-

JarredWaltonGPU

> cknobman said:
> Still rock my 1080ti graphics card today!
>
> Truly the GOAT.
>
> Giving the 4090 an honorable mention seems a little shill IMHO.
>
> Regardless of how powerful it is the price should keep it off this list and any honorable mentions.

You might want to read that a bit more closely.

"Honorable and Dishonorable Mention — GeForce RTX 4090"

It's both a good GPU and a bad GPU and we wanted to highlight it as sort of a microcosm of everything that's going on right now. I love the performance it offers, and even the cooling and design of the Founders Edition is great. But that dang 16-pin connector, plus the pricing — which is getting even higher now — are terrible. It's a bit like the 30-series in that sense, except it's not screwed up by cryptomining but rather by other factors.

FWIW, there are still two more articles in this series coming: the Worst AMD GPUs and the Worst Nvidia GPUs. For precisely the same reasons, the 4090 doesn't fully belong on the worst list. So when you look at the full picture (best and worst), the 4090 stands out as one card in particular that warrants mention on both lists. Hence, the Honorable and Dishonorable bit.

-

PEnns

My 8800 GTX served me very well for over 10 years.

I should have put it in a shadowbox and hung it on my wall when it reached retirement age.

-

RoLleRKoaSTeR

Running a 3090FE in the rig now. Still have an Gigabyte Aorus 1080Ti - it is in the original box.

-

dorinmac

hmmm... flrom all time? maybe you forgot the 4600 series ot the 6800 series, gt and ti.... they were the begining of a whole new era, WHERE ARE THEY? jeeezzzz.... new kids who didn't live back then :)

-

Murissokah

No G92? G80 was great, but G92 simply blew the market. Nothing else made sense at the time. It was a 4060 performing like 3090ti, at 200 USD.

-

warezme

Surprised the 2080ti was mentioned. It was an amazing card for it's time. Totally made the much venerated 1080 series seem outdated right away. Featuring the first truly useful raytracing features.

-

Eximo

> warezme said:
> Surprised the 2080ti was mentioned. It was an amazing card for it's time. Totally made the much venerated 1080 series seem outdated right away. Featuring the first truly useful raytracing features.

It was also a huge jump in price.

I agree, G92 missing seems out of place. 8800GT was THE card to have of that era. I was one of the few that ended up with a 8800GTS G80 because I wanted a DX10 card immediately for some upcoming game releases.