Early Verdict

Nvidia’s GeForce GTX 1080 Ti Founders Edition simultaneously ascends the throne as a new performance king and extends Titan X-class performance to gamers for $500 less. There is a lot to like if you want to enjoy your favorite games on a 4K monitor, with a fast-refresh QHD display, or in virtual reality. Although some of Nvidia’s board partners will offer 1080 Tis with bigger coolers and less noise, its Founders Edition card remains a favorite for its ability to exhaust waste heat. We’re eager to see how AMD’s Radeon RX Vega responds in Q2.

Pros

- Fastest graphics card available
- Attractive price versus Titan X
- Centrifugal fan exhausts waste heat

Cons

- Temperature-limited
- Not as quiet as some board partner designs

Nvidia GeForce GTX 1080 Ti Review

Nobody was surprised when Nvidia introduced its GeForce GTX 1080 Ti at this year’s Game Developers Conference. What really got gamers buzzing was the card's $700 price tag.

Based on its specifications, GeForce GTX 1080 Ti should be every bit as fast as Titan X (Pascal), or even a bit quicker. So why shave off so much of the flagship’s premium? We don’t really have a great answer, except that Nvidia must be anticipating AMD’s Radeon RX Vega and laying the groundwork for a battle at the high-end.

Why now? Because GeForce GTX 1080 Ti is ready today, Nvidia tells us. And because Vega is not, we’d snarkily add.

Turning A Zero Into A Hero

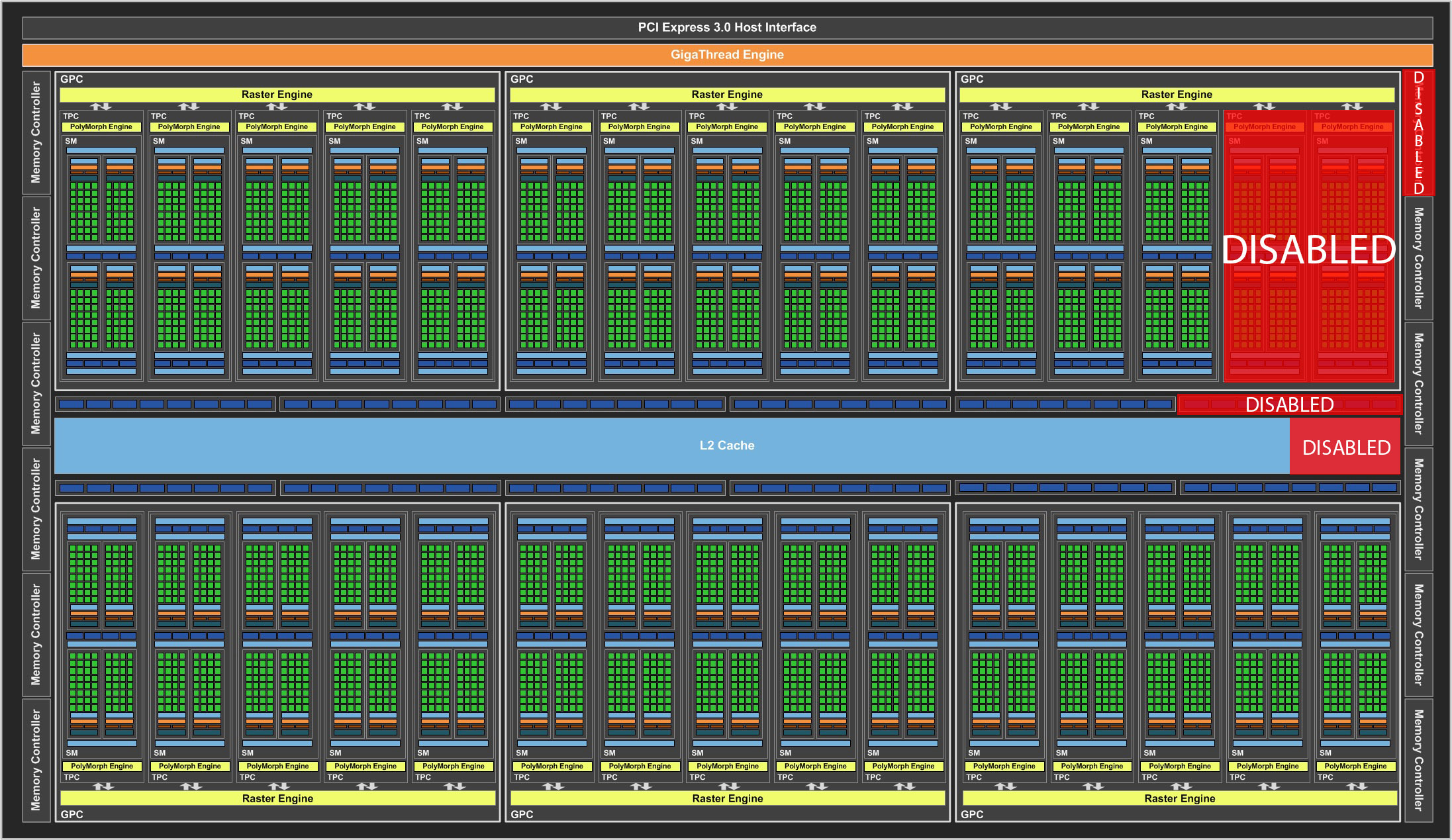

There are currently two graphics cards based on Nvidia’s GP102 processor: Titan X and Quadro P6000. The former uses a version of the GPU with two of its Streaming Multiprocessors disabled, while the latter employs a pristine GP102, without any defects at all.

We’re talking about a 12 billion transistor chip, though. Surely yields aren’t so good that they all bin into one of those two categories, right? Enter GeForce GTX 1080 Ti.

The 1080 Ti employs the same Streaming Multiprocessor configuration as Titan X: 28 of its 30 SMs are enabled, yielding 3584 CUDA cores and 224 texture units. Nvidia pushes the processor’s base clock rate up to 1480 MHz and cites a typical GPU Boost frequency of 1582 MHz. In comparison, Titan X runs at a 1417 MHz base clock and has a Boost spec of 1531 MHz.
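
The arithmetic behind those totals is easy to check. The sketch below is ours, not Nvidia's: the per-SM figures simply come from dividing the quoted totals by 28, and the peak FP32 estimate assumes the usual convention of two floating-point operations (one fused multiply-add) per CUDA core per clock. The function names are made up for illustration.

```python
# Per-SM resources, derived from the totals quoted above (3584 / 28 and 224 / 28).
CORES_PER_SM = 128
TEXTURE_UNITS_PER_SM = 8


def shader_config(enabled_sms):
    """Return (CUDA cores, texture units) for a given number of enabled SMs."""
    return enabled_sms * CORES_PER_SM, enabled_sms * TEXTURE_UNITS_PER_SM


def peak_fp32_tflops(cuda_cores, clock_mhz):
    """Theoretical FP32 rate, assuming 2 FLOPs (one FMA) per core per clock."""
    return 2 * cuda_cores * clock_mhz * 1e6 / 1e12


if __name__ == "__main__":
    cores, tmus = shader_config(28)
    print(f"28 SMs: {cores} CUDA cores, {tmus} texture units")
    # Typical GPU Boost clocks from the text: 1582 MHz (1080 Ti), 1531 MHz (Titan X).
    print(f"GTX 1080 Ti at boost: {peak_fp32_tflops(cores, 1582):.2f} TFLOPS")
    print(f"Titan X at boost:     {peak_fp32_tflops(cores, 1531):.2f} TFLOPS")
```

Because both cards expose the same shader resources, any difference in this on-paper figure comes purely from clock rate. Real-world boost behavior depends on temperature and power limits, so treat the result as a ceiling rather than a prediction.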

Where the new GeForce differs is its back-end. Both Titan X and Quadro P6000 utilize all 12 of GP102’s 32-bit memory controllers, ROP clusters, and slices of L2 cache. This leaves no room for the foundry to make a mistake. Rather than tossing the imperfect GPUs, then, Nvidia turns them into 1080 Tis by disabling one memory controller, one ROP partition, and 256KB of L2. The result looks a little wonky on a spec sheet, but it’s perfectly viable nonetheless. As such, we get a card with an aggregate 352-bit memory interface, 88 ROPs, and 2816KB of L2 cache, down from Titan X’s 384-bit path, 96 ROPs, and 3MB L2.
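
To see why the numbers look "wonky," it helps to treat the back-end as 12 identical partitions and switch one off. This is a minimal sketch under that assumption; the per-partition values are derived by dividing the Titan X figures above by 12, and `back_end` is a name we made up for illustration.

```python
# Per-partition resources, derived from Titan X's totals divided by 12.
CONTROLLER_WIDTH_BITS = 32   # 384-bit / 12
ROPS_PER_PARTITION = 8       # 96 ROPs / 12
L2_PER_PARTITION_KB = 256    # 3072KB / 12


def back_end(enabled_partitions):
    """Aggregate bus width, ROP count, and L2 size for N enabled partitions."""
    return {
        "bus_width_bits": enabled_partitions * CONTROLLER_WIDTH_BITS,
        "rops": enabled_partitions * ROPS_PER_PARTITION,
        "l2_kb": enabled_partitions * L2_PER_PARTITION_KB,
    }


if __name__ == "__main__":
    print("Titan X (12 partitions):    ", back_end(12))
    print("GTX 1080 Ti (11 partitions):", back_end(11))
```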

Left alone, that’d put GeForce GTX 1080 Ti at a slight disadvantage. But in the months between 1080’s launch and now, Micron introduced 11 Gb/s (and 12 Gb/s, according to its datasheet) GDDR5X memories. The higher data rate more than compensates for the narrower memory bus: on paper, GeForce GTX 1080 Ti offers a theoretical 484 GB/s to Titan X’s 480 GB/s.
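
Those bandwidth figures follow from a simple formula: bus width in bits, times the per-pin data rate, divided by eight bits per byte. The sketch below reproduces them. Note that the 10 Gb/s rate for Titan X is not stated above; it is what the 480 GB/s figure implies over a 384-bit bus. The function name is ours.

```python
def memory_bandwidth_gbs(bus_width_bits, data_rate_gbps):
    """Theoretical peak bandwidth in GB/s: bus width x per-pin rate / 8 bits per byte."""
    return bus_width_bits * data_rate_gbps / 8


if __name__ == "__main__":
    # GTX 1080 Ti: 352-bit bus with 11 Gb/s GDDR5X.
    print("GTX 1080 Ti:", memory_bandwidth_gbs(352, 11), "GB/s")
    # Titan X (Pascal): 384-bit bus; 10 Gb/s is implied by the 480 GB/s figure.
    print("Titan X:    ", memory_bandwidth_gbs(384, 10), "GB/s")
    # Hypothetical: the same narrow bus with the 12 Gb/s parts from Micron's datasheet.
    print("352-bit at 12 Gb/s:", memory_bandwidth_gbs(352, 12), "GB/s")
```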

Of course, eliminating one memory channel affects the card’s capacity. Stepping down from 12GB to 11GB isn’t particularly alarming when we’re testing against a 4GB Radeon R9 Fury X that works just fine at 4K, though. Losing capacity is also preferable to repeating the problem Nvidia had with GeForce GTX 970, where it removed an ROP/L2 partition, but kept the memory, causing slower access to the orphaned 512MB segment. In this case, all 11GB of GDDR5X communicates at full speed.

Specifications

Meet The GeForce GTX 1080 Ti Founders Edition

During its presentation, Nvidia announced that its Founders Edition cooler was improved compared to Titan X's. Looking at this card head-on, though, you wouldn't know it: it looks identical except for the model name. The combination of materials (namely cast aluminum and acrylic) is also the same, as is the commanding presence of its 62mm radial fan.

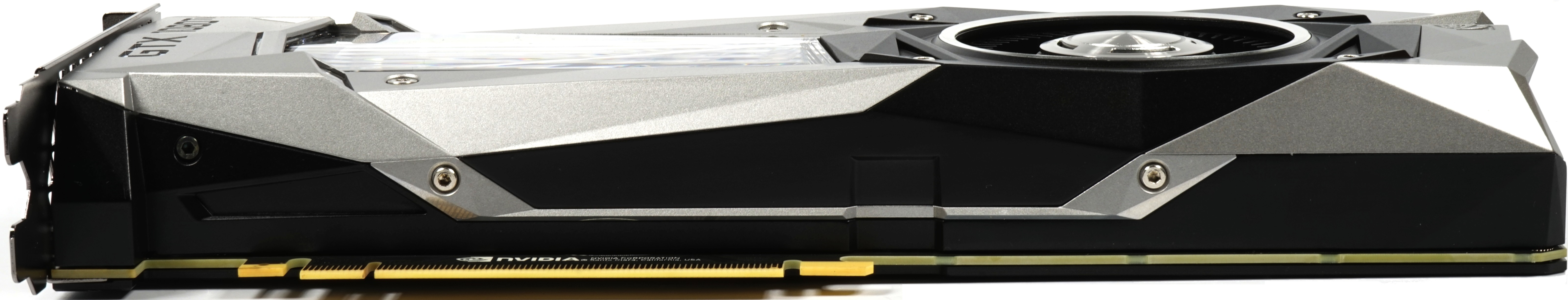

Nvidia's reference GeForce GTX 1080 Ti is the same size as its Titan X (Pascal). The distance from the slot cover to end of the cooler spans 26.9cm. From the top of the motherboard slot to the top of the cooler, the card stands 10.5cm tall. And with a depth of 3.5cm, it fits nicely in a dual-slot form factor. We weighed the 1080 Ti and found that it's a little heavier than the Titan X at 1039g.

The top of the card looks just as familiar as its front, sporting a green back-lit logo, along with one eight- and one six-pin power connector. The bottom is even less interesting; there’s really nothing to say about its plain cover.

Some hot air may escape through the open vent at the far end of the card. But the way Nvidia designed its thermal solution ensures most of the waste heat exhausts through the slot bracket and out the back of your case.

Nvidia improves airflow through the cooler by removing its DVI output. You get three DisplayPort connectors and one HDMI 2.0 port, while a bundled DP-to-DVI dongle covers anyone still using that interface.

Cooler Design

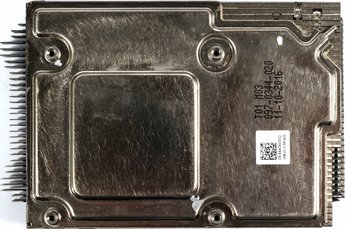

We had to dig deep in our tool box because Nvidia primarily uses thin 0.5mm screws, which fit into the mating threads of special piggyback screws that sit below the backplate. These uncommon M2.5 hex bolts also attach the card’s cover to its circuit board.

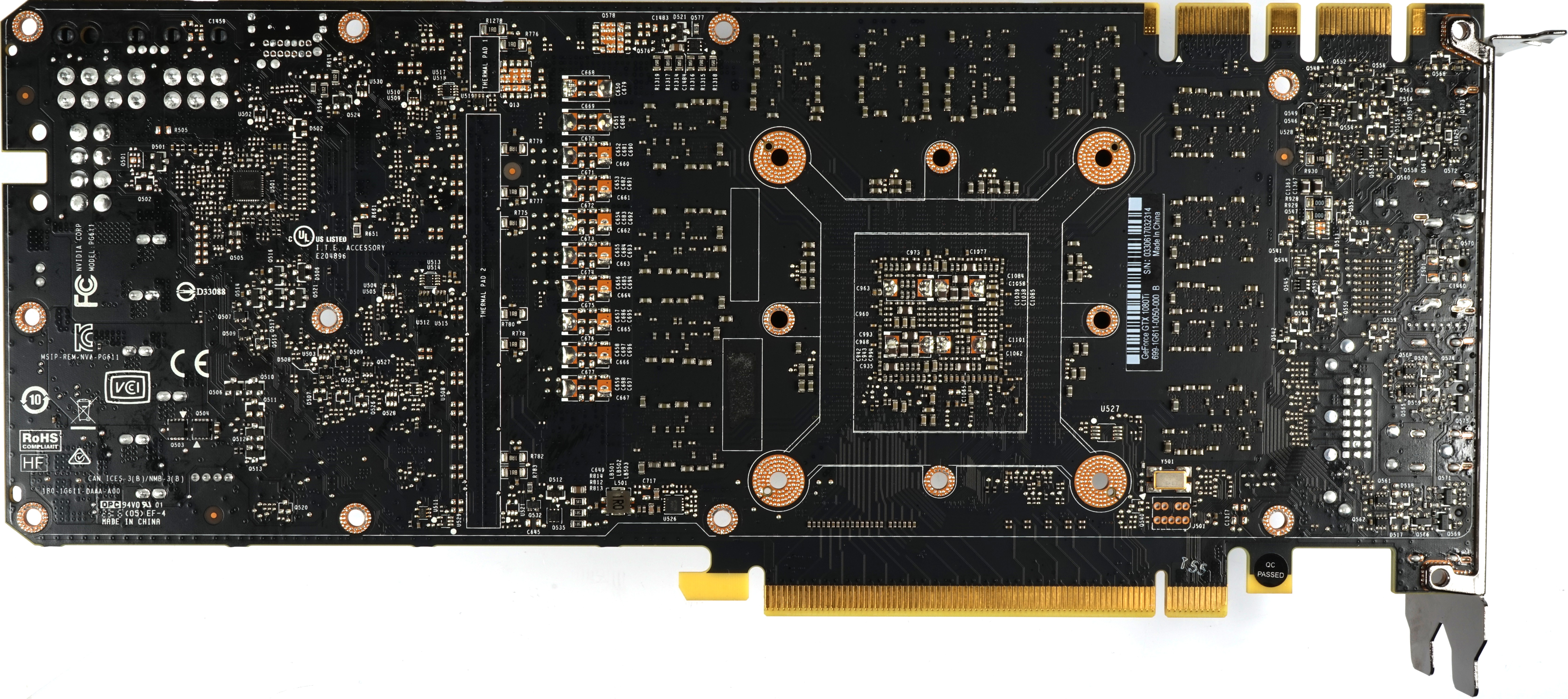

One improvement to the GeForce GTX 1080 Ti became apparent as we started taking the card apart: Nvidia mated the PWM controller on the back of its PCA with part of the backplate using thick thermal fleece. This is a material we don't see used very often, and it's meant to augment heat dissipation. The move would have been even more effective if Nvidia cut a hole into the plastic sheet covering the backplate in this area.

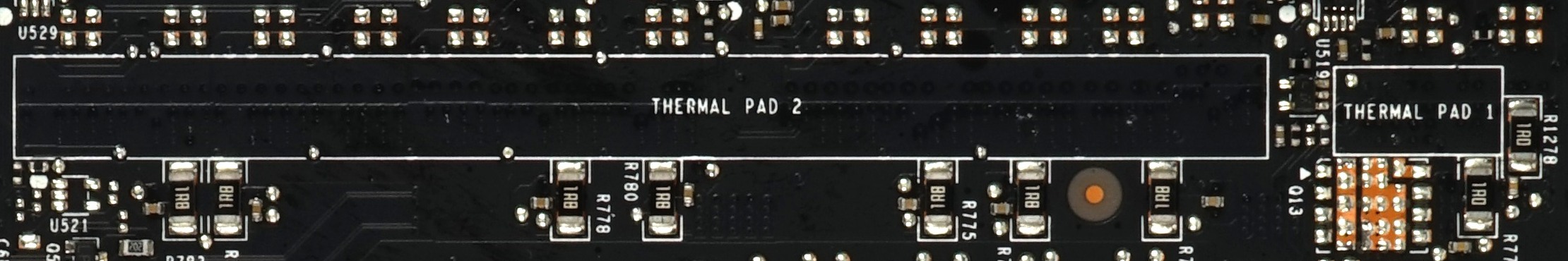

The exposed board reveals two areas on the left labeled THERMAL PAD 1 and THERMAL PAD 2. However, these do not actually host any thermal pads. We don’t know if Nvidia’s engineers deemed them unnecessary or if its accountants decided they were too expensive. Our measurements will tell.

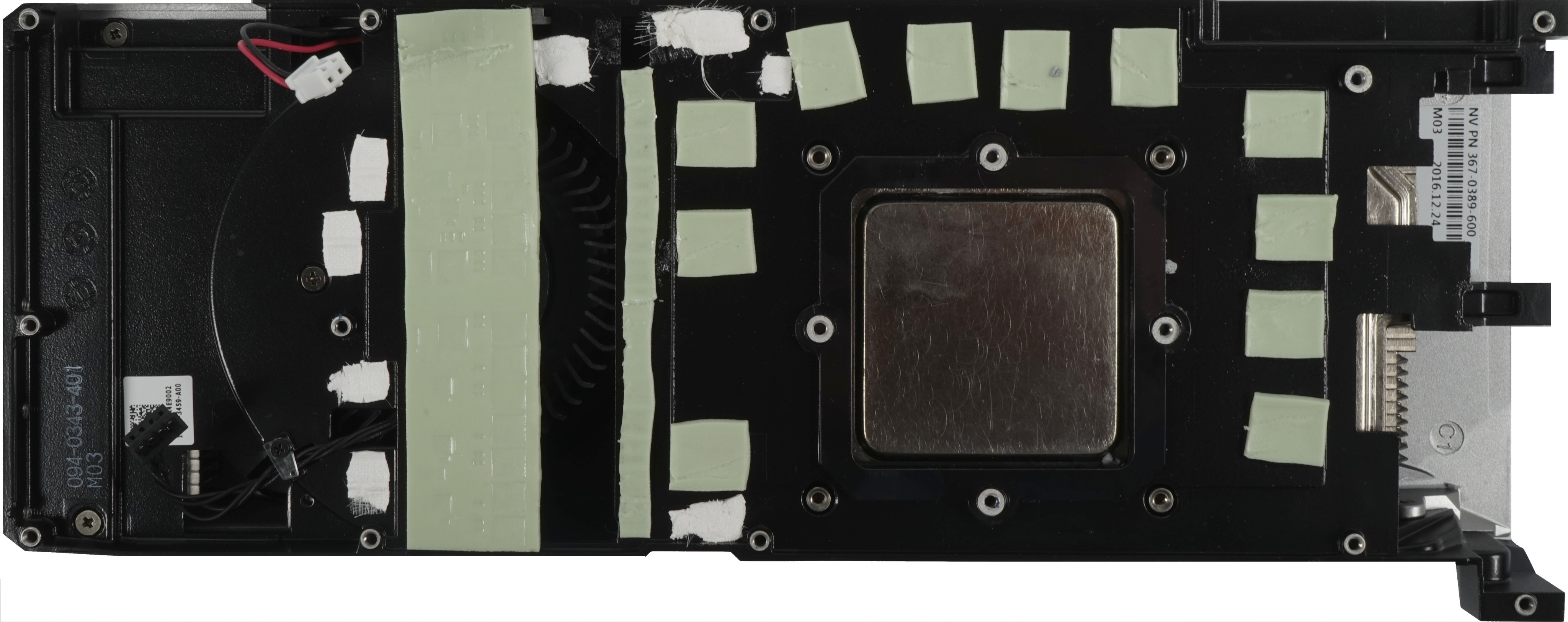

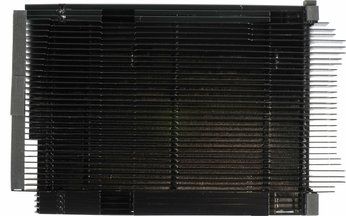

The cooler’s massive bottom plate sports thermal pads for the voltage converters and memory modules, as well as several of the thermal fleece strips we mentioned previously. Those strips connect other on-board components to the cooler's bottom plate.

Similar to its other high-end Founders Edition cards, Nvidia uses a vapor chamber for cooling the GPU. It’s attached to the board with four spring bolts.

Board Design & Components

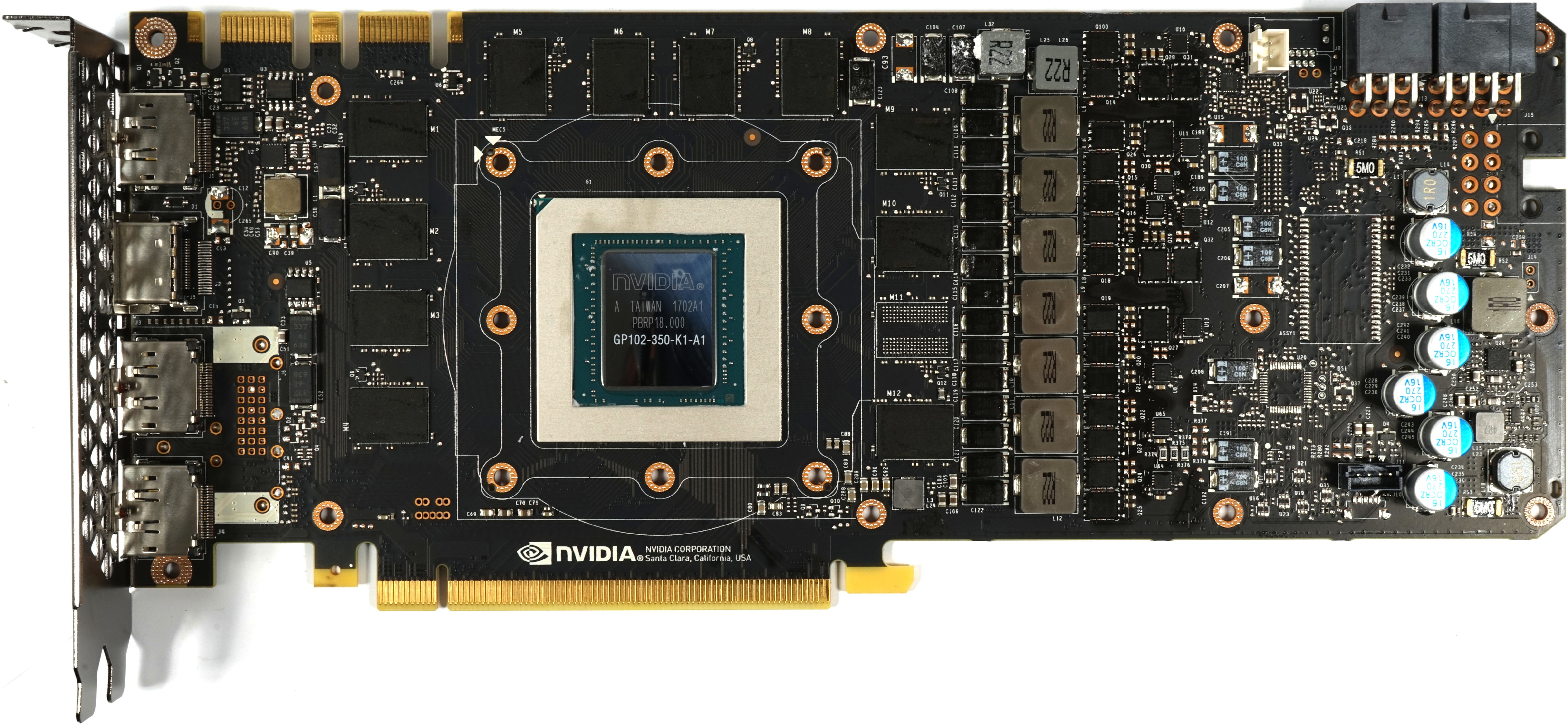

Physically, the first thing you might notice about the PCA is its full complement of voltage regulators. Nvidia’s Titan X (Pascal) had the same layout, but not all of its emplacements were populated. The Quadro P6000, on the other hand, uses this board design. That card's eight-pin power connector points toward the back, and you can see the holes for it on the 1080 Ti's PCB.

The opposite holds true for memory: compared to Titan X (Pascal), one of GeForce GTX 1080 Ti's modules is missing.

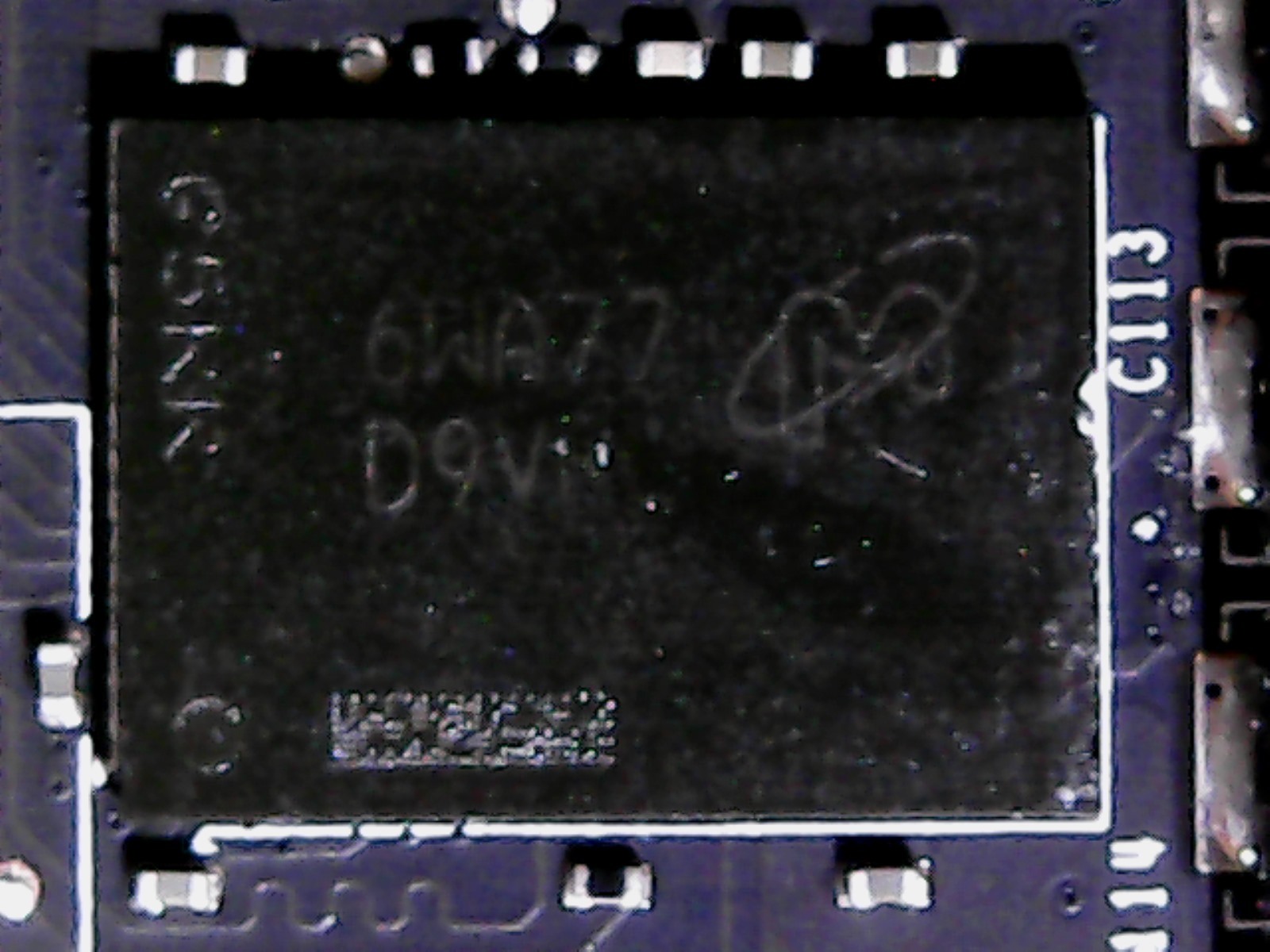

A total of 11 Micron MT58K256M321JA-110 GDDR5X modules are arranged around the GP102 processor. They operate at 11 Gb/s data rates, which helps compensate for the missing 32-bit memory controller compared to Titan X. We asked Micron to speculate why Nvidia didn't use the 12 Gb/s MT58K256M321JA-120 modules advertised in its datasheet, and the company mentioned they aren't widely available yet, despite appearing in its catalog.

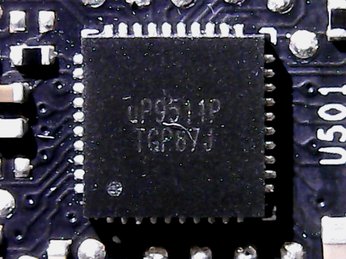

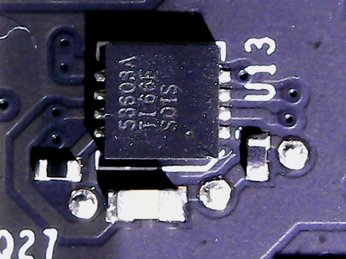

Nvidia sticks with the uP9511 we've seen several times now, which makes sense because this PWM controller allows for the concurrent operation of seven phases, as opposed to just 6(+2). The same hardware is used for all seven of the GPU's power phases, and they're found on the back of the board.

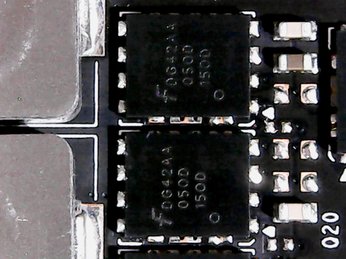

The voltage converters’ design is interesting in that it’s quite simple: one buck converter, the LM53603, is responsible for the high side, and two (instead of one) Fairchild D424 N-Channel MOSFETs operate on the low side. This setup spreads waste heat over twice the surface area, minimizing hot-spots.

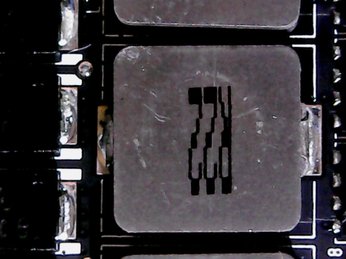

For coils, Nvidia went with encapsulated ferrite chokes (roughly the same quality as Foxconn’s Magic coils). They can be installed by machines and aren’t push-through. Thermally, the back of the board is a good place for them, though we find it interesting that Nvidia doesn't do more to help cool these components. Stranger still, the capacitors right next to them receive cooling consideration.

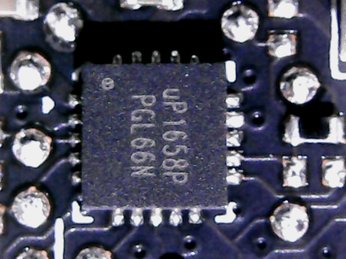

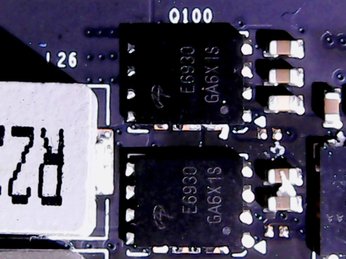

The memory gets two power phases run in parallel by a single uP1685. The high side uses the FD424 mentioned above, whereas the low side sports two dual N-Channel Logic Level PowerTrench E6930 MOSFETs in a parallel configuration. Because the two phases are simpler, their coils are correspondingly smaller.

So, what’s the verdict on Nvidia's improved thermal solution? Based on what we found under the hood, it'd be safer to call this a cooling reconceptualization. Switching out active components and using additional thermal pads to more efficiently move waste heat are the most readily apparent updates. The cooler itself should perform identically to cards we've seen in the past.

How We Tested Nvidia's GeForce GTX 1080 Ti

Nvidia’s latest and greatest will no doubt be found in high-end platforms. Some of these may include Broadwell-E-based systems. However, we’re sticking with our MSI Z170 Gaming M7 motherboard, which was recently upgraded to host a Core i7-7700K CPU. The new processor is complemented by G.Skill’s F4-3000C15Q-16GRR memory kit. Intel’s Skylake architecture, which Kaby Lake carries over, remains the company’s most effective per clock cycle, and a stock 4.2 GHz frequency is higher than that of the models with more cores. Crucial’s MX200 SSD remains, as does the Noctua NH-U12S cooler and be quiet! Dark Power Pro 10 850W power supply.

As far as competition goes, the GeForce GTX 1080 Ti is rivaled only by the $1200 Titan X (Pascal). The only other comparisons that make sense are Nvidia’s GeForce GTX 1080, the lower-end 1070, and AMD’s flagship Radeon R9 Fury X. We add a GeForce GTX 980 Ti to the mix to show what 1080 Ti can do versus its predecessor.

Our benchmark selection now includes Ashes of the Singularity, Battlefield 1, Civilization VI, Doom, Grand Theft Auto V, Hitman, Metro: Last Light, Rise of the Tomb Raider, Tom Clancy’s The Division, Tom Clancy’s Ghost Recon Wildlands, and The Witcher 3. That substantial list drops Battlefield 4 and Project CARS, but adds several others.

The testing methodology we're using comes from our story, "PresentMon: Performance In DirectX, OpenGL, And Vulkan." In short, all of these games are evaluated using a combination of OCAT and our own in-house GUI for PresentMon, with logging via AIDA64. If you want to know more about our charts (particularly the unevenness index), we recommend reading that story.
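
For readers who want to experiment with their own captures, here is a minimal sketch of how per-frame times can be reduced to summary numbers. It is not our in-house tooling and it does not compute the unevenness index; it only derives average FPS and a nearest-rank 99th-percentile frame time from a list of frame times in milliseconds. We assume you have already extracted those values from a capture log (in PresentMon's CSV output this is typically the `MsBetweenPresents` column, but check your own files). The function name is made up for illustration.

```python
def summarize_frame_times(frame_times_ms):
    """Reduce a list of per-frame times (ms) to a few summary statistics."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")

    count = len(frame_times_ms)
    total_ms = sum(frame_times_ms)
    ordered = sorted(frame_times_ms)

    # Nearest-rank 99th percentile, using integer math for ceil(0.99 * count).
    rank = (99 * count + 99) // 100

    return {
        # Average FPS is frames rendered divided by elapsed seconds.
        "avg_fps": 1000.0 * count / total_ms,
        "p99_frame_time_ms": ordered[rank - 1],
        "worst_frame_time_ms": ordered[-1],
    }


if __name__ == "__main__":
    # Synthetic example: 99 smooth frames at 10 ms and a single 50 ms hitch.
    sample = [10.0] * 99 + [50.0]
    for name, value in summarize_frame_times(sample).items():
        print(f"{name}: {value:.2f}")
```

The synthetic sample illustrates why averages alone can mislead: one long hitch barely moves the average frame rate, yet it shows up clearly as the worst frame time.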

All of the numbers you see in today’s piece are fresh, using updated drivers. For Nvidia, we’re using build 378.78. AMD’s card utilizes Crimson ReLive Edition 17.2.1, which was the latest at test time.

dstarr3: Oh my. I've got a 980 Ti now, and I thought I could hold out until Christmas 2018 or so to upgrade, but seeing that this card has nearly double the FPS... That's a pretty big deal...

HaB1971: Would love one, but pointless for 1080p gaming which is what I am restricted to thanks to 2 x 27inch 1080p monitors. I don't need to replace those either they work, they are good enough and not interested in VR etc. 4k for me, is still too pricey

salgado18 (replying to dstarr3): Why not wait? Your card is still great, and you can pick up a 2080 or Vega 2 by then. Unless you can't live without 4K at Ultra, keep your card.

Ray_58: Hab1971, 4k really isn't that bad price wise, its ok, part of the problem is the LCD panel industry milking the crap out of 1080p resolutions still up to the 300$ pricepoint when in actuality we should have been at base standard 2k TN/IPS panels at the 160$-300$ range. Still today though the greatest costs are the fact 60htz is still standard and any increase is massive price cost increases, and obviously Gsync for NVidia. Still spending $500-$800 on a monitor and then dropping 700$ on this is a bit 2 much for the mainstream. Id rather buy a 2k IPS screen with Gsync at 700$ than a 4k 60htz monitor at 400$

envy14tpe (replying to dstarr3): I feel the same. I see the jump in BF1 to be massive and enough to warrant a 1080 Ti for 1440p gaming. I'm holding out until June when the next Nvidia price drops.

dstarr3 (replying to salgado18): Well, two reasons: 1) I'd like to upgrade to 1440p/144 this year. 2) I also have an HTPC with a 770 in it that needs an upgrade. I was considering buying a 1060 for that computer, but instead, I might just buy this 1080 Ti for my main rig and put its 980 Ti in the HTPC.

Either way, no purchasing until Christmas, because I hate paying full price for just about anything. So I've got time to think about this.