Video Encoding Tested: AMD GPUs Still Lag Behind Nvidia, Intel (Updated)

AVC, HEVC, and AV1 encoding put to the test

The best graphics cards aren't just for playing games. Artificial intelligence training and inference, professional applications, video encoding and decoding can all benefit from having a better GPU. Yes, games still get the most attention, but we like to look at the other aspects as well. Here we're going to focus specifically on the video encoding performance and quality that you can expect from various generations of GPU.

Generally speaking, the video encoding/decoding blocks for each generation of GPU will all perform the same, with minor variance depending on clock speeds for the video block. We've checked the RTX 3090 Ti and RTX 3050 as an example — the fastest and slowest GPUs from Nvidia's Ampere RTX 30-series generation — and found effectively no difference. Thankfully, that leaves us with fewer GPUs to look at than would otherwise be required.

We'll test Nvidia's RTX 4090, RTX 3090, and GTX 1650 from team green, which covers the Ada Lovelace, Turing/Ampere (functionally identical), and Pascal-era video encoders. For Intel, we're looking at desktop GPUs, with the Arc A770 as well as the integrated UHD 770. AMD ends up with the widest spread, at least in terms of speeds, so we ended up testing the RX 7900 XTX, RX 6900 XT, RX 5700 XT, RX Vega 56, and RX 590. We also wanted to check how the GPU encoders fare against CPU-based software encoding, and for this we used the Core i9-12900K and Core i9-13900K.

Update 3/09/2023: The initial VMAF scores were calculated backward, meaning we used the "distorted" video as the "reference" video, and vice versa. This, needless to say, led to much higher VMAF scores than intended! We have since recalculated all of the VMAF scores. If you're wondering, that's super computationally intensive and takes about 30 minutes per GPU on a Core i9-13900K.

The good news is that our charts are corrected now. The bad news (besides the initially incorrect results being published) is that we killed off the initial torrent file and are updating with a new file that includes the logs from the new VMAF calculations. Also, scores got lower, so if you were thinking 4K 60 fps encodes at 16Mbps would basically match the original quality, that's only selectively the case (depending on the source video).

Video Encoding Test Setup

Most of our testing was done using the same hardware we use for our latest graphics card reviews, but we also ran the CPU test on the 12900K PC that powers our 2022 GPU benchmarks hierarchy. As a more strenuous CPU encoding test, we also ran the 13900K with a higher-quality encoding preset, but more on that in a moment.

INTEL 13TH GEN PC

Intel Core i9-13900K

MSI MEG Z790 Ace DDR5

G.Skill Trident Z5 2x16GB DDR5-6600 CL34

Sabrent Rocket 4 Plus-G 4TB

be quiet! 1500W Dark Power Pro 12

Cooler Master PL360 Flux

Windows 11 Pro 64-bit

INTEL 12TH GEN PC

Intel Core i9-12900K

MSI Pro Z690-A WiFi DDR4

Corsair 2x16GB DDR4-3600 CL16

Crucial P5 Plus 2TB

Cooler Master MWE 1250 V2 Gold

Corsair H150i Elite Capellix

Cooler Master HAF500

Windows 11 Pro 64-bit

For our test software, we've found ffmpeg nightly to be the best current option. It supports all of the latest AMD, Intel, and Nvidia video encoders, can be relatively easily configured, and it also provides the VMAF (Video Multi-Method Assessment Fusion) functionality that we're using to compare video encoding quality. We did, however, have to use the last official release, 5.1.2, for our Nvidia Pascal tests (the nightly build failed on HEVC encoding).
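As a rough illustration of how a VMAF comparison can be set up, here is a minimal Python sketch that builds an ffmpeg command line for the libvmaf filter. The filter treats the first input as the encoded (distorted) video and the second as the reference, so the argument order matters. The file names and the JSON log path are hypothetical placeholders, and the sketch assumes both clips share the same resolution and frame rate.

```python
import shlex


def vmaf_command(distorted: str, reference: str, log_path: str = "vmaf.json") -> list[str]:
    """Build an ffmpeg argument list that scores `distorted` against `reference`."""
    return [
        "ffmpeg",
        "-i", distorted,   # first input: the encoded (distorted) video
        "-i", reference,   # second input: the original (reference) video
        "-lavfi", f"libvmaf=log_fmt=json:log_path={log_path}",
        "-f", "null", "-",  # discard the decoded frames; only the score is of interest
    ]


# Hypothetical file names, used purely for illustration.
cmd = vmaf_command("bl3-1080p-hevc-3mbps.mp4", "bl3-1080p-source.mp4")
print(shlex.join(cmd))
```

Swapping the two `-i` arguments still produces a score, just not a meaningful one, which is why it helps to keep the order explicit in a helper like this.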

We're doing single-pass encoding for all of these tests, as we're using the hardware provided by the various GPUs and it's not always capable of handling more complex encoding instructions. GPU video encoding is generally used for things like livestreaming of gameplay, while if you want the best quality you'd generally need to opt for CPU-based encoding with a low CRF (Constant Rate Factor) value of 17 or 18, though that of course results in much larger files and higher average bitrates. There are still plenty of options that are worth discussing, however.

AMD, Intel, and Nvidia all have different "presets" for quality, but what exactly these presets are or what they do isn't always clear. Nvidia's NVENC in ffmpeg uses "p4" as its default, and switching to "p7" (maximum quality) did little for the VMAF scores while dropping encoding performance by anywhere from 30 to 50 percent. AMD opts for a "-quality" setting of "speed" for its encoder, but we also tested with "balanced"; as with Nvidia, the maximum setting of "quality" reduced performance a lot but only improved VMAF scores by 1–2 percent. Lastly, Intel seems to use a preset of "medium" and we found that to be a good choice: "veryslow" took almost twice as long to encode with little improvement in quality, while "veryfast" was moderately faster but degraded quality quite a bit.

Ultimately, we opted for two sets of testing. First, we have the default encoder settings for each GPU, where the only thing we specified was the target bitrate. Even then, there are slight differences in encoded file sizes (about a +/-5% spread). Second, after consulting with the ffmpeg subreddit, we attempted to tune the GPUs for slightly more consistent encoding settings, specifying a GOP size equal to two seconds ("-g 120" for our 60 fps videos). AMD was the biggest beneficiary of our tuning, trading speed for roughly 5-10 percent higher VMAF scores. But as you'll see, AMD still trailed the other GPUs.

There are many other potential tuning parameters, some of which can change things quite a bit, others which seem to accomplish very little. We're not targeting archival quality, so we've opted for faster presets that use the GPUs, but we may revisit things in the future. Sound off in our comments if you have alternative recommendations for the best settings to use on the various GPUs, with an explanation of what the settings do. It's also unclear how the ffmpeg settings and quality compare to other potential encoding schemes, but that's beyond the scope of this testing.

Here are the settings we used, both for the default encoding as well as for the "tuned" encoding.

AMD:

Default: ffmpeg -i [source] -c:v [h264/hevc/av1]_amf -b:v [bitrate] -y [output]

Tuned: ffmpeg -i [source] -c:v [h264/hevc/av1]_amf -b:v [bitrate] -g 120 -quality balanced -y [output]

Intel:

Default: ffmpeg -i [source] -c:v [h264/hevc/av1]_qsv -b:v [bitrate] -y [output]

Tuned: ffmpeg -i [source] -c:v [h264/hevc/av1]_qsv -b:v [bitrate] -g 120 -preset medium -y [output]

Nvidia:

Default: ffmpeg -i [source] -c:v [h264/hevc/av1]_nvenc -b:v [bitrate] -y [output]

Tuned: ffmpeg -i [source] -c:v [h264/hevc/av1]_nvenc -b:v [bitrate] -g 120 -no-scenecut 1 -y [output]

Most of our attempts at "tuned" settings didn't actually improve quality or encoding speed, and some settings seemed to cause ffmpeg (or our test PC) to just break down completely. The main thing about the above settings is that they keep the key frame interval constant, and potentially provide slightly higher image quality.
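For anyone scripting a similar test matrix, the command templates above can be generated programmatically. The following Python sketch is one possible way to do it, not the script used for this article: the helper names are hypothetical, the encoder suffixes follow ffmpeg's `_amf`, `_qsv`, and `_nvenc` naming, and the GOP size is derived as frame rate times seconds (60 fps × 2 s = 120).

```python
# Vendor-to-encoder suffix mapping, following ffmpeg's encoder names.
ENCODER_SUFFIX = {"amd": "amf", "intel": "qsv", "nvidia": "nvenc"}


def gop_size(fps: int, seconds: float) -> int:
    """Key frame interval in frames for a given frame rate and duration."""
    return round(fps * seconds)


def tuned_flags(vendor: str, gop: int) -> list[str]:
    """Extra flags for the 'tuned' runs, mirroring the settings listed above."""
    per_vendor = {
        "amd": ["-quality", "balanced"],
        "intel": ["-preset", "medium"],
        "nvidia": ["-no-scenecut", "1"],
    }
    return ["-g", str(gop)] + per_vendor[vendor]


def encode_command(vendor: str, codec: str, bitrate: str, source: str,
                   output: str, tuned: bool = False, fps: int = 60) -> list[str]:
    """Build the ffmpeg argument list for one vendor/codec/bitrate combination."""
    encoder = f"{codec}_{ENCODER_SUFFIX[vendor]}"
    cmd = ["ffmpeg", "-i", source, "-c:v", encoder, "-b:v", bitrate]
    if tuned:
        cmd += tuned_flags(vendor, gop_size(fps, 2))  # two-second GOP
    return cmd + ["-y", output]


# Placeholder file names; prints the tuned Intel HEVC command at 8Mbps.
print(" ".join(encode_command("intel", "hevc", "8M", "source.mp4", "out.mp4", tuned=True)))
```

The returned list can be handed to `subprocess.run` as-is, which avoids shell quoting problems with file names that contain spaces.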

Again, if you have some better settings you'd recommend, post them in the comments; we're happy to give them a shot. The main thing is that we want bitrates to stay the same, and we want reasonable encoding speeds of at least real-time (meaning, 60 fps or more) for the latest generation GPUs. That said, for now our "tuned" settings ended up being so close to the default settings, with the exception of the AMD GPUs, that we're just going to show those charts.

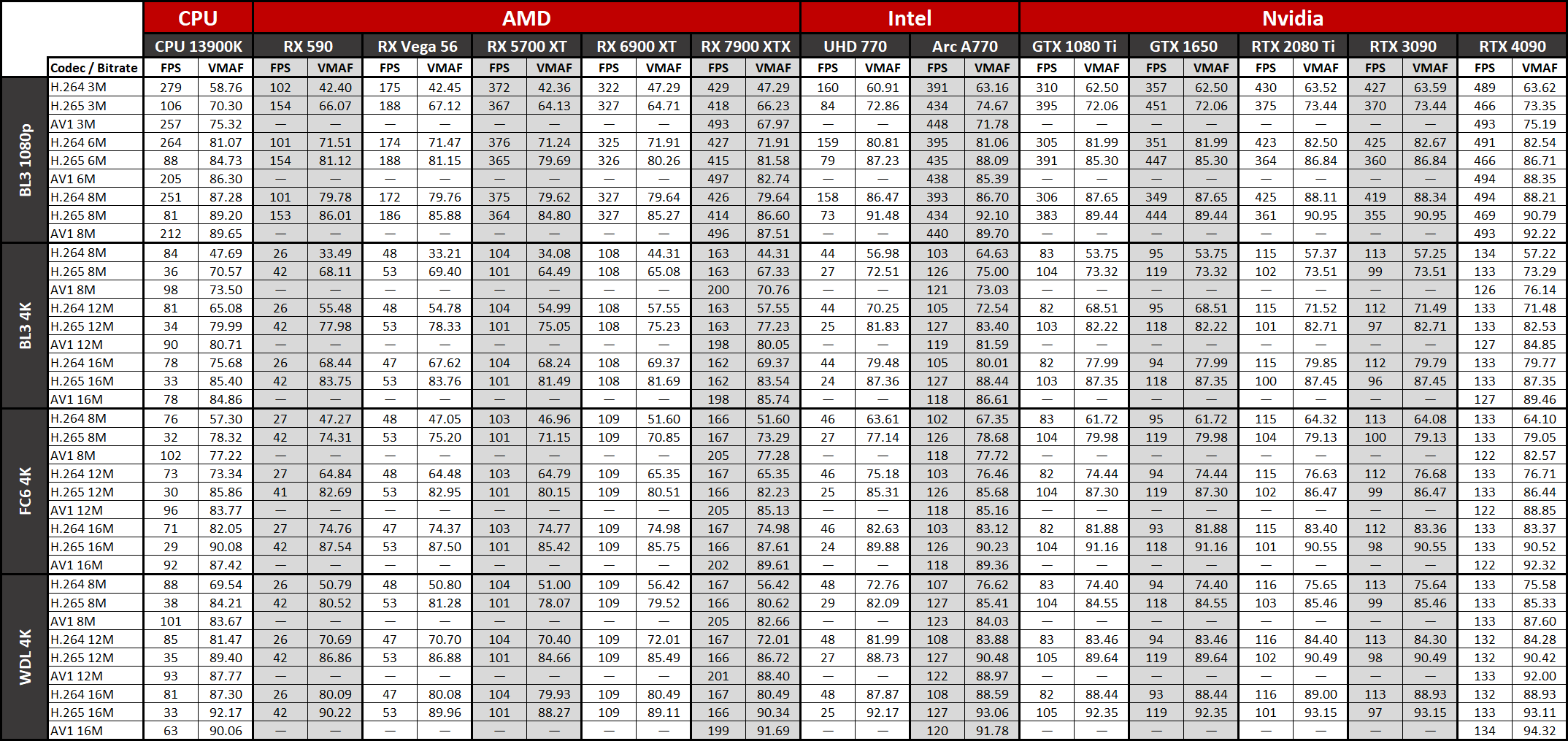

With the preamble out of the way, here are the results. We've got four test videos, taken from captured gameplay of Borderlands 3, Far Cry 6, and Watch Dogs Legion. We ran tests at 1080p and 4K for Borderlands 3 and at 4K with the other two games. We also have three codecs: H.264/AVC, H.265/HEVC, and AV1. We'll have two charts for each setting and codec, comparing quality using VMAF and showing the encoding performance.

For reference, VMAF scores follow a scale from 0 to 100, with 20 as "bad", 40 equates to "poor," 60 rates as "fair," 80 as "good," and 100 is "excellent." In general, scores of 90 or above are desirable, with 95 or higher being mostly indistinguishable from the original source material.
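To make the scale concrete, here is a small Python sketch that maps a score to the nearest of the five anchor labels and flags scores at or above the 95 mark. Snapping to the nearest anchor (with ties going to the lower label) is an assumption made for illustration; VMAF itself only defines the anchor points, not hard cutoffs between them.

```python
# Anchor points of the VMAF scale as described above.
ANCHORS = [(20, "bad"), (40, "poor"), (60, "fair"), (80, "good"), (100, "excellent")]


def nearest_label(score: float) -> str:
    """Return the label of the anchor closest to `score` (ties go to the lower anchor)."""
    if not 0 <= score <= 100:
        raise ValueError("VMAF scores range from 0 to 100")
    return min(ANCHORS, key=lambda anchor: abs(anchor[0] - score))[1]


def near_transparent(score: float) -> bool:
    """True when the score reaches the 95 mark cited as mostly indistinguishable."""
    return score >= 95


for sample in (33, 65, 80, 92.1):
    print(sample, nearest_label(sample), near_transparent(sample))
```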

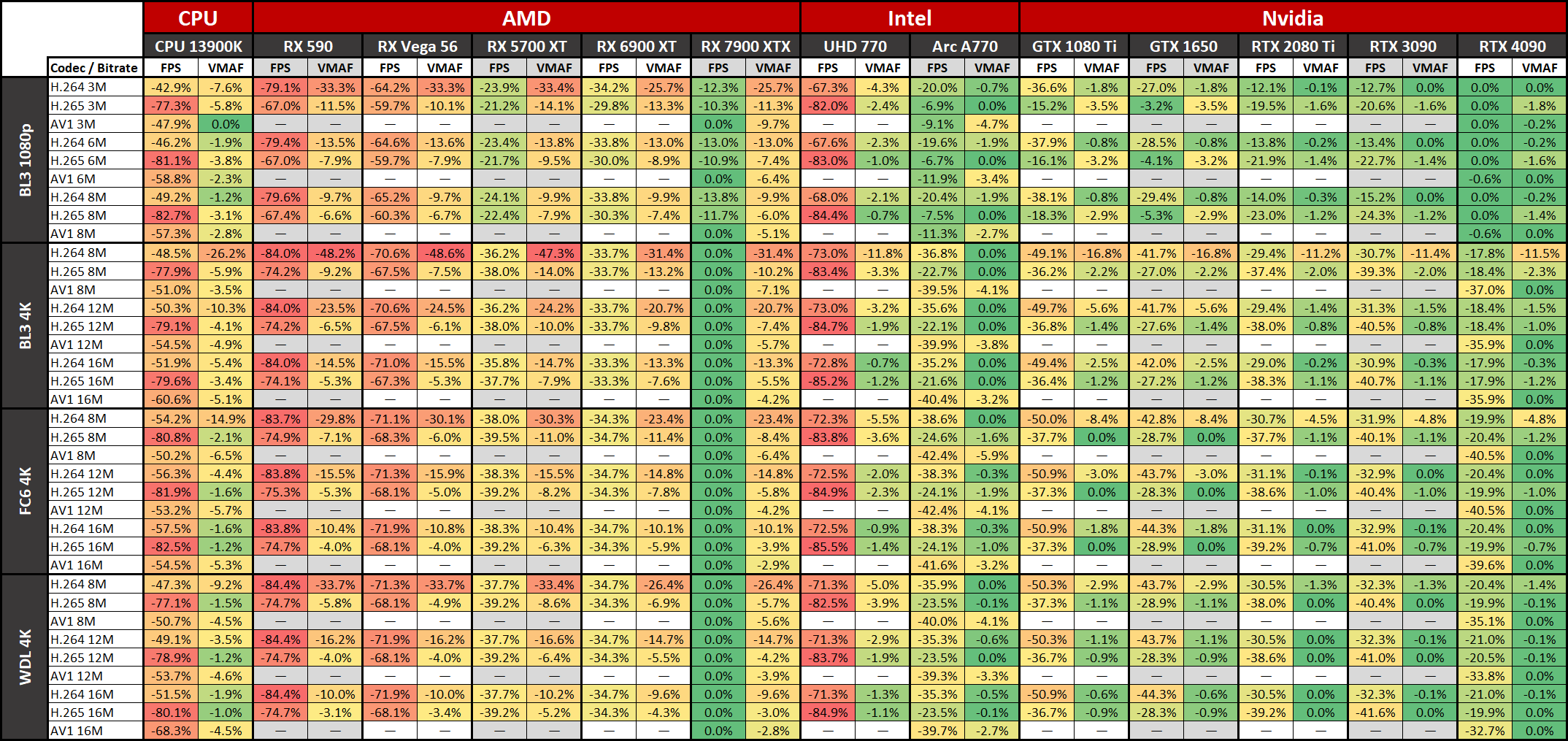

There's plenty of overlap in the VMAF scores, so if you can't see one of the lines, check the tables lower down that show the raw numbers — or just remember that GPUs from adjacent generations often have nearly the same quality. If you don't want to try to parse the full table below, AMD's RDNA 2 and RDNA 3 generations have identical results for H.264 encoding quality, though performance differs quite a bit. Nvidia's GTX 1080 Ti and GTX 1650 also feature identical quality on both H.264 and HEVC, while the RTX 2080 Ti and RTX 3090 have identical quality for HEVC.


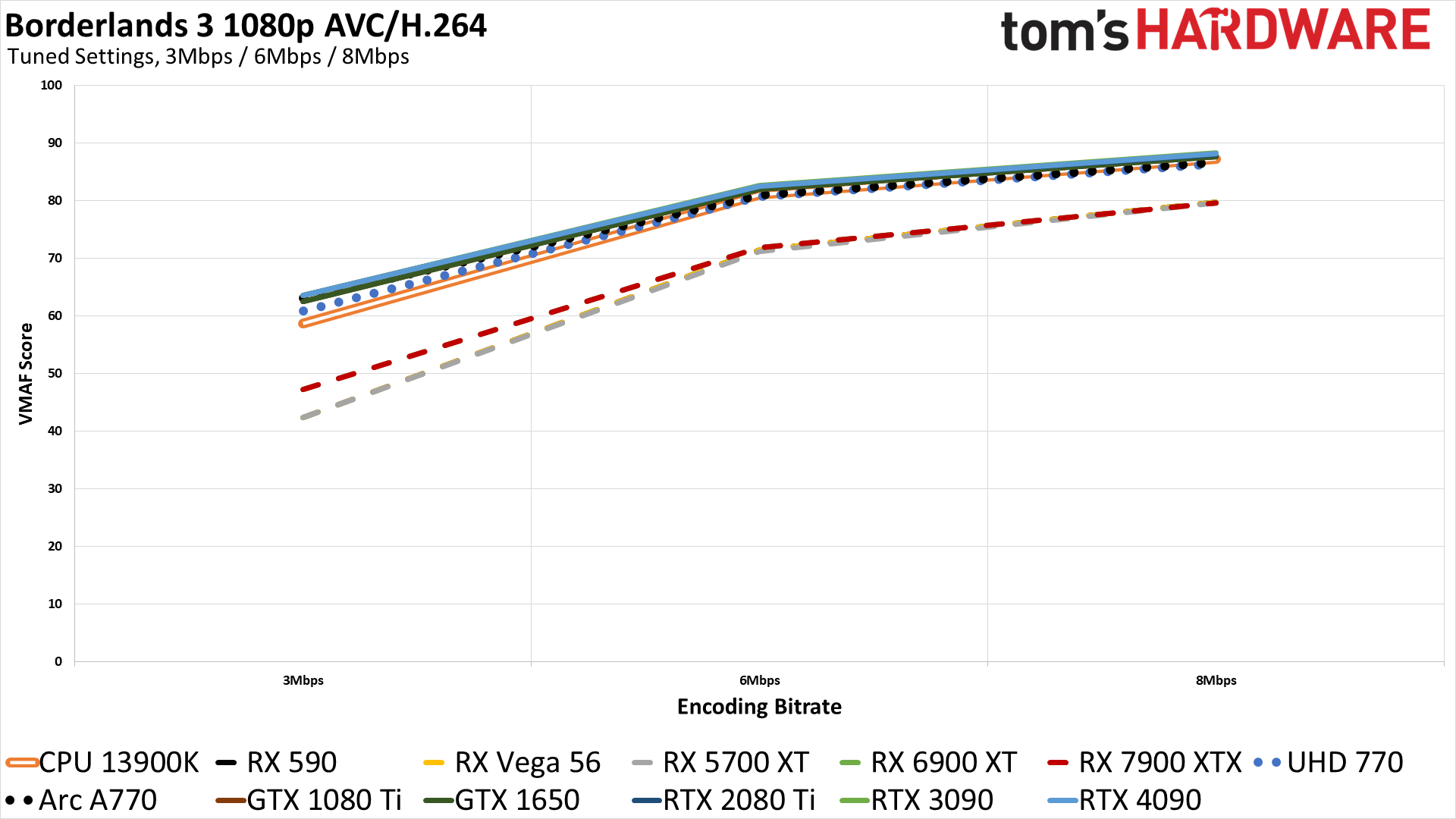

Video Encoding Quality and Performance at 1080p: Borderlands 3

Starting out with a video of Borderlands 3 at 1080p, there are a few interesting trends that we'll see elsewhere. We're discussing the "tuned" results, as they're slightly more apples to apples among the GPUs. Let's start with AVC/H.264, which remains the most popular codec. Even though it's been around for about two decades and has since been surpassed in quality, its effectively royalty-free status made for widespread adoption.

AMD's GPUs rank at the bottom of our charts on this test, with the Polaris, Vega, and RDNA 1 generations of hardware all performing about the same in terms of quality. RDNA 2 improved the encoding a bit, mostly with quality at lower bitrates increasing by about 10%. RDNA 3 appears to use the identical logic from RDNA 2 for H.264, with tied scores on the 6900 XT and 7900 XTX that are a bit higher than the other AMD cards at 3Mbps, but the 6Mbps and 8Mbps results are effectively unchanged.

Nvidia's H.264 implementations come out as the top solutions, in terms of quality, with Intel's Arc just a hair behind — you'd be hard pressed to tell the difference in practice. The Turing, Ampere, and Ada GPUs do just a tiny bit better than the older Pascal video encoder, but there don't seem to be any major improvements in quality.

With our chosen "medium" settings on the CPU encoding, the libx264 codec ends up being roughly at the level of Intel's UHD 770 (Xe LP) graphics, just a touch behind Nvidia's older Pascal-era encoder, at least for these 1080p tests.

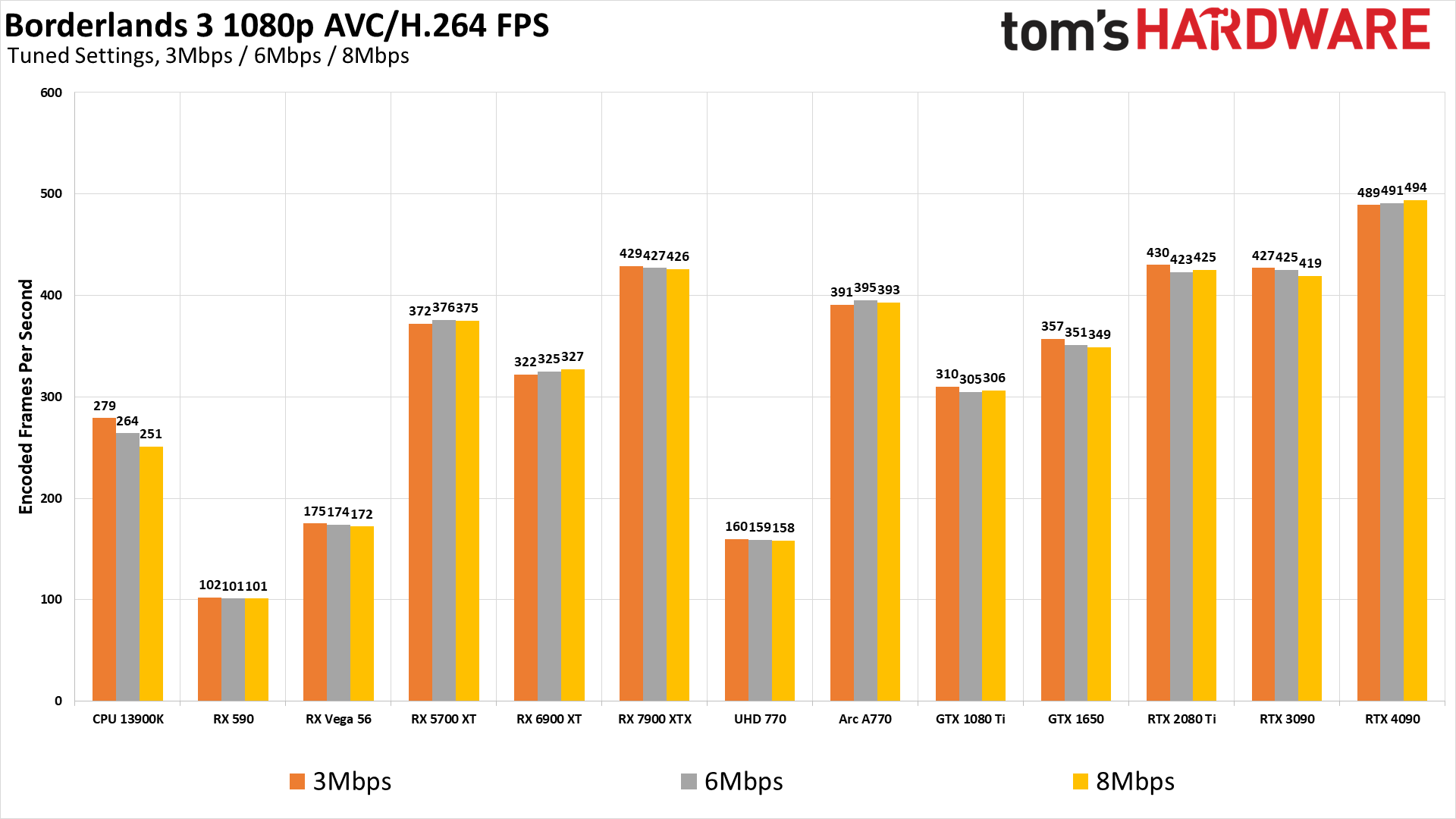

As far as performance goes, which is something of a secondary concern provided you can break 60 fps for livestreaming, the RTX 4090 was fastest at nearly 500 fps, about 15% ahead of the RTX 2080 Ti, RTX 3090, and RX 7900 XTX. Intel Arc comes in third place, then Nvidia's Pascal-era encoder in the GTX 1650, followed by the RX 6900 XT, and then the GTX 1080 Ti's take on Pascal. Note that the GTX 1080 Ti and GTX 1650 had identical quality results, so the difference is just in video engine clocks.

Interestingly, the Core i9-13900K can encode at 1080p faster than the old RX Vega 56, which is also about as fast as the UHD integrated graphics in the Core i9-13900K. AMD's old RX 590 brings up the caboose, at just over 100 fps. Then again, that probably wasn't too shabby back in the 2016–2018 era.

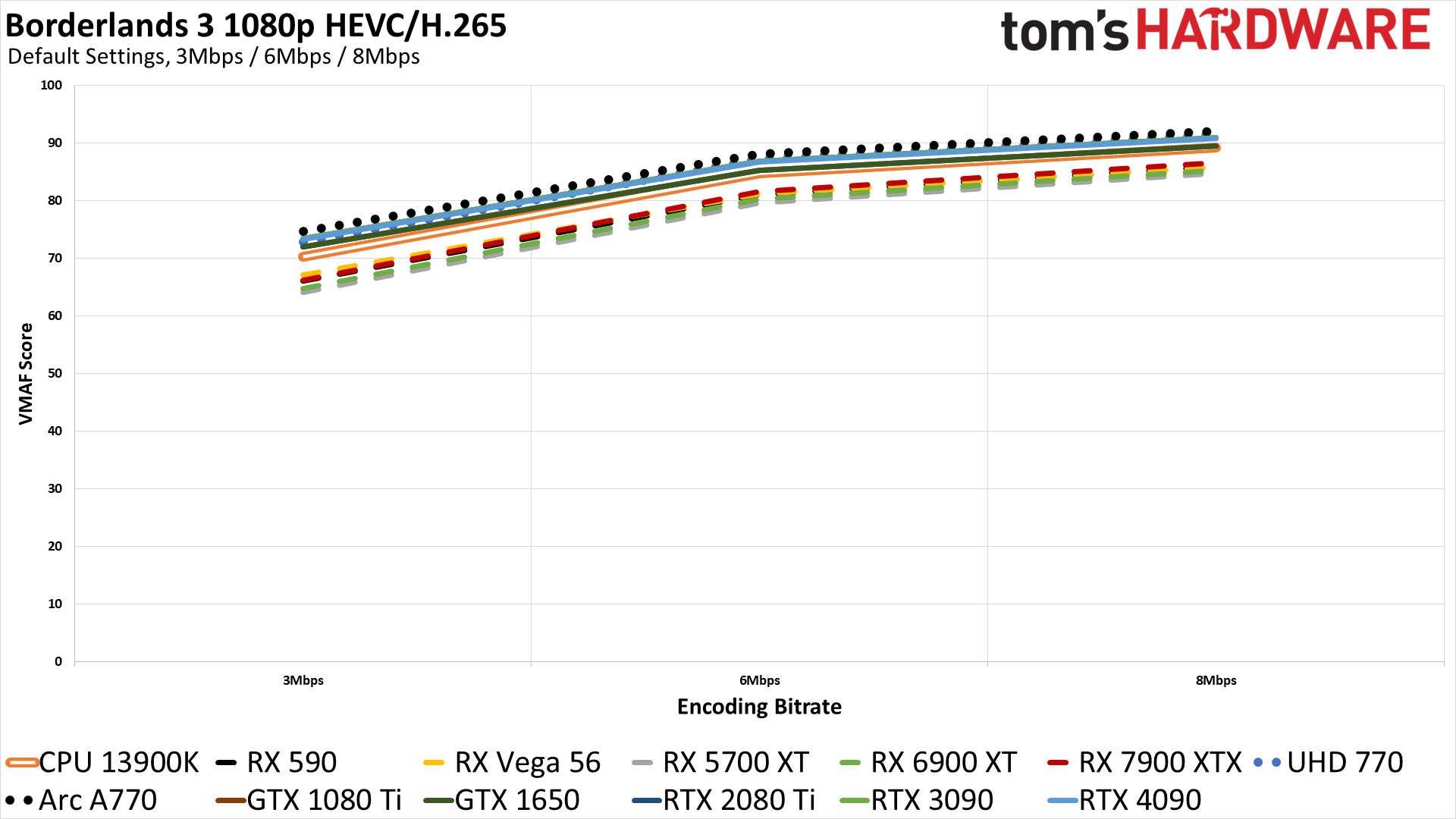

Switching to HEVC provides a solid bump in image quality, and it's been around long enough now that virtually any modern PC should have decent GPU encoding support. The only thing really hindering its adoption in more applications is royalties, and a lot of software opted to stay with H.264 to avoid that.

As far as the rankings go, AMD's various GPUs again fall below the rest of the options in this test, but they're quite a bit closer than in H.264. The RDNA 3 video engine is slightly better than the RDNA 1/2 version, but curiously the older Polaris and Vega GPUs basically match RDNA 3 here.

Nvidia comes in second, with the Turing, Ampere, and Ada video engines basically tied on HEVC quality. Intel's previous generation Xe-LP architecture in the UHD 770 also matches the newer Nvidia GPUs, followed by Nvidia's older Pascal generation and then the CPU-based libx265 "medium" results.

But the big winner for HEVC quality ends up being Intel's Arc architecture. It's not a huge win, but it clearly comes out ahead of the other options across every bitrate. With an 8Mbps bitrate, a lot of the cards also reach the point of being very close (perceptually) to the original source material, with the Arc achieving a VMAF score of 92.1.

For our tested settings, the CPU and Nvidia Pascal results are basically tied, coming in 3~5% above the 7900 XTX. Intel's Arc A770 just barely edges out the RTX 4090/3090 in pure numbers, with a 0.5–1.9% lead, depending on the bitrate. Also note that with scores of 95–96 at 8Mbps, the Arc, Ada, and Ampere GPUs all effectively achieve encodings that would be virtually indistinguishable from the source material.

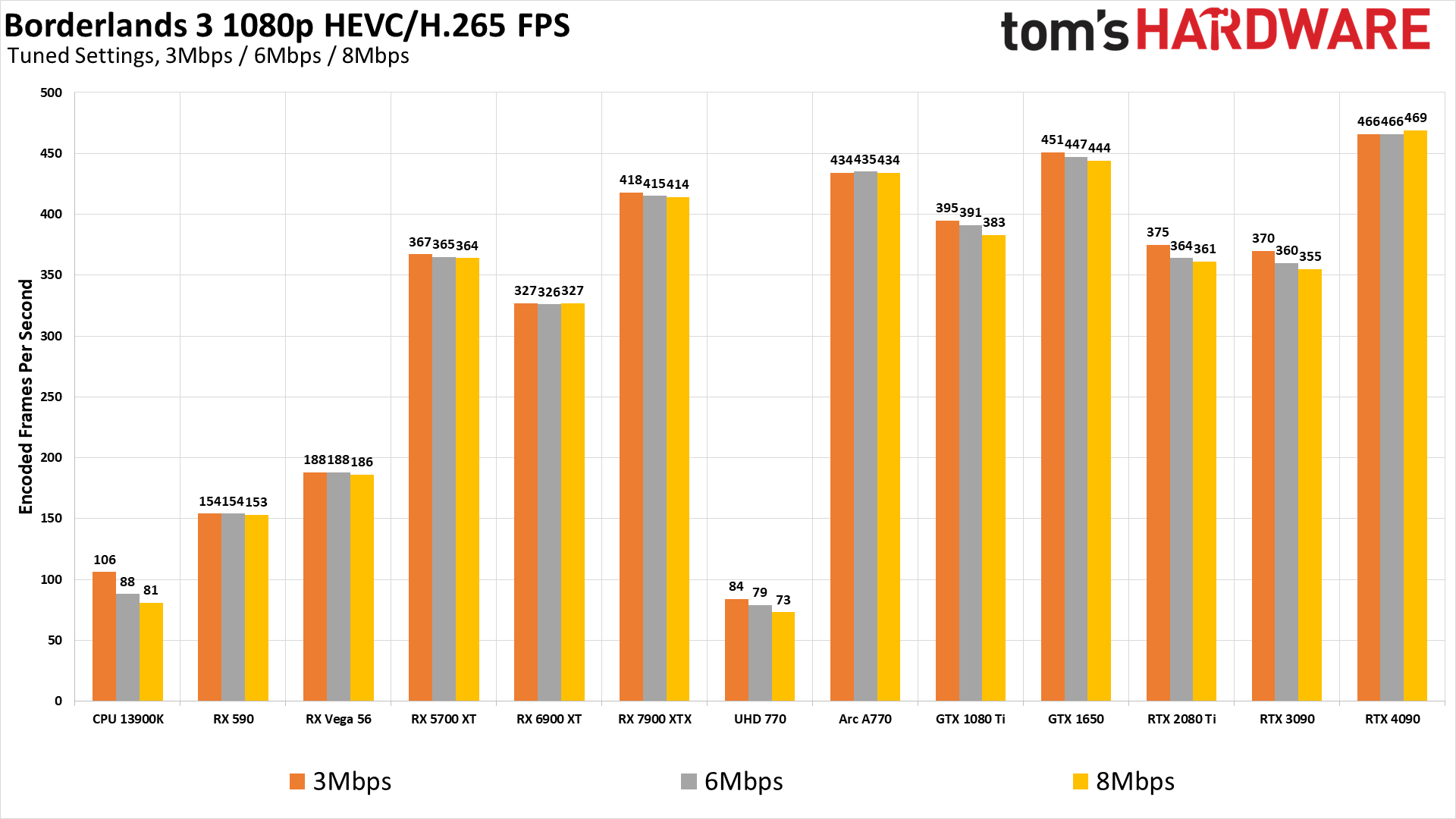

Performance is a bit interesting, especially looking at the generations of hardware. The older GPU models were generally faster at HEVC than H.264 encoding, sometimes by a fairly sizable margin — look at the RX 590, GTX 1080 Ti, and GTX 1650. But the RTX 2080 Ti, RTX 3090, and RTX 4090 are faster at H.264, so the Turing generation and later seem to have deprioritized HEVC speed compared to Pascal. Arc meanwhile delivers top performance with HEVC, whereas the Xe-LP UHD 770 does much better with H.264.

There's a big shift in overall quality, particularly at the lower 3Mbps bitrate. The worst VMAF results are now in the mid 60s, with the CPU, Nvidia GPUs, and Intel GPUs all staying above 80. But let's see if AV1 can do any better.

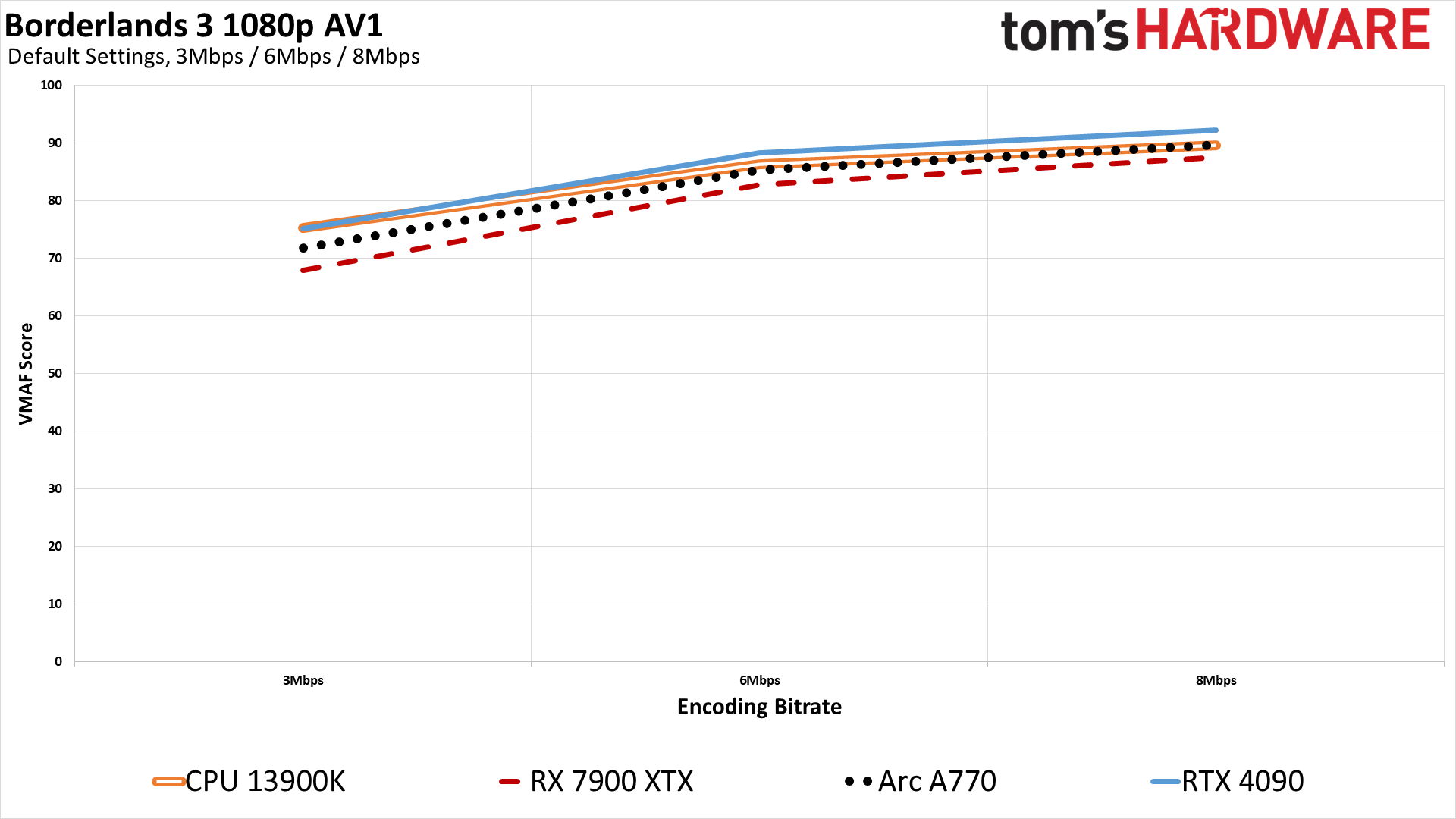

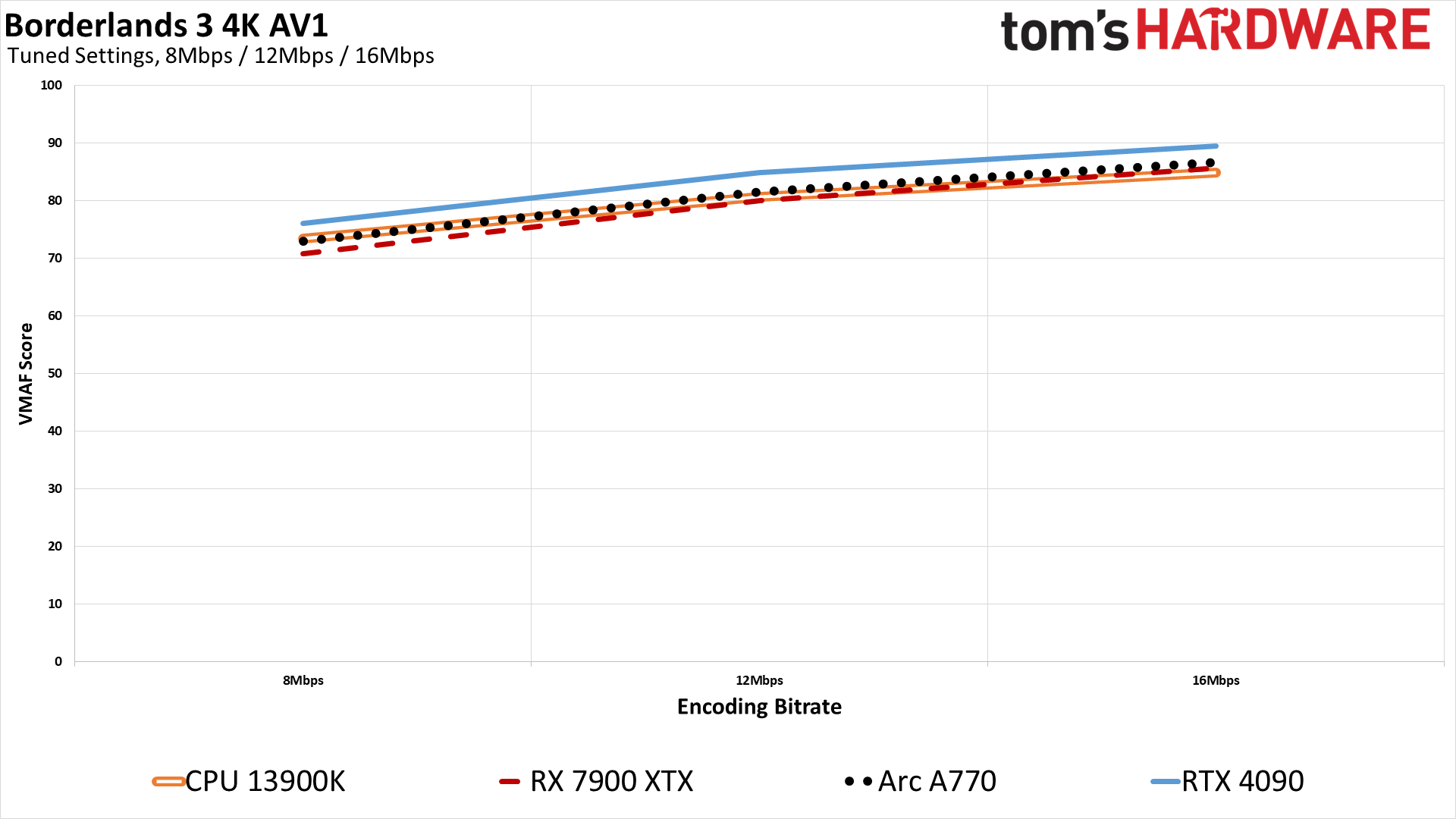

Is AV1 better than HEVC on quality? Perhaps, depending on which GPU (or CPU) you're using. Overall, AV1 and HEVC deliver nearly equivalent quality, at least for the four samples we have. There are some differences, but a lot of that comes down to the various implementations of the codecs.

AMD's RDNA 3, for example, does better with AV1 than with HEVC by 1–2 points. Nvidia's Ada cards achieve their best results so far, with about a 2-point improvement over HEVC. Intel's Arc GPUs go the other way and score 2–3 points lower in AV1 versus HEVC. As for the libsvtav1 codec CPU results, it's basically tied with HEVC at 8Mbps, but leads by 1.6 points at 6Mbps and has a relatively substantial 5-point lead at 3Mbps.

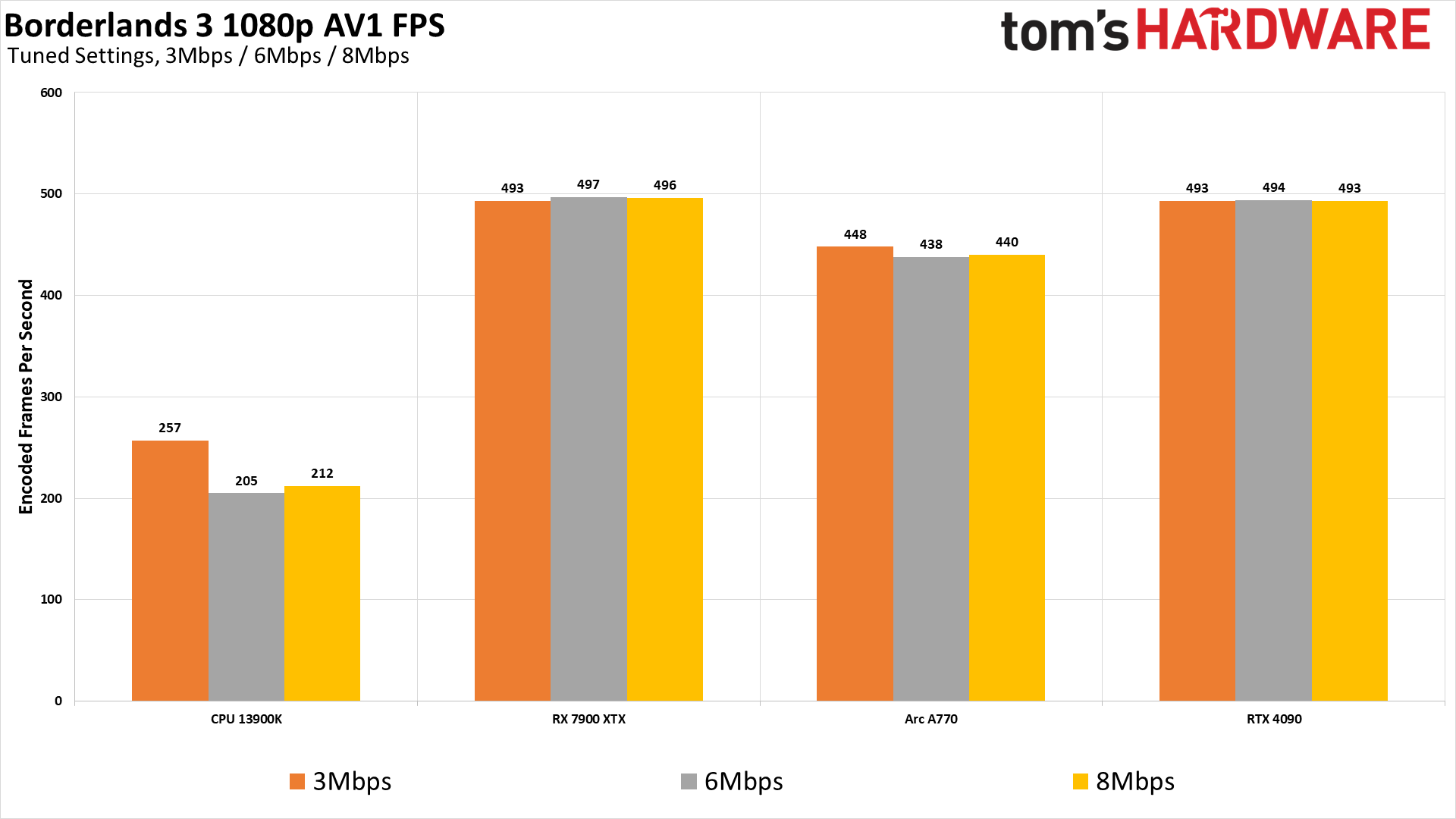

Given the cost of RX 7900-series and RTX 40-series cards, the only practical AV1 option right now for a lot of people will be the Arc GPUs. The A380 incidentally delivered identical quality results to the A770 (down to six decimal places), and it was actually slightly faster — probably because the A380 card from Gunnir that we used has a factory overclock. Alternatively, you could opt for CPU-based AV1 encoding, which nearly matches the GPU encoders and can still be done at over 200 fps on a 13900K.

That's assuming you even want to bother with AV1, which at this stage still doesn't have as widespread support as HEVC. (Try dropping an AV1 file into Adobe Premiere, for example.) It's also interesting how demanding HEVC encoding can be on the CPU, where AV1 by comparison looks pretty "easy." In some cases (at 4K), the CPU encoding of AV1 was actually faster than H.264 encoding, though that will obviously depend on the settings used for each, as well as your particular CPU.

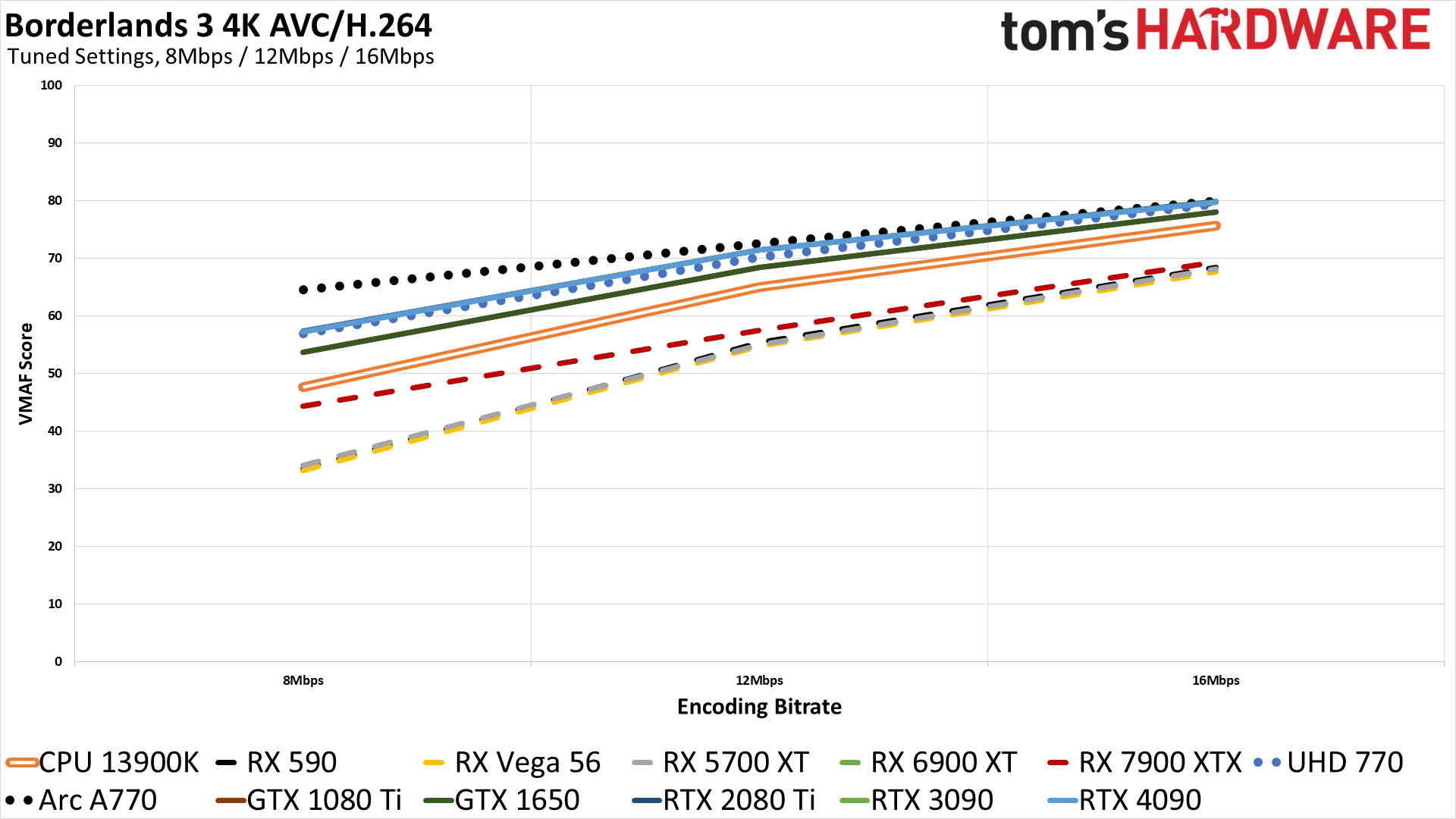

Video Encoding Quality and Performance at 4K: Borderlands 3

Moving up to 4K encoding, the bitrate demands with H.264 become significantly higher than at 1080p. With 8Mbps, Intel's Arc claims top honors with a score of just 65, while Nvidia's Ada/Ampere/Turing GPUs (which had effectively the same VMAF results) land at around 57, right with the Intel UHD 770. Nvidia's Pascal generation encoder gets 54, then the CPU encoder comes next at 48. Finally, AMD's RDNA 2/3 get 44 points, while AMD's older GPUs are rather abysmal at just 33 points.

Upping the bitrate to 12Mbps and 16Mbps helps quite a bit, naturally, though even the best result for 4K H.264 encoding with 16Mbps is still only 80 — a "good" result but not any better than that. You could get by with 16Mbps at 4K and 60 fps in a pinch, but really you need a modern codec to better handle higher resolutions with moderate bitrates.
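One way to see why 4K is so much harder on the bitrate budget is to work out the bits available per pixel per frame. The Python sketch below does that arithmetic for the resolutions and bitrates tested here, assuming 60 fps and the usual 1920x1080 and 3840x2160 frame sizes; the function name is just for illustration.

```python
def bits_per_pixel(bitrate_mbps: float, width: int, height: int, fps: int = 60) -> float:
    """Average encoded bits available for each pixel of each frame."""
    return bitrate_mbps * 1_000_000 / (width * height * fps)


cases = [
    ("1080p @ 3Mbps", 3, 1920, 1080),
    ("1080p @ 8Mbps", 8, 1920, 1080),
    ("4K @ 8Mbps", 8, 3840, 2160),
    ("4K @ 16Mbps", 16, 3840, 2160),
]
for label, mbps, width, height in cases:
    print(f"{label}: {bits_per_pixel(mbps, width, height):.4f} bits/pixel")
```

With four times as many pixels per frame, 4K at 8Mbps works out to roughly 0.016 bits per pixel, which is less than 1080p gets at 3Mbps (about 0.024), and 4K at 16Mbps is equivalent to 1080p at just 4Mbps.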

AMD made improvements with RDNA 2, while RDNA 3 appears to use the same core algorithm (identical scores between the 6900 XT and 7900 XTX), just with higher performance. But the gains made over the earlier AMD GPUs mostly materialize at the lower bitrates; by 16Mbps, the latest 7900 XTX only scores 1–2 points higher than the worst-case Vega GPU.

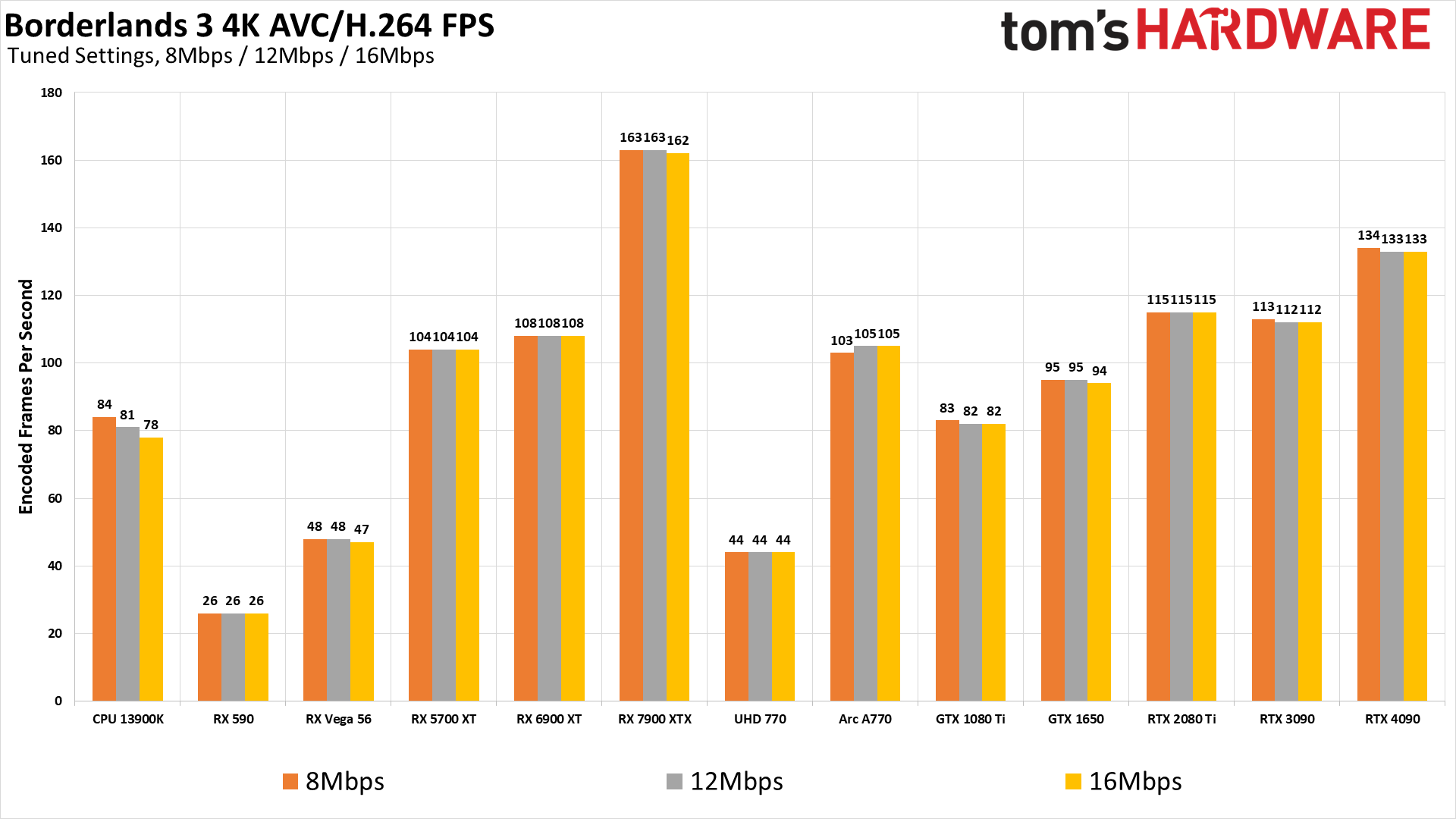

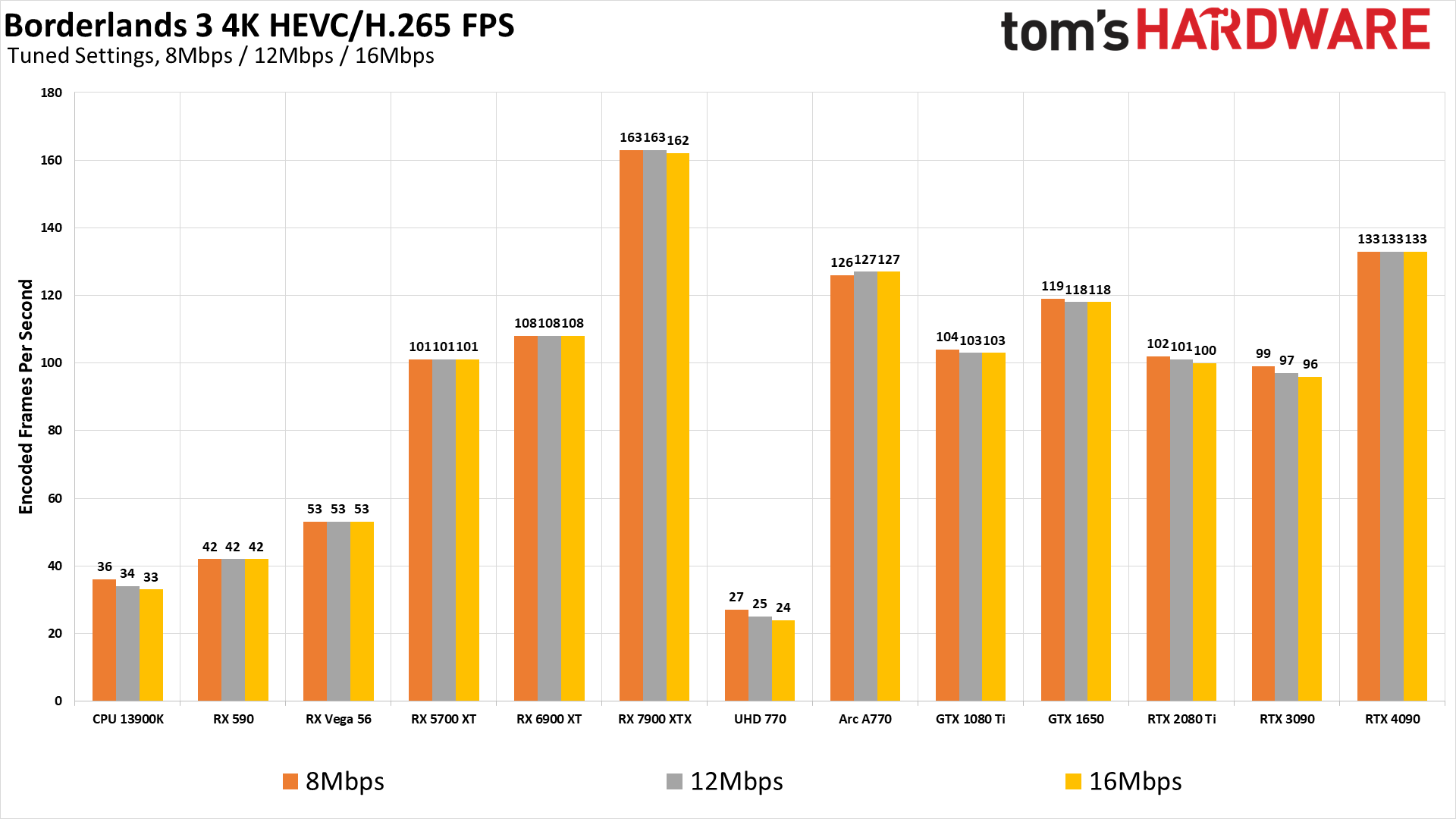

Encoding performance at 4K is a lot more demanding, as expected. Most of the solutions are roughly 1/3 as fast as at 1080p, and in some cases it can even be closer to 1/4 the performance. AMD's older Polaris GPUs can't manage even 30 fps based on our testing, while the Vega cards fall well below 60 fps. The Core i9-13900K does plug along at around 80 fps, though if you're playing a game you may not want to devote that much CPU horsepower to the task of video encoding.

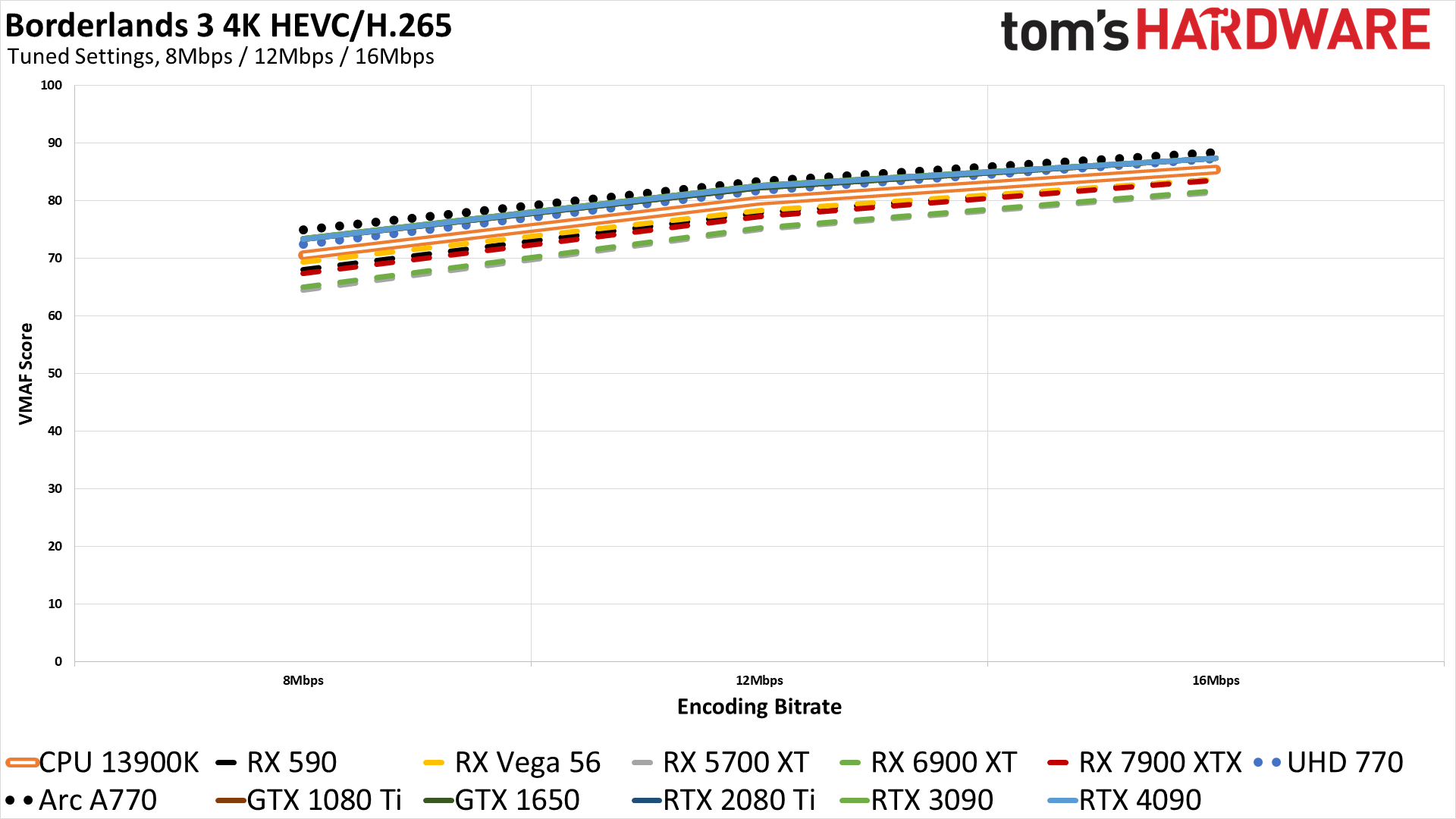

HEVC and AV1 encoding were designed to scale up to 4K and beyond, and our charts prove this point nicely. HEVC and AV1 with 8Mbps can generally beat the quality of H.264 with 12Mbps. All of the GPUs (and the CPU) can also break 80 on the VMAF scale with 16Mbps, with the top results flirting with scores of 90.

If you're after image quality, AMD's latest RDNA 3 encoder still falls behind. It can be reasonably fast at encoding, but even Nvidia's Pascal era hardware generally delivers superior results compared to anything AMD has, at least with our ffmpeg testing. Even the GTX 1650 with HEVC can beat AMD's AV1 scores, at every bitrate, though the 7900 XTX does score 2–3 points higher with AV1 than it does with HEVC. Nvidia's RTX 40-series encoder meanwhile wins in absolute overall quality, with its AV1 results edging past Intel's HEVC results at 4K at the various bitrates.

AMD has informed us that it's working with ffmpeg to get some quality improvements into the code, and we'll have to see how that goes. We don't know if it will improve quality a lot, bringing AMD up to par with Nvidia, or if it will only be one or two points. Still, every little bit of improvement is good.

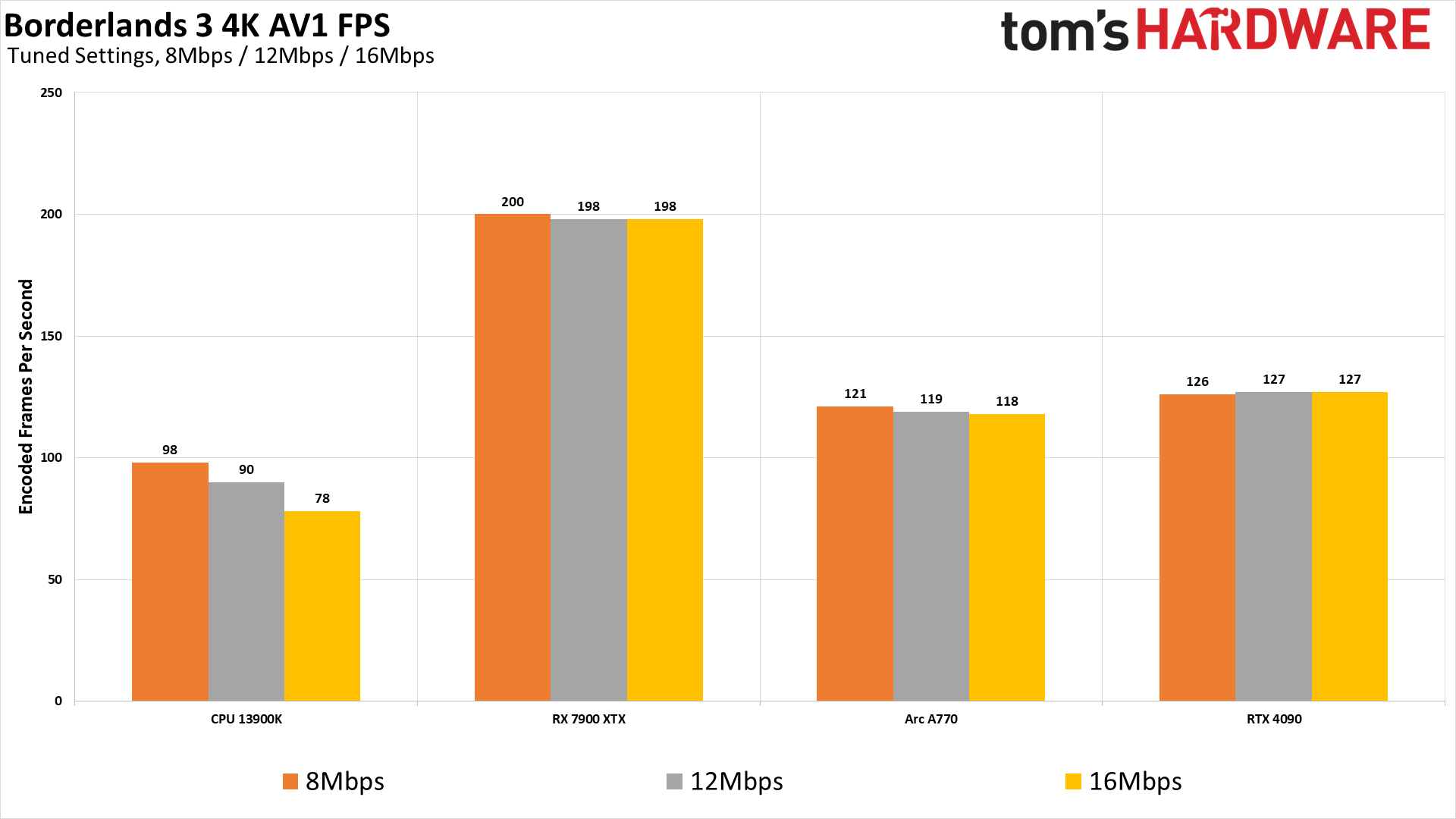

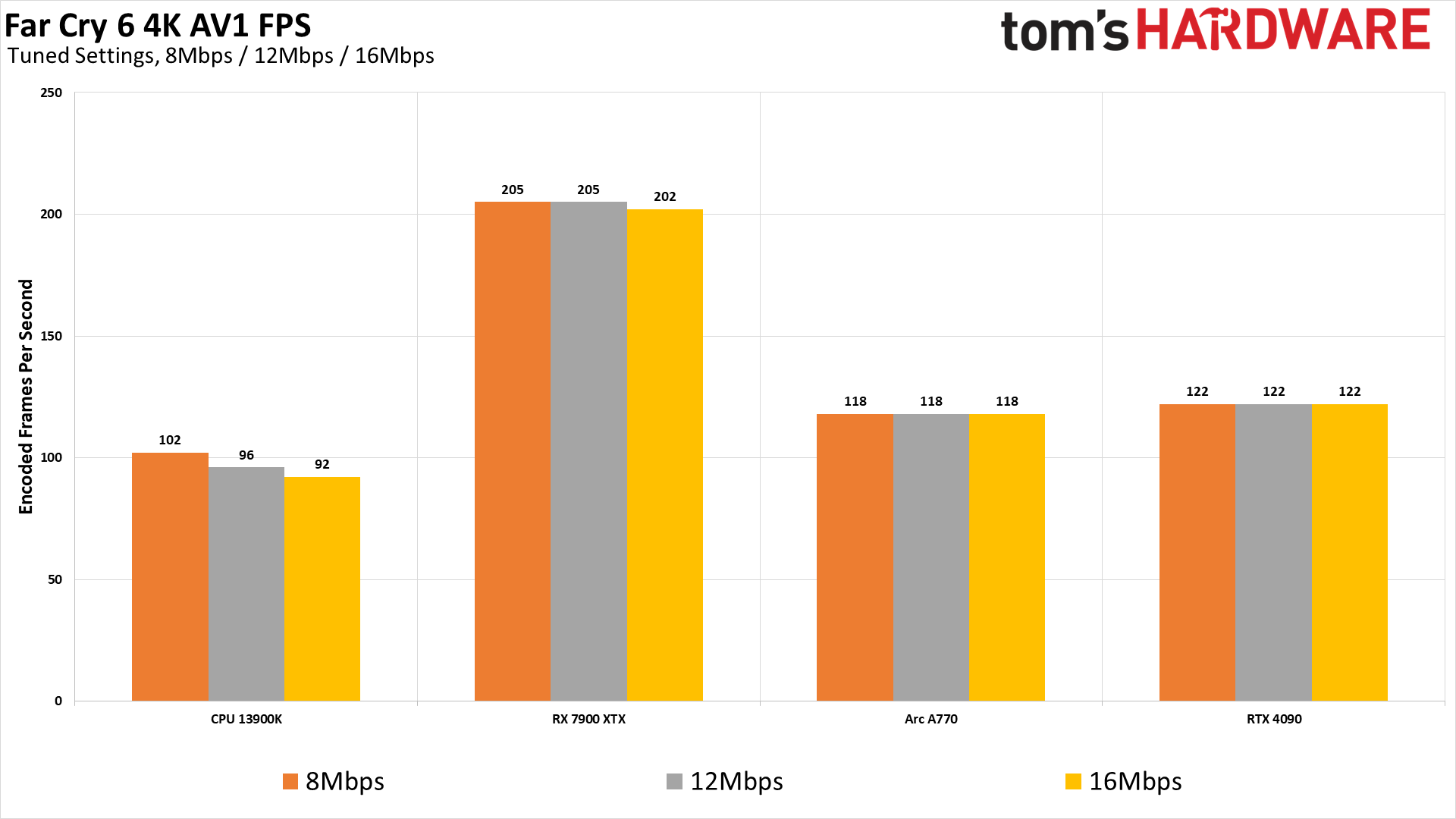

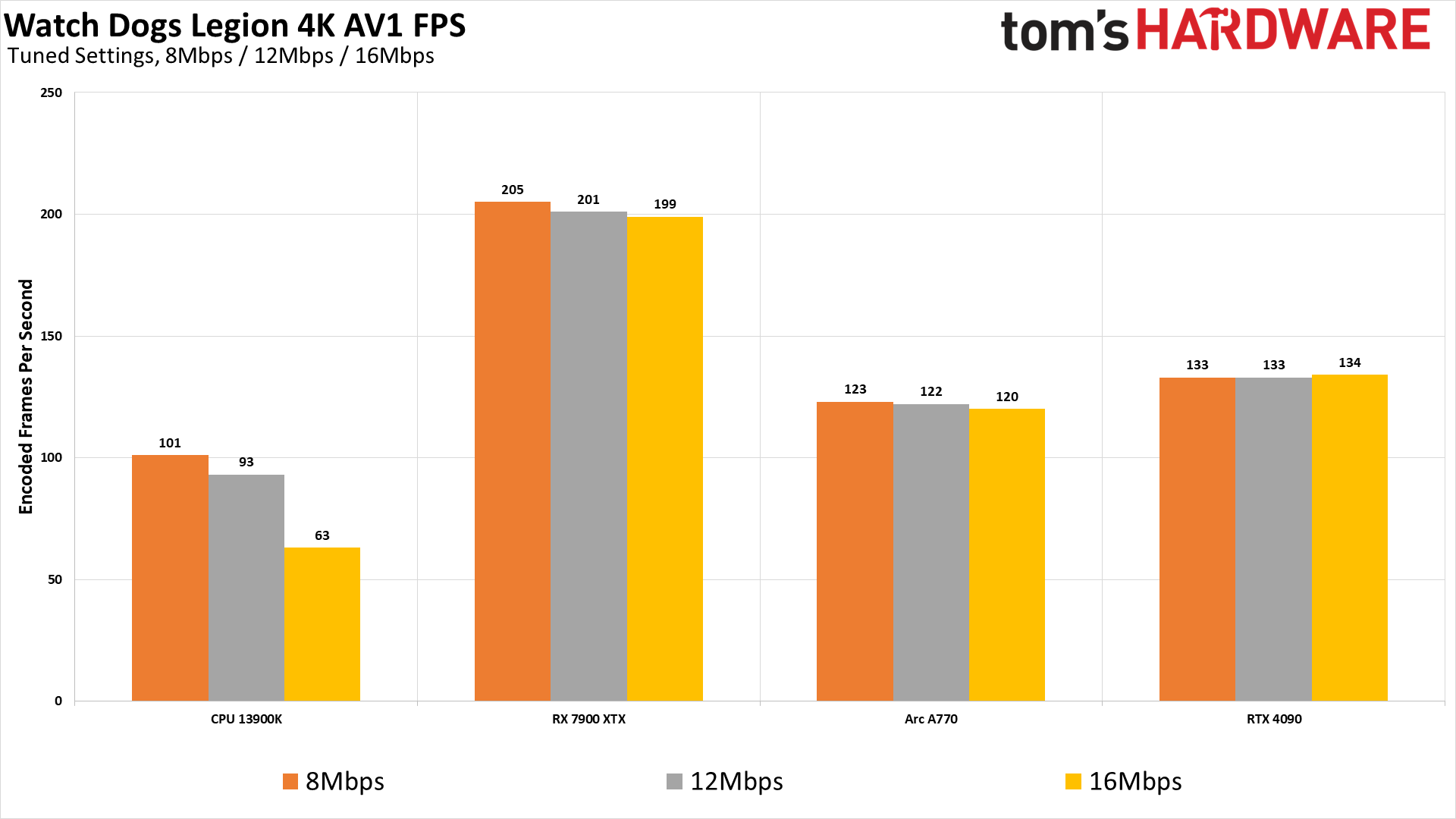

HEVC and AV1 aren't really any better than H.264 from the performance perspective. AMD's 7900 XTX does hit 200 fps with AV1, the fastest of the bunch by a fairly wide margin, which again leaves us some hope for quality improvements. Otherwise, for 4K HEVC, you'll want a Pascal or later Nvidia card, an RDNA or later AMD card, or Intel's Arc to handle 4K encoding at more than 60 fps (assuming your GPU can actually generate frames that quickly for livestreaming).

Also notice the CPU results for HEVC versus AV1 when it comes to performance. Some of that will be due to the selected encoder (libsvtav1 and libx265), as well as the selected preset. Still, considering how close the VMAF results are for HEVC and AV1 encoding via the CPU, with more than double the throughput you can certainly make a good argument for AV1 encoding here — and it's actually faster than our libx264 results, possibly due to better multi-threading scaling in the algorithm.

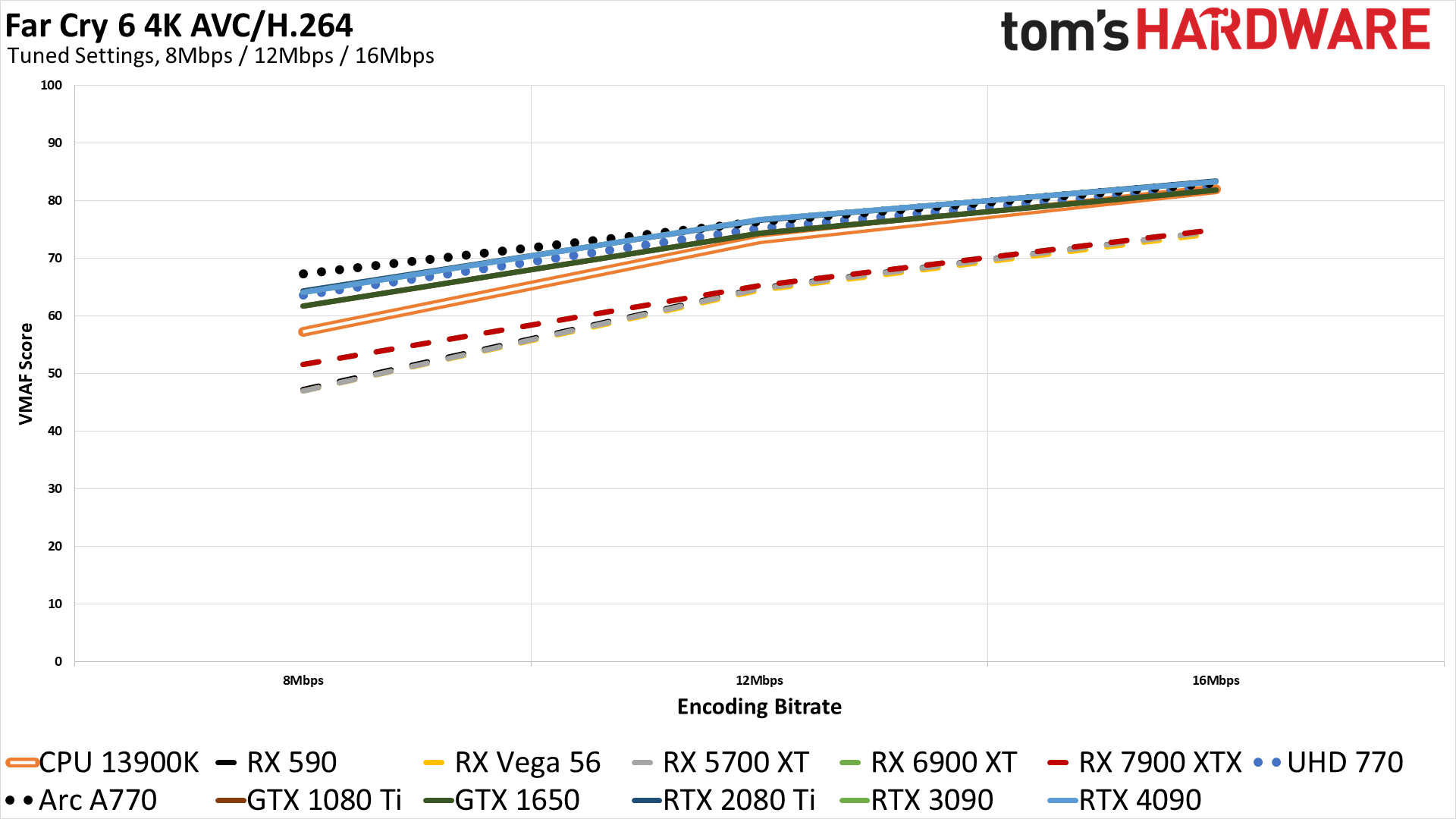

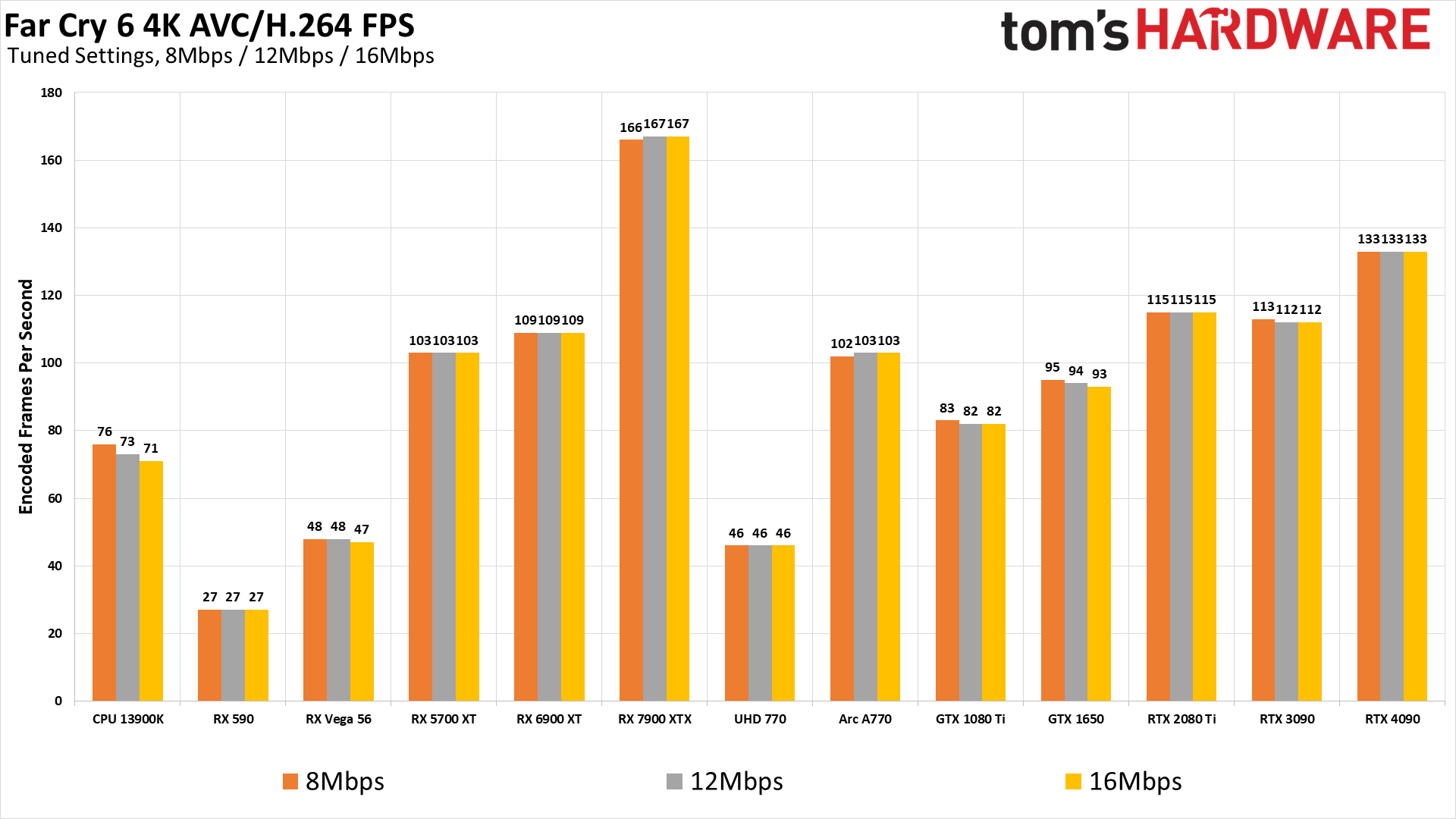

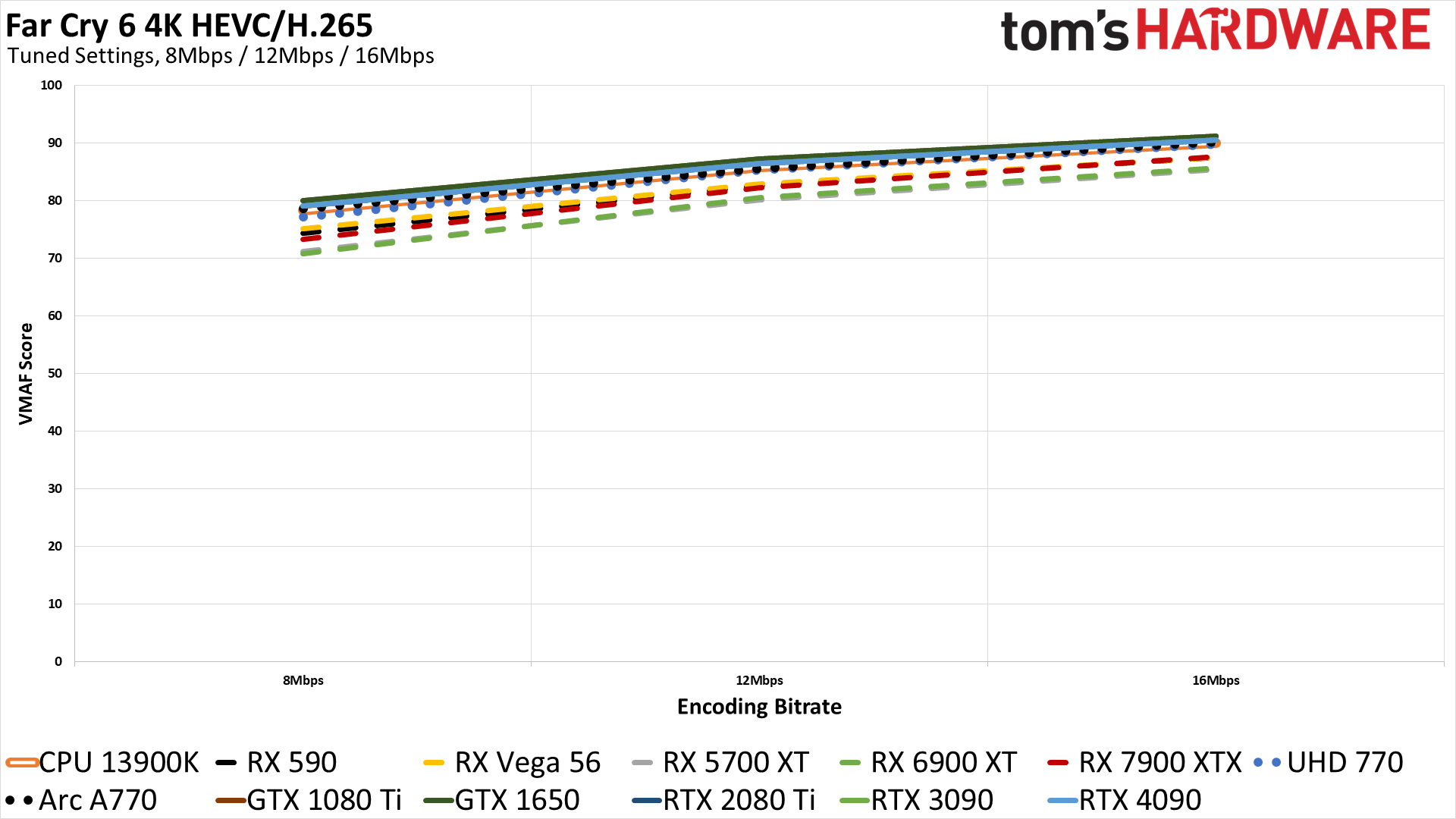

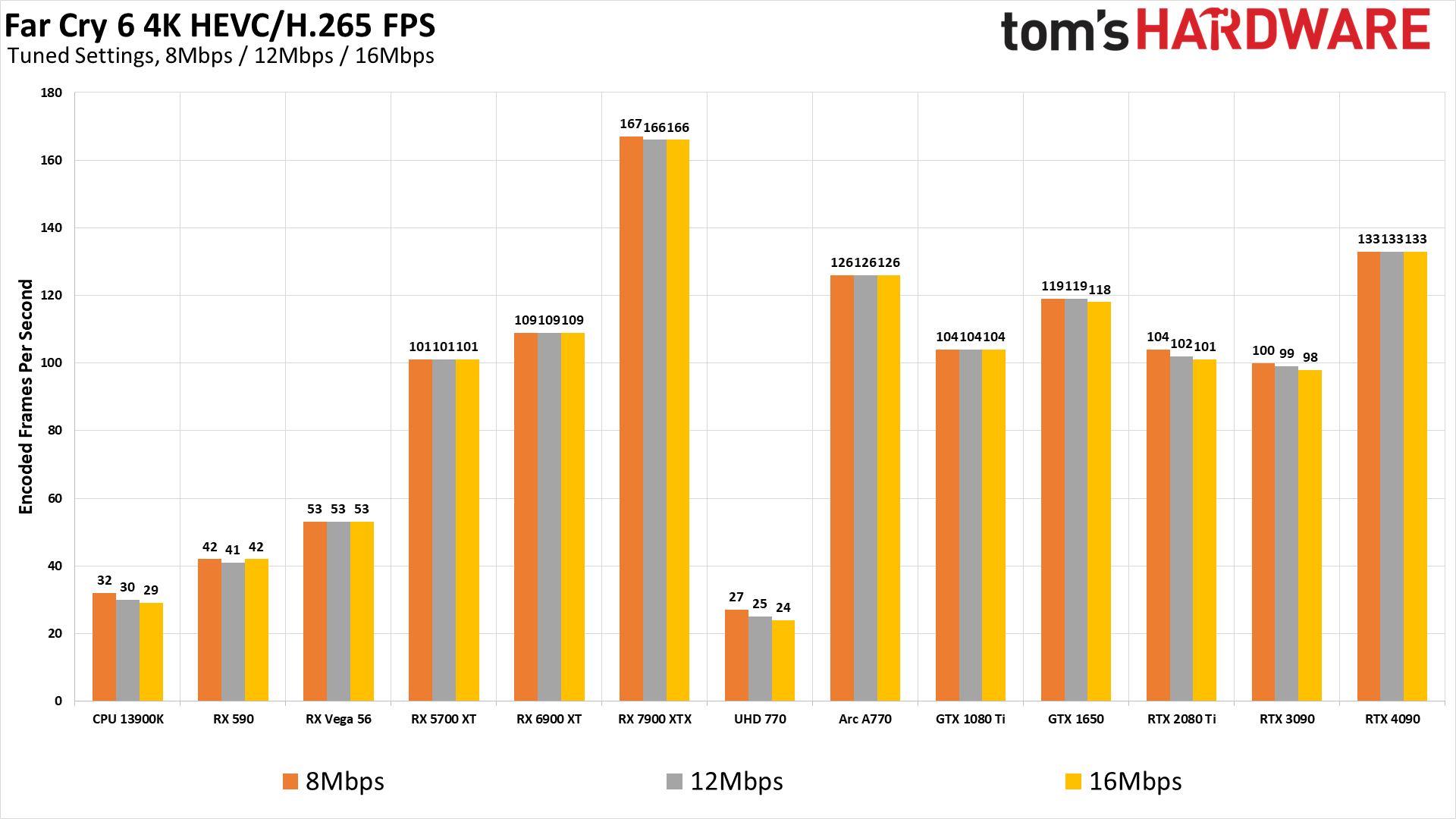

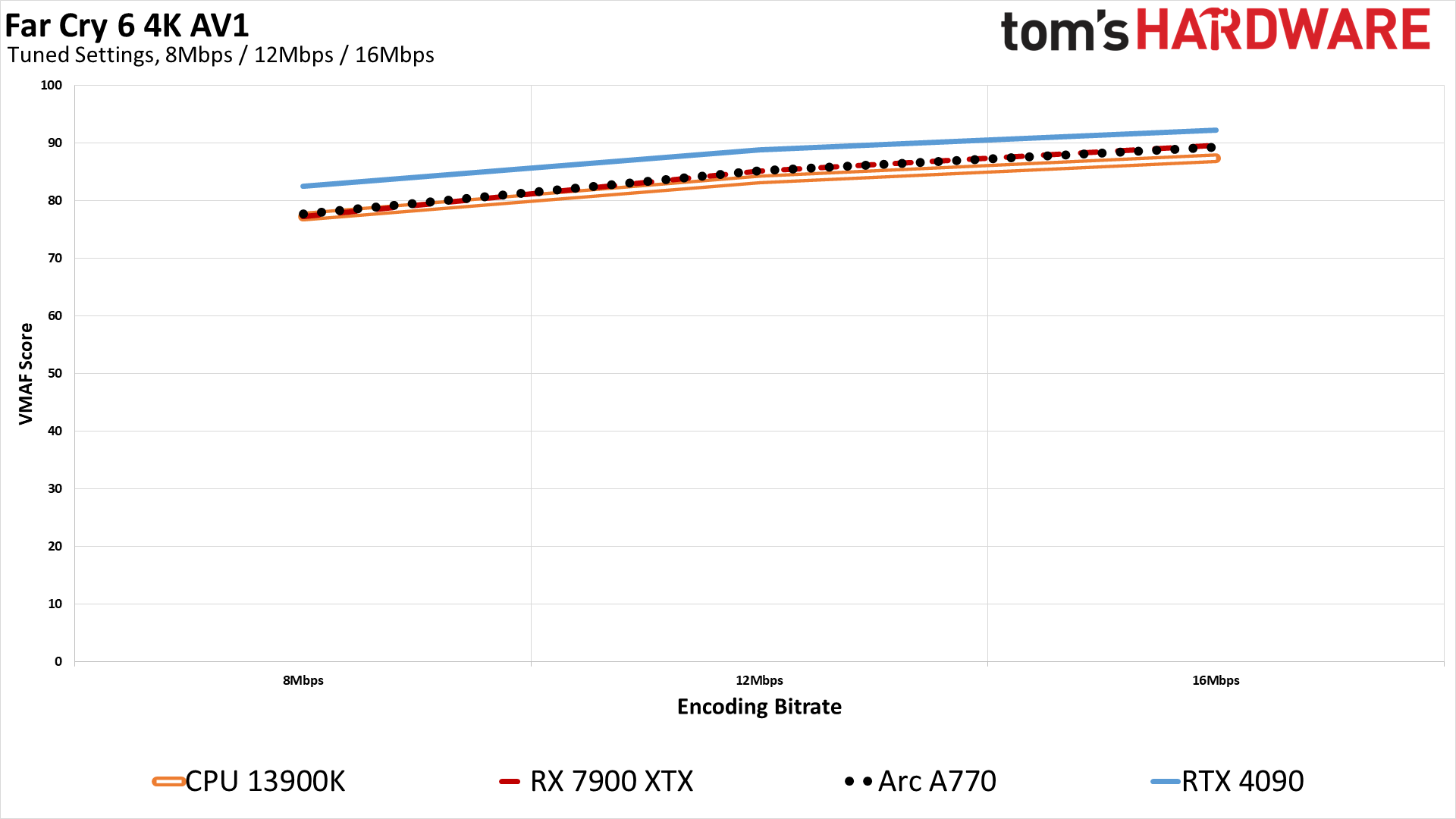

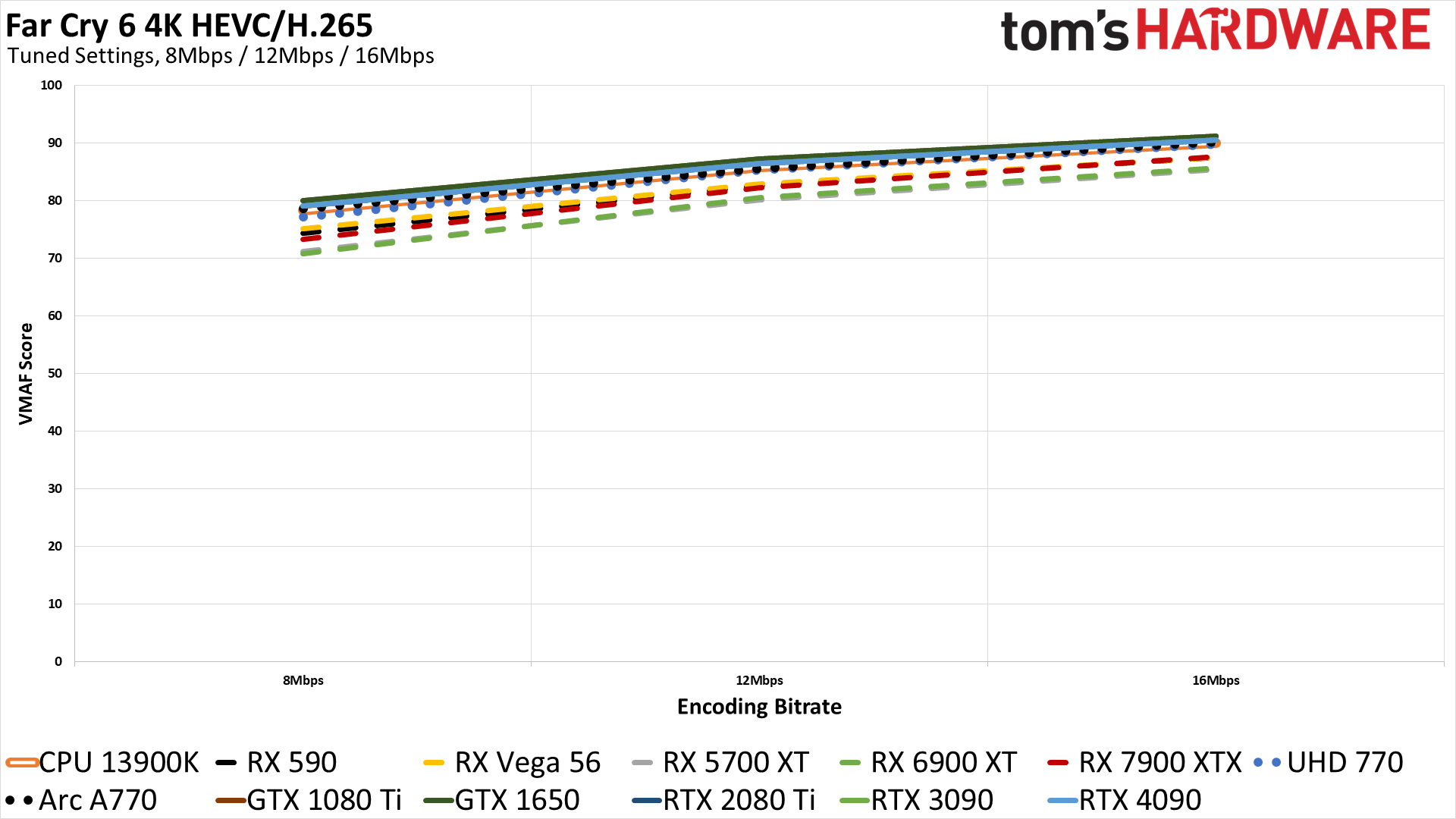

Video Encoding Quality and Performance at 4K: Far Cry 6

The results with our other two gaming videos at 4K aren't substantially different from what we saw with Borderlands 3 at 4K. Far Cry 6 ends up with higher VMAF scores across all three codecs, with some minor variations in performance, but that's about all we have to add to the story.

Given we haven't spent much time looking at it so far, let's take a moment to discuss Intel's integrated UHD Graphics 770 in more detail — the integrated graphics on 12th Gen Alder Lake and 13th Gen Raptor Lake CPUs. The quality is a bit lower than Arc, with the H.264 results generally falling between Nvidia's Pascal and the more recent Turing/Ampere/Ada generation in terms of VMAF scores. HEVC encoding on the other hand is only 1–2 points behind Arc and the Nvidia GPUs in most cases.

Performance is a different story, and it's a lot lower than we expected, far less than half of what the Arc can do. Granted, Arc has two video engines, but the UHD 770 only manages 44–48 fps at 4K with H.264, and that drops to 24–27 fps with HEVC encoding. For HEVC encoding, the 12900K CPU encoding was faster, and with AV1 it was about three times as fast as the UHD 770's HEVC result while still delivering better fidelity.
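To put those frame rates in context for livestreaming, the quick Python sketch below converts an encoding speed into a real-time factor against a 60 fps source and estimates how long a clip would take to encode. The one-minute clip length is an arbitrary example value, and the function names are hypothetical.

```python
def realtime_factor(encode_fps: float, source_fps: float = 60) -> float:
    """Values of 1.0 or higher mean the encoder keeps up with the source."""
    return encode_fps / source_fps


def encode_seconds(duration_s: float, encode_fps: float, source_fps: float = 60) -> float:
    """Wall-clock time needed to encode a clip of `duration_s` seconds."""
    return duration_s * source_fps / encode_fps


# UHD 770 at 4K: low and high ends of the H.264 and HEVC ranges quoted above.
for label, fps in (("H.264 low", 44), ("H.264 high", 48), ("HEVC low", 24), ("HEVC high", 27)):
    print(f"{label}: {realtime_factor(fps):.2f}x real time, "
          f"{encode_seconds(60, fps):.0f} s for a one-minute clip")
```

Anything below 1.0x cannot sustain a 4K 60 fps livestream, which is the case for every UHD 770 figure here.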

Intel does support some "enhanced" encoding modes if you have both UHD 770 and an Arc GPU. These modes are called Deep Link, but we suspect given the slightly lower quality and performance of the Xe graphics solutions that it will mostly be of benefit in situations where maximum performance is more important than quality.

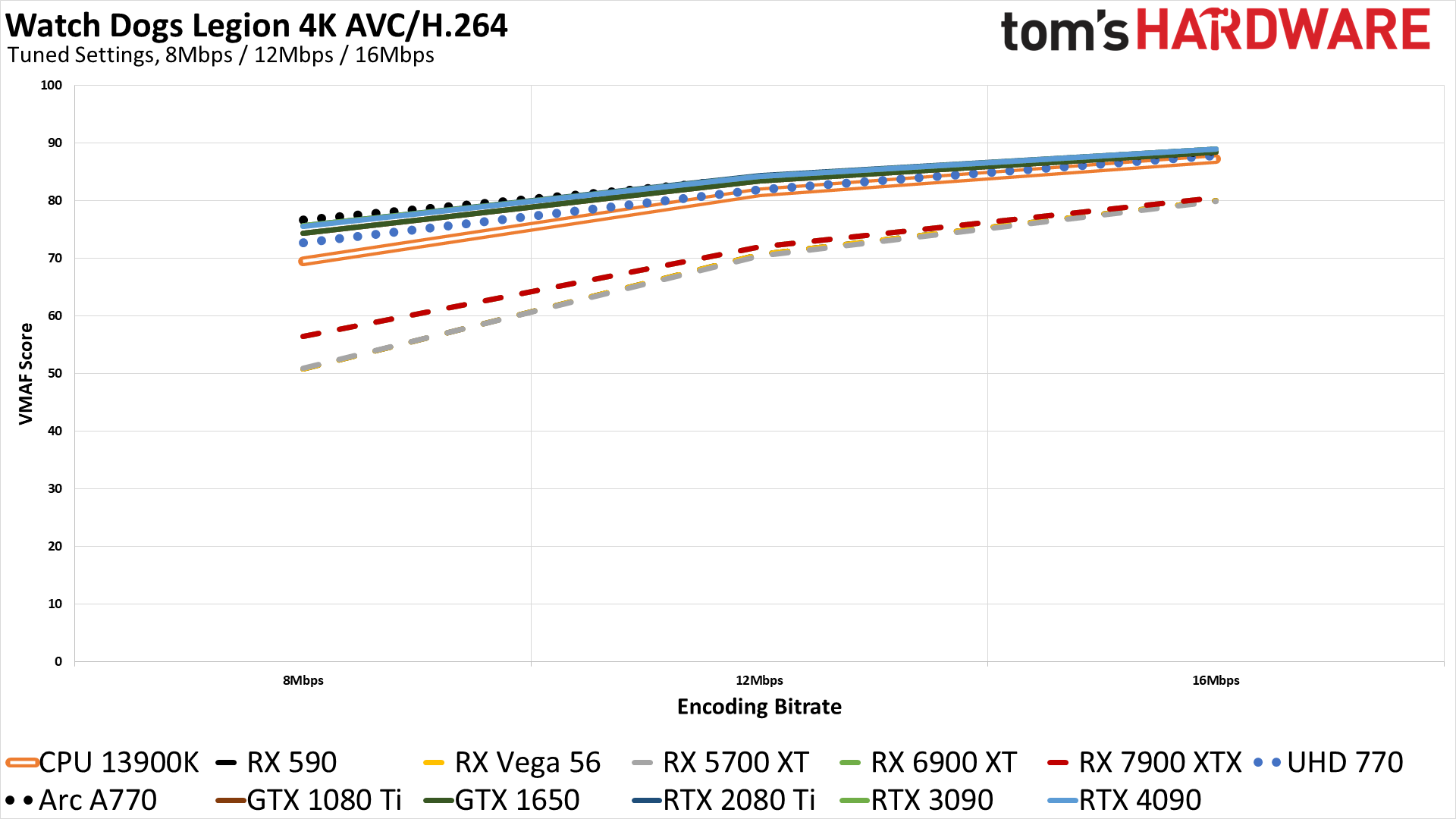

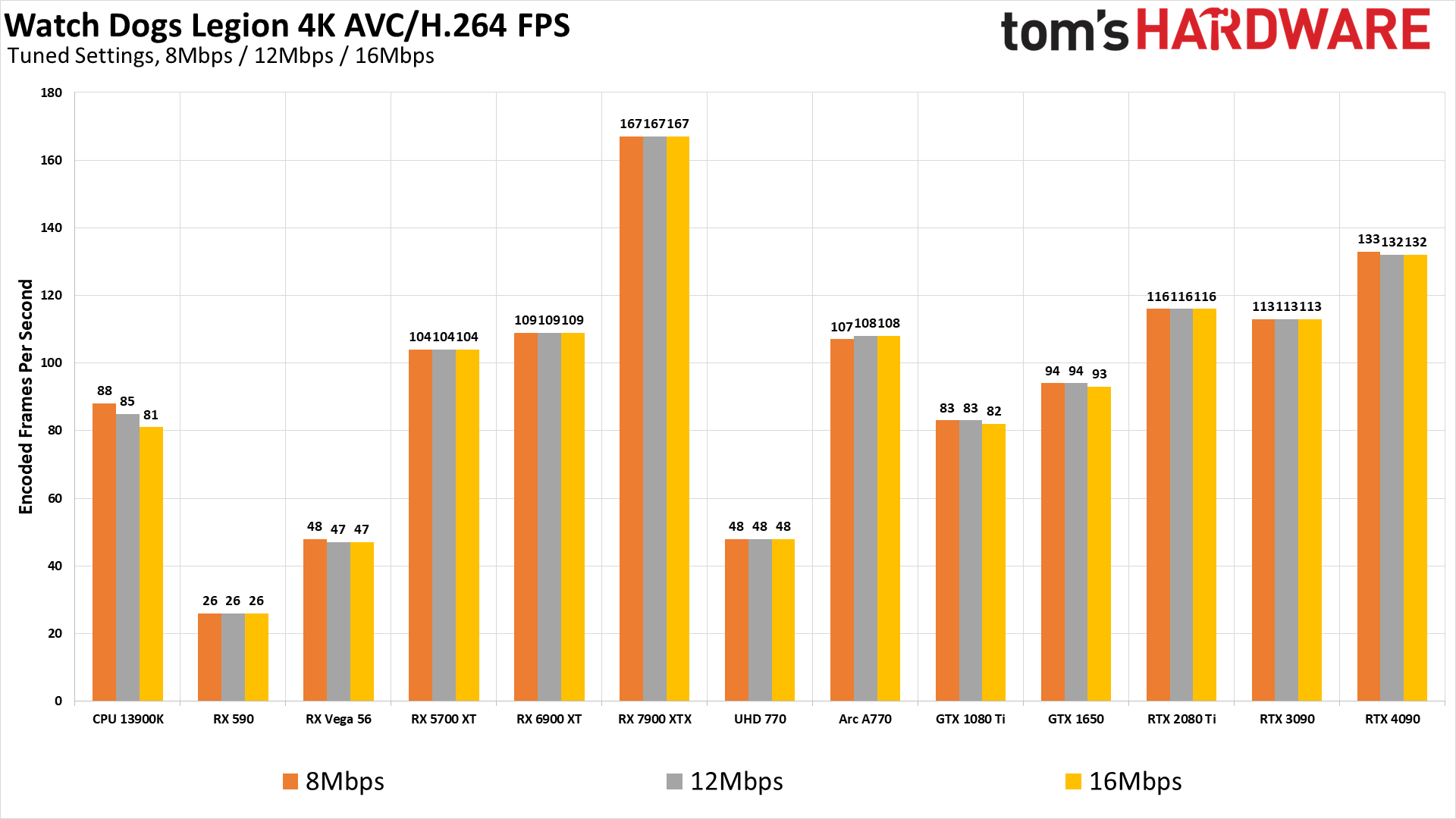

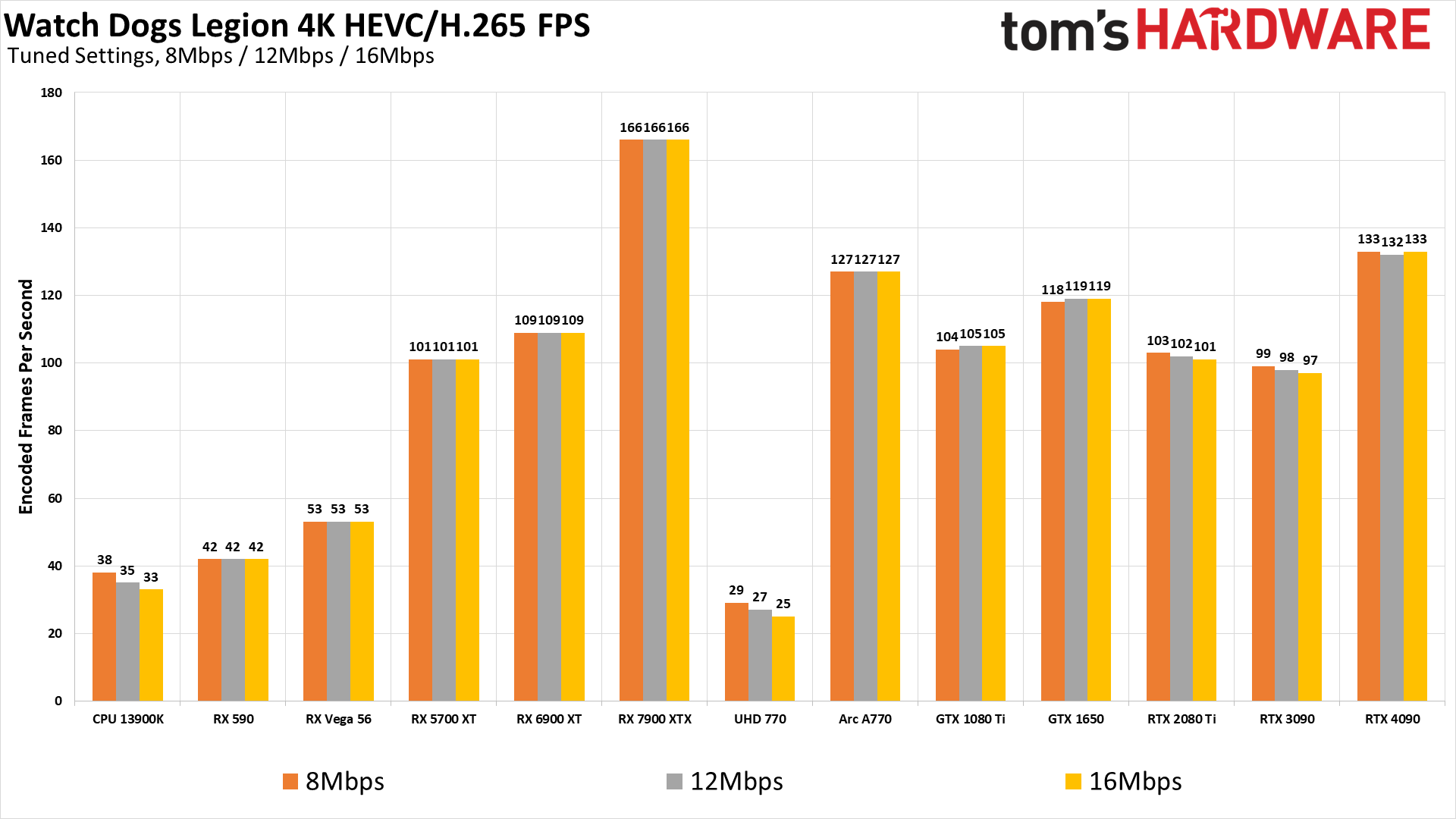

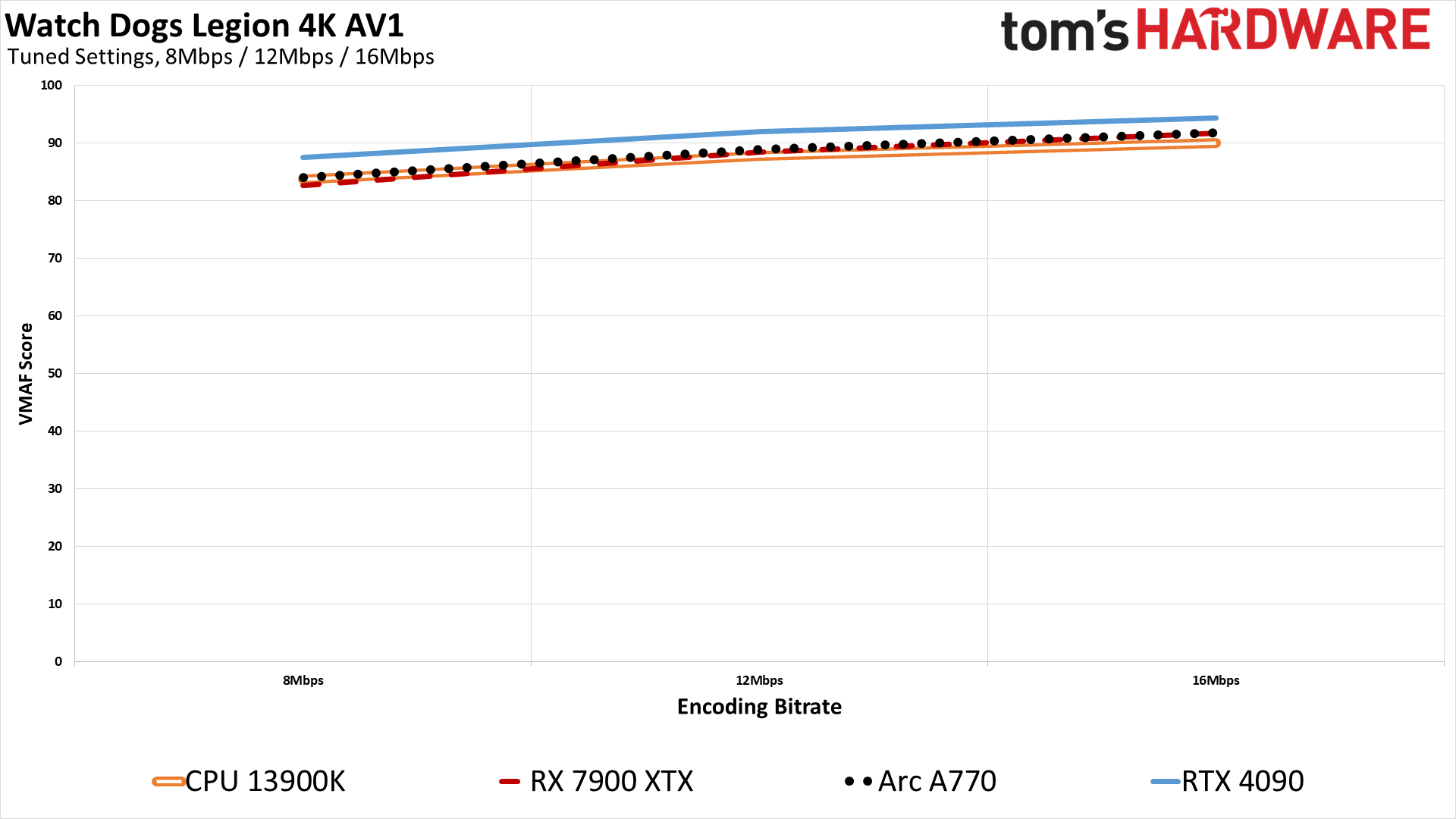

Video Encoding Quality and Performance at 4K: Watch Dogs Legion

Watch Dogs Legion repeats the story, but again with increased VMAF scores compared to Far Cry 6. That's probably due to there being less rapid movement in the camera, so the frame-to-frame changes are smaller, which allows for better compression and thus higher objective quality at the same bitrate.

Performance ends up nearly the same as Far Cry 6 in most cases, and there's some variability between runs that we're not really taking into account — we'd estimate about a 3% margin of error for the FPS, since we only did each encode once per GPU.

As an alternative view of all the charts, here's the raw performance and quality data summarized in table format. Note that things are grouped by bitrate and then codec, so it's perhaps not that easy to parse, but this will give you all the exact VMAF scores if you're curious — and you can also see how little things changed between some of the hardware generations in terms of quality.

We also have a second table, this time colored based on performance and quality relative to the best result for each codec and bitrate. One interesting aspect that's readily visible is how fast AMD's encoding hardware is at 4K with the RX 7900 XTX, and similarly you can see how fast the RTX 40-series encoder is at 1080p. Quality meanwhile favors the 40-series and the Arc GPUs.

Test Videos and Log Files

If you'd like to see the original video files, I've created this personally hosted BitTorrent magnet link:

magnet:?xt=urn:btih:df31e425ac4ca36b946f514c070f94283cc07dd3&dn=Tom%27s%20Hardware%20Video%20Encoding%20Tests

UPDATE: This is the updated torrent, with corrected VMAF calculations. It also includes the log files from the initial encodes with incorrect VMAF, but those files have the FPS results for the encoding times.

In the torrent download, each GPU also has two log files. "Encodes-FPS-WrongVMAF" text files are the logs from the initial encoding tests, with the backward VMAF calculations. "Encodes-CorrectVMAF" files are only the corrected VMAF calculations (with placeholder "—" values for GPUs that don't support AV1 encoding, to make our lives a bit easier when it comes to importing the data used for generating the charts). For the curious, you can see the before and after VMAF results as well as the encoding speed FPS values by issuing the following commands from a command line in the appropriate folder:

for /f "usebackq tokens=*" %i in (`dir /b *Encodes*.txt`) do type "%i" | find /i "vmaf score"

for /f "usebackq tokens=*" %i in (`dir /b *Encodes*.txt`) do type "%i" | find /i "fps=" | find /v "N/A"

If you'd like to do your own testing, the above BitTorrent magnet link will be kept active over the coming months; join and share if you can, as this is seeding from my own home network and I'm limited to 20Mbps upstream. If you previously downloaded my Arc A380 testing, note that nearly all of the files are completely new as I recorded new videos at a higher 100Mbps bitrate for these tests. I believe I also swapped the VMAF comparisons in that review, so these new results take precedence.
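For readers who aren't on Windows, the two `for /f` filters earlier in this section can be approximated in Python. This is only a sketch: it assumes the logs contain lines such as `VMAF score: 93.25` and ffmpeg progress lines with `fps=` values, and it matches the same `*Encodes*.txt` file name pattern.

```python
import re
from pathlib import Path

# Assumed log format: "... VMAF score: 93.25" (matched case-insensitively).
VMAF_RE = re.compile(r"vmaf score[:=]?\s*([0-9]+(?:\.[0-9]+)?)", re.IGNORECASE)


def vmaf_scores(text: str) -> list[float]:
    """Extract every VMAF score found in a log, in order of appearance."""
    return [float(match.group(1)) for match in VMAF_RE.finditer(text)]


def fps_lines(text: str) -> list[str]:
    """Keep lines containing 'fps=' (any case) and drop those containing 'N/A'."""
    return [line for line in text.splitlines()
            if "fps=" in line.lower() and "N/A" not in line]


# Walk the log files in the current folder, as the cmd commands do.
for path in sorted(Path(".").glob("*Encodes*.txt")):
    log_text = path.read_text(errors="replace")
    print(path.name, vmaf_scores(log_text))
    for line in fps_lines(log_text):
        print("  ", line)
```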

The files are all spread across folders, so you can download just the stuff you want if you're not interested in the entire collection. And if you do want to enjoy all 350+ videos for whatever reason, it's a 16.4GiB download. Cheers!

For those that don't want to download all of the files, I'll provide a limited set of screen captures from the Borderlands 3 1080p and 4K videos for reference below. I'm only going to do screens from recent GPUs, as otherwise this gets to be very time consuming.

Video Encoding Image Quality Screenshots

1080p Source 38Mbps

AMD RDNA3 1080p H.264 3Mbps

AMD RDNA3 1080p HEVC 3Mbps

AMD RDNA3 1080p AV1 3Mbps

Intel Arc 1080p H.264 3Mbps

Intel Arc 1080p HEVC 3Mbps

Intel Arc 1080p AV1 3Mbps

Nvidia Ada Lovelace 1080p H.264 3Mbps

Nvidia Ada Lovelace 1080p HEVC 3Mbps

Nvidia Ada Lovelace 1080p AV1 3Mbps

CPU 13900K 1080p H.264 3Mbps

CPU 13900K 1080p HEVC 3Mbps

CPU 13900K 1080p AV1 3Mbps

4K Source 50Mbps

AMD RDNA3 4K H.264 8Mbps

AMD RDNA3 4K HEVC 8Mbps

AMD RDNA3 4K AV1 8Mbps

Intel Arc 4K H.264 8Mbps

Intel Arc 4K HEVC 8Mbps

Intel Arc 4K AV1 8Mbps

Nvidia Ada Lovelace 4K H.264 8Mbps

Nvidia Ada Lovelace 4K HEVC 8Mbps

Nvidia Ada Lovelace 4K AV1 8Mbps

CPU 13900K 4K H.264 8Mbps

CPU 13900K 4K HEVC 8Mbps

CPU 13900K 4K AV1 8Mbps

The above gallery shows screengrabs for just one frame from the AMD RX 7900 XTX, Intel Arc A770, Nvidia RTX 4090, and CPU encodes on the Core i9-13900K. Of course a single frame isn't the point, and there can be differences in where key frames are located, but that's why we used the "-g 120" option so that, in theory, all of the encoders are putting key frames at the same spot. Anyway, if you want to browse through them, we recommend viewing the full images in separate tabs.

We've only elected to do screen grabs for the lowest bitrates, and that alone took plenty of time. You can at least get some sense of what the encoded videos look like, but the VMAF scores arguably provide a better guide as to what the overall experience of watching a stream using the specified encoder would look like.

Because we're only doing the lowest bitrates from the selected resolutions, note that the VMAF results range from "poor" to "fair" — the higher bitrate videos definitely look much better than what we're showing here.

Video Encoding Showdown: Closing Thoughts

After running and rerunning the various encodes multiple times, we've done our best to try and level the playing field, but it's still quite bumpy in places. Maybe there are some options that can improve quality without sacrificing speed that we're not familiar with, but if you're not planning on putting a ton of time into figuring such things out, these results should provide a good baseline of what to expect.

For all the hype about AV1 encoding, in practice it really doesn't look or feel that different from HEVC. The only real advantage is that AV1 is supposed to be royalty free (there are some lawsuits in progress contesting this), but if you're archiving your own movies you can certainly stick with using HEVC and you won't miss out on much if anything. Maybe AV1 will take over going forward, just like H.264 became the de facto video standard for the past decade or more. Certainly it has some big names behind it, and now all three of the major PC GPU manufacturers have accelerated encoding support.

From an overall quality and performance perspective, Nvidia's latest Ada Lovelace NVENC hardware comes out as the winner with AV1 as the codec of choice, but right now it's only available with GPUs that start at $799 — for the RTX 4070 Ti. It should eventually arrive in 4060 and 4050 variants, and those are already shipping in laptops, though there's an important caveat: the 40-series cards with 12GB or more VRAM have dual NVENC blocks, while the other models will only have a single encoder block, which could mean about half the performance compared to our tests here.

Right behind Nvidia in terms of quality and performance, at least as far as video encoding is concerned, Intel's Arc GPUs are also great for streaming purposes. They actually have higher quality results with HEVC than AV1, basically matching Nvidia's 40-series. You can also use them for archiving, sure, but that's likely not the key draw. Nvidia definitely supports more options for tuning, however, and seems to be getting more software support as well.

AMD's GPUs meanwhile continue to lag behind their competition. The RDNA 3-based RX 7900 cards deliver the highest-quality encodes we've seen from an AMD GPU to date, but that's not saying a lot. In fact, at least with the current version of ffmpeg, quality and performance are about on par with what you could get from a GTX 10-series GPU back in 2016 — except without AV1 support, naturally, since that wasn't a thing back then.

We suspect very few people are going to buy a graphics card purely for its video encoding prowess, so check our GPU benchmarks and our Stable Diffusion tests to see how the various cards stack up in other areas. Next up, we need to run updated numbers for Stable Diffusion, and we're looking at some other AI benchmarks, but that's a story for another day.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

-

PlaneInTheSky AMD expected ppl to buy expensive threadripper CPU for streaming. That was literally one of their promotion slides.

But x86 CPU suck at parallelisation.

So everyone went with Nvidia's NVENC and CUDA instead. Rightfully so.

To this day, AMD sucks at GPU encoding and decoding. You're lucky if your whole encode isn't a corrupted file. -

-Fran- Thanks for the data!

It would've been nice to add a CPU-based encoded video for reference and see how any of the fixed-function pipelines of the GPUs compare. EDIT: I'm dumb as always missing something in the graphs; there's the 13900K, so nice one!

I've been using software AV1 with the CPU on "fast" and it beats anything on the GPU. It's kind of hilarious. And I "only" have a 5800X3D. Newer CPUs should perform even better; specially CPUs with even more cores.

Video

ID : 1

Format : AV1

Format/Info : AOMedia Video 1

Format profile : Main@L4.1

Codec ID : V_AV1

Duration : 4 min 2 s

Width : 1 920 pixels

Height : 1 080 pixels

Display aspect ratio : 16:9

Frame rate mode : Constant

Frame rate : 60.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 8 bits

Default : Yes

Forced : No

Color range : Limited

Color primaries : BT.709

Transfer characteristics : BT.709

Matrix coefficients : BT.709

That's just the video general settings as described by the encoder. Also, I only use OBS as it is the practical and realistic software to use.

Regards. -

springhalo Great article! Looks like you put a ton of work into making a fair comparison. I'm impressed the CPU encoding was able to hold its own against dedicated silicon, even if it uses much more power. Which CPU encoder did you use?

I'm happy recording NVENC_HEVC 720p 20Mbps and re-encoding 20-second clips at 3Mbps for sharing on discord. Looking at these results seems like I can decrease that further to 10Mbps. Cheers! -

COCOViper

Admin said: We tested multiple generations of AMD, Intel, and Nvidia GPUs to look at both encoding performance and quality. Here's how the various cards stack up.

Video Encoding Tested: AMD GPUs Still Lag Behind Nvidia, Intel : Read more

Would you also be willing to run an x264 encode at the "very slow" preset with tune film (or grain) to see how quality compares? For those of us doing encodes not for streaming but for archival purposes, it's always good to stay abreast of how all the encoders are performing from a quality-first perspective. -
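The kind of archival x264 encode requested above could be sketched as an FFmpeg command line along these lines. This is only an illustration, not the article's test setup: the file names and the helper `x264_archive_cmd` are hypothetical, and the CRF value of 18 is an assumed quality target (the article's tests used fixed bitrates instead).

```python
import shlex


def x264_archive_cmd(src: str, dst: str, tune: str = "film", crf: int = 18) -> list[str]:
    """Build an FFmpeg argument list for a slow, quality-first libx264 encode."""
    if tune not in ("film", "grain"):
        raise ValueError("this sketch only handles the two tunes mentioned above")
    return [
        "ffmpeg", "-i", src,
        "-c:v", "libx264",
        "-preset", "veryslow",  # slowest common preset: more CPU time per frame
        "-tune", tune,          # film or grain, as suggested in the comment
        "-crf", str(crf),       # assumed constant-quality target, not from the article
        "-c:a", "copy",         # pass the audio stream through untouched
        dst,
    ]


# Print the command instead of running it; input.mkv and archive.mkv are placeholders.
print(shlex.join(x264_archive_cmd("input.mkv", "archive.mkv")))
```

To compare against the hardware encoders on equal terms, the `-crf` option would need to be swapped for a target bitrate matching the ones in the charts.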

bit_user Thanks for the tests, @JarredWaltonGPU!

Overall, closer than I would've expected. Nice to see AMD pull out some wins on 4K encoding performance, but it doesn't mean very much if the quality isn't there.

Also, I'm pleased to see Intel doing so well. I suspected a significant number of early Intel GPU adopters were buying them for encoding. -

bit_user

-Fran- said: It would've been nice to add a CPU-based encoded video for reference and see how any of the fixed-function pipelines of the GPUs compare. EDIT: I'm dumb as always missing something in the graphs; there's the 13900K, so nice one!

Looking at the charts, I assumed the "CPU" was still using its Quicksync Video hardware encoder, but I see Jarred says it's using a pure software path. Very impressive!

Would've been cool to use a Ryzen 7950X and remove all doubt about whether any hardware assist was at play, but I trust Jarred to know what he's doing. -

DavidLejdar Thank you for the effort! I don't stream, but I am doing a video series of 3D open-world games on YT for fun, recorded at 1440p. One concern was keeping the file size from getting too huge while keeping the full-screen end result as crisp as the source screengrabs show, or at least as in the screengrab below (from the video), without the easily visible blur seen in the grass in the other screengrabs, which can also occur with, e.g., the faces and clothing of NPCs.

In that regard it is nice to see that I likely wouldn't be able to push the bitrate down much with another GPU (instead of the RX 6700 XT here) for the same end quality, as the video encoding quality seems to level out beyond a certain point for all the (newer) GPUs. But it's also good to know that hardware can make a visible difference when going with a lower bitrate, such as for streaming.

-

-Fran-

bit_user said: Looking at the charts, I assumed the "CPU" was still using its Quicksync Video hardware encoder, but I see Jarred says it's using a pure software path. Very impressive!

Would've been cool to use a Ryzen 7950X and remove all doubt about whether any hardware assist was at play, but I trust Jarred to know what he's doing.

The 7950X does have an iGPU, but I wonder if it has the fixed-hardware encoder in it?

The APUs all have the full-fledged VCE built in at least, but I'm not 100% sure the iGPU in the Ry7K does. The Wiki does say they have VCN 3.1, so RDNA1 and 2 encoding engines.

Regards. -

bit_user

-Fran- said: The 7950X does have an iGPU, but I wonder if it has the fixed-hardware encoder in it?

Not sure, but I'm assuming it's low-performance enough that we'd notice.

Perhaps the best option would be to benchmark both the iGPU and pure software path. As long as they perform differently, we can be reasonably certain the software path really isn't using the iGPU. -

deesider

DavidLejdar said: Thank you for the effort! I don't stream, but I am doing a video series of 3D open-world games on YT for fun, recorded at 1440p. And a concern was to not have a file size too huge, while having the full-screen end result as crisp as the source screengrabs show or at least as in the screengrab below (from the video), without an easily visible blur as can be seen with the grass in the other screengrabs, which can also occur e.g. with the faces and clothing of NPCs.

In that regard it is nice to see that I likely wouldn't be able to push the bitrate down much with another GPU (instead of the RX 6700 XT here) for the same end quality, as the video encoding quality seems to level out beyond a certain point for all the (newer) GPUs. But also good to know that hardware can make a visible difference when going with a lower bitrate, such as for the streaming.

When you upload to YouTube the video is re-encoded. Given the tiny file size that results, the encoder that YouTube uses is the best you can get. It's great for minimising file sizes for portability, like presentations. You just upload to YouTube, wait for re-encoding, then download again without publishing the video.

But since it will always be re-encoded, for maximum quality, you are better off uploading the uncompressed video (if you are able).