Tom's Hardware Verdict

The Intel Arc A770 LE delivers a compelling midrange option for anyone willing to take a chance on continued driver support. You get 16GB of VRAM, an attractive and understated design, and performance that easily rivals the RTX 3060.

Pros

- Great price-to-performance ratio
- Excellent video encoding hardware
- 16GB VRAM, XeSS, AV1 support
- Welcome to the GPU party, Intel!

Cons

- Drivers remain finicky at times
- Arrived far later than initially expected
- Uses more power than the direct competition
- XeSS adoption will be an uphill battle


The Intel Arc A770 graphics card has finally arrived, along with its little brother, the Intel Arc A750. After a rather disappointing Arc A380 review last month, Intel has a lot to prove with the bigger and far more potent A770. And it mostly succeeds! While there are certainly caveats — mostly about drivers, XeSS adoption, and long-term support — Intel clearly wants to prove it can compete with the likes of AMD and Nvidia, perhaps even laying claim to a seat at the table among the best graphics cards.

It's been a long road to Intel re-entering the dedicated graphics card market. It first attempted to make a dedicated graphics card with the i740 back in the late 90s (that would be "Gen1 Graphics" if you're wondering) before giving up. Larrabee was another attempt at a potential GPU, but it got mired in internal politics. Now, despite claims of Arc being dead in the water, we have cards in hand and can finally see what Intel's best-ever GPU brings to the table.

How does Arc A770 stack up against the AMD and Nvidia competition? Is XeSS a true DLSS competitor, and what about all the AV1 video hype? Could Intel fix its drivers to the point where it won't be one of the first things people warn you about? We'll look to answer all these questions and more, but let's start with the high-level overview.

Arc Alchemist Architecture Recap

We've had plenty of details on Intel's Arc Alchemist architecture, which we've known about since last year. We now have final clocks and specs, as well as pricing, but it's worth revisiting the road Intel has traveled. The first time we heard about Arc GPUs, we anticipated a late 2021 or early 2022 launch. By that metric, Intel is nearly a year late to the party — blame Covid, supply chain issues, and even the Russian invasion of Ukraine if you're looking for reasons.

On one level, Arc Alchemist would be the 13th iteration of Intel Graphics. At the same time, we'd also mark this down as generation one. There are enough major overhauls of the fundamental design, features, and functionality that a card like the A770 has very little in common with Intel's current 12th-Gen integrated Xe Graphics. There are several new additions to the GPU that provide a clear demarcation between the pre-Arc and the post-Arc world of Intel GPUs.

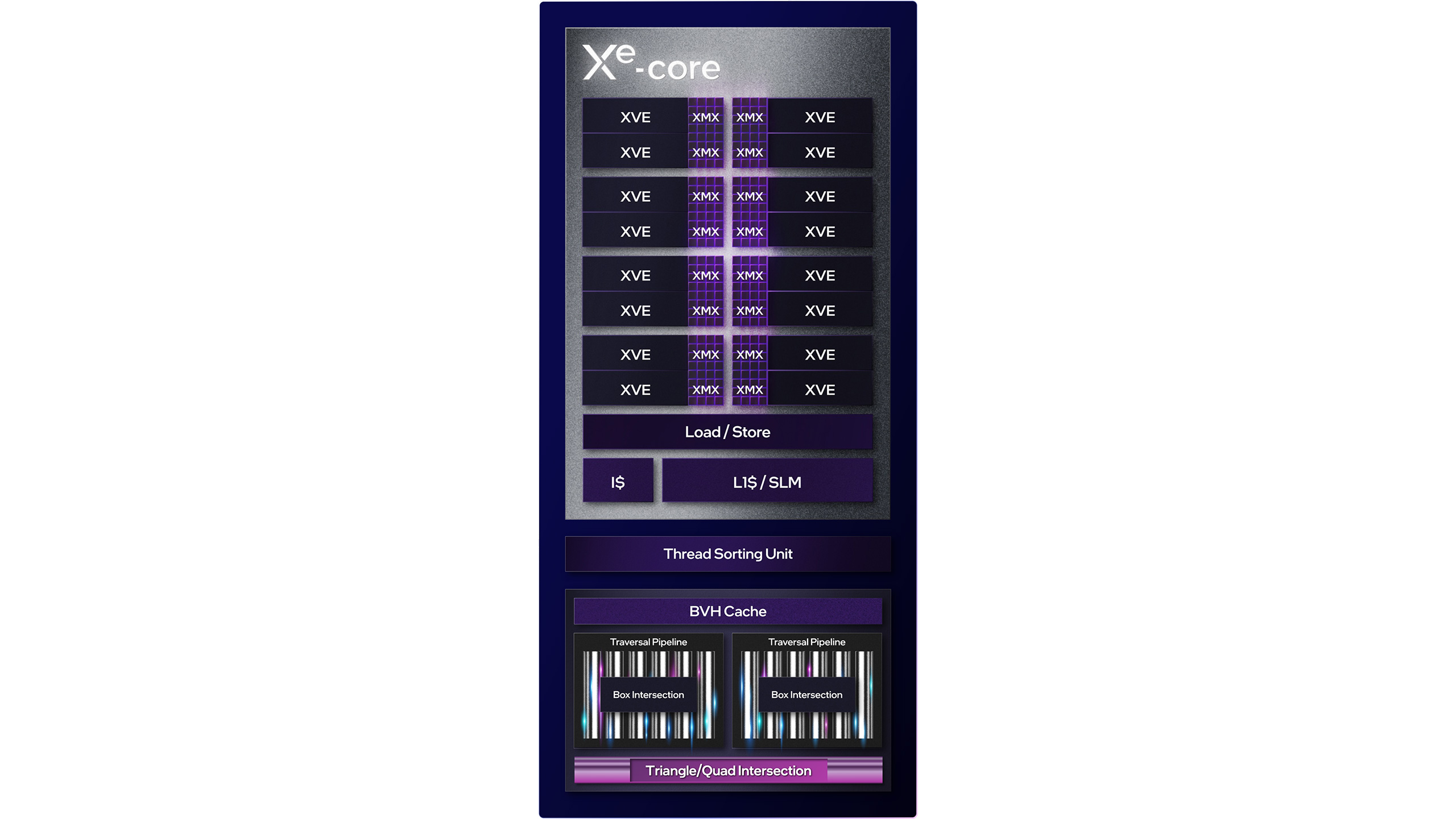

Starting with the main building block, the Xe-Core, Intel is apparently waffling a bit on naming conventions. The "Xe Vector Engines (XVE)" are still referred to as "Execution Units (EUs)" at times — a rose by any other name would be as fast, I suppose. Intel groups 16 XVEs into a single Xe-Core, which also includes other functionality like the Xe Matrix Engines (XMX) blocks, Load/Store unit, and L1 cache. Attached to each Xe-Core (but not directly a part of it) are the Thread Sorting Unit (TSU), BVH Cache (for ray tracing), and the other ray tracing hardware for BVH ray/box intersection and ray/triangle intersection.

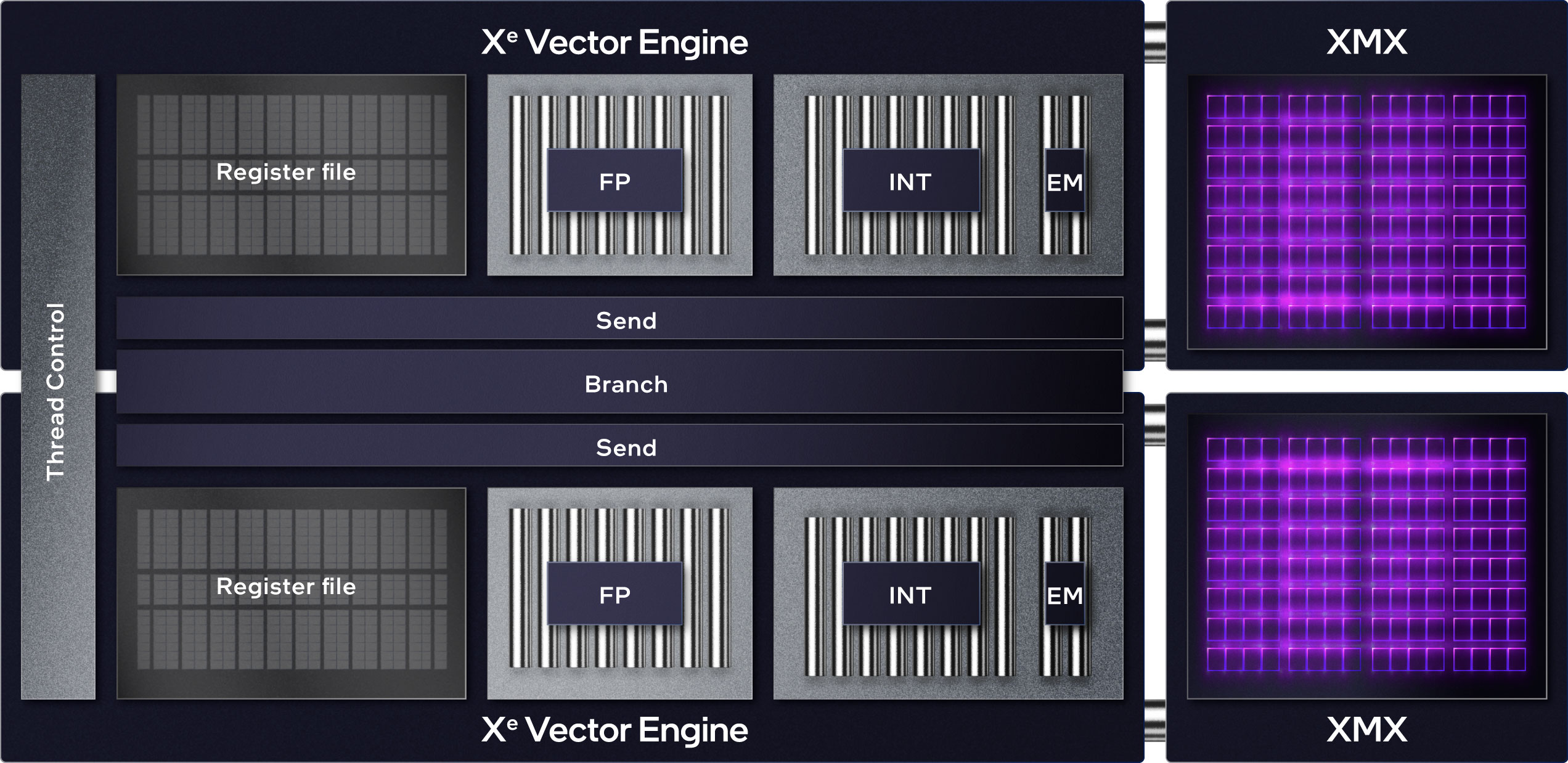

Each XVE can compute eight FP32 operations per cycle. That gets loosely translated into "GPU cores," though we prefer to call them "GPU shaders," and each is roughly (very roughly!) equivalent to an AMD or Nvidia shader. Each Xe-Core thus has 128 shader cores and sort of maps to an (upcoming) AMD RDNA 3 Compute Unit (CU) or an Nvidia Streaming Multiprocessor (SM) — both of which will also have 128 GPU shaders. They're all SIMD (single instruction multiple data) designs, and Arc Alchemist has enhanced the shaders to meet the full DirectX 12 Ultimate feature set.
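
The shader math is easy to check by hand, but a few lines of Python make the relationship explicit. This is only a sketch: the constant and function names are ours rather than Intel terminology, and the Xe-Core counts are the ones listed in the specifications table.

```python
# Illustrative arithmetic only; the names here are hypothetical, not Intel's.
XVES_PER_XE_CORE = 16   # Intel groups 16 XVEs into a single Xe-Core
FP32_OPS_PER_XVE = 8    # each XVE computes eight FP32 operations per cycle


def shaders_per_xe_core() -> int:
    """GPU shaders (FP32 lanes) in one Xe-Core."""
    return XVES_PER_XE_CORE * FP32_OPS_PER_XVE


def total_shaders(xe_cores: int) -> int:
    """GPU shaders for a chip with the given number of enabled Xe-Cores."""
    return xe_cores * shaders_per_xe_core()


if __name__ == "__main__":
    for name, xe_cores in [("A770", 32), ("A750", 28), ("A580", 24), ("A380", 8)]:
        print(f"{name}: {total_shaders(xe_cores)} GPU shaders")
```

The results line up with the "GPU Shaders" row of the table, which is all this is meant to show; it says nothing about how the shaders perform in practice.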

The XVEs also have additional functionality, like INT and EM support. There are a lot of memory address calculations in graphics workloads, which is where the INT functionality often helps, though such things can also be used for cryptographic hashing. EM stands for "Extended Math" and provides access to more complex stuff like transcendental functions — log, exponent, sine, cosine, etc. The INT execution port is shared with the EM functionality, which tends to be used less frequently than INT and FP calculations.

Meanwhile, the XMX blocks are comparable to Nvidia's Tensor cores. Each XMX unit can handle either FP16/BF16 (16-bit floating point/brain floating point), INT8 (8-bit integer), or INT4/INT2 (4-bit/2-bit integer) data. These blocks are useful with deep learning workloads, including Intel's XeSS (Xe Super Sampling) upscaling algorithm. They can be used in any workload that just needs a lot of lower-precision number crunching, and each XMX block can do either 128 FP16, 256 INT8, or 512 INT4/INT2 operations per clock.
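
To see how those per-clock figures turn into the headline tera-ops numbers, here is a rough sketch. It assumes 16 XMX units per Xe-Core (inferred from the 512 matrix cores on a 32 Xe-Core chip) and uses the boost clocks from the specifications table; the function and constant names are hypothetical.

```python
# Rough peak-throughput sketch; names are illustrative, not an Intel API.
XMX_PER_XE_CORE = 16  # assumption: 512 matrix cores / 32 Xe-Cores on the A770
OPS_PER_CLOCK = {"FP16": 128, "INT8": 256, "INT4": 512}  # per XMX unit


def xmx_tera_ops(xe_cores: int, clock_mhz: float, precision: str) -> float:
    """Theoretical peak XMX throughput in trillions of operations per second."""
    units = xe_cores * XMX_PER_XE_CORE
    ops_per_clock = units * OPS_PER_CLOCK[precision]
    # ops/clock * (MHz -> clocks per microsecond) / 1e6 gives tera-ops per second
    return ops_per_clock * clock_mhz / 1e6


if __name__ == "__main__":
    for precision in ("FP16", "INT8"):
        print(f"A770 {precision}: {xmx_tera_ops(32, 2100, precision):.1f} tera-ops")
```

These are paper numbers at the listed clock. Real workloads such as XeSS will not sustain peak throughput.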

The processor can co-issue instructions to all three execution ports — FP, INT/EM, and XMX — at the same time, and all three execution blocks can be active at the same time. Again, that's loosely similar to Nvidia's architectures, though it's important to note that Nvidia has a shared INT/FP port, so if the INT aspect is active, it cuts the potential FP throughput in half.

Stepping up one level, Intel has what it calls a render slice, which is sort of analogous to Nvidia's Graphics Processing Cluster (GPC). The render slice consists of four Xe-Cores, and then adds texture units ("Sampler" in the above image) and Render Outputs (ROPs, the "Pixel Backend" in the image), plus some other hardware.

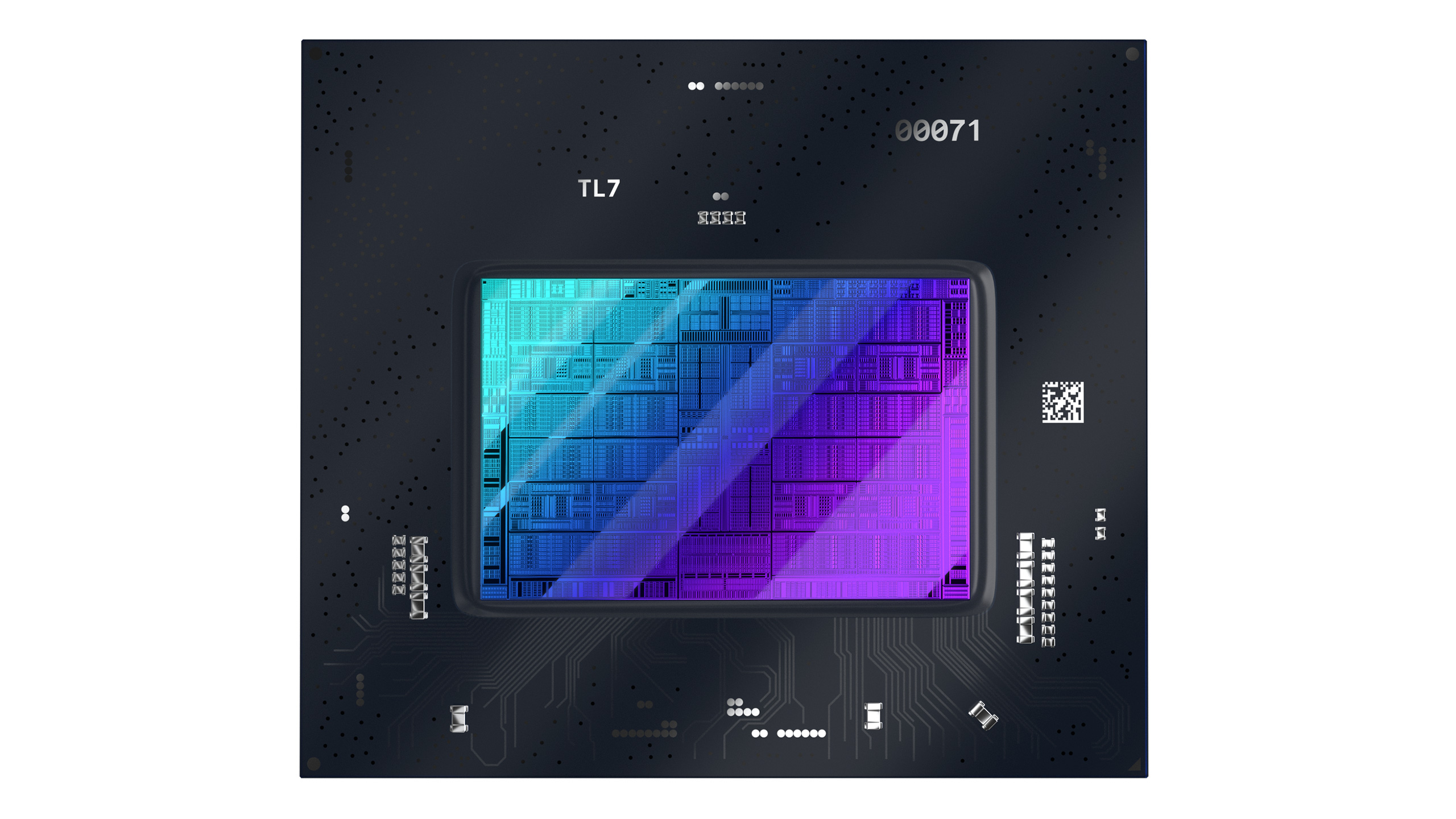

Intel has two main designs with Arc Alchemist: a smaller one with two render slices and up to eight Xe-Cores, and a larger one with up to eight render slices and 32 Xe-Cores. The Arc A770 represents the fully enabled larger design and thus has the full 32 Xe-Cores, while the Arc A380 uses the fully enabled smaller design. Other variants (including mobile models) use partially enabled chips.
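
The hierarchy from render slice to Xe-Core is a simple multiplication, sketched below. The dictionary of die names and the helper are hypothetical, for illustration only, and cover just the fully enabled configurations.

```python
# Fully enabled die configurations; structure and names are illustrative.
XE_CORES_PER_RENDER_SLICE = 4

RENDER_SLICES = {
    "ACM-G10": 8,  # larger die, used in the Arc A770
    "ACM-G11": 2,  # smaller die, used in the Arc A380
}


def max_xe_cores(die: str) -> int:
    """Xe-Core count when every render slice on the die is fully enabled."""
    return RENDER_SLICES[die] * XE_CORES_PER_RENDER_SLICE


if __name__ == "__main__":
    for die in RENDER_SLICES:
        print(f"{die}: up to {max_xe_cores(die)} Xe-Cores")
```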

Here's the full block diagram for the larger ACM-G10 die used in the A770. Along with all the render slices and other functionality, there's the memory fabric linking all of the slices together and a large chunk of L2 cache, up to 16MB in the A770. That might not seem that large, considering Nvidia's new Ada Lovelace AD102 has up to 96MB of L2 cache, but keep in mind the different performance and price levels, plus the fact that Nvidia's soon-to-be-previous-gen GA102 chip (used in the RTX 3090 Ti and other cards) only had up to 6MB of L2 cache.

The block diagram also shows the Xe Media Engine, which handles various video/image codecs, including AV1, VP9, HEVC, and H.264. There are two full media engines (MFX) in both the larger and smaller Arc GPUs. These can work on separate streams or combine their computational power to double their encoding throughput.

On the left of the block diagram — and it's important to note that this is not a representation of the actual chip layout — Intel shows the four display engine blocks, PCI Express link, Copy Engine, and Memory Controllers. The GDDR6 controllers are actually positioned around the outside of the GPU, linking up to the external memory, and the ACM-G10 has up to eight 32-bit memory controllers.

It's not clear if Intel can disable those individually on the larger GPU or if it needs to be done in pairs, though we do know on the smaller ACM-G11 that the three 32-bit controllers can be individually disabled. Meanwhile, the mobile A730M has a 192-bit interface with two of the controllers fused off, while the mobile A550M has a 128-bit interface with four controllers disabled.
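
The bus widths quoted above follow directly from the number of active 32-bit controllers. A minimal sketch, with a hypothetical helper name:

```python
# Memory bus width from active 32-bit GDDR6 controllers (illustrative helper).
CONTROLLER_WIDTH_BITS = 32


def bus_width_bits(total_controllers: int, disabled: int = 0) -> int:
    """Bus width in bits after fusing off `disabled` controllers."""
    if not 0 <= disabled <= total_controllers:
        raise ValueError("disabled must be between 0 and total_controllers")
    return (total_controllers - disabled) * CONTROLLER_WIDTH_BITS


if __name__ == "__main__":
    print("A770 (ACM-G10, all 8 active):", bus_width_bits(8))
    print("A730M (2 disabled):", bus_width_bits(8, disabled=2))
    print("A550M (4 disabled):", bus_width_bits(8, disabled=4))
    print("ACM-G11 (all 3 active):", bus_width_bits(3))
```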

Intel Arc A770 Specifications

With that overview of the architecture out of the way, here are the full specifications for the Arc A770, A750, (upcoming) A580, and A380. Performance potential here is theoretical teraflops / teraops (trillions of operations per second), but remember that not all teraflops and teraops are created equal. We need real-world testing to see what sort of actual performance the architecture can deliver, but we'll get to that soon enough.

| Graphics Card | Arc A770 16GB | Arc A770 8GB | Arc A750 | Arc A580 | Arc A380 |
|---|---|---|---|---|---|
| Architecture | ACM-G10 | ACM-G10 | ACM-G10 | ACM-G10 | ACM-G11 |
| Process Technology | TSMC N6 | TSMC N6 | TSMC N6 | TSMC N6 | TSMC N6 |
| Transistors (Billion) | 21.7 | 21.7 | 21.7 | 21.7 | 7.2 |
| Die size (mm^2) | 406 | 406 | 406 | 406 | 157 |
| Xe-Cores | 32 | 32 | 28 | 24 | 8 |
| GPU Shaders | 4096 | 4096 | 3584 | 3072 | 1024 |
| Matrix Cores | 512 | 512 | 448 | 384 | 128 |
| Ray Tracing Units | 32 | 32 | 28 | 24 | 8 |
| Boost Clock (MHz) | 2100 | 2100 | 2050 | 1700 | 2000 |
| VRAM Speed (Gbps) | 17.5 | 16 | 16 | 16 | 15.5 |
| VRAM (GB) | 16 | 8 | 8 | 8 | 6 |
| VRAM Bus Width (bits) | 256 | 256 | 256 | 256 | 96 |
| L2 Cache (MB) | 16 | 16 | 16 | 16 | 6 |
| ROPs | 128 | 128 | 128 | 128 | 32 |
| TMUs | 256 | 256 | 224 | 192 | 64 |
| TFLOPS FP32 | 17.2 | 17.2 | 14.7 | 10.4 | 4.1 |
| TFLOPS FP16 (INT8) | 138 (275) | 138 (275) | 118 (235) | 84 (167) | 33 (66) |
| Bandwidth (GB/s) | 560 | 512 | 512 | 512 | 186 |
| TDP (watts) | 225 | 225 | 225 | 175 | 75 |
| Launch Date | October 2022 | October 2022 | October 2022 | ? | June 2022 |
| Launch Price | $349 | $329 | $289 | ? | $139 |
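
The theoretical FP32 and bandwidth rows can be derived from the other rows in the table. The sketch below shows the arithmetic, assuming the usual convention of counting a fused multiply-add as two floating-point operations per shader per clock; the `GpuSpec` class is a hypothetical container for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Subset of the spec table needed for the derived rows (illustrative)."""
    name: str
    shaders: int
    boost_mhz: int
    vram_gbps: float
    bus_bits: int

    def fp32_tflops(self) -> float:
        # Assumes 2 FLOPs per shader per clock (one fused multiply-add).
        return self.shaders * 2 * self.boost_mhz / 1e6

    def bandwidth_gbs(self) -> float:
        # Per-pin data rate (Gbps) times bus width (bits), divided by 8 bits per byte.
        return self.vram_gbps * self.bus_bits / 8


CARDS = [
    GpuSpec("Arc A770 16GB", 4096, 2100, 17.5, 256),
    GpuSpec("Arc A750", 3584, 2050, 16.0, 256),
    GpuSpec("Arc A580", 3072, 1700, 16.0, 256),
    GpuSpec("Arc A380", 1024, 2000, 15.5, 96),
]

if __name__ == "__main__":
    for card in CARDS:
        print(f"{card.name}: {card.fp32_tflops():.1f} TFLOPS, "
              f"{card.bandwidth_gbs():.0f} GB/s")
```

As noted above, these are paper figures based on the listed boost clocks, and they are no substitute for real-world testing.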

Intel's Arc A770 looks quite potent on paper, and clearly, Intel is gunning for its share of the midrange market with aggressive pricing on the A750 and A770. Both end up competing against the Nvidia RTX 3060, with the A770 coming at a similar theoretical price, while the A750 clearly undercuts the 3060 — though Nvidia's GPU still tends to sell for closer to $370 at retail. On the AMD side of the fence, things are a bit different. The RX 6700 XT potentially delivers more performance at a higher $400 price point, while the RX 6650 XT still sells for around $300 (give or take).

That's where GPU prices stand right now, at least — and we expect everything to continue to fall in the coming months, though probably not too much further before parts start getting discontinued and replaced by newer models.

In terms of specs and features, Intel's Arc GPUs end up looking a lot more like Nvidia's GPUs than AMD's offerings, with the extra matrix cores and a much bigger emphasis on ray tracing hardware. Intel is also the only GPU company that currently has AV1 and VP9 hardware accelerated video encoding. AMD and Nvidia will add AV1 support to their upcoming RDNA 3 and Ada architectures, but those may not come to the midrange market any time soon. If you're interested in AV1 and don't want to spend more than $350, the Arc A770, A750, and A580 might be your best and only options this side of 2023.

The three midrange Arc cards deliver theoretical compute performance of 10.4 to 17.2 teraflops of FP32. (Note that Intel uses "typical" game clocks, though in our testing we've seen a lot of cases where the clock speed is far higher than the nominal 2.1 GHz listed above.) By comparison, Nvidia's RTX 3060 offers 12.7 teraflops of graphics compute, while AMD's RX 6650 XT sits at 10.8 teraflops. However, we're definitely getting into the realm of apples and oranges, as (spoiler alert!) the RX 6650 XT tends to lead the RTX 3060 by 10–20 percent in traditional gaming benchmarks. Ray tracing games turn the tables, however, with the 3060 beating the 6650 XT by around 25 percent.


Arc's ray tracing capabilities have been a bit difficult to pin down up to now. The A380 did deliver better RT performance than the RX 6500 XT, but that's hardly praiseworthy. With four times the cores and hardware, we're expecting a lot more from the A770 and A750 — and Intel has even shown benchmarks where the A770 clearly beat the RTX 3060 with ray tracing enabled.

That's a nice change of pace from AMD, which to date hasn't done much with ray tracing and tends to downplay its importance. And to be fair, AMD has a point: the visual fidelity gains that come from enabling ray tracing are often far outweighed by the loss of performance. Especially on AMD's GPUs.

Intel's RTUs have dedicated BVH traversal hardware and a BVH cache that reduces memory bottlenecks, and it says each RTU can do up to 12 ray/box BVH intersections per clock, along with one ray/triangle intersection. By comparison, AMD's RDNA 2 GPUs only do up to four ray/box intersections per clock, and that's done using enhanced texture units, which means the hardware gets shared and ends up with some resource contention.

Nvidia's RT cores are more like a black box that Nvidia doesn't want to discuss in too much detail. Turing could do one ray/triangle intersection per clock, and an indeterminate number of ray/box intersections. Nvidia apparently determined the BVH side of things was running ahead of the ray/triangle hardware, so in Ampere, it added a second ray/triangle intersection unit per RT core. Looking forward, Ada doubles the ray/triangle throughput and includes other BVH enhancements, and it seems unlikely that any contemporary GPUs will match Ada in ray tracing performance. But Intel does seem to be at least relatively competitive with Ampere in terms of RT capabilities.

The A770 maxes out at 32 RTUs, which is more than the RTX 3060's 28 RT cores but less than the RTX 3060 Ti's 38 RT cores. Meanwhile, AMD's RX 6650 XT has 32 ray accelerators, while the RX 6700 XT has 40 ray accelerators… but again, AMD's ray tracing hardware appears to be the weakest of the three GPU vendors. Check our ray tracing performance results a few pages on to see where the chips fall in real-world testing.
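
For a sense of scale, the quoted per-unit rates can be multiplied out to chip-wide ray/box tests per clock. This is a paper comparison only: it ignores clock speeds, the texture-unit sharing on RDNA 2, and everything else that determines real performance. Nvidia is left out because its ray/box rate is not disclosed. All names in the sketch are hypothetical.

```python
# Peak ray/box intersection tests per clock, per the figures quoted above.
RAY_BOX_PER_UNIT = {
    "Intel RTU": 12,
    "AMD RDNA 2 ray accelerator": 4,
}

# (card, unit type, unit count) taken from the text
CARDS = [
    ("Arc A770", "Intel RTU", 32),
    ("RX 6650 XT", "AMD RDNA 2 ray accelerator", 32),
    ("RX 6700 XT", "AMD RDNA 2 ray accelerator", 40),
]


def ray_box_per_clock(unit_type: str, units: int) -> int:
    """Chip-wide peak ray/box tests per clock for the given unit count."""
    return RAY_BOX_PER_UNIT[unit_type] * units


if __name__ == "__main__":
    for card, unit_type, units in CARDS:
        print(f"{card}: {ray_box_per_clock(unit_type, units)} ray/box tests per clock")
```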


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

-

ingtar33 When Intel can deliver drivers that don't crash simply opening a game I might be interested; however, until the software side is figured out (something Intel hasn't done yet in 20+ years of graphics drivers) I simply can't take this seriously.

-

edzieba If you're getting a headache with all the nebulously-pronounceable Xe-ness (Xe-cores, Xe Matrix Engines, Xe kitchen sink...) imagine it is pronounced "Ze" in a thick Hollywood-German accent. Much more enjoyable.

-

I'd like to see benchmarks capped at 60fps. Not everyone uses a high refresh rate monitor, and today, when electricity is expensive and most likely will be even more so in the near future, I'd like to see how much power a GPU draws when not trying to run the game as fast as possible.

-

AndrewJacksonZA FINALLY, Intel is back! Or at least, halfway back. It's good seeing them compete in this midrange, and I hope that they flourish into the future.

And I really want an A770, my i740 is feeling lonely in my collection. ;-)

-

AndrewJacksonZA

edzieba said: If you're getting a headache with all the nebulously-pronounceable Xe-ness (Xe-cores, Xe Matrix Engines, Xe kitchen sink...) imagine it is pronounced "Ze" in a thick Hollywood-German accent. Much more enjoyable.

The "EKS-E" makes it sound cool!

-

-Fran- Limited Edition? More like DoA Edition...

Still, I'll get one. We need a strong 3rd player in the market.

I hope AV1 enc/dec works! x'D!

EDIT: A few things I forgot to mention... I love the design of it. It's a really nice looking card and I definitely appreciate the 2 slot, not obnoxiously tall height as well. And I hope they can work as secondary cards in a system without many driver issues... I hope... I doubt many have tested these as secondary cards.

Regards.

-

LolaGT The hardware is impressive. It looks the part, in fact that looks elegant powered up.

It does look like they are trying to push out fixes, unfortunately when you are swamped with working on fixes optimization takes a back seat. The fact that they have pushed out quite a few driver updates shows they are spending resources on that and if they keep at that.....we'll see.

-

AndrewJacksonZA

-Fran- said: I hope AV1 enc/dec works!

Same. I have a BOATLOAD of media that I want to convert and rip to AV1, and my i7-6700 non-K feels sloooooowwww, lol.

-

rluker5 Looks like this card works best for those that want to max out their graphics settings at 60 fps. Definitely lagging the other two in driver CPU assistance.

And a bit of unfortunate timing given the market discounts in AMD GPU prices. The 6600XT for example launched at AMD's intended price of $379. The A770 likely had its price reduced to account for this, but the more competitors you have, generally the more competition you will have.

I wonder how many games the A770 will run at 4k60 medium settings but high textures? That's what I generally play at, even with my 3080 since the loss in visual quality is worth it to reduce fan noise.