Tom's Hardware Verdict

The RTX 4070 Ti marks the third entry in the Ada Lovelace lineup, dropping the price of entry to an almost palatable $799. Raw performance is basically on par with the RTX 3090, but with half the memory and lower power consumption, while DLSS 3 hopes to make the performance picture a bit rosier.

Pros

- Big jump in generational performance
- Very efficient graphics card
- DLSS 3 may yet prove useful
- Good ray tracing hardware

Cons

- Big jump in generational pricing
- DLSS 3 increases latency
- Mostly pointless 16-pin power connector

Nvidia's GeForce RTX 4070 Ti may rank as the worst kept "secret" of the past few decades of graphics cards. Originally revealed back in September as the RTX 4080 12GB, which Nvidia "unlaunched" in October, the core specs and hardware didn't change; only the price and name are new. Following in the footsteps of the more potent RTX 4090 and RTX 4080, the cards officially go on sale tomorrow, January 5, 2023. Reviews can go up a day early, though, so potential buyers at least get some advice before taking the plunge.

Will the RTX 4070 Ti rank among the best graphics cards? That's a tough call, which we'll get into more during this review. What we can say is that, based on our GPU benchmarks hierarchy testing, it's faster than most of the 30-series offerings and basically ties the RTX 3090, at a lower price point. That's the good news. The bad news is that this is by far the most expensive xx70-class graphics card Nvidia has ever released. We've gone from $329 on a GTX 970 to $379 on the GTX 1070, then $499 for the RTX 2070 and 2070 Super, $499 for the RTX 3070 as well with $599 for the 3070 Ti, and now we're looking at $799 for an RTX 4070 Ti.
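To put the xx70-class pricing in perspective, here is a minimal Python sketch that computes the step-to-step increase between the launch prices quoted above. The prices come from the text; the names `XX70_LAUNCH_PRICES`, `percent_change`, and `price_steps` are illustrative, and the figures are nominal dollars with no inflation adjustment.

```python
# Launch prices (USD) as quoted in the paragraph above; nominal, not inflation adjusted.
XX70_LAUNCH_PRICES = [
    ("GTX 970", 329),
    ("GTX 1070", 379),
    ("RTX 2070", 499),
    ("RTX 3070", 499),
    ("RTX 3070 Ti", 599),
    ("RTX 4070 Ti", 799),
]


def percent_change(old: float, new: float) -> float:
    """Return the percentage change going from old to new."""
    return (new - old) / old * 100.0


def price_steps(prices):
    """Yield (previous card, next card, percent change) for consecutive entries."""
    for (prev_name, prev_price), (name, price) in zip(prices, prices[1:]):
        yield prev_name, name, percent_change(prev_price, price)


if __name__ == "__main__":
    for prev_name, name, change in price_steps(XX70_LAUNCH_PRICES):
        print(f"{prev_name} -> {name}: {change:+.1f}%")
```

Going from the $599 RTX 3070 Ti to the $799 RTX 4070 Ti works out to roughly a 33% increase, and the full span from the $329 GTX 970 to $799 is about 143%.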

Put that way, what we're really talking about feels a lot more like a replacement for the RTX 3080 12GB. Nvidia never gave that an official MSRP, but we briefly saw prices dip into the $700–$800 range before supply apparently dried up. Absent the cryptocurrency boom of 2020–2022, the 3080 12GB and 3080 Ti probably would have landed in the $800–$900 range. Now Ethereum has gone proof of stake and cryptocurrency mining profits are in the toilet, but it seems we're still looking at mining-inflated graphics card prices.

There are alternative ways of looking at things as well. We don't have hard numbers on how many cards have been sold, but all of the RTX 4090 cards at retail are still selling for over $2,000. RTX 4080 cards meanwhile seem to be relatively available at close to their base $1,200 price, but none of them are actually selling at or below that price point. The same goes for AMD's new RX 7900 XTX and RX 7900 XT, the latter of which can be found for $899 while the former is mostly sold out and often costs $1,200 or more.

In other words, there's clearly enough demand from people with deep pockets that the idea of newer, faster graphics cards at lower prices is a fantasy we're not going to experience. We've heard some speculation that RTX 4090 prices in particular are greatly inflated due to demand from the professional sector: if all you want is a number crunching monster for AI research, the 4090 at $2,000 is actually a great deal compared to $5,000–$10,000 for actual professional cards that may not even be as fast! But how far down the stack will that go before such professionals simply opt for a faster card?

Hopefully not down to the $800 range, and equally hopefully we won't get scalpers trying to buy up every card and then resell them on eBay. But we probably need a lot more than hope for either of those to be likely.

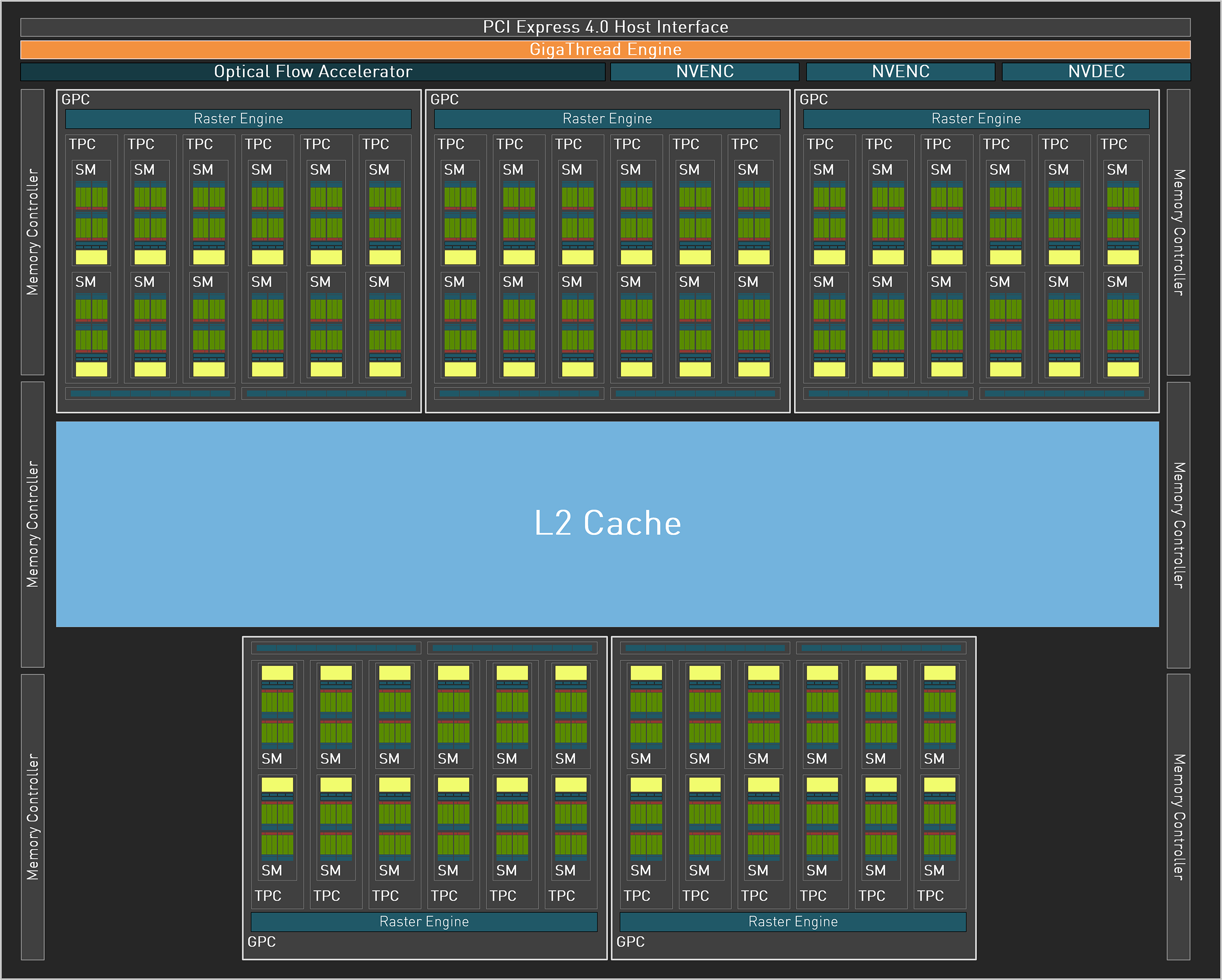

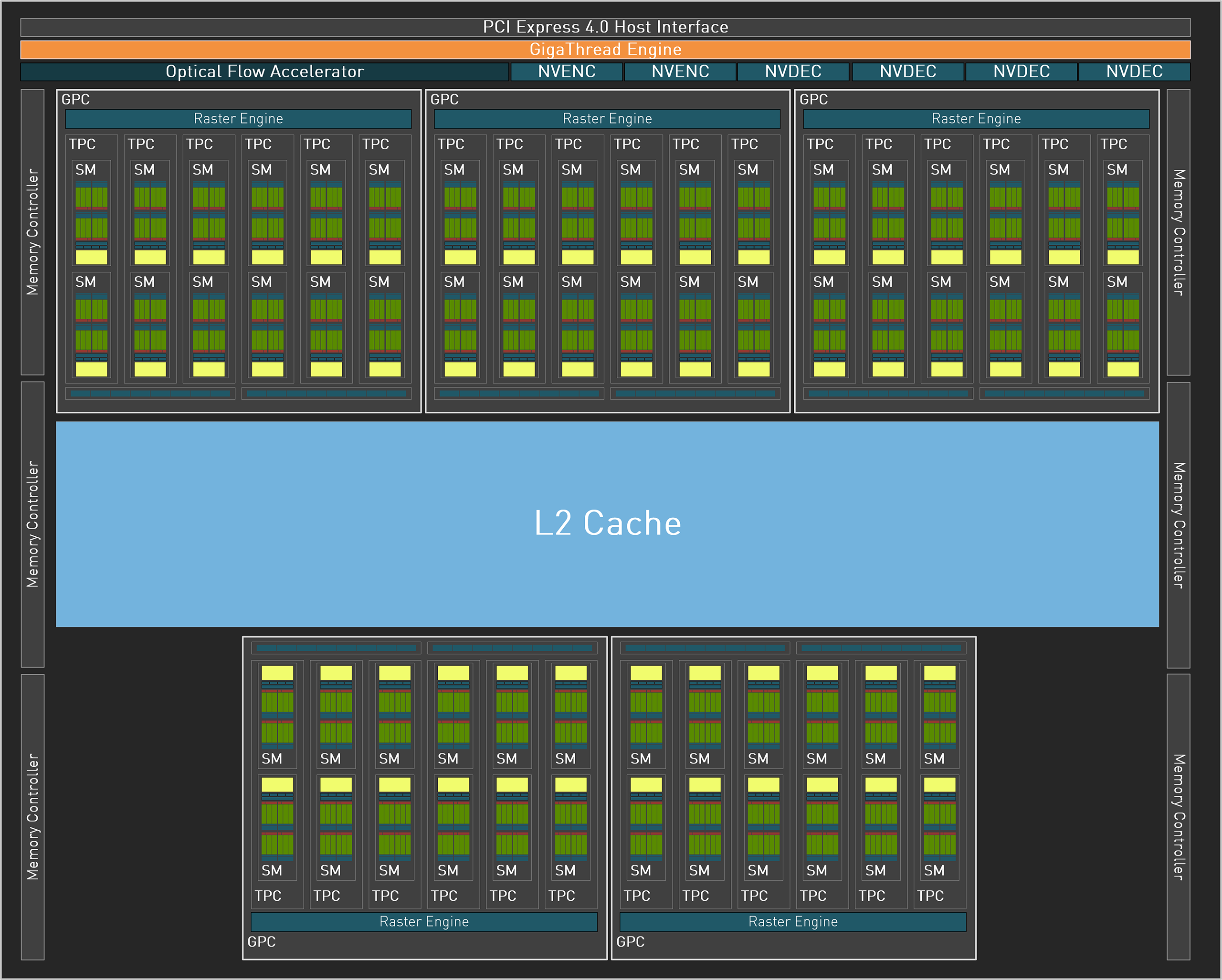

We've previously covered Nvidia's Ada Lovelace architecture, so the only real question now is how performance scales down to the AD104 GPU with fewer shaders, less memory, less cache, a narrower memory interface, etc. Leaked details from the rebadged RTX 4080 12GB suggest it will be around 20–25 percent slower than the RTX 4080… which puts it in RTX 3090 territory. Here's a look at the specifications for the new and previous generation Nvidia cards, alongside AMD's new RX 7900 offerings.

| Graphics Card | RTX 4070 Ti | RTX 4090 | RTX 4080 | RTX 3090 Ti | RTX 3080 Ti | RTX 3080 | RTX 3070 Ti | RX 7900 XTX | RX 7900 XT |
|---|---|---|---|---|---|---|---|---|---|
| Architecture | AD104 | AD102 | AD103 | GA102 | GA102 | GA102 | GA104 | Navi 31 | Navi 31 |
| Process Technology | TSMC 4N | TSMC 4N | TSMC 4N | Samsung 8N | Samsung 8N | Samsung 8N | Samsung 8N | TSMC N5 + N6 | TSMC N5 + N6 |
| Transistors (Billion) | 35.8 | 76.3 | 45.9 | 28.3 | 28.3 | 28.3 | 17.4 | 45.6 + 6x 2.05 | 45.6 + 5x 2.05 |
| Die size (mm^2) | 294.5 | 608.4 | 378.6 | 628.4 | 628.4 | 628.4 | 392.5 | 300 + 222 | 300 + 185 |
| SMs | 60 | 128 | 76 | 84 | 80 | 68 | 48 | 96 | 84 |
| GPU Shaders | 7680 | 16384 | 9728 | 10752 | 10240 | 8704 | 6144 | 12288 | 10752 |
| Tensor Cores | 240 | 512 | 304 | 336 | 320 | 272 | 192 | N/A | N/A |
| Ray Tracing "Cores" | 60 | 128 | 76 | 84 | 80 | 68 | 48 | 96 | 84 |
| Boost Clock (MHz) | 2610 | 2520 | 2505 | 1860 | 1665 | 1710 | 1765 | 2500 | 2400 |
| VRAM Speed (Gbps) | 21 | 21 | 22.4 | 21 | 19 | 19 | 19 | 20 | 20 |
| VRAM (GB) | 12 | 24 | 16 | 24 | 12 | 10 | 8 | 24 | 20 |
| VRAM Bus Width (bits) | 192 | 384 | 256 | 384 | 384 | 320 | 256 | 384 | 320 |
| L2 Cache (MB) | 48 | 72 | 64 | 6 | 6 | 5 | 4 | 96 | 80 |
| ROPs | 80 | 176 | 112 | 112 | 112 | 96 | 96 | 192 | 192 |
| TMUs | 240 | 512 | 304 | 336 | 320 | 272 | 192 | 384 | 336 |
| TFLOPS FP32 | 40.1 | 82.6 | 48.7 | 40 | 34.1 | 29.8 | 21.7 | 61.4 | 51.6 |
| TFLOPS FP16 (FP8/INT8) | 321 (641) | 661 (1321) | 390 (780) | 160 (320) | 136 (273) | 119 (238) | 87 (174) | 123 (123) | 103 (103) |
| Bandwidth (GBps) | 504 | 1008 | 717 | 1008 | 912 | 760 | 608 | 960 | 800 |
| TBP (watts) | 285 | 450 | 320 | 450 | 350 | 320 | 290 | 355 | 300 |
| Launch Date | Jan-23 | Oct-22 | Nov-22 | Mar-22 | Jun-21 | Sep-20 | Jun-21 | Dec-22 | Dec-22 |
| Launch Price | $799 | $1,599 | $1,199 | $1,999 | $1,199 | $699 | $599 | $999 | $899 |
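The FP32 and bandwidth rows can be reproduced from the other rows. Here is a rough sketch, assuming the usual convention of two FLOPs (one fused multiply-add) per shader per clock at the rated boost clock, and bandwidth as bus width times per-pin data rate divided by eight. The function names and the `CARDS` layout are hypothetical, and these are theoretical peaks rather than measured performance.

```python
def fp32_tflops(shaders: int, boost_mhz: float) -> float:
    """Peak FP32 TFLOPS, assuming 2 FLOPs (one FMA) per shader per clock."""
    return shaders * 2 * boost_mhz * 1e6 / 1e12


def bandwidth_gbps(bus_width_bits: int, vram_speed_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width times per-pin rate, bits to bytes."""
    return bus_width_bits * vram_speed_gbps / 8


# (shaders, boost clock MHz, bus width bits, VRAM speed Gbps), copied from the table.
CARDS = {
    "RTX 4070 Ti": (7680, 2610, 192, 21),
    "RTX 4090": (16384, 2520, 384, 21),
    "RTX 4080": (9728, 2505, 256, 22.4),
    "RX 7900 XT": (10752, 2400, 320, 20),
}

if __name__ == "__main__":
    for name, (shaders, boost, bus, speed) in CARDS.items():
        tflops = fp32_tflops(shaders, boost)
        bandwidth = bandwidth_gbps(bus, speed)
        print(f"{name}: {tflops:.1f} TFLOPS FP32, {bandwidth:.0f} GB/s")
```

For the RTX 4070 Ti this gives about 40.1 TFLOPS and 504 GB/s, matching the table. The table's shader counts for the RX 7900 cards line up with their listed TFLOPS under the same convention.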

We've mentioned before that Nvidia appears to be attempting to stretch out the range of performance offered with the RTX 40-series, and the same thing happened with the RTX 30-series. From the fastest to slowest RTX 20-series GPU, the RTX 2080 Ti was about double the performance of the RTX 2060. Meanwhile, last generation's RTX 3090 Ti was three times as fast as the RTX 3050. At present, we don't even have a vanilla RTX 4070, never mind a future RTX 4050, and we're already looking at potentially double the performance from the bigger and more expensive RTX 4090.

Things of course won't scale perfectly, but the 4090 does have more than twice as many GPU shaders, Tensor cores, RT cores, etc. It also has exactly twice as much memory and memory bandwidth, but only 50% more L2 cache. There will be workloads — stuff like AI training and inference comes to mind — where the card that costs twice as much will deliver twice the performance while consuming twice as much power. But we'll get to the benchmarks soon enough.
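The scaling claims above can be checked directly against the spec table. Below is a small sketch; the dictionary layout and the `spec_ratios` helper are illustrative choices, not anything from Nvidia or the review's test suite.

```python
# Values copied from the specifications table.
RTX_4070_TI = {
    "shaders": 7680,
    "vram_gb": 12,
    "bandwidth_gbps": 504,
    "l2_cache_mb": 48,
    "tbp_watts": 285,
    "launch_price_usd": 799,
}
RTX_4090 = {
    "shaders": 16384,
    "vram_gb": 24,
    "bandwidth_gbps": 1008,
    "l2_cache_mb": 72,
    "tbp_watts": 450,
    "launch_price_usd": 1599,
}


def spec_ratios(card: dict, baseline: dict) -> dict:
    """Return card/baseline for every spec present in both dictionaries."""
    return {key: card[key] / baseline[key] for key in baseline if key in card}


if __name__ == "__main__":
    for spec, ratio in spec_ratios(RTX_4090, RTX_4070_TI).items():
        print(f"{spec}: {ratio:.2f}x")
```

This gives about 2.13x the shaders, 2.00x the memory and bandwidth, 1.50x the L2 cache, and 2.00x the launch price. On rated TBP the ratio is about 1.58x (450W versus 285W), so the "twice as much power" figure is a loose approximation.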

What about AMD's RX 7900 series cards? The RX 7900 XT costs $100 more than the 4070 Ti, and at least in several areas it looks to have superior specs. The teraflops figures favor AMD's GPU by nearly 30%, for example, and AMD gives users 67% more memory with 59% more memory bandwidth. There's little doubt that Nvidia will still pull ahead when it comes to ray tracing performance — and deep learning workloads — but for rasterization AMD might come out ahead. But then we also have to ask: Do you want to spend nearly $1,000 for a graphics card that relegates ray tracing to second tier status?
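Those percentages follow from the table rows. As a quick check, assuming the table values and using a hypothetical `percent_more` helper:

```python
def percent_more(value: float, baseline: float) -> float:
    """How much larger value is than baseline, in percent."""
    return (value / baseline - 1.0) * 100.0


# (RX 7900 XT, RTX 4070 Ti) pairs copied from the specifications table.
COMPARISON = {
    "FP32 TFLOPS": (51.6, 40.1),
    "VRAM (GB)": (20, 12),
    "Bandwidth (GBps)": (800, 504),
    "Launch price (USD)": (899, 799),
}

if __name__ == "__main__":
    for spec, (amd, nvidia) in COMPARISON.items():
        print(f"{spec}: RX 7900 XT is {percent_more(amd, nvidia):.1f}% higher")
```

The results come to roughly 28.7%, 66.7%, 58.7%, and 12.5%, so on paper the spec advantages are larger than the price premium. None of this captures ray tracing or deep learning workloads, where the review expects Nvidia to stay ahead.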

Sure, we get it: There still aren't many games where ray tracing is required, and quite a few games that support ray tracing only seem to use it for modest improvements in image fidelity while dropping performance quite a bit. But there are games like Control and Cyberpunk 2077 where it can make a difference, so spending a bit more — or in this case, potentially saving money — to get a superior feature set is something plenty of gamers seem willing to do.

Besides all the usual graphics hardware, Nvidia's latest Ada Lovelace GPUs have a few extras. AMD still doesn't have a Tensor core equivalent — its AI Accelerators share the same execution units as the GPU shaders — which means in FP16 workloads the 4070 Ti has potentially triple the throughput of the RX 7900 XT. And for AI inference work that can leverage the FP8 hardware, Nvidia doubles performance yet again.

There's also the enhanced Optical Flow Accelerator (OFA), which powers the DLSS 3 algorithm and may be used for other video related tasks. Nvidia says that over 50 games with DLSS 3 are in the works, with about 15 currently available. Even better news is that every one of those games with DLSS 3 also supports DLSS 2 and Reflex, which means anyone with an RTX card can benefit — just not with Frame Generation.

If you're interested in AI research, especially without forking over the money for a professional workstation, Nvidia's GeForce cards certainly warrant a look. There are quite a few AI tools already available, including things like Stable Diffusion, ChatGPT, and more. It's possible to get those tools running on non-Nvidia GPUs, but after months of looking for a good AI benchmark, I can say that many of the open source tools focus on Nvidia while AMD (or Intel) GPUs are at best an afterthought.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

- sabicao

  > Admin said: Our GeForce RTX 4070 Ti testing reveals good performance and efficiency, but this is a large jump in generational pricing that will displease many gamers. It's barely faster than the previous generation RTX 3080 Ti and 3080 12GB, at a relatively similar price, with DLSS 3 being the potential grace.
  >
  > Nvidia GeForce RTX 4070 Ti Review: A Costly 70-Class GPU : Read more

  I really do feel Tom's, Anand and all the other highly regarded tech sites have an obligation to express how stupid these prices are. Sure, we the customers have to use our voice by not buying, but you Sirs should be writing in every review how wrong all these new price points are. Newcomers cannot be led to believe that it is ok for a x70 series to cost 700 bucks. No freaking way.

- JarredWaltonGPU

  > oofdragon said: The price is Not almost palatable, stop trying to sell this nonsense

  Do you not know what "almost" means? And while some would take "palatable" to mean really tasty, that's not the way I normally use it. I use it more as "acceptable but not awesome." I wouldn't call an excellent dinner "palatable," I'd say it was delicious or some other word that means I really like it. Taco Bell is palatable, for example. So is Wendy's. But neither is great, just like an $800 replacement that's only moderately faster than the outgoing $800 cards.

- JarredWaltonGPU

  > sabicao said: I really do feel Tom's, Anand and all the other highly regarded tech sites have an obligation to express how stupid these prices are. Sure, we the customers have to use our voice by not buying, but you Sirs should be writing in every review how wrong all these new price points are. Newcomers cannot be led to believe that it is ok for a x70 series to cost 700 bucks. No freaking way.

  They're only "wrong" when everyone refuses to pay them. Unfortunately, we're being shown time and time again that there are apparently enough people willing to spend $800 for this level of performance that we'll continue to see them. As stated in the conclusion, if Nvidia had tried to sell this as a $600 card, or a $500 card, and then scalpers just snapped them all up and asked for $800 or more, we'd be right back where we started. Except then we'd have scalpers contributing nothing and taking a chunk of the profits.

  So yeah, don't buy a $800 card if you don't want to spend that much. Wait for prices to come down, or go with a cheaper and slower alternative. But if others keep paying a lot more than you're willing to pay, nothing is going to change.

- hannibal

  Cheaper than expected…

  Let's see what the real price ends up at after two to three weeks… when those few "cheap" MSRP GPUs run out… $1200?

- Elusive Ruse

  Hmmm, appreciate the review Jarred, yet I gotta object to your "almost" endearing tone and conclusion. Also, you insist that this is an $800 card, yet the TUF gaming you reviewed here reportedly costs $850.

- peachpuff

  > "Portal RTX at 24 fps on a 4090 gets 42 fps with Frame Generation enabled, but without upscaling, but it still feels like 24 fps. That's because the user input is still running at 24 fps."

  Wow really? Never knew this, interesting tidbit.

- -Fran-

  Thanks for the review!

  This is a big can of "meh; pass". Much like with the 7900XT. Ironically, the 7900XTX made the 4080-16GB look better and now nVidia returning the hand, making the 7900XT less stupid. They're still both in stupid territory, though.

  I mostly agree with everything, so nothing more to add, really. Maybe just the mention this card won't have an FE (as I've read and heard), so the first batch of $800 cards will last whatever the AIBs want them to be on shelves. Which, I'm sure, won't be long. This card will be over $850 for sure.

  Regards.

- Loadedaxe

  > sabicao said: I really do feel Tom's, Anand and all the other highly regarded tech sites have an obligation to express how stupid these prices are. Sure, we the customers have to use our voice by not buying, but you Sirs should be writing in every review how wrong all these new price points are. Newcomers cannot be led to believe that it is ok for a x70 series to cost 700 bucks. No freaking way.

  He did say it. In the title.

  > Nvidia GeForce RTX 4070 Ti Review: A Costly 70-Class GPU
  >
  > Open up your wallet and say ouch

  He said it professionally, many times throughout his review. I am going to assume you didn't read the whole review, so maybe you should, it's there.

  These cards are palatable, because most plebs pay for them. As long as everyone keeps paying these prices, Nvidia is going to keep charging them. If you all want gpu prices lower, skip a gen or two, speak with your money, not your mouth.

- DavidLejdar

  Seems a bit weak-ish for 4K gaming, and there are cheaper GPUs, which work fine for 1440p gaming. It still has quite some performance, and the 4K FPS are not bad as such. The numbers just don't convince me that it wouldn't drop below 60 (real) FPS at 4K with the next round of game releases, so I wouldn't pick it up for 4K at that price.

  And scalpers sure may be an issue, but if the RTX 4070 Ti is meant as "the entry-level GPU for 4K gaming, or for top 1440p gaming", then it wouldn't necessarily be a miscalculation if it would be produced in higher numbers, so that scalpers would have a garage full of them while they still would be in-stock at the retailers.