Radeon HD 4850 Vs. GeForce GTS 250: Non-Reference Battle

Game Benchmarks: Fallout 3

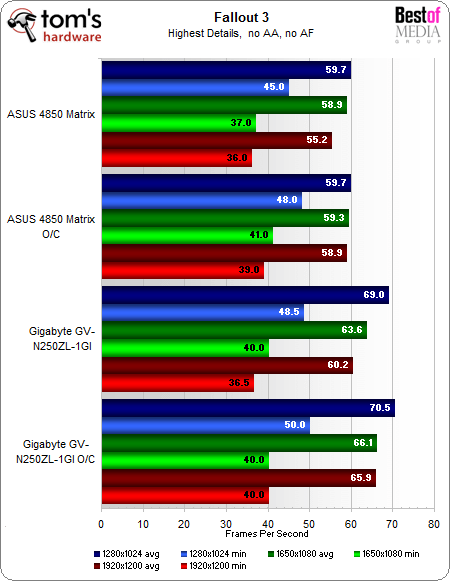

Fallout 3 is a great game built on a demanding graphics engine that evolved from the one used in the legendary The Elder Scrolls IV: Oblivion. Let’s see how these cards perform at maximum graphics settings, with no AA or AF:

Fallout 3 seems to prefer the Gigabyte GV-N250ZL-1GI, but we can’t be sure whether it favors the GeForce architecture or the full gigabyte of video RAM. It’s a solid lead, but with the minimum frame rates so close between the contenders, it’s not a monster win.

We also suspect some funny business with this benchmark: the Asus card seemed to max out at exactly 63 FPS regardless of resolution or image-quality settings. Although vsync was forced off in both Catalyst Control Center and the game itself, a constant maximum frame rate like that is suspicious. If it were caused by a CPU limitation, it should have shown up in the GeForce benchmarks as well.

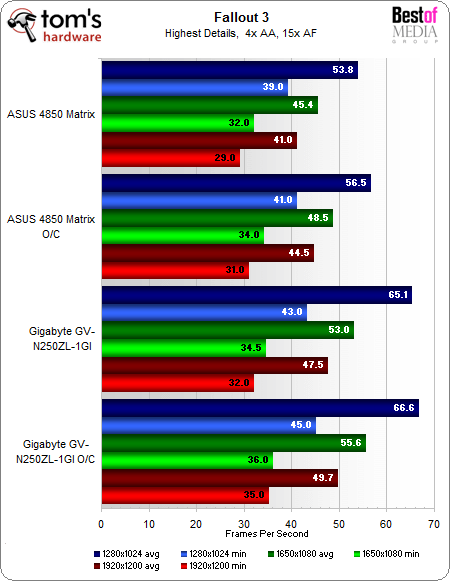

Let’s add 4xAA and 16xAF to the mix and see whether the story changes:

The results look very similar to the benchmarks without AA and AF, but the GV-N250ZL-1GI has slightly extended its lead over the Asus 4850 Matrix.

Don Woligroski was a senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

-

tuannguyen (quoting rags_20): "In the second picture of the 4850, the card can be seen bent due to the weight."

Hi rags_20 -

Actually, the appearance of the card in that picture is caused by barrel or pincushion distortion of the lens used to take the photo. The card itself isn't bent.

/ Tuan -

jebusv20: demonhorde665... try not to triple post.

It looks bad... and erratic, and makes the forums/comments system more cluttered than it needs to be.

P.S. You're not running the same benchmarks as Tom's, so your results aren't really comparable.

Yes, same game and engine, but in Crysis, for example, the frame rates are completely different from the start through to the snowy bit at the end.

P.P.S. Are you comparing your card to their card at the same resolution? -

alexcuria: Hi,

I've been looking for a comparison like this for several weeks. Thank you, although it didn't help me much with my decision. I also missed some comments on the PhysX, CUDA, DirectX 10 or 10.1, and Havok discussion.

I would be very happy to read a review of the Gainward HD4850 Golden Sample "Goes Like Hell" with the faster GDDR5 memory. If it then CLEARLY takes the lead over the GTS 250 and gets even closer to the HD4870, my decision will be easy: less heat, lower power consumption, and almost the same performance as a stock 4870. Enough for me.

BTW, the resolutions I'm most interested in are 1440x900 and 1680x1050 for a 20" monitor.

Thank you -

spanner_razor: Under the test setup section, the CPU is listed as a Core 2 Duo Q6600; shouldn't it be listed as a Core 2 Quad? Feel free to delete this comment if it's wrong or once you fix the erratum. -

KyleSTL: Why a Q6600/750i setup? That is certainly less than ideal. A Q9550/P45 or Core i7 920/X58 would have been a better choice in my opinion (and might have shown a greater difference between the cards). -

B-Unit (quoting zipzoomflyhigh): "And no, the Q6600 is classified as a C2D. It's two E6600s crammed on one die."

No, it's classified as a C2Q. The E6600 is classified as a C2D. -

KyleSTL: ZZFhigh,

Directly from the article on page 11:

Game Benchmarks: Left 4 Dead

Clearly, this is not an ideal setup for keeping the processor from affecting the benchmark results of the two cards. Most games are not multithreaded, so the Q6600's 2.4 GHz clock will undoubtedly hold back a lot of games, since they cannot utilize all four cores.

Let’s move on to a game where we can crank up the eye candy, even at 1920x1200. At maximum detail, can we see any advantage to either card?

Nothing to see here, though given the results in our original GeForce GTS 250 review, this is likely a result of our Core 2 Quad processor holding back performance.

To all,

Stop triple posting!

-

weakerthans4 (quoting the article): "The default clock speeds for the Gigabyte GV-N250ZL-1GI are 738 MHz on the GPU, 1,836 MHz on the shaders, and 2,200 MHz on the memory. Once again, these are exactly the same as the reference GeForce GTS 250 speeds."

Later in the article you write: "For the sake of argument, let’s say most cards can make it to 800 MHz, which is a 62 MHz overclock. So, for Gigabyte’s claim of a 10% overclocking increase, we’ll say that most GV-N250ZL-1GI cards should be able to get to at least 806.2 MHz on the GPU. Hey, let’s round it up to 807 MHz to keep things clean. Did the GV-N250ZL-1GI beat the spread? It sure did. With absolutely no modifications except to raw clock speeds, our sample GV-N250ZL-1GI made it to 815 MHz rock-solid stable. That’s a 20% increase over an 'expected' overclock according to our unscientific calculation."

Your math is wrong. A claim of a 20% overclock on the GV-N250ZL-1GI would equal 885.6 MHz. 10% of 738 MHz is 73.8 MHz, so a 10% overclock would equal 811.8 MHz. 815 MHz is nowhere near 20%. In fact, according to your numbers, the GV-N250ZL-1GI barely lives up to its minimum 10% claim.
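The disagreement above comes down to what the 10% is applied to. The article scales the typical overclocking headroom (62 MHz above the 738 MHz stock clock), while the comment scales the stock clock itself. A small Python sketch makes the two readings concrete. It uses only the clock figures quoted above; the function names are hypothetical labels chosen for this illustration, not anything from Gigabyte or the article.

```python
# Compare two readings of Gigabyte's "10% overclocking" claim, using the
# clock figures quoted in the article and the comment above (all in MHz).
STOCK_MHZ = 738      # reference GeForce GTS 250 core clock
TYPICAL_MHZ = 800    # the article's assumed overclock for "most cards"
ACHIEVED_MHZ = 815   # stable clock reached by the GV-N250ZL-1GI sample


def target_from_headroom(stock, typical, bonus):
    """Article's reading: scale the typical overclock headroom by (1 + bonus)."""
    return stock + (typical - stock) * (1 + bonus)


def target_from_stock(stock, bonus):
    """Comment's reading: scale the stock clock itself by (1 + bonus)."""
    return stock * (1 + bonus)


def gain_over_stock(stock, achieved):
    """Fractional clock increase of the achieved clock over stock."""
    return achieved / stock - 1


def headroom_ratio(stock, typical, achieved):
    """Achieved headroom divided by the assumed typical headroom."""
    return (achieved - stock) / (typical - stock)


if __name__ == "__main__":
    print(f"article, 10% more headroom: {target_from_headroom(STOCK_MHZ, TYPICAL_MHZ, 0.10):.1f} MHz")  # 806.2 MHz
    print(f"comment, 10% over stock:    {target_from_stock(STOCK_MHZ, 0.10):.1f} MHz")  # 811.8 MHz
    print(f"comment, 20% over stock:    {target_from_stock(STOCK_MHZ, 0.20):.1f} MHz")  # 885.6 MHz
    print(f"815 MHz over stock:         {gain_over_stock(STOCK_MHZ, ACHIEVED_MHZ):.1%}")  # 10.4%
    print(f"815 MHz headroom vs 62 MHz: {headroom_ratio(STOCK_MHZ, TYPICAL_MHZ, ACHIEVED_MHZ):.2f}x")  # 1.24x
```

Under the headroom reading, the sample's 77 MHz of headroom is about 1.24 times the assumed 62 MHz. Measured against stock, 815 MHz is roughly a 10.4% increase, which only just clears the 811.8 MHz figure from the comment.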