Nvidia's GeForce GTX 285: A Worthy Successor?

Benchmark Results: 3DMark Vantage

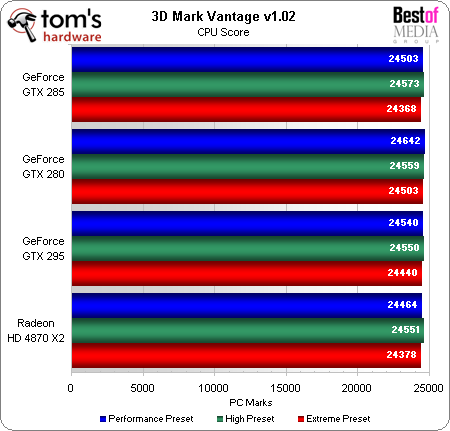

Unlike real-world games, 3DMark requires a Physics benchmark run in order to produce a full score. That benchmark runs very slowly when the CPU handles physics and very quickly when the GPU does. The GPU physics feature in question is PhysX, a proprietary Nvidia technology. 3DMark credits the added "performance points" to the CPU score, since the GPU is effectively standing in for the CPU.

During actual gameplay, enabling PhysX slows the system slightly, while disabling PhysX turns off the advanced physics calculations. Trading a few frames per second (FPS) for increased realism is a reasonable choice in games. Judging the performance difference between competing products, however, requires that all of them support the same setting. Proprietary calculations undermine the standardization a benchmark depends on, so we chose the Disable PPU option in 3DMark to keep this an apples-to-apples comparison.

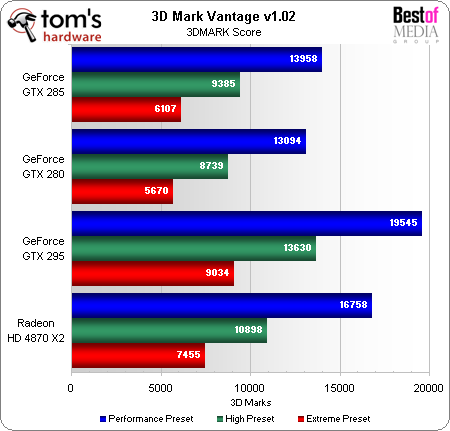

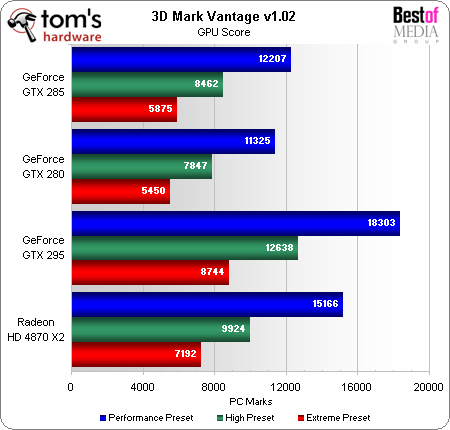

3DMark and GPU test scores favor the GeForce GTX 285 over the GTX 280 by an average of 7 to 8 percent.

CPU scores are separated by less than half of one percent. This is expected, since the Disable PPU setting keeps PhysX from inflating the CPU score.




Proximon Perfect. Thank you. I only wish you could have thrown a 4870 1GB and a GTX 260+ into the mix, since you had what I'm guessing are new beta drivers. Still, I guess you have to sleep sometime :p

fayskittles I would have liked to see how far each card could be overclocked and how they compare then.

I would like to see a benchmark comparing SLI 260 Core 216, SLI 280, SLI 285, GTX 295, and 4870X2.

ravenware Thanks for the article.

Overclocking would be nice to see what the hardware can really do, but I generally don't dabble in overclocking video cards. It never seems to work out: either the card is already running hot, or the slightest increase in frequency produces artifacts.

Also, driver updates seem to wreak havoc with OC settings.

wlelandj Personally, I'm hoping for a non-crippled GTX 295 using the GTX 285's full specs (^Core Clock, ^Shader Clock, ^Memory Data Rate, ^Frame Buffer, ^Memory Bus Width, and ^ROPs). My & $$$ will be waiting.

A Stoner I went for the GTX 285. I figure it will run cooler, allow higher overclocks, and maybe save energy compared to a GTX 280. I was able to pick mine up for about $350, while most GTX 280 cards are still selling for above $325 before mail-in rebates. Over the last three years, exactly 0 of my 12 mail-in rebates for computer components have been honored.

A Stoner (replying to ravenware) I just replaced an 8800 GTS 640MB card with the GTX 285. Base clocks for the GTS are 500 MHz GPU and 800 MHz memory. I forget the shader clock, but it is over 1000 MHz. I had mine running with zero glitches for the life of the card at 600 MHz GPU and 1000 MHz memory. Before the overclock, the highest temperature at load was about 88C; after the overclock, it was 94C. Both are well within the manufacturer's specification of 115C. I would not be too scared of overclocking your hardware, unless doing so voids your warranty.

I have not overclocked the GTX 285 yet. I am waiting for NiBiToR v4.9 to be released so that once I overclock it, I can permanently set it to the final stable clock. I am expecting to hit about 730 MHz on the GPU, but it could be less.

daeros Quoting the article: "Because most single-GPU graphics card buyers would not even consider a more expensive dual-GPU solution, we've taken the unprecedented step of arranging today's charts by performance-per-GPU, rather than absolute performance."

In other words, no matter how well ATI's strategy of using two smaller, cheaper GPUs in tandem instead of one huge GPU works, you will still be able to say that Nvidia is the best.

Also, why would most people who are spending $400-$450 on video cards not want a dual-card setup? Most people I know see it as a kind of bragging right, just like water-cooling your rig.

One last thing: why is it so hard to find reviews of the 4850 X2?

roofus Because multi-GPU cards come with their own bag of headaches, daeros. You are better off going CF or SLI than participating in that pay-to-play experiment.