Nvidia GeForce GTX 590 3 GB Review: Firing Back With 1024 CUDA Cores

AMD shot for, and successfully achieved, the coveted “fastest graphics card in the world” title with its Radeon HD 6990. Now, Nvidia is gunning for that freshly claimed honor with a dual-GF110-powered board that speaks softly and carries a big stick.

GeForce GTX 590: Bringing The Heat

Today, the worst-kept secret in technology officially gets the spotlight. Hot on the heels of AMD’s Radeon HD 6990 4 GB introduction three weeks ago, Nvidia is following up with its GeForce GTX 590 3 GB. According to Nvidia, it could have introduced this card more than a month ago. However, we know it continued revising its plans for a new flagship well into March. The result is a board deliberately intended to emphasize elegance, immediately after the Radeon HD 6990 bludgeoned us over the head with abrasive acoustics.

Pursuing quietness might sound ironic, given that GPUs based on Nvidia’s Fermi architecture are notoriously hot and power-hungry. To think the company could put two on a single PCB and not out-scream AMD’s dual-Cayman-based card is almost ludicrous. And yet, that’s what Nvidia says it did.

It admits that getting there wasn’t an easy task, though. Compromises were made. For example, Nvidia uses the same mid-mounted fan design for which we chided AMD. It dropped the clocks on its GPUs to help keep thermals under control. And the card still uses more power than any graphics product we’ve ever tested.

But it’s quiet. Crazy-freaking quiet. The quietest dual-GPU board I’ve tested since ATI’s Rage Fury Maxx (how’s that for back-in-the-day?). Mission accomplished on that front. The question remains, though: was Nvidia forced to give up the farm just to show AMD that hot cards don't have to make lots of noise?

Under The Hood: Dual GF110s, Both Uncut

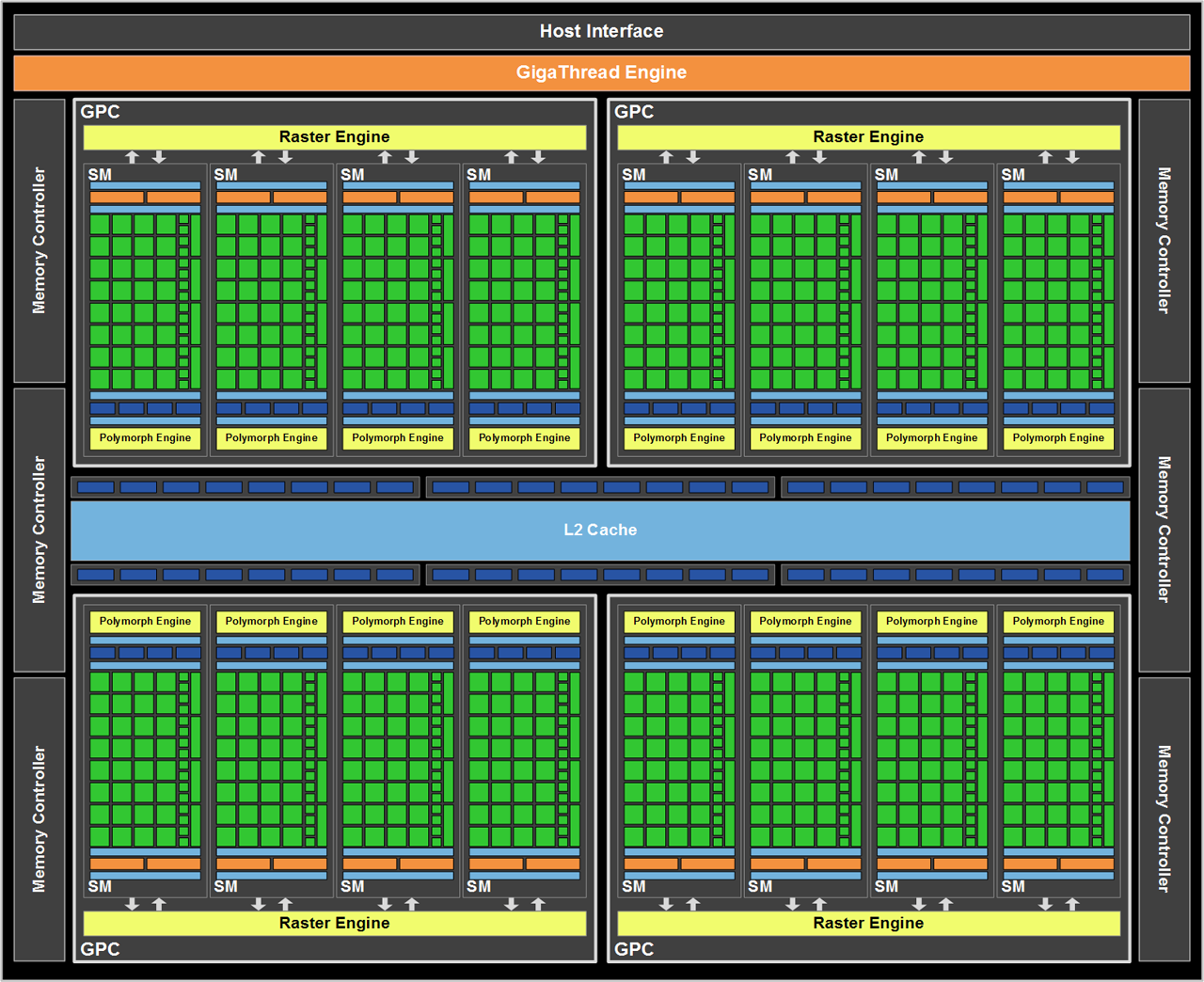

In my discussions with Nvidia, the company made it clear that it wanted to use two GF110 processors, and it didn’t want to hack them up. Uncut GF110s, as you probably already know from reading “GeForce GTX 580 And GF110: The Way Nvidia Meant It To Be Played,” employ four Graphics Processing Clusters, each with four Streaming Multiprocessors. You’ll find 32 CUDA cores in each SM, totaling 512 cores per GPU. Each SM also offers four texturing units, yielding 64 across the entire chip. Of course, there’s one PolyMorph engine per SM as well, though as we’ve seen in the past, Nvidia’s approach to parallelizing geometry doesn’t necessarily scale very well.

The GPU’s back-end features six ROP partitions, each capable of outputting eight 32-bit integer pixels at a time, adding up to 48 pixels per clock. An aggregate 384-bit memory bus is divisible into a sextet of 64-bit interfaces, and you’ll find 256 MB of GDDR5 memory at all six stops. That adds up to 1.5 GB of memory per GPU, which is how you arrive at the GeForce GTX 590’s 3 GB.
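For readers who want to check the arithmetic, here is a minimal sketch that rebuilds the per-GPU and per-board totals from the building blocks described above. The constant and variable names are illustrative only; the numbers come straight from the text.

```python
# Resource counts for one uncut GF110, using only figures quoted in the text.
GPCS_PER_GPU = 4             # Graphics Processing Clusters
SMS_PER_GPC = 4              # Streaming Multiprocessors per GPC
CUDA_CORES_PER_SM = 32
TEXTURE_UNITS_PER_SM = 4
ROP_PARTITIONS = 6
PIXELS_PER_PARTITION = 8     # 32-bit integer pixels per clock
MEMORY_CHANNELS = 6
CHANNEL_WIDTH_BITS = 64
MEMORY_PER_CHANNEL_MB = 256
GPUS_ON_GTX_590 = 2

sms = GPCS_PER_GPU * SMS_PER_GPC
cuda_cores = sms * CUDA_CORES_PER_SM
texture_units = sms * TEXTURE_UNITS_PER_SM
rops = ROP_PARTITIONS * PIXELS_PER_PARTITION
bus_width_bits = MEMORY_CHANNELS * CHANNEL_WIDTH_BITS
memory_gb = MEMORY_CHANNELS * MEMORY_PER_CHANNEL_MB / 1024

print(f"Per GPU: {cuda_cores} cores, {texture_units} texture units, "
      f"{rops} ROPs, {bus_width_bits}-bit bus, {memory_gb} GB")
print(f"GTX 590: {cuda_cores * GPUS_ON_GTX_590} cores, "
      f"{memory_gb * GPUS_ON_GTX_590} GB total")
```

Keep in mind that the 3 GB total is split between the two GPUs, so each processor still works out of its own 1.5 GB.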

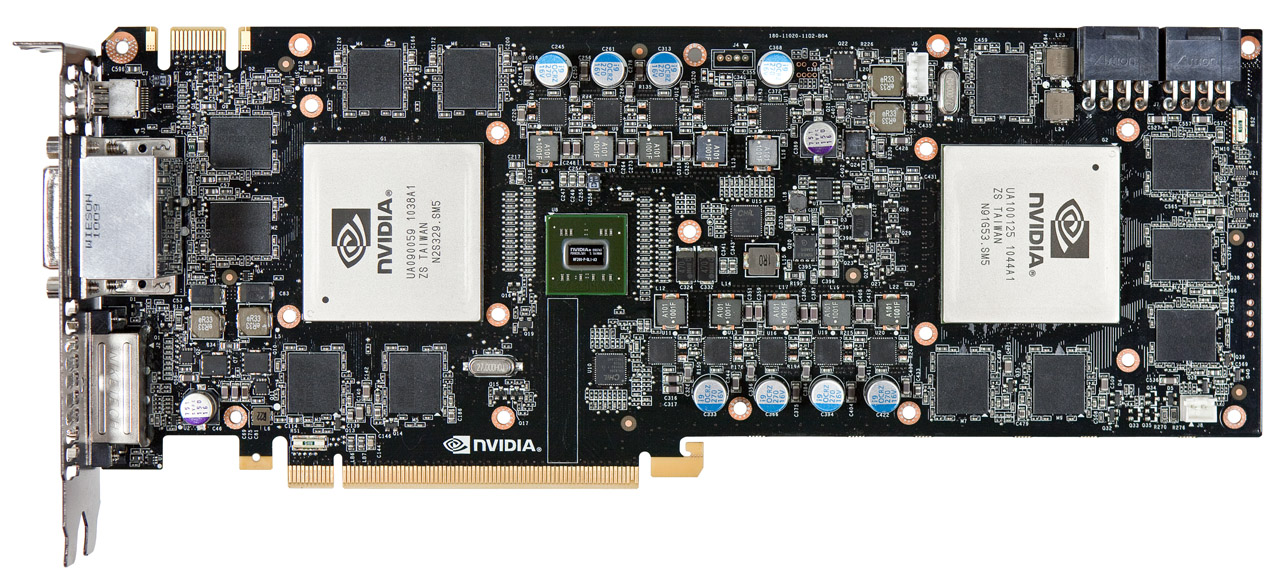

Nvidia ties GTX 590’s GF110 processors together using its own NF200 bridge, which takes a single 16-lane PCI Express 2.0 interface and multiplexes it out to two 16-lane paths—one for each GPU.
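To put the bridge arrangement in perspective, the sketch below estimates the bandwidth of the single upstream link both GPUs share. It assumes the standard PCI Express 2.0 figures (5 GT/s per lane with 8b/10b encoding) and ignores protocol overhead, so treat the results as upper bounds rather than measured throughput. The variable names are illustrative.

```python
# Rough PCI Express 2.0 bandwidth estimate for the link feeding the bridge.
# Assumptions: 5 GT/s per lane, 8b/10b encoding, no protocol overhead.
GT_PER_S_PER_LANE = 5.0
ENCODING_EFFICIENCY = 8 / 10   # 8 payload bits per 10 bits on the wire
LANES = 16
GPUS = 2

gbytes_per_lane = GT_PER_S_PER_LANE * ENCODING_EFFICIENCY / 8  # per direction
upstream_gbytes = gbytes_per_lane * LANES

# If both GPUs transfer to or from the host at once, they split the upstream link.
shared_per_gpu_gbytes = upstream_gbytes / GPUS

print(f"Upstream x16 link: {upstream_gbytes:.1f} GB/s per direction")
print(f"Per GPU when both are busy: {shared_per_gpu_gbytes:.1f} GB/s")
```

Each GPU gets a full 16-lane path to the bridge, but traffic to and from the rest of the system still has to fit through the one 16-lane slot.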

| Specification | GeForce GTX 590 | GeForce GTX 580 | Radeon HD 6990 | Radeon HD 6970 | Radeon HD 6950 |
|---|---|---|---|---|---|
| Manufacturing Process | 40 nm TSMC | 40 nm TSMC | 40 nm TSMC | 40 nm TSMC | 40 nm TSMC |
| Die Size | 2 x 520 mm² | 520 mm² | 2 x 389 mm² | 389 mm² | 389 mm² |
| Transistors | 2 x 3 billion | 3 billion | 2 x 2.64 billion | 2.64 billion | 2.64 billion |
| Engine Clock | 607 MHz | 772 MHz | 830 MHz | 880 MHz | 800 MHz |
| Stream Processors / CUDA Cores | 1024 | 512 | 3072 | 1536 | 1408 |
| Compute Performance | 2.49 TFLOPS | 1.58 TFLOPS | 5.1 TFLOPS | 2.7 TFLOPS | 2.25 TFLOPS |
| Texture Units | 128 | 64 | 192 | 96 | 88 |
| Texture Fillrate | 77.7 Gtex/s | 49.4 Gtex/s | 159.4 Gtex/s | 84.5 Gtex/s | 70.4 Gtex/s |
| ROPs | 96 | 48 | 64 | 32 | 32 |
| Pixel Fillrate | 58.3 Gpix/s | 37.1 Gpix/s | 53.1 Gpix/s | 28.2 Gpix/s | 25.6 Gpix/s |
| Frame Buffer | 2 x 1.5 GB GDDR5 | 1.5 GB GDDR5 | 2 x 2 GB GDDR5 | 2 GB GDDR5 | 2 GB GDDR5 |
| Memory Clock | 853 MHz | 1002 MHz | 1250 MHz | 1375 MHz | 1250 MHz |
| Memory Bandwidth | 2 x 163.9 GB/s (384-bit) | 192 GB/s (384-bit) | 2 x 160 GB/s (256-bit) | 176 GB/s (256-bit) | 160 GB/s (256-bit) |
| Maximum Board Power | 365 W | 244 W | 375 W | 250 W | 200 W |
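The theoretical throughput rows in the table follow from the unit counts and clocks. The sketch below shows one way to reproduce the two GeForce columns. It rests on two assumptions that are not stated in the table: Fermi's shader clock runs at twice the engine clock, and each CUDA core retires one fused multiply-add (two floating-point operations) per shader clock. The function name and dictionary keys are made up for illustration.

```python
def theoretical_rates(cores, texture_units, rops, engine_mhz, mem_mhz, bus_bits):
    """Return peak theoretical rates derived from unit counts and clocks."""
    shader_mhz = engine_mhz * 2  # assumption: Fermi shader clock = 2x engine clock
    return {
        # assumption: 2 FLOPs (one fused multiply-add) per core per shader clock
        "tflops": cores * 2 * shader_mhz / 1e6,
        "gtex_s": texture_units * engine_mhz / 1e3,
        "gpix_s": rops * engine_mhz / 1e3,
        # GDDR5 moves 4 bits per pin per listed memory clock; value is per GPU
        "gb_s": mem_mhz * 4 * bus_bits / 8 / 1e3,
    }

# Unit counts for the GTX 590 are board totals; the bus width is per GPU.
gtx_590 = theoretical_rates(1024, 128, 96, engine_mhz=607, mem_mhz=853, bus_bits=384)
gtx_580 = theoretical_rates(512, 64, 48, engine_mhz=772, mem_mhz=1002, bus_bits=384)

for name, rates in (("GTX 590", gtx_590), ("GTX 580", gtx_580)):
    print(name, {key: round(value, 2) for key, value in rates.items()})
```

Compute and fillrate figures round to the table's values. The bandwidth results land a few tenths of a GB/s away from the listed numbers, presumably because the clocks shown in the table are themselves rounded.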

What changed from the ill-received GF100-based GeForce GTX 480 to GF110? From my GeForce GTX 580 review:

“The GPU itself is largely the same. This isn’t a GF100 to GF104 sort of change, where Shader Multiprocessors get reoriented to improve performance at mainstream price points (read: more texturing horsepower). The emphasis here remains compute muscle. Really, there are only two feature changes: full-speed FP16 filtering and improved Z-cull efficiency.

GF110 can perform FP16 texture filtering in one clock cycle (similar to GF104), while GF100 required two cycles. In texturing-limited applications, this speed-up may translate into performance gains. The culling improvements give GF110 an advantage in titles that suffer lots of overdraw, helping maximize available memory bandwidth. On a clock-for-clock basis, Nvidia claims these enhancements have up to a 14% impact (or so).”

Other than that, we’re still talking about two pieces of silicon manufactured on TSMC’s 40 nm node and composed of roughly 3 billion transistors each. At 520 square millimeters, GF110 is substantially larger than AMD’s Cayman processor, which measures 389 mm² and is made up of 2.64 billion transistors.

Now, it’s great to get all of those resources (times two) on GeForce GTX 590. However, while the GeForce GTX 580 employs a 772 MHz graphics clock and 1002 MHz memory clock, the GPUs on GTX 590 slow things down to 607 MHz and 853 MHz, respectively.

As a result, this card’s performance isn’t anywhere near what you’d expect from two of Nvidia’s fastest single-GPU flagships. That might be all right, though. After all, AMD launched Radeon HD 6970 as a GeForce GTX 570 contender; the 580 sat in a league of its own. So, although AMD’s Radeon HD 6990 comes very close to doubling the performance of the company’s quickest single-GPU cards, GeForce GTX 590 doesn’t have to do the same thing in order to be competitive at the $700 price point AMD already established and Nvidia plans to match.
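The clock figures quoted above put a rough ceiling on how far the GTX 590 can pull ahead of a single GTX 580. The sketch below is a back-of-the-envelope estimate only: it assumes perfect two-GPU scaling and performance that tracks the graphics clock linearly, neither of which holds in real games. The names are illustrative.

```python
# Clocks quoted in the text (MHz).
GTX_580_CORE_MHZ = 772
GTX_580_MEM_MHZ = 1002
GTX_590_CORE_MHZ = 607
GTX_590_MEM_MHZ = 853

core_ratio = GTX_590_CORE_MHZ / GTX_580_CORE_MHZ
mem_ratio = GTX_590_MEM_MHZ / GTX_580_MEM_MHZ

# Assumption: ideal scaling across both GPUs, performance linear in core clock.
two_gpu_ceiling = 2 * core_ratio

print(f"Core clock: {core_ratio:.1%} of GTX 580 ({1 - core_ratio:.1%} lower)")
print(f"Memory clock: {mem_ratio:.1%} of GTX 580 ({1 - mem_ratio:.1%} lower)")
print(f"Best-case speed-up over one GTX 580: {two_gpu_ceiling:.2f}x")
```

Even in that idealized case, the card tops out at roughly 1.6 times a GeForce GTX 580 rather than double.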

We already know what AMD had to do in order to deliver “the fastest graphics card in the world.” Now, how does Nvidia counter?


nforce4max: Nvidia like ATI should have gone full copper for their coolers instead of using aluminum for the fins. :/

The_King: The clock speeds are a bit of a disappointment as well the high power draw and the performance is not that better than a 6990. Bleh !

LegendaryFrog: I'm impressed, good to see Nvida has started to care about the "livable experience" of their high end products.

rolli59: Draw! Win some loose some. What is the fastest card? Some will say GTX590 others HD6990 and they are both right.

Scoregie: MMMM... HD 6990.... OR GTX 590... HMMM I'll go with a HD 5770 CF setup because im cheap.

Sabiancym: You can't say Nvidia wins based on the sound level of the cards. That's just flat out favoritism. I'll be buying a 6990 and water cooling it. Nothing will beat it.

Darkerson (quoting rolli59's comment above): Thats more or less how I feel. They both trade blows depending on the game.