GeForce GTX 760 Review: GK104 Shows Up (And Off) At $250

With its last graphics card introduction until the end of Fall, Nvidia isn't trying to impress anyone with groundbreaking performance. Rather, the company is pulling better-than GeForce GTX 660 Ti-class frame rates to a $250 price point, creating value.

Overclocking

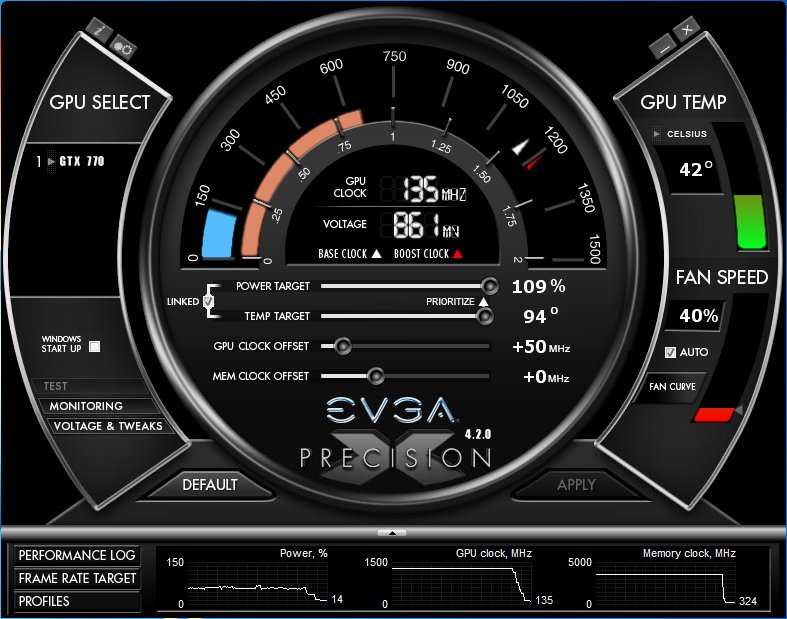

Thanks to freely available and easy-to-use tools, overclocking is something of a common pastime among gamers. However, not every tweak makes sense, especially if you want your hardware to enjoy a long and happy life. Since Nvidia's GPU Boost 2.0 is primarily affected by thermals, we want to determine how far we can push the GeForce GTX 760 using only superficial software tweaks, while still maintaining a constant boost state using the standard cooler.

In our launch article The GeForce GTX 770 Review: Calling In A Hit On Radeon HD 7970?, we observed that the GK104 GPUs on all of the boards we tested maxed out at around 1300 MHz. Any attempt to push the chips further resulted in crashes or freezes. Consequently, we were curious to see how the even more pared-back implementation of GK104 found on the 760s would fare. We don’t want to give away too much, but we will say this: the results from our five samples were as varied as the 770s were similar. Of course, five total boards, including Nvidia's reference design, are hardly representative. But we still get a good first impression.

Stability Testing

Any card with an accelerated turbo feature can sustain short-term peaks. That isn't exactly indicative of real-world performance though, which is why we require each product to complete a three-hour test run at its higher clocks, installed in a closed chassis. If an error occurs, even if it's after an hour or two, that frequency is deemed unstable. All remaining frequencies are verified in a second run after a cool-down phase. This may be time-consuming, but it lets us make a very sound judgment of a card’s true capabilities.
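To make the pass/fail rule concrete, here is a minimal Python sketch of it. The function names, the data layout, and the clock values and error timestamps in the example are illustrative assumptions, not our actual test harness or measurements.

```python
from typing import Dict, List, Optional

RUN_LENGTH_HOURS = 3.0  # one test run lasts three hours, per the text above


def run_is_clean(error_times_hours: List[float],
                 run_length_hours: float = RUN_LENGTH_HOURS) -> bool:
    """True if no error was logged at any point during the run."""
    return not any(0.0 <= t <= run_length_hours for t in error_times_hours)


def is_stable(runs: List[List[float]]) -> bool:
    """A clock is stable only if it was tested and every run was clean."""
    return len(runs) > 0 and all(run_is_clean(run) for run in runs)


def highest_stable_clock(results: Dict[float, List[List[float]]]) -> Optional[float]:
    """Return the highest clock (MHz) whose runs were all clean, or None."""
    stable = [clock for clock, runs in results.items() if is_stable(runs)]
    return max(stable) if stable else None


# Hypothetical log: clock in MHz -> one list of error timestamps (hours) per run.
# The first run is followed by a verification run after a cool-down phase.
example = {
    1250.0: [[], []],
    1280.0: [[], []],
    1310.0: [[2.2]],  # an error 2.2 hours in still marks the clock unstable
}
print(highest_stable_clock(example))  # 1280.0
```

The point of the sketch is the strictness of the rule: a single late error disqualifies a frequency, no matter how long the card ran cleanly before it.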

Overclocking Potential and Performance Increase

Let’s begin with the best-case scenario before taking a closer look at the individual cards. In our testing, it didn’t matter whether we changed the power or temperature targets in the overclocking software; neither had any effect on the final maximum overclock. Besides, none of the cards came anywhere near its thermal target, thanks to the good cooling solutions.

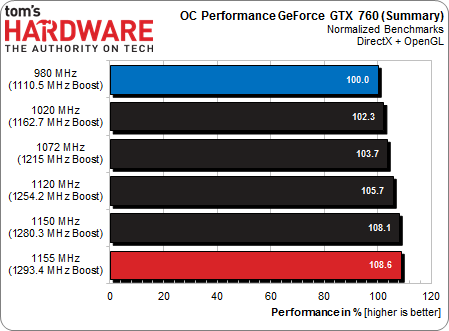

We started out by analyzing the cards’ maximum overclocks as well as the resulting performance boost and found that all of them showed a similar correlation between clock speeds and performance, although not all of the cards were able to reach the maximum boost clock of 1.3 GHz. Bottom line: if you’re lucky enough to buy a card with a decent GPU on it, you can expect to squeeze an extra 8 percent of real-world performance out of it through overclocking. In the end, that’s not really as much as it may sound, though. A game that was running at 22 FPS at stock clocks would still land just under 24 FPS, which won’t make it play much smoother. In the final tally, we used two games, a synthetic benchmark, a CAD application, and one rendering title to determine the actual performance gains: Metro Last Light, Battlefield 3, Unigine Heaven, Autodesk Maya 2013, and Blender. Then we normalized the results and calculated an average.
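As a rough illustration of the normalize-and-average step, the Python sketch below divides each overclocked result by its stock result and averages the ratios. It assumes an arithmetic mean and scores where higher is better (a render time would have to be inverted first). The benchmark names and scores are placeholder numbers, not measurements from this review.

```python
from statistics import mean
from typing import Dict


def average_gain_percent(stock: Dict[str, float],
                         overclocked: Dict[str, float]) -> float:
    """Normalize each result to its stock value, then average the ratios."""
    ratios = [overclocked[name] / stock[name] for name in stock]
    return (mean(ratios) - 1.0) * 100.0


# Placeholder scores (higher is better); purely hypothetical values.
stock_scores = {"game_a": 100.0, "game_b": 50.0}
oc_scores = {"game_a": 110.0, "game_b": 53.0}

print(round(average_gain_percent(stock_scores, oc_scores), 1))  # 8.0
```

Normalizing first keeps a benchmark with large absolute numbers from dominating the average.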

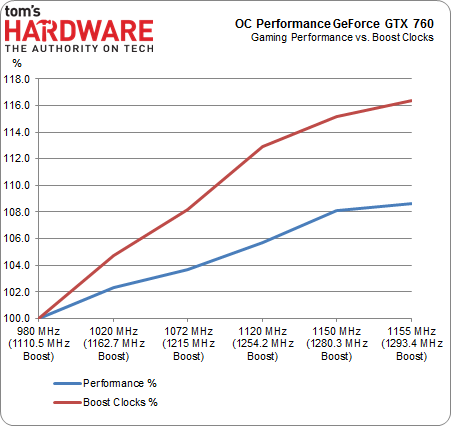

The highest Boost clock that would run stable for more than an hour was 1293.4 MHz, at least on two of our cards. On the other hand, none of the cards was able to exceed 1300 MHz. Let’s take a look at the relationship between additional clock speed and the resulting performance increase in percent. As we can see, we get to a point of diminishing returns rather quickly, with additional clock speed doing very little to improve performance.

Increasing Boost clocks by 16 percent yielded a mere 8 percent performance increase. Since the factory overclocked cards run at higher speeds to begin with, there’s less potential left to be exploited. In the end, our conclusion is the same as it was for the GeForce GTX 770: these cards are not overclocking champions.
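The arithmetic behind that statement is simple enough to sketch in Python. The helper name is our own invention for illustration; the 16 and 8 percent figures are the ones quoted above.

```python
def scaling_efficiency(clock_gain_percent: float,
                       performance_gain_percent: float) -> float:
    """Fraction of the added clock speed that shows up as added performance."""
    if clock_gain_percent <= 0:
        raise ValueError("clock gain must be positive")
    return performance_gain_percent / clock_gain_percent


print(scaling_efficiency(16.0, 8.0))  # 0.5
```

A value of 1.0 would mean performance scales linearly with clock speed; 0.5 means only half of the added clock rate turns into extra performance.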

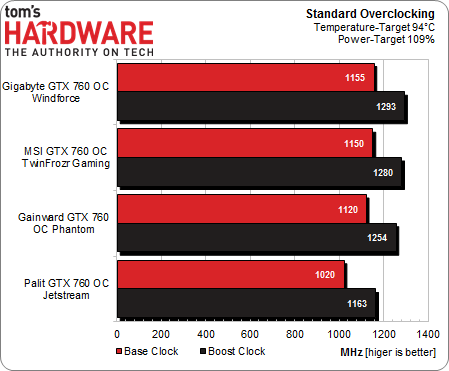

Overclocking the Board Partner Cards

The first thing we noted was that the two cards built on the GeForce GTX 680's longer PCB, namely the 760s from Gigabyte (1155 MHz) and MSI (1150 MHz), achieved the best results, with Gigabyte coming closest to our desired maximum GPU Boost clock of 1.3 GHz. The two cards with shorter PCBs, as well as Nvidia’s own reference design, were unable to duplicate those results. Gainward and Nvidia were tied at 1120 MHz, while the Palit card’s chip must have been a lemon. It wouldn’t overclock at all, and worse, would only run stable over longer periods at a base clock of 1020 MHz, below Nvidia's rated GPU Boost clock. Due to these abnormal results, we’re not going to punish Palit for the weak showing. Rather, we're going to assume that there's a faulty GPU responsible, and not bad engineering. After all, the Palit and Gainward cards are identical, manufactured by the same company, even.

Interestingly, even within our limited sample of five cards, there's quite a bit of fluctuation in the overclocking potential of these GPUs. This could be a result of the varying SMX configurations that Nvidia cuts its GK104 GPUs into, though there's no way to know for sure.

-

SiliconWars This doesn't look faster than the 7950 boost to me. Maybe you should check your scores and update your conclusion to reflect reality? -

pauldh (quoting SiliconWars) "This doesn't look faster than the 7950 boost to me. Maybe you should check your scores and update your conclusion to reflect reality?"

Re-read the conclusion in question below. He doesn't say it is faster, he says this card will replace Don's recommendation for best $250 card and displace the 7950 Boost. ie. Don won't be recommending a $300 card that trades blows or barely beats a $250 card. If both were to end up $250, things change.

quote - "A quick reference to Best Graphics Cards For The Money: June 2013 shows that Don is currently recommending the Tahiti-based Radeon HD 7870 for $250. With almost certainty, the GeForce GTX 760 will take that honor next month, displacing the Radeon HD 7950 with Boost at $300 in the process." -

mapesdhs Chris, what is it about the GTX 580 that makes it so slow for the CUDA Fluidmark test, given it does so well for the other CUDA tests, especially iRay and Blender?

Btw, I don't suppose you could include 580 SLI results for the game tests? ;) Or do you have just the one 580?

My only gripe with the 760 is the misuse of a model number which allows one to infer it should be quicker than older cards with 'lesser' names (660, etc.) when infact it's often slower. I really wish NVIDIA would stop releasing products that exhibit such enormous performance overlap. Given the evolutionary nature of GPUs, and the time that has passed since the 600s launched, one might reasonably expect a 760 to beat the 670 too, but it never does. To me, the price drop is the only thing it has going for it. The endless meddling with shader numbers, clocks, bus width, etc., creates an utter muddle of performance response depending on the game. One really has to judge based on the individual game rather than any general product description or spec summary. I just hope Skyrim players with 660s don't upgrade on the assumption newer model names mean better performance, but I expect some will.

Ian.

-

tomfreak GTX760 is an upgrade for GTX460/560 user and of all of that u didnt throw in those cards to bench with. Seriously? -

Novuake Nice review as per usual Chris.

Amazing performance at 250$. The 265bit memory interface does wonders for GK104.

Now I am wondering if there will even be a GTX760ti, while there is a large enough gap in the product stack, I have a feeling there is a chance there may not be a "ti" version.

Anyone know more? -

sarinaide AMD will have to release a new interim Radeon series, the existing family is not to outdated to be stretched to much longer. -

horaciopz So, maybe there will be an GTX 760 ti, for about 300 bucks with the peformance of a GTX 670... Uh? nVidia really should. This remembers the gtx 400 series and 500 series... nVidia is doing it all over again.