Benchmarking GeForce GTX Titan 6 GB: Fast, Quiet, Consistent

We've already covered the features of Nvidia's GeForce GTX Titan, the $1,000 GK110-powered beast set to exist alongside GeForce GTX 690. Now it's time to benchmark the board in one-, two-, and three-way SLI. Is it better than four GK104s working together?

Power Consumption

All of our thermal, acoustic, and power testing was run on a machine with three monitors. If you’re considering a GeForce GTX 690 or a Titan, 5760x1080 and 5760x1200 are the resolutions you’re most likely to run.

Now, be aware that outputting to a single 2560x1440 display will yield different power consumption results. For a better idea of what you can expect from the Radeon HD 7970 GHz Edition in single-monitor mode, look back to AMD Radeon HD 7970 GHz Edition Review: Give Me Back That Crown!

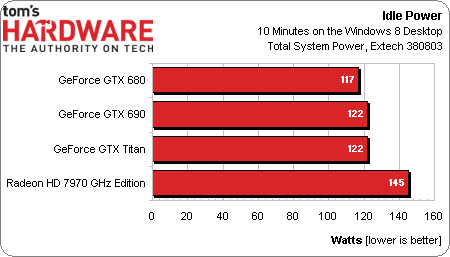

If you hook the same Tahiti-based GPU up to a trio of screens, however, its core operates at 500 MHz, while memory runs at a full-speed 1,500 MHz. The result is relatively high draw, regardless of whether the displays are off or you’re just clicking around on Windows’ desktop.

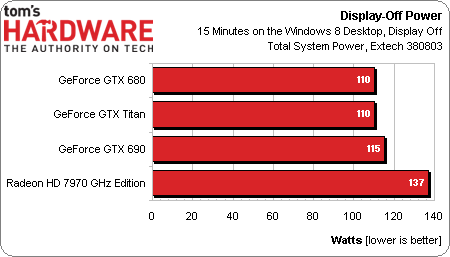

Total system consumption with one Titan driving three screens in display-off mode (after 10 minutes, by default, in Windows’ Balanced power plan) is about the same as one GeForce GTX 680. A dual-GK104 GeForce GTX 690 uses just a few watts more.

With all three monitors on and idle, Titan matches the 690’s power use. Of this group, only GeForce GTX 680 is lower.

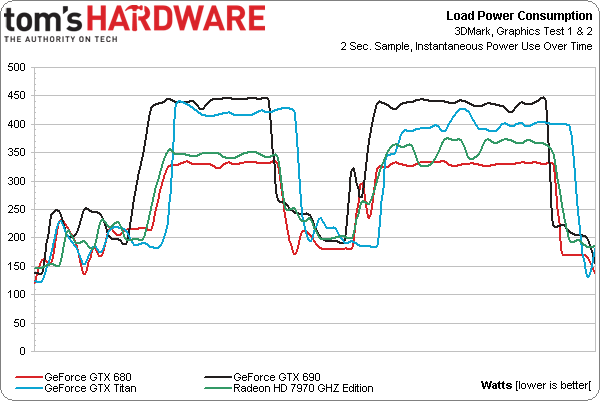

This line graph represents system consumption during the first and second graphics tests in 3DMark. Two GK104s on the GeForce GTX 690 clearly use the most power, followed by Titan, then AMD’s Radeon HD 7970 GHz Edition, and finally GeForce GTX 680.

Work out the averages, and a Titan-based machine uses 311 W through our test. Swapping in a GeForce GTX 690 increases that number to 339 W. And the GeForce GTX 680 drops it to 265 W. AMD’s Radeon HD 7970 GHz Edition averages 285 W.
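To put those averages in perspective, here is a minimal sketch that expresses each system's draw relative to the Titan-based machine. The four wattages are the averages quoted above; the dictionary and the `delta_vs` helper are our own illustrative names, not part of any benchmarking tool.

```python
# Average total system power (W) across 3DMark graphics tests 1 and 2,
# taken from the figures quoted in the text above.
avg_watts = {
    "GeForce GTX Titan": 311,
    "GeForce GTX 690": 339,
    "GeForce GTX 680": 265,
    "Radeon HD 7970 GHz Edition": 285,
}


def delta_vs(baseline, table):
    """Return {card: (watt_difference, percent_difference)} against a baseline card.

    Hypothetical helper for illustration only.
    """
    base = table[baseline]
    return {
        name: (watts - base, 100.0 * (watts - base) / base)
        for name, watts in table.items()
        if name != baseline
    }


deltas = delta_vs("GeForce GTX Titan", avg_watts)
for name, (diff_w, diff_pct) in deltas.items():
    print(f"{name}: {diff_w:+d} W ({diff_pct:+.1f}%) vs. Titan")
```

Keep in mind that these are whole-system numbers measured at the wall, so the percentages describe the test machine as a whole rather than the graphics cards in isolation.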




Novuake Pure marketing. At that price Nvidia is just pulling a huge stunt... Still an insane card.

whyso If you use an actual 7970 GE card that is sold on Newegg, etc., instead of the reference 7970 GE card that AMD gave (that you can't find anywhere), thermals and acoustics are different.

cknobman Seems like Titan is a flop (at least at $1000 price point).

This card would only be compelling if offered in the ~$700 range.

As for compute? LOL looks like this card being a compute monster goes right out the window. Titan does not really even compete that well with a 7970 costing less than half.

downhill911 If Titan cost no more than 800 USD, it would be a really nice card to have. Since it does not, I call it a fail card, or a hype card. Even my GTX 690 makes more sense, and now you can get them for a really good price on eBay.

spookyman Well, I am glad I bought the 690GTX.

Titan is nice but not impressive enough to go buy.

hero1 (quoting jimbaladin) "For $1000 that card sheath better be made out of platinum."

Tell me about it! I think Nvidia shot itself in the foot with the pricing scheme. I want AMD to come out with better drivers than the current ones to put the 7970 at least 20% ahead of the 680 and take all the sales from the greedy green. Sure, it performs way better, but that price is insane. I think 700-800 is the sweet spot, but again, it is a rare, powerful beast and very consistent, which is hard to find atm.

raxman "We did bring these issues up with Nvidia, and were told that they all stem from its driver. Fortunately, that means we should see fixes soon." I suspect their fix will be "Use CUDA".

Nvidia has really dropped the ball on OpenCL. They don't support OpenCL 1.2, and they make it difficult to find all their OpenCL examples. Their link for OpenCL is not easy to find. However, their OpenCL 1.1 driver is quite good for Fermi and for the 680 and 690, despite what people say. But if the Titan has troubles, it looks like they will be giving up on the driver now as well, or purposely crippling it (I can't imagine they did not think to test some OpenCL benchmarks, which every review site uses). Nvidia does not care about OpenCL Nvidia users like myself anymore. I wish there were more influential people like Linus Torvalds who told Nvidia where to go.