The Myths Of Graphics Card Performance: Debunked, Part 2

Testing PCIe At x16/x8 At Three Generations, From 15.75 To 2 GB/s

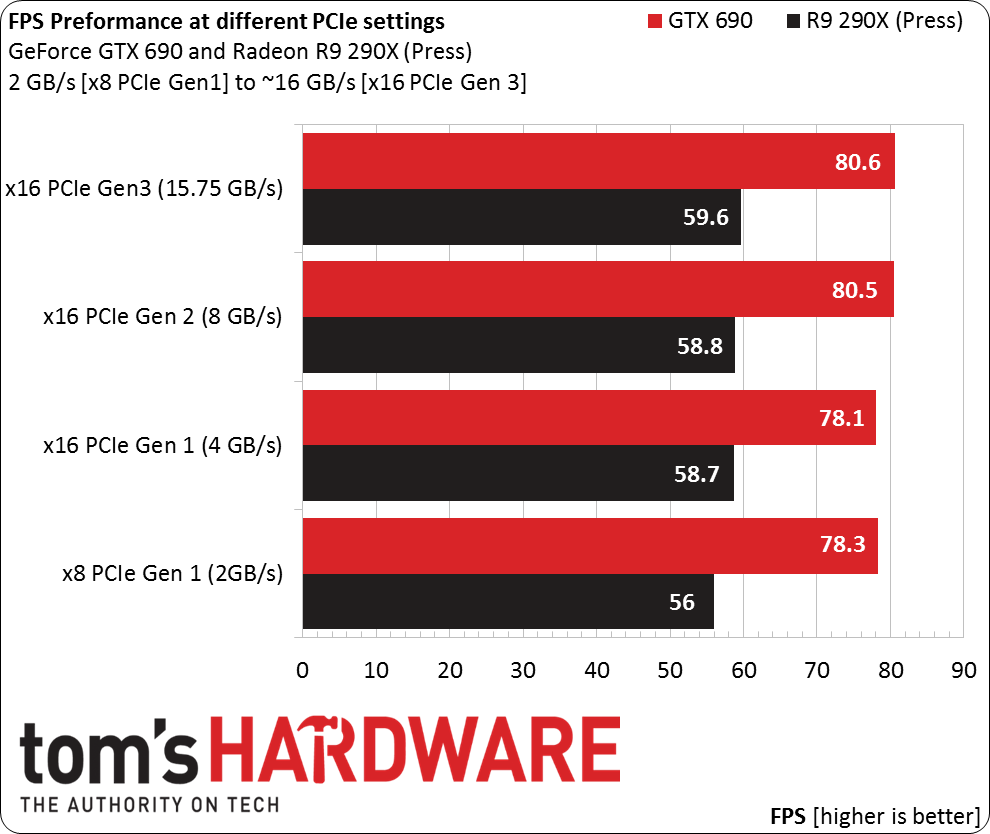

Myth: PCIe x16 (Gen 3) is essential for realizing a graphics card’s top performance.

The ASRock Z87 Extreme6's UEFI includes a feature that allows per-slot configuration of PCIe transfer rates. You’d normally never want to change this, but for our tests, the feature is incredibly valuable.

The Extreme6, unlike the higher-end Extreme9, does not include a PLX PEX 8747, and is consequently limited to 16 lanes of connectivity. Furthermore, hardware strapping sets link width based on whether one, two or three graphics cards are present, automatically configuring the controller’s 16 lanes in x16-x0-x0, x8-x8-x0 or x8-x4-x4 mode.
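The strapping behavior described above amounts to a small lookup from the number of installed graphics cards to a per-slot lane split. The following is only an illustrative sketch: the names `LANE_SPLITS` and `lane_split` are hypothetical, and the table simply restates the three modes listed in the text rather than anything read from the board's firmware.

```python
# Hypothetical model of the slot strapping described in the article.
# Keys are the number of graphics cards installed; values are the link
# widths assigned to slots 1, 2 and 3. All 16 lanes come from one controller.
LANE_SPLITS = {
    1: (16, 0, 0),  # x16-x0-x0
    2: (8, 8, 0),   # x8-x8-x0
    3: (8, 4, 4),   # x8-x4-x4
}


def lane_split(cards_installed):
    """Return the (slot1, slot2, slot3) link widths for a given card count."""
    if cards_installed not in LANE_SPLITS:
        raise ValueError("this sketch only models one, two or three cards")
    return LANE_SPLITS[cards_installed]


for count in (1, 2, 3):
    widths = lane_split(count)
    label = "-".join(f"x{w}" for w in widths)
    # The total never exceeds 16 because there is no PCIe switch adding lanes.
    print(f"{count} card(s): {label} (total lanes: {sum(widths)})")
```

In every mode the widths sum to 16, which is why a second card drops the first slot to x8.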

We were thus able to test all three generations at x16 and x8 widths using enthusiast-class cards from both AMD and Nvidia. Just bear in mind that even when we're testing cards at x8, we're not testing CrossFire or SLI; we’re looking at single-card setups here.

Thomas conducted a similar test in 2011, the age of PCIe 2.0. Do his findings still hold true?

We tested using Unigine's Valley 1.0 benchmark. In each run, we let the cards reach their thermal throttling range and began testing once they were stably within a tight temperature/frequency range. We’re looking at real-world behavior; these cards are capable of higher frame rates, though they can’t be sustained over time under normal conditions due to thermal throttling.

As you can see, the GeForce GTX 690 achieves a 2.9% performance increase going from an eight-lane first-gen link (2 GB/s) to x16 PCIe 3.0 (15.75 GB/s), while the Radeon R9 290X (press version) manages a slightly more meaningful 6.4% gain. In both cases, Unigine Valley 1.0 does not substantially bottleneck the cards on PCIe, even at 2 GB/s. That’s why the gains realized by ramping up to 15.75 GB/s are incremental at best.
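For reference, the 2 GB/s and 15.75 GB/s figures can be reproduced from the per-lane transfer rate and line encoding of each PCIe generation. The sketch below is a back-of-the-envelope calculation, not part of our test tooling: the names `PCIE_GENERATIONS` and `link_bandwidth_gbs` are made up for illustration, and the results are theoretical per-direction maximums that ignore protocol overhead.

```python
# Per-lane transfer rate (GT/s) and line-encoding efficiency per generation.
# PCIe 1.x and 2.0 use 8b/10b encoding; PCIe 3.0 uses 128b/130b.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),
    2: (5.0, 8 / 10),
    3: (8.0, 128 / 130),
}


def link_bandwidth_gbs(generation, lanes):
    """Theoretical one-direction bandwidth of a PCIe link in GB/s."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[generation]
    # GT/s times efficiency gives usable Gbit/s per lane; divide by 8 for GB/s.
    return rate_gt_s * efficiency / 8 * lanes


# The six configurations covered by the test: three generations, x8 and x16.
for generation in (1, 2, 3):
    for lanes in (8, 16):
        bandwidth = link_bandwidth_gbs(generation, lanes)
        print(f"PCIe gen {generation} x{lanes}: {bandwidth:.2f} GB/s")

slowest = link_bandwidth_gbs(1, 8)
fastest = link_bandwidth_gbs(3, 16)
print(f"Fastest link offers {fastest / slowest:.2f}x the slowest link's bandwidth")
```

Set against that roughly eightfold spread in available bandwidth, the 2.9% and 6.4% gains measured above are small.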


One caveat is that we're not testing extreme setups with three or four cards in SLI/CrossFire. It would certainly be interesting to see if these results carry over to those multi-GPU setups. We're willing to bet, based on the bandwidth being utilized, that there would still be little to no change above an eight-lane PCIe 2.0 link. If you have your own concrete data, feel free to post a comment below!

PCIe Conclusions And Additional Thoughts

Even enthusiast-class graphics cards are not particularly bandwidth-hungry from a PCIe bus standpoint. Whether you use PCIe 2.0 or PCIe 3.0, an x8 link or an x16 connection, it makes essentially no difference to the performance of a single card. If you have a third-gen x16 slot available, by all means use it. Just don't expect visible gains. And, if you’re using one card, don't consider a third-gen-compatible motherboard or processor a necessary upgrade based on PCI Express alone.

The story gets more complicated when you start taking multiple cards into consideration, and that’s where most enthusiasts probably want to see this experiment head. While the GeForce GTX 690 is, essentially, a pair of underclocked GK104s in SLI on a PCB, we can understand the desire for more exotic configurations. The link above from 2011 has some older data on SLI/CrossFire, which is worth looking at.


iam2thecrowe i've always had a beef with gpu ram utilization and how it's measured and what driver tricks go on in the background. For example my old gtx660's never went above 1.5gb usage, searching forums suggests a driver trick as the last 512mb is half the speed due to its weird memory layout. Upon getting my 7970 with identical settings memory usage loading from the same save game shot up to near 2gb. I found the 7970 to be smoother in the games with high vram usage compared to the dual 660's despite frame rates being a little lower measured by fraps. I would love one day to see an article "the be all and end all of gpu memory" covering everything.

Another thing, i'd like to see a similar pcie bandwidth test across a variety of games and some including physx. I don't think unigine would throw much across the bus unless the card is running out of vram where it has to swap to system memory, where i think the higher bus speeds/memory speed would be an advantage.

blackmagnum Suggestion for Myths Part 3: Nvidia offers superior graphics drivers, while AMD (ATI) gives better image quality.

chimera201 About HDTV refresh rates:

http://www.rtings.com/info/fake-refresh-rates-samsung-clear-motion-rate-vs-sony-motionflow-vs-lg-trumotion

photonboy Implying that an i7-4770K is little better than an i7-950 is just dead wrong for quite a number of games.

There are plenty of real-world gaming benchmarks that prove this so I'm surprised you made such a glaring mistake. Using a synthetic benchmark is not a good idea either.

Frankly, I found the article very technically heavy where it wasn't necessary, like the PCIe section, and it glossed over other things very quickly. I know a lot about computers so maybe I'm not the guy to ask, but it felt to me like a non-PC guy wouldn't get the simplified and straightforward information he wanted.

eldragon0 If you're going to label your article "graphics performance myths", please don't limit your article to just gaming. It's a well made and researched article, but as Photonboy touched on, the 4770k vs 950 are about as similar as night and day. Try using that comparison for graphical development or design, and you'll get laughed off the site. I'd be willing to say its rendering capabilities are actual multiples faster at those clock speeds.

SteelCity1981 photonboy this article isn't for non pc people, because non pc people wouldn't care about detailed stuff like this.

renz496 14561510 said: Suggestion for Myths Part 3: Nvidia offers superior graphics drivers

even if Tom's Hardware really did their own test, it wouldn't really be useful either, because their test setup won't represent the millions of different pc configurations out there. you can see one set of drivers working just fine with one setup and totally broken in another setup, even with the same gpu being used. even if TH presents their findings, you will most likely see people challenge the result if it does not reflect their experience. in the end the thread just turns into a flame war mess.

14561510 said: Suggestion for Myths Part 3: while AMD (ATI) gives better image quality.

this has been discussed a lot on other tech forum sites. but the general consensus is that there is actually not much difference between the two. i've only heard that with AMD cards the in-game colors can be a bit more saturated than nvidia's, which some people take as 'better image quality'.

ubercake Just something of note... You don't necessarily need Ivy Bridge-E to get PCIe 3.0 bandwidth. Sandy Bridge-E people with certain motherboards can run PCIe 3.0 with Nvidia cards (just like you can with AMD cards). I've been running the Nvidia X79 patch and getting PCIe gen 3 on my P9X79 Pro with a 3930K and GTX 980.

ubercake Another article on Tom's Hardware by which the 'ASUS ROG Swift PG...' link listed for an unbelievable price takes you to the PB278Q page.

A little misleading.