The Myths Of Graphics Card Performance: Debunked, Part 2

DVI, DisplayPort, HDMI: Digital, But Not Quite The Same

Modern Video Cards Tend To Expose Three Different Connectors: DVI, DisplayPort And HDMI. What Are Their Differences? Which One Should You Use?

Myth: All digital display connector technologies are the same.

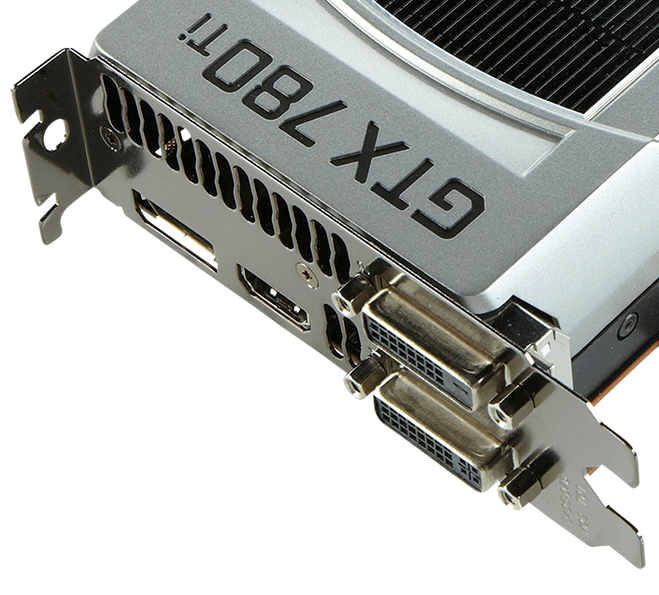

The GeForce GTX 780 Ti pictured above has four display outputs. On the left, there’s a DisplayPort connector. HDMI is in the center. The right-hand side plays host to dual-link DVI-I (below) and dual-link DVI-D (above). What are the differences between them?

The First Step Towards A (More) Digital World: DVI

DVI was introduced in 1999 to replace VGA (an analog interface), and it did so quite well. DVI is exposed through a variety of formats: DVI-A is analog-only, DVI-D is digital-only and the common DVI-I is an integrated analog plus digital interface. Trickier still, the DVI-D and DVI-I interfaces can either be single- or dual-link.

Most modern video cards expose dual-link interfaces, and the image above should help you determine whether yours does. More importantly, beware of single-link DVI cables! At a glance, they look identical to the dual-link variety, but their connectors lack the six central pins that carry the second link. Single-link DVI cables can prevent you from reaching higher resolutions with your card/display, leaving you wondering why.
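To make the single- versus dual-link limit concrete, here is a rough sketch in Python. The 165MHz single-link pixel clock ceiling is the commonly cited DVI figure and does not come from this article. Treating dual-link as twice that figure and using a fixed 12% blanking overhead are simplifying assumptions, and the function names are hypothetical.

```python
# Assumed limits (not from the article): single-link DVI is commonly cited
# as topping out at a 165 MHz pixel clock; dual-link is modeled here as 2x.
SINGLE_LINK_MAX_MHZ = 165.0
DUAL_LINK_MAX_MHZ = 2 * SINGLE_LINK_MAX_MHZ


def pixel_clock_mhz(width, height, refresh_hz, blanking_overhead=1.12):
    """Rough pixel clock estimate in MHz.

    blanking_overhead is an assumed factor that approximates the extra
    pixels spent on blanking with reduced-blanking timings.
    """
    return width * height * refresh_hz * blanking_overhead / 1e6


def dvi_link_needed(width, height, refresh_hz):
    """Classify a display mode by the DVI link type it would require."""
    clock = pixel_clock_mhz(width, height, refresh_hz)
    if clock <= SINGLE_LINK_MAX_MHZ:
        return "single-link"
    if clock <= DUAL_LINK_MAX_MHZ:
        return "dual-link"
    return "beyond dual-link"


for mode in [(1920, 1200, 60), (2560, 1600, 60)]:
    print(mode, dvi_link_needed(*mode))
```

Under these assumptions, 1920x1200 at 60Hz fits a single link, while 2560x1600 at 60Hz does not. That is the situation in which a single-link cable silently caps your resolution.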

DVI is still probably the most popular standard for PC connectivity. But it is considered deprecated and planned for obsolescence by 2015, so consider an alternative interface if you wish to future-proof your setup. Unlike more modern options, it cannot carry an audio signal (although a variant that implements USB, and thus audio, was created). Furthermore, it utilizes the largest physical connector.

Enter HDTVs And HDMI

HDMI introduced a lot of TV-friendly features. It can concurrently carry audio and video signals. It opens the door to several connector sizes, but does away with the confusing -I/-A/-D and single-/dual-link concepts, arguably making it more user-friendly.

The major downside of HDMI is that it is a proprietary, licensed standard, and thus not free. Each manufacturer that wishes to make or ship HDMI cables with its products needs to pay a fixed fee, plus a per-unit royalty. Using the HDMI logo reduces the toll, which explains why the logo is ubiquitous on product packaging.

DisplayPort: Freedom (From Royalties) And Advanced Features

When DVI started showing its age around 2005, the Video Electronics Standards Association (VESA) designed a new standard with enhanced capabilities to replace it, leading to the birth of DisplayPort in 2006. Like HDMI, DisplayPort can carry audio and video together. Furthermore, version 1.3, released just this year, offers the highest bandwidth currently available on any consumer display connector (32.4Gb/s, or 25.92Gb/s with overhead removed).
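The gap between the two DisplayPort 1.3 figures is consistent with 8b/10b line coding, where every 8 bits of payload travel as 10 bits on the wire. A minimal sketch of that arithmetic follows; the function name is hypothetical, and the 8b/10b assumption is ours rather than something the article states.

```python
def effective_gbps(raw_gbps, payload_bits=8, line_bits=10):
    """Usable data rate after line-coding overhead (8b/10b assumed by default)."""
    return raw_gbps * payload_bits / line_bits


dp13_raw = 32.4  # raw DisplayPort 1.3 bandwidth quoted in the article, in Gb/s
print(f"DisplayPort 1.3: {dp13_raw} Gb/s raw, "
      f"{effective_gbps(dp13_raw):.2f} Gb/s usable")
```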

We’ll also mention that Intel's Thunderbolt technology combines PCIe, DisplayPort and a DC power connection in one cable. For the purposes of this article, though, it essentially behaves as a DisplayPort 1.1 cable, so we won’t cover it. Thunderbolt 2, as found on Apple's late 2013 Retina MacBook Pro, incorporates DisplayPort 1.2a.

Comparing Three Digital Interfaces

The Good Things To Come: HDMI 2.0 And DisplayPort 1.3

In December of 2010, Intel, AMD and several other companies discussed withdrawing support for DVI-I, VGA and LVDS technologies between 2013 and 2015, instead emphasizing DisplayPort and HDMI. They stated: "Legacy interfaces such as VGA, DVI and LVDS have not kept pace, and newer standards such as DisplayPort and HDMI clearly provide the best connectivity options moving forward. In our opinion, DisplayPort 1.2 is the future interface for PC monitors, along with HDMI 1.4a for TV connectivity."

HDMI 2.0 was officially released in September of 2013, although hardware supporting it is still rare. The interface supports 4K at 60Hz natively, along with a host of new features aimed mostly at the TV market.

As mentioned, DP 1.3 was just released (so don't expect to see compatible devices before the end of this year). The standard pushes available bandwidth up to 32.4Gb/s compared to HDMI 2.0’s 18Gb/s. More interesting for gamers, AMD’s Project FreeSync was recently incorporated into the DisplayPort 1.2a standard, creating an industry standard called Adaptive-Sync for enabling dynamic refresh rates. It remains to be seen whether this is able to render Nvidia’s G-Sync technology obsolete.

A Man Can Dream: 4K At 120 Hz?

Myth: Gaming at 4K at 120Hz is around the corner.

In order to game at 4K and 120Hz, you'd need two HDMI 2.0 or DP 1.2a connections, along with graphics cards supporting those outputs. Only Nvidia’s GeForce GTX 980 and 970 qualify currently. The sheer complexity of such a setup, the complete lack of 120Hz-capable 4K panels and the amount of graphics processing power you’d need to pump out a smooth 60FPS make this a prohibitive prospect for now. The tradeoff between 120Hz gaming at 1440p and 60Hz gaming at 2160p will persist for at least another couple of years.
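A back-of-the-envelope sketch shows why a single cable falls short. It counts only active pixels at 24 bits each, ignores blanking intervals, and assumes 8b/10b coding on both interfaces. The 18Gb/s HDMI 2.0 figure comes from this article; the 21.6Gb/s raw rate for DisplayPort 1.2a is a commonly quoted number that the article does not state. All function names are hypothetical.

```python
import math


def payload_gbps(width, height, refresh_hz, bits_per_pixel=24):
    """Active-pixel data rate in Gb/s; blanking intervals are ignored."""
    return width * height * refresh_hz * bits_per_pixel / 1e9


def links_needed(width, height, refresh_hz, raw_link_gbps):
    """Number of links required, assuming 8b/10b coding on each link."""
    usable = raw_link_gbps * 8 / 10
    return math.ceil(payload_gbps(width, height, refresh_hz) / usable)


# Raw link rates in Gb/s. The DisplayPort value is an assumption (see above).
LINKS = {"HDMI 2.0": 18.0, "DisplayPort 1.2a": 21.6}

for name, raw in LINKS.items():
    print(name,
          "| 4K60 links:", links_needed(3840, 2160, 60, raw),
          "| 4K120 links:", links_needed(3840, 2160, 120, raw))
```

Under these assumptions, either interface carries 4K at 60Hz over one link, but 4K at 120Hz needs roughly 23.9Gb/s of pixel data, more than one link of either type can deliver.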

Conclusions On Display Connectors

Because of its royalty-free nature, advanced features, extended compatibility and wide industry support, DisplayPort is bound to replace DVI as the connector technology of choice for PC displays. HDMI remains a valid alternative, though it’s particularly suited for use with televisions.

As for today's reality, whether you use DVI, DP or HDMI really doesn't matter unless you fall into one of these five circumstances:

- You want to play at resolutions above 2560x1600. Then, you need a DisplayPort 1.2a setup.

- You want to use Nvidia G-Sync. Then, you need a DisplayPort 1.2a setup (the only supported technology).

- You want to connect multiple devices to a single output (via a hub). Then, you need a DisplayPort 1.2a setup.

- You want to send audio to a monitor or TV through a single cable. Then, you need either HDMI or DisplayPort.

- You need compatibility with legacy VGA devices. Then, you need DVI-I (or you will have to rely on an active adapter).
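The five circumstances above can be read as a set of filters over the available connectors. The sketch below encodes them as such. The function and parameter names are hypothetical, and comparing total pixel counts to decide what counts as "above 2560x1600" is our own simplification.

```python
def acceptable_connectors(width, height, gsync=False, hub=False,
                          audio=False, legacy_vga=False):
    """Return the connectors that satisfy every stated requirement.

    Encodes only the five rules listed in the article; an empty set means
    the requirements conflict (for example, G-Sync plus legacy VGA).
    """
    options = {"DVI-I", "DVI-D", "HDMI", "DisplayPort 1.2a"}
    # "Above 2560x1600" is approximated by comparing total pixel counts.
    if width * height > 2560 * 1600:
        options &= {"DisplayPort 1.2a"}
    if gsync or hub:
        options &= {"DisplayPort 1.2a"}
    if audio:
        options &= {"HDMI", "DisplayPort 1.2a"}
    if legacy_vga:
        options &= {"DVI-I"}
    return options


print(sorted(acceptable_connectors(1920, 1080)))
print(sorted(acceptable_connectors(3840, 2160, audio=True)))
```

If none of the five conditions apply, every connector remains in the result, which matches the point that the choice then doesn't really matter.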

iam2thecrowe: i've always had a beef with gpu ram utillization and how its measured and what driver tricks go on in the background. For example my old gtx660's never went above 1.5gb usage, searching forums suggests a driver trick as the last 512mb is half the speed due to it's weird memory layout. Upon getting my 7970 with identical settings memory usage loading from the same save game shot up to near 2gb. I found the 7970 to be smoother in the games with high vram usage compared to the dual 660's despite frame rates being a little lower measured by fraps. I would love one day to see an article "the be all and end all of gpu memory" covering everything.

Another thing, i'd like to see a similar pcie bandwidth test across a variety of games and some including physx. I dont think unigine would throw much across the bus unless the card is running out of vram where it has to swap to system memory, where i think the higher bus speeds/memory speed would be an advantage.

blackmagnum: Suggestion for Myths Part 3: Nvidia offers superior graphics drivers, while AMD (ATI) gives better image quality.

chimera201: About HDTV refresh rates:

http://www.rtings.com/info/fake-refresh-rates-samsung-clear-motion-rate-vs-sony-motionflow-vs-lg-trumotion

photonboy: Implying that an i7-4770K is little better than an i7-950 is just dead wrong for quite a number of games.

There are plenty of real-world gaming benchmarks that prove this so I'm surprised you made such a glaring mistake. Using a synthetic benchmark is not a good idea either.

Frankly, I found the article was very technically heavy were not necessary like the PCIe section and glossed over other things very quickly. I know a lot about computers so maybe I'm not the guy to ask but it felt to me like a non-PC guy wouldn't get the simplified and straightforward information he wanted.

eldragon0: If you're going to label your article "graphics performance myths" Please don't limit your article to just gaming, It's a well made and researched article, but as Photonboy touched, the 4770k vs 950 are about as similar as night and day. Try using that comparison for graphical development or design, and you'll get laughed off the site. I'd be willing to say it's rendering capabilities are actual multiples faster at those clock speeds.

SteelCity1981: photonboy this article isn't for non pc people, because non pc people wouldn't care about detailed stuff like this.

renz496: 14561510 said: Suggestion for Myths Part 3: Nvidia offers superior graphics drivers

even if toms's hardware really did their own test it doesn't really useful either because their test setup won't represent million of different pc configuration out there. you can see one set of driver working just fine with one setup and totally broken in another setup even with the same gpu being use. even if TH represent their finding you will most likely to see people to challenge the result if it did not reflect his experience. in the end the thread just turn into flame war mess.

14561510 said: Suggestion for Myths Part 3: while AMD (ATI) gives better image quality.

this has been discussed a lot in other tech forum site. but the general consensus is there is not much difference between the two actually. i only heard about AMD cards the in game colors can be a bit more saturated than nvidia which some people take that as 'better image quality'.

ubercake: Just something of note... You don't necessarily need Ivy Bridge-E to get PCIe 3.0 bandwidth. Sandy Bridge-E people with certain motherboards can run PCIe 3.0 with Nvidia cards (just like you can with AMD cards). I've been running the Nvidia X79 patch and getting PCIe gen 3 on my P9X79 Pro with a 3930K and GTX 980.

ubercake: Another article on Tom's Hardware by which the 'ASUS ROG Swift PG...' link listed for an unbelievable price takes you to the PB278Q page.

A little misleading.