Intel DC P3520 Enterprise SSD Review


128KB Sequential Read And Write

To read more on our test methodology, visit How We Test Enterprise SSDs, which explains how to interpret our charts. Accurate and repeatable testing starts with a solid preconditioning methodology, which is covered on page three. We cover 128KB sequential performance measurements on page five, explain latency metrics on page seven, and explain QoS testing and the QoS domino effect on page nine.
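For readers who want to experiment with preconditioning themselves, the Python sketch below shows one common way to decide when a drive has reached steady state. It is modeled loosely on the SNIA Performance Test Specification (PTS) criteria: a five-round measurement window, a data excursion within 20% of the window average, and a best-fit slope excursion within 10% of that average. It is not necessarily the exact procedure described in our methodology article, and the function name and default thresholds are illustrative assumptions.

```python
from statistics import mean

def is_steady_state(rounds, window=5, max_excursion=0.20, max_slope_excursion=0.10):
    """Return True if the last `window` per-round results look steady.

    Hypothetical helper, loosely modeled on SNIA PTS-style checks:
    - data excursion: max - min inside the window <= 20% of the window average
    - slope excursion: rise of the least-squares fit line across the window
      <= 10% of the window average
    """
    if len(rounds) < window:
        return False  # not enough rounds to judge yet
    y = rounds[-window:]
    avg = mean(y)
    if max(y) - min(y) > max_excursion * avg:
        return False
    # Least-squares slope of y against x = 0 .. window-1
    xs = range(window)
    x_avg = mean(xs)
    slope = (sum((x - x_avg) * (v - avg) for x, v in zip(xs, y))
             / sum((x - x_avg) ** 2 for x in xs))
    # How far the fitted line moves from the first to the last round in the window
    slope_excursion = abs(slope) * (window - 1)
    return slope_excursion <= max_slope_excursion * avg
```

Feeding it made-up per-round throughput values such as `is_steady_state([100, 80, 62, 51, 50, 49, 50, 50])` returns `True`, because the last five rounds sit in a narrow, flat band, while a series that is still trending, like `[100, 104, 108, 112, 116]`, fails the slope check even though its spread is small.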

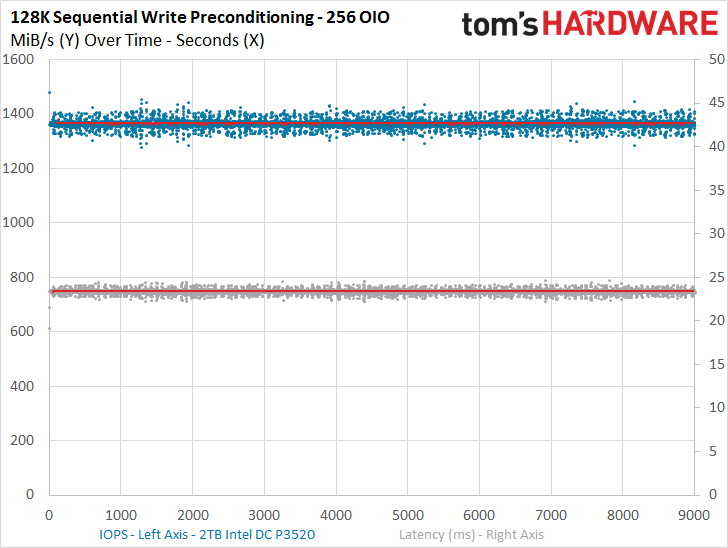

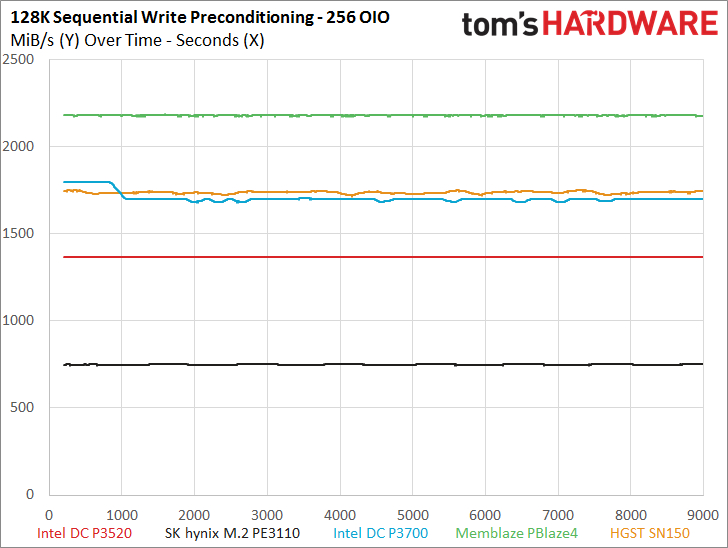

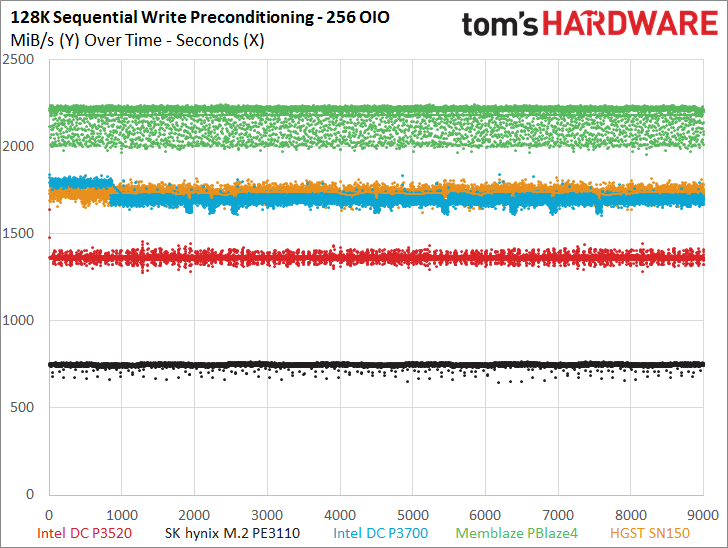

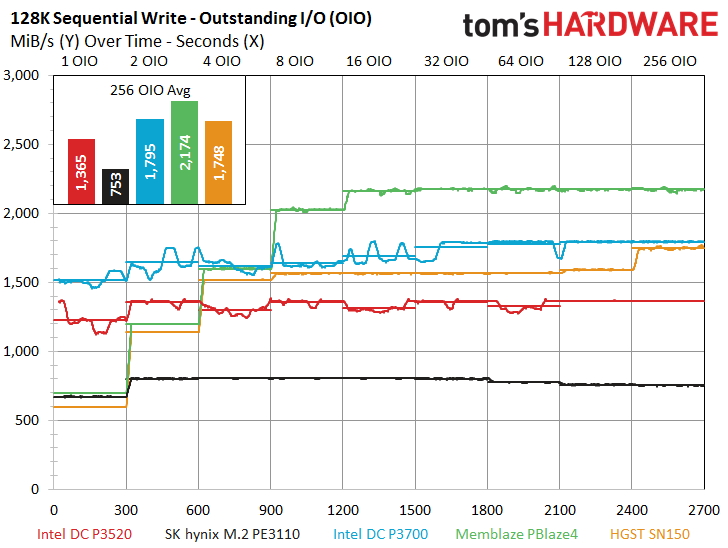

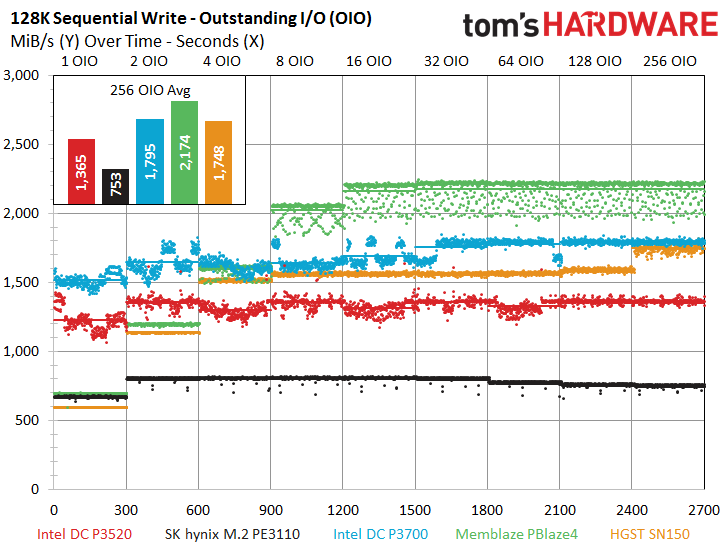

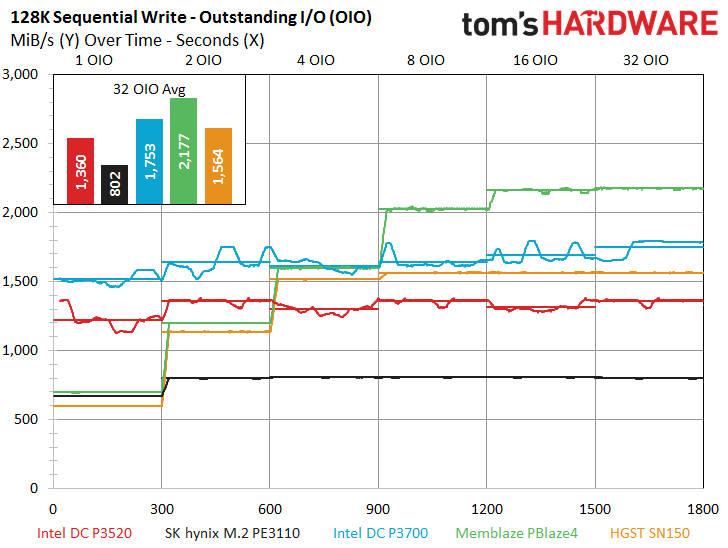

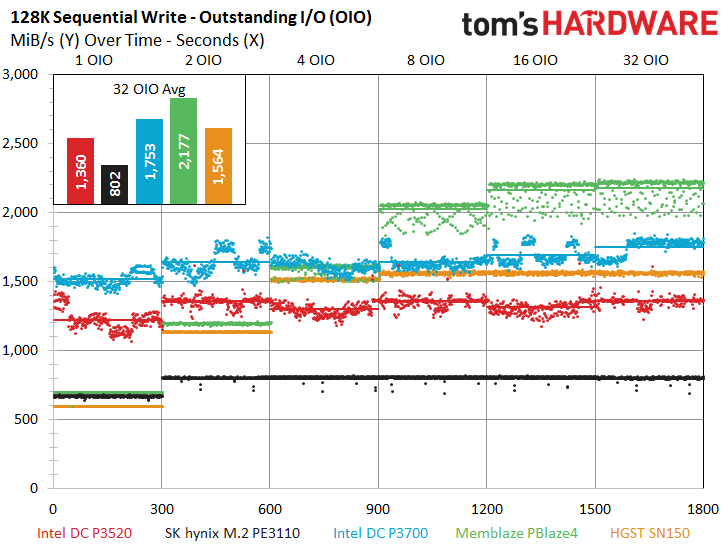

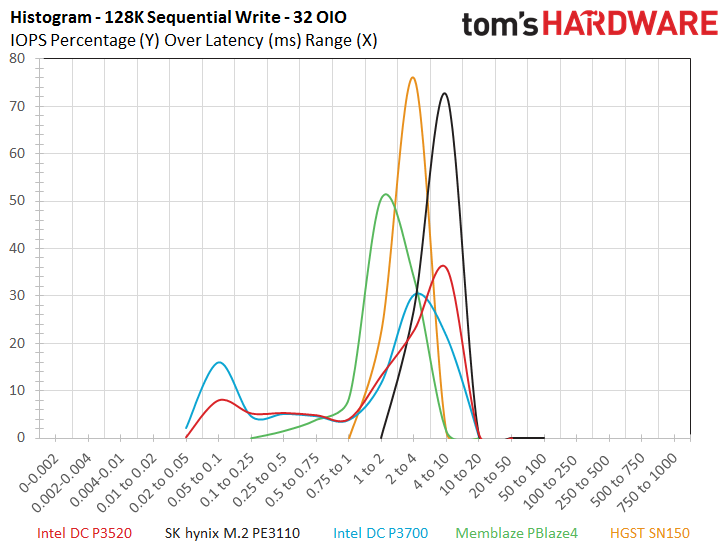

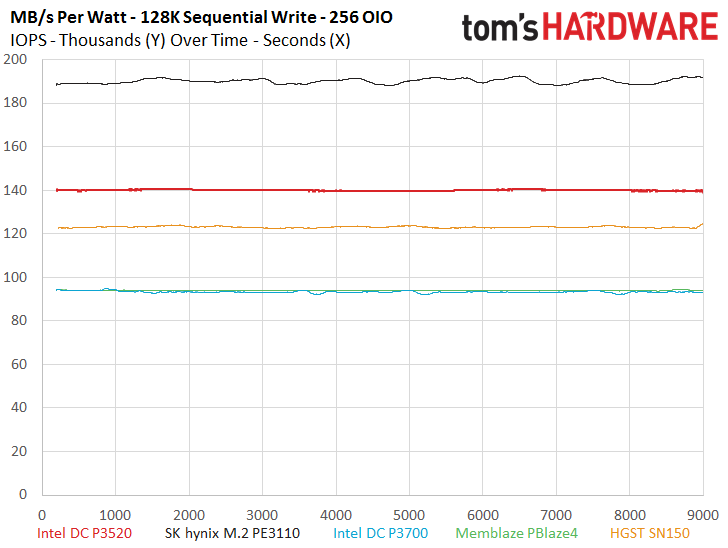

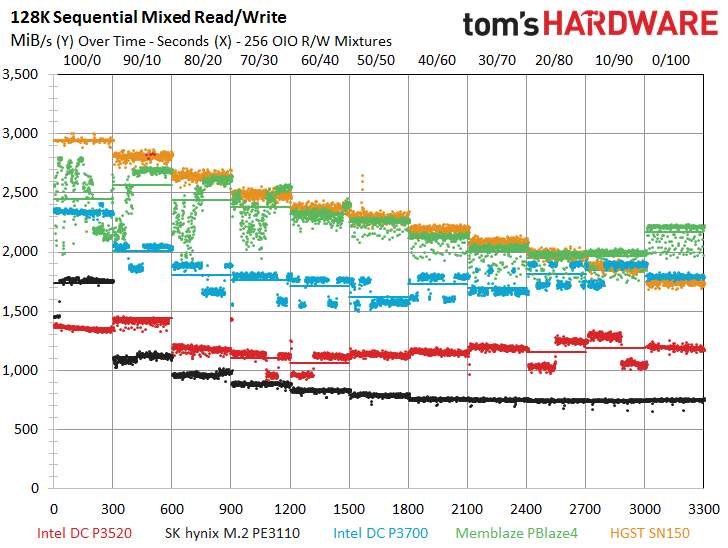

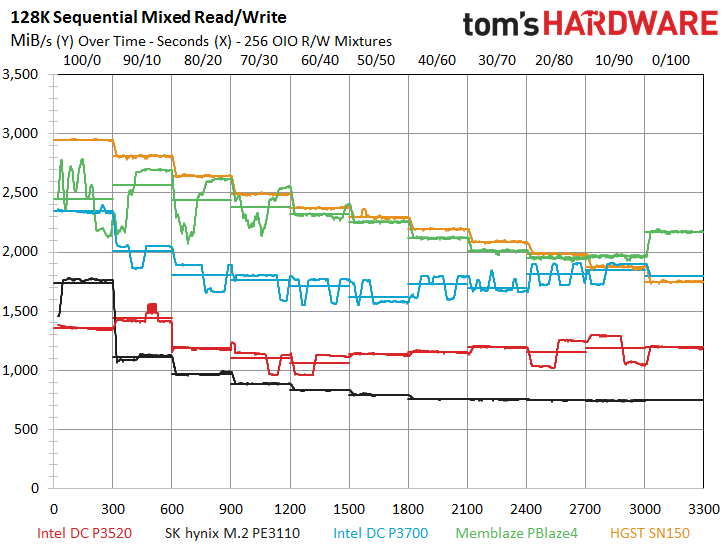

The DC P3520 provides impressive sequential write performance of nearly 1,400 MB/s during the preconditioning period, which easily outstrips the PE3110 but trails the rest of the test pool. The DC P3520 exhibits a tight I/O distribution profile during the preconditioning phase, and that carries over to the bulk of the test.
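A "tight" I/O distribution simply means the per-interval throughput samples cluster closely around their average. One illustrative way to put a number on that (our own choice of metric for this sketch, not necessarily how the charts above are generated) is the coefficient of variation: the standard deviation divided by the mean, where lower values indicate a tighter spread. The sample values below are hypothetical.

```python
from statistics import mean, pstdev

def coefficient_of_variation(samples):
    """Population standard deviation divided by the mean; 0.0 means perfectly flat."""
    avg = mean(samples)
    if avg == 0:
        raise ValueError("mean throughput must be non-zero")
    return pstdev(samples) / avg

# Hypothetical per-second MB/s samples with the same average, for illustration only.
steady = [1390, 1400, 1410, 1400, 1395, 1405]
erratic = [900, 1900, 1100, 1700, 1000, 1800]

print(f"steady:  {coefficient_of_variation(steady):.3f}")
print(f"erratic: {coefficient_of_variation(erratic):.3f}")
```

Both traces average 1,400 MB/s, so the average alone would hide the difference; the coefficient of variation exposes it.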

The DC P3520 also provides amazing sequential write performance at QD1, averaging roughly 1,200 MB/s, which easily outstrips both the PBlaze4 and the SN150. PMC-Sierra-powered SSDs tend to suffer from lower sequential performance under light load.
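Queue depth, throughput, and latency are tied together by Little's law (outstanding I/Os = IOPS × average latency), so an average throughput figure also implies an average per-request latency. The sketch below applies that relationship to the QD1 result above. It assumes decimal megabytes (10^6 bytes), 128KB = 131,072 bytes, and a steady-state queue; the helper name is hypothetical, and the output is a back-of-the-envelope average rather than a measured latency.

```python
def implied_latency_us(throughput_mb_s, outstanding_ios, block_bytes=128 * 1024):
    """Average latency (microseconds) implied by Little's law, W = L / lambda.

    Assumptions: throughput is in decimal MB (10**6 bytes), every I/O is
    `block_bytes` long, and the queue is in steady state.
    """
    iops = throughput_mb_s * 1_000_000 / block_bytes  # lambda: completed I/Os per second
    return outstanding_ios / iops * 1_000_000          # W: seconds converted to microseconds


# ~1,200 MB/s of 128KB writes at QD1 works out to roughly 109 microseconds per write.
print(f"{implied_latency_us(1200, 1):.1f} us")
```

The same relationship explains why throughput has to climb as OIO rises just to hold latency constant: doubling the outstanding requests at unchanged throughput doubles the average wait.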

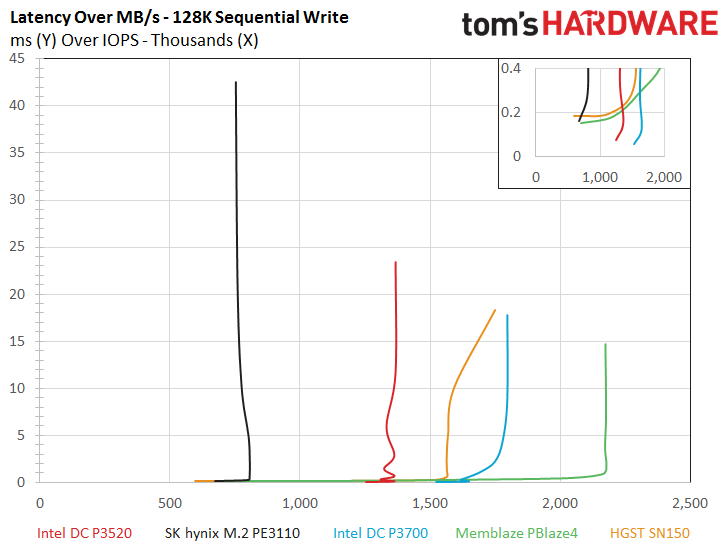

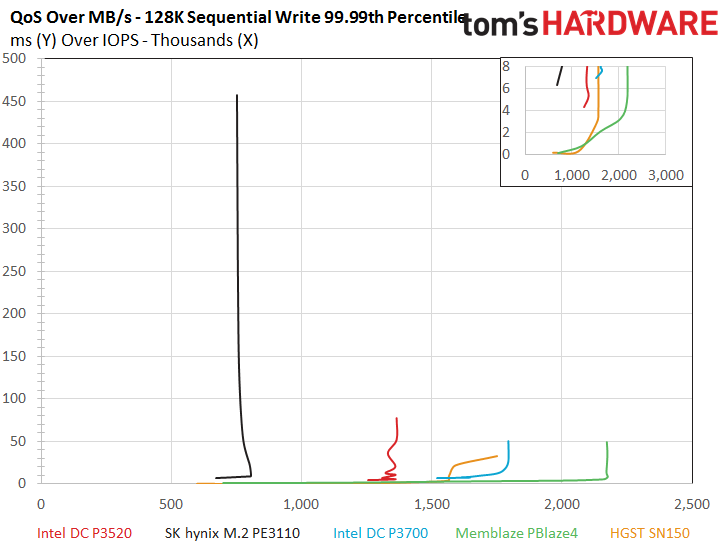

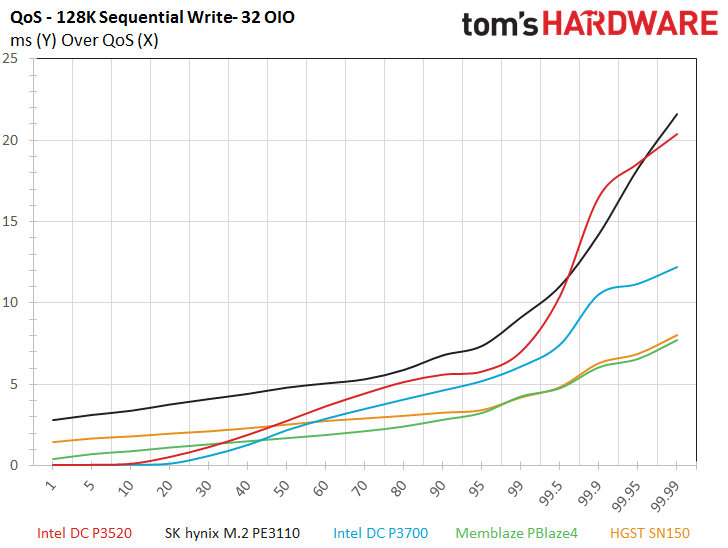

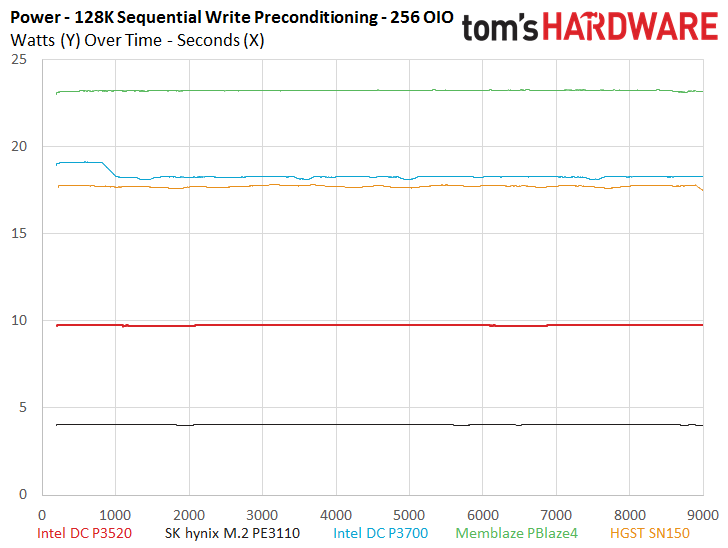

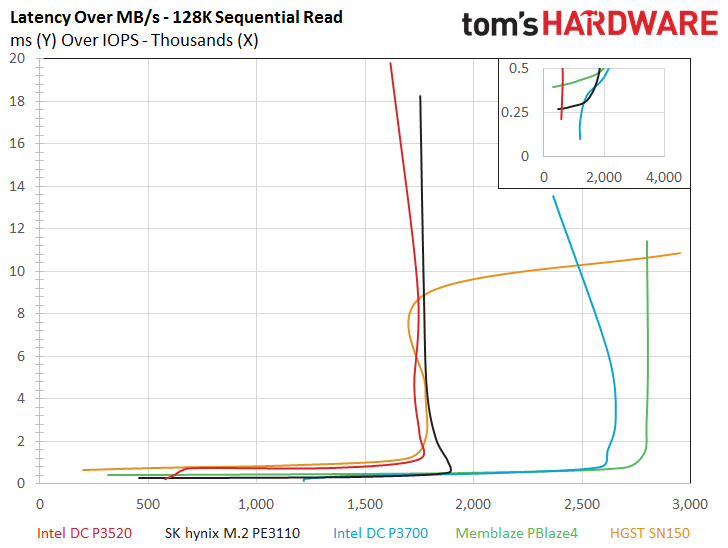

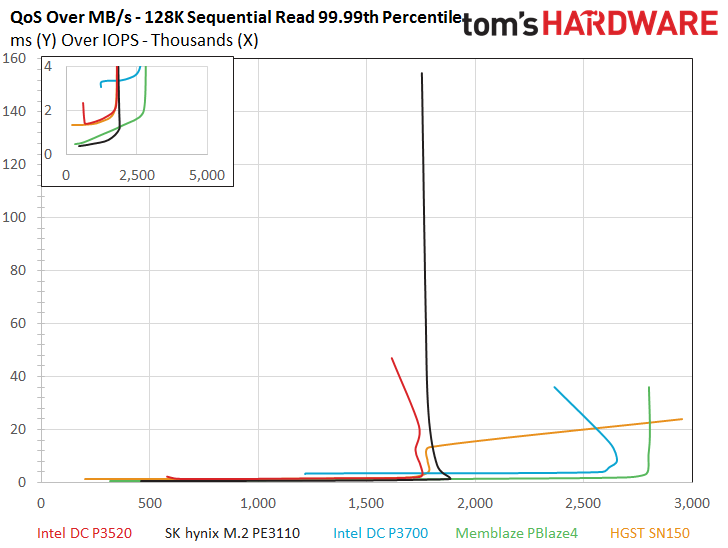

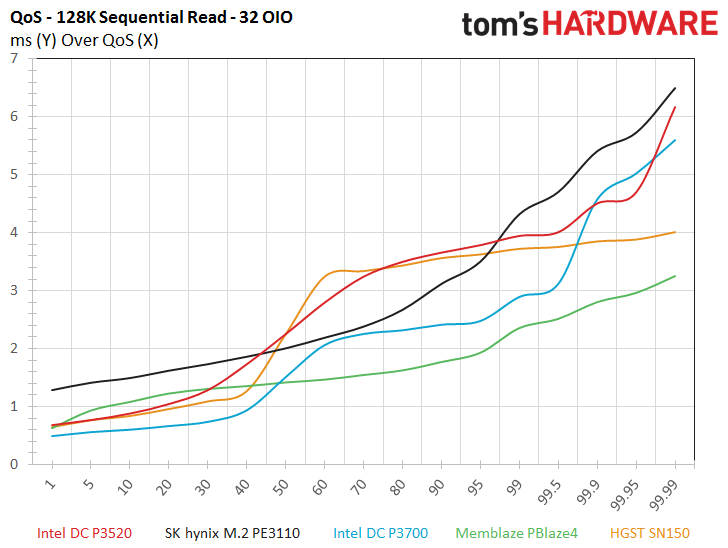

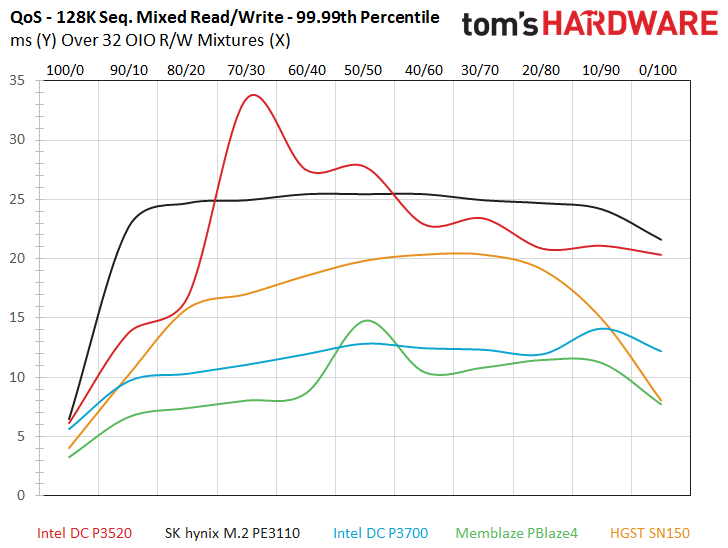

The DC P3520 doesn’t stop at impressive throughput; it also delivers great QoS and latency metrics during the workload, and it beats the majority of the test pool in both power consumption and efficiency. The PE3110 takes the top spot on the efficiency chart, but remember that it provides only 800 MB/s of throughput during the tests.
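Efficiency boils down to work delivered per watt, which is why a slower drive can still top the chart if it draws proportionally less power. The sketch below ranks drives by MB/s per watt; the drive names and wattages in the example are invented purely for illustration and do not correspond to any measured result in this review.

```python
def rank_by_efficiency(results):
    """Rank drives by MB/s per watt, most efficient first.

    `results` maps a drive name to (average throughput in MB/s, average power in W).
    """
    return sorted(
        results,
        key=lambda name: results[name][0] / results[name][1],
        reverse=True,
    )


# Hypothetical numbers: the slower "Drive A" wins on efficiency (100 vs. 87.5 MB/s per watt).
example = {"Drive A": (800, 8.0), "Drive B": (1400, 16.0)}
print(rank_by_efficiency(example))
```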

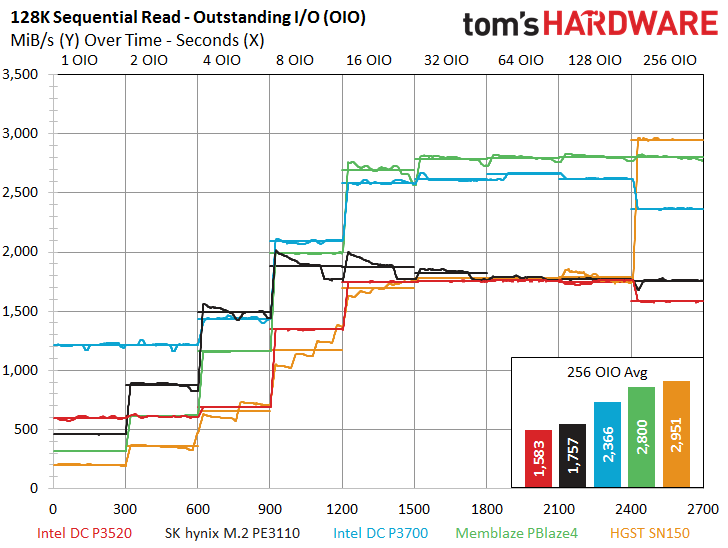

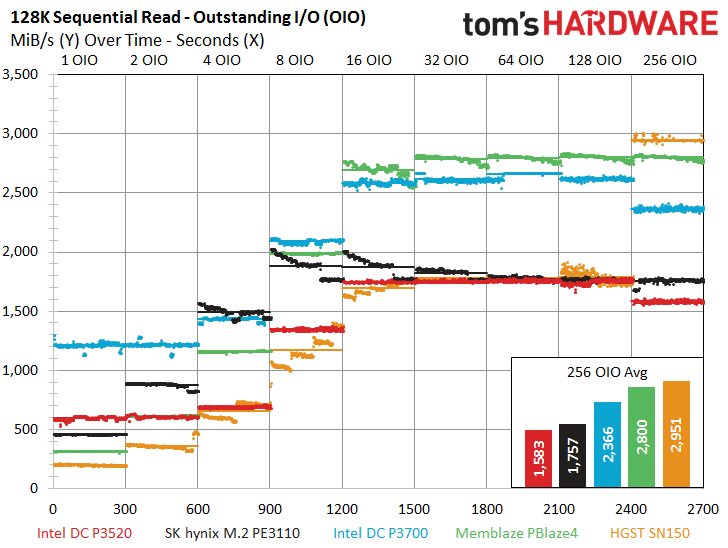

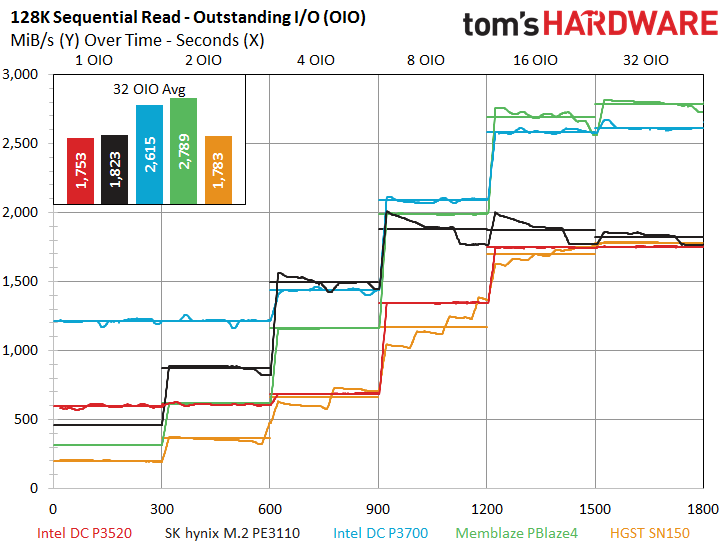

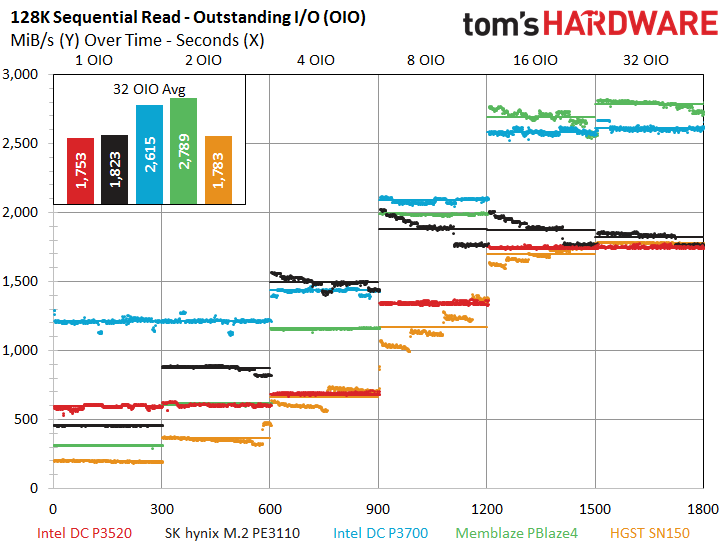

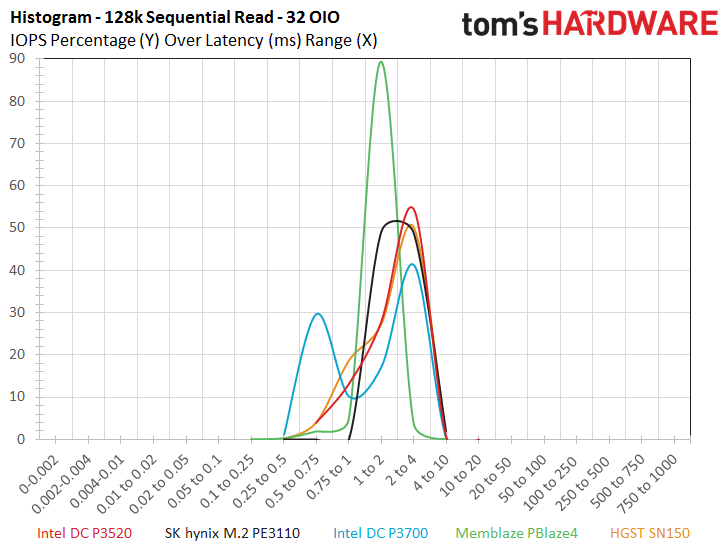

The read portions of the sequential tests are also somewhat encouraging for the DC P3520: it outperforms all of the flagship products at the all-important 1 OIO, with the exception of its DC P3700 cousin, which more than doubles the performance of the nearest non-Intel competitor. The DC P3520 reaches a top speed of 1,700 MB/s at 128 OIO but takes a small step back to 1,583 MB/s at 256 OIO. The P3700 shares this reduced performance at 256 OIO, while the SN150 is known for its wild swing at that level: it provides 1,700 MB/s at 128 OIO but jumps to an amazing 3,000 MB/s at 256 OIO. In either case, most users will never reach such an extreme number of outstanding requests, so the low- and mid-range results are the metrics that matter.
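To put those swings in perspective, a simple percentage-change calculation on the figures quoted above shows how differently the two drives behave between 128 and 256 OIO. The helper is a hypothetical convenience function; the inputs are the throughput numbers from the preceding paragraph.

```python
def pct_change(before, after):
    """Percentage change from `before` to `after` (negative means a drop)."""
    return (after - before) / before * 100


# Sequential read throughput in MB/s, 128 OIO -> 256 OIO, as quoted in the text.
dc_p3520 = pct_change(1700, 1583)  # roughly a 6.9% step back
sn150 = pct_change(1700, 3000)     # roughly a 76.5% jump

print(f"DC P3520: {dc_p3520:+.1f}%")
print(f"SN150:    {sn150:+.1f}%")
```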

The PE3110 also offers great performance during the sequential read workload, particularly as demand intensity increases. The PE3110 pulls ahead of the DC P3520 at 2 OIO and maintains an impressive lead until 128 OIO.

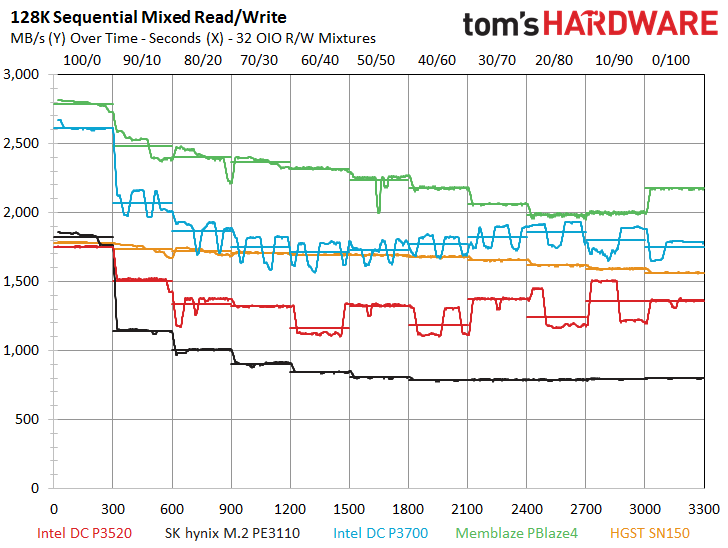

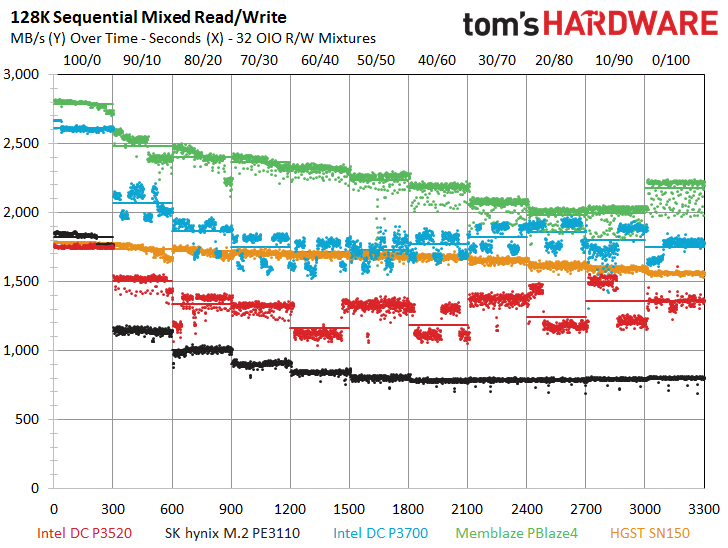

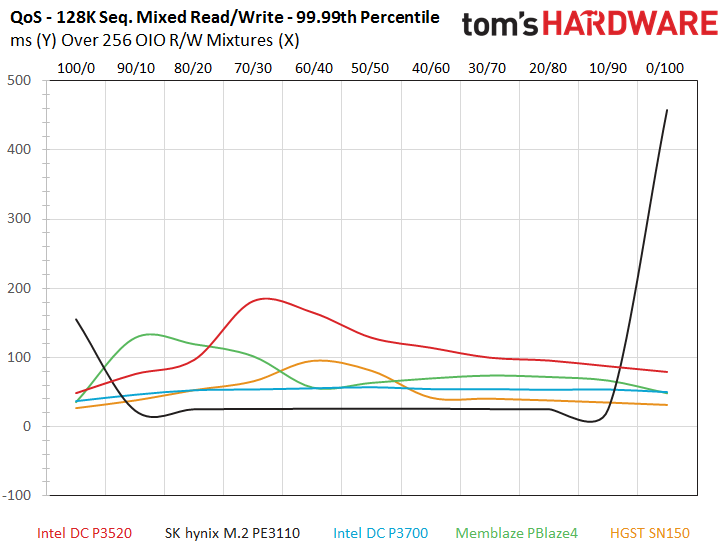

The Memblaze PBlaze4 is the undisputed champion of mixed sequential workloads, and that fact shines through in this test. The DC P3520 also musters a fairly impressive performance profile during the 32 and 256 OIO mixtures, but we observe a bit of the tell-tale Intel performance seesaw emerge, which pushes its 32 OIO QoS measurements up during the test.
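QoS figures are typically reported as high-percentile latencies (the 99.99th percentile is a common choice; the exact percentiles we use are covered in the methodology article), which is why an oscillating "seesaw" pattern hurts them even when the average barely moves. The sketch below applies a nearest-rank percentile to two synthetic latency traces: one flat, and one where every 100th request stalls. Both traces and the helper are illustrative assumptions, not data from these tests.

```python
import math
from statistics import mean

def percentile_nearest_rank(samples, pct):
    """Smallest sample such that at least `pct` percent of samples are <= it."""
    ordered = sorted(samples)
    rank = min(len(ordered), max(1, math.ceil(pct / 100 * len(ordered))))
    return ordered[rank - 1]


# Synthetic latency traces in microseconds, 10,000 requests each (illustrative only).
flat = [1000] * 10_000
seesaw = [5000 if i % 100 == 0 else 960 for i in range(10_000)]  # every 100th request stalls

for name, trace in (("flat", flat), ("seesaw", seesaw)):
    print(f"{name:>6}: mean {mean(trace):.1f} us, "
          f"99.99th percentile {percentile_nearest_rank(trace, 99.99)} us")
```

The two traces average within half a microsecond of each other, yet the seesaw trace's 99.99th-percentile latency is five times higher, which is the same effect that inflates a drive's QoS measurements.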


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


Game256 Paul, Chris, any updates regarding possible release dates of Samsung 960 EVO/PRO? Have you received the samples already?

DocBones Glad to see more U.2 formats, really don't like M.2 for desktops. That 2mm screw is a pain.

Tom Griffin Am I an idiot, or should this be on Tom's IT Pro? Aside from that, the M.2 for an enterprise drive simply does not jive on current motherboards; look at PCIe lane allocation. At 4x lanes per drive, even the high-end CPUs with 40 lanes will choke with more than 4.

bit_user

18682745 said: "this be on Tom's IT Pro?"

You might be right, but I'm glad it's not. It represents superb read-oriented SSD performance, especially for the price.

18682745 said: "Aside from that the M.2 for an enterprise drive"

It's not M.2. They have PCIe add-in cards and U.2 form factors. M.2 wouldn't fly, due to the power dissipation, if not also the board area needed.

18682745 said: "simply does not jive on current motherboards look at PCIe lane allocation. 4x lanes one drive even the high end CPUs with 40 lanes will choke with more than 4."

How many of these are you planning to use? This is for read-intensive workloads, so you'd hopefully just need one, which could be paired with cheaper storage for everything else. I guess at the high end, you might pack a machine full of them, but then you might have more than one CPU (which adds yet more PCIe lanes).

BTW, did you know that M.2 is a popular form factor for high-end desktop SSDs? It supports up to 4 lanes. So, it would seem that some people think such performance is worth the resource footprint.

bit_user The P3520 actually takes a step back on the performance front in comparison to the previous-generation DC P3500, which featured up to 430,000/28,000 read/write IOPS.

Given the deals out there to be had, the real star of the show is the DC P3500. If you can live with its lower endurance compared to the P3520, it offers a compelling alternative to the 750-series. Here's how they compare:

http://ark.intel.com/compare/86740,82846

Update: snagged a 400 GB DC S3500 at $225. Price is now back up to $275. Worth keeping an eye on, if you're interested. Supposedly, a full-height bracket is included in the box. I'll update again, to confirm.

bit_user I'm just interested in the read performance, but I noticed two pairs of images that are nearly identical. I loaded them in different tabs and flipped back and forth. The only difference seems to be whether the lines from different queue depths are connected.

http://media.bestofmicro.com/ext/aHR0cDovL21lZGlhLmJlc3RvZm1pY3JvLmNvbS9leHQvYUhSMGNEb3ZMMjFsWkdsaExtSmxjM1J2Wm0xcFkzSnZMbU52YlM5R0x6WXZOakV3T1RZeUwyOXlhV2RwYm1Gc0x6QXpMbkJ1Wnc9PS9yXzYwMHg0NTAucG5n/rc_400x300.png

http://media.bestofmicro.com/ext/aHR0cDovL21lZGlhLmJlc3RvZm1pY3JvLmNvbS9leHQvYUhSMGNEb3ZMMjFsWkdsaExtSmxjM1J2Wm0xcFkzSnZMbU52YlM5R0x6a3ZOakV3T1RZMUwyOXlhV2RwYm1Gc0x6QTBMbkJ1Wnc9PS9yXzYwMHg0NTAucG5n/rc_400x300.png

And:

http://media.bestofmicro.com/ext/aHR0cDovL21lZGlhLmJlc3RvZm1pY3JvLmNvbS9leHQvYUhSMGNEb3ZMMjFsWkdsaExtSmxjM1J2Wm0xcFkzSnZMbU52YlM5R0x6Y3ZOakV3T1RZekwyOXlhV2RwYm1Gc0x6QXhMbkJ1Wnc9PS9yXzYwMHg0NTAucG5n/rc_400x300.png

http://media.bestofmicro.com/ext/aHR0cDovL21lZGlhLmJlc3RvZm1pY3JvLmNvbS9leHQvYUhSMGNEb3ZMMjFsWkdsaExtSmxjM1J2Wm0xcFkzSnZMbU52YlM5R0x6Z3ZOakV3T1RZMEwyOXlhV2RwYm1Gc0x6QXlMbkJ1Wnc9PS9yXzYwMHg0NTAucG5n/rc_400x300.png

Not really a complaint - just an observation. As long as the data is accurate, no harm done.

Then, at the end of the read graphs, it seems like some AMD slides crept in? Oops?

BTW, the Latency vs. IOPS is now officially my second favorite SSD performance graph (after IOPS vs. queue depth, of course).