Nvidia's SLI Technology In 2015: What You Need To Know

Introduction

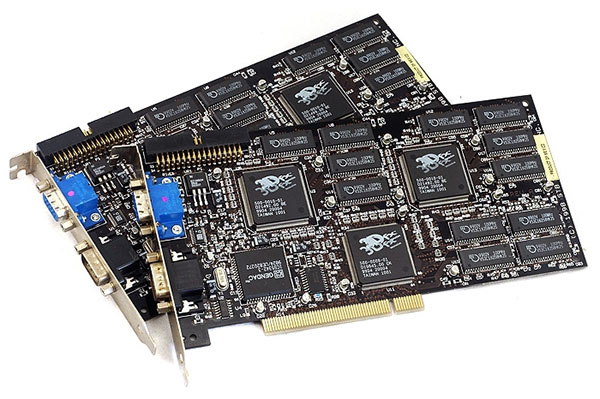

I've been excited by SLI ever since it was introduced as Scan Line Interleave by 3Dfx. Two Voodoo2 cards could operate together, with the noticeable benefit of upping your maximum 3D resolution from 800x600 to 1024x768. Amazing stuff...back in 1998.

Fast forward almost twenty years. 3Dfx went out of business long ago (Nvidia bought its assets in a deal announced in late 2000), and Nvidia re-introduced and re-branded SLI in 2004 (it now stands for Scalable Link Interface). But the overall perception of SLI has changed little: it remains a status symbol in hardcore gaming machines, offering massive rendering power while being affected by numerous technical issues.

Today we're looking at the green team specifically, and we plan to follow up with a second part on AMD's CrossFire. In that next piece, you'll see us compare both manufacturers' dual-GPU offerings.

Note

Some ideas in this article come directly from users we surveyed on Reddit. Thank you to all those who contributed!

In this article, we'll explore some of the technology's basics as it operates today, take an in-depth look at scaling with two cards compared to one, discuss driver and game-related issues, explore overclocking potential and finally provide some recommendations on how to decide whether SLI is right for you.

While SLI technically supports up to four GPUs in certain configurations, it is generally accepted that three- and four-way SLI don't scale as well as a two-way array. While you are likely to see PCs with three or four GPUs at the top of synthetic benchmark charts, they're a lot less common in the real world, and not just because of their cost.

Furthermore, Nvidia representatives confirm that three-way SLI is not supported in 8x/4x/4x PCIe lane configurations, which are native to Intel's LGA 1150 platform. You'll either need an LGA 1150-based board equipped with an (expensive) PLX bridge chip or an even more expensive LGA 2011-v3 platform if you want to go beyond two-way SLI. Fortunately, most Haswell/Ivy Bridge/Sandy Bridge platforms enable two-way SLI without issue.
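To make the lane arithmetic concrete, here is a minimal Python sketch. It assumes, purely for illustration, that every card in an SLI array needs at least eight PCIe lanes. That threshold is consistent with the x8/x4/x4 restriction described above, but it is an assumption here, not a quoted Nvidia specification. The helper name `supports_sli` and the example lane splits are hypothetical.

```python
# Assumption for illustration: each card in an SLI array needs at least
# this many PCIe lanes. Consistent with x8/x4/x4 being unsupported, but
# not taken from an official specification.
MIN_LANES_PER_CARD = 8


def supports_sli(lane_split):
    """Return True if the split has 2+ cards and each gets enough lanes."""
    return len(lane_split) >= 2 and all(
        lanes >= MIN_LANES_PER_CARD for lanes in lane_split
    )


# Hypothetical example splits (lanes per slot).
configs = {
    "two cards, x8/x8": (8, 8),
    "three cards, x8/x4/x4": (8, 4, 4),
    "three cards, x8/x8/x8": (8, 8, 8),
}

for name, split in configs.items():
    verdict = "ok" if supports_sli(split) else "not supported"
    print(f"{name}: {verdict}")
```

Under this assumed rule, a CPU that exposes only 16 graphics lanes can feed two cards at x8/x8 but cannot give a third card its own eight lanes, which is why a bridge chip or a platform with more lanes becomes necessary.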

Finally, another downside of going beyond two-way SLI is that, because of the way SLI works, input lag increases as the number of cards working together goes up.


PaulBags Nice article. Looking forward to comparing to a dx12+sli article when it happens, see how much it changes the sli game since cpus will be less likely to bottleneck.

Do you think we'd see 1080p monitors with 200hz+ in the future? Would it even make a difference to the human eye?

none12345 They really need to redesign the way multigpu works. Something is really wrong when 2+ gpus don't work half the time, or have higher latency than 1 gpu. The fact that this has persisted for like 15 years now is an utter shame. SLI profiles and all the bugs and bs that come with SLI need to be fixed. A game shouldn't even be able to tell how many gpus there are, and it certainly shouldn't be buggy on 2+ gpus but not on 1.

I also believe that alternating frames is utter crap. The fact that this has become the go-to standard is a travesty. I don't care for fake fps at the expense of consistent frames or increased latency. If one card produces 60fps in a game, I would much rather have 2 cards produce 90fps with both of them working on the same frame at the same time than have 2 cards produce 120 fps alternating frames.

The only time 2 gpus should not be working on the same frame is 3d or vr, where you need 2 angles of the same scene generated each frame. Then ya, have the cards work separately on their own perspective of the scene.

PaulBags Considering dx12 with optimised command queues & proper cpu scaling is still to come later in the year, I'd hate to imagine how long until drivers are universal & unambiguous to sli.

cats_Paw The article is very nice.

However, if I need to buy 2 980s to run a VR set or a 4K display I'll just wait till the prices are more mainstream.

I mean, in order to have a good SLI 980 rig you need a lot of spare cash, not to mention buying a 4K display (those that are actually any good cost a fortune), a CPU that won't bottleneck the GPUs, etc...

Too rich for my blood, I'd rather stay on 1080p until those technologies are not only proven to be the next standard, but content is widely available.

For me, the right moment to upgrade my Q6600 will be after DX12 comes out, so I can see real performance tests on new platforms.

Luay I thought 2K (1440P) resolutions were enough to take a load off an i5 and put it onto two high-end Maxwell cards in SLI, and now you show that the i7 is bottlenecking at that resolution??

I had my eye on the two Acer monitors, the curved 34" 21:9 75Hz IPS and the 27" 144Hz IPS, either one really for a future build, but this piece of info tells me my i5 will be a problem.

Could it be that Intel CPUs have stagnated in performance compared to GPUs, due to lack of competition?

Is there a way around this bottleneck at 1440P? Overclocking or upgrading to Haswell-E or waiting for Skylake?

loki1944 Really wish they would have made 4GB 780Tis. The overclock on those 980s is a 370MHz higher core clock and 337MHz higher memory clock than my 780Tis, and it barely beats them in Firestrike by a measly 888 points. While SLI is great 99% of the time, there are still AAA games out there that don't work with it, or worse, are better off with SLI disabled, such as Watch Dogs and Warband. I would definitely be interested in a dual gpu Titan X card or even 980 (less interested in the latter) because right now my Nvidia options for SLI on a mATX single PCIe slot board are limited to the scarce and overpriced Titan Z or the underwhelming Mars 760X2.

baracubra I feel like it would be beneficial to clarify on the statement that "you really need two *identical* cards to run in SLI."

While true from a certain perspective, it should be clarified that you need 2 of the same number designation. As in two 980's or two 970's. I fear that new system builders will hold off from going SLI because they can't find the same *brand* of card or think they can't mix an OC 970 with a stock 970 (you can, but they will perform at the lower card's level).

PS. I run two 670's just fine (one stock EVGA and one OC Zotac)

jtd871 I'd have appreciated a bit of the in-depth "how" rather than the "what". For example, some discussion about multi-GPU needing a separate physical bridge and/or communicating via the PCIe lanes, and the limitations of each method (theoretical and practical bandwidth and how likely this channel is to be saturated depending on resolution or workload). I know that it would take some effort, but has anybody ever hacked an SLI bridge to observe the actual traffic load (similar to your custom PCIe riser to measure power)? It's flattering that you assume knowledge on the part of your audience, but some basic information would have made this piece more well-rounded and foundational for your upcoming comparison with AMD's performance and implementation.

mechan Quoting baracubra: I feel like it would be beneficial to clarify on the statement that "you really need two *identical* cards to run in SLI."

While true from a certain perspective, it should be clarified that you need 2 of the same number designation. As in two 980's or two 970's. I fear that new system builders will hold off from going SLI because they can't find the same *brand* of card or think they can't mix an OC 970 with a stock 970 (you can, but they will perform at the lower card's level).

PS. I run two 670's just fine (one stock EVGA and one OC Zotac)

What you say -was- true with 6xx class cards. With 9xx class cards, requirements for the cards to be identical have become much more stringent!