Seagate 600 SSD 240 GB Review: LAMD And Toshiba, Together Again

Seagate is the world's largest purveyor of mechanical hard drives. As the company prepares for mortal combat in the consumer SSD space, are its wits, Toshiba's Toggle-mode NAND, and SK hynix memory solutions' 87800 controller enough to get by?

Results: Tom's Storage Bench v1.0

Storage Bench v1.0 (Background Info)

Our Storage Bench incorporates all of the I/O from a trace recorded over two weeks. The process of replaying this sequence to capture performance gives us a bunch of numbers that aren't really intuitive at first glance. Most idle time gets expunged, leaving only the time that each benchmarked drive was actually busy working on host commands. So, by dividing the amount of data exchanged during the trace by that busy time, we arrive at an average data rate (in MB/s) metric we can use to compare drives.
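As a rough illustration of that metric, here is a minimal Python sketch. The function name and the sample figures are hypothetical and are not taken from our trace; it only assumes that the average data rate is the data moved divided by the drive's busy time, with 1 MB counted as 1,000,000 bytes.

```python
def average_data_rate_mb_s(bytes_transferred: int, busy_seconds: float) -> float:
    """Return the average data rate in MB/s (data moved / busy time).

    Idle time is assumed to be excluded from busy_seconds already.
    """
    if busy_seconds <= 0:
        raise ValueError("busy_seconds must be positive")
    return bytes_transferred / 1_000_000 / busy_seconds


# Hypothetical figures, for illustration only (not measured values).
example_bytes = 226_000_000_000   # total data read and written during replay
example_busy_seconds = 1000.0     # time the drive spent servicing commands

example_rate = average_data_rate_mb_s(example_bytes, example_busy_seconds)
print(f"{example_rate:.1f} MB/s")
```

Because idle time is stripped out first, two drives replaying the same trace differ only in the denominator: the drive that finishes its work sooner posts the higher rate.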

It's not quite a perfect system. The original trace captures the TRIM command in transit, but since the trace is played on a drive without a file system, TRIM wouldn't work even if it were sent during the trace replay (which, sadly, it isn't). Still, trace testing is a great way to capture periods of actual storage activity, a great companion to synthetic testing like Iometer.

Incompressible Data and Storage Bench v1.0

Also worth noting is the fact that our trace testing pushes incompressible data through the system's buffers to the drive getting benchmarked. So, when the trace replay plays back write activity, it's writing largely incompressible data. If we run our storage bench on a SandForce-based SSD, we can monitor the SMART attributes for a bit more insight.

| Mushkin Chronos Deluxe 120 GB SMART Attributes | RAW Value Increase |
|---|---|
| #242 Host Reads (in GB) | 84 GB |
| #241 Host Writes (in GB) | 142 GB |
| #233 Compressed NAND Writes (in GB) | 149 GB |

Host reads are greatly outstripped by host writes to be sure. That's all baked into the trace. But with SandForce's inline deduplication/compression, you'd expect that the amount of information written to flash would be less than the host writes (unless the data is mostly incompressible, of course). For every 1 GB the host asked to be written, Mushkin's drive is forced to write 1.05 GB.
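The 1.05 figure is simply the ratio of the two SMART deltas in the table. A minimal sketch of that arithmetic follows; the function name is our own illustration and is not part of any SMART tool.

```python
def nand_to_host_write_ratio(nand_writes_gb: float, host_writes_gb: float) -> float:
    """Return how many GB reach the flash for every 1 GB the host writes."""
    if host_writes_gb <= 0:
        raise ValueError("host_writes_gb must be positive")
    return nand_writes_gb / host_writes_gb


# Raw value increases from the table above.
host_writes_gb = 142   # attribute #241, Host Writes
nand_writes_gb = 149   # attribute #233, Compressed NAND Writes

ratio = nand_to_host_write_ratio(nand_writes_gb, host_writes_gb)
print(f"{ratio:.2f} GB written to NAND per 1 GB of host writes")
```

A ratio below 1.0 would indicate that compression was saving writes; a ratio at or above 1.0, as here, indicates the data gave the controller little to compress.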

If our trace replay were just writing easy-to-compress zeros out of the buffer, we'd see writes to NAND as a fraction of host writes. Writing incompressible data instead puts the tested drives on a more equal footing, regardless of the controller's ability to compress data on the fly.
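To see why zeros and incompressible data behave so differently, here is a small sketch that uses Python's zlib as a stand-in compressor. This is an assumption for illustration only: SandForce's compression engine is proprietary and is not zlib, so the exact ratios will not match what a drive achieves.

```python
import os
import zlib


def compression_ratio(data: bytes) -> float:
    """Return compressed size divided by original size (lower = more compressible)."""
    return len(zlib.compress(data)) / len(data)


size = 1_000_000
zeros = bytes(size)              # a buffer of all zeros, trivially compressible
random_data = os.urandom(size)   # random bytes, effectively incompressible

zero_ratio = compression_ratio(zeros)
random_ratio = compression_ratio(random_data)

print(f"zeros:  {zero_ratio:.4f}")
print(f"random: {random_ratio:.4f}")
```

The zero-filled buffer shrinks to a tiny fraction of its original size, while the random buffer does not shrink at all, which is the situation our trace replay puts a compressing controller in.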


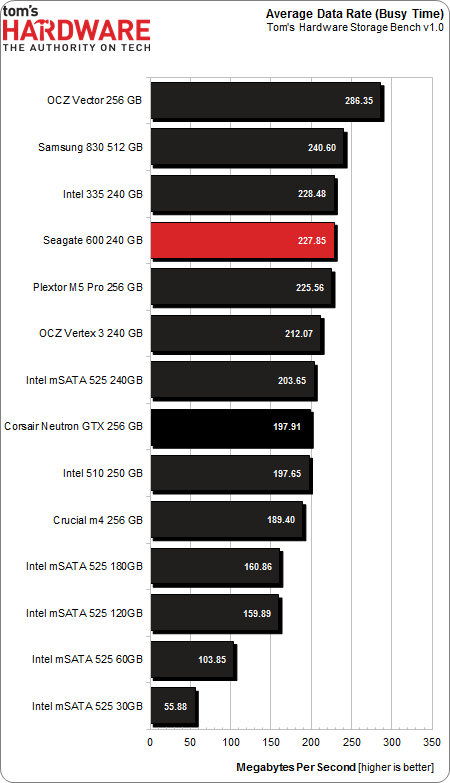

Average Data Rate

The Storage Bench trace generates more than 140 GB worth of writes during testing. Obviously, this tends to penalize drives smaller than 180 GB and reward those with more than 256 GB of capacity. It's a little unfair in that way, which is why it's a good metaphor for life.

First, the Vector is fast. Seagate's 600 might be an iron fist in a silk glove, but the Vector is an iron fist that rockets around at 286 MB/s. Pulling down an average data rate so far ahead of everything else is impressive, though it doesn't necessarily guarantee better performance quality on its own. Second, among all the 240/256 GB SSDs in this collection, all models (except for the Vector) are within spitting distance of each other.

Corsair's Neutron GTX falls further in the standings than Seagate. In absolute terms, the Neutron is 30 MB/s behind the 600. The GTX's 197 MB/s is certainly quick. But the Seagate implementation earns its first real performance break from the other LAMD-controlled drive with a whopping 227 MB/s.

Also notable are the value-oriented Intel SSD 335 and the pricier SSD 510. The 250 GB SSD 510 posts an average data rate just a few KB/s slower than Corsair's solution, and the SSD 335 pushes slightly ahead of Seagate's 600. Considering the vast majority of transfers are 4 KB in size, the trace's system activity doesn't really punish the dilatory SSD 510 as much as the previous page's charts might have led you to believe.

It'd be reasonable to expect the Seagate and Corsair drives to hand in nearly identical numbers, based on the previous few pages of results. Given that both are likely very similar at the firmware level, the differences in performance could amount to a few gigabytes of spare NAND on the Seagate.

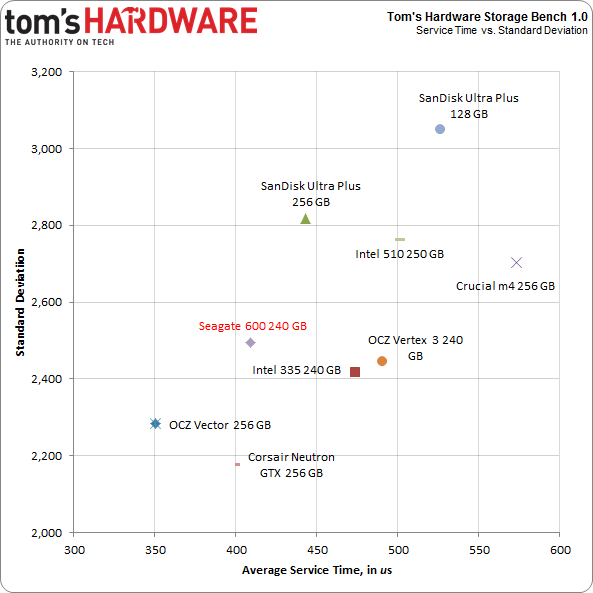

Service Times and Standard Deviation

There is a wealth of information we can collect with Tom's Storage Bench above and beyond the average data rate. Mean (average) service times show what responsiveness is like on an average I/O during the trace. It would be difficult to plot the 10 million I/Os that make up our test, so looking at the average time to service an I/O makes more sense. We can also plot the standard deviation against mean service time. That way, drives with quicker and more consistent service plot toward the origin (lower numbers are better here).
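A minimal sketch of how those two numbers could be computed from a list of per-I/O service times. The sample values and drive labels are hypothetical, chosen only to show two drives with the same mean but different consistency; we use the population standard deviation on the assumption that the list contains every I/O in the trace.

```python
import statistics


def summarize_service_times(times_ms):
    """Return (mean, population standard deviation) of service times in ms."""
    mean = statistics.fmean(times_ms)
    stdev = statistics.pstdev(times_ms)
    return mean, stdev


# Hypothetical per-I/O service times in milliseconds (not measured data).
drive_a = [0.2, 0.2, 0.2, 0.2]   # steady responses
drive_b = [0.1, 0.1, 0.1, 0.5]   # mostly quick, with one slow outlier

mean_a, stdev_a = summarize_service_times(drive_a)
mean_b, stdev_b = summarize_service_times(drive_b)

print(f"Drive A: mean {mean_a:.3f} ms, std dev {stdev_a:.3f} ms")
print(f"Drive B: mean {mean_b:.3f} ms, std dev {stdev_b:.3f} ms")
```

Both hypothetical drives average the same service time, but drive B's outlier pushes its standard deviation up, so it would plot farther from the origin on a chart of this kind.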

Given its average data rate performance, we're a little surprised to see the "slower" Neutron GTX serving up I/O as rapidly as the Seagate 600. Corsair might be doing its work in a more consistent fashion than Seagate, though. Overall, the Neutron demonstrates better quality of service, though the differences aren't spectacularly huge.

In a news flash that should surprise nobody, OCZ's Vector is still fast, breaking through with the lowest latency of all.


mayankleoboy1 1. Where are the Samsung 840 and 840 Pro? The Samsung 830 is quite old now.

2. I don't get why you use QD greater than 4 in the synthetics. All of these drives are for PC users, who will rarely get QD even equal to 4.

3. I would have liked more real-world tests, like copying to and from the drive, restoring backups, decompressing large ISO files, and doing all of the above and then noting the time it takes to open Photoshop.

4. Can you do a pre- and post-defragment test, just for lolz?

5. Can you do a test where the Windows system is paging to the SSD? Basically, a measure of the read/write disk speed when the OS is low on RAM and is using the SSD for the pagefile.

6. IMHO, if you use completely incompressible data to check the perf of SSDs, you are deliberately biasing against the SandForce-based SSDs. Could you use a better mix of compressible and incompressible data? The dynamic compression will definitely improve the perf of SandForce SSDs in real-world desktop usage.

mayankleoboy1 And two more:

1. The time it takes to do a complete full-drive error check (checking file errors plus recovery of bad sectors).

2. The time it takes for a deleted file to be recovered using third-party data recovery freeware.

kyuuketsuki Not a bad drive at all. However, that warranty nonsense Seagate is trying to pull is enough to make this a definite pass. Not going to support that.

ryomitomo There's a typo in the chart on the first page. The Max Warranty TBW for the 120 GB version should read 36.5 TB instead of 36.5 GB. Otherwise, it is not much of a lifetime write endurance.

Twoboxer I have no problem with their warranty statement. They are telling you exactly how long it's going to last. As long as the device reports where it is along the way, I'll know exactly when to replace it, with no surprises.

Soda-88 I don't see the problem with dual condition warranty. They're just protecting themselves from people who would abuse their SSD with heavy video capturing or something of the sort.

velosteraptor (quoting Soda-88): "I don't see the problem with dual condition warranty. They're just protecting themselves from people who would abuse their SSD with heavy video capturing or something of the sort."

I don't have a problem with the dual condition warranty either; it's a lot like a car (10 years, 100,000 miles). I think the problem is that they are only giving a 3-year warranty, where almost everyone else in the SSD market has 5-year warranties, and unconditional at that. Even if the drive is faster than some of the other models tested here, I'd feel much safer buying a drive with a longer warranty, knowing it's going to be protected for an extra 2 years.

raidtarded Almost every SSD manufacturer ties warranties to the amount of writes to the drive; you just have to read the fine print in the warranty. At least Seagate is upfront; most are hiding it until RMA time.

will1220 (quoting raidtarded): "Almost every SSD manufacturer ties warranties to the amount of writes to the drive; you just have to read the fine print in the warranty. At least Seagate is upfront; most are hiding it until RMA time."

False. Neither OCZ nor Samsung has limits on how much data is written to the drive. And they're the only two SSD brands worth buying.

mapesdhs Please stop using graphs that have non-zero origins! They are incredibly visually misleading. Such charts are the domain of dodgy advertisers, not tech sites that seek to convey useful information, etc.

Ian.