HBM3 to Top 665 GBps Bandwidth per Chip, SK Hynix Says

SK Hynix spills the beans on HBM3.

While high bandwidth memory (HBM) has yet to become a mainstream type of DRAM for graphics cards, it is a memory of choice for bandwidth-hungry datacenter and professional applications. HBM3 is the next step, and this week, SK Hynix revealed plans for its HBM3 offering, bringing us new information on expected bandwidth of the upcoming spec.

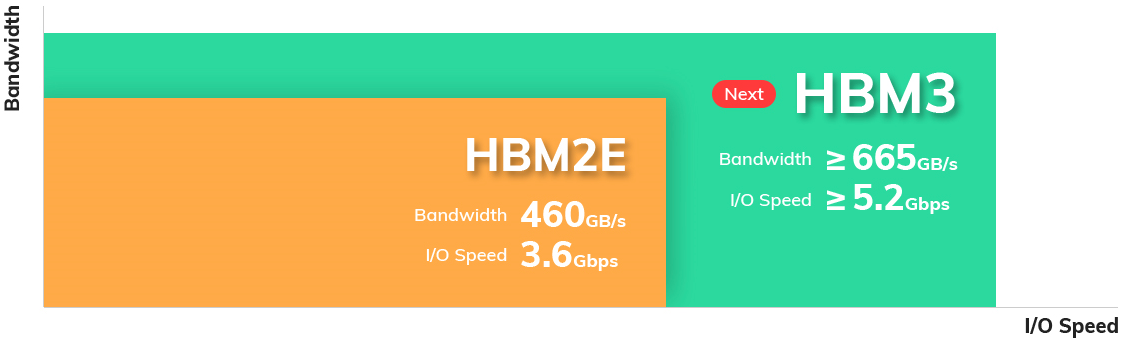

SK Hynix's current HBM2E memory stacks provide an unbeatable 460 GBps of bandwidth per device. JEDEC, which makes the HBM standard, has not yet formally standardized HBM3. But just like other makers of memory, SK Hynix has been working on next-generation HBM for quite some time.

Its HBM3 offering is currently "under development," according to an updated page on the company's website, and "will be capable of processing more than 665GB of data per second at 5.2 Gbps in I/O speed." That's up from 3.6 Gbps in the case of HBM2E.

SK Hynix is also expecting bandwidth of greater than or equal to 665 GBps per stack, up from the 460 GBps of its HBM2E. Notably, some other companies, including SiFive, expect HBM3 to scale all the way to 7.2 GTps.
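As a rough sanity check on those figures, peak per-stack bandwidth is simply the per-pin data rate multiplied by the interface width. The sketch below assumes a 1024-bit interface per stack, which is what HBM2E uses and is not something SK Hynix's page states for HBM3. The function and variable names are illustrative.

```python
# Assumption: 1024 data pins per stack (true for HBM2E; assumed here for HBM3).
ASSUMED_BUS_WIDTH_BITS = 1024


def stack_bandwidth_gbytes(pin_rate_gbps: float,
                           bus_width_bits: int = ASSUMED_BUS_WIDTH_BITS) -> float:
    """Return peak bandwidth of one stack in GBps (gigabytes per second).

    pin_rate_gbps is the per-pin I/O speed in gigabits per second.
    Dividing by 8 converts bits to bytes.
    """
    return pin_rate_gbps * bus_width_bits / 8


if __name__ == "__main__":
    # Per-pin rates quoted in the article.
    for label, rate in [("HBM2E", 3.6), ("HBM3", 5.2), ("HBM3 at 7.2 GTps", 7.2)]:
        print(f"{label}: {stack_bandwidth_gbytes(rate):.1f} GBps")
```

Under that assumption, 5.2 Gbps works out to roughly 665.6 GBps, in line with the "more than 665GB" claim, and 7.2 GTps would land at around 921.6 GBps per stack.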

Nowadays, bandwidth-hungry devices, like ultra-high-end compute GPUs or FPGAs, use 4-6 HBM2E memory stacks. With SK Hynix's HBM2E, such applications can get 1.84-2.76 TBps of bandwidth (usually lower because GPU and FPGA developers are cautious). With HBM3, these devices could get at least 2.66-3.99 TBps of bandwidth, according to the company.
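Those multi-stack ranges are straightforward multiplication of the per-stack figure by the stack count. A minimal sketch, using the per-stack numbers quoted in the article and decimal units (1 TBps = 1,000 GBps); the names are illustrative:

```python
# Per-stack peak bandwidth in GBps, as quoted in the article.
PER_STACK_GBPS = {"HBM2E": 460, "HBM3": 665}


def aggregate_tbps(per_stack_gbps: float, stacks: int) -> float:
    """Return total peak bandwidth in TBps for a device with `stacks` stacks."""
    return per_stack_gbps * stacks / 1000


if __name__ == "__main__":
    for generation, per_stack in PER_STACK_GBPS.items():
        low = aggregate_tbps(per_stack, 4)   # four-stack device
        high = aggregate_tbps(per_stack, 6)  # six-stack device
        print(f"{generation}: {low:.2f}-{high:.2f} TBps")
```

These are theoretical peaks. As the article notes, shipping products usually run their memory below the maximum rated speed.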

SK Hynix did not share an anticipated release date for HBM3.

In early 2020, SK Hynix licensed DBI Ultra 2.5D/3D hybrid bonding interconnect technology from Xperi Corp., specifically for high-bandwidth memory solutions (including 3DS, HBM2, HBM3 and beyond), as well as various highly integrated CPUs, GPUs, ASICs, FPGAs and SoCs.


DBI Ultra supports 100,000 to 1,000,000 interconnects per square millimeter and allows stacks up to 16 high, enabling ultra-high-capacity HBM3 memory modules, as well as 2.5D or 3D solutions with built-in HBM3.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


littlechipsbigchips

How about the capacity and price per GB? This is the most important factor here. Bandwidth is more than enough for today's hardware.

escksu

littlechipsbigchips said: How about the capacity and price per GB? This is the most important factor here. Bandwidth is more than enough for today's hardware.

Considering the A100 has 40/80GB models, it's not too bad, although it's never enough. As for price, no doubt they cost more, but these aren't meant for consumer cards, so it doesn't matter that much. Performance matters more. The A100 costs over 10K.

Bandwidth is never enough for these cards. They need all the bandwidth they can get. They also need all the memory they can get.

TheJoker2020

I have been wondering for a few years now whether AMD will release a CPU with their I/O die, chiplets and an HBM chip.

Yes there would need to be a significant redesign of the I/O die, substrate, power delivery and how the caching works, but this could be a very powerful option.

I would expect the HBM to either be used as a victim cache for the L3, or as the first part of main memory, but I expect that would cause problems (the RAM equivalent of scheduler issues?).

Of course the best IMHO use case for this would be for the above configuration but with one of the chiplets replaced with a competent GPU for a very powerful APU. This however seems to be wasteful as the HBM would cost a load of money for its capacity, and would that capacity be enough to not be a bottleneck... Probably not.

The other option I foresee would be for AMD to make a monolithic die (as it currently does) but with the Memory interface expanded to use HBM as well and to use this as dedicated RAM for the GPU, again, this seems to be wasteful and would still encounter other problems leaving this idea to be better suited for CPU usage.

Such an idea might work very well for AMD server CPUs, but the added complexity might outweigh the gains. Still, an interesting thought experiment.

Note that I have not mentioned Intel, simply because it does not have any current chiplet based products which this would IMHO be best suited for, but also because this is not a long way off of Intel's various Foveros options anyway.

Both AMD and Intel would have already gone through all of these options and looked at whether or not it would work effectively, be cost, power and performance effective as well as the manufacturing hurdles. "IF" such a thing is in the works, I would expect it to be baked into the design from the beginning and that would likely mean that "if" it ever sees the light of day it will be Zen 5 at the earliest IMHO.

And "IF" any such design using HBM as well as DDR5 as an option wins out, it would also have to compete against 3D stacked cache (which AMD demoed, but could be 4x higher capacity with a greatly higher cost) and 3D stacked CPU dies. The industry is incredibly interesting right now.

Apple and Ampere could always do such a thing, and IMHO it would be relatively easy for Ampere to do so because they are now bringing their designs in house and they only produce one CPU (with different SKUs).

Mods, please let me know if this needs to be a post on its own rather than here in this thread.

Kamen Rider Blade

TheJoker2020 said: I have been wondering for a few years now whether AMD will release a CPU with their I/O die, chiplets and an HBM chip.

I can see AMD using HBM3 for their Professional line of cards and for a future dedicated mobile line of cards.

Given how little PCB real estate there is on mobile cards, HBM reduces both power consumption and board area, allowing you to move the VRM / power regulation modules closer to the GPU die.

HBM3 would fit perfectly for a future MXM-like card or "Open MXM" standard where you have the power & data as close as possible in a notebook format.

The mobile monolithic GPU that has HBM3 support can also be re-used for an ITX / SFF line of dedicated GPUs.

And you can recycle the GPU architecture for a Mobile Professional line.

For the desktop GPU graphics card line, assuming AMD goes modular / chiplet as planned:

AMD can have a cIOD that has HBM3 for professional support and regular GDDR6 for consumer cards.