ATI Stream: Finally, CUDA Has Competition

Introduction

Imagine if you told everybody you were going to throw this awesome, mind-altering, uberlicious party. But on the day of the party, the first people in the door discovered that the plumbing was backed up, and everybody left, which was fine because the live band had been killed by a freak tornado while en route. Five months later, you try to throw the same party again. The difference is that now you have a Fisher boom box instead of a live band, and, thanks to some duct tape, the plumbing works. Meanwhile, another guy down the block has already started throwing his own party. The invitations look a lot like yours. He’s serving the same drinks. You’re throwing in a free party favor, but no one seems to care, in part because the people who might care are already bustin’ moves down the block. Several people have RSVPed for your soiree, but only two or three have shown up so far.

You’re AMD, and the name of your party is “ATI Stream.”

If you caught our recent coverage of Nvidia’s CUDA platform, then you’re up to speed on the state of GPGPU processing, or GPU computing, or whatever you want to call it these days, and you know that ATI Stream stands alongside CUDA as one of the two most prevalent GPU computing platforms available today. The idea behind GPU computing is to take highly parallelizable tasks that typically run on the CPU and offload them to the GPU, where they can run more quickly and efficiently. Programmable shaders are exceptionally well-suited to floating-point-intensive tasks. Each shader operates as its own sort of processor core, so instead of having four or eight threads crunching on a parallelized task on the CPU, you could have 64 or 320 or however many stream processors tackling the same work on the GPU. Naturally, the program has to be coded to take advantage of this architecture, and the workload needs to perform a relatively heavy amount of arithmetic per memory fetch in order to see decent results.
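To make "arithmetic per memory fetch" a little more concrete, here is a minimal Python sketch of arithmetic intensity (floating-point operations per byte of memory traffic) plus a simple roofline-style bound on attainable throughput. The two example kernels, their flop and byte counts, and the 1,000 GFLOP/s peak and 100 GB/s bandwidth figures are hypothetical round numbers chosen for illustration, not measurements of any Radeon or GeForce card.

```python
def arithmetic_intensity(flops_per_element: float, bytes_per_element: float) -> float:
    """Floating-point operations performed per byte moved to or from memory."""
    return flops_per_element / bytes_per_element


def attainable_gflops(intensity: float, peak_gflops: float, bandwidth_gbs: float) -> float:
    """Roofline-style bound: a kernel is capped either by raw compute or by how
    fast memory can feed it (intensity in flops/byte times bandwidth in GB/s)."""
    return min(peak_gflops, intensity * bandwidth_gbs)


# Hypothetical GPU: 1,000 GFLOP/s single-precision peak, 100 GB/s memory bandwidth.
PEAK_GFLOPS = 1000.0
BANDWIDTH_GBS = 100.0

# SAXPY, y[i] = a * x[i] + y[i]: 2 flops per element; reads x[i] and y[i] and
# writes y[i], i.e. three 4-byte floats = 12 bytes per element.
saxpy = arithmetic_intensity(2, 12)

# Degree-15 polynomial per element via Horner's method: 15 multiplies + 15 adds
# = 30 flops; reads x[i] and writes out[i] = 8 bytes (coefficients assumed to
# stay in on-chip constant storage, so they add no per-element traffic).
poly = arithmetic_intensity(30, 8)

for name, ai in [("saxpy", saxpy), ("poly15", poly)]:
    bound = attainable_gflops(ai, PEAK_GFLOPS, BANDWIDTH_GBS)
    print(f"{name}: {ai:.3f} flops/byte -> at most {bound:.1f} GFLOP/s")
```

Both kernels are perfectly parallel, but under these assumptions SAXPY is starved by memory and can use less than 2% of the peak, while the polynomial kernel can reach well over a third of it. A kernel would need at least 10 flops per byte (peak divided by bandwidth) before compute, rather than memory, became the limit.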

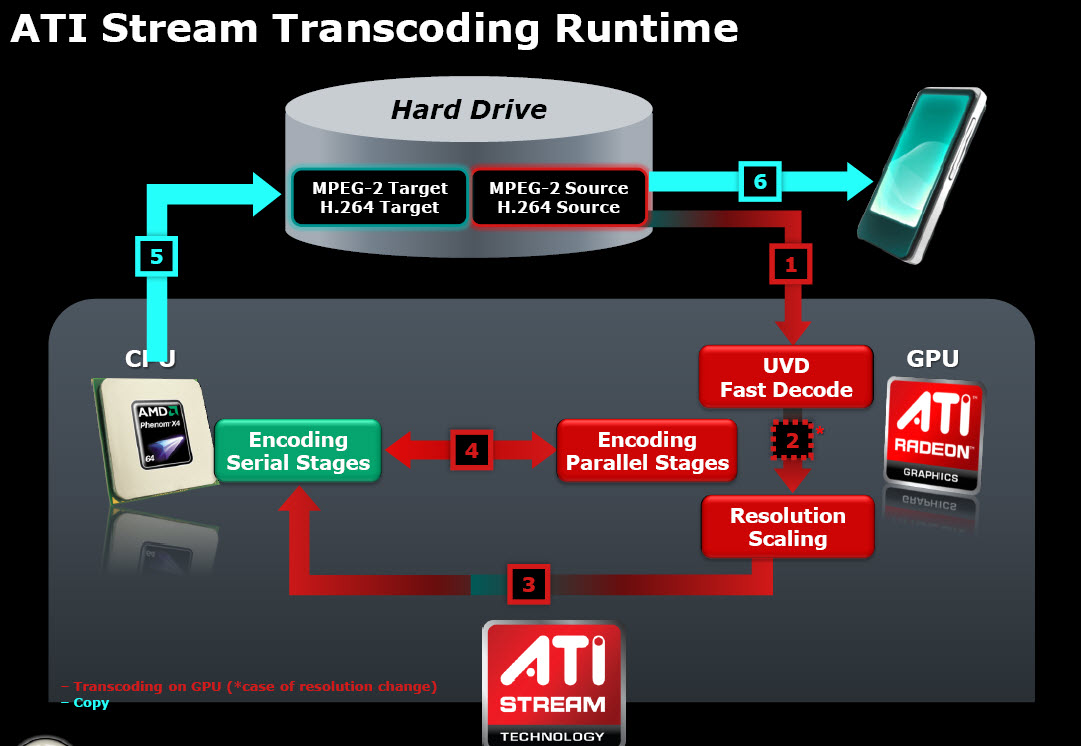

When Stream launched last December, AMD had enabled it only to accelerate encoding into MPEG-2 and H.264 formats. The acceleration part was fine. What AMD hadn’t counted on was being deluged with criticism over its encoding quality. With May’s Catalyst 9.5 driver update, though, we finally have bug fixes for the quality issues and a fuller acceleration pipeline that now includes MPEG-2 and H.264 decoding, as well as resolution scaling. You can see this represented in the high-level illustration shown here.

The burning question, of course, is how does Stream stack up? Was it worth the wait? We’ve got some preliminary answers and more besides, but first, let’s step back for some perspective...


radiowars So... TBH, they both work pretty well. I hope we don't start a whole competition over this.

falchard Did someone necro an old topic? I think ATI has been talking about ATI Stream for a while. I know it's been at least a year, since FireStream.

Andraxxus They're good, but hopefully they will manage to improve them more. Competition is good for business.

IzzyCraft Stream is old, but not nearly as old or as widely compatible as CUDA. I'd give it a year or two more, until more capable cards circulate on the market and trickle down to the people, before I would call it competition.

Well, it's good to see more than just one app that supports it.

ThisIsMe Just for the sake of it, and because many pros would like to know the result, it would be nice to see comparisons like this using Nvidia's Quadro cards vs. ATI's FirePro cards.

ohim Why use 185.85, since those drivers are a total wreck?

http://forums.nvidia.com/index.php?showtopic=96665&st=0&start=0

13 pages of people having different problems with that driver.

I think the second graph on the "Mixed Messages" page isn't the right graph.

It's the same graph as on the following "Heavier Lifting" page, instead of the graph for the 298MB VOB file that should be shown.

Spanky Deluxe Stream and CUDA are likely to go the way of the dodo soon, though. OpenCL's where it's at. Unfortunately, it's a tad hard to get programming with it right now, since you need to be a registered developer on Nvidia's Early Access Program or a registered developer in Apple's developer program with access to pre-release copies of Snow Leopard.

Virtually no one will bother using CUDA or Stream after OpenCL is out. Why limit yourself to one hardware base, after all? It'd be like writing Windows software that only ran on AMD processors and not Intel. Developers will not bother writing for both when they can just use one language that runs on both hardware platforms.