AI Startup Groq Debuts in the Cloud

1000 TOPS in the cloud.

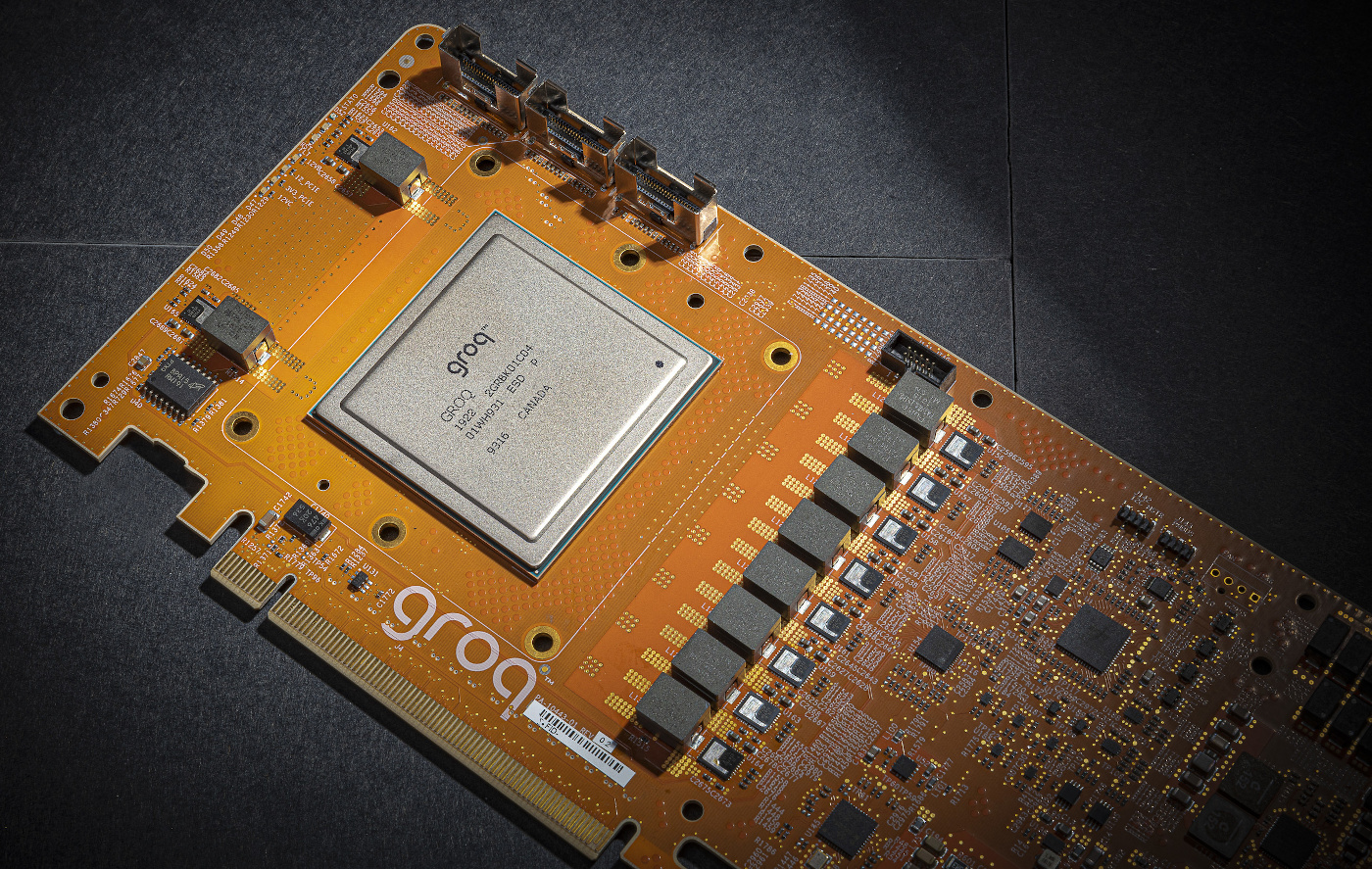

Groq has become the second startup to debut its deep learning chip in the cloud, EE Times reported. Its tensor streaming processor (TSP) for AI inference is now available via cloud service provider Nimbix for “selected customers”.

Late last year, Graphcore became the first startup to make its AI chip available in the cloud, through Microsoft’s Azure.

Nimbix’s CEO stated: “Groq’s simplified processing architecture is unique, providing unprecedented, deterministic performance for compute intensive workloads, and is an exciting addition to our cloud-based AI and Deep Learning platform.”

Groq’s TSP is rated at 1000 TOPS (1 POPS), and the company claims it delivers 2.5x the performance of the best GPUs at a large batch size, a lead that grows to 17x at a batch size of 1.
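The headline figures can be sanity-checked with a little arithmetic. The minimal Python sketch below converts TOPS to POPS and shows what the two speedup claims would imply about GPU efficiency at batch size 1, under one loudly stated assumption that Groq has not made: that the TSP’s own throughput is the same at both batch sizes. The function names are illustrative only.

```python
# Sanity-check the headline claims quoted in the article.
# ASSUMPTION (not stated by Groq): the TSP delivers the same throughput at
# batch size 1 as at a large batch size, so only the GPU's throughput varies.

TOPS_PER_POPS = 1000  # 1 POPS = 10^15 ops/s = 1000 x 10^12 ops/s (TOPS)


def tops_to_pops(tops: float) -> float:
    """Convert tera-operations per second to peta-operations per second."""
    return tops / TOPS_PER_POPS


def implied_gpu_batch1_fraction(lead_large_batch: float, lead_batch1: float) -> float:
    """Fraction of its large-batch throughput a GPU would retain at batch 1,
    if the TSP's throughput were identical in both cases (see ASSUMPTION)."""
    # TSP / GPU_large = lead_large_batch and TSP / GPU_batch1 = lead_batch1,
    # therefore GPU_batch1 / GPU_large = lead_large_batch / lead_batch1.
    return lead_large_batch / lead_batch1


if __name__ == "__main__":
    print(tops_to_pops(1000))
    print(round(implied_gpu_batch1_fraction(2.5, 17), 3))
```

Read this way, the 17x figure mostly says that GPUs lose much of their throughput at small batch sizes (roughly 15% retained under the assumption above), rather than that the TSP gets faster at batch 1.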

Besides GPUs, the chip competes with many other deep learning inference chips. Most notably, Intel and Qualcomm aim to bring their NNP-I and Cloud AI 100 chips, respectively, to the cloud this year.

WikiChip has a deep dive on the chip.


-

bit_user: As seems to be the trend, it's heavily dependent on on-chip memory. The pic of the PCIe card doesn't show any off-chip memory, though perhaps there's some HBM2 under the IHS? Otherwise, you might hit a performance wall when your model tries to scale beyond what they can fit on-chip.

I would also worry about the energy usage resulting from all of the on-chip data movement, since the on-chip memory is supposedly organized in a global pool.

The top-line numbers are impressive, though.
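The capacity concern above comes down to simple arithmetic: weight storage is the parameter count times the bytes per parameter. The minimal Python sketch below checks whether a model's weights alone would fit; `ON_CHIP_BYTES` is a hypothetical placeholder, not a published Groq specification, and the model sizes are illustrative. Activations and other buffers also need space, so a model that only barely "fits" here may still spill off-chip in practice.

```python
# Back-of-the-envelope check: do a model's weights fit entirely in on-chip memory?
# ON_CHIP_BYTES is a HYPOTHETICAL placeholder, not a published Groq specification.

ON_CHIP_BYTES = 200 * 1024**2  # hypothetical: 200 MiB of on-chip memory

# Storage cost per parameter for common numeric formats.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_bytes(num_params: int, dtype: str) -> int:
    """Bytes needed for the weights alone (ignores activations and buffers)."""
    return num_params * BYTES_PER_PARAM[dtype]


def fits_on_chip(num_params: int, dtype: str, capacity: int = ON_CHIP_BYTES) -> bool:
    """True if the weights fit within the given on-chip capacity."""
    return weight_bytes(num_params, dtype) <= capacity


if __name__ == "__main__":
    # Illustrative model sizes, not specific published models.
    for params in (25_000_000, 100_000_000, 1_000_000_000):
        for dtype in ("int8", "fp16"):
            print(f"{params:>13,} params, {dtype}: fits={fits_on_chip(params, dtype)}")
```

When the weights do not fit, they have to be streamed in from off-chip memory (or the model split across several chips), which is where the performance wall described above would appear.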