Intel Xe HPG Gaming GPU: Taking on AMD and Nvidia

Discrete Intel Xe HPG graphics cards will take on the best Nvidia and AMD have to offer in 2021.

The reveal of Intel Xe HPG during Intel's Architecture Day 2020 was part of a virtual firehose of information on a ton of upcoming products and technologies. We've covered Tiger Lake and Xe LP Graphics elsewhere, and we have new details on Xe HP / HPC and the newly christened 10nm SuperFin process. But one of the products we're most interested in seeing and testing isn't coming until 2021. We've speculated on what Intel's Xe Graphics would bring to the dedicated GPU market since we first heard about Team Blue's aspirations back in 2018. The latest details are far more promising than what we were previously expecting, so waiting until 2021 (hopefully earlier in the year rather than later) isn't necessarily a bad thing. Intel's dedicated gaming GPUs are definitely going to be more potent than the DG1 it demoed at CES back in January.

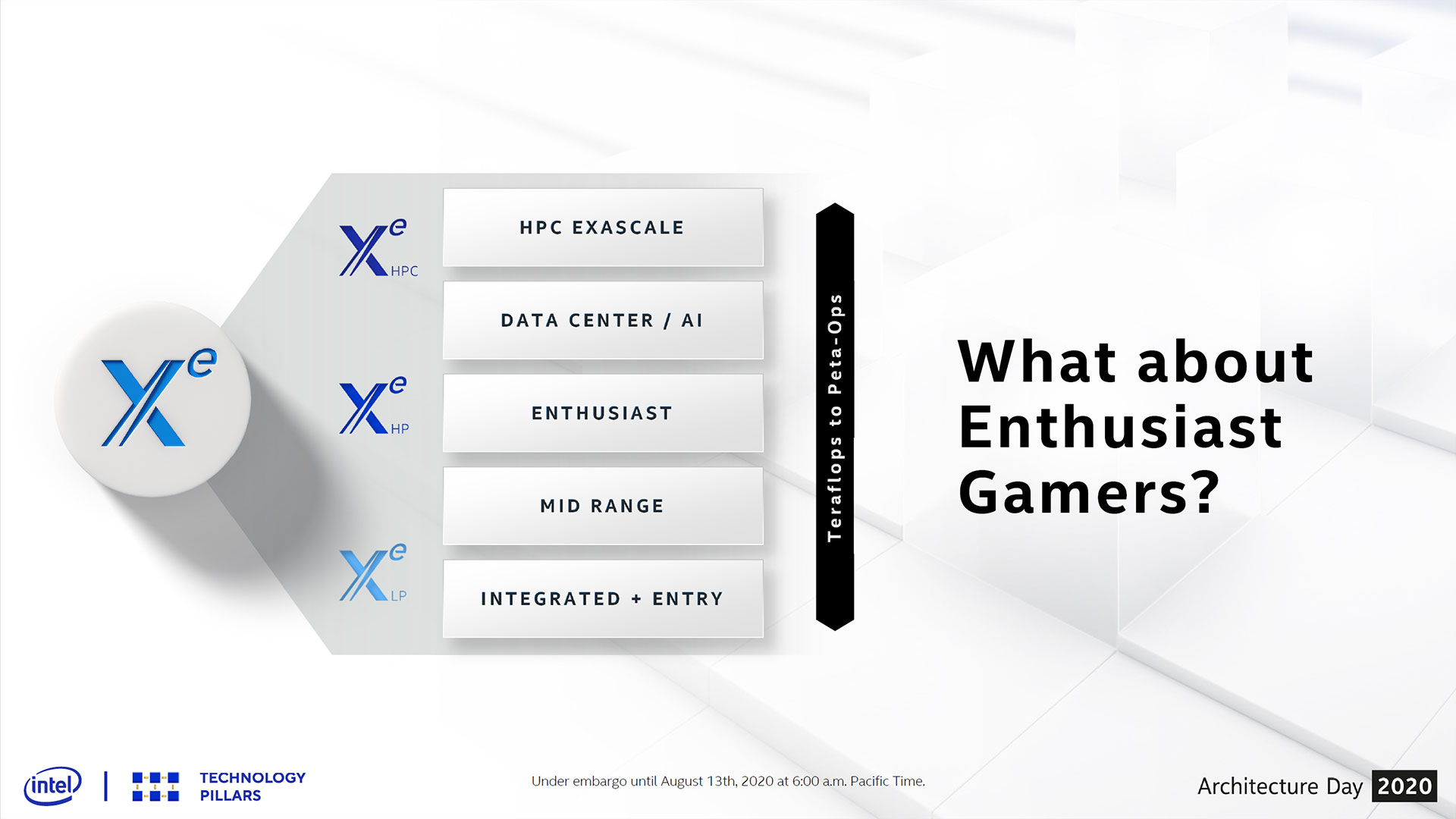

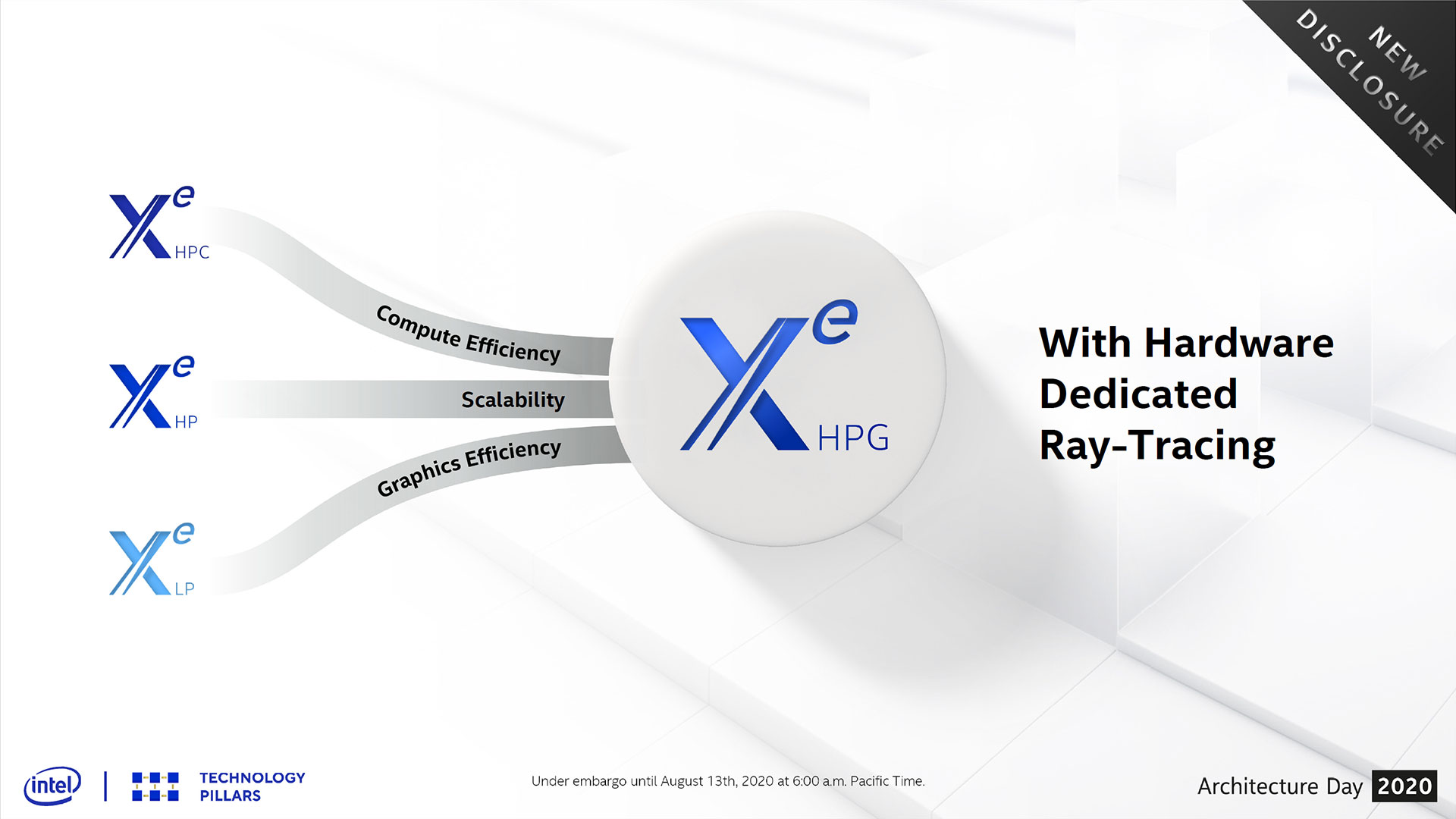

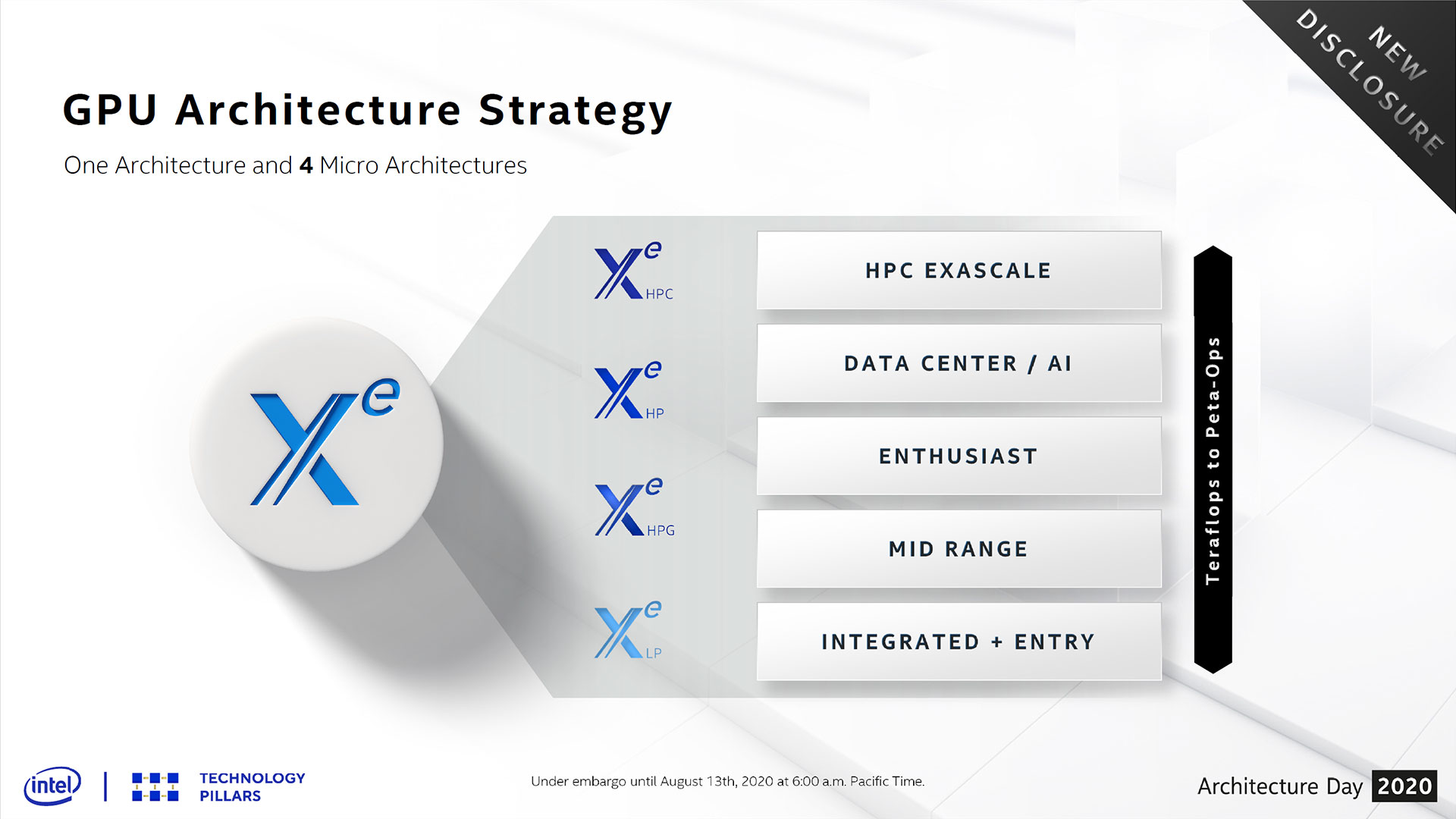

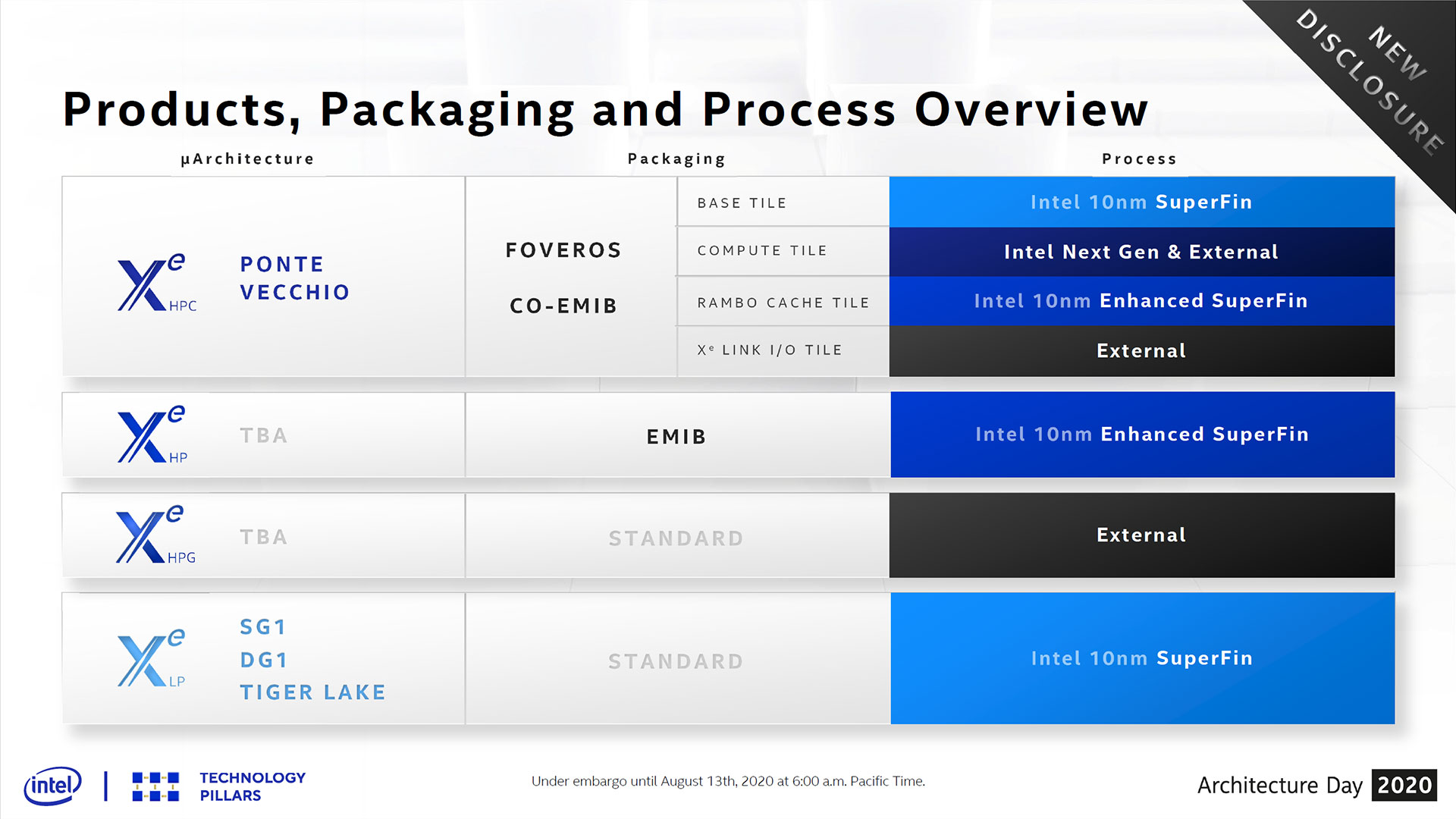

Intel originally had plans for a single graphics architecture with two microarchitectures back in 2018. Two years later, the number of microarchitectures has doubled to four. Xe LP is for integrated graphics and entry-level solutions, Xe HP is for high-end compute and data center workloads, and Xe HPC is basically Xe HP on steroids (and possibly with a shrink to 7nm, sort of), targeting supercomputing exascale solutions. That leaves the fourth and final microarchitecture that Intel just announced during its Architecture Day briefings: Intel Xe HPG.

Given what we know of Xe HP, the bifurcation into two different parts makes a lot of sense. First, Xe HP of necessity has FP64 (64-bit floating point) support, along with tensor cores. These are common features in data center compute, deep learning, and AI environments, but they're also extra bloat that's not needed for a gaming GPU. Second, Xe HP will use HBM2e memory.

Intel's Raja Koduri joked about having scars on his back from trying to launch two different HBM (high bandwidth memory) GPUs into the consumer market, referring to his time at AMD with the Fiji / R9 Fury X and Vega 10 / RX Vega 64 product launches. Both performed okay, but adopting HBM inflated the costs and the resulting prices of both GPU families, ultimately leading to less than optimal pricing and performance. For data center workloads, the additional bandwidth that HBM2e brings to the table makes sense (Nvidia uses HBM2 in its P100, V100, and A100 products), but for consumers, it's still too expensive.

Intel Xe HPG will address both of these items by removing the FP64 support (or at least trimming it way down) and by adopting GDDR6 memory. That's not the only change, however. Dumping FP64 (possibly tensor cores as well) and HBM gives Intel room to add in other features. Specifically, Intel confirmed that Xe HPG will support hardware ray tracing. That's a critical move, considering AMD's Big Navi / RDNA 2 and Nvidia's RTX 3080 Ampere will both arrive in the next month or two with ray tracing, and in November the Sony PlayStation 5 and Microsoft Xbox Series X will launch with AMD GPUs that also support the feature. Trying to break into the dedicated graphics card market with a product that lacks features the established players support wouldn't go over well.

There are still plenty of unknowns. How many EUs will Xe HPG support? Xe HP appears to have up to 512 EUs per tile, or the equivalent of 4096 shader cores (ALUs) if we're comparing it with AMD and Nvidia GPUs. AMD's Big Navi meanwhile is expected to support up to 5120 shader cores, while Nvidia's Ampere could go as high as 8192 CUDA cores (but will probably come in below that mark). With the reworking of features, Xe HPG could certainly increase the number of EUs to stay competitive, and a configuration with 640 EUs (5120 ALUs) isn't out of the question.

The other major bombshell that Intel dropped is that it plans to utilize a third party for fabrication of Xe HPG. That means it won't have to use up its limited 10nm SuperFin capacity making gaming GPUs, and it will perhaps join AMD and Nvidia by using TSMC to manufacture Xe HPG. It's either that or Samsung; either way Intel gets access to 7nm fabrication technology, though 10nm SuperFin is perhaps similar or even superior in practice — the nanometer numbers are certainly prone to being used as marketing rather than indicating true feature sizes.


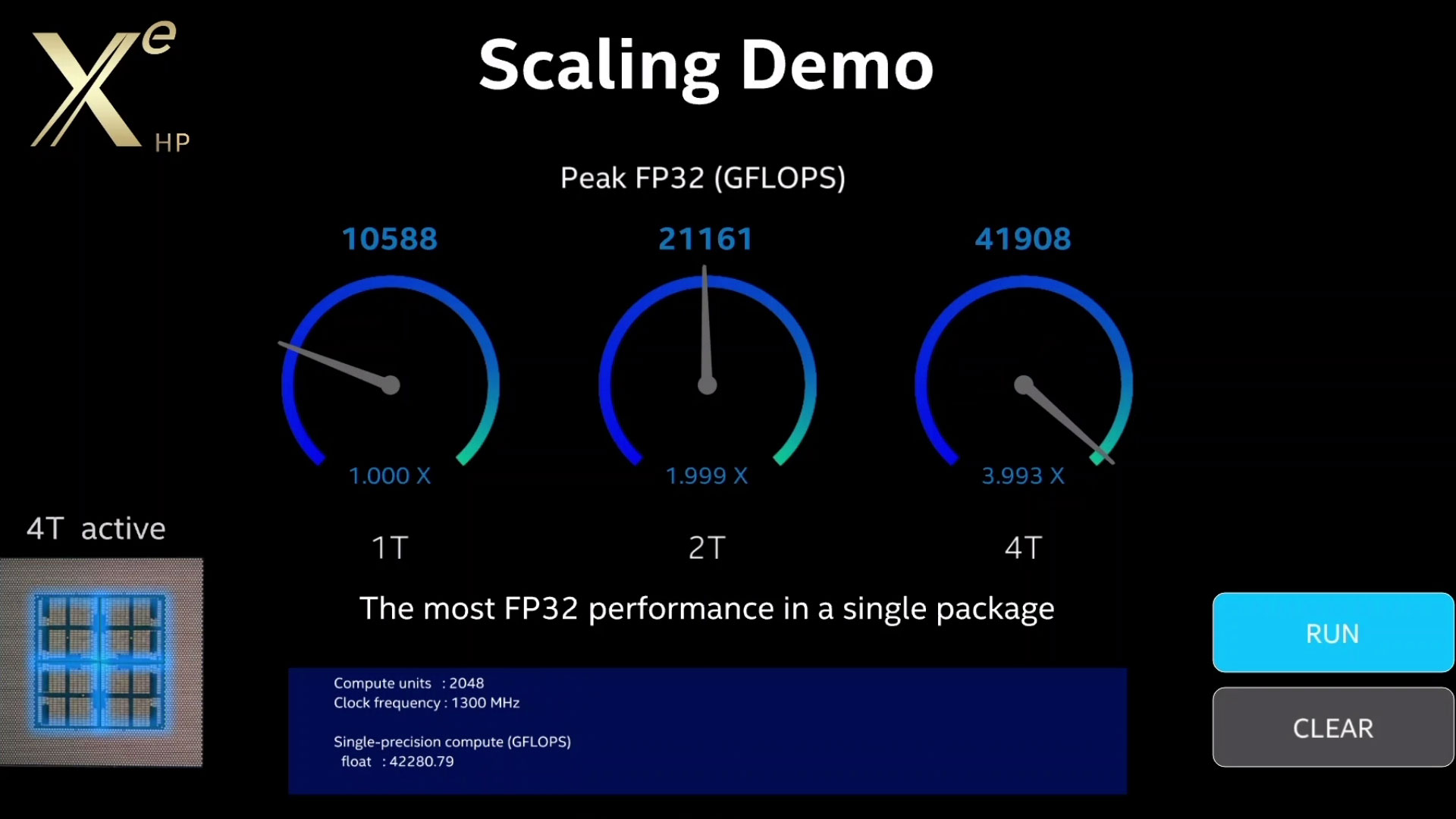

Despite everything we don't yet know about Xe HPG and Intel's enthusiast gaming aspirations, don't count Team Blue out just yet. Intel demonstrated 1-tile, 2-tile, and 4-tile variants of Xe HP running a compute workload, with almost perfect scaling based on the number of tiles. The test used early drivers, running at non-final clocks of 1300 MHz. Intel was able to deliver 10.6 TFLOPS (FP32) with a single tile and up to 42.3 TFLOPS with a 4-tile implementation.
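As a rough sanity check on those figures, here's a minimal Python sketch of the arithmetic. It assumes 8 FP32 ALUs per EU (implied by the 512 EUs = 4096 ALUs equivalence above) and 2 FLOPs per ALU per clock for a fused multiply-add; the latter is our assumption rather than something Intel stated, and the function names are purely illustrative.

```python
# Assumption: 8 FP32 ALUs per EU (from 512 EUs = 4096 ALUs in the article).
ALUS_PER_EU = 8
# Assumption: one fused multiply-add per ALU per clock, counted as 2 FLOPs.
FLOPS_PER_ALU_PER_CLOCK = 2


def peak_fp32_tflops(eus: int, clock_mhz: float) -> float:
    """Theoretical peak FP32 throughput in TFLOPS for a given EU count and clock."""
    flops_per_second = eus * ALUS_PER_EU * FLOPS_PER_ALU_PER_CLOCK * clock_mhz * 1e6
    return flops_per_second / 1e12


def scaling_efficiency(single_tile_tflops: float, multi_tile_tflops: float, tiles: int) -> float:
    """Fraction of perfect linear scaling achieved by a multi-tile result."""
    return multi_tile_tflops / (single_tile_tflops * tiles)


# Theoretical peak for one 512 EU tile at the demo's 1300 MHz clock.
one_tile_peak = peak_fp32_tflops(eus=512, clock_mhz=1300)

# Efficiency of the 4-tile demo, using the figures Intel showed.
four_tile_efficiency = scaling_efficiency(10.6, 42.3, tiles=4)

print(f"1-tile theoretical peak: {one_tile_peak:.2f} TFLOPS")
print(f"4-tile scaling efficiency: {four_tile_efficiency:.1%}")
```

Under those assumptions, a 512 EU tile at 1300 MHz works out to roughly 10.65 TFLOPS, in line with Intel's 10.6 TFLOPS figure, and the 4-tile result lands at better than 99% of perfect linear scaling.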

Obviously, this isn't the same thing as playing a game. Still, strip the extra stuff out of Xe HP, add some more EUs, and clock it at 1.7 GHz and we're talking serious firepower. If Intel can do that with Xe HPG (and we're not saying it will, merely that it could), Intel would have a gaming graphics card capable of 17.4 TFLOPS. That's far more than Nvidia's current RTX 2080 Ti, and it could end up being competitive with even Big Navi and Ampere, assuming drivers, ray tracing performance, and everything else fall into place.
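To show where a number like 17.4 TFLOPS can come from, here's a hedged back-of-the-envelope sketch in Python. It assumes 8 FP32 ALUs per EU and 2 FLOPs per ALU per clock (one fused multiply-add), and the 640 EU configuration is our speculation from earlier, not an announced Intel spec.

```python
def peak_fp32_tflops(eus, clock_ghz, alus_per_eu=8, flops_per_alu_per_clock=2):
    """Theoretical peak FP32 TFLOPS.

    alus_per_eu and flops_per_alu_per_clock are assumptions, not Intel-confirmed
    Xe HPG specifications.
    """
    gflops = eus * alus_per_eu * flops_per_alu_per_clock * clock_ghz
    return gflops / 1000.0


# (EU count, clock in GHz): the Xe HP demo tile, then two hypothetical Xe HPG parts.
configs = [(512, 1.3), (512, 1.7), (640, 1.7)]

projections = {cfg: peak_fp32_tflops(*cfg) for cfg in configs}

for (eus, clock_ghz), tflops in projections.items():
    print(f"{eus} EUs @ {clock_ghz} GHz: {tflops:.1f} TFLOPS")
```

With those assumptions, 640 EUs at 1.7 GHz works out to about 17.4 TFLOPS, while a 512 EU part at the same clock would land closer to 13.9 TFLOPS.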

The driver experience with Intel has certainly improved, at least, as we discuss further in the Xe LP article. We recently tested Ice Lake Gen11 graphics as well as other integrated graphics solutions on Intel (and AMD) CPUs from the past several years. While the older HD 4600 (Gen7) graphics failed to run several titles (it lacks DX12 and Vulkan support) and had very poor performance, UHD 630 and Iris Plus worked properly. Scaling to 20 times as many EUs as UHD 630 should do wonders for performance, naturally.

Intel's ability to scale to a 2-tile solution linked via EMIB might also solve the scaling problem we've seen with SLI and CrossFire. The two tiles would simply appear as a single GPU (maybe), meaning there's even potential for a 2-tile Xe HPG solution that could theoretically crush any current GPU. It wouldn't be cheap, and scaling in games probably wouldn't be nearly as good as what Intel showed with the compute demo above, but we'll wait and see.

We're not saying any of this will happen, of course, but even a 512 EU Xe HPG solution could be competitive. Maybe. The proverbial proof is in the eating of the pudding — ray traced pudding, naturally. The good news is that, whatever happens, the next year of GPUs is shaping up to be far more exciting than the past year. New products from AMD, Nvidia, and Intel are coming to market, all with ray tracing support, and any one of the big three could come out on top.

Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.


warezme

Intels Achilles heel has always been drivers. If they haven't sorted that out, the hardware won't matter.

jimmysmitty

warezme said: Intels Achilles heel has always been drivers. If they haven't sorted that out, the hardware won't matter.

In GPUs, yes. But they also have never really done more than just had a half decent iGPU. In others theirs are typically pretty good.

I hope they can break in and kill the duopoly that AMD and nVidia have. AMD and nVidia have price fixed before and having a third party in to push competition and possibly get us better pricing is never a bad thing.

JarredWaltonGPU

warezme said: Intels Achilles heel has always been drivers. If they haven't sorted that out, the hardware won't matter.

I discussed this in the Xe LP article, but the latest drivers have been pretty good for me. There were one or two issues I think, but with UHD 630 and Iris Plus, every game I tested at least worked. I also discussed the drivers in the recent integrated graphics testing.

https://www.tomshardware.com/uk/news/intel-xe-lp-graphics-specs

https://www.tomshardware.com/features/intel-gen11-core_i7_1065g7-tested

https://www.tomshardware.com/features/amd-vs-intel-integrated-graphics

It's by no means a 'solved' problem, but compatibility is not nearly as bad as in the Haswell and earlier days.

TerryLaze

jimmysmitty said: I hope they can break in and kill the duopoly that AMD and nVidia have. AMD and nVidia have price fixed before and having a third party in to push competition and possibly get us better pricing is never a bad thing.

Yeah, why do I doubt that intel will somehow have aggressive pricing on...anything.

I mean I wish but it's very unlikely, it's not like intel has to make sales to survive, especially desktop/gaming GPUs.

Chung Leong

Seems like a mistake to not use HBM. A first generation product is not going to achieve high volume in any event, so why launch something mediocre? Given Nvidia's market position and advantage in game optimization, an Intel solution has to have 50% more raw horsepower to be competitive. I'm not seeing that here.

jimmysmitty

TerryLaze said: Yeah, why do I doubt that intel will somehow have aggressive pricing on...anything. I mean I wish but it's very unlikely, it's not like intel has to make sales to survive, especially desktop/gaming GPUs.

It depends on the case. Core 2 was very aggressive price wise. The Core 2 Quad Q6600 was much cheaper and outperformed the Quad FX setup, AMDs then equivalent quad core system pre-Phenom.

In this case I would like to think since Intel is new to the market so to speak they would be more aggressive to start.

AnimeMania

I think Intel's Achilles heel has been killing off ideas/products before they have a chance to catch on. Nobody wants to buy a Graphics Card that won't be supported a year from now.

TCA_ChinChin

jimmysmitty said: AMD and nVidia have price fixed before and having a third party in to push competition and possibly get us better pricing is never a bad thing.

When did they price fix? Having Intel compete is nice though.

spongiemaster

TCA_ChinChin said: When did they price fix? Having Intel compete is nice though.

I don't think they ever have. It's more of a case of non-competing for the past few years. Nvidia has chosen whatever price can maintain their margins because AMD can't compete. Then a year or two later, AMD releases cards that just slightly undercut Nvidia on price, but not by enough to warrant any real response from Nvidia, keeping ASP's inflated.

JarredWaltonGPU

Chung Leong said: Seems like a mistake to not use HBM. A first generation product is not going to achieve high volume in any event, so why launch something mediocre? Given Nvidia's market position and advantage in game optimization, an Intel solution has to have 50% more raw horsepower to be competitive. I'm not seeing that here.

HBM is terribly expensive. Even AMD learned its lessons and has backed off of pushing HBM2 in the consumer segment. It has its use cases, like enterprise / HPC stuff, or when you want to use as little area as possible (eg, Navi 12 for Apple MacBook), but the price/performance just isn't necessary or beneficial for consumer products.

As far as a raw horsepower advantage, I think you're stuck in the past. Iris Plus Gen11 graphics has about 1.1 TFLOPS of computational power. It performs basically like a 1.1 TFLOPS AMD or Nvidia GPU. By which I mean that it's woefully underpowered, only half the performance of a lowly GTX 1050. Intel really needs more ALUs / EUs for its graphics ambitions, and it looks like Xe HPG may do the trick. I don't expect a 10 TFLOPS Intel part to outperform a 10 TFLOPS Nvidia part, but a 15 TFLOPS Xe HPG could probably put up a good fight against RTX 2080 Ti.

We definitely need to see Xe HPG in action before coming to any conclusions, though. Even Xe LP won't really tell us what to expect from Xe HPG, since HPG is going to rework the architecture for sure. Fundamentally, it's just a matter of getting optimized ALU cores that can run gaming workloads fast, and Intel has the R&D resources to try and make that happen. Whether it can succeed (and overcome internal politics) is a major question.