GeForce RTX 2070, 2080, or 2080 Ti: Which Nvidia Turing Card Is Right for You?

Nvidia's full lineup of Turing-based enthusiast-class gaming cards is finally here, which means gamers have a new set of graphics cards to consider. The GPU giant kicked off the RTX generation of graphics cards in mid-September with the release of the GeForce RTX 2080 ($799 / £749 for the Founders Edition version).

Two weeks later, the flagship GeForce RTX 2080 Ti ($1,199 / £1,099 for the Founders Edition model) arrived on store shelves for the first time. And as of October 18, you could pick up a Turing card on a slightly lower budget with the GeForce RTX 2070 ($599 / £549 for the Founders Edition models, or a little less for some third-party variants). But the question remains: Should you buy one?

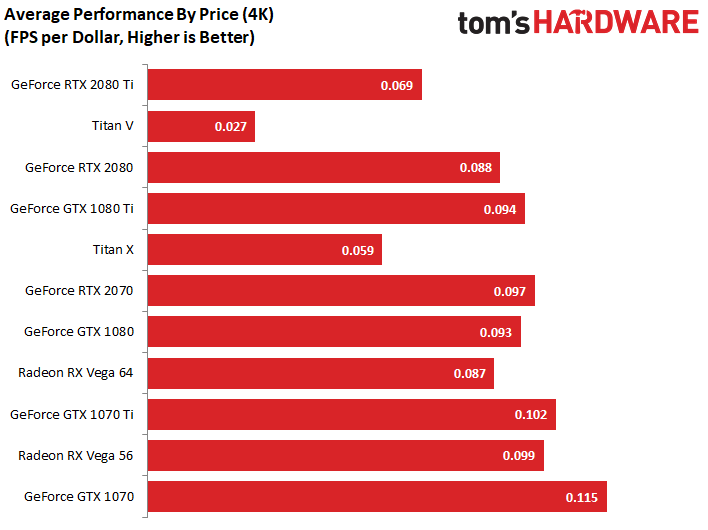

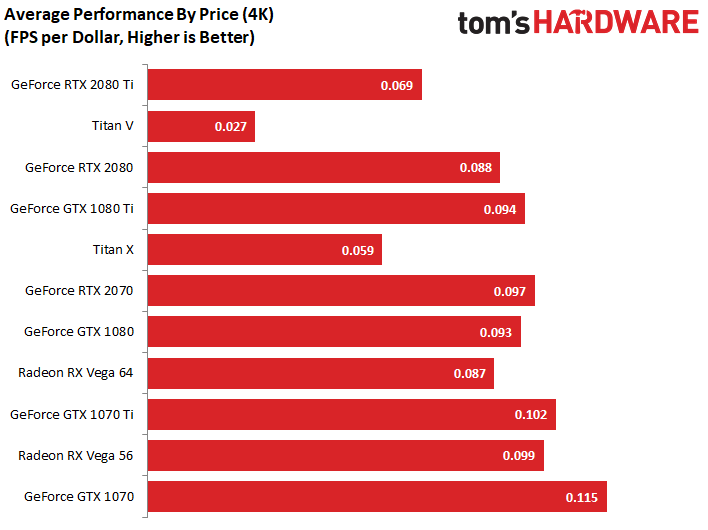

The prices of Nvidia’s latest GPUs have been a substantial sticking point with reviewers. In past generations, gamers could expect to pay upwards of $600 for an 80-class GPU, and somewhere shy of $1,000 for a Ti variant. The GeForce RTX cards, however, sell for much higher prices, which makes them a harder purchase to justify. The new GeForce RTX 2070, while considerably cheaper than the other Turing-based GPUs, is no exception. Nvidia’s 70-class GPUs have always offered great bang for your buck, but at $500 and up, the new RTX 2070 cards aren’t exactly cheap.

So, given the high prices, should you even be considering one of these new GPUs for your gaming rig? The answer depends on what you want to do with your graphics card, how long you plan to keep it, and how much of your hard-earned money you’re willing to part with to reach smooth gaming nirvana. To help you answer those questions, we'll have to dig a bit deeper.

Are You an Early Adopter?

Before you start worrying about the cost of these new GPUs, it’s important to ask yourself why you want one, and how much you’re willing to put up with to get it. Early adopters are a segment of the buying public that sees value in being among the first people to own a new product. They generally have a higher tolerance for high prices and limited compatibility, and they are often intrigued by the idea of playing with new technologies as they come to fruition.

If you’re going to be buying a GeForce RTX card anytime soon, you'd better land squarely in the early adopter category.

What Turing Brings to the Table

Nvidia’s new Turing architecture promises great things: It’s the first GPU architecture to feature RT cores designed for and dedicated to real-time ray tracing, which enables its new hybrid rendering technology. The company’s launch presentation focused primarily on ray tracing, with CEO Jensen Huang hyping its arrival as “the holy grail of our industry.” To be sure, the lighting effects and realistic reflections that ray tracing enables are striking.

The Turing architecture also brings Tensor cores to gamers for the first time, and Nvidia is using these AI-dedicated cores to leverage machine learning technology to improve image quality in games. Nvidia’s Deep Learning Super Sampling (DLSS) technology promises lower GPU load compared to other super sampling and anti-aliasing techniques, while simultaneously producing a crisper image. You'll find more in-depth coverage of Nvidia's new GPUs and their technology in our Turing deep dive.

Let's take a quick look at the specs of these new cards.

| Specification | GeForce RTX 2080 Ti Founders Edition | GeForce RTX 2080 Founders Edition | GeForce RTX 2070 Founders Edition |
| --- | --- | --- | --- |
| Price | $1,199 | $799 | $599 |
| CUDA Cores | 4352 | 2944 | 2304 |
| Boost Clock | 1635MHz (OC) | 1800MHz (OC) | 1710MHz (OC) |
| Base Clock | 1350MHz | 1515MHz | 1410MHz |
| Memory | 11GB GDDR6 | 8GB GDDR6 | 8GB GDDR6 |
| USB Type-C and VirtualLink | Yes | Yes | Yes |
| Maximum Resolution | 7680x4320 | 7680x4320 | 7680x4320 |
| Connectors | DisplayPort, HDMI, USB Type-C | DisplayPort, HDMI, USB Type-C | DisplayPort, HDMI, USB Type-C |
| Graphics Card Power | 260W | 225W | 225W |
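For a rough sense of how the list prices scale with the hardware, the Founders Edition prices and CUDA core counts from the spec table can be divided out. This is only a back-of-the-envelope sketch: dollars per CUDA core is not a performance measure, and the function names (`dollars_per_core`, `cost_table`) are our own illustrative helpers, not anything Nvidia publishes.

```python
# Founders Edition list prices (USD) and CUDA core counts,
# copied from the spec table in this article.
cards = {
    "GeForce RTX 2080 Ti": {"price_usd": 1199, "cuda_cores": 4352},
    "GeForce RTX 2080": {"price_usd": 799, "cuda_cores": 2944},
    "GeForce RTX 2070": {"price_usd": 599, "cuda_cores": 2304},
}


def dollars_per_core(card):
    """Return the list price divided by the CUDA core count."""
    return card["price_usd"] / card["cuda_cores"]


def cost_table(cards):
    """Map each card name to its price per CUDA core, rounded to 3 places."""
    return {name: round(dollars_per_core(spec), 3) for name, spec in cards.items()}


if __name__ == "__main__":
    for name, value in cost_table(cards).items():
        print(f"{name}: ${value:.3f} per CUDA core")
```

By this crude measure, the three cards land within a couple of cents of each other per core, with the RTX 2070 slightly cheapest. Clock speeds, memory, and real-world benchmarks matter far more than this ratio when choosing a card.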

Ray Tracing Is Promising, But It Ain’t Here Yet

Once game developers adopt Nvidia’s new technologies (if they do), the Turing-based GPUs stand to gain a boost in visual realism and real-world performance that should put them well ahead of the aging Pascal-based cards. However, until Turing’s specialty technology is in play, Nvidia’s last-generation lineup is hard to ignore.

Currently, there are no games that support ray tracing, and the list of announced titles is a short one, with release dates mostly unknown. Battlefield V is one of the few titles set to support ray tracing this year, but we’ve yet to see the performance impact of enabling the feature. Disappointingly, EA delayed Battlefield V's release until November.

Deep Learning Super Sampling Is No Better

Nvidia’s AI-based DLSS technology could dramatically improve performance and image fidelity on high-resolution panels and in the best VR headsets in the future, but we don't expect huge benefits from it in the near term.

DLSS technology has a good chance for rapid adoption because, unlike with ray tracing, developers can retroactively enable DLSS in older games. However, as with ray tracing, no games exist today with DLSS enabled, and the list of coming DLSS-enabled games is also short.

We have no doubt that more than a few games will eventually support Turing’s new technologies. But the truth is, we really don’t have any idea if Nvidia’s promises will pan out, and we’ll likely be waiting for a long time before Turing’s flagship features are widely used by the game industry. By then, Nvidia may have a new generation of GPUs available that outpace the Turing lineup. So you probably shouldn’t let Turing’s fancy new tech severely sway your buying decision just yet.

That said, there are compelling reasons to consider Nvidia’s new GPU lineup when you buy your next gaming card.

Undeniable Performance for High-Resolution Gaming

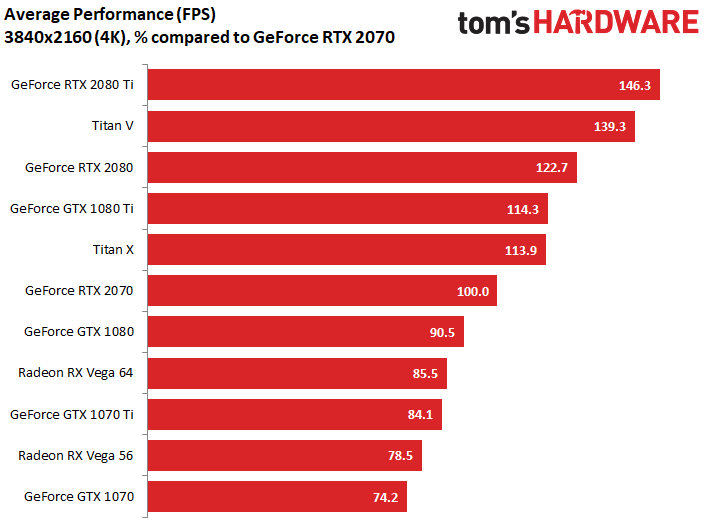

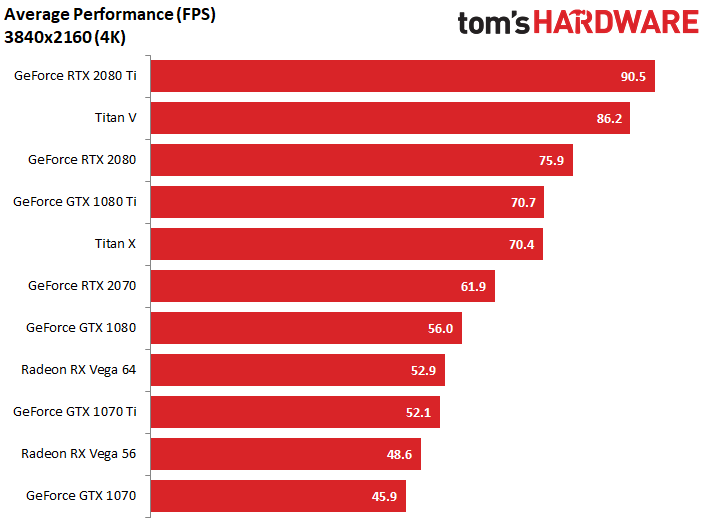

Apart from the RT and Tensor cores and the technologies that they enable, Turing-based GPUs also bring high levels of performance to the table. The top-of-the-line GeForce RTX 2080 Ti is unquestionably the fastest gaming GPU available. You won’t find a better option to drive your 4K resolution display.

As we said in our review, "If you aspire to game at 4K and don’t want to choose between smooth frame rates and maxed-out graphics quality, GeForce RTX 2080 Ti is the card to own. There’s just no way around it."

The GeForce RTX 2080 isn't as powerful as the RTX 2080 Ti, but it provides roughly the performance of the previous-generation GTX 1080 Ti. It also delivers fantastic performance on 2560 x 1440 displays, including high-refresh-rate options.

As you can see in the charts below, the RTX 2080 doesn't lag all that far behind its pricier Ti counterpart, despite costing $400 less.

Another Option for 1440p Gaming

Nvidia’s GeForce RTX 2080 and its inflated $800 price are hard to justify for 1440p gaming, but Nvidia does offer an alternative that you may want to consider. You can get your hands on a GeForce RTX 2070 for as little as $500 (£421). That's still a good chunk of change, but you would have been hard-pressed to find a GTX 1080 for that price in the months before the Turing launch.

Our RTX 2070 review sample walked all over our GTX 1080 in no fewer than 14 tests. So even though it costs more money, an RTX 2070 delivers better performance. Plus you get those RTX-specific features--even though we don't know exactly how well ray tracing and DLSS will work on this lower-end Turing silicon.

An RTX 2080 would squeeze out a few extra frames per second, but an RTX 2070 will get the 1440p job done for a lot less money.

Great Deals on 10-Series Cards

Nvidia’s three Turing GPUs each offer compelling reasons to consider picking one up. But at their current prices, the company’s outgoing Pascal GPUs offer equally compelling reasons to ignore the new generation of cards. Third-party card makers are slashing prices to help push the old stock out the door, and as a result there has never been a better time to pick up a great 10-series GPU for a really great price.

You can find GeForce GTX 1080 Ti cards, which until September represented the pinnacle of consumer gaming cards, for as little as $650. That’s a much better deal than a GeForce RTX 2080 for $700-$800 if all you want is raw graphics performance.

And now that GeForce RTX 2070 cards are on the market, other 10-series cards are dropping in price too. GTX 1080 cards hit the market two years ago for $599 and up, but today you can pick one up for around $450.

If raw performance is what you’re after, and you’re not interested in gambling on the potential benefits of ray tracing and DLSS, you can’t go wrong with a discounted 10-series card. Even a GTX 1070 Ti, which you can pick up for under $400 these days, is a good buy. But realistically, if you're shopping for sub-$400 cards, you aren't seriously considering a $500-plus RTX 2070 anyway.

Don’t expect these deals to stick around, though. Nvidia’s partners are sitting on overstock of 10-series GPUs, but at these prices, the stockpile won’t last long. Soon enough, a GeForce RTX card will be your only viable option for a new Nvidia graphics card.

Still a Difficult Choice

So, what do we ultimately recommend? Well, if you have deep pockets, a 4K screen, and don’t mind spending money to have the best of the best, you can’t go wrong with an RTX 2080 Ti. Really, there’s no other option for uncompromising high-resolution gaming.

If you game on a 2560 x 1440 resolution display, the choice isn't as clear. If you can find a GTX 1080 Ti for under $650 (£500), that would be a good buy. Alternatively, a $500 (£385) RTX 2070 would be an even better deal, albeit a less powerful option.

A GTX 1080 for under $450 (£346) is also a compelling option, but we think the extra performance and the potential of ray tracing and DLSS are worth the $50 (£38) premium if you're buying a graphics card today. If you're spending close to $500 (£385) anyway, you might as well buy into current-gen tech--even if we are still waiting to find out exactly what that tech can actually do.
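To put the premiums discussed above in relative terms, here is a minimal sketch that compares the approximate street prices quoted in this article. The `premium` helper is a hypothetical name introduced for illustration, and the prices are the rounded figures from the text, not live retail data.

```python
# Approximate street prices in USD, as quoted in this article.
street_prices = {
    "GTX 1080": 450,     # "under $450"
    "RTX 2070": 500,     # "as little as $500"
    "GTX 1080 Ti": 650,  # "as little as $650"
}


def premium(prices, card, baseline):
    """Return (dollar difference, percent difference) of card over baseline."""
    diff = prices[card] - prices[baseline]
    return diff, 100 * diff / prices[baseline]


if __name__ == "__main__":
    for card, baseline in [("RTX 2070", "GTX 1080"), ("GTX 1080 Ti", "RTX 2070")]:
        diff, pct = premium(street_prices, card, baseline)
        print(f"{card} vs. {baseline}: ${diff:+d} ({pct:+.1f}%)")
```

Seen this way, the step from a GTX 1080 to an RTX 2070 is a much smaller jump in relative terms than the step from an RTX 2070 to a GTX 1080 Ti.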

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.

jaber2 *80TI this or next version in about 5 years, my 1080Ti does all I want to do with current games

quilciri I wonder how many torches & pitchforks of people who bought 20 series cards becaue of Tom's will be outside their offices when AMD's 7nm cards drop.

compprob237 Until GPU prices come down to even more reasonable prices I'll be sticking with my GTX 970. There's no cards yet to perform well enough to warrant a purchase at roughly the same price.

beoza I have a GTX 970 as well, but I was looking at upgrading to a 1080 as the 970 is getting a bit old in the tech world. But if the 2070 is offering the same if not better performance than the 1080 I think I'll upgrade to the 2070. I play some games that are more CPU dependent like WoW (14yrs later they decide to work on optimizing for multi-core/multi-threaded), and others that utilize more of the GPU and can take advantage of more CPU cores. It's time for a GPU upgrade, I think my i7 6700K still has some life left in it.

Math Geek i tend to buy mid range or lower cards so none of them matter to me right now. looking to replace my 280 but am waiting to see what the 2050/60 models look like as they are closer to my preferred price range. though a current 1060 6gb looks good if the price is right :)

don't care at all about eye candy in games so the new stuff is meaningless to me and not likely to be on the lower end 2000 series anyway from what i've read. not in a big hurry so if AMD is coming soon with their new stuff, i'll see what they have to offer as wel since i prefer to support them over nvidia to keep the competition alive.

Posty351 Seems like 1070 to 1080ti is still the way to go. The RTX's small bump in performance just don't justify the huge bump in price. I just went from a 970 to a 1080ti and could not be happier. It will last me a few years until 4k GPU's are affordable.

Jim90 "GeForce RTX 2070, 2080, or 2080 Ti: Which Nvidia Turing Card Is Right for You?"

For the sane purchaser...none!

Giroro The Ray-Tracing and AI hardware is a total waste of die space and money when it comes to gaming, even if its just $50. Most people will be better off putting that extra money towards RAM, SSD, their CPU, or even one of those little Optane modules. Literally half the hardware on the die generally won't be supported by devs for *years*, if ever. Just imagine how much better this generation would have been if that die space had been devoted to the actual GPU. Instead, the RTX line are just faulty quadro chips, so Nvidia is just doing the bare minimum amount of marketing to sell you on leftover features that are really only useful when companies like Disney and Google fill a warehouse with racks of Turing cards.

If Nvidia is still supporting these features in a generation or two, then maybe it's worth looking into (although Nvidia RARELY supports throw-away leftover enterprise features like these past the pre-launch sales pitch). At this point, I don't have much confidence that Ray-Tracing and AI hardware will even be supported within the same generation in a RTX 2060/2050. They have to cut out about 3/4 of the die to get costs down enough to compete with AMD's current options in the mainstream market. But, if they made that cut and continued to include the wasted hardware, then those chips would only be able to eke out about 75% the performance of the last-gen equivalents.

In the least, I think that it will at least be quite awhile before we see a RTX 2060 - because the reduced performance would force Nvidia to rebrand a would-be RTX 2060/2050 as an overpriced RTX 2030/2040.

Maybe they'll even have to split the product lines and introduce a graphics-only GTX 2060 or skip directly to a chip that can be cut into a GTX 2180/2170/2160/2150.

Bottom line, Nvidia has really marketed themselves into a corner trying to sucker people into all this this useless ray-tracing and AI crap. They're trying to justify a short-term (crypto influenced?) price-hike in a way that is ultimately going to do a lot of long-term harm to their branding.