Tom's Hardware Verdict

Nvidia’s GeForce RTX 2080 Founders Edition is a bit faster than the existing GeForce GTX 1080 Ti, but at a $100-higher price. As the Turing architecture’s RT and Tensor cores are utilized by game developers, the RTX 2080 is expected to perform even better. For now, though, gamers with high-end graphics in their existing PCs will find this more of a side-grade than an upgrade.

Pros

- Ideal complement to high-refresh QHD monitors
- Many games run smoothly at 4K and maximum quality
- Improved thermal solution performance helps sustain higher GPU Boost clocks than previous generation
- Packed with future-looking tech to accelerate next-gen games with ray tracing and AI support

Cons

- $800 price tag is higher than many existing GeForce GTX 1080 Ti cards, which perform competitively
- Dual axial fan design exhausts heat back into your case

GeForce RTX 2080 Founders Edition

GeForce RTX 2080 doesn’t get a day in the sun. It’s thrust upon us, born alongside a handsomer, more athletic GeForce RTX 2080 Ti sibling. Enthusiasts fawn over that card’s ability to dribble through 4K resolutions at maximum quality without breaking a sweat. Though the 2080 Ti is obscenely expensive, it knows no equal and therefore sets a new bar for the competition to ogle. We all love a winner.

And then there’s GeForce RTX 2080. No slouch itself, the TU104-based board was bound to be fast by virtue of genetics. Indeed, Nvidia’s Founders Edition implementation generally outperforms the GeForce GTX 1080 Ti—a once-king of gaming performance. But it’s burdened by an $800 price tag. At a time when you can still find GTX 1080 Tis for $700, slightly higher frame rates from a more expensive RTX 2080 fail to impress. And so we wait…either for the supply of previous-gen Pascal GPUs to dry up, or third-party 2080s to appear at the $700 price point Nvidia promised back when Turing was announced.

Fortunately, GeForce RTX 2080’s prospects for the future are promising. Not only does the card serve up GTX 1080 Ti-class performance, but it also supports the Turing-exclusive features that we know Nvidia is working hard to make available: real-time ray tracing via fixed-function RT cores, DLSS and AI denoising through its Tensor cores, mesh shaders, variable rate shading—all of the capabilities covered in Nvidia’s Turing Architecture Explored: Inside the GeForce RTX 2080.

TU104: Turing With Middle Child Syndrome

Like the TU102 GPU found in GeForce RTX 2080 Ti, TSMC manufactures TU104 on its 12nm FinFET node. But a transistor count of 13.6 billion results in a smaller 545 mm² die. “Smaller,” of course, requires a bit of context. Turing Jr out-measures the last generation’s 471 mm² flagship (GP102).

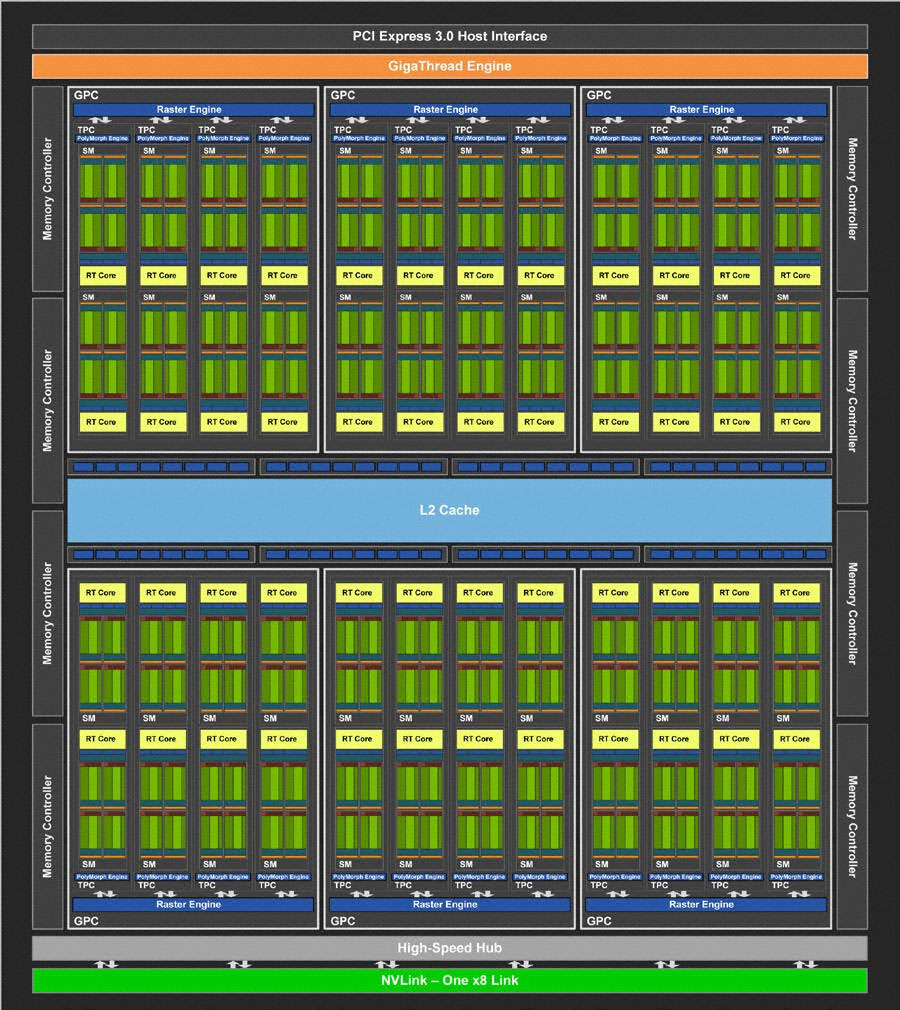

TU104 is constructed with the same building blocks as TU102; it just features fewer of them. Streaming Multiprocessors still sport 64 CUDA cores, eight Tensor cores, one RT core, four texture units, 16 load/store units, 256KB of register space, and 96KB of L1 cache/shared memory. TPCs are still composed of two SMs and a PolyMorph geometry engine. Only here, there are four TPCs per GPC, and six GPCs spread across the processor. Therefore, a fully enabled TU104 wields 48 SMs, 3072 CUDA cores, 384 Tensor cores, 48 RT cores, 192 texture units, and 24 PolyMorph engines. A correspondingly narrower back end feeds the compute resources through eight 32-bit GDDR6 memory controllers (256-bit aggregate) attached to 64 ROPs and 4MB of L2 cache.
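The totals in the paragraph above follow directly from the GPC/TPC/SM hierarchy. As a minimal sketch (the function and class names here are illustrative, not anything from Nvidia), the arithmetic can be written out like this:

```python
from dataclasses import dataclass

# Per-SM resources as listed in the paragraph above.
CUDA_PER_SM = 64
TENSOR_PER_SM = 8
RT_PER_SM = 1
TEXTURE_PER_SM = 4
SMS_PER_TPC = 2  # each TPC also carries one PolyMorph engine


@dataclass(frozen=True)
class GpuConfig:
    sms: int
    cuda_cores: int
    tensor_cores: int
    rt_cores: int
    texture_units: int
    polymorph_engines: int


def turing_config(gpcs: int, tpcs_per_gpc: int, disabled_tpcs: int = 0) -> GpuConfig:
    """Total up unit counts for a Turing-style GPC/TPC/SM hierarchy.

    `disabled_tpcs` is a hypothetical knob for modeling cut-down parts.
    """
    tpcs = gpcs * tpcs_per_gpc - disabled_tpcs
    sms = tpcs * SMS_PER_TPC
    return GpuConfig(
        sms=sms,
        cuda_cores=sms * CUDA_PER_SM,
        tensor_cores=sms * TENSOR_PER_SM,
        rt_cores=sms * RT_PER_SM,
        texture_units=sms * TEXTURE_PER_SM,
        polymorph_engines=tpcs,
    )


# Fully enabled TU104: six GPCs with four TPCs each.
full_tu104 = turing_config(gpcs=6, tpcs_per_gpc=4)
print(full_tu104)
```

Calling `turing_config(gpcs=6, tpcs_per_gpc=4, disabled_tpcs=1)` models a TU104 with one TPC switched off, which is how the GeForce RTX 2080 configuration is derived.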

TU104 also loses an eight-lane NVLink connection, limiting it to one x8 link and 50 GB/s of bi-directional throughput.

GeForce RTX 2080: TU104 Gets A (Tiny) Haircut

After seeing the GeForce RTX 2080 Ti serve up respectable performance in Battlefield V at 1920x1080 with ray tracing enabled, we can’t help but wonder if GeForce RTX 2080 is fast enough to maintain playable frame rates. Even a complete TU104 GPU is limited to 48 RT cores compared to TU102’s 68. But because Nvidia goes in and turns off one of TU104’s TPCs to create GeForce RTX 2080, another pair of RT cores is lost (along with 128 CUDA cores, eight texture units, 16 Tensor cores, and so on).

Unfortunately, we’ll have to wait for another day to measure RTX 2080’s alacrity in ray-traced games. There simply aren’t any available yet. UL did send us its 3DMark Ray Tracing Tech Demo to check out. And we were able to record some video from the Star Wars Reflections demo running on a GeForce RTX 2080 Ti. But the real excitement happens in a couple of months when game developers implement the first hybrid rendering paths. Until then, GeForce RTX 2080’s ability to keep up in those workloads remains a mystery.

So, in the end, GeForce RTX 2080 struts onto the scene with 46 SMs hosting 2944 CUDA cores, 368 Tensor cores, 46 RT cores, 184 texture units, 64 ROPs, and 4MB of L2 cache. Eight gigabytes of 14 Gb/s GDDR6 on a 256-bit bus move up to 448 GB/s of data, adding more than 100 GB/s of memory bandwidth beyond what GeForce GTX 1080 could do.
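The 448 GB/s figure is simply the per-pin data rate multiplied by the bus width, converted from bits to bytes. A small sketch of that calculation, with a helper name of our own choosing:

```python
def memory_bandwidth_gbs(data_rate_gbps: float, bus_width_bits: int) -> float:
    """Peak memory bandwidth in GB/s.

    data_rate_gbps: per-pin transfer rate in Gb/s (14 for this card's GDDR6).
    bus_width_bits: aggregate memory bus width (256 for GeForce RTX 2080).
    """
    return data_rate_gbps * bus_width_bits / 8  # 8 bits per byte


rtx_2080_bw = memory_bandwidth_gbs(14, 256)

# GeForce GTX 1080's 320 GB/s is taken from the spec table, not computed here.
gtx_1080_bw = 320.0
advantage = rtx_2080_bw - gtx_1080_bw

print(f"RTX 2080: {rtx_2080_bw:.0f} GB/s, {advantage:.0f} GB/s more than GTX 1080")
```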

| Specification | GeForce RTX 2080 Ti FE | GeForce RTX 2080 FE | GeForce GTX 1080 Ti FE | GeForce GTX 1080 FE |
|---|---|---|---|---|
| Architecture (GPU) | Turing (TU102) | Turing (TU104) | Pascal (GP102) | Pascal (GP104) |
| CUDA Cores | 4352 | 2944 | 3584 | 2560 |
| Peak FP32 Compute | 14.2 TFLOPS | 10.6 TFLOPS | 11.3 TFLOPS | 8.9 TFLOPS |
| Tensor Cores | 544 | 368 | N/A | N/A |
| RT Cores | 68 | 46 | N/A | N/A |
| Texture Units | 272 | 184 | 224 | 160 |
| Base Clock Rate | 1350 MHz | 1515 MHz | 1480 MHz | 1607 MHz |
| GPU Boost Rate | 1635 MHz | 1800 MHz | 1582 MHz | 1733 MHz |
| Memory Capacity | 11GB GDDR6 | 8GB GDDR6 | 11GB GDDR5X | 8GB GDDR5X |
| Memory Bus | 352-bit | 256-bit | 352-bit | 256-bit |
| Memory Bandwidth | 616 GB/s | 448 GB/s | 484 GB/s | 320 GB/s |
| ROPs | 88 | 64 | 88 | 64 |
| L2 Cache | 5.5MB | 4MB | 2.75MB | 2MB |
| TDP | 260W | 225W | 250W | 180W |
| Transistor Count | 18.6 billion | 13.6 billion | 12 billion | 7.2 billion |
| Die Size | 754 mm² | 545 mm² | 471 mm² | 314 mm² |
| SLI Support | Yes (x8 NVLink, x2) | Yes (x8 NVLink) | Yes (MIO) | Yes (MIO) |

Nvidia’s Founders Edition card sports a 1515 MHz base frequency and 1800 MHz GPU Boost rating. Peak FP32 compute performance of 10.6 TFLOPS puts GeForce RTX 2080 behind GeForce GTX 1080 Ti (11.3 TFLOPS), but well ahead of GeForce GTX 1080 (8.9 TFLOPS). Of course, the faster Founders Edition model also uses more power. Its 225W TDP is 10W higher than the reference GeForce RTX 2080, and a full 45W above last generation’s GeForce GTX 1080. Still, 225W is low enough that Nvidia gets away with one six- and one eight-pin supplementary power connector (versus RTX 2080 Ti’s pair of eight-pin connectors).
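For readers who want to check the peak FP32 numbers, they fall out of the CUDA core count and GPU Boost rating, assuming the usual convention of two floating-point operations per core per clock (one fused multiply-add). The sketch below uses the figures from the spec table; the function name is ours:

```python
def peak_fp32_tflops(cuda_cores: int, boost_mhz: float) -> float:
    """Peak FP32 throughput, counting one FMA (2 FLOPs) per core per clock."""
    flops = cuda_cores * 2 * boost_mhz * 1e6
    return flops / 1e12


# (CUDA cores, GPU Boost rate in MHz) from the spec table.
cards = {
    "GeForce RTX 2080 Ti FE": (4352, 1635),
    "GeForce RTX 2080 FE": (2944, 1800),
    "GeForce GTX 1080 Ti FE": (3584, 1582),
    "GeForce GTX 1080 FE": (2560, 1733),
}

for name, (cores, boost) in cards.items():
    print(f"{name}: {peak_fp32_tflops(cores, boost):.1f} TFLOPS")
```

These are theoretical ceilings at the rated boost clock. Real cards often run above or below that frequency depending on thermals and power limits.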

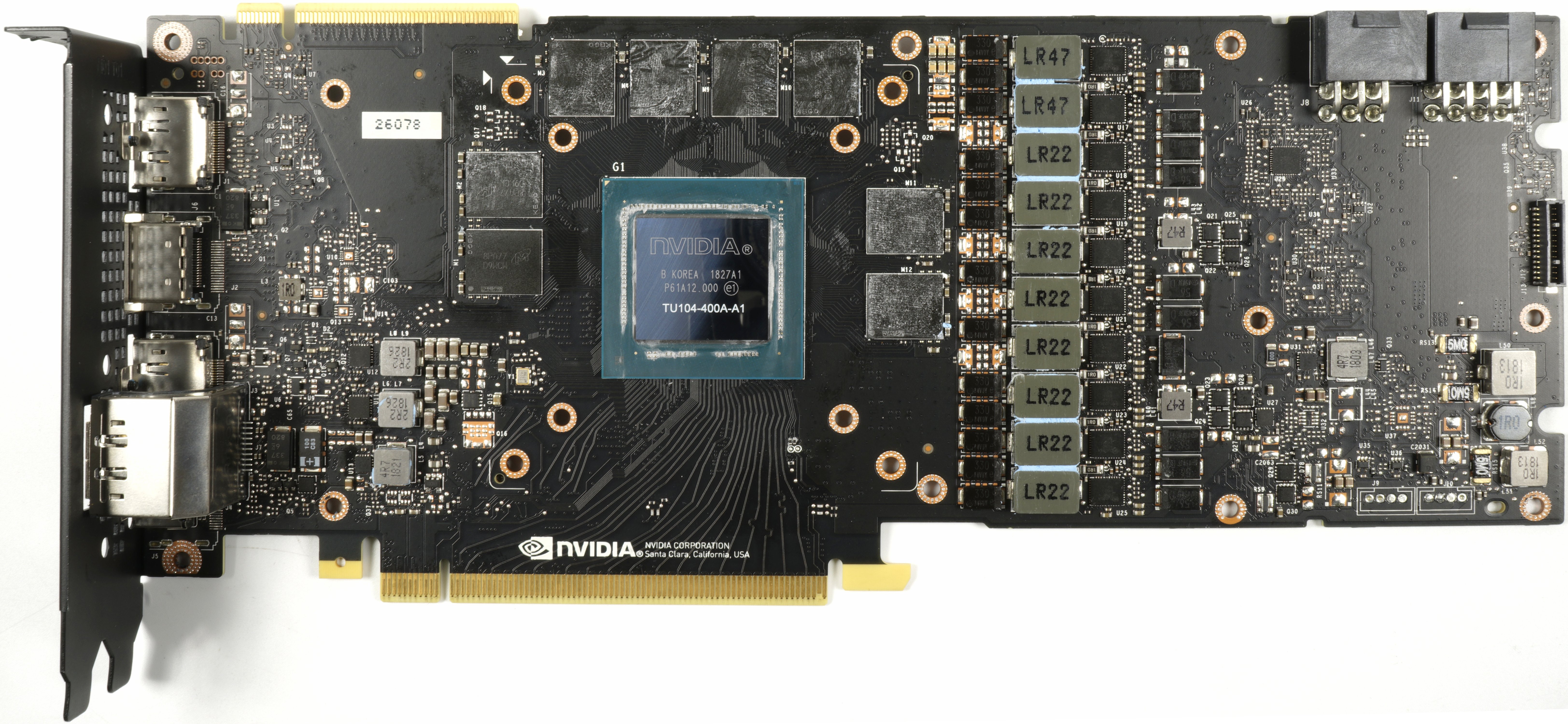

With its thermal solution removed, the GeForce RTX 2080’s PCB looks a little tidier than what we found on GeForce RTX 2080 Ti. After all, it hosts far fewer components. The power supply, for example, is a conventional 8 (GPU) + 2 (memory)-phase design. Nvidia didn’t need any of the trickery we discovered on its flagship. Six of the GPU’s phases are fed by the aforementioned power connectors (along with the memory’s phases), while the other two originate at the PCIe slot.

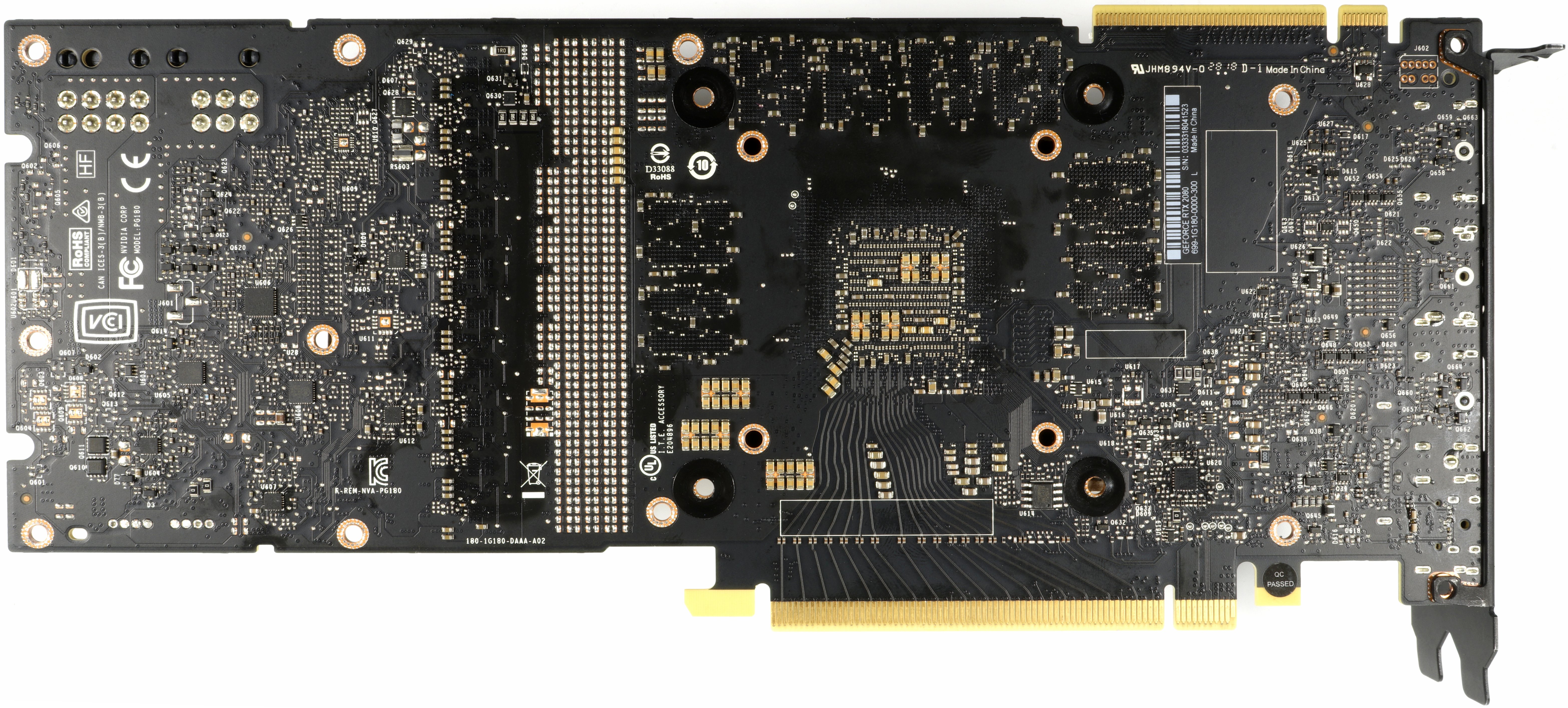

The PWM controller responsible for the GPU’s power phases is surface-mounted around back, while the one corresponding to Micron’s GDDR6 modules is up toward the top, under a PCIe connector.

It’s easy to tell where the memory phases are located; they’re up top as well, next to the higher-inductance coils.

GPU Power Supply

Front and center in this design is uPI's uP9512 eight-phase buck controller specifically designed to support next-gen GPUs. Per uPI, "the uP9512 provides programmable output voltage and active voltage positioning functions to adjust the output voltage as a function of the load current, so it is optimally positioned for a load current transient."

The uP9512 supports Nvidia's Open Voltage Regulator Type 4i+ technology with PWMVID. This input is buffered and filtered to produce a very accurate reference voltage. The output voltage is then precisely controlled to the reference input. An integrated SMBus interface offers enough flexibility to optimize performance and efficiency, while also facilitating communication with the appropriate software.

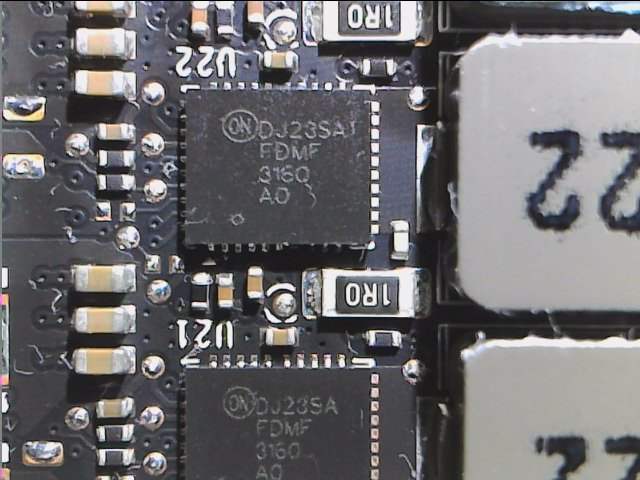

All 10 voltage regulation circuits (eight for the GPU, two for the memory) are equipped with an ON Semiconductor FDMF3160 Smart Power Stage module with integrated PowerTrench MOSFETs and driver ICs.

As usual, the coils rely on encapsulated ferrite cores, but this time they are rectangular to make room for the voltage regulator circuits.

Memory Power Supply

Micron's MT61K256M32JE-14:A memory ICs are powered by two phases coming from a second uP9512. The same FDMF3160 Smart Power Stage modules crop up yet again. The 470nH coils offer greater inductance than the ones found on the GPU power phases, but they're completely identical in terms of physical dimensions.

Input filtering is handled by three 1μH coils, and each of the three supply lines has a matching shunt. A shunt is a very low-value resistor: the voltage drop across it is measured in parallel and passed on to the telemetry. Through these circuits, Nvidia can limit board power in a precise way.
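To illustrate the principle only, here is a rough sketch of shunt-based power monitoring using Ohm's law. The shunt resistance, rail voltage, and voltage-drop readings are hypothetical values picked for easy arithmetic; we did not measure them on this card, and Nvidia's actual telemetry logic is not public.

```python
def rail_power_watts(shunt_drop_mv: float, shunt_mohm: float, rail_voltage: float) -> float:
    """Power drawn through one supply line.

    Current follows from Ohm's law across the shunt (mV / mOhm = A),
    and power is that current times the rail voltage.
    """
    current_a = shunt_drop_mv / shunt_mohm
    return current_a * rail_voltage


def board_power_watts(rails) -> float:
    """Sum the power of every monitored supply line."""
    return sum(rail_power_watts(*rail) for rail in rails)


# Hypothetical readings: (shunt drop in mV, shunt resistance in mOhm, rail voltage in V)
# for the PCIe slot, six-pin, and eight-pin inputs.
readings = [
    (20.0, 5.0, 12.0),
    (30.0, 5.0, 12.0),
    (40.0, 5.0, 12.0),
]

POWER_LIMIT_W = 225.0  # the Founders Edition TDP quoted in this review

total = board_power_watts(readings)
print(f"Board power: {total:.0f} W, over limit: {total > POWER_LIMIT_W}")
```

Because the shunt resistance is tiny, the measurement costs very little power, which is why this approach is common for board-level telemetry.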

Unfortunately for the folks who like a bit of redundancy, this card only comes equipped with one BIOS.

How We Tested GeForce RTX 2080

Nvidia’s latest and greatest will no doubt be found in one of the many high-end platforms now available from AMD and Intel. Our graphics station still employs an MSI Z170 Gaming M7 motherboard with an Intel Core i7-7700K CPU at 4.2 GHz, though. The processor is complemented by G.Skill’s F4-3000C15Q-16GRR memory kit. Crucial’s MX200 SSD remains, joined by a 1.4TB Intel DC P3700 loaded down with games.

As far as competition goes, we can assume that GeForce RTX 2080 is bested by GeForce RTX 2080 Ti and Titan V, both of which we have in our test pool. We also compare GeForce GTX 1080 Ti, Titan X, GeForce GTX 1080, GeForce GTX 1070 Ti, and GeForce GTX 1070 from Nvidia. AMD is represented by the Radeon RX Vega 64 and 56. All cards are either Founders Edition or reference models. We do have some partner boards in-house from both Nvidia and AMD, and plan to use those for third-party reviews.

Our benchmark selection now includes Ashes of the Singularity: Escalation, Battlefield 1, Civilization VI, Destiny 2, Doom, Far Cry 5, Forza Motorsport 7, Grand Theft Auto V, Metro: Last Light Redux, Rise of the Tomb Raider, Tom Clancy’s The Division, Tom Clancy’s Ghost Recon Wildlands, The Witcher 3, and World of Warcraft: Battle for Azeroth. We were working on adding Monster Hunter: World, Shadow of the Tomb Raider, Wolfenstein II, and a couple of others, but had to scrap those plans due to very limited time with Nvidia’s final driver for its Turing-based cards.

The testing methodology we're using comes from PresentMon: Performance In DirectX, OpenGL, And Vulkan. In short, all of these games are evaluated using a combination of OCAT and our own in-house GUI for PresentMon, with logging via AIDA64.
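Tools in the PresentMon family log one frame time per presented frame (in PresentMon's CSV output this is typically the `MsBetweenPresents` column, which we treat as an assumption here). The sketch below shows one common way to turn such a list into an average frame rate and a 99th-percentile frame time. It is not our in-house tool, and the nearest-rank percentile method is just one reasonable choice.

```python
import math


def average_fps(frame_times_ms):
    """Average frame rate: frames rendered divided by total elapsed seconds."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def percentile_frame_time(frame_times_ms, pct=99.0):
    """Nearest-rank percentile of frame times, in milliseconds."""
    ordered = sorted(frame_times_ms)
    rank = math.ceil(pct * len(ordered) / 100.0)
    return ordered[max(rank, 1) - 1]


# Made-up sample: 98 smooth frames at 10 ms plus two 50 ms stutters.
sample = [10.0] * 98 + [50.0] * 2

print(f"Average: {average_fps(sample):.1f} FPS")
print(f"99th percentile frame time: {percentile_frame_time(sample):.1f} ms")
```

The sample shows why percentile frame times matter: two stutters barely dent the average, but they dominate the 99th-percentile figure.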

All of the numbers you see in today’s piece are fresh, using updated drivers. For Nvidia, we’re using build 411.51 for GeForce RTX 2080 Ti and 2080. The other cards were tested with build 398.82. Titan V’s results were spot-checked with 411.51 to ensure performance didn’t change. AMD’s cards utilize Crimson Adrenalin Edition 18.8.1, which was the latest at test time.


velocityg4: What? No “just buy it” in your conclusion.

Considering Founders edition usually starts about $100 more that standard edition. Plus, it is new to market. If a 2080 can be had for $100 more than a 1080 Ti. The price is as expected.

Krazie_Ivan: 2080 should have been the 2070, as it barely beats a 1080ti and is the TU104 die. and given the 30mo since Pascal launch, we should almost be looking at 3000 series benches. combine those two with the insane pricing, and Turing/RTX is a huge disappointment. DLSS could be nice and i'm glad Nvidia is pushing for RT development, but there's not enough positives here to justify the costs. $380 2080 / $500 2080ti (and relabel them to match their die codes, like Keplar-Pascal)... otherwise, no thx.

chaosmassive: thank you for your thorough review on these cards,

finally the card has been demystified and indeed for the price is it not worth the buy considering 1080 ti in such a low price..

turned out I dont need ray tracing in my life before I die.

shrapnel_indie:

“After seeing the GeForce RTX 2080 Ti serve up respectable performance in Battlefield V at 1920x1080 with ray tracing enabled,”

“Unfortunately, we’ll have to wait for another day to measure RTX 2080’s alacrity in ray-traced games. There simply aren’t any available yet.”

Odd... either ray tracing graphics games are available or they're not. You can't test what isn't available for testing... and RT for BF5, last I heard was a zero-day patch... (or was it the modifications to RT that was supposed to improve FPS to acceptable levels.)

cangelini:

21333311 said: After seeing the GeForce RTX 2080 Ti serve up respectable performance in Battlefield V at 1920x1080 with ray tracing enabled,

Unfortunately, we’ll have to wait for another day to measure RTX 2080’s alacrity in ray-traced games. There simply aren’t any available yet.

Odd... either ray tracing graphics games are available or they're not. You can't test what isn't available for testing... and RT for BF5, last I heard was a zero-day patch... (or was it the modifications to RT that was supposed to improve FPS to acceptable levels.)

They're not available, but we've seen Battlefield 5 in action with ray tracing enabled ;)

WINTERLORD: wait a minute the 2080 has only one RT core and the 2080 has 72 RT cores? I think there may be an error in the review. update spoke to soon i think that means 1rt cluster...

first page says " TU104 is constructed with the same building blocks as TU102; it just features fewer of them. Streaming Multiprocessors still sport 64 CUDA cores, eight Tensor cores, one RT core, four texture units, 16 load/store units, 256KB of register space, and 96KB of L1 cache/shared memory. "

jimmysmitty:

21333069 said: failure, this is a failure.

this gtx20 series looks like it won't be worth it.

I think sales will determine that and if history is anything without stiff competition from AMD I am sure they will sell just fine especially once the AiB cards come out.

21333082 said: What? No “just buy it” in your conclusion.

Considering Founders edition usually starts about $100 more that standard edition. Plus, it is new to market. If a 2080 can be had for $100 more than a 1080 Ti. The price is as expected.

Chris has never been like that.

That said, the pricing should be decent for AiB after a few months. When they launch they get price gouged. Still I would have loved a GTX 1080 price number. That GPU outperformed the 980 Ti by a good margin and was cheaper at launch.

Maybe AMD will come out with something sometime soon. Otherwise we wont see pricing drop. That or AMD will take advantage of the pricing increase and up theirs too.