Nvidia Uses Neural Network for Innovative Texture Compression Method

Nvidia proposes using neural networks for an innovative texture compression method.

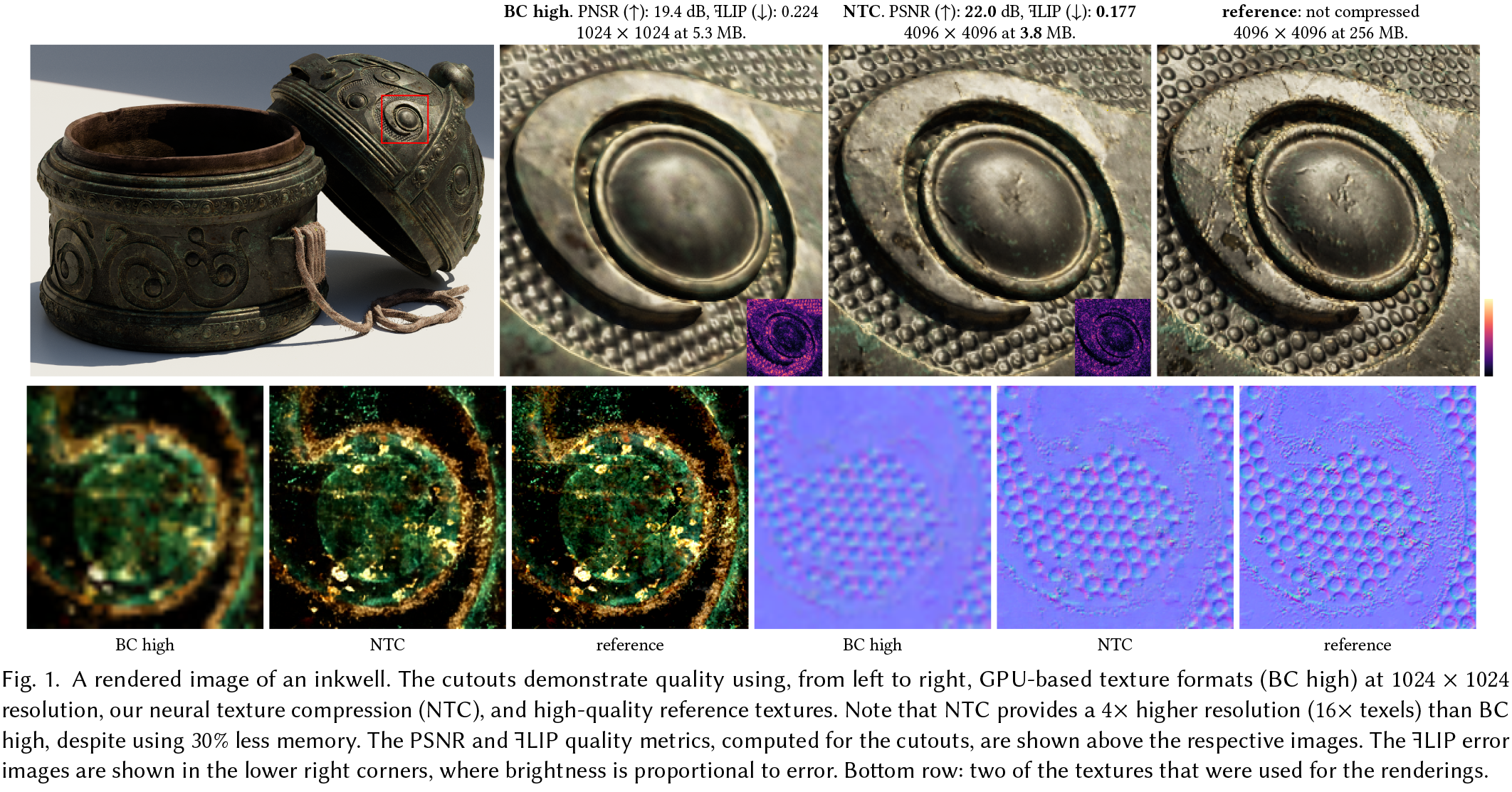

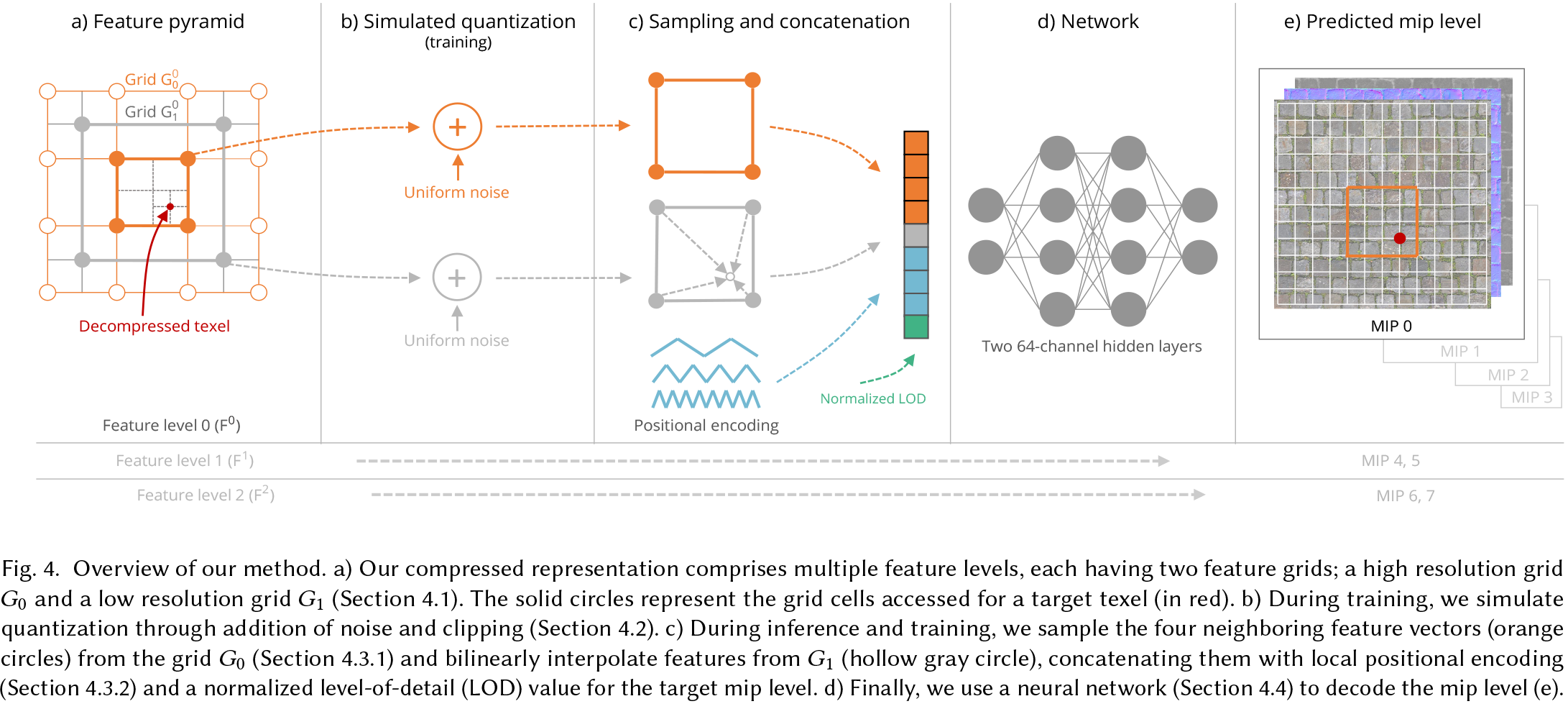

Nvidia this week introduced its new texture compression method that provides four times higher resolution than traditional Block Truncation Coding (BTC, BC) methods while having similar storage requirements. The core concept of the proposed approach is to compress multiple material textures and their mipmap chains collectively and then decompress them using a neural network that is trained for a particular pattern it decompresses. In theory, the method can even impact future GPU architectures. Yet, for now the method has limitations.

New Requirements

Recent advancements in real-time rendering for video games have approached the visual quality of movies due to usage of such techniques as physically-based shading for photorealistic modeling of materials, ray tracing, path tracing, and denoising for accurate global illumination. Meanwhile, texturing techniques have not really advanced at a similar pace mostly because texture compression methods essentially remained the same as in the late 1990s, which is why in some cases many objects look blurry in close proximity.

This is because GPUs still rely on block-based texture compression methods. These techniques offer very efficient hardware implementations (the fixed-function hardware that supports them has evolved for over two decades), random access, data locality, and near-lossless quality. However, they are designed for moderate compression ratios between 4x and 8x and are limited to a maximum of 4 channels. Modern real-time renderers often require more material properties, necessitating multiple textures.
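To make the 4x to 8x figure concrete, here is a minimal sketch of the arithmetic behind fixed-rate block formats. It assumes an uncompressed baseline of 8-bit RGBA (32 bits per texel) and uses the block sizes of the standard BC1 and BC7 formats (8 and 16 bytes per 4x4 block); neither the baseline nor the choice of formats comes from the article, and the function names are illustrative.

```python
# Fixed-rate block compression: every 4x4 block of texels occupies a fixed
# number of bytes, which is what makes random access cheap for the GPU.
BLOCK_TEXELS = 4 * 4


def bits_per_texel(block_bytes: int) -> float:
    """Bits spent per texel for a format storing each 4x4 block in block_bytes."""
    return block_bytes * 8 / BLOCK_TEXELS


def compression_ratio(block_bytes: int, uncompressed_bpp: int = 32) -> float:
    """Ratio versus an uncompressed texture (assumed 8-bit RGBA by default)."""
    return uncompressed_bpp / bits_per_texel(block_bytes)


bc1_ratio = compression_ratio(8)    # BC1: 8 bytes per block, 4 bits per texel
bc7_ratio = compression_ratio(16)   # BC7: 16 bytes per block, 8 bits per texel
print(bc1_ratio, bc7_ratio)
```

Under these assumptions BC1 lands at the 8x end of the range and BC7 at the 4x end.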

Nvidia's Method

This is where Nvidia's Random-Access Neural Compression of Material Textures (NTC) comes into play. Nvidia's technology enables two additional levels of detail (16x more texels, so four times higher resolution) while keeping storage requirements similar to those of traditional texture compression methods. This means that compressed textures with per-material optimization at resolutions of up to 8192 x 8192 (8K) are now feasible.
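The relationship between extra levels of detail, texel count, and resolution can be checked with a few lines of arithmetic. This is only a sketch: the 2048 x 2048 starting size is a hypothetical baseline chosen because two extra levels bring it to the 8192 x 8192 figure quoted above, and the function names are illustrative.

```python
def resolution_multiplier(extra_levels: int) -> int:
    """Each additional level of detail doubles the width and the height."""
    return 2 ** extra_levels


def texel_multiplier(extra_levels: int) -> int:
    """Doubling both dimensions quadruples the texel count per level."""
    return 4 ** extra_levels


base_side = 2048  # hypothetical baseline texture size, not from the article
new_side = base_side * resolution_multiplier(2)
print(resolution_multiplier(2), texel_multiplier(2), new_side)
```

Two extra levels give 4x the resolution per axis and 16x the texels, matching the figures in the paragraph above.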

To do so, NTC exploits redundancies spatially, across mipmap levels, and across different material channels. This ensures that texture detail is preserved when viewers are in close proximity to an object, something that modern methods cannot enable.

Nvidia claims that NTC textures are decompressed using matrix-multiplication hardware such as tensor cores operating in a SIMD-cooperative manner, which means that the new technology does not require any special purpose hardware and can be used on virtually all modern Nvidia GPUs. But perhaps the biggest concern is that every texture requires its own optimized neural network to decompress, which puts some additional load on game developers.
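As a rough illustration of why decompression maps onto matrix-multiplication hardware, the sketch below decodes a single texel by looking up a small feature vector and pushing it through a tiny two-layer network. Everything here is an assumption made for illustration: the class name, the grid and layer sizes, the nine output channels, and the random (untrained) weights. The article does not describe Nvidia's actual network layout, and a real decoder would use weights optimized for one specific material.

```python
import random


def make_matrix(rows, cols, rng):
    """Random weights standing in for a network trained on one material."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    """Matrix-vector product: the operation tensor cores accelerate in bulk."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def relu(vector):
    return [max(0.0, x) for x in vector]


class ToyMaterialDecoder:
    """Hypothetical per-material decoder: latent grid plus a tiny MLP."""

    def __init__(self, grid_size=8, features=4, hidden=16, channels=9, seed=0):
        rng = random.Random(seed)
        self.grid_size = grid_size
        # Low-resolution grid of latent features shared by all channels.
        self.grid = [
            [[rng.uniform(-1.0, 1.0) for _ in range(features)]
             for _ in range(grid_size)]
            for _ in range(grid_size)
        ]
        # Network input: latent features plus (u, v, mip level).
        self.w1 = make_matrix(hidden, features + 3, rng)
        self.w2 = make_matrix(channels, hidden, rng)

    def decode(self, u, v, mip=0):
        """Decode all material channels for one texel, independently of others."""
        gx = min(int(u * self.grid_size), self.grid_size - 1)
        gy = min(int(v * self.grid_size), self.grid_size - 1)
        inputs = self.grid[gy][gx] + [u, v, float(mip)]
        return matvec(self.w2, relu(matvec(self.w1, inputs)))


decoder = ToyMaterialDecoder()
texel = decoder.decode(0.25, 0.75, mip=1)
print(len(texel))
```

Because each call depends only on its own coordinates, any texel can be decoded without touching its neighbours, which is the random-access property the method's name refers to. The sketch also returns every channel at once, which mirrors the article's point that NTC always decompresses all material channels together.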

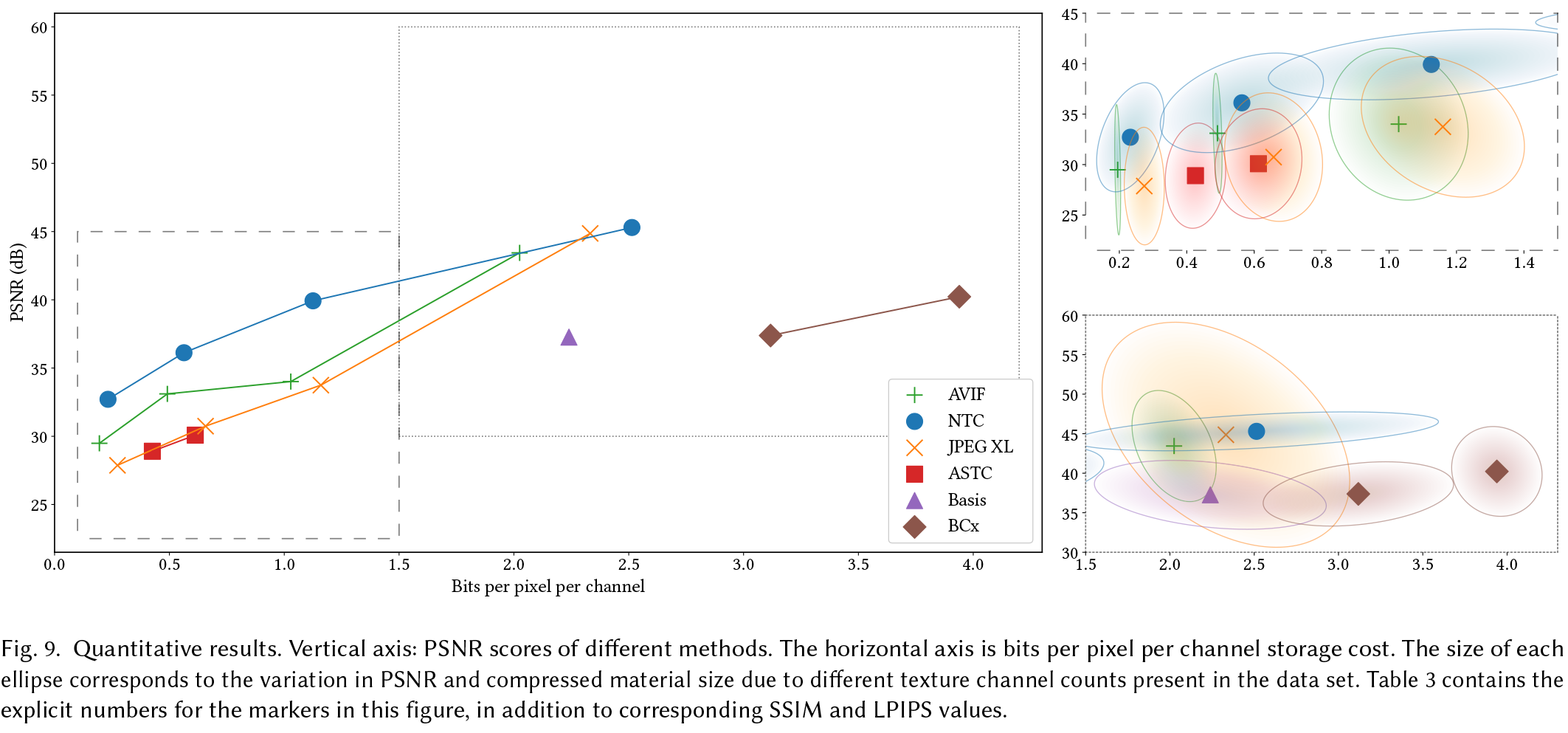

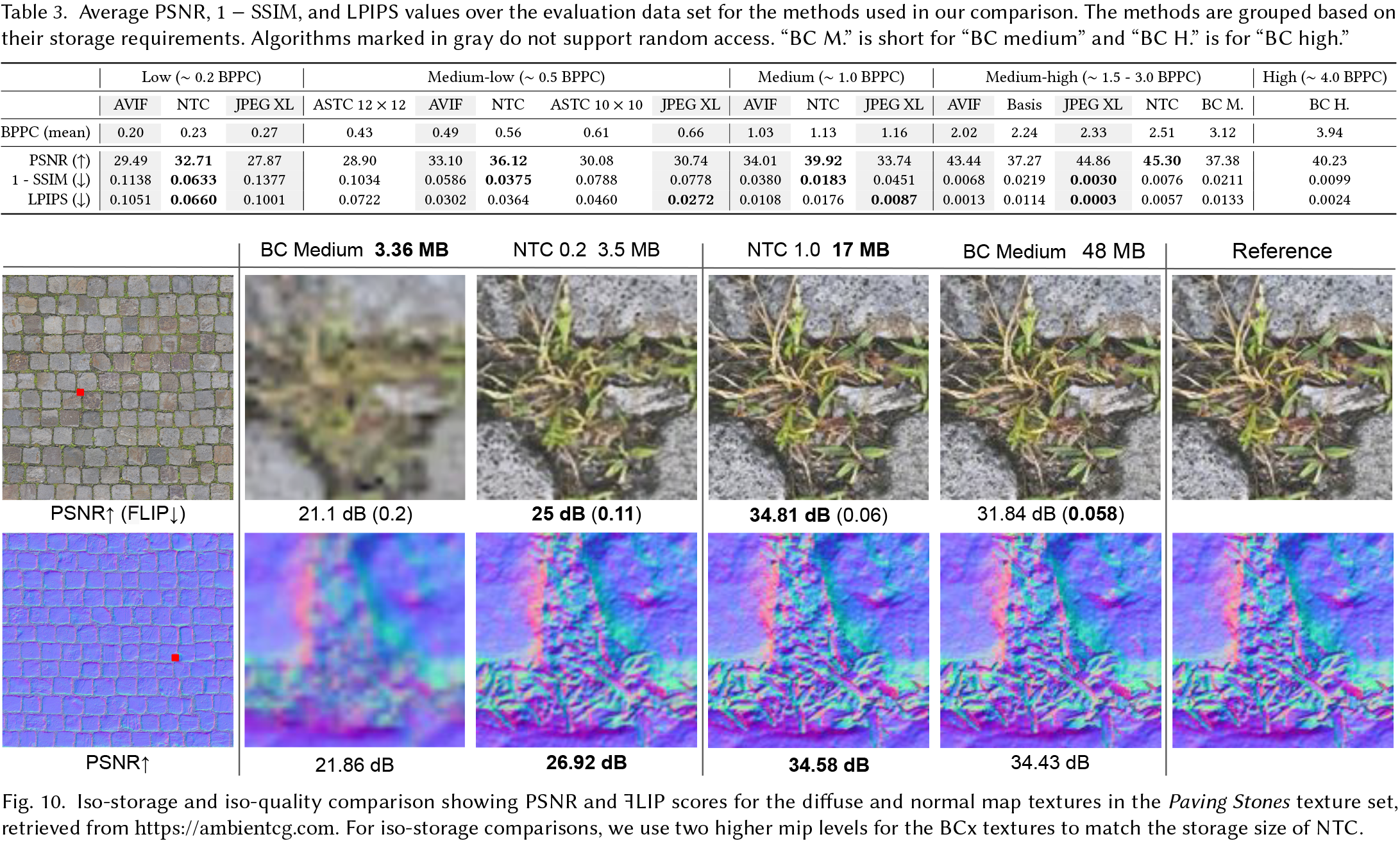

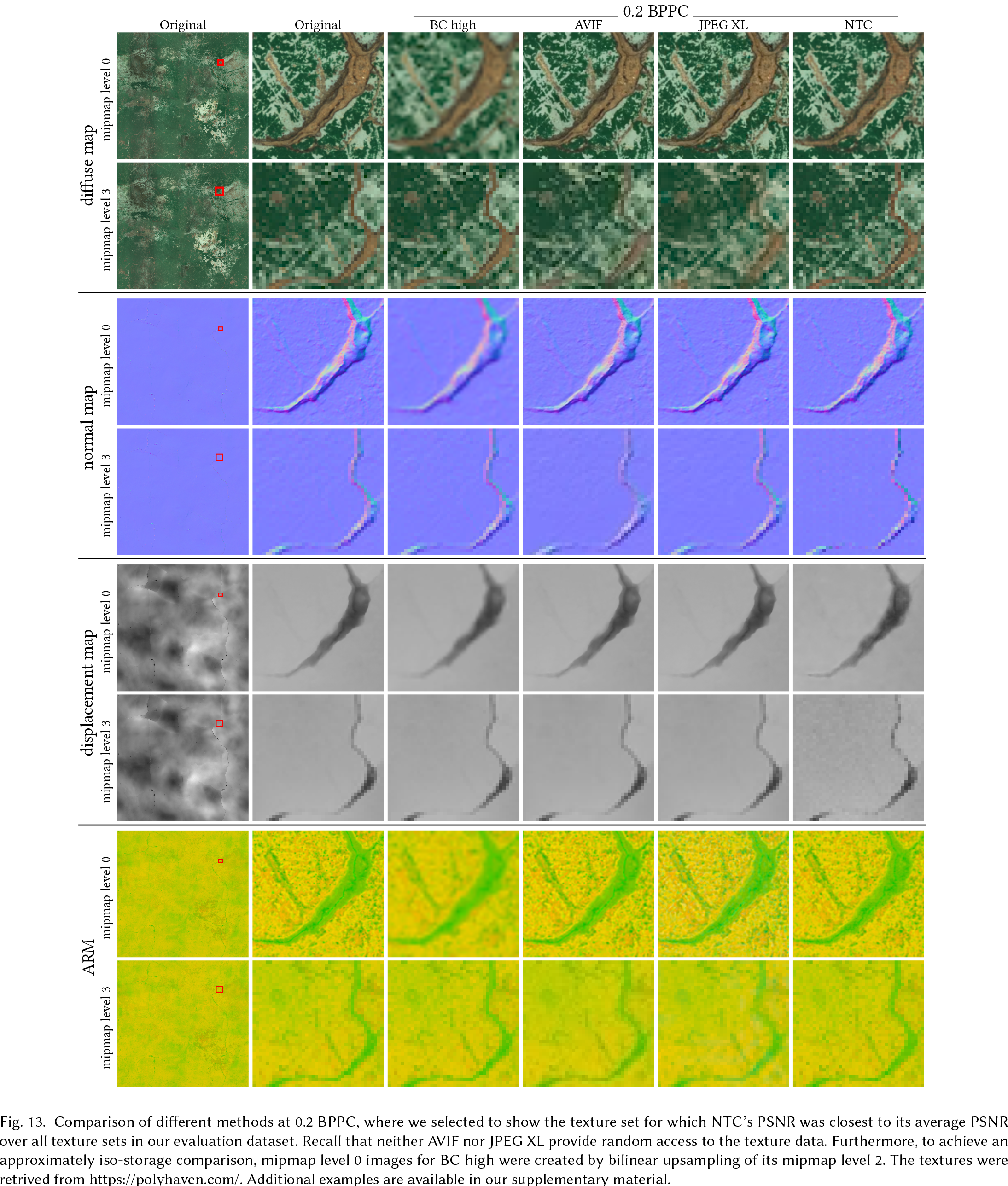

Nvidia says that the resulting texture quality at these aggressively low bitrates is comparable to or better than that of recent image compression standards, such as AVIF and JPEG XL, which are not designed for real-time decompression with random access anyway.


Practical Advantages and Disadvantages

Indeed, images demonstrated by Nvidia clearly show that NTC is better than traditional Block Coding-based technologies. However, Nvidia admits that its method is slower than traditional methods (it took a GPU 1.15 ms to render a 4K image with NTC textures and 0.49 ms to render a 4K image with BC textures), but it provides 16x more texels albeit with stochastic filtering.
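The two timings quoted above can be put in perspective with some quick arithmetic. The 60 frames-per-second budget used here is an assumption for illustration and does not come from Nvidia's measurements.

```python
ntc_ms = 1.15  # time to render a 4K image with NTC textures (from the article)
bc_ms = 0.49   # time to render a 4K image with BC textures (from the article)

slowdown = ntc_ms / bc_ms       # how many times slower NTC is
extra_ms = ntc_ms - bc_ms       # absolute added cost per frame

frame_budget_ms = 1000 / 60     # assumed 60 fps target, roughly 16.7 ms
budget_share = ntc_ms / frame_budget_ms

print(f"{slowdown:.2f}x slower, +{extra_ms:.2f} ms, "
      f"{budget_share:.1%} of a 60 fps frame")
```

In other words, NTC is a bit more than twice as slow in this test, but the absolute cost is well under a millisecond of extra time per frame.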

While NTC is more resource-intensive than conventional hardware-accelerated texture filtering, the results show that it delivers high performance and is suitable for real-time rendering. Moreover, in complex scenes using a fully-featured renderer, the cost of NTC can be partially offset by the simultaneous execution of other tasks (e.g., ray tracing) due to the GPU's ability to hide latency.

Meanwhile, rendering with NTC can be accelerated by new hardware architectures, an increased number of dedicated matrix-multiplication units, and increased cache sizes and register usage. In fact, some of these optimizations can be made at the programmable level.

Nvidia also admits that NTC is not a completely lossless method of texture compression and produces visual degradation at low bitrates. It also has some limitations, such as sensitivity to channel correlation, uniform resolution requirements, and limited benefits at larger camera distances. Furthermore, its advantages are proportional to channel count and may not be as significant for lower channel counts. Also, since NTC is optimized for material textures and always decompresses all material channels, it is potentially unsuitable for use in other rendering contexts.

While the advantage of NTC is that it does not use fixed-function texture filtering hardware to produce its superior results, this is also its key disadvantage. Without that hardware, texture filtering is computationally expensive, which is why for now anisotropic filtering with NTC is not feasible for real-time rendering. Meanwhile, stochastic filtering can introduce flickering.
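A small sketch may help show what stochastic filtering trades away. Instead of blending four neighbouring texels, a stochastic bilinear filter fetches just one of them, chosen with a probability equal to its bilinear weight, so the result is correct on average but noisy per sample. This is a generic form of the technique written for illustration; whether Nvidia's implementation works exactly this way is an assumption, and the function names and the tiny 2x2 texture are hypothetical.

```python
import math
import random


def _taps(tex, x, y):
    """Return the four neighbouring texels and their bilinear weights."""
    height, width = len(tex), len(tex[0])
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    fx, fy = x - x0, y - y0
    x1, y1 = min(x0 + 1, width - 1), min(y0 + 1, height - 1)
    return [
        (tex[y0][x0], (1 - fx) * (1 - fy)),
        (tex[y0][x1], fx * (1 - fy)),
        (tex[y1][x0], (1 - fx) * fy),
        (tex[y1][x1], fx * fy),
    ]


def bilinear(tex, x, y):
    """Conventional filter: four fetches, weighted blend."""
    return sum(texel * weight for texel, weight in _taps(tex, x, y))


def stochastic_bilinear(tex, x, y, rng):
    """Stochastic filter: one fetch, picked in proportion to its weight."""
    taps = _taps(tex, x, y)
    texels = [texel for texel, _ in taps]
    weights = [weight for _, weight in taps]
    return rng.choices(texels, weights=weights, k=1)[0]


tex = [[0.0, 1.0], [2.0, 3.0]]
rng = random.Random(1)
samples = [stochastic_bilinear(tex, 0.25, 0.5, rng) for _ in range(20000)]
print(bilinear(tex, 0.25, 0.5), sum(samples) / len(samples))
```

Each individual stochastic sample is one of the raw texel values rather than the blended value, so with a neural decoder only one texel needs to be decompressed per lookup. The per-sample noise is also why the result can flicker from frame to frame unless it is averaged over time.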

But despite its limitations, NTC's compression of multiple channels and mipmap levels together produces a result that exceeds industry standards. Nvidia's researchers believe that their approach is paving the way for cinematic-quality visuals in real-time rendering and is practical for memory-constrained graphics applications. Yet, it introduces a modest timing overhead compared to simple BTC algorithms, which impacts performance.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.


hotaru.hino I'm imagining the holy grail of this would be just have the AI procedurally generate a texture.

Metal Messiah. From the blog post, it appears that NVIDIA’s algorithm is representing these in three dimensions, tensors like a 3-D matrix. But the only thing that NTC assumes is that each texture has the same size which can be a drawback of this method if not implemented properly.

That's why the render time is actually higher than BC, and also visual degradation at low bitrates. All the maps need to be the same size before compression, which is bound to complicate workflows, and algorithm's speed.

But it's an interesting concept nonetheless. NTC seems to employ matrix multiplication techniques, which at least makes it more feasible and versatile due to reduced disk and/or memory limitations.

And with GPU manufacturers being strict with VRAM even on the newest mainstream/mid-range GPUs, the load is now on software engineers to find a way to squeeze more from the hardware available today. Maybe we can see this as more feasible after 2 or 3 more generations.

Kind of reminds me of PyTorch implementation.

hotaru251 tl;dr NVIDIA wants industry to move towards its specific tech part of market.

Metal Messiah. said: with GPU manufacturers being strict with VRAM even on the newest mainstream/mid-range GPUs,

only really true of Nvidia. (i mean they gave 60 tier more than their 70 tier last gen and that made completely no sense)

AMD's been pretty generous with vram on their gpu's.

hotaru.hino

hotaru251 said: tl;dr NVIDIA wants industry to move towards its specific tech part of market.

If it does a significantly better job than anything else, then the industry will move to it anyway, licensing fees be damned.

Sleepy_Hollowed While cool, I don't see this being money or time spent by game devs, unless nvidia or someone takes it upon themselves to make it transparent to the developers without causing any issues.

The schedules for games are already insane, and some huge budget titles either get pushed back a lot, or worse, get released with a lot of issues on different systems (cyberpunk anyone?), so I don't see this being adopted until it's mature or nvidia is willing to bleed money to make it a monopoly, which is always possible.

derekullo

hotaru.hino said: I'm imagining the holy grail of this would be just have the AI procedurally generate a texture.

That sounds nice, but the phrase a picture is worth a 1000 words works against us here.

Without a very specific set of parameters for the AI to follow everyone would get a different texture.

I can type 99 red balloons into Stable diffusion and get 99 different sets of 99 red balloons, each one slightly different from the last.

hotaru.hino

derekullo said: That sounds nice, but the phrase a picture is worth a 1000 words works against us here. Without a very specific set of parameters for the AI to follow everyone would get a different texture. I can type 99 red balloons into Stable diffusion and get 99 different sets of 99 red balloons, each one slightly different from the last.

And that would be fine for certain things where randomness is expected, like say tree bark, grass, ground clutter, dirt or other general messiness.

bit_user

"Meanwhile, texturing techniques have not really advanced at a similar pace mostly because texture compression methods essentially remained the same as in the late 1990s, which is why in some cases many objects look blurry in close proximity."

LOL, wut? Were they even doing texture compression, in the late '90s? If so, whatever they did sure wasn't sophisticated.

Since then, I think the most advanced method is ASTC, which was only introduced about 10 years ago.

More to the point, programmable shaders were supposed to solve the problem of blurry textures! I know they can't be used in 100% of cases, but c'mon guys!

"Nvidia claims that NTC textures are decompressed using matrix-multiplication hardware such as tensor cores operating in a SIMD-cooperative manner, which means that the new technology does not require any special purpose hardware and can be used on virtually all modern Nvidia GPUs."

Uh, well you can implement conventional texture compression using shaders, but it's not very fast or efficient. That's the reason to bake it into the hardware!

"in complex scenes using a fully-featured renderer, the cost of NTC can be partially offset by the simultaneous execution of other tasks"

It's still going to burn power and compete for certain resources. There's no free lunch, here.

I think they're onto something, but it needs a few more iterations of development and refinement.

bit_user

hotaru.hino said: I'm imagining the holy grail of this would be just have the AI procedurally generate a texture.

I joked about this with some co-workers, like 25 years ago. Recently, people have started using neural networks for in-painting, which probably means you might also be able to use it for extrapolation!

hotaru251 said: tl;dr NVIDIA wants industry to move towards its specific tech part of market.

AI is _really_ good at optimizing, though. I think they're onto something.

Also, texture compression has been key for mobile gaming, where memory capacity and bandwidth are at a premium. Any improvements in this tech can potentially benefit those on low-end hardware, the most.