Nvidia RTX 3050 vs AMD RX 6600 faceoff: Which GPU dominates the budget-friendly $200 market?

Only previous generation GPUs currently cost $200 or less.

Budget-minded gamers rarely want to spend more than $200 on a graphics card, making this price bracket important for the sheer number of GPUs that get sold. Neither AMD nor Nvidia has released a new card for this market in the past couple of years, so options are limited and few would make our list of the best graphics cards. Two GPUs that hit the desired budget price point are the RTX 3050 and RX 6600, and today we're pitting them against each other in a GPU faceoff.

The RTX 3050 debuted at the start of 2022 as one of Nvidia’s final RTX 30-series SKUs. The card originally launched for $249, or at least that was the official MSRP — we were still living in an Ethereum cryptomining world and so most cards ended up selling for far more than the MSRP. We liked the card's price-to-performance ratio in theory but disliked its real-world pricing. Thankfully, prices have come down on all graphics cards, and the RTX 3050 8GB model now starts at $199.

Please note that we're strictly looking at the RTX 3050 8GB variant and not the newer RTX 3050 6GB that cuts specs and performance. Apparently Nvidia had some extra GA106/GA107 chips that it still needed to clear out. You can save $20~$30 with the 6GB card, but even 8GB feels a bit worrisome these days, with games using increasing amounts of VRAM.

The AMD RX 6600 launched in late 2021, a few months before the 3050. It was one of AMD’s prime mid-range GPUs based on the RDNA 2 architecture and an intended competitor to the RTX 3060 12GB. But AMD didn't start with MSRPs that no one would see for a year or more, instead pricing the 6600 at $329 at launch. Since its release, the GPU has seen shockingly steep pricing discounts and it has been selling for around $200 for well over a year now. This has kept the card competitive as an entry-level solution, and while we weren't impressed with the card's launch price and sometimes lackluster 1080p performance — remember, it was theoretically priced to compete more with the 3060 at launch — we're far more forgiving when you can pick the GPU up for a couple of Benjamins.

We're now a couple of years past the launch dates of the 3050 and 6600, but let's look at these budget-friendly cards from today's perspective to see how they stack up against each other. We'll discuss performance, value, features, technology, software, and power efficiency — as usual those are listed in order of generally decreasing importance.

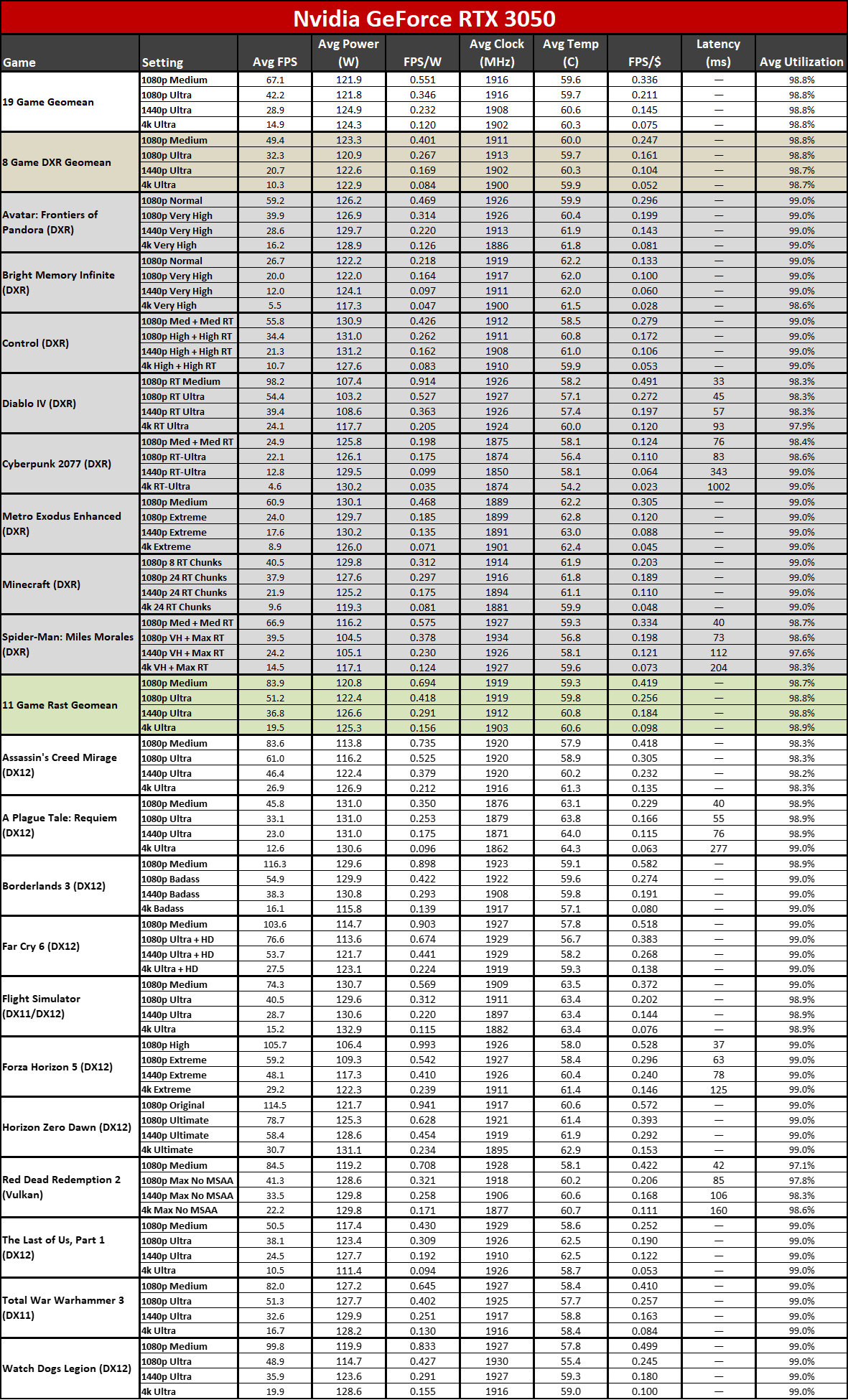

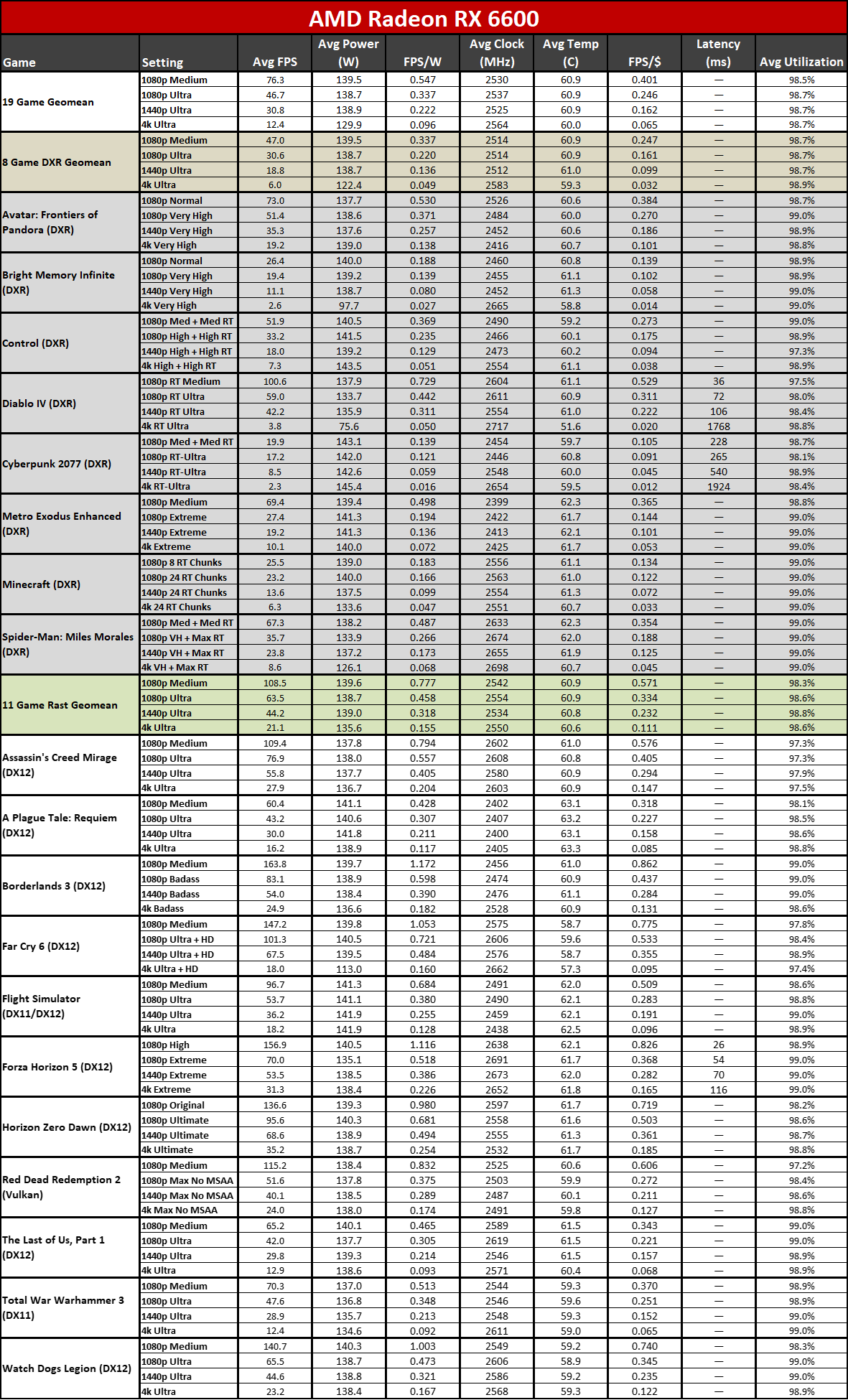

RTX 3050 vs RX 6600: Performance

The fact that the RX 6600 was originally competing against the RTX 3060 should give you a good guess at its performance. Unsurprisingly, the AMD GPU is ultra-competitive at its current pricing, and it's generally able to significantly outpace the RTX 3050.

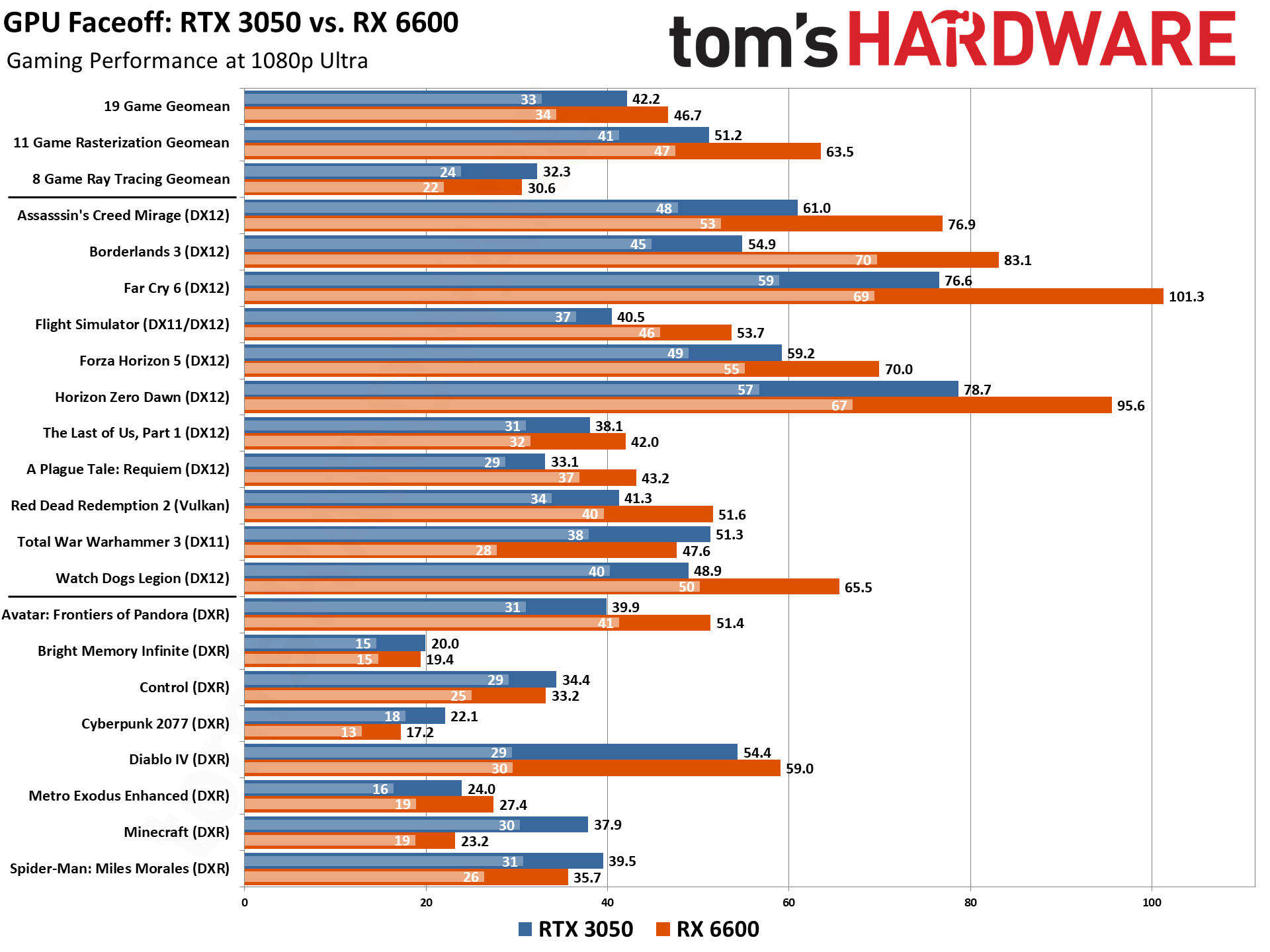

At 1080p ultra, the RX 6600 was almost 25% faster in our 11-game rasterization test suite. That's thanks to several games where it absolutely dominates the RTX 3050. Borderlands 3 is the poster child for games that heavily favor AMD GPUs (it was an AMD-promoted game at launch), with the 6600 beating the 3050 by over 50%. Far Cry 6, another AMD-promoted game, also shows a 32% lead. It's not only AMD-promoted games that favor the 6600, however, as A Plague Tale: Requiem (Nvidia-promoted) also shows a 30% lead for AMD's GPU. The same goes for Watch Dogs Legion (34%, Nvidia-promoted), Flight Simulator (33%, neutral party), and Red Dead Redemption 2 (29%, also neutral).

The only rasterized title that favors the Nvidia GPU is Total War: Warhammer 3, where the 3050 outpaces the RX 6600 by 8% — but with a bigger 36% lead on the minimum framerates. Total War: Warhammer 3 is also the only DX11-exclusive title left in our test suite, and AMD's GPUs have often done worse using the older API. Most modern games have upgraded to DX12 engines, so this is a relatively insignificant advantage nowadays. There are still plenty of e-sports titles that use DX11, but they tend to run fast regardless of what GPU you use.

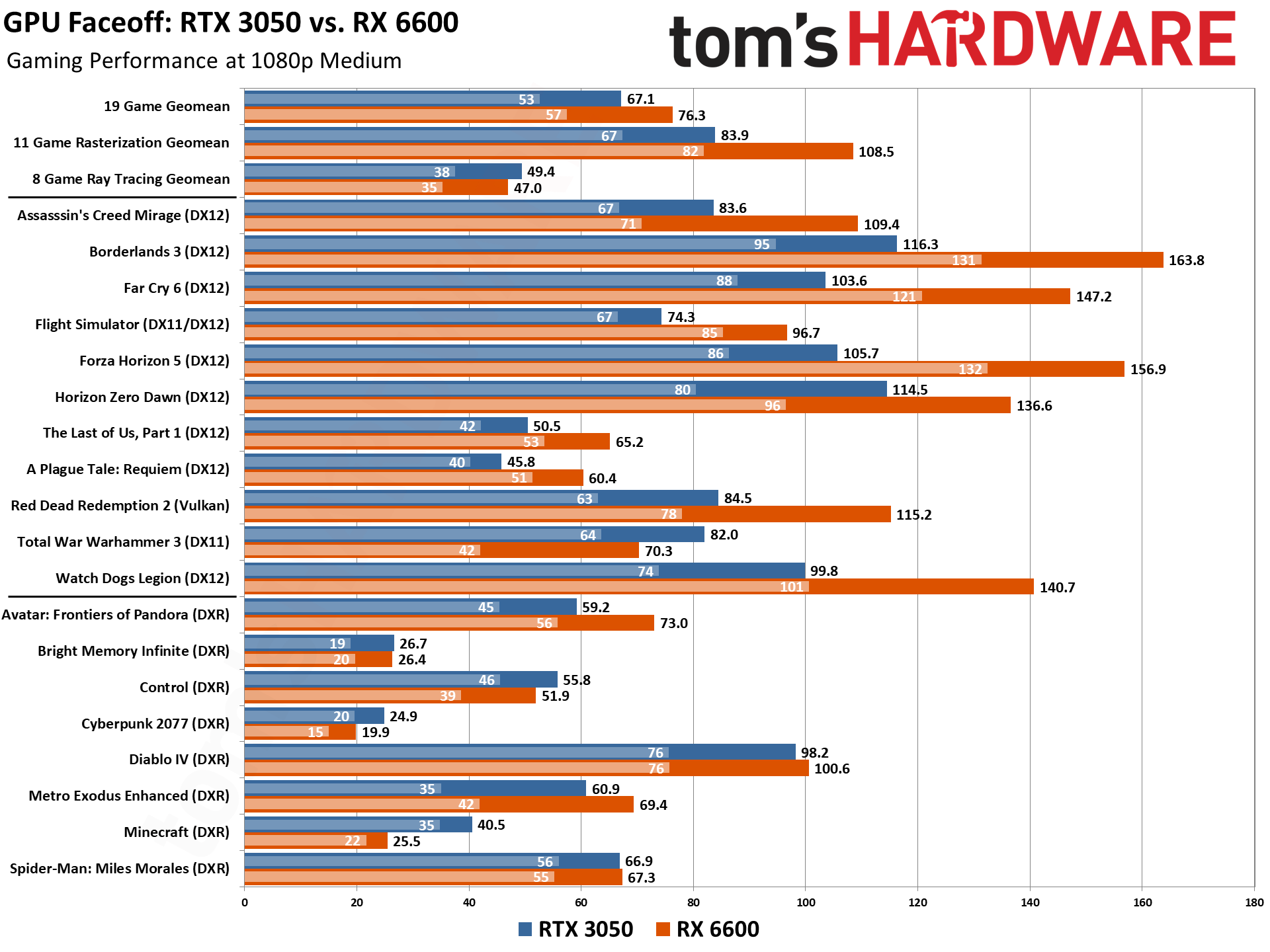

1080p medium settings further tilt toward the RX 6600, with similar patterns to the above. Overall, AMD outpaces the RTX 3050 8GB card by 14%, but if we focus just on the rasterization games it's a 29% lead. That's about the difference between the 3060 12GB and the 3050 8GB, incidentally.

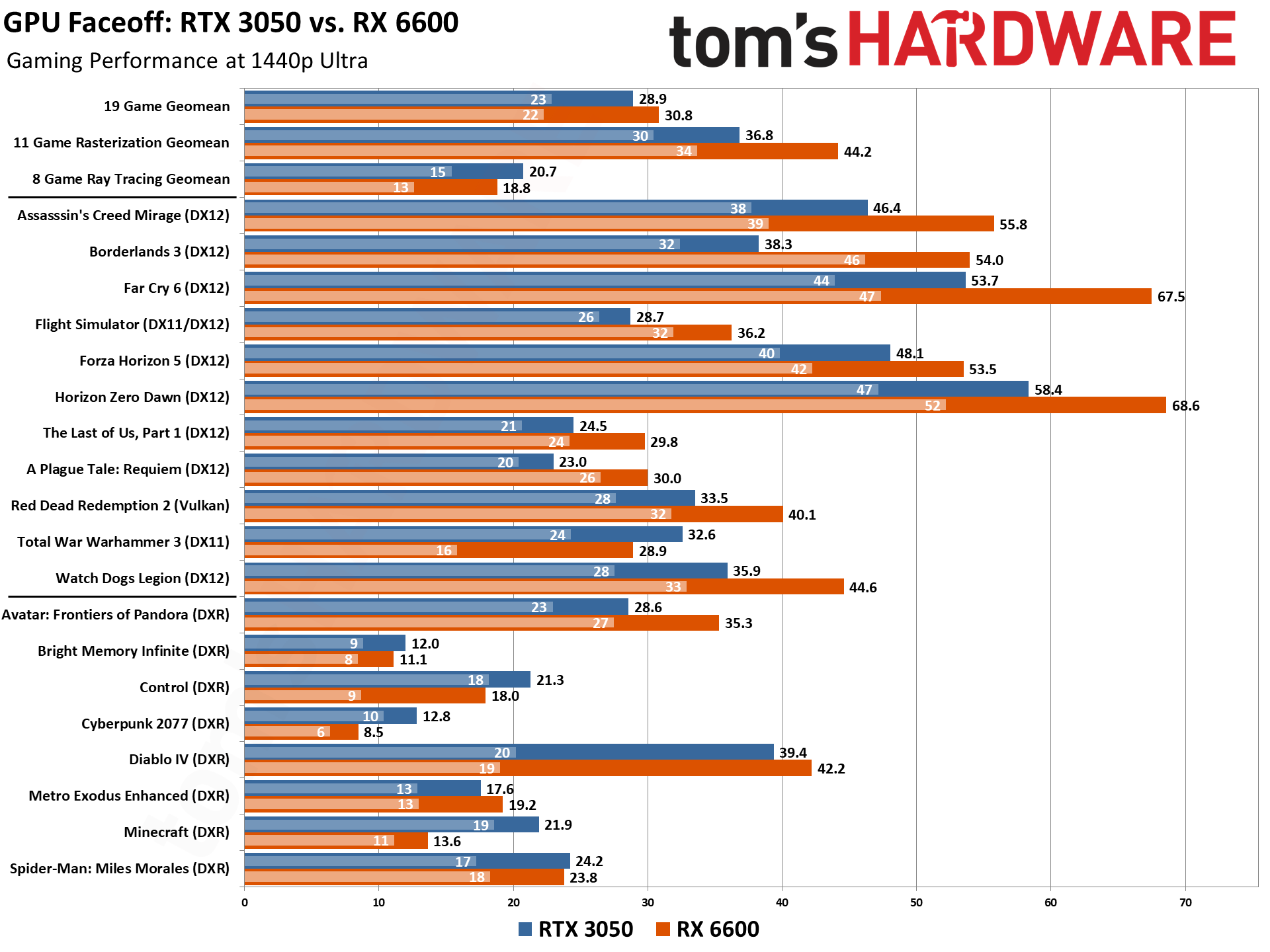

At 1440p ultra, rasterized performance still heavily favors the RX 6600, with it maintaining a 20% lead over the 3050 in our 11-game aggregate score. Borderlands 3, Far Cry 6, A Plague Tale: Requiem, Flight Simulator, The Last of Us, Red Dead Redemption 2, and Watch Dogs Legion all continue to show a 20% or higher (up to 40% with BL3) lead for the AMD GPU over the Nvidia GPU.

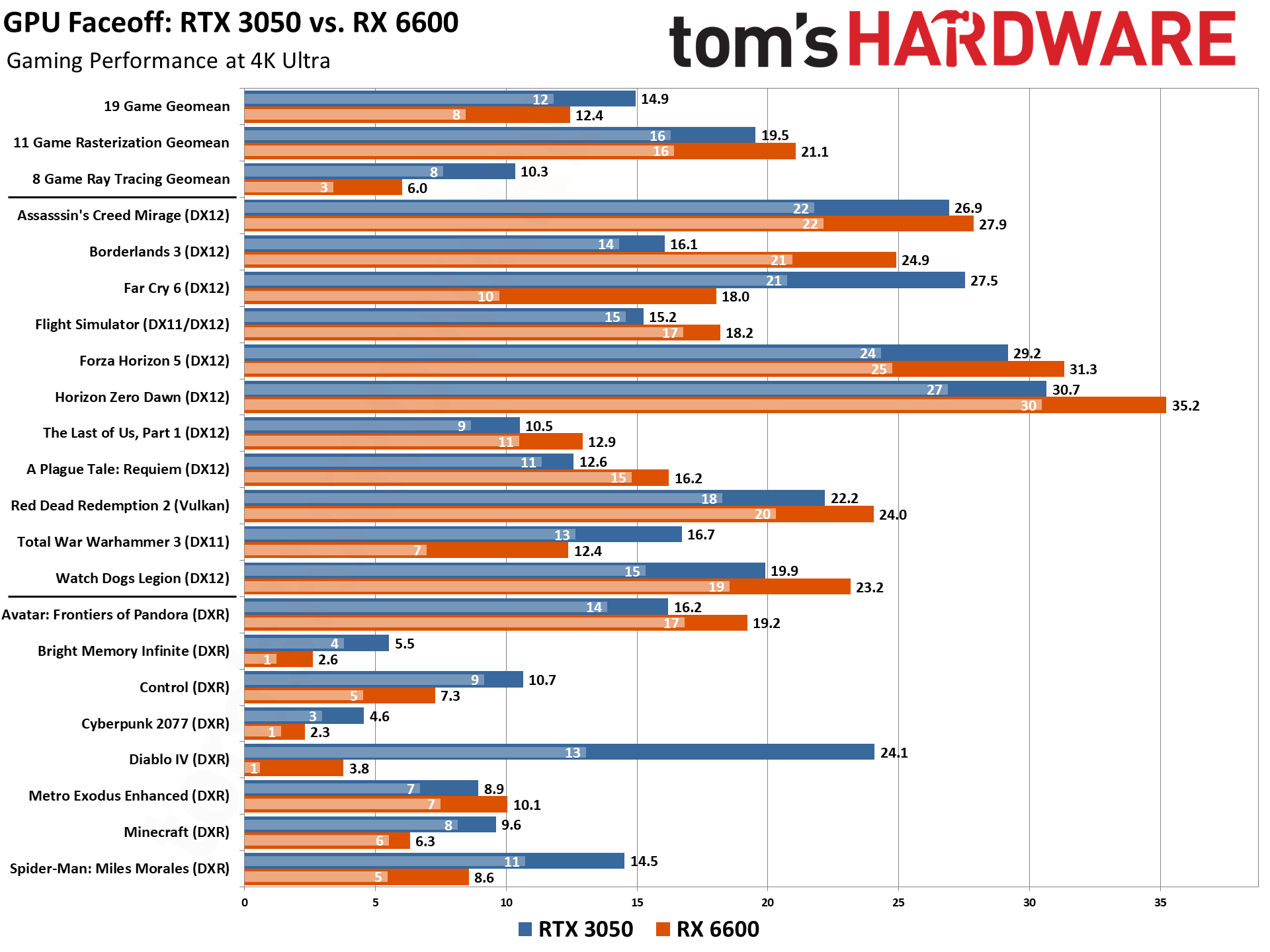

Things go haywire for the RX 6600 at 4K ultra in quite a few of our games. In our 11-game geomean, the RX 6600 goes from a massive 20% lead to a measly 8% lead over the RTX 3050. To make matters worse for the AMD GPU, Far Cry 6 (an AMD-sponsored game, mind you) joins Warhammer 3 in performing better on the RTX 3050 at 4K. It isn’t by a small margin either: the RX 6600 goes from a 25.8% performance advantage at 1440p to a decisive 34.5% performance loss.

This is something of an outlier, and Far Cry 6 is known to have some wonky behavior when it comes to 8GB GPUs, especially when the HD texture pack is applied and testing at 4K. It really needs at least 10GB, or even 12GB, of VRAM to handle such settings. Performance becomes far more inconsistent, and the poor RX 6600 performance highlights AMD's inferior memory compression technology. Neither GPU delivers a great experience in the game at 4K, though, so it's a bit of a pyrrhic victory for Nvidia.
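The aggregate scores quoted in this section are geometric means across the test suite. As a rough sketch of how one collapsing result drags such an aggregate down, the Python below computes a geomean lead from per-game frame rates. The game names and fps values are made-up placeholders, not our measured results, and `geomean_lead` is a hypothetical helper name.

```python
from statistics import geometric_mean


def geomean_lead(fps_a, fps_b):
    """Return card A's percentage lead over card B based on geomean fps."""
    # Only compare games that both cards were tested in.
    games = sorted(fps_a.keys() & fps_b.keys())
    score_a = geometric_mean([fps_a[g] for g in games])
    score_b = geometric_mean([fps_b[g] for g in games])
    return (score_a / score_b - 1.0) * 100.0


# Hypothetical numbers for illustration only: card A leads by 20% in two
# games but falls well behind in a third, VRAM-limited title.
card_a = {"game_1": 60.0, "game_2": 90.0, "game_3": 20.0}
card_b = {"game_1": 50.0, "game_2": 75.0, "game_3": 30.0}

print(f"Card A geomean lead: {geomean_lead(card_a, card_b):+.1f}%")
```

With these placeholder values, two healthy 20% wins are wiped out by a single bad result, which is the same mechanism that shrinks the RX 6600's lead at 4K.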

Not surprisingly, ray tracing performance generally favors the RTX 3050, but not by as much as we've seen with other GPU matchups. At 1080p ultra, the Nvidia RTX 3050 is only 6% faster than the RX 6600, which is impressive for AMD considering that Nvidia has a vastly superior architecture when it comes to processing RT workloads. 1080p medium ray tracing also favors the 3050 with a minor 5% lead overall.

Moving up to 1440p ultra, the RTX 3050 doubles its lead to 10%. And at 4K, the GPUs aren’t even close. The RTX 3050 outperforms the RX 6600 by a whopping 72%. Again, this is a result of the Nvidia GPU’s superior memory management, as well as its RT hardware acceleration, which is better at handling ray tracing at higher resolutions. But that 72% lead doesn't mean much when we're talking about 10.3 fps versus 6.0 fps — clearly these two GPUs aren't even remotely capable of handling 4K ultra with ray tracing.
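To make the point about relative versus absolute differences concrete, here is a small sketch using the two 4K ray tracing averages quoted above. The function name `percent_lead` is just an illustrative helper.

```python
def percent_lead(fps_fast, fps_slow):
    """Percentage by which fps_fast exceeds fps_slow."""
    return (fps_fast / fps_slow - 1.0) * 100.0


rtx_3050_fps = 10.3  # 4K ultra ray tracing average quoted above
rx_6600_fps = 6.0    # same test, RX 6600

lead = percent_lead(rtx_3050_fps, rx_6600_fps)
gap = rtx_3050_fps - rx_6600_fps

# prints "Relative lead: 72%, absolute gap: 4.3 fps"
print(f"Relative lead: {lead:.0f}%, absolute gap: {gap:.1f} fps")
```

A 72% lead sounds enormous, but the absolute gap is only about four frames per second, and both results sit far below anything playable.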

In fact, ray tracing performance should not be considered a priority for anyone looking at $200 graphics cards like the RX 6600 and RTX 3050. The performance hit for often minor changes in image quality simply isn't worth considering, and while 1080p might be playable with DXR (DirectX Raytracing), 1440p rasterization would be a better overall experience.

Note that we're not showing professional or AI performance for these GPUs, as they're really not a great fit for either market. The RTX 3050 does have faster AI and Blender rendering performance, and the RX 6600 wins in SPECviewperf, but if you're serious about using such applications, you should try to find a faster GPU than either of these budget options.

Performance Winner: AMD

The RX 6600 comes off as the clear winner in the performance category. There are too many things going right for the RX 6600. It boasts superb rasterization performance, beating the RTX 3050 by a significant amount. Even in ray tracing, the RX 6600 is only 5% slower than its Nvidia counterpart at 1080p, which is arguably the only resolution that truly matters for GPUs of this caliber. This is what you get when you discount a previously $329 GPU by roughly $130.

RTX 3050 vs RX 6600: Price

As of June 2024, pricing for the RX 6600 and RTX 3050 is mostly the same. Both GPUs can be had for as little as $199.99 from multiple AIB partners including PowerColor, ASRock, MSI, and Sapphire. The AMD GPU technically can be found for less, with the Gigabyte RX 6600 Eagle at $194.99 on Newegg, but that’s via a $5 limited-time promo. The cheapest Nvidia card right now appears to be the MSI RTX 3050 Ventus 2X at $199.99, and given how old these cards are, retail supply might become harder to find in the coming months.

Premium versions of both cards, however, do cost a bit more. If you're willing to shell out an additional $20-$50 (which is a lot considering the price these cards go for), you could pick up overclocked and even triple-fan variants. But at that point there are often faster GPUs that also offer better features.

Price Winner: Tie

We're calling pricing a tie since neither GPU costs significantly less than the other. Of course, the AMD GPU was already declared the winner in performance, meaning it's a better value overall at the same price, but we already gave AMD credit for the performance category.
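The value argument can be sketched as a simple performance-per-dollar calculation. The relative performance figures below are an approximation based on the "almost 25% faster" 1080p ultra rasterization result (RTX 3050 normalized to 1.00), and the prices are the $199.99 street prices quoted above. The helper name is hypothetical.

```python
def perf_per_dollar(relative_perf, price_usd):
    """Relative performance delivered per dollar spent."""
    return relative_perf / price_usd


# Assumption: RX 6600 rounded to 1.25x the RTX 3050 at 1080p ultra rasterization.
cards = {
    "RTX 3050": {"relative_perf": 1.00, "price_usd": 199.99},
    "RX 6600": {"relative_perf": 1.25, "price_usd": 199.99},
}

value = {name: perf_per_dollar(**spec) for name, spec in cards.items()}
advantage = (value["RX 6600"] / value["RTX 3050"] - 1.0) * 100.0

print(f"RX 6600 value advantage: {advantage:.0f}%")
```

At identical prices, the value gap is simply the performance gap, which is why the win is counted once under performance rather than again under price.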

RTX 3050 vs RX 6600: Features, Technology, and Software

| Graphics Card | RTX 3050 | RX 6600 |
|---|---|---|
| Architecture | GA106 | Navi 23 |
| Process Technology | Samsung 8N | TSMC N7 |
| Transistors (Billion) | 12 | 11.1 |
| Die size (mm^2) | 276 | 237 |
| SMs / CUs | 20 | 28 |
| GPU Cores (Shaders) | 2560 | 1792 |
| Tensor / AI Cores | 80 | N/A |
| RT Cores / Ray Accelerators | 20 | 28 |
| Boost Clock (MHz) | 1777 | 2491 |
| VRAM Speed (Gbps) | 14 | 14 |
| VRAM (GB) | 8 | 8 |
| VRAM Bus Width (bits) | 128 | 128 |
| L2 / Infinity Cache (MB) | 2 | 32 |
| Render Output Units | 48 | 64 |
| Texture Mapping Units | 80 | 112 |
| TFLOPS FP32 (Boost) | 9.1 | 8.9 |
| TFLOPS FP16 (with sparsity) | 36 (73) | 18 |
| Bandwidth (GBps) | 224 | 224 |
| TDP (watts) | 130 | 132 |
| Launch Date | Jan 2022 | Oct 2021 |
| Launch Price | $249 | $329 |
| Online Price | $200 | $200 |

AMD and Nvidia take very different approaches with these GPUs and their respective specifications. The Nvidia RTX 3050 has more GPU shaders while AMD's RX 6600 has more ROPs and TMUs. AMD's RDNA 2 architecture also comes with a big chunk of L3 Infinity Cache that helps dramatically boost effective bandwidth (a tactic Nvidia employs with its newer Ada Lovelace architecture), but the older Ampere architecture only has a 2MB L2 cache.


These differences help explain why the RX 6600 is so good in gaming workloads, particularly for rasterization where ROPs and TMUs play a more significant role. So, despite the fact that AMD technically has fewer cores and slightly lower compute teraflops, it ultimately wins in many performance metrics.
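For readers who want to see where the teraflops and bandwidth rows in the table come from, the sketch below reproduces them from the shader counts, boost clocks, and memory specs listed above. It assumes the usual peak-throughput convention of one fused multiply-add (two floating-point operations) per shader per clock; the function names are illustrative.

```python
def fp32_tflops(shaders, boost_mhz):
    """Peak FP32 TFLOPS, assuming 2 FLOPs (one FMA) per shader per clock."""
    return shaders * 2 * boost_mhz * 1e6 / 1e12


def memory_bandwidth_gbs(speed_gbps_per_pin, bus_width_bits):
    """Peak memory bandwidth in GB/s: per-pin data rate times bus width, over 8 bits per byte."""
    return speed_gbps_per_pin * bus_width_bits / 8


# Values taken from the specifications table above.
specs = {
    "RTX 3050": {"shaders": 2560, "boost_mhz": 1777, "vram_gbps": 14, "bus_bits": 128},
    "RX 6600": {"shaders": 1792, "boost_mhz": 2491, "vram_gbps": 14, "bus_bits": 128},
}

for name, s in specs.items():
    tflops = fp32_tflops(s["shaders"], s["boost_mhz"])
    bandwidth = memory_bandwidth_gbs(s["vram_gbps"], s["bus_bits"])
    print(f"{name}: {tflops:.1f} TFLOPS FP32, {bandwidth:.0f} GB/s")
```

The RX 6600's much higher boost clock nearly cancels out its lower shader count, which is why the two cards end up within a few percent of each other on paper.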

Nvidia does offer other features that AMD's RX 6600 lacks. We've talked about ray tracing, but arguably the presence of tensor cores with FP16 support represents a far more important ability. These are useful in AI workloads, and the RTX 3050 offers up 73 teraflops of FP16 compute (with sparsity; half that for dense operations), and 146 teraops (TOPS) of INT8 compute (again, with sparsity). That's significantly more than the fastest NPUs for Copilot+ certification.
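The relationship between the sparse and dense figures is simple enough to sketch. The snippet below starts from the sparse numbers quoted above and halves them to get dense throughput, which lines up with the "36 (73)" FP16 entry in the table. Applying the same 2x factor to INT8 is an assumption here; the text only states the halving explicitly for FP16.

```python
# Sparse operations are quoted at twice the dense rate ("half that for dense").
SPARSITY_SPEEDUP = 2


def dense_rate(sparse_rate):
    """Convert a with-sparsity throughput figure to its dense equivalent."""
    return sparse_rate / SPARSITY_SPEEDUP


fp16_sparse_tflops = 73   # quoted above, with sparsity
int8_sparse_tops = 146    # quoted above, with sparsity

print(f"FP16 dense: {dense_rate(fp16_sparse_tflops):.1f} TFLOPS")
# Assumption: INT8 follows the same 2x sparse-to-dense relationship.
print(f"INT8 dense: {dense_rate(int8_sparse_tops):.1f} TOPS")
```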

Inevitably, these extra features make the RTX 3050 a better GPU for compute-intensive tasks (i.e., non-gaming workloads), though realistically, not many people will be doing such tasks on an entry-level Nvidia GPU. The RTX 3050's raw compute performance isn't entirely a waste in gaming, however: Nvidia's AI-based DLSS upscaling takes advantage of the tensor cores and delivers better image fidelity than AMD's non-AI FSR 2/3 upscaling.

Memory speed and capacity are identical between the two GPUs, but as noted already there are two key differences. First, AMD has a large L3 cache that boosts the effective bandwidth. Second, Nvidia has superior memory compression technology. That's mostly only apparent in our 4K tests, where the RTX 3050 was able to outperform the RX 6600 significantly in multiple titles when AMD's GPU seemed to hit the proverbial wall.

Ray tracing is another area where the RTX 3050 offers superior features. This is simply due to the architectural differences between the 3050 and 6600. Nvidia prioritized ray tracing in its Ampere architecture (technically it's been doing so since Turing), with dedicated RT cores for handling all of the ray intersection calculations. AMD hasn't been as open regarding how its RT hardware functions, but every empirical test clearly indicates you can get higher throughput from the Nvidia implementation. It's a niche feature for a $200 GPU, but if you want to poke around at ray tracing — either in games or in professional apps — it's another Nvidia advantage.

Nvidia takes another win in software and other utilities. Besides the aforementioned DLSS upscaling, there are several other AI-enhanced apps including Nvidia Broadcast, RTX Video Super Resolution (video upscaling), and Chat with RTX. AMD doesn't have a direct answer to these (though it does have background noise cancellation, just not AI-powered).

Features, Technology, and Software Winner: Nvidia

Nvidia wins this part of the faceoff. Even though the RTX 3050 might be clearly worse in raw gaming performance (besides a few exceptions including DXR games), there's no denying that Nvidia has packed the RTX 3050 with better technology. It has AI accelerators, better RT cores, and superior memory compression compared to the RX 6600.

RTX 3050 vs RX 6600: Power Efficiency

Power consumption ends up being exceptionally close between the two GPUs, particularly if you consider performance per watt rather than raw power use. As our final category, this is already lower on the scale of importance, and most people aren't going to be terribly worried about a difference of up to 20W, which is what we see here. Turn off an LED bulb or two and you've closed the gap.

At 1080p and 1440p, the RTX 3050 does beat the RX 6600 in raw power consumption, but only by a small margin. It's only 14–18 watts overall, and again we have to consider performance as well. Looking at the FPS/W metric, the RTX 3050 "wins" but only by 1–4 percent. It's a bigger lead at 4K ultra, due to the collapse in the RX 6600's performance that happens there, so the 25% higher FPS/W doesn't really amount to much.
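The FPS/W metric is just average frame rate divided by average power draw. The sketch below shows how a card can be clearly faster yet still narrowly lose on efficiency. The fps and wattage figures are hypothetical placeholders chosen to resemble the pattern described above (roughly 14% faster while drawing 18W more), not our measured data.

```python
def fps_per_watt(avg_fps, avg_watts):
    """Efficiency metric: frames per second delivered per watt consumed."""
    return avg_fps / avg_watts


# Hypothetical values for illustration only.
rtx_3050 = fps_per_watt(avg_fps=50.0, avg_watts=115.0)
rx_6600 = fps_per_watt(avg_fps=57.0, avg_watts=133.0)

lead_pct = (rtx_3050 / rx_6600 - 1.0) * 100.0
print(f"RTX 3050: {rtx_3050:.3f} FPS/W, RX 6600: {rx_6600:.3f} FPS/W")
print(f"RTX 3050 efficiency lead: {lead_pct:.1f}%")
```

With numbers like these, the efficiency gap lands within the 1 to 4 percent range, which is small enough to treat as a tie.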

The close results in power efficiency speak to the quality of Nvidia’s Ampere architecture. Nvidia opted not to use the best silicon available at the time the RTX 30-series was released, choosing instead the less expensive Samsung 8N node (a refined 10nm-class process that Nvidia helped create). AMD used TSMC’s superior N7 node, and Nvidia also used TSMC N7 for its data center and AI Ampere A100 GPU. Supply constraints were also a concern, but whatever the case, Nvidia basically matches AMD on FPS/W while using an inferior process node, which in turn shows that the fundamental architecture is very good in terms of efficiency.

Power Efficiency Winner: Tie

While the RTX 3050 does use slightly less power than the RX 6600, in practice it's not a meaningful victory. Both GPUs are found in cards that typically use an 8-pin PEG connector, and outside of 4K ultra — not really a practical resolution for many games on these budget cards — efficiency ends up being a tie. Discount the ray tracing results and AMD even comes out with about a 10% lead in FPS/W for rasterization games.

RTX 3050 vs. RX 6600 Verdict

| | RTX 3050 | RX 6600 |
|---|---|---|
| Performance | | XX |
| Price | X | X |
| Features, Technology, Software | X | |
| Power and Efficiency | X | X |
| Total | 3 | 4 |

As we've noted in other GPU faceoffs, there's more to declaring a winner than simply adding up the four categories. We called pricing and power a tie, so those categories don't really factor in one way or the other. Nvidia wins in features and software, but AMD wins in gaming performance. And at the end of the day, performance matters far more to most people than some extra AI features, so we're giving two points for that category — especially considering the often wide margin of victory. If you're mostly interested in non-gaming uses, the RTX 3050 could be the better card, but for gaming we would rather have the RX 6600.
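The scoring described above can be written out as a short tally: each category is worth one point, performance is worth two, and a tie awards the category's points to both cards. This is only a sketch of the arithmetic behind the table; the names `WEIGHTS`, `WINNERS`, and `tally` are illustrative.

```python
# Points per category; performance is double-weighted as explained above.
WEIGHTS = {
    "Performance": 2,
    "Price": 1,
    "Features, Technology, Software": 1,
    "Power and Efficiency": 1,
}

# Category outcomes from this faceoff.
WINNERS = {
    "Performance": "RX 6600",
    "Price": "tie",
    "Features, Technology, Software": "RTX 3050",
    "Power and Efficiency": "tie",
}


def tally(weights, winners, cards=("RTX 3050", "RX 6600")):
    """Sum category points per card; a tie gives the points to every card."""
    totals = {card: 0 for card in cards}
    for category, winner in winners.items():
        points = weights[category]
        if winner == "tie":
            for card in cards:
                totals[card] += points
        else:
            totals[winner] += points
    return totals


print(tally(WEIGHTS, WINNERS))  # {'RTX 3050': 3, 'RX 6600': 4}
```

Setting the performance weight back to 1 would produce a 3 to 3 draw, which shows how much the final verdict depends on treating gaming performance as the most important category.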

The RX 6600 really is considerably faster for gaming purposes, particularly at settings people would want to use with this level of hardware. These GPUs are for 1080p gaming and perhaps 1440p at a stretch, so even though we showed 4K performance results, they're not a serious part of the discussion. AMD intended for the RX 6600 to compete with the RTX 3060 12GB, at least in theory and based on launch prices. It would have a difficult time winning that matchup — one of the few instances where Nvidia will give you more VRAM than AMD — but the RTX 3060 is largely phased out now, especially the 12GB variant.

If you just want a budget gaming card, both GPUs have strengths and weaknesses. Perhaps you can match the RX 6600 performance in rasterization games by turning on DLSS, for example. Certainly there are other Nvidia features that can be useful, like Broadcast and VSR. Both cards also encounter issues at times due to only having 8GB of VRAM, a product of their age as much as anything.

More than two years ago, when these were brand-new GPUs, the situation in the graphics card market was entirely different than what we see today. Prices were all kinds of messed up, and while the MSRP on the RTX 3050 was lower on paper, in practice it was actually the more expensive card most of the time. Now, you can choose between the two without worrying so much about cost.

The Steam Hardware Survey at present says 2.90% of surveyed gaming PCs are using the RTX 3050, compared to just 0.81% using the RX 6600. That means the 3050 leads by about a 3.6 to 1 ratio in terms of market share. But Nvidia also leads by around 8 to 1 overall (looking at the past several generations of dedicated GPUs), so the RX 6600 does better than many other AMD cards, and there's a reason for that. If you're in the market for a $200 graphics card right now, give serious consideration to AMD's RX 6600. We have no issue with recommending it as the generally faster option, and for that reason, it's our winner, by a nose (or a transistor).

If you're looking at the next step up in performance, check out the newer RTX 4060 versus RX 7600, where Nvidia very much turns the tables at the $260~$300 price point.

Aaron Klotz is a contributing writer for Tom’s Hardware, covering news related to computer hardware such as CPUs and graphics cards.

- Jarred Walton, Senior Editor


Neilbob

'We're calling pricing a tie since neither GPU costs significantly less than the other. Of course, the AMD GPU was already declared the winner in performance, meaning it's a better value overall at the same price, but we already gave AMD credit for the performance category.'

I'm not one to go all fanboy over faceless corporations, but I can't understand the reasoning used in parts of this comparison. The 3050 was bad value from the moment it was released, and it's still bad value now.

The above part sums it up for me: why the aversion to giving credit to AMD when the performance of the 6600 overall is at times vastly better than the 3050 for a similar or even lower price? Because 'that wouldn't be fair to the 3050' is what it reads like to me. It makes no sense. Any extra 'features' or 'technology' the 3050 has can't make up for that level of discrepancy. You don't seem to have a similar problem with certain other product comparisons. Not to mention that the performance difference between the two cards may equate to going from a jittery mess on one to relatively smooth on the other.

I hope you're not using the overall geomean result as a justification for making price a tie - in general, raytracing performance is too low to be considered worthwhile for either of them. You make mention of this in the article, notably with the 3050 being 72% faster at 4k; a lot of that can be attributed to just Diablo 4, but it seems the performance margin is still being counted as valid. These are NOT 4K cards, even with rasterisation.

---

I've just read all that back to myself and realised I've gone off on a rant. And I don't care. I can't help it: I honestly think the 3050 is one of the worst value products Nvidia has ever spawned, and hate that so many people have blindly flocked to it. It's nonsense like that which enables companies to justify higher prices.

artk2219

I get it, and im in agreement with you, the RTX 3050 was always a bad value proposition, and that hasn't changed just because its now tied in pricing with a card that is still generally faster where it counts. Sure you can talk about ray tracing etc all day long, but for cards of this class it doesn't matter, they both suck at it and are not really useful for that application. This one should have gone to the 6600 without any reservations, as the people that are buying these are generally just looking to get into 1080p gaming, and neither card can really ray trace. These are the charts that really matter, and almost across the board, with very few exceptions, the RTX 3050 gets its teeth kicked in, in some cases it ties, but even those are few.

Neilbob said:

'We're calling pricing a tie since neither GPU costs significantly less than the other. Of course, the AMD GPU was already declared the winner in performance, meaning it's a better value overall at the same price, but we already gave AMD credit for the performance category.'



rluker5

I'd have to say the unmentioned $200 A750 wins this round.

From 2022:

And after 2 years of driver improvements it isn't as close anymore.

artk2219

Yep, i recently picked up a refurbished Arc A770 16gb for 210 taxes, shipping, and a 2 year warranty included. Other than the need to definitely have re-sizable BAR and above 4GB encoding enabled, its been a decent card (some games were a stutter fest without those). I still cant recommend Arc to brand new first time builders because you definitely need to have a board that supports those features, and you need to know to enable them. But for experienced builders, yeah they're a very viable option now, and very well priced. The arc control center is still buggy, but hey at least it generally loads now.

rluker5 said:

I'd have to say the unmentioned $200 A750 wins this round.


Eximo

I still have two lingering issues with Arc.

HDCP at higher resolutions causes the screen to go completely black at every screen change (ie tab, re-size, etc), and briefly at lower resolutions at beginning of content. So any time a copyrighted advertisement or content is playing in a browser, it can do that.

Failing to re-connect to audio over HDMI after the display has been off. This used to be a constant problem, now only happens every once in a while. Still means going into the device manager to kill the display audio driver and restart it.

I can't fault the gaming performance of even an A380 at relatively modern games. Still struggles with older DX titles, lots of stuttering.

You are tempting me to pick up an A770 myself. Kind of want to see if I can get an Intel edition one, just to have.

ingtar33

this was better then the comparison between the 7900gre and the 4070 that they did recently. 7900gre beat it badly in pretty much every title and they gave 3 other categories to the 4070 and declared it a winner. cost the same money and performed 20-40% less in gaming, and it was the "winner"

artk2219

Eximo said:

I still have two lingering issues with Arc.


If you were interested, this is the one that i picked up, its an Acer Bifrost from Acers ebay refurbished store, it comes with an 9% off coupon and then i found a 10% off coupon on top of that. They refresh the stock pretty regularly it seems, so if it runs out, they'll have more soon.

Refurbished Acer Bifrost A770 16GB

rluker5

Could you be more specific? I haven't seen that issue with my A750, but I apparently don't watch a lot of copyrighted content. I watch movies from those free streaming services and sometimes rent one on a whim, youtube, other website stuff. But dropped Netflix and Prime. maybe it is that my A750 is hooked up to a Samsung 4k tv?

Eximo said:

I still have two lingering issues with Arc.


Lately I've been messing with that Lossless Scaling with the triple refresh on my tv that does 120. Not a fan of the blurring you see around a 3rd person pc during fast motion, and can't quite set Riva refresh perfect to completely eliminate tearing, but not a lot of other artifacts. Looks better in first person. Also not as smooth as locked vsync, but seems smoother than variable refresh.

Really I'm saving my irresponsible spending for Battlemage. I hope a high end one still comes in a 2 slot card.

Eximo

Windows 10 still. HDMI to a 4K TV. If I run it at 4K, the black screen problem appears constantly whenever something with HDCP is playing. Fine if you just watch statically, but every time an advertisement plays or any time the aspect ratio changes, it will go black again. Minimizing, maximizing a video will also do it.

At 1080p it does far better, but will still black out on the likes of a youtube movie. Usually only once or twice and for seemingly less time (that might just be draw time with the scaler running a little faster at 1080p)

Been a while since I have tried a new driver version. That last few made it far worse, so I had to painstakingly re-install a slightly older version. Arc driver install still leaves a lot to be desired. Generally I have had to self extract the files to get anything to work. Letting it download from the Arc control center seems like a failing proposition. Just wastes time downloading, fails silently.

My laptop also has Iris Xe, it suffers from similar black screen problems when using the integrated chip. Luckily it has the lowest tier Nvidia card, and I just run my browsers through it and it solves the problem.

rluker5

I can't replicate it on my A750. I tried that new Fallout on Amazon at highest quality, 4k on Youtube and watched John Wick 4 two weeks ago as a 4k rental and big and smalled it a couple of times. Also just tried alt tabbing in and out, also no display issues.

I use Edge btw so maybe that makes a difference? Also I have no issues using the integrated graphics on my 13600k doing the same thing.

But I'm on new W11 with no modifications. I have set a couple custom resolutions with Intel Graphics Command Center Beta from the Windows store but that is about it.

Also the first I've heard of Intel having video display issues. They've always been rock solid for me. Even my old W10 32 bit 2ghz ram 2w atom tablet still works great for video, but that's about it, slow as a rock with everything else. Well I do have an issue going from 4k60 to 1440p120 that the tv sometimes needs to be told to use high bandwidth so I can run 120hz but I think that is on the TV because it happened with AMD as well.