
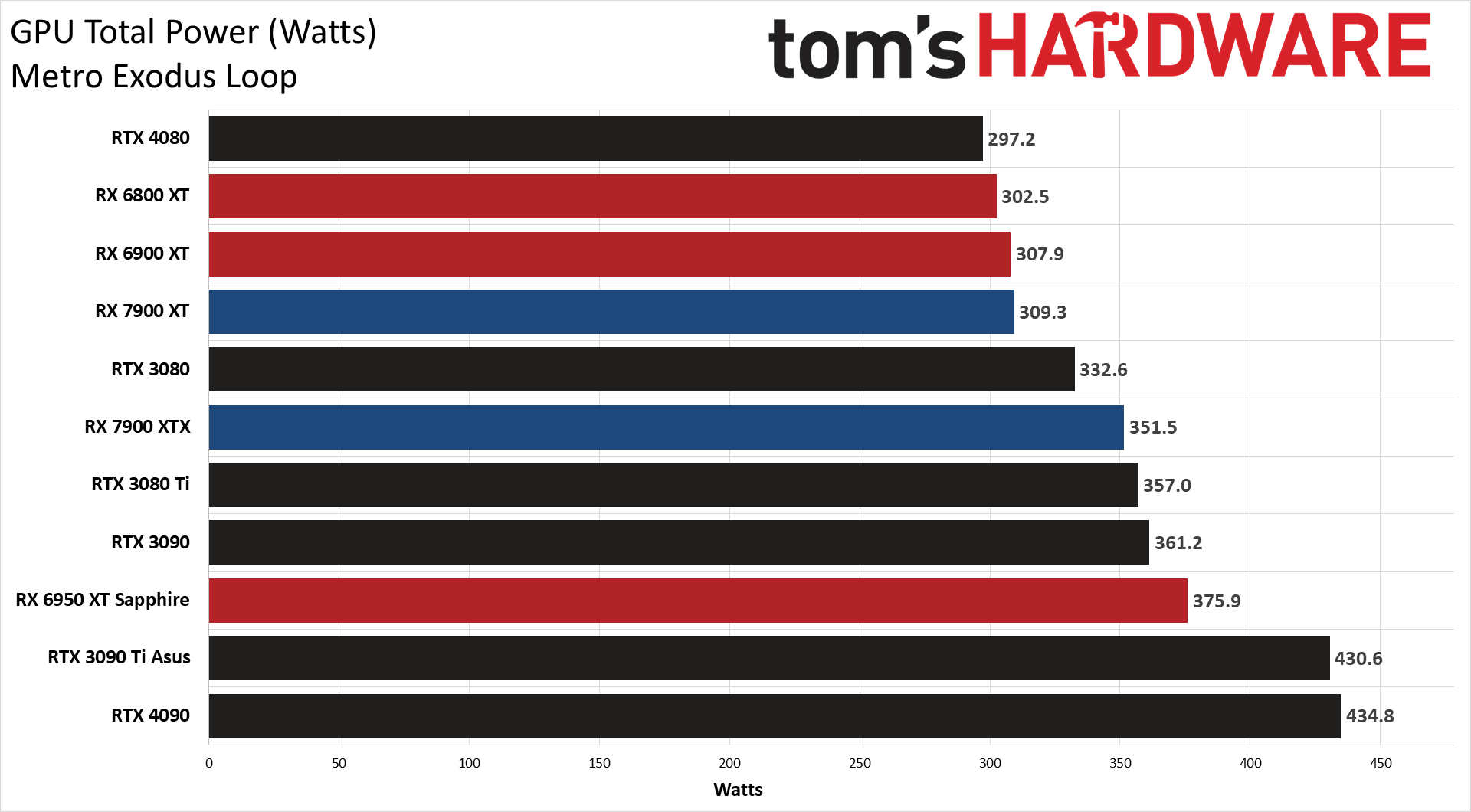

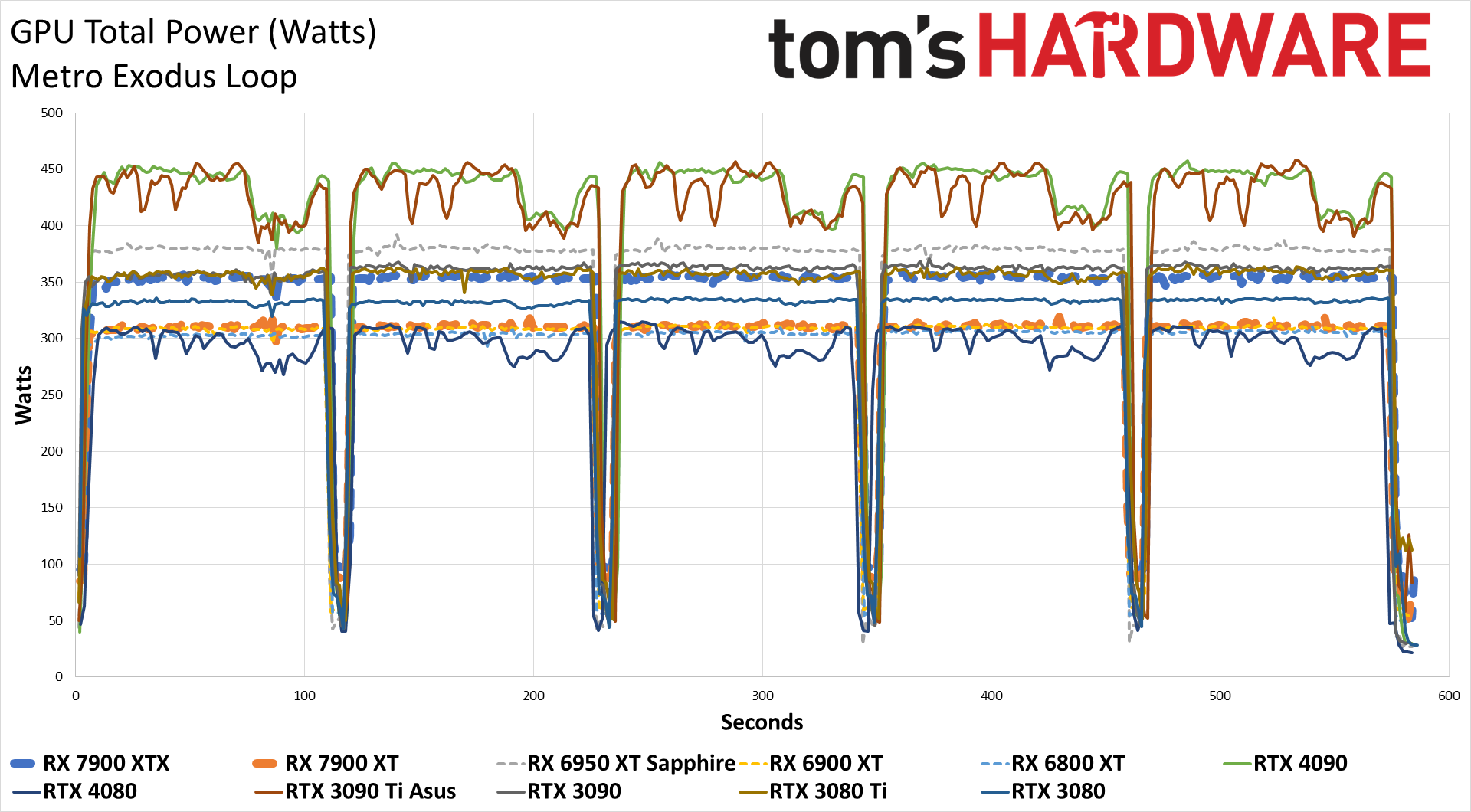

We measure real-world power consumption using Powenetics testing hardware and software. We capture in-line GPU power consumption by collecting data while looping Metro Exodus (the original, not the enhanced version) and while running the FurMark stress test. Our test PC remains the same old Core i9-9900K as we've used previously, to keep results consistent.

Note that we have additional power data from Nvidia's PCAT v2. However, we haven't fully converted to using our new test PCs and PCAT for everything, so we also gathered power data using our existing methodology.

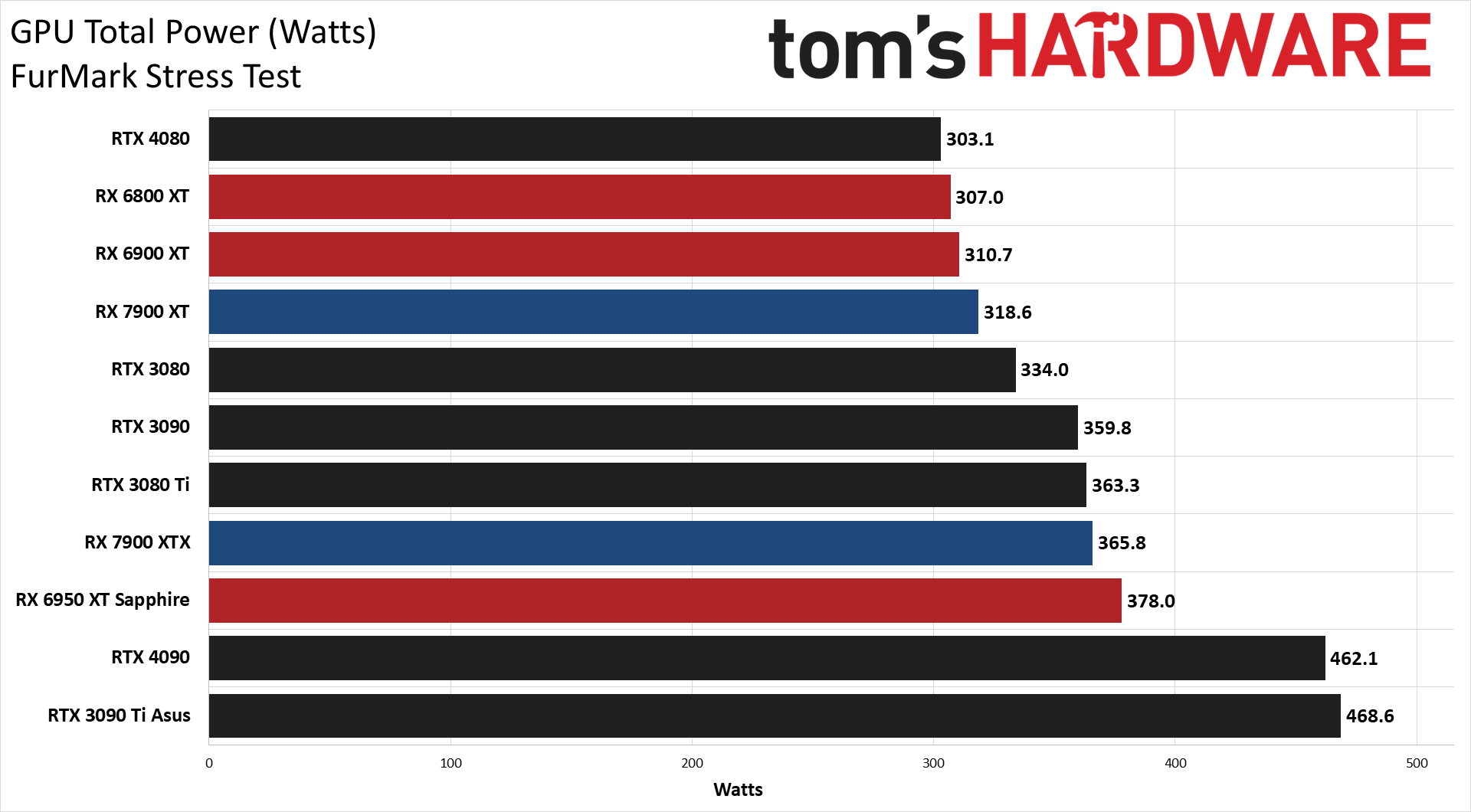

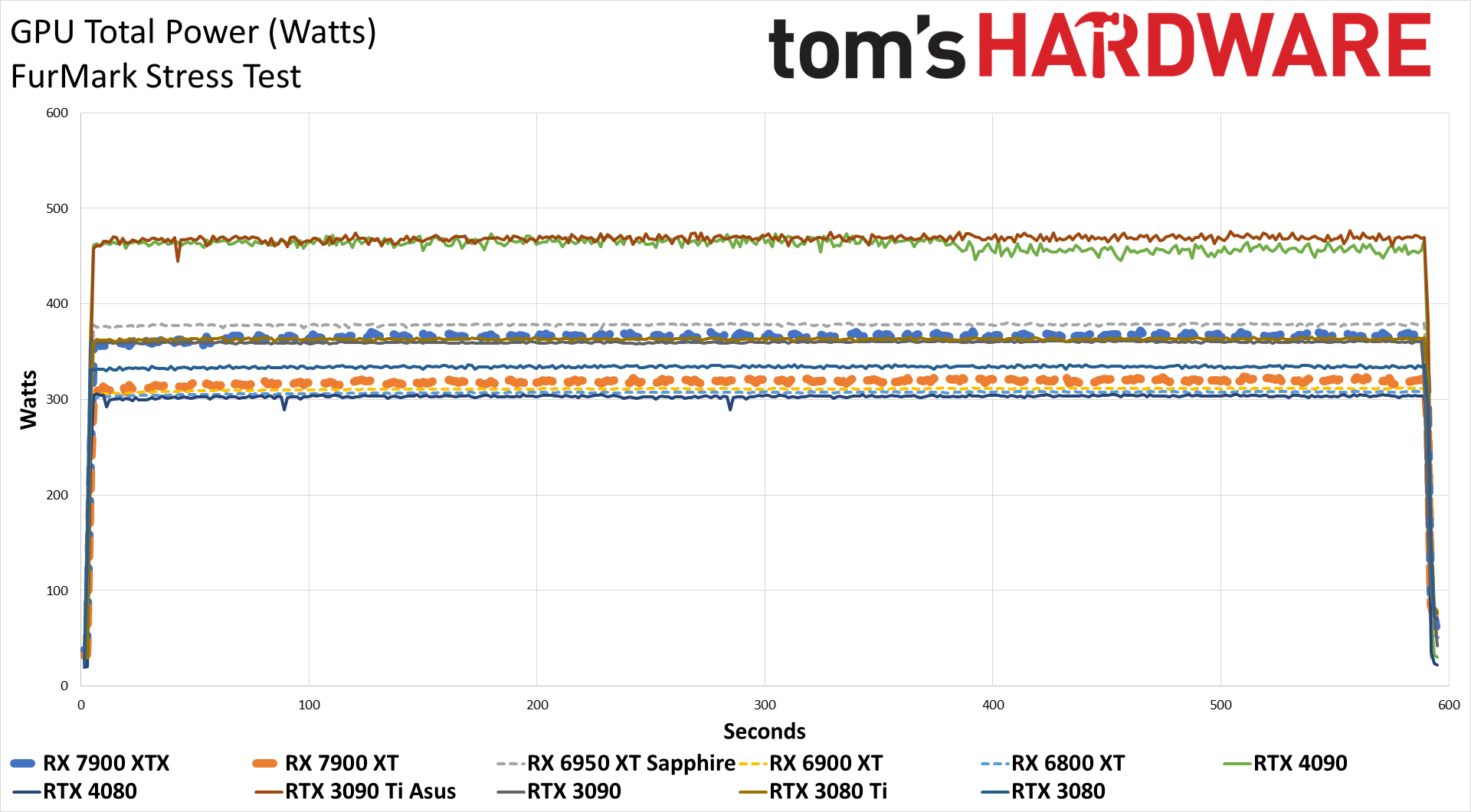

For the RX 7900 cards, we ran Metro at 3840x2160 using the Extreme preset (no ray tracing), and we ran FurMark at 2560x1440. The following charts are intended to represent something of a worst-case scenario for power consumption, temps, etc.

AMD bumped up the TBP on the 7900 XT to 315W instead of the original 300W, and in FurMark we just barely exceeded that mark. The same goes for the RX 7900 XTX, which hit 366W against its 355W TBP. Our Metro Exodus power tests both end up around 5W lower than the rated TBP, but it's worth noting that in other gaming tests, particularly at 1080p, power use can be quite a bit lower (see below).

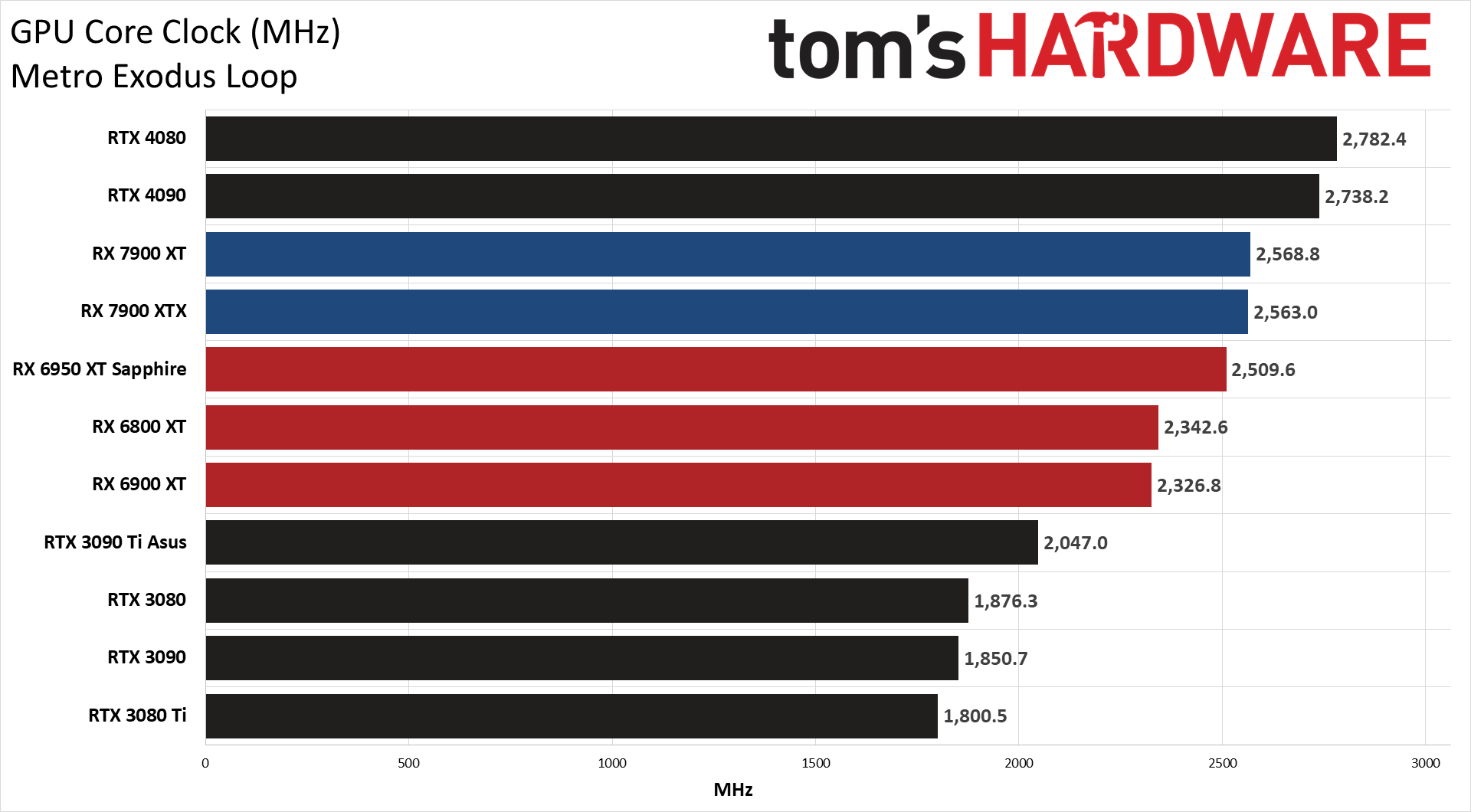

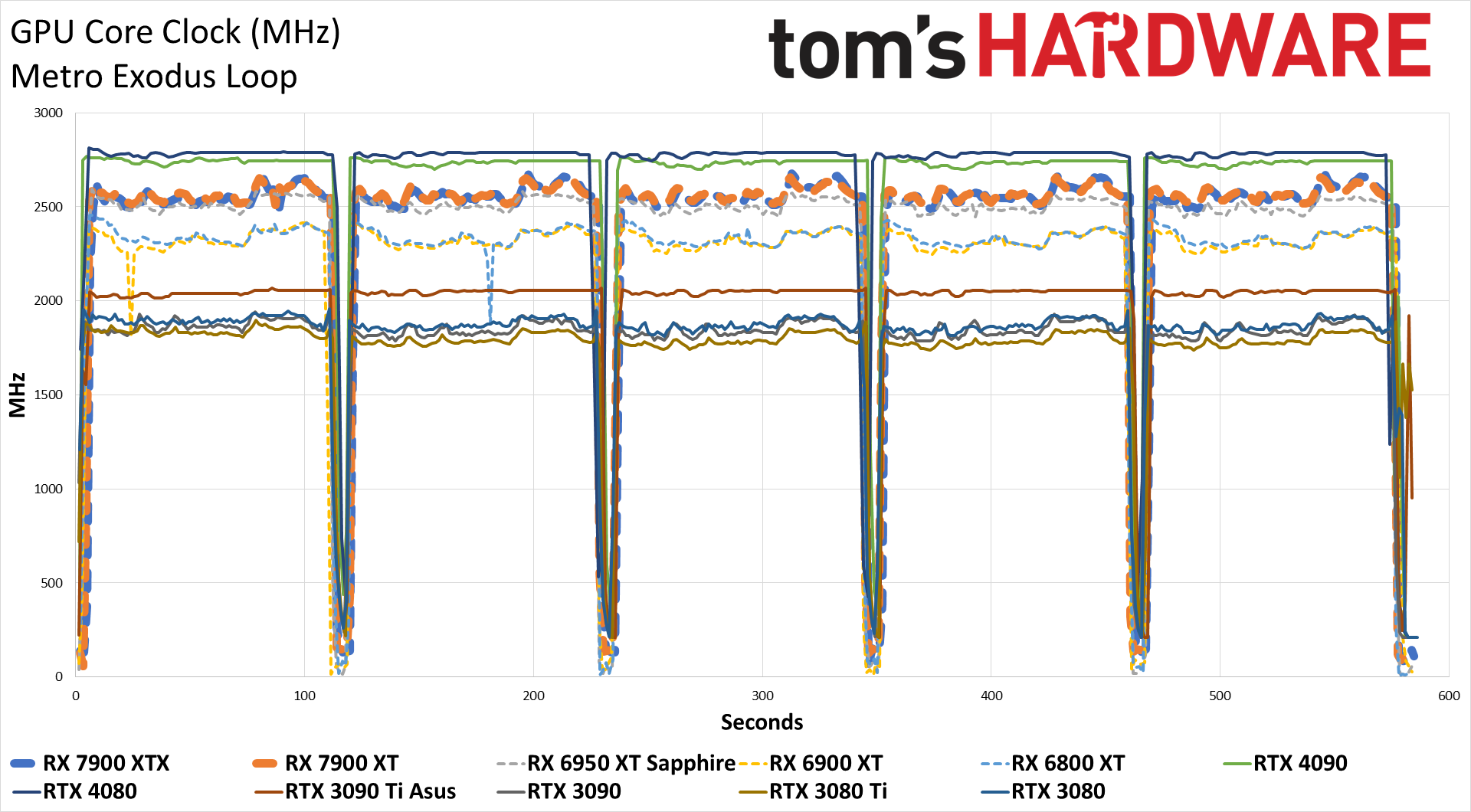

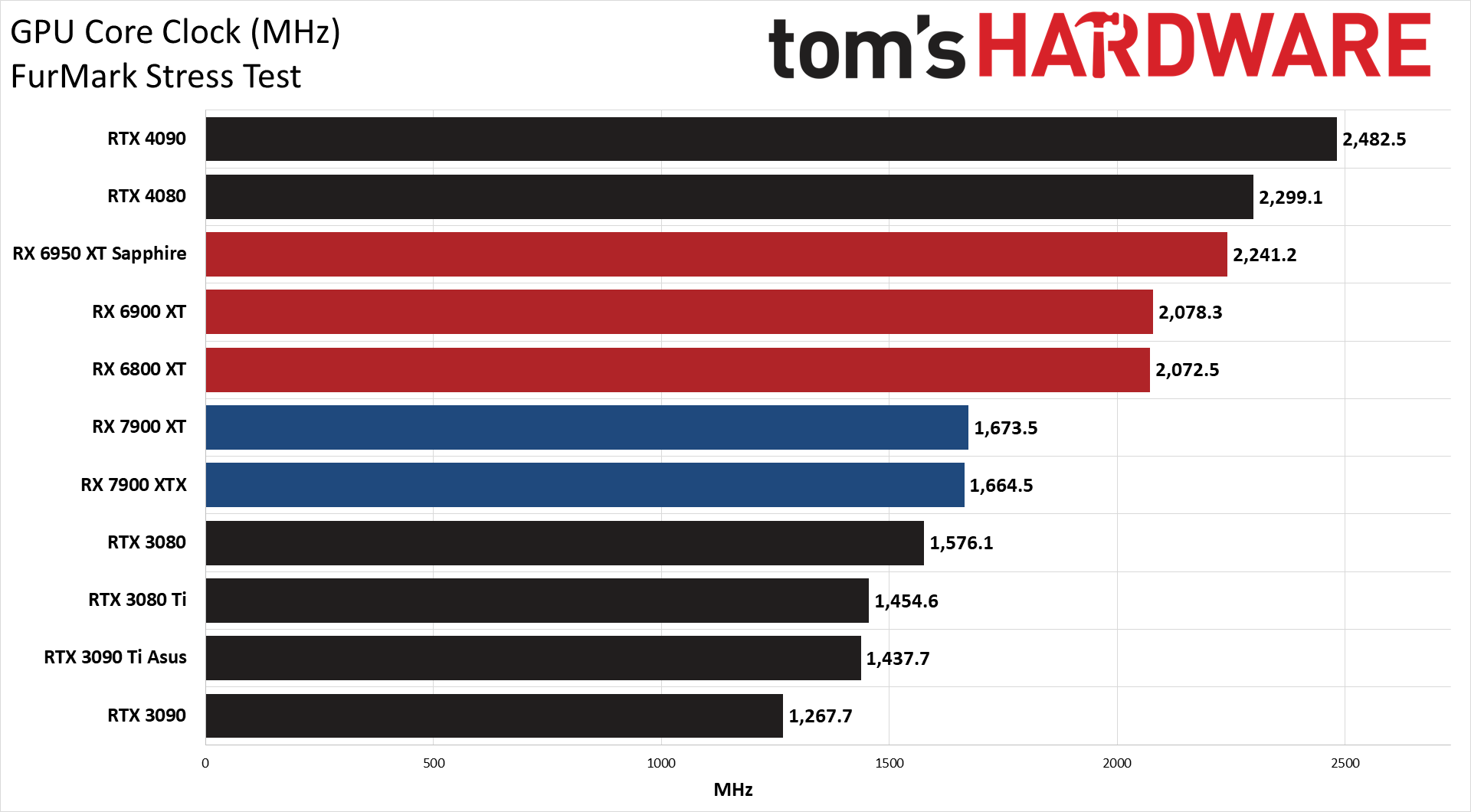

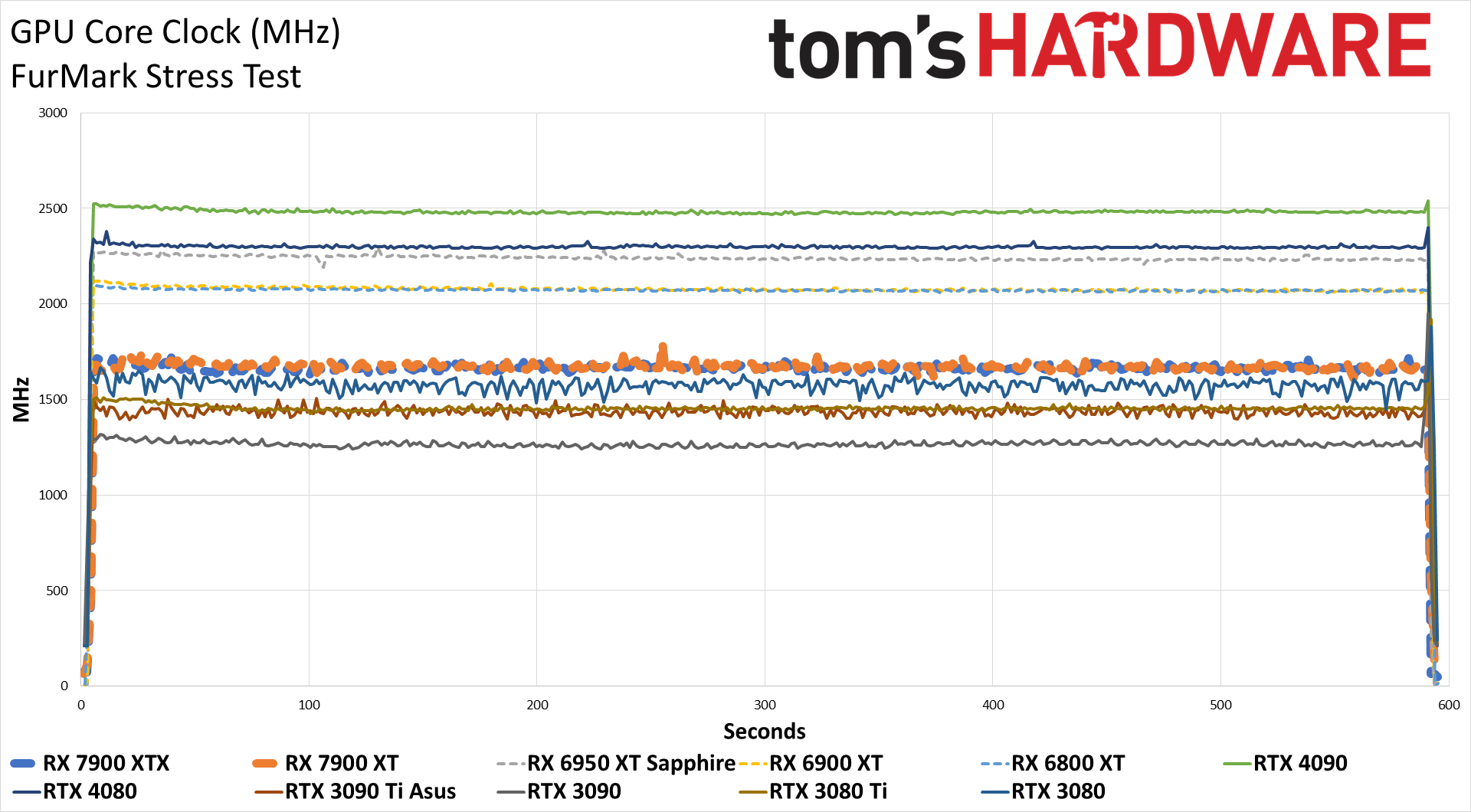

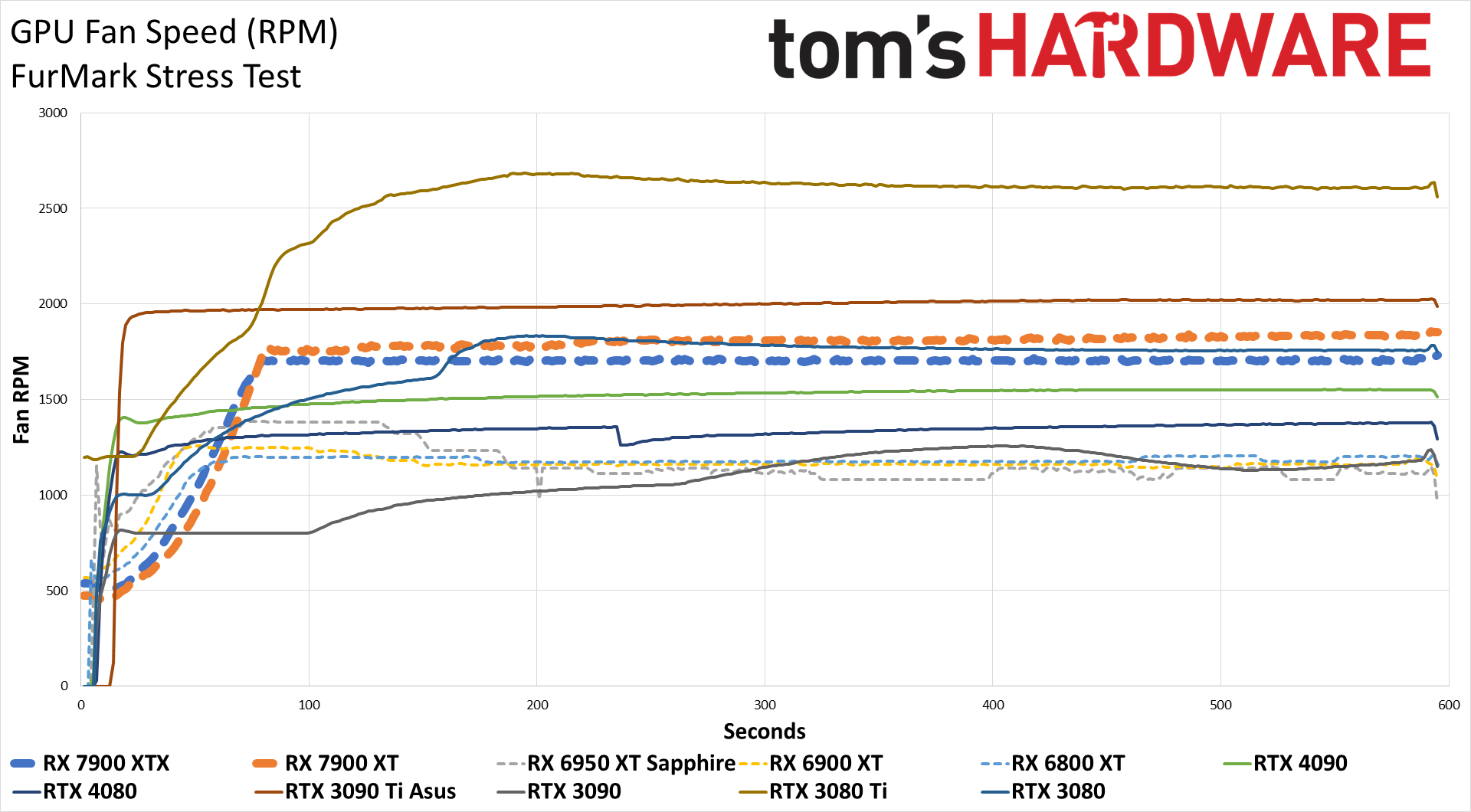

Clock speeds on both cards landed above the 2.5 GHz mark while gaming, though as you'd expect, the clocks were quite a bit lower in FurMark. In fact, in FurMark it looks like AMD's new cards throttle more than just about any other GPU we've tested recently. That's not a huge issue, as FurMark represents an atypical "worst-case" workload — though that could change in the future.

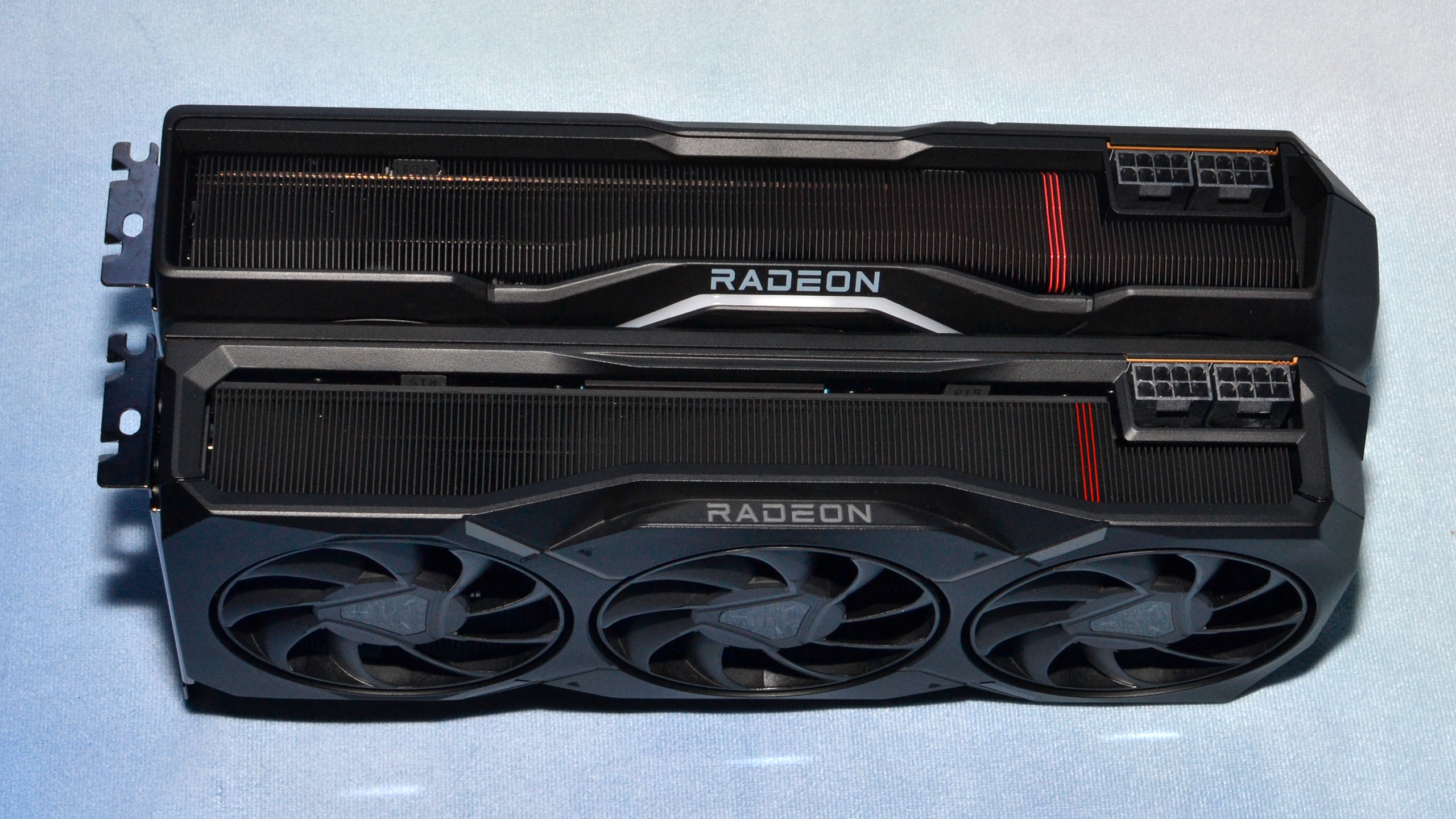

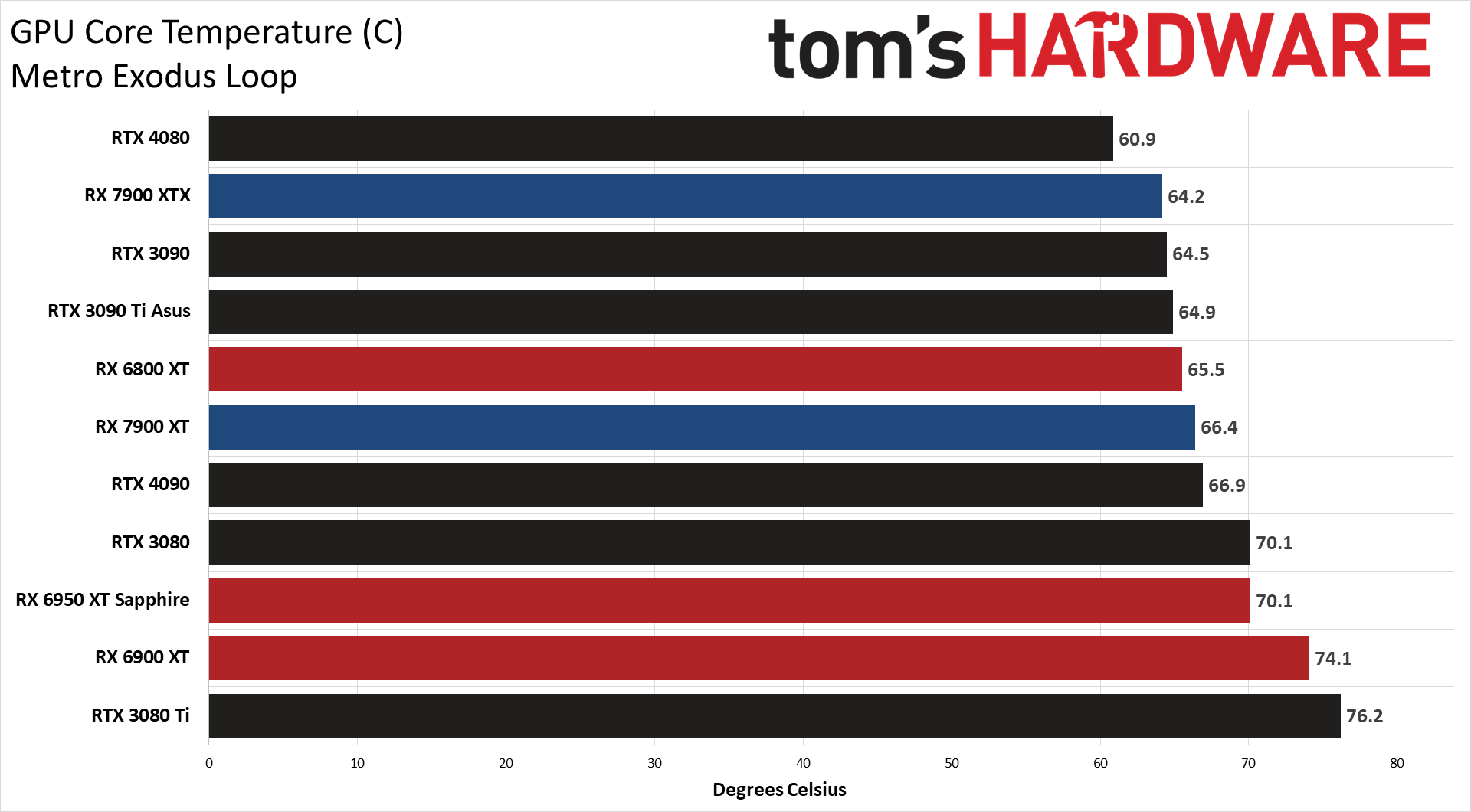

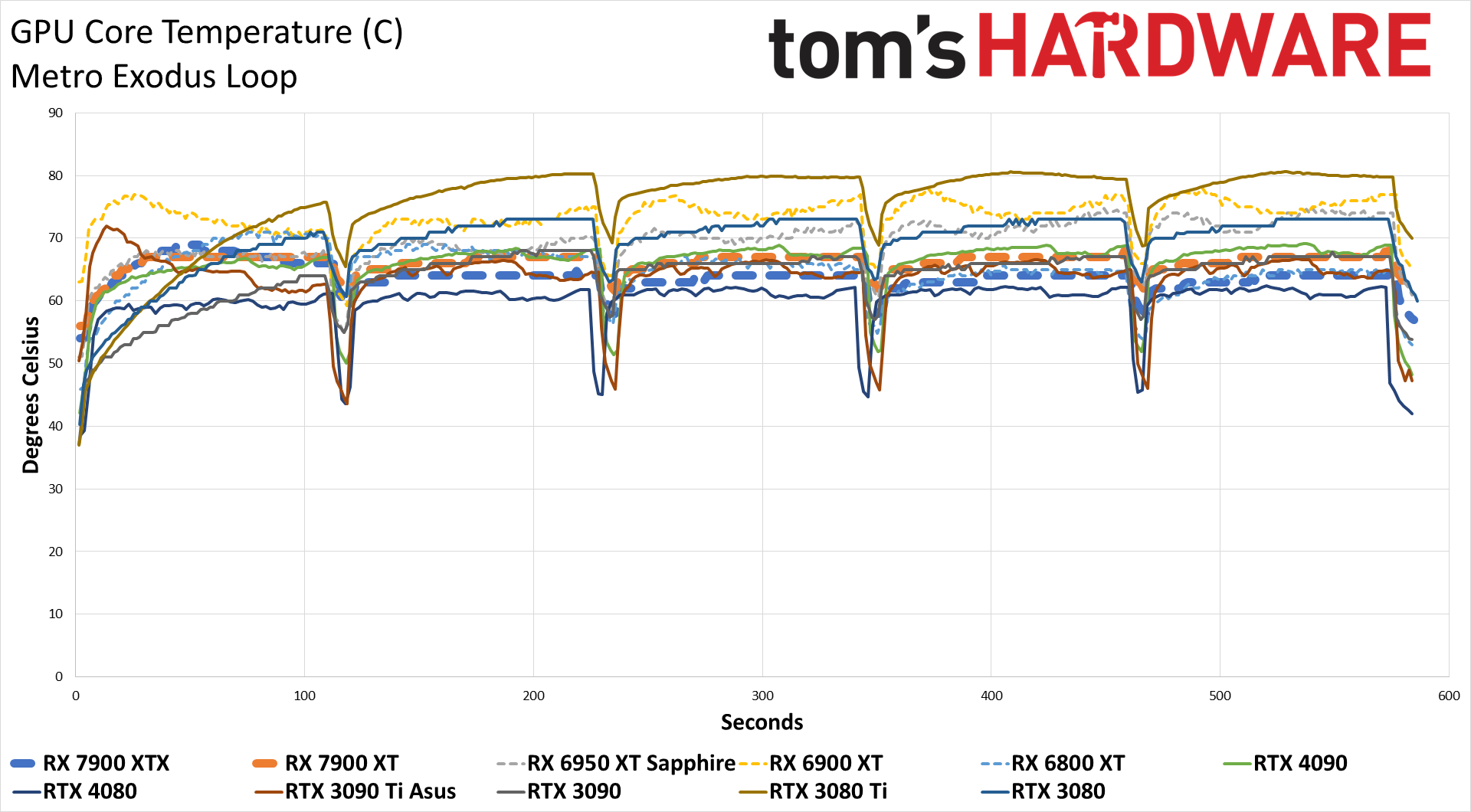

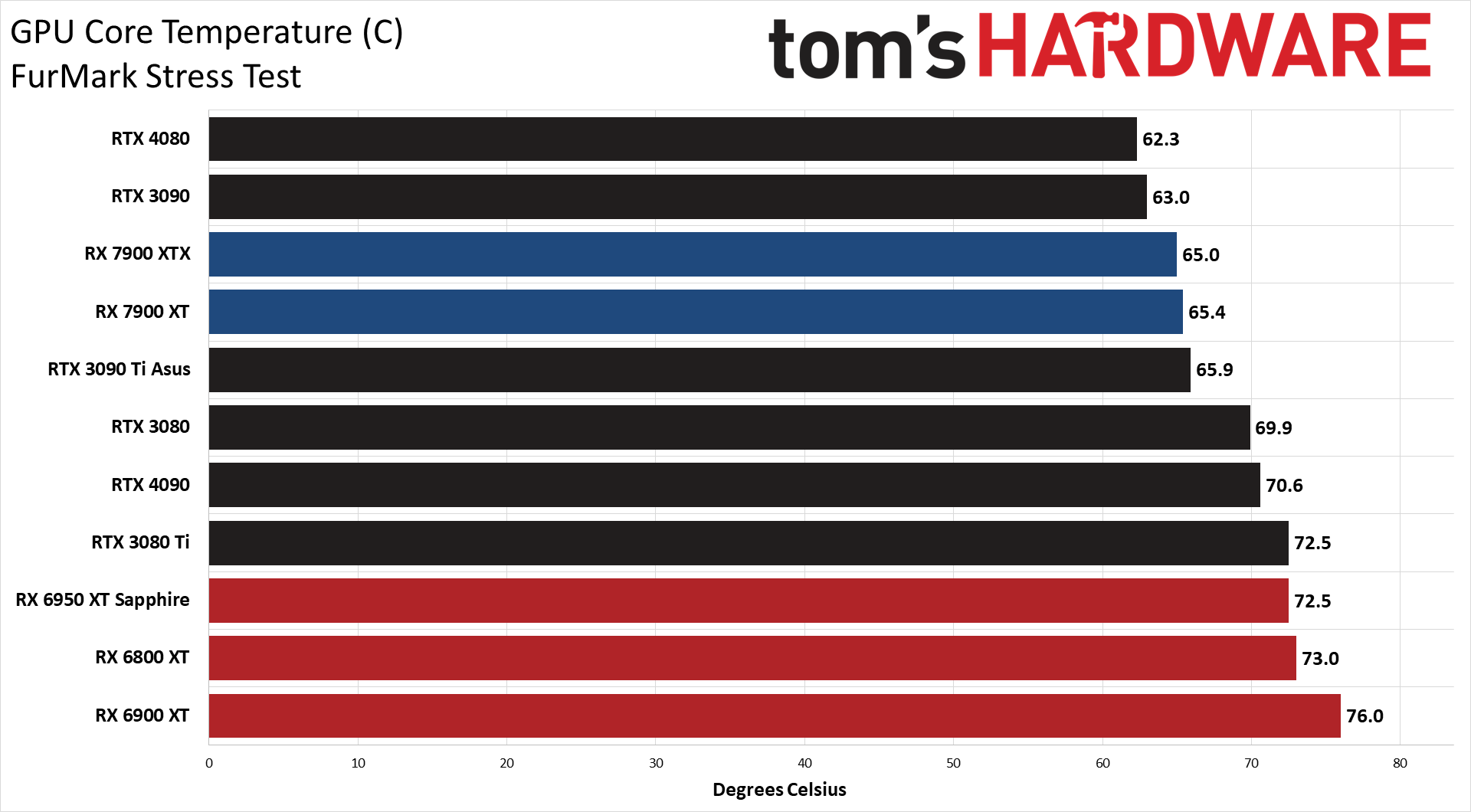

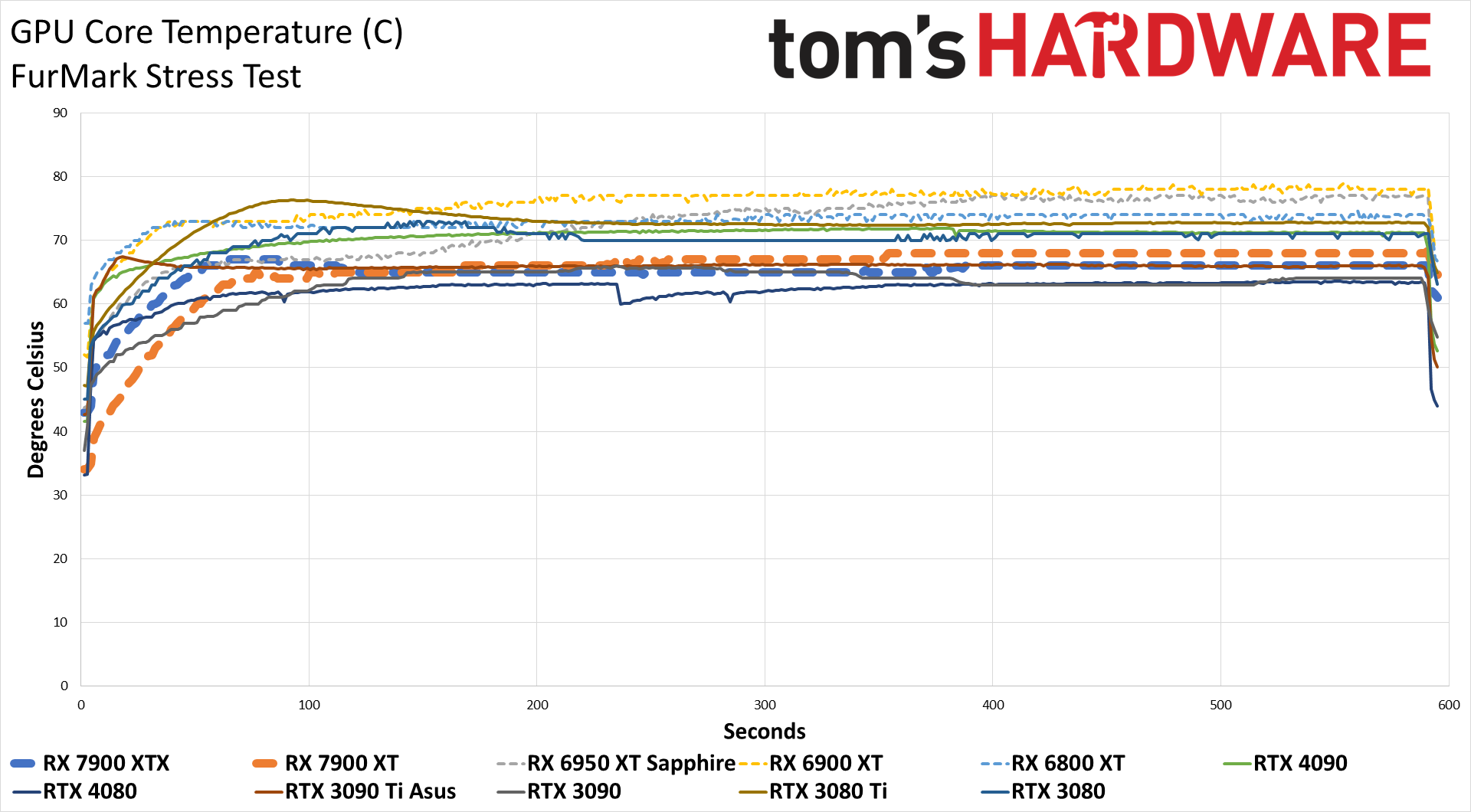

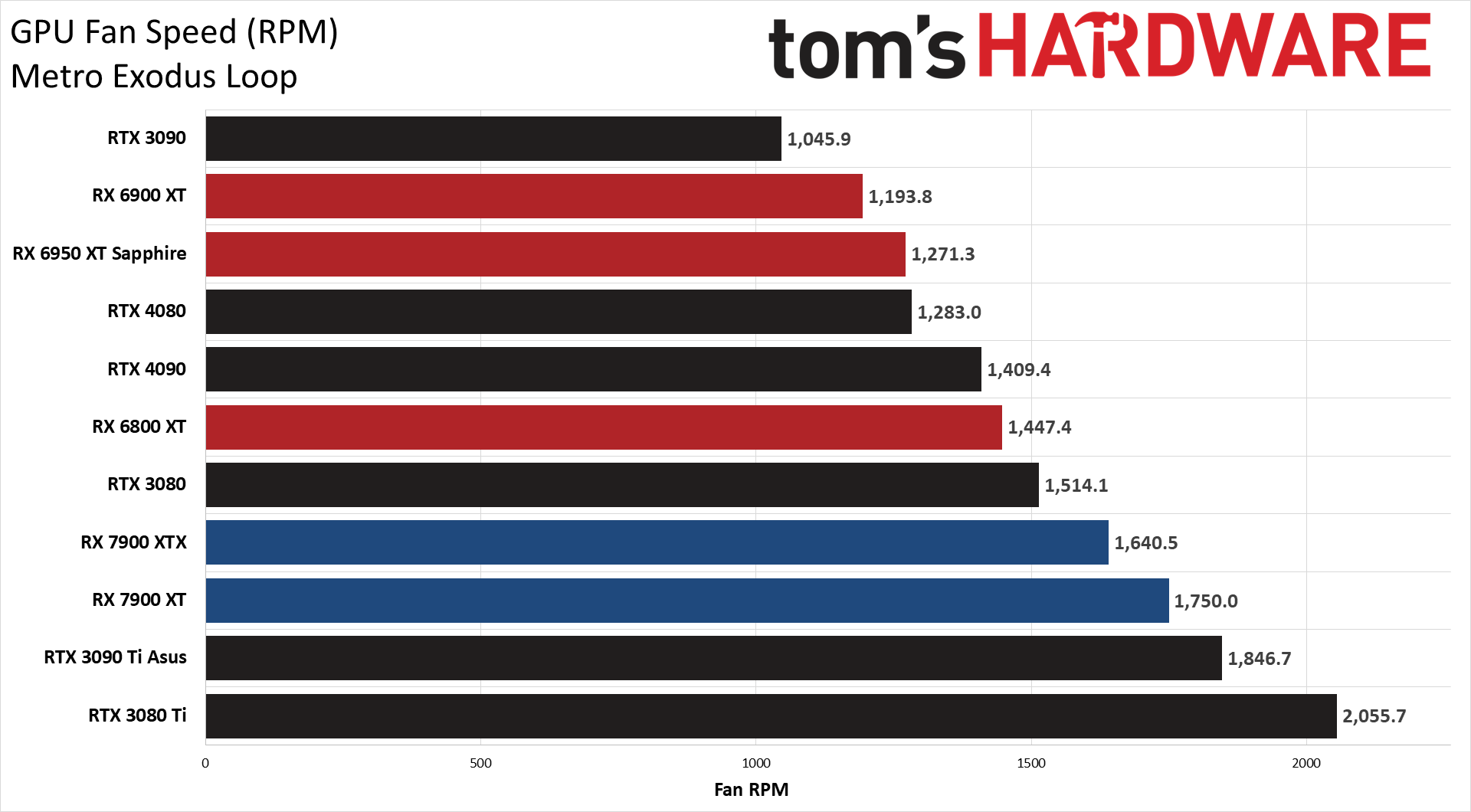

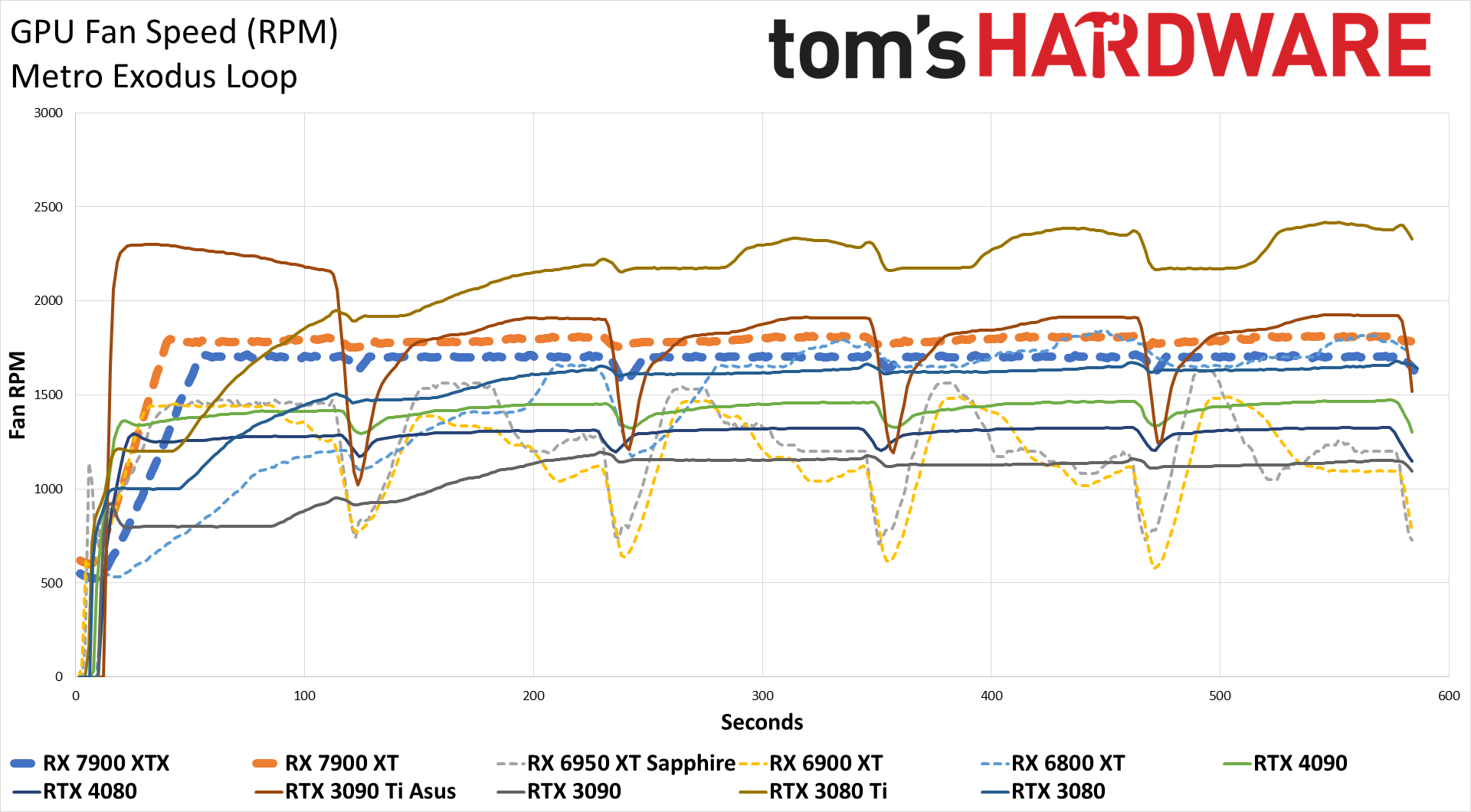

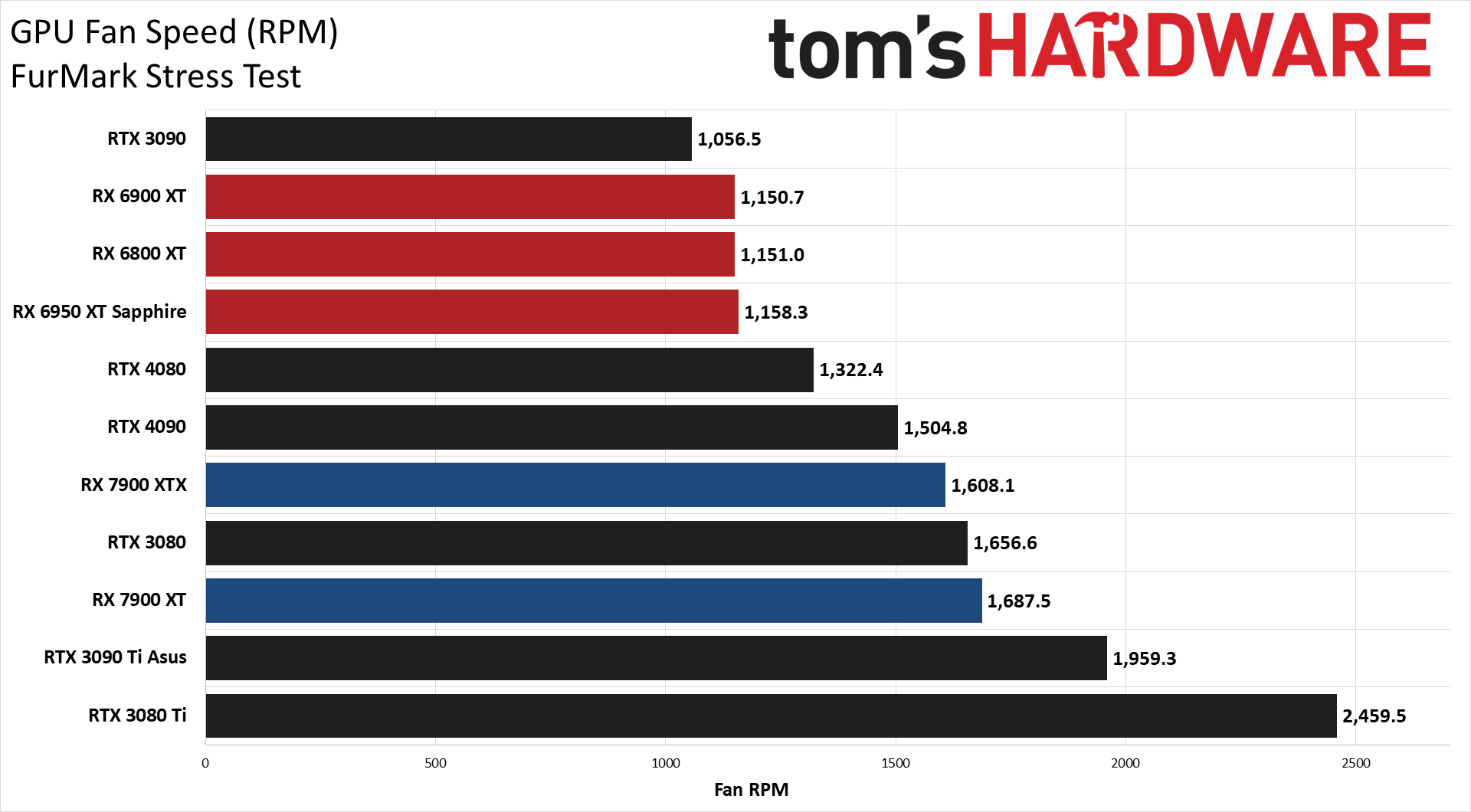

GPU temperatures remained below 70C in all of our testing, with fan speeds south of 2,000 RPM. The fan speed and temperature results also line up with what we experienced in regular use, as the XTX card has a larger and more potent cooling subsystem and often runs noticeably quieter than the XT model.

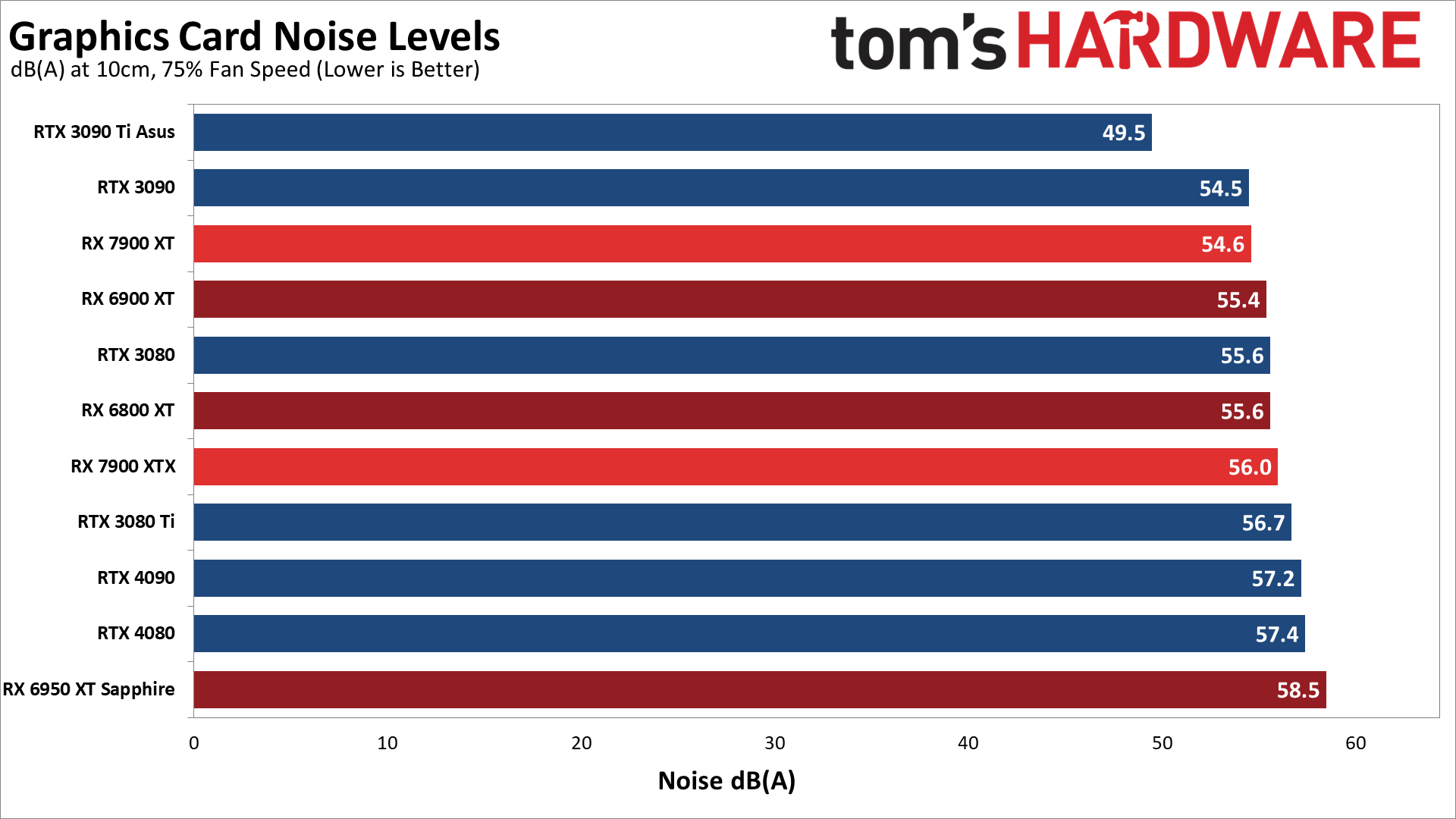

Of course, that doesn't always happen. Running Metro Exodus for 30 minutes, the XTX card eventually settled in at 49.9 dB(A) while the 7900 XT was a bit quieter with 48.7 dB(A). AMD's reference designs are pretty good overall, and we like the way they look, but they're definitely not among the quietest graphics cards we've tested.

Full Suite FrameView and PCAT Testing Results

Besides our Powenetics testing, we collect all of our frametime data using Nvidia's FrameView, which also logs clock speeds, temperatures, power, and GPU utilization (and plenty of other data as well). We've verified with our Powenetics equipment that Nvidia's PCAT results are close enough that we have no problem using PCAT going forward, as the results were within 1W of each other.

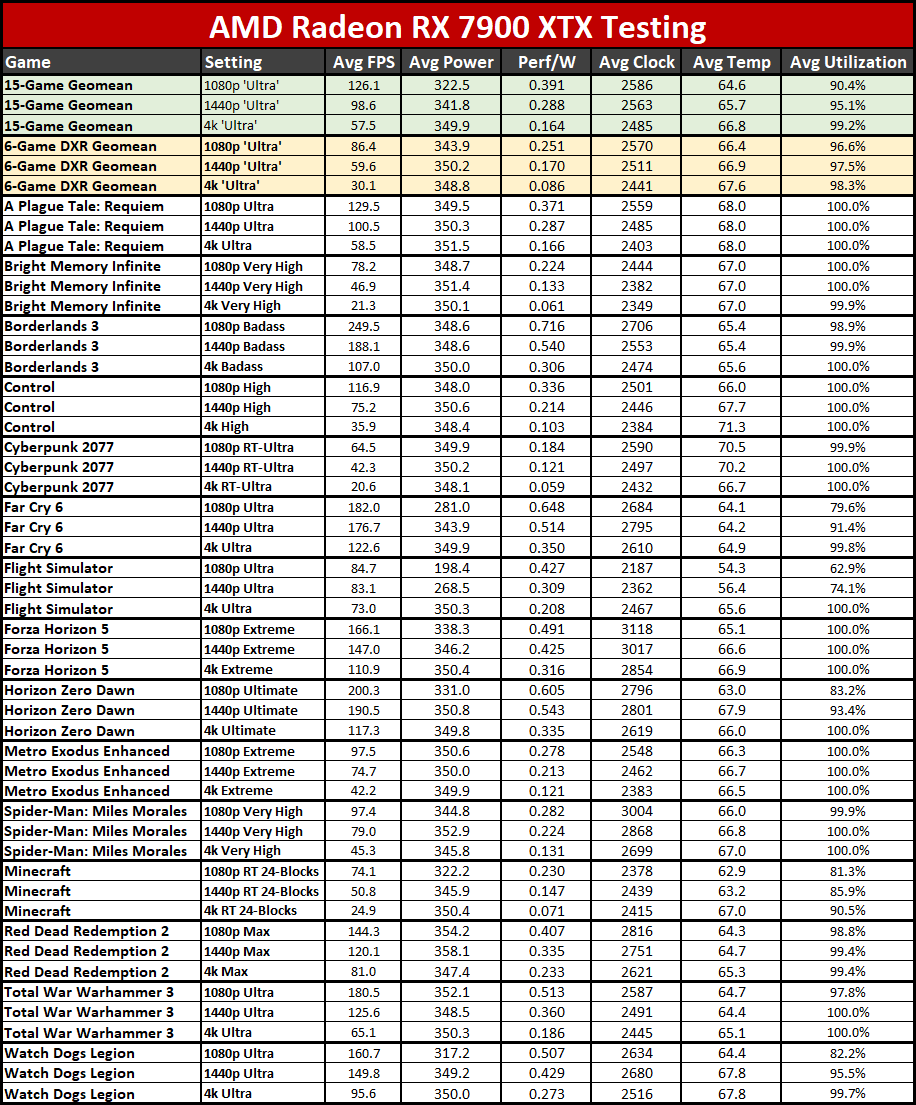

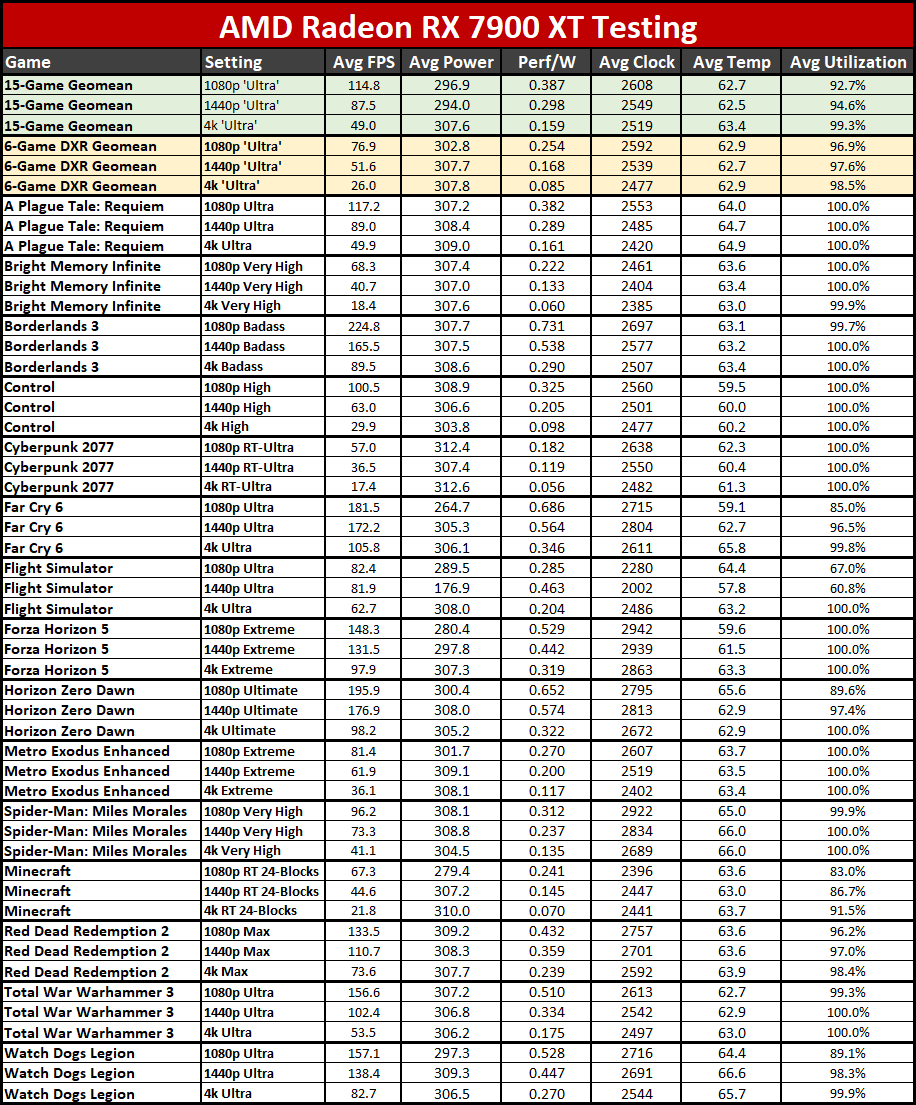

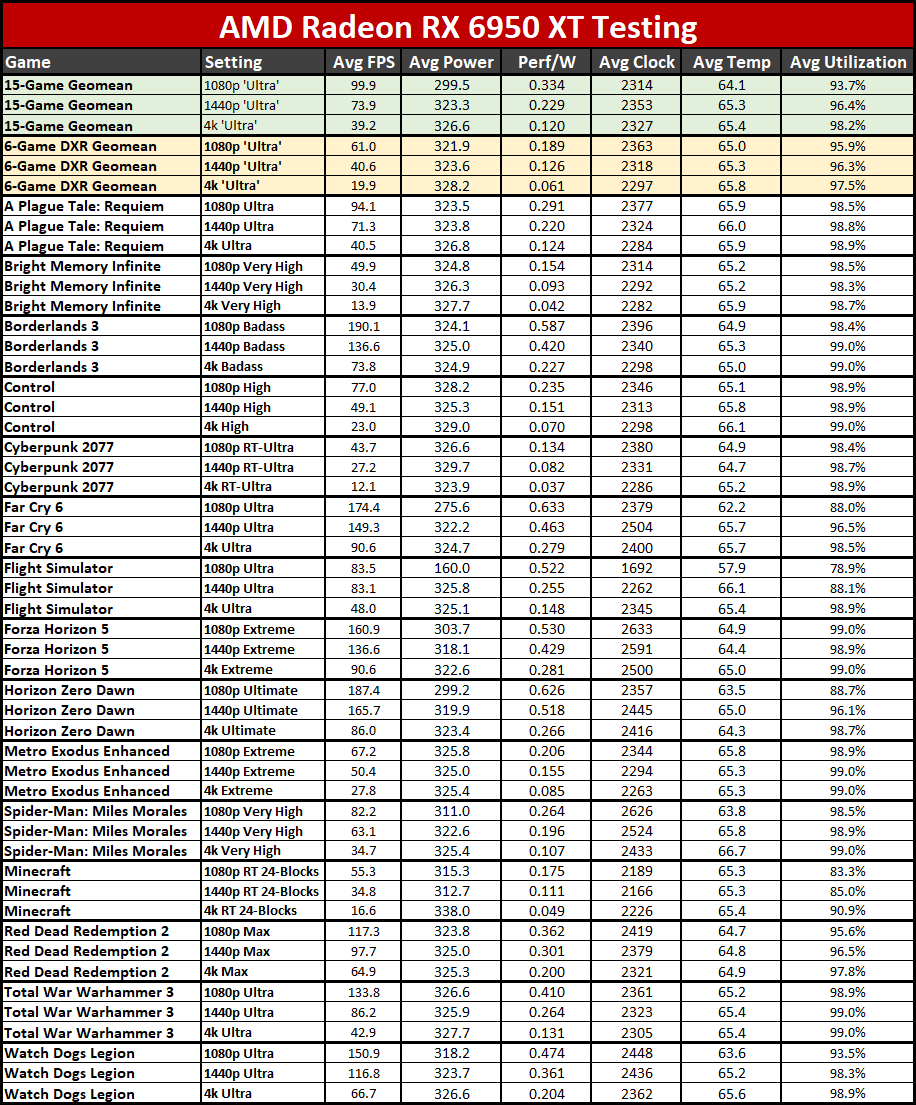

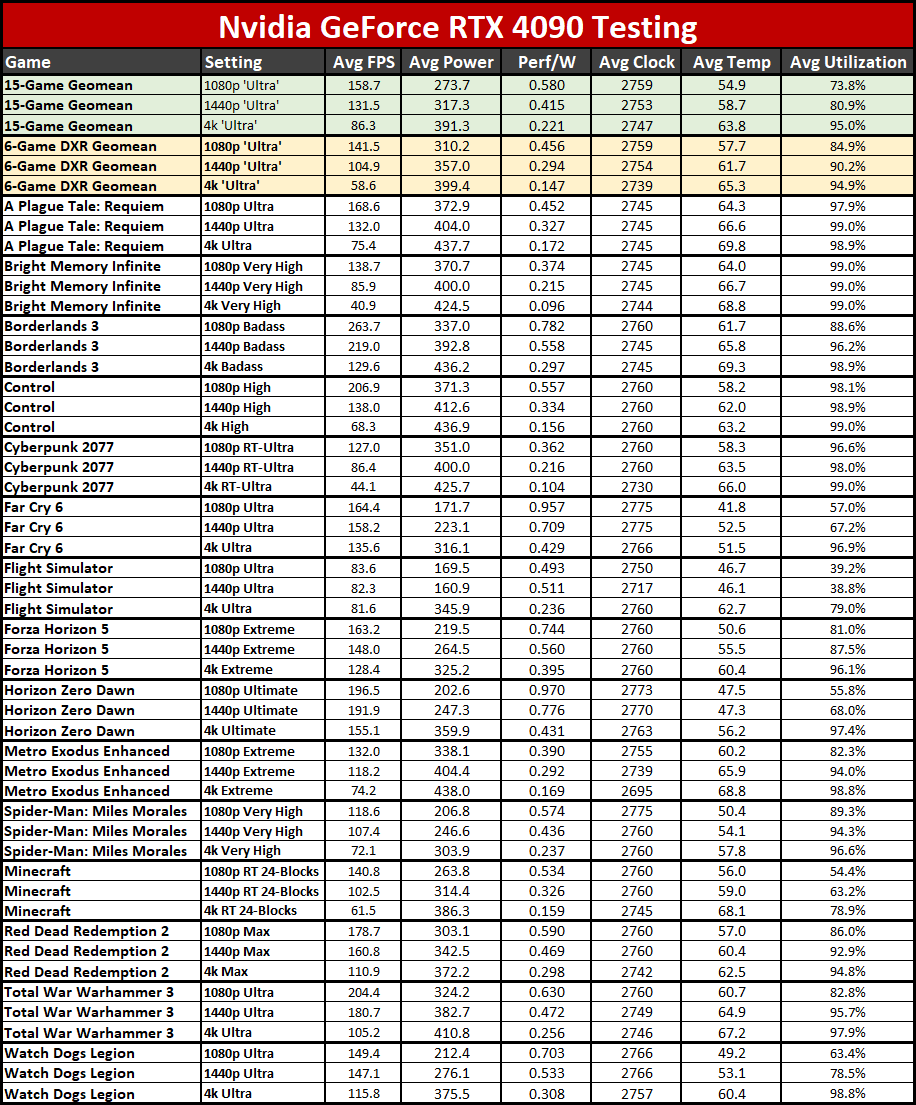

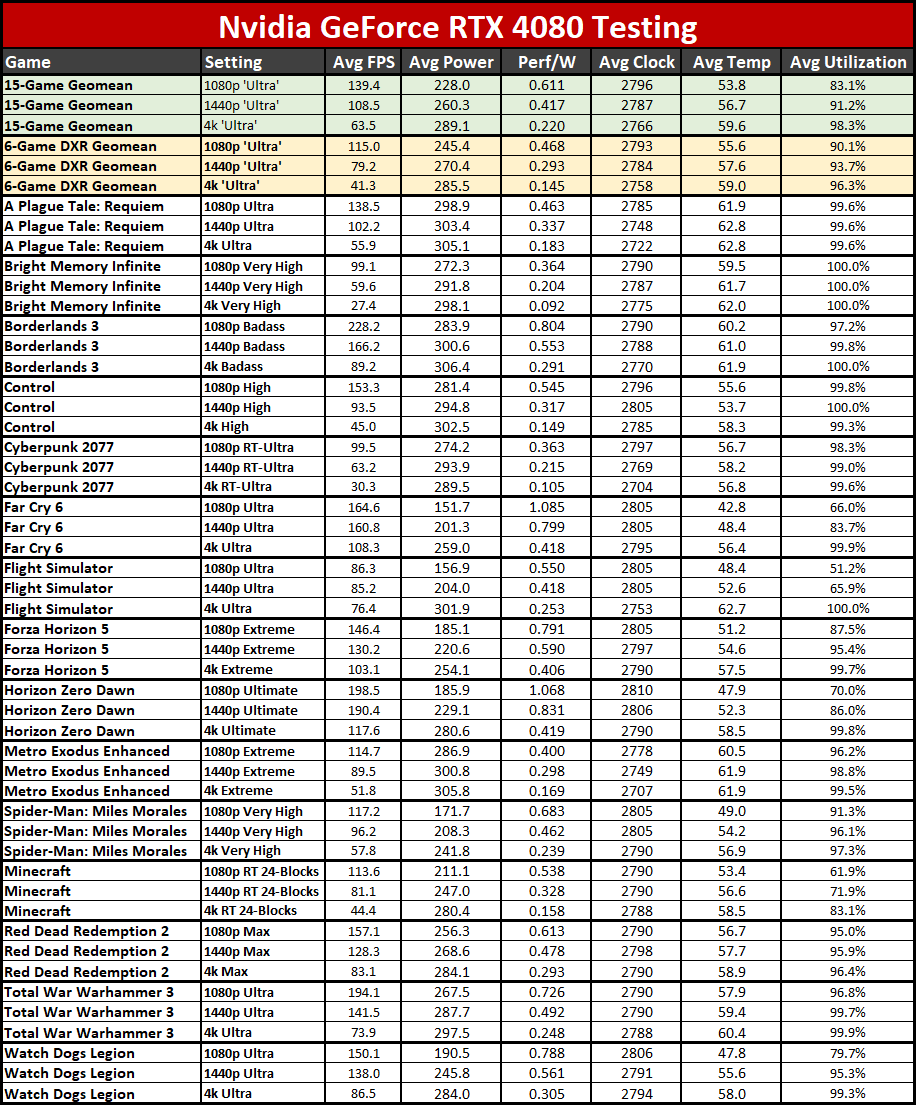

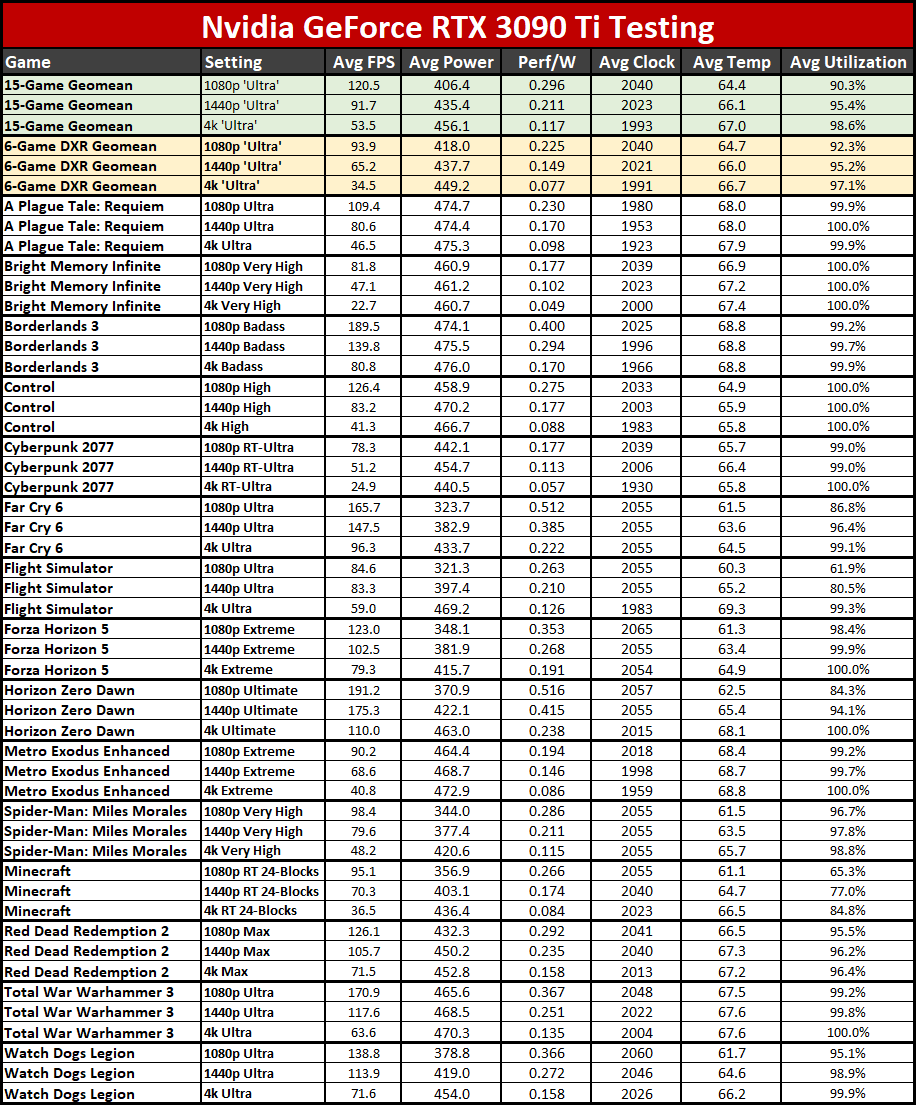

Below, we have the summarized test results from all 15 games, and we've calculated performance per watt for the main six GPUs from our testing. From AMD, we have the new RX 7900 XTX and RX 7900 XT, along with the previous generation RX 6950 XT; for Nvidia, we have the RTX 4090, RTX 4080, and the previous generation RTX 3090 Ti. Note that both the RX 6950 XT and RTX 3090 Ti use third-party cards: the ASRock RX 6950 XT Formula and the Asus RTX 3090 Ti TUF Gaming OC.

For AMD's new GPUs, most of the games we tested came pretty close to 100% GPU utilization, and as such the power levels are pretty close to the rated TBP. Far Cry 6, Flight Simulator, and Minecraft weren't quite as high as the others, particularly at 1080p, but overall in real-world gaming tests, the results match up pretty closely with what we measured in our Powenetics testing.

It's interesting that AMD raised the TBP slightly on the 7900 XT, and that may account for its higher than expected clocks. At 1080p and 4K, the XT model averaged higher GPU core clocks than the XTX variant, even though the latter officially has a 100 MHz higher boost clock.

Looking at the overall performance per watt data from the six GPUs, it's also fun to see just how much things have improved. Both the RX 6950 XT and RTX 3090 Ti land at an average of 0.12 FPS/W at 4K. AMD's RX 7900 series bumps that to 0.16 FPS/W, a 33% improvement, and the 7900 XTX actually comes out ahead of the 7900 XT. But Nvidia's RTX 4080 and 4090 take the efficiency crown in this case at 0.22 FPS/W at 4K, with the 4090 just edging past the 4080.

And yes, looking at the overall result isn't always the best solution, which is why we provided the full tables above. Looking just at Borderlands 3 at 4K, for example, which lands close to the GPUs' TBP for all six cards, the rankings shift a bit. The RX 6950 XT gets 0.23 FPS/W while the 7900 cards get 0.29 and 0.31 for the XT and XTX models, or 26% and 35% better performance per watt in that case. Doing the same for Nvidia, the RTX 3090 Ti gets 0.17 FPS/W, the 4080 gets 0.29 FPS/W, and the 4090 gets 0.30 FPS/W. Those are 71% and 76% generational improvements, but here at least the AMD cards match Nvidia in relative efficiency. Of course, that's largely thanks to the removal of the ray tracing results.
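For anyone who wants to check the generational percentages, here is a minimal Python sketch. It only takes the FPS/W figures quoted in the text and computes the relative gain; the function and variable names are our own illustrative choices, not part of any test tooling.

```python
def pct_improvement(old_fps_per_watt: float, new_fps_per_watt: float) -> float:
    """Relative efficiency gain of the new card over the old one, in percent."""
    return (new_fps_per_watt - old_fps_per_watt) / old_fps_per_watt * 100.0


# (label, previous-gen FPS/W, new-gen FPS/W), all values as quoted in the text.
comparisons = [
    ("Overall 4K: RX 6950 XT -> RX 7900 series", 0.12, 0.16),
    ("Borderlands 3 4K: RX 6950 XT -> RX 7900 XT", 0.23, 0.29),
    ("Borderlands 3 4K: RX 6950 XT -> RX 7900 XTX", 0.23, 0.31),
    ("Borderlands 3 4K: RTX 3090 Ti -> RTX 4080", 0.17, 0.29),
    ("Borderlands 3 4K: RTX 3090 Ti -> RTX 4090", 0.17, 0.30),
]

for label, old, new in comparisons:
    print(f"{label}: {pct_improvement(old, new):.0f}% better FPS/W")
```

Because the inputs are already rounded to two decimal places, the results can differ by a point or so from percentages computed on the unrounded data.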


Current page: Radeon RX 7900: Power, Clocks, Temps, Fans, and Noise


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

-Fran-: Thanks for the review!

I'm reading it now, but I've watched numbers in other places. My initial reaction is lukewarm* TBH. I expected a bit more, but they're not terrible either. They did fall short of AMD's promise though. They indeed oversell the capabilities on raster, but were pretty on point for RT increases.

Still, this card better have a "fine wine" effect down the line and the MSRP may just be well justified. This being said, it is still too expensive for what it is.

Regards.

spongiemaster: "That's probably because this is the most competitive AMD has been in the consumer graphics market in quite some time."

Not sure how this was determined, but I would argue this is a step backwards in almost every situation from the 6000 series. Also, it should be pointed out that there is something going on with the power consumption of the 7000 series in non-gaming situations that will affect many users. Looks like the memory isn't down clocking or something.

JarredWaltonGPU:

spongiemaster said: Not sure how this was determined, but I would argue this is a step backwards in almost every situation from the 6000 series. Also, it should be pointed out that there is something going on with the power consumption of the 7000 series in non-gaming situations that will affect many users. Looks like the memory isn't down clocking or something.

I don't do a ton of power testing scenarios, so I'd have to look into that more... and I really need to go sleep. As for the "being competitive," AMD is pretty much on par with Nvidia's best in rasterization (similar to 6000-series), and it's at least narrowed the gap in ray tracing. Or maybe that's just my perception? Anyway, since basically Pascal, it's felt like AMD GPUs have been very behind Nvidia. Nvidia offers more performance and more features, at an admittedly higher price.

Colif: Steve appears to have recorded a 750 W transient on the XTX, which makes me sit back and wonder what PSU you need, though I am not sure if that is peak system power or just the GPU itself.

Elusive Ruse: A bit of a cynical Pros/Cons section, no mention of the XTX being a much better value than the 4080, which is its direct competition?

Performance falls within expected margins (reasonable expectations, not that of crazed fanboys). Beating the 4080 in rasterization and falling short in RT and professional uses. I don't quite care for RT, but the performance gap in Blender, for example, is still eye-popping. I have heard of Blender 3.5 offering big improvements, yet that's not the current reality of things. I also doubt this will be a better story for RDNA 3 in Maya (Arnold) either.

shADy81: I assume the 750 W transient GN recorded is for the full system? TPU are showing a 455 W spike for the XTX and 412 W for the XT, lower than the 6900 and 6800 by quite some way on their charts.

They also downgraded their PSU recommendation to 650 W for both cards. It was 1000 W on the 6900. I think I'd feel a bit close on 650 W, even allowing for a good quality unit being able to supply more than rated for short times. 650 W would surely be way too close for a 13900K system. What do they know that I don't?

zecoeco:

spongiemaster said: Not sure how this was determined, but I would argue this is a step backwards in almost every situation from the 6000 series. Also, it should be pointed out that there is something going on with the power consumption of the 7000 series in non-gaming situations that will affect many users. Looks like the memory isn't down clocking or something.

This is actually a bug that was already reported to AMD and they're already working on a fix.

zecoeco: "Chiplets don't actually improve performance (and may hurt it)"

How on earth is this even a con? Who said chiplets are for performance? Chiplets are for cost saving.

But what did y'all expect? You just can't complain at this price point. There you go, chiplets saved you $200 and gave you 24GB of VRAM as a bonus (versus 16GB on the 4080).

It is meant for GAMING, so don't expect productivity performance, for many reasons including Nvidia's CUDA cores, which every major software package is optimized for.

RDNA is going in the right direction with chiplets, in an industry of increasing costs year after year.

Chiplet design is a solution, and not a new groundbreaking feature that's meant to boost performance.

Sadly, instead of working on the problem, Nvidia decided to give excuses such as "Moore's law is dead".

salgado18: "But for under a grand, right now the RX 7900 XTX delivers plenty to like and at least keeps pace with the more expensive RTX 4080. All you have to do is lose a good sized chunk of ray tracing performance, and hope that FSR2 can continue catching up to DLSS."

That, I believe, is the reason AMD won't increase their market share much in this generation. Yes, rasterization is comparable, so are power, memory, price and even upscaling performance/quality. But it is a bad card for raytracing, or at least that's the message, and between a full card and a crippled card, people will prefer the fully featured one. I know designing GPUs is a monstrously complex task, but they really needed to up their RT performance by at least 3x to be competitive. Now they will keep being "bang-for-buck", which is nice, but never "the best".

Edit: by some rough calcs, if the XTX is ~40% faster without RT than the 6950, and ~50% faster with RT, then the generational improvement is ~7%? If so, then that's hardly any improvement at all. Great cards and all that, but I'm very disappointed with the lack of focus on RT.

btmedic04:

salgado18 said: That, I believe, is the reason AMD won't increase too much their market share in this generation. Yes, rasterization is comparable, so are power, memory, price and even upscaling performance/quality. But it is a bad card for raytracing, or at least that's the message, and between a full card and a crippled card, people will prefer the fully featured one. I know designing GPUs is a monstrously complex task, but they really needed to up their RT performance by at least 3x to be competitive. Now they will keep being "bang-for-buck", which is nice, but never "the best".

Edit: by some rough calcs, if the XTX is ~40% faster without RT than the 6950, and ~50% faster with RT, then the generational improvement is ~7%? If so, then that's hardly any improvement at all. Great cards and all that, but I'm very disappointed with the lack of focus on RT.

AMD has a definite physical size advantage though, which is something that's applicable to quite a few folks (myself included). I hear what you are saying, but as a 3090 owner, 3090-like RT performance from the 7900 XTX is still quite good for most people.