Blu-ray Done Right: How Does Your Integrated GPU Stack Up?

Image-Quality: HQV’s High-Definition Video Benchmark

Remember the drill before watching HD video: ensure that “pull-down detection” is enabled in the Radeon’s Catalyst drivers and that “inverse telecine” is activated in the GeForce drivers. Even though these options tend to be enabled by default, it's good practice to check.
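For readers unfamiliar with what those driver options undo, here is a minimal sketch of 2:3 pull-down and its inverse. It is illustrative only: the frame labels, the function names, and the label-matching shortcut are assumptions made for this example. Real drivers have to detect the cadence by comparing field contents, since video carries no such labels.

```python
def telecine_2_3(frames):
    """Spread film frames over interlaced fields using a 2:3 cadence.

    Hypothetical helper: each frame is just a label, and fields alternate
    between "top" and "bottom" parity.
    """
    fields = []
    for i, frame in enumerate(frames):
        repeats = 2 if i % 2 == 0 else 3  # 2 fields, then 3, then 2, ...
        for _ in range(repeats):
            parity = "top" if len(fields) % 2 == 0 else "bottom"
            fields.append((frame, parity))
    return fields


def inverse_telecine(fields):
    """Recover the film frames by collapsing runs of fields from one frame.

    Assumption: matching labels stand in for the content comparison a real
    detector would perform.
    """
    frames = []
    for frame, _parity in fields:
        if not frames or frames[-1] != frame:
            frames.append(frame)
    return frames


film = ["A", "B", "C", "D"]
fields = telecine_2_3(film)

# Pair consecutive fields into interlaced video frames.
video_frames = [(fields[i][0], fields[i + 1][0]) for i in range(0, len(fields), 2)]

print(video_frames)              # two of the five frames mix different film frames
print(inverse_telecine(fields))  # the original four film frames
```

Four film frames become five video frames, two of which combine fields from different film frames. Those mixed frames are what show up as combing when the cadence is not detected.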

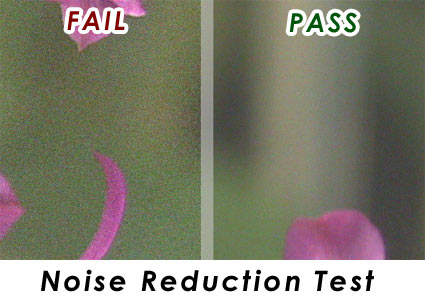

HD Noise-Reduction Test: Out of 25 Points

This test shows scenes that are plagued with noise artifacts. Good noise reduction mitigates most of these artifacts and makes the image appear more natural and much less grainy. The trick is to make the noise reduction work without losing detail.

| Graphics Processor | Score |
|---|---|
| Integrated Radeon HD 3200 | 25 |
| Integrated Radeon HD 4200 | 25 |
| Integrated GeForce 8200 | 25 |
| Integrated GeForce 9400 | 25 |
| Integrated Intel G45 | 25 |

Things are off to a very good start, with every integrated chipset achieving a full score. Intel's G45 does appear to apply noise reduction, but its quality isn't in the same league as the Radeon and Nvidia solutions; we still gave it a full score. Of the three vendors, the Radeons appear to have the highest-quality noise reduction, followed by Nvidia and then Intel. This is, of course, a somewhat subjective test, and since the Radeon and Nvidia chipsets clearly met the criteria, they both get full points.

It is interesting to note that noise reduction was not enabled by default on the GeForce boards. We found that a setting of 70% would give the best results, but personal taste will, of course, be a factor.

Most disturbing, however, was how the GeForce 8200 board tended to hiccup when noise reduction was used. So, while it achieved a perfect score in this synthetic test, noise reduction on this board is not really viable in a real-world situation.

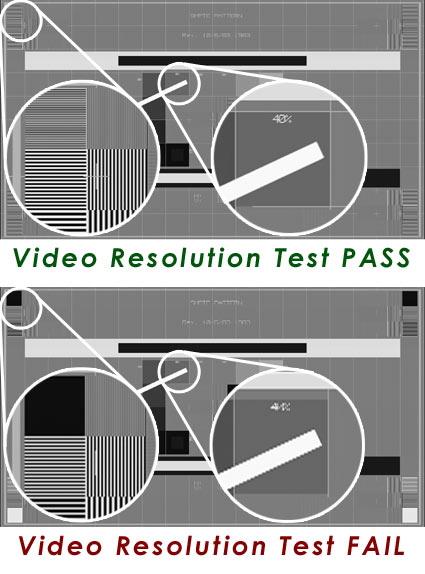

Video-Resolution Loss Test: Out of 20 Points


This test simply shows a pattern of lines and color bars from an interlaced source. If the playback hardware can show the smallest lines without flickering, it is successfully de-interlacing the image.

| Graphics Processor | Score |
|---|---|
| Integrated Radeon HD 3200 | 20 |
| Integrated Radeon HD 4200 | 20 |
| Integrated GeForce 8200 | 20 |
| Integrated GeForce 9400 | 20 |
| Integrated Intel G45 | 20 |

All of the integrated chipsets handle video resolution loss with no flickering and perform well in this test. While some of the chipsets took half a second or so to compensate for the video resolution loss, they all did the job adequately and scored the full 20 points. Note that the GeForce 8200 failed this test when using drivers newer than 182.5, such as the current 190.38 driver, so its points are awarded for the older driver.

HD Video-Reconstruction Test: Out of 20 Points

This test simply shows a few rotating lines in a circle and is also from an interlaced video source. As the angle changes, interlacing makes the lines appear to become stepped. Successful de-interlacing will remove this stepping.
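To see why a diagonal line turns into stair steps, consider a rough sketch that keeps only one field of a diagonal edge and rebuilds the missing lines two ways: plain line doubling, and edge-based line averaging (ELA), a textbook edge-directed interpolation. This is not a description of what any of the tested chipsets do; the image size, function names, and the choice of ELA are assumptions for illustration.

```python
def make_diagonal_edge(height=9, width=16, offset=3):
    """Synthetic frame: a 45-degree edge, 0.0 on the left and 1.0 on the right."""
    return [[1.0 if x >= y + offset else 0.0 for x in range(width)]
            for y in range(height)]


def line_double(frame):
    """Rebuild odd rows by copying the even row above (no diagonal filtering)."""
    out = [row[:] for row in frame]
    for y in range(1, len(frame), 2):
        out[y] = frame[y - 1][:]
    return out


def edge_directed(frame):
    """Rebuild odd rows with ELA: average along the best-matching direction."""
    height, width = len(frame), len(frame[0])
    out = [row[:] for row in frame]
    for y in range(1, height, 2):
        above = frame[y - 1]
        # Fall back to the row above if there is no even row below.
        below = frame[y + 1] if y + 1 < height else frame[y - 1]
        for x in range(width):
            best = None
            for d in (0, -1, 1):  # vertical first, then the two diagonals
                xa = min(max(x + d, 0), width - 1)
                xb = min(max(x - d, 0), width - 1)
                diff = abs(above[xa] - below[xb])
                if best is None or diff < best[0]:
                    best = (diff, (above[xa] + below[xb]) / 2)
            out[y][x] = best[1]
    return out


def mismatches(a, b):
    """Count pixels that differ between two frames."""
    return sum(1 for ra, rb in zip(a, b) for pa, pb in zip(ra, rb) if pa != pb)


truth = make_diagonal_edge()
print(mismatches(line_double(truth), truth))    # 4: one stepped pixel per rebuilt row
print(mismatches(edge_directed(truth), truth))  # 0: the edge is reconstructed
```

Both functions read only the even rows, so the odd rows of `truth` serve purely as the reference for counting errors. On this clean synthetic edge the diagonal search recovers every pixel; real video is noisier, which is where artifacts on the smoothed edges can creep in.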

| Graphics Processor | Score |
|---|---|
| Integrated Radeon HD 3200 | 0 |
| Integrated Radeon HD 4200 | 0 |
| Integrated GeForce 8200 | 0 |
| Integrated GeForce 9400 | 20 |
| Integrated Intel G45 | 10 |

There are some very interesting developments here, indeed.

First, the GeForce 9300/9400 was the only IGP able to achieve a full score in this test. However, it failed the test with the 190.38 driver and would only pass once we reverted to the 182.5 driver.

The Intel G45 managed to perform de-interlacing, but it didn't quite receive the full 20 points for this test because the smoothed edges would display some artifacts. Since it was clear that the G45 was performing some enhancements here, we gave it half points.

As far as the GeForce 8200 and Radeon solutions go, they performed no diagonal filtering whatsoever. This appears to be a feature they leave to their discrete card lineups, but since the Intel G45 can handle jaggy reconstruction, it's hard to believe the GeForce 8200 and Radeon 4200 don't have the power to handle it. Perhaps the feature was omitted to drive users toward add-in boards.
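The three tables on this page can be tallied with a few lines of Python. The dictionary keys and variable names are made up for this sketch, and the numbers are copied from the tables above. The result is only a subtotal out of 65 for this page's tests, since the benchmark continues on the next page, and it does not capture the driver-version caveats noted in the text.

```python
# Scores copied from this page's three tables (noise reduction out of 25,
# resolution loss out of 20, video reconstruction out of 20).
scores = {
    "Integrated Radeon HD 3200": {"noise": 25, "resolution": 20, "reconstruction": 0},
    "Integrated Radeon HD 4200": {"noise": 25, "resolution": 20, "reconstruction": 0},
    "Integrated GeForce 8200": {"noise": 25, "resolution": 20, "reconstruction": 0},
    "Integrated GeForce 9400": {"noise": 25, "resolution": 20, "reconstruction": 20},
    "Integrated Intel G45": {"noise": 25, "resolution": 20, "reconstruction": 10},
}

MAX_SUBTOTAL = 25 + 20 + 20  # points available on this page only

subtotals = {gpu: sum(tests.values()) for gpu, tests in scores.items()}

# Print the chipsets from highest to lowest subtotal.
for gpu, total in sorted(subtotals.items(), key=lambda item: -item[1]):
    print(f"{gpu}: {total}/{MAX_SUBTOTAL}")
```

So far the only separation comes from the video-reconstruction test: the GeForce 9400 sits at 65, the Intel G45 at 55, and the other three chipsets at 45.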


Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

- Proximon: Great article. I think maybe the 4650 is a bit overkill, but that's just nitpicking.

  As long as you are talking about HTPC builds though, you might want to mention temps... aren't the 9300/9400 boards very hot?

- epsiloneri: Power draw is not interesting because of the electricity bill, it is the generated heat needed to be dissipated with the associated noise levels due to cooling that is critical for an HTPC.

- HalfHuman: i don't get it why a home theater would use a 1200w power source. at the same time i don't get why would someone evaluate the power efficency using this kind of power sorce. if you ask me i'd make this crazy ass power supplies illegal. a normal hometheater should not use more than 50w at idle and 100-150w at load. seems that this is what these actually consume. factor in the less than 5% load on the power supply and you get a masterfull 50-60% power efficency. i'd love to see some proper power supply test.

- falchard: BTW, I would like to see a "Can it play Crysis" article in the future that runs down every video card and IGP, then determines if it can possibly play Crysis and at what settings.

- HalfHuman: the 1200w power supply is green as in blue-green mould green.

  this is in fact an excellent power supply... if you use it. at 100watts load it has a "cool" 76% efficency. if the intel pc uses less than 82watts in load and 66watts in idle you can only imagine the efficency a power supply has at below 5% load. the site suggest around 65% so instead of having a proper power supply using 40watts or less when idle, you get this "green" efficient hummer who swollows 66w. i really like you articles guys but this kind of testing is not the way to go.

- Efficiency isn't even tested below 20% load i believe But it should still be around 70-80% it is a Thermaltake Toughpower 1200w and all of them(3 listed on their site) are standard 80% eff rated or bronze. Ture a more modest Delta,Seasonic 250w or 300w would be much more appropriate for a htpc.

- HalfHuman: 20% for this would be 240watts and efficency would still be reasonable.

  i posted some link but i see it's been removed. that review said something about 65% minimum.

- drew_a: Uh, guys... you might want to edit this article...

  "For the last CPU utilization test, we will check the capability of these graphic chipsets to accelerate picture-in-picture (PIP) video streams. To do this, we will use the Blu-ray dick Sunshine, which utilizes the H.264 codec and features PIP commentary during playback."

  on page 6

- icepick314: "If you are an audiophile, you should know that out of these remaining options, only the GeForce 9300/9400 can handle uncompressed eight-channel LPCM audio over HDMI 1.3."

  i did NOT know this...

  i thought only way to listen to uncompressed audio on blu-ray was using Asus Xonar HDAV 1.3 audio card to bitstream to your receiver...

  it's nice to know that IGP has enough power to handle 1080p while streaming HD audio codec....