Five Overclocked GeForce GTX 560 Cards, Rounded Up

We were foiled in our quest to find the best vendor-provided GPU cooler for Nvidia's GeForce GTX 560. But out of the ashes sprang a round-up of cards armed with those very same solutions. Which of these five GF114-based boards is right for you?

Galaxy 56NGH6DH4TTX GeForce GTX 560 MDT x4

Galaxy’s entry is unique: it is the only GeForce GTX 560 that supports four monitors from a single dual-slot board, and it doesn’t require a second card in SLI to enable three-screen gaming.

Priced at $230 on Newegg, it is the most expensive card in our round-up, but not by much. It’s only $10 more than the factory-overclocked Asus and Zotac offerings.

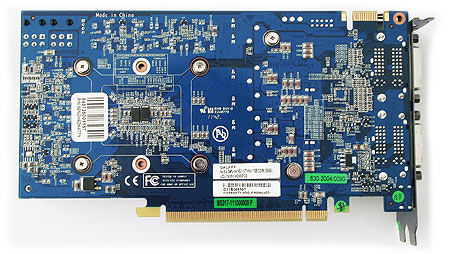

Despite its additional display connectivity, Galaxy’s MDT x4 is actually the smallest card here, with a PCB that measures 8.25” x 4.5” and overall dimensions of 8.75” x 5”, including the bezel.

In an apparent compromise for its unique output configuration, Galaxy's card sports the lowest operating frequencies of our five tested boards. Although an 830 MHz core still counts as overclocked, it's only 20 MHz higher than Nvidia's reference. The 1002 MHz memory clock exactly matches the first GeForce GTX 560 we received from Nvidia. On a brighter note, this card's twin six-pin power inputs sit on top of the PCB, where we prefer them.

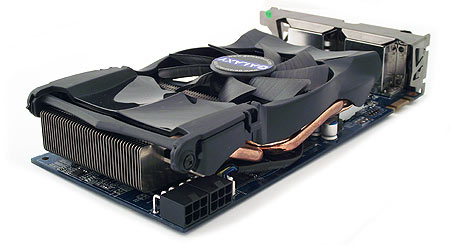

Galaxy’s compact cooler employs three 6 mm heat pipes to carry thermal energy away from the GPU and into an array of aluminum fins. A single 3.5” radial fan then dissipates the heat from those fins.

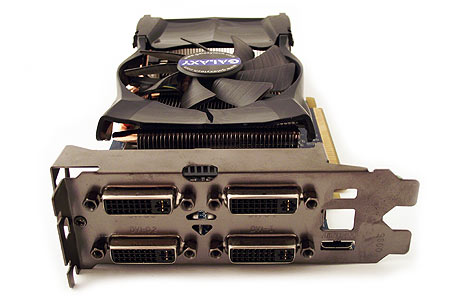

As a multi-display card, Galaxy’s MDT x4 boasts the most interesting I/O panel on our bench. Four DVI connectors and a single mini-HDMI output leave no room back there for additional ventilation. This isn't really a problem, though, because Galaxy's cooler doesn't force air down a closed shroud and out the back of the card anyway, and neither do most of the other GeForce GTX 560s we've tested. Zotac seems to be the only company designing 560s that exhaust heated air out of your PC.

Let’s talk a little more about the card’s multi-display functionality. Galaxy taps the IDT VMM1403 multi-monitor controller to translate one dual-link DVI signal into three single-link DVI outputs. Unfortunately, bandwidth limitations prevent you from running the three screens attached to the IDT chip at 1080p/60. Instead, the card maxes out at 1920x1080 per screen and 50 Hz, yielding a single 5760x1080 surface.


You could encounter issues with screens that don't accept a 50 Hz refresh rate. In that case, you'd need to back down to 5040x1050 (using three 1680x1050 displays) to enable 60 Hz. We ran into this problem with the 285.62 driver from Nvidia's site; reverting to Galaxy's 285.54 driver fixed it.
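The bandwidth ceiling described above can be sanity-checked with rough arithmetic. This minimal sketch assumes the standard dual-link DVI limit of two 165 MHz TMDS links (330 Mpixel/s) and counts only visible pixels. Real display timings add blanking intervals on top, and the IDT chip's exact limits aren't given here, so a mode that passes this check is not guaranteed to work. The function names are hypothetical, chosen for illustration.

```python
# Assumption: dual-link DVI carries at most 2 x 165 MHz = 330 Mpixel/s.
DUAL_LINK_DVI_MAX_MHZ = 2 * 165.0


def active_pixel_rate_mhz(width: int, height: int, refresh_hz: float) -> float:
    """Visible pixels per second, in millions, ignoring blanking intervals."""
    return width * height * refresh_hz / 1e6


def fits_dual_link(width: int, height: int, refresh_hz: float,
                   limit_mhz: float = DUAL_LINK_DVI_MAX_MHZ) -> bool:
    """Necessary but not sufficient: real timings also need blanking bandwidth."""
    return active_pixel_rate_mhz(width, height, refresh_hz) <= limit_mhz


if __name__ == "__main__":
    modes = [
        (5760, 1080, 60),  # three 1920x1080 screens at 60 Hz
        (5760, 1080, 50),  # three 1920x1080 screens at 50 Hz
        (5040, 1050, 60),  # three 1680x1050 screens at 60 Hz
    ]
    for w, h, hz in modes:
        rate = active_pixel_rate_mhz(w, h, hz)
        verdict = "may fit within" if fits_dual_link(w, h, hz) else "exceeds"
        print(f"{w}x{h} @ {hz} Hz: {rate:.1f} Mpixel/s {verdict} "
              f"{DUAL_LINK_DVI_MAX_MHZ:.0f} Mpixel/s")
```

Even before blanking, 5760x1080 at 60 Hz needs about 373 Mpixel/s, which is beyond the dual-link ceiling, while the 50 Hz surface (about 311) and the 5040x1050 surface at 60 Hz (about 318) land under it.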

We need to reiterate, though: you're still limited to two independent display pipelines from Nvidia's GF114 graphics processor. IDT's ViewXpand technology simply allows you to turn one of them into a single larger surface. If you want three or more 2560x1600 displays (which require dual-link DVI) or aren't willing to compromise on refresh rate, you'd need two Nvidia cards in SLI or an AMD-based card with Eyefinity support instead.

Keep in mind that even though the card supports four monitors, the fourth cannot be made a part of the three-screen setup coming from IDT's chip.

Included with the card are two dual-Molex-to-six-pin power adapters, a mini-HDMI-to-HDMI adapter, a DVI-to-VGA adapter, a driver disc, a software disc, and instruction pamphlets.

As mentioned, this card is designed for three or four screens. However, Nvidia's driver isn't designed with that many displays in mind. As a result, Galaxy includes WinSplit Revolution, which lets you snap an application to a preset screen position using Ctrl+Alt+numeric keypad shortcuts. Alternatively, the company offers a download for Galaxy MDT EZY Display, a little app that lets you choose the display configuration you want and automatically maximizes windows within the display on which they appear. Both pieces of software do a good job of placing windows where you want them, but MDT EZY Display is simpler and more elegant.

Overclocking

Galaxy supports voltage manipulation in its Xtreme Tuner HD utility. Keep in mind that you have to use the version bundled with the card or release 3016 (Update: v3016 is now available for download from Galaxy's website). Although the software lets you specify core voltages as high as 1.3 V, it drops to 1.15 V when you try to apply the setting. That's not entirely bad news; we wouldn’t want to push voltages much higher than 1.15 V on air cooling anyway.

With a peak 1.15 V setting and the fan duty cycle dialed in to 100%, we managed to hit 1000 MHz core and 1250 MHz memory frequencies, roughly 20% and 25% above the card's 830 MHz and 1002 MHz shipping clocks. That's an impressive overclock given Galaxy's more moderate factory settings.


Don Woligroski was a senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


pensivevulcan: Kepler is around the corner, and so are lower-end AMD 7000-series parts. This was interesting, but wouldn't one want to wait, for a plethora of reasons?

payneg1, quoting the article: "The Galaxy model comes closest with its 830/1002 MHz clocks, but Zotac's AMP! edition goes all the way to 950/1100 MHz."

This doesn't match the chart above.

crisan_tiberiu: So, basically, if someone plays on a single monitor, there is no point going beyond a GTX 560 or a 6950 in today's games (it's like in the "best gaming CPU" chart: no point going beyond an i5 2500K for gaming).

giovanni86, quoting salad10203: "Are those temps for real? My 280 GTX has never idled under 40C."

You're kidding, right? My overclocked GTX 580 at 60% fan speed idles at 32C. Cards downclock themselves at idle, which lets them run cooler; even if a card were clocked higher, I don't think it would get hot unless it was being used.

justme1977, replying to crisan_tiberiu:

I have the feeling that even an i5 2500K @ 4 GHz bottlenecks a 7970 at 1080p in most newer games.

If the GPU market keeps going the way it is, it won't be long before even midrange cards are bottlenecked by the CPU at 1080p.


FunSurfer: I think there is an error in the Asus idle voltage: instead of "0.192 V Idle," it should be 0.912 V.