The GeForce GTX 770 Review: Calling In A Hit On Radeon HD 7970?

Wait, the new GeForce GTX 770 is powered by Nvidia's old GK104? That's right. And guess what? The card is faster, quieter, more feature-complete, and less expensive than the GeForce GTX 680 that came before it. Can it usurp the compelling Radeon HD 7970?

Gigabyte GTX 770 OC Windforce

We’re happy to see that Gigabyte’s GeForce GTX 770 OC Windforce (GV-N770OC-2GD) remains a dual-slot card, which qualifies it for SLI duty even in cramped quarters. The cooling fins are oriented vertically, though, so most of the warm exhaust air is expelled upward. The rest is blown onto the motherboard, where the card’s own fans draw it back in.

| Technical Specifications And Dimensions | |
|---|---|
| GPU Clock | 1,135 MHz |
| Boost Clock (according to BIOS) | 1,189 MHz |
| Actual Boost Clock Under Load | 1,254.4 MHz |
| Height | 125 mm / 4.92 inches |
| Length | 282 mm / 11.1 inches |
| Width (Cooler Side) | 36 mm / 1.42 inches (≤ dual slot) |
| Width (PCB Side) | 4 mm / 0.16 inches (no back plate, frame only) |
| Max. Weight | 982 g / 34.6 ounces |
| Fans | 3 x 75 mm / 2.95 inches (fan diameter) |

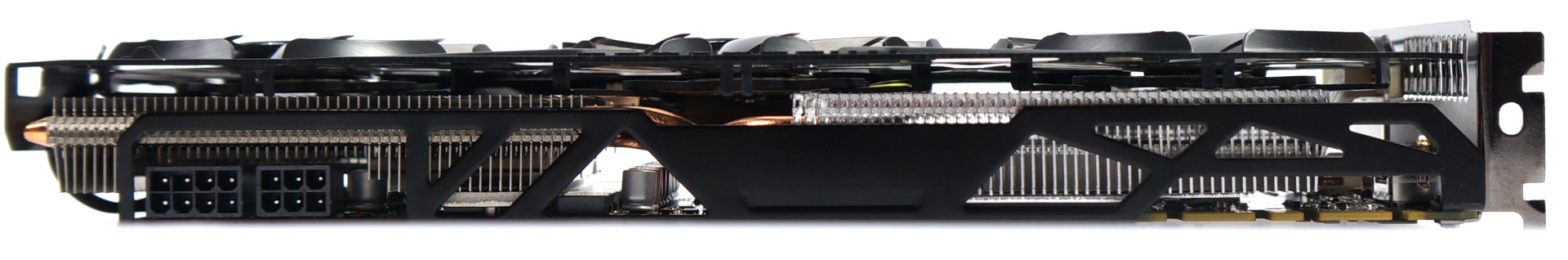

Let’s begin our tour of this card with its new Windforce cooler and familiar 75 mm fans. The cooler has been fundamentally reworked compared to its predecessor. For example, Gigabyte replaced the previous incarnation’s plastic shroud with a metal one, and the cooling fins are now spaced farther apart, distributing airflow more evenly while lowering operating noise.

The top of the card sports eight- and six-pin PCIe power connectors, as well as two SLI bridge connectors.

Heat is dissipated through a two-part cooler with the help of six heat pipes (2 x 8 mm and 4 x 6 mm) made of a new composite material. The RAM and VRM components receive their own heat sinks, which are also connected to the main cooler.

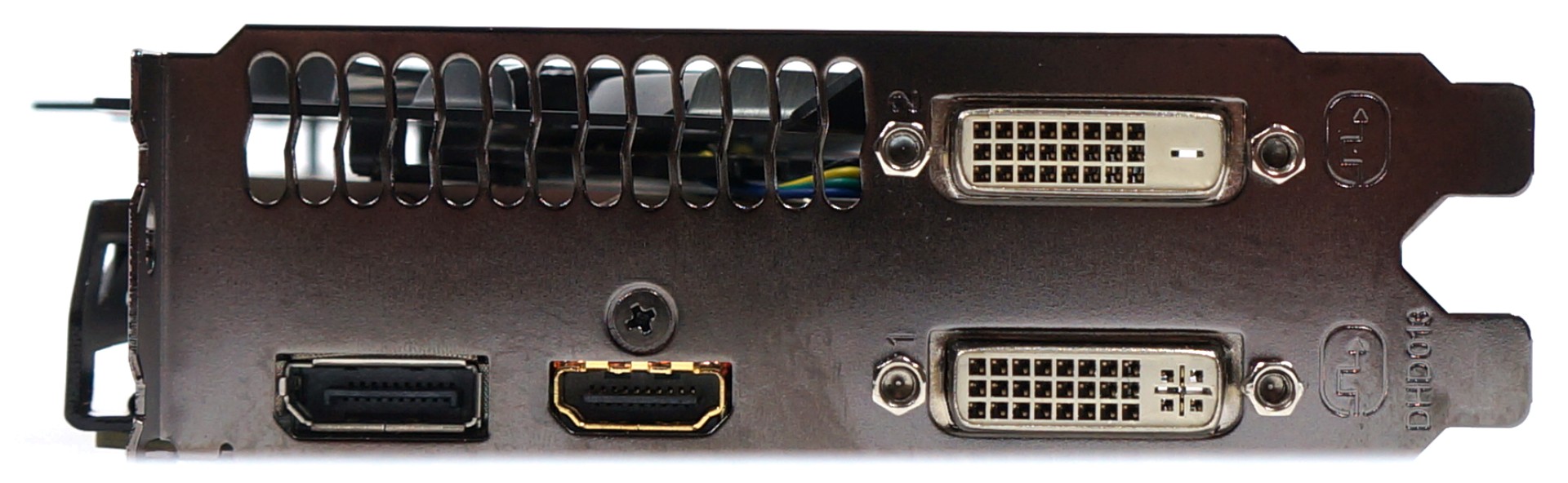

Connectivity options mirror those of the reference card, including two dual-link DVI connectors, HDMI, and a DisplayPort output.


EzioAs: Thanks for the article.

Kind of an expected performance increase. Seeing the overclocked GTX 680 review was conclusive enough, unless you never saw it. To be completely honest, I never expected this card to get the Smart Buy award, though.

Hey, how about another title for the review?

"GTX 680 Gets a New Cooler, BIOS Update and Price Drop!"

No? I'll think of a better one...

CarolKarine: The fact that every single site is comparing Nvidia's next-gen stuff with AMD's current-gen stuff kind of sickens me. Don't start throwing around "Nvidia's got this gen in the bag" until we see what AMD comes up with. They've had what, 1 1/2, 2 years? I'm hoping for GCN 2 and a die shrink on a new architecture.

Memnarchon, quoting EzioAs:

> Never expected this card to be getting the Smart Buy award though to be completely honest.

- Better power consumption than 7970GE.
- Less noise than 7970GE.
- Runs cooler than 7970GE.
- Same FPS as 7970GE.
- Costs $50 less.

Yeah, indeed, why would it get the Smart Buy award, I wonder...

GMPoisoN, quoting an earlier reader comment:

> When you factor in the 4 games that come with the HD 7970 GHz Edition, it is still cheaper than the GTX 770. I find it odd that Nvidia had over a year to come up with something to beat AMD in single-GPU performance at this price point but failed to deliver.
>
> Yes, there is a bit of power savings. Yes, multi-GPU performance is better. But that is nothing new. I also wouldn't expect future drivers to deliver much in the way of performance improvements, since this card is essentially a GTX 680 v2.
>
> Ultimately, I expected more from Nvidia. Yes, this is a polished card out of the gate. But I'm not sure the release of this card will affect AMD's bottom line as things currently stand, performance-wise.

Agreed. Sapphire 7970 GHz ftw <3

SiliconWars: None of Nvidia's partners are using the reference cooler, so this is just a scam to get better turbo clock speeds and good scores for quiet and cool operation. You've been had, Chris, and now you've spread Nvidia's lies to your readership.