Updated: Nvidia GeForce GTX 1070 8GB Pascal Review

Power Consumption Results

Stock Power Consumption

Power consumption at idle is less than 10W for the two Founders Edition boards. That's a respectable result, to be sure. The same can’t be said for MSI’s two Pascal-based models, though, which land at 15W and 16W. Those higher idle figures come down to Nvidia's restrictive rules on the number of GPU Boost steps. As a result, Nvidia's Founders Edition cards idle at 139 MHz, whereas MSI’s factory-overclocked models sit at 235 MHz.

As it stands, if manufacturers want a higher maximum GPU Boost frequency, they have to shift all of the other steps by one position to make space for it. This means that the lowest GPU Boost clock rate step gets eliminated from the bottom of the BIOS table. In other words, if you want an additional step at the top, you need to make room for it by getting rid of the very bottom one, which is why the idle clock rises.
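To make the trade-off concrete, here is a toy model of the boost table as a fixed-length list in which appending a new top step evicts the lowest one. It is a sketch under stated assumptions, not how any BIOS is actually organized: the table length and every clock value other than 139 MHz and 235 MHz (the idle clocks quoted above) are hypothetical, and it further assumes those two idle clocks are adjacent steps in the same table. The helper names are made up for illustration.

```python
from collections import deque

# Hypothetical table length; the size of a real GPU Boost BIOS table is not modeled.
TABLE_SIZE = 5

def make_table(steps_mhz):
    """Fixed-length boost table, lowest step first; extra entries fall off the bottom."""
    return deque(sorted(steps_mhz), maxlen=TABLE_SIZE)

def add_top_step(table, clock_mhz):
    """Return a copy of the table with a new, higher maximum step appended."""
    new_table = deque(table, maxlen=TABLE_SIZE)
    new_table.append(clock_mhz)  # with maxlen set, the leftmost (lowest) step is dropped
    return new_table

def idle_clock_mhz(table):
    return table[0]  # the card idles at the lowest remaining step

# 139 and 235 MHz come from the article; the upper steps are made up.
reference = make_table([139, 235, 1000, 1500, 1700])
factory_oc = add_top_step(reference, 1800)

print(idle_clock_mhz(reference), max(reference))    # 139 1700
print(idle_clock_mhz(factory_oc), max(factory_oc))  # 235 1800
```

A `deque` with `maxlen` is used because it expresses the "add one at the top, lose one at the bottom" rule directly.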

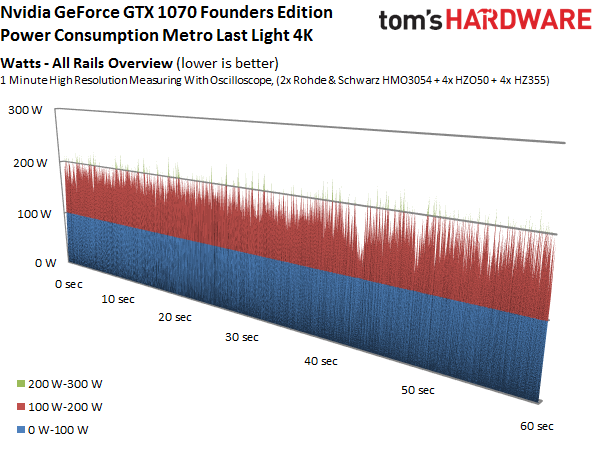

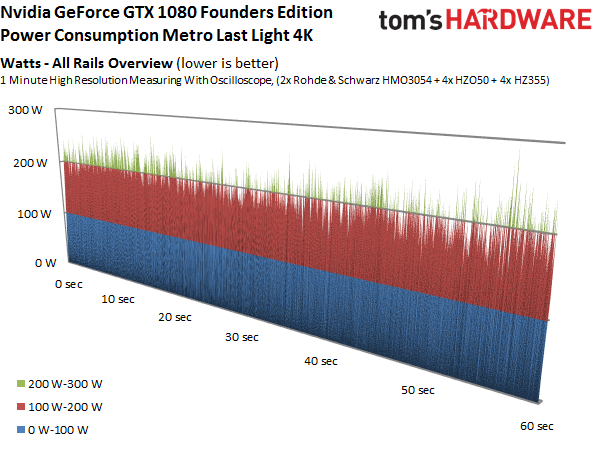

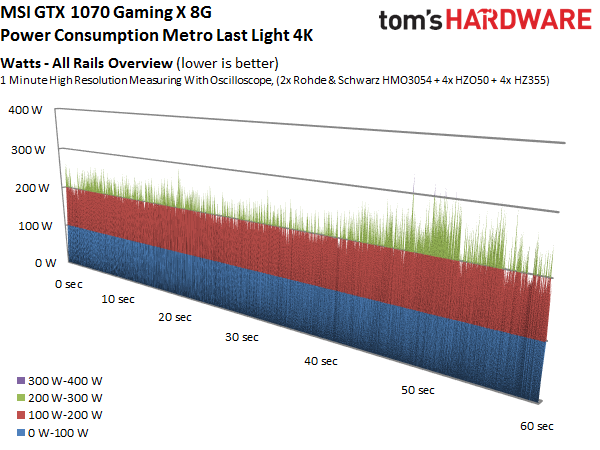

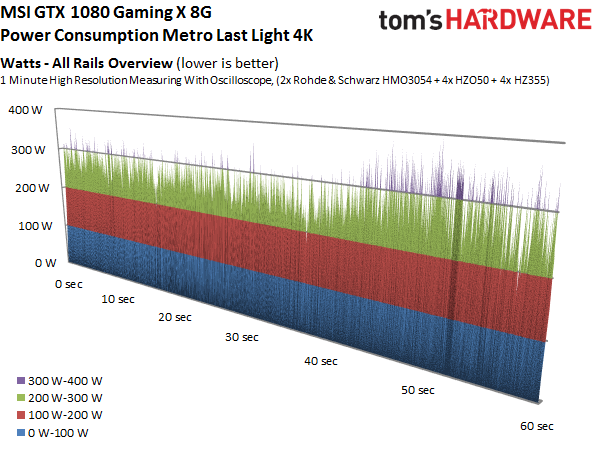

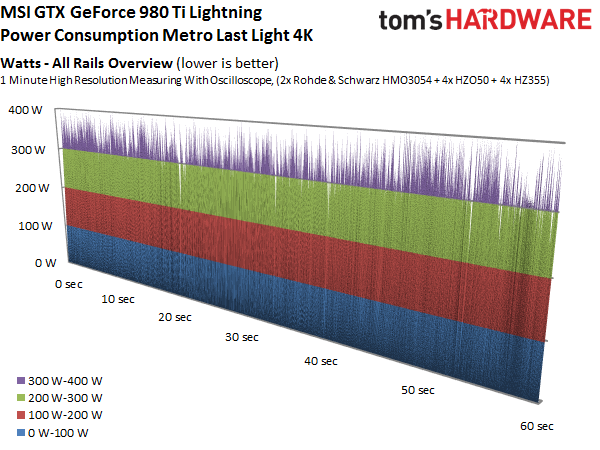

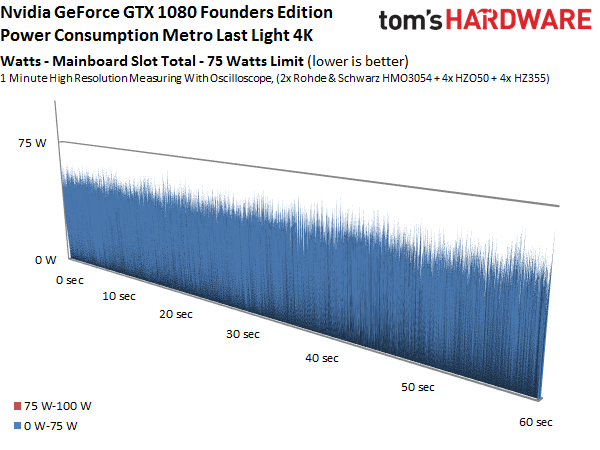

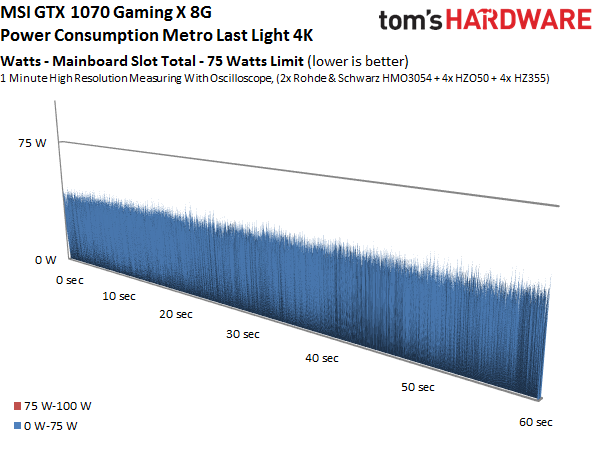

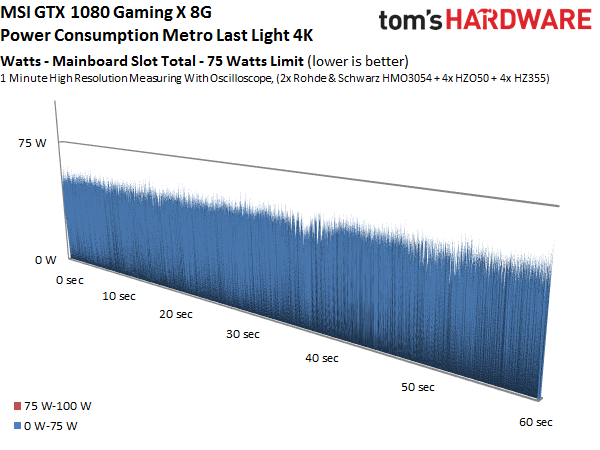

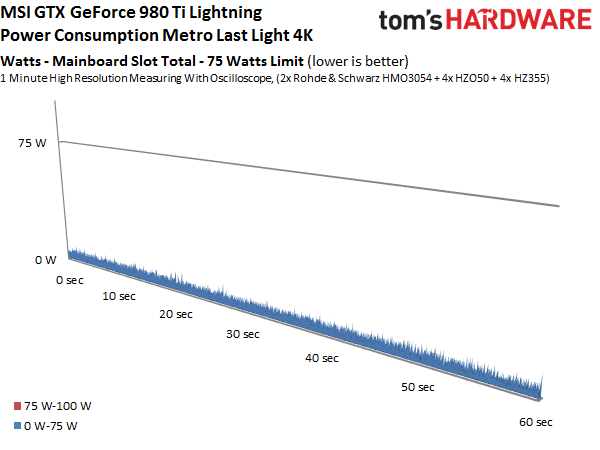

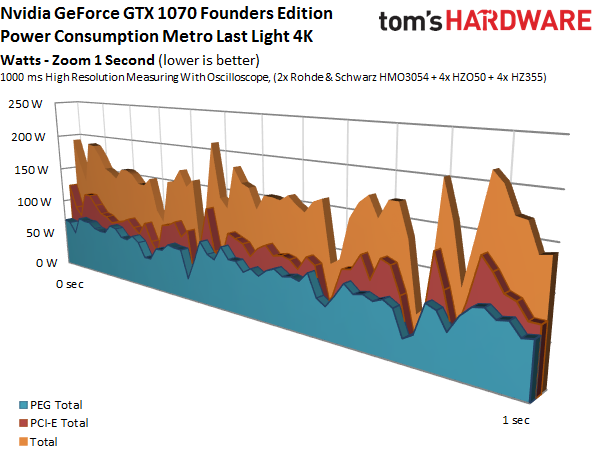

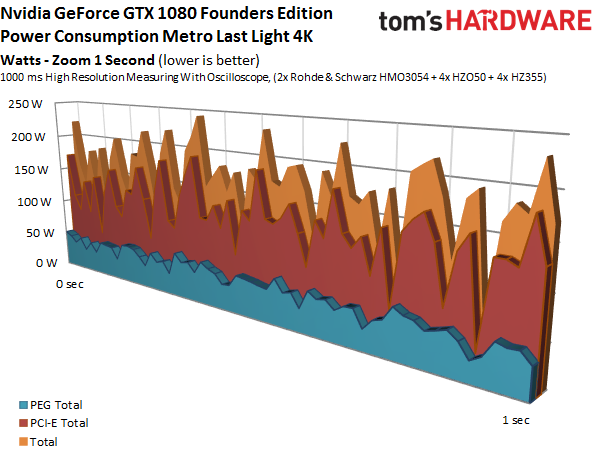

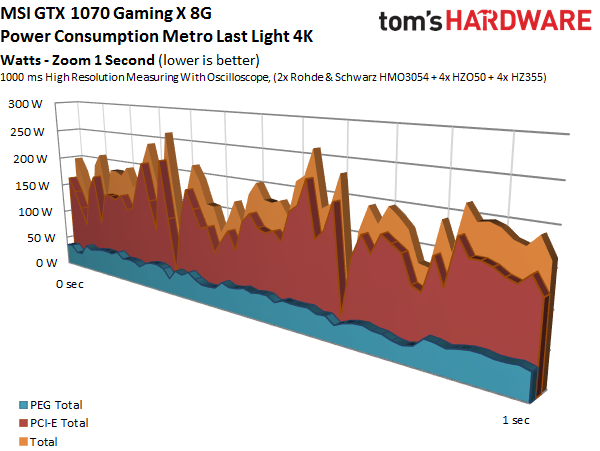

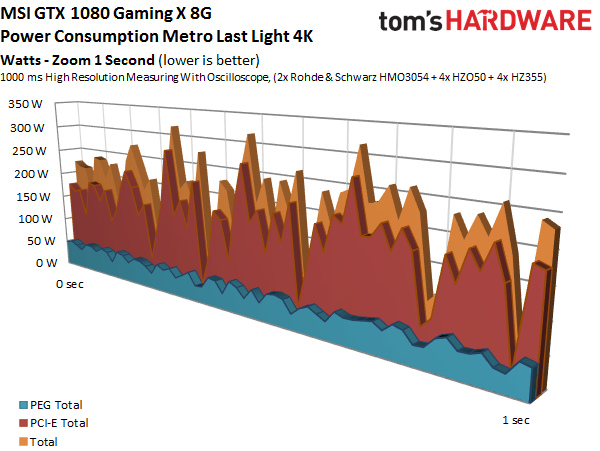

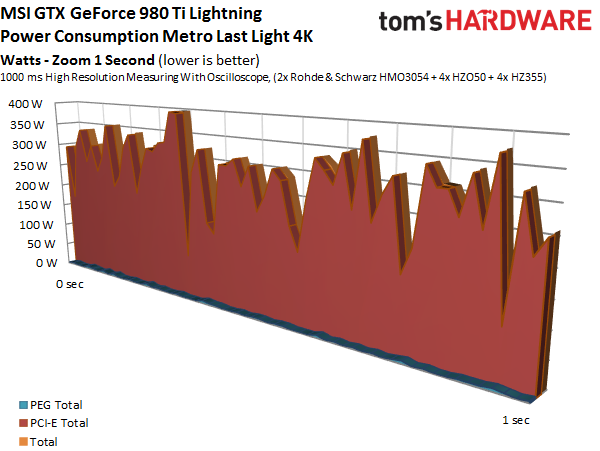

Next, let’s take a detailed look at the five graphics cards’ power consumption during gaming. Starting with the general overview, it’s plain to see that the GeForce GTX 1070 Founders Edition produces the lowest spikes, whereas MSI’s older Maxwell-based GeForce GTX 980 Ti Lightning knows no bounds.


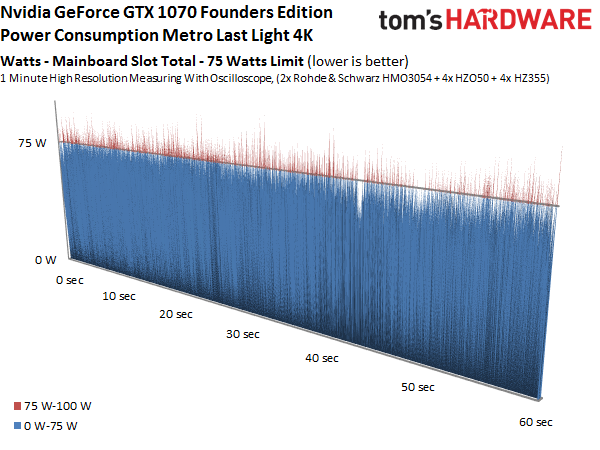

Taking a closer look at the motherboard slot yields a surprising finding: none of the cards in this round-up use the 3.3V rail at all. This means that the PCIe slot doesn’t really provide the 75W most enthusiasts assume it does, since the 12V rail only offers roughly 66W (5.5A at 12V) on its own. Nvidia’s GeForce GTX 1070 Founders Edition lands almost exactly at that limit, with spikes well in excess of 75W. They're not particularly dangerous, but they can cause audible artifacts if you're using on-board audio on a poorly designed motherboard.
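The slot-power argument is plain arithmetic (P = V × I). This sketch uses the per-rail limits commonly cited from the PCI Express CEM specification for an x16 graphics slot (+12V at 5.5A, +3.3V at 3.0A, 75W for the slot overall) to show how little headroom is left when only the 12V rail is used. The power trace is hypothetical, not data from this review, and the helper names are illustrative.

```python
# Per-rail limits (volts, amps) as commonly cited for a PCIe x16 graphics slot.
RAIL_LIMITS = {"12V": (12.0, 5.5), "3.3V": (3.3, 3.0)}
SLOT_RATING_W = 75.0  # overall rating for the slot

def rail_budget_w(rail):
    volts, amps = RAIL_LIMITS[rail]
    return volts * amps

def usable_slot_budget_w(rails_used):
    """Power available to a card that draws only from the listed rails."""
    return min(SLOT_RATING_W, sum(rail_budget_w(r) for r in rails_used))

def samples_over(samples_w, limit_w):
    """Return the samples that exceed the given limit."""
    return [p for p in samples_w if p > limit_w]

# Hypothetical 12V slot readings in watts (not measurements from this review).
trace = [58.0, 64.5, 71.2, 79.8, 66.1]

budget = usable_slot_budget_w(["12V"])
print(budget)                                  # 66.0
print(usable_slot_budget_w(["12V", "3.3V"]))   # 75.0
print(samples_over(trace, budget))             # [71.2, 79.8, 66.1]
print(samples_over(trace, SLOT_RATING_W))      # [79.8]
```

A card that ignores the 3.3V rail can exceed its real 12V budget while still staying under the headline 75W figure most of the time, which is why brief spikes matter here.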

The other graphics cards fare a lot better due to their additional power phases. MSI's GeForce GTX 980 Ti Lightning doesn’t use the motherboard’s PCIe slot at all. Instead, it has an additional six-pin power connector on top of the expected pair of eight-pin connectors.

A detailed 3D graph illustrates how power is distributed across each rail; the curve has been smoothed.

Overall, each card performs as expected. What happens if we play with their clock rates and power targets, though?


Power Consumption at Different Frequencies

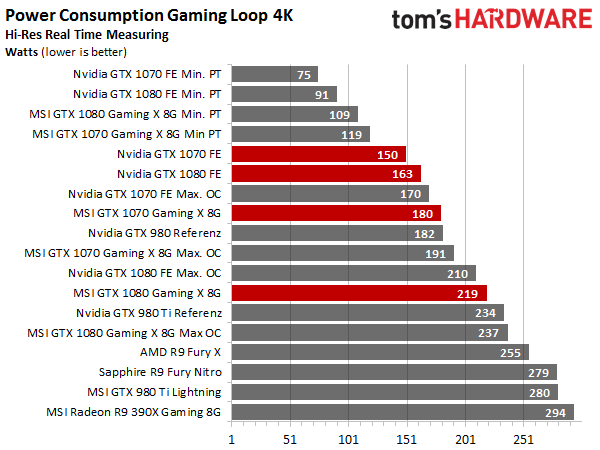

To find out, we performed 10 to 20 tests per board. Step by step, we overclocked each card and, in the other direction, lowered its power target to reach the lowest possible power consumption (and, with it, performance). The following bar graph shows the three main results: the lowest possible power consumption, stock settings, and the highest possible overclock.

But we didn't want to limit ourselves to three results. Instead, we set out to examine each graphics card’s efficiency and performance across its entire range. This required adjusting the settings to get closer and closer to each of the GPU Boost clock rates we wanted to test. For each one, we had to find the setting that allowed the card to run stably under full load in a closed PC case after warming up.
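The review doesn't say how the "closer and closer" search was carried out. One way to automate it, assuming the sustained clock rises monotonically with the power target, is a bisection over that setting. In this sketch `measured_clock_mhz` is a hypothetical stand-in for a warmed-up, closed-case measurement, its linear model is made up so the code runs, and the stability check described above is not modeled.

```python
# Hypothetical stand-in for a measurement: sustained GPU Boost clock (MHz) at a
# given power target (%). The linear model is invented purely for illustration.
def measured_clock_mhz(power_target_pct):
    return 1000.0 + 8.0 * power_target_pct

def find_power_target(target_mhz, lo=50.0, hi=120.0, tol_mhz=5.0, max_iter=30):
    """Bisect the power target until the sustained clock lands within tol_mhz.

    Assumes the clock rises monotonically with the power target.
    """
    for _ in range(max_iter):
        mid = (lo + hi) / 2
        clock = measured_clock_mhz(mid)
        if abs(clock - target_mhz) <= tol_mhz:
            return mid, clock
        if clock < target_mhz:
            lo = mid  # too slow: allow more power
        else:
            hi = mid  # too fast: allow less power
    raise ValueError(f"no power target in range reaches {target_mhz} MHz")

for target in (1500, 1600, 1800):
    setting, clock = find_power_target(target)
    print(f"{target} MHz -> power target {setting:.2f}% ({clock:.1f} MHz)")
```

Bisection needs only a handful of test runs per clock step, but it only works if each measurement is repeatable; a real sweep would also have to confirm stability at the chosen setting.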

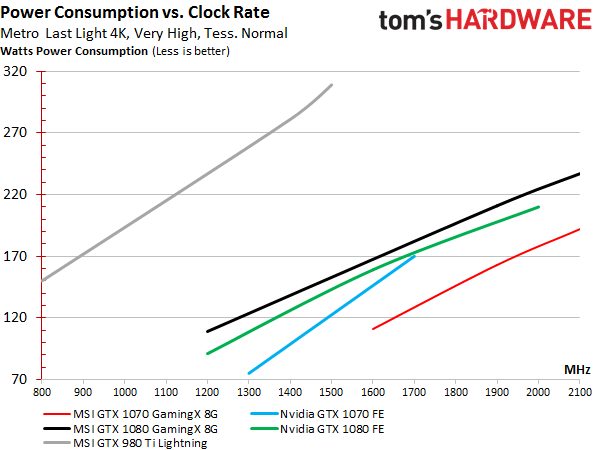

Our measurements clearly show that frequency and power consumption are almost perfectly linearly related. This is an unusual result compared to past experience: efficiency curves usually flatten out toward the top, which is to say that power consumption eventually starts to explode.
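One way to quantify "linear" is an ordinary least-squares fit plus the marginal watts per MHz between neighboring test points: a linear relationship gives an R² near 1 and constant marginals, while an "exploding" top end shows up as growing marginals. Both data sets in this sketch are hypothetical, not the review's measurements.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit of ys = slope * xs + intercept; also returns R^2."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, intercept, 1 - ss_res / ss_tot

def marginal_w_per_mhz(xs, ys):
    """Extra watts per extra MHz between neighboring test points."""
    return [(y1 - y0) / (x1 - x0) for x0, x1, y0, y1 in zip(xs, xs[1:], ys, ys[1:])]

# Hypothetical data (clock in MHz, board power in W), not the review's results.
clocks = [1400, 1500, 1600, 1700, 1800, 1900]
linear_power = [110, 120, 130, 140, 150, 160]  # the pattern the review reports
knee_power = [110, 120, 130, 145, 170, 210]    # the usual "exploding" top end

for label, power in (("linear", linear_power), ("knee", knee_power)):
    slope, intercept, r2 = fit_line(clocks, power)
    print(label, round(slope, 3), round(r2, 3), marginal_w_per_mhz(clocks, power))
```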

It turns out that the increase really is linear, so long as GPU Boost is left alone to do its thing and you don't intervene with (largely pointless) manual voltage increases. Unfortunately, this raises the question of what happens to performance. As clock frequency, power consumption, and performance all increase, at least one of the three must eventually run into a wall. Since the limiting factor is neither clock rate nor power consumption, our next order of business is exploring the third variable.
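If clock rate and power scale together linearly, efficiency depends on whether performance keeps pace. This sketch computes frames per second per watt across a sweep; the sweep points are hypothetical, chosen so that power rises linearly with clock while frame rate gains taper, which is one way such a "wall" could show up.

```python
# Hypothetical sweep points: (GPU Boost clock in MHz, board power in W, average FPS).
# Power rises linearly with clock; the made-up frame rates grow more slowly.
sweep = [
    (1500, 120.0, 60.0),
    (1700, 140.0, 66.5),
    (1900, 160.0, 70.0),
]

def fps_per_watt(points):
    """Return (clock, efficiency) pairs, where efficiency = FPS / watts."""
    return [(clock, fps / watts) for clock, watts, fps in points]

def scaling(points):
    """Relative gain in clock, power and FPS from the first to the last point."""
    (c0, w0, f0), (c1, w1, f1) = points[0], points[-1]
    return c1 / c0 - 1, w1 / w0 - 1, f1 / f0 - 1

for clock, eff in fps_per_watt(sweep):
    print(f"{clock} MHz: {eff:.4f} FPS/W")
# 1500 MHz: 0.5000 FPS/W
# 1700 MHz: 0.4750 FPS/W
# 1900 MHz: 0.4375 FPS/W
print([round(x, 3) for x in scaling(sweep)])
```

In this made-up case, power grows faster than clock rate and frame rate grows slower than both, so efficiency falls with every step up.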


adamovera: Archived comments are found here: http://www.tomshardware.com/forum/id-3073584/nvidia-geforce-gtx-1070-8gb-pascal-performance-review.html

nitrium: So given the simultaneously lower price and higher performance of the partner boards, only an actual idiot would buy the "Founder's Edition" GTX 1070?

George Phillips: I feel like I should regret buying the MSI 1070 FE. MSI's custom designs outperform the FE cards in every way. Very impressive. Asus' and Gigabyte's custom designs must also do better than the FE cards.

Krushe: Regarding the heat on the FE cards: I think the default fan speed is 45-50% at 83°C. Set it to 80% and the card never reaches 70°C, even with the boost clock up to 1900+ MHz. What speeds were the MSI fans running at during your temperature measurements?

DookieDraws: Edit: The article has been updated, so I deleted my original comment about the MSI GPU.

Tony Casagrande: "This means that the lowest possible GPU Boost clock rate step gets eliminated from the bottom of the BIOS’ table. So, if you want an additional space at the top, you need to make room for it by getting rid of the very bottom one."

If it were me, I would have removed a low-to-middle clock rate step instead of the very lowest one, to get both the low idle power consumption and the OC speed.

neblogai: Regarding the possible audible noise caused by power spikes on the PEG slot: it's not really about a cheap motherboard, but about using the motherboard's analog audio output rather than anything digital, right?

Also, about overclocking: I think reviews of all these new-generation Nvidia and AMD cards should include the average clock rate the cards ran at during all of the game benchmarks. Official boost clock numbers are a bit useless, because AMD cards run games below their boost clocks, while the average for Nvidia's GTX 1070 is above its boost clock. Having only the official boost clock numbers makes it difficult to evaluate overclocking potential and makes real gains look much bigger or smaller than expected.

Calculatron: Am I the only one who noticed that the Founder's Edition cards managed to pull over 75 watts from the motherboard PCIe slot, and that no one went bonkers over it?