Tom's Hardware Verdict

The RTX 4070 brings Ada Lovelace down to the mainstream with a $599 price tag. It's basically on par with the RTX 3080 in a more compact and efficient package, with DLSS 3 Frame Generation sweetening the pot.

Pros

- Efficient and fast graphics card
- Great features at a mostly reasonable price
- Excellent ray tracing and AI hardware

Cons

- Still more expensive than the last-gen 3070
- Unnecessary 16-pin power connector and adapter
- DLSS 3 isn't really a killer feature


Nvidia positions its new GeForce RTX 4070 as a great upgrade for GTX 1070 and RTX 2070 users, but that doesn't hide the fact that in many cases, it's effectively tied with the last generation's RTX 3080. The $599 MSRP means it's also replacing the RTX 3070 Ti, with 50% more VRAM and dramatically improved efficiency. Is the RTX 4070 one of the best graphics cards? It's certainly an easier recommendation than cards that cost $1,000 or more, but you'll inevitably trade performance for those saved pennies.

At its core, the RTX 4070 borrows heavily from the RTX 4070 Ti. Both use the AD104 GPU, and both feature a 192-bit memory interface with 12GB of 21Gbps GDDR6X VRAM. The main difference, other than the $200 price cut, is that the RTX 4070 has 5,888 CUDA cores compared to 7,680 on the 4070 Ti. Clock speeds are also theoretically a bit lower, though we'll get into that more in our testing. Ultimately, we're looking at a 25% price cut to go with the 23% reduction in processor cores.
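For anyone who wants to check the price and core-count comparison, here is a minimal sketch of the arithmetic in Python. The helper name `percent_reduction` is ours for illustration; the inputs are the launch prices and CUDA core counts quoted above.

```python
def percent_reduction(old: float, new: float) -> float:
    """Return how much smaller `new` is than `old`, as a percentage of `old`."""
    return (old - new) / old * 100.0


# RTX 4070 Ti -> RTX 4070, using the figures quoted in the text.
price_cut = percent_reduction(799, 599)    # launch prices in USD
core_cut = percent_reduction(7680, 5888)   # CUDA cores

print(f"Price cut:      {price_cut:.1f}%")
print(f"Core reduction: {core_cut:.1f}%")
```

Both values round to the 25% and 23% figures, so on paper the price falls slightly faster than the shader count.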

We've covered Nvidia's Ada Lovelace architecture already, so start there if you want to know more about what makes the RTX 40-series GPUs tick. The main question here is how the RTX 4070 stacks up against its costlier siblings, not to mention the previous generation RTX 30-series. Here are the official specifications for the reference card.

| Graphics Card | RTX 4070 | RTX 4080 | RTX 4070 Ti | RTX 3080 Ti | RTX 3080 | RTX 3070 Ti | RTX 3070 |
|---|---|---|---|---|---|---|---|
| GPU | AD104 | AD103 | AD104 | GA102 | GA102 | GA104 | GA104 |
| Process Technology | TSMC 4N | TSMC 4N | TSMC 4N | Samsung 8N | Samsung 8N | Samsung 8N | Samsung 8N |
| Transistors (Billion) | 35.8 | 45.9 | 35.8 | 28.3 | 28.3 | 17.4 | 17.4 |
| Die size (mm^2) | 294.5 | 378.6 | 294.5 | 628.4 | 628.4 | 392.5 | 392.5 |
| SMs | 46 | 76 | 60 | 80 | 68 | 48 | 46 |
| GPU Cores (Shaders) | 5888 | 9728 | 7680 | 10240 | 8704 | 6144 | 5888 |
| Tensor Cores | 184 | 304 | 240 | 320 | 272 | 192 | 184 |
| Ray Tracing "Cores" | 46 | 76 | 60 | 80 | 68 | 48 | 46 |
| Boost Clock (MHz) | 2475 | 2505 | 2610 | 1665 | 1710 | 1765 | 1725 |
| VRAM Speed (Gbps) | 21 | 22.4 | 21 | 19 | 19 | 19 | 14 |
| VRAM (GB) | 12 | 16 | 12 | 12 | 10 | 8 | 8 |
| VRAM Bus Width (bits) | 192 | 256 | 192 | 384 | 320 | 256 | 256 |
| L2 Cache (MiB) | 36 | 64 | 48 | 6 | 5 | 4 | 4 |
| ROPs | 64 | 112 | 80 | 112 | 96 | 96 | 96 |
| TMUs | 184 | 304 | 240 | 320 | 272 | 192 | 184 |
| TFLOPS FP32 (Boost) | 29.1 | 48.7 | 40.1 | 34.1 | 29.8 | 21.7 | 20.3 |
| TFLOPS FP16 (FP8) | 233 (466) | 390 (780) | 321 (641) | 136 (273) | 119 (238) | 87 (174) | 81 (163) |
| Bandwidth (GBps) | 504 | 717 | 504 | 912 | 760 | 608 | 448 |
| TGP (watts) | 200 | 320 | 285 | 350 | 320 | 290 | 220 |
| Launch Date | Apr 2023 | Nov 2022 | Jan 2023 | Jun 2021 | Sep 2020 | Jun 2021 | Oct 2020 |
| Launch Price | $599 | $1,199 | $799 | $1,199 | $699 | $599 | $499 |

There's a pretty steep slope going from the RTX 4080 to the 4070 Ti, and from there to the RTX 4070. We're now looking at the same number of GPU shaders — 5888 — as Nvidia used on the previous generation RTX 3070. Of course, there are plenty of other changes that have taken place.

Chief among those is the massive increase in GPU core clocks. 5888 shaders running at 2.5GHz will deliver a lot more performance than the same number of shaders clocked at 1.7GHz: roughly 45% more, by the math. Nvidia's rated boost clocks are also conservative, and real-world gaming clocks are closer to 2.7GHz... though the RTX 3070 also clocked closer to 1.9GHz in our testing.
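The clock comparison can be sketched in a few lines of Python. This assumes the usual theoretical-throughput formula, in which each shader performs one fused multiply-add (two floating-point operations) per clock; the function name `fp32_tflops` is ours. The shader counts and boost clocks come from the specifications table, and the 2.7GHz and 1.9GHz figures are the approximate real-world clocks mentioned above.

```python
def fp32_tflops(shaders: int, clock_mhz: float) -> float:
    """Theoretical FP32 throughput in TFLOPS.

    Assumes one fused multiply-add (2 FLOPs) per shader per clock.
    """
    return shaders * 2 * clock_mhz / 1_000_000


rtx_4070 = fp32_tflops(5888, 2475)  # official boost clock
rtx_3070 = fp32_tflops(5888, 1725)  # official boost clock

# Same shader count, so the uplift is just the clock ratio.
uplift_spec = (rtx_4070 / rtx_3070 - 1) * 100
uplift_observed = (2.7 / 1.9 - 1) * 100  # approximate real-world clocks

print(f"RTX 4070: {rtx_4070:.1f} TFLOPS")
print(f"RTX 3070: {rtx_3070:.1f} TFLOPS")
print(f"Uplift at rated boost clocks: {uplift_spec:.1f}%")
print(f"Uplift at observed clocks:    {uplift_observed:.1f}%")
```

The results match the 29.1 and 20.3 TFLOPS entries in the table. These are paper numbers only, and actual game performance also depends on memory, cache, and architectural differences.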

The memory bandwidth ends up being slightly higher than the 3070 as well, but the significantly larger L2 cache will inevitably mean it performs much better than the raw bandwidth might suggest. Moving to a 192-bit interface instead of the 256-bit interface on the GA104 does present some interesting compromises, but we're glad to at least have 12GB of VRAM this round — the 3060 Ti, 3070, and 3070 Ti with 8GB are all feeling a bit limited these days. But short of using memory chips in "clamshell" mode (two chips per channel, on both sides of the circuit board), 12GB represents the maximum for a 192-bit interface right now.
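As a rough illustration of the memory math, the sketch below computes raw bandwidth from bus width and per-pin speed, then estimates maximum capacity for a given bus width. Two details are our assumptions and are not stated in this review: each GDDR6/GDDR6X chip uses a 32-bit interface, and the largest chips currently available hold 2GB. The function and constant names are illustrative.

```python
CHIP_INTERFACE_BITS = 32  # assumption: one GDDR6/GDDR6X chip per 32-bit channel
CHIP_CAPACITY_GB = 2      # assumption: largest chip density currently available


def bandwidth_gbps(bus_width_bits: int, speed_gbps: float) -> float:
    """Raw memory bandwidth in GB/s: bus width in bytes times per-pin speed."""
    return bus_width_bits / 8 * speed_gbps


def max_vram_gb(bus_width_bits: int, clamshell: bool = False) -> int:
    """Maximum VRAM for a bus width; clamshell mode doubles the chips per channel."""
    chips = bus_width_bits // CHIP_INTERFACE_BITS
    if clamshell:
        chips *= 2
    return chips * CHIP_CAPACITY_GB


bw_4070 = bandwidth_gbps(192, 21)  # RTX 4070: 192-bit, 21Gbps
bw_3070 = bandwidth_gbps(256, 14)  # RTX 3070: 256-bit, 14Gbps

print(f"RTX 4070 bandwidth: {bw_4070:.0f} GB/s")
print(f"RTX 3070 bandwidth: {bw_3070:.0f} GB/s")
print(f"192-bit max VRAM: {max_vram_gb(192)} GB")
print(f"192-bit clamshell: {max_vram_gb(192, clamshell=True)} GB")
print(f"256-bit max VRAM: {max_vram_gb(256)} GB")
```

Under those assumptions, a 192-bit bus tops out at six chips and 12GB, and the faster memory gives the RTX 4070 about 12.5% more raw bandwidth than the RTX 3070 despite the narrower bus.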

While AMD was throwing shade yesterday about the lack of VRAM on the RTX 4070, it's important to note that AMD has yet to reveal its own "mainstream" 7000-series parts, and it will face similar potential compromises. A 256-bit interface allows for 16GB of VRAM, but it also increases board and component costs. Perhaps we'll get a 16GB RX 7800 XT, but the RX 7700 XT will likely end up at 12GB VRAM as well. As for the previous-gen AMD GPUs having more VRAM, that's certainly true, but capacity is only part of the equation, so we need to see how the RTX 4070 stacks up before declaring a victor.

Another noteworthy item is the 200W TGP (Total Graphics Power), and Nvidia was keen to emphasize that in many cases, the RTX 4070 will use less power than TGP, while competing cards (and previous generation offerings) usually hit or exceeded TGP. We can confirm that's true here, and we'll dig into the particulars more later on.

The good news is that we finally have a latest-gen graphics card starting at $599. There will naturally be third-party overclocked cards that jack up the price, with extras like RGB lighting and beefier cooling, but Nvidia has restricted this pre-launch review to cards that sell at MSRP. We've got a PNY model as well that we'll look at in more detail in a separate review, though we'll include the performance results in our charts. (Spoiler: It's just as fast as the Founders Edition.)

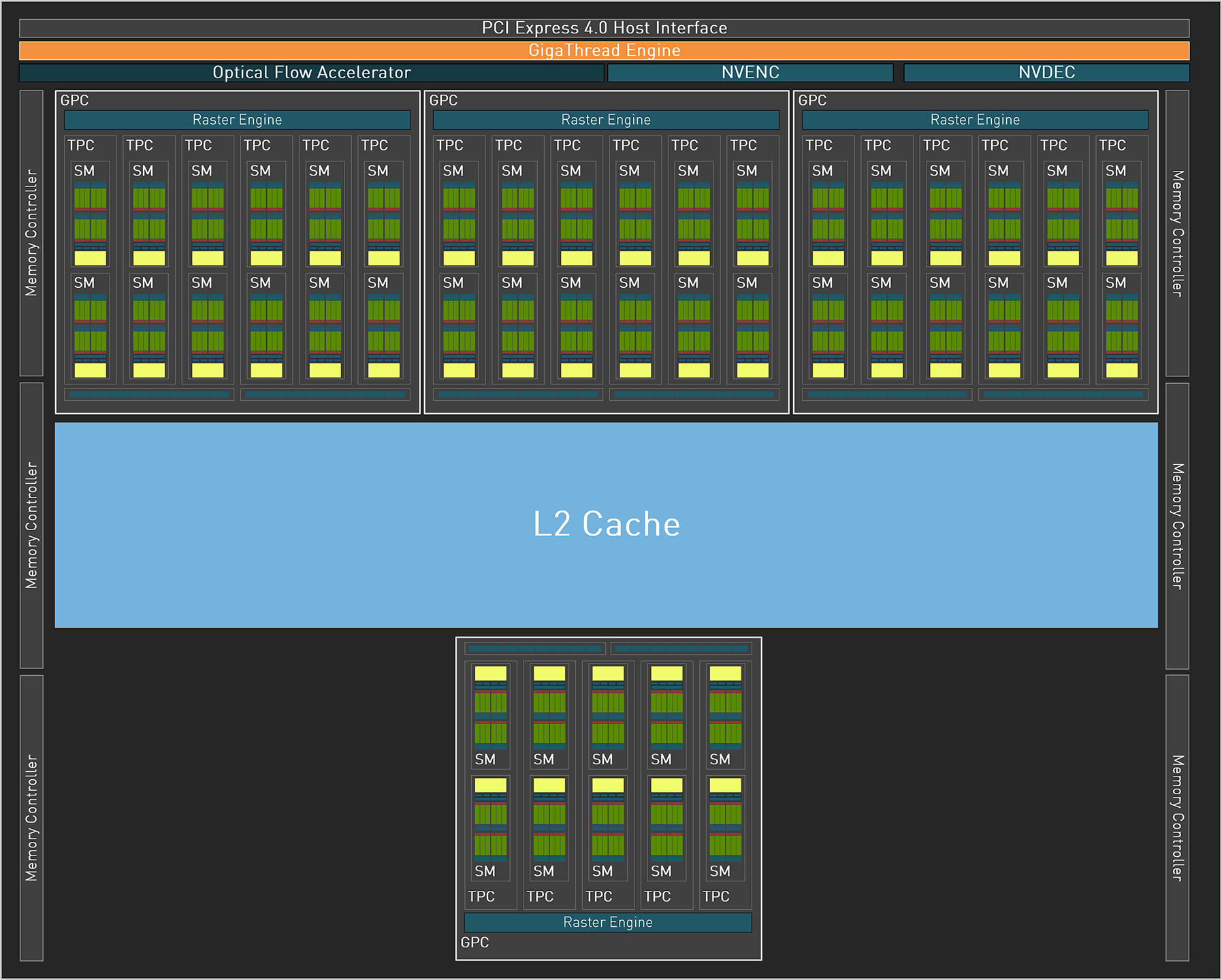

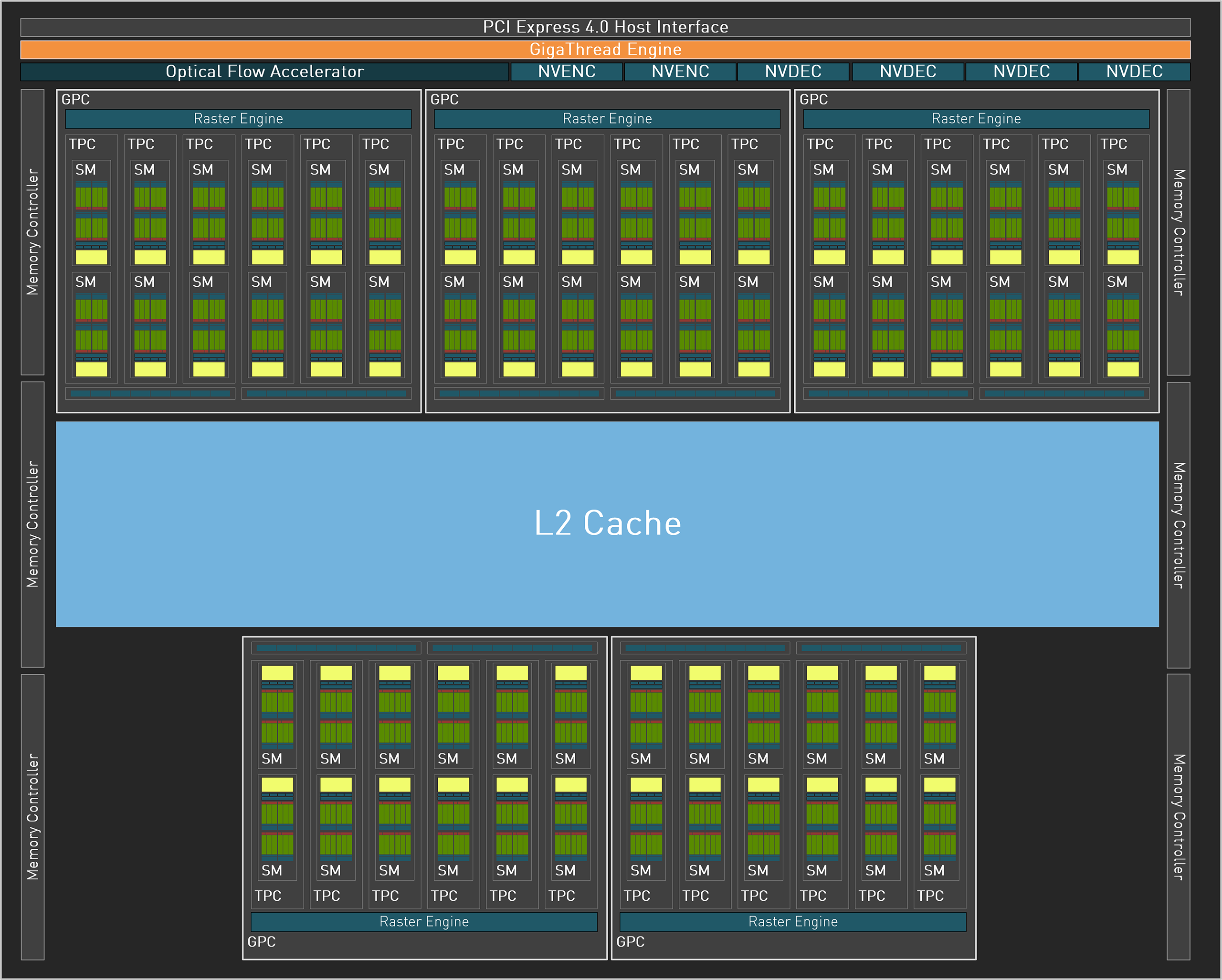

Above are the block diagrams for the RTX 4070 and for the full AD104, and you can see all the extra stuff that's included but turned off on this lower-tier AD104 implementation. None of the blocks in that image are "to scale," and Nvidia didn't provide a die shot of AD104, so we can't determine just how much space is dedicated to the various bits and pieces — not until someone else does the dirty work, anyway (looking at you, Fritzchens Fritz).

As discussed previously, AD104 includes Nvidia's 4th-gen Tensor cores, 3rd-gen RT cores, new and improved NVENC/NVDEC units for video encoding and decoding (now with AV1 support), and a significantly more powerful Optical Flow Accelerator (OFA). The latter is used for DLSS 3, and while it's "theoretically" possible to do Frame Generation with the Ampere OFA (or using some other alternative), so far only RTX 40-series cards can provide that feature.

The Tensor cores meanwhile now support FP8 with sparsity. It's not clear how useful that is in all workloads, but AI and deep learning have certainly leveraged lower precision number formats to boost performance without significantly altering the quality of the results — at least in some workloads. It will ultimately depend on the work being done, and figuring out just what uses FP8 versus FP16, plus sparsity, can be tricky. Basically, it's a problem for software developers, but we'll probably see additional tools that end up leveraging such features (like Stable Diffusion or GPT Text Generation).

Those interested in AI research may find other reasons to pick an RTX 4070 over its competition, and we'll look at performance in some of those tasks as well as gaming and professional workloads. But before the benchmarks, let's take a closer look at the RTX 4070 Founders Edition.


Current page: Nvidia GeForce RTX 4070 Founders Edition Review


Jarred Walton is a senior editor at Tom's Hardware focusing on everything GPU. He has been working as a tech journalist since 2004, writing for AnandTech, Maximum PC, and PC Gamer. From the first S3 Virge '3D decelerators' to today's GPUs, Jarred keeps up with all the latest graphics trends and is the one to ask about game performance.

Elusive Ruse: If there were no previous gen high-end GPUs available this would be a good buy but at this price you can just buy a new AIB 6950XT.

PlaneInTheSky: So it offers similar performance to the 3080 and launches at an only slightly lower MSRP.

Going by price leaks of some AIB, we will be lucky if we're not going backwards in price / performance.

In 2 years time since the 3080, performance per $ has barely increased.

The 12GB VRAM is depressing too, considering a RX 6950XT offers 16GB for the same price.

DLSS 3.0 doesn't interest me in the slightest since it's just glorified frame interpolation that does nothing to help responsiveness, it actually adds lag.

It's hard to get excited about PC Gaming when it is in such a terrible state.

PlaneInTheSky: In Europe, price/performance of Nvidia GPU generation-generation has basically flatlined now.

3080 MSRP: €699

4070 MSRP: €669

That's the state PC gaming is in now. In 2 years time, no improvement in price/performance.

It is a good example of what happens in a duopoly market without competition.

peachpuff:

> PlaneInTheSky said: In 2 years time since the 3080, performance per $ has barely increased.

That's really sad, how many gold plated leather jackets does jensen really need?

DSzymborski: The 4070 is releasing at a significantly lower price than the 3080. $699 in September 2020 is $810 in March 2023 dollars. $200 over the 4070 MSRP of $599 is a significant amount. Now, few 4070s will be available at $599, but then few 3080s were actually available at $699 (which, again, is $810 in current dollars).

The 3070 was released at an MSRP of $499, which is $578 in March 2023 dollars.

Exphius_Mortum: Title of the article needs a little adjustment, it should read as follows:

Nvidia GeForce RTX 4070 Review: Mainstream Ada Arrives at Enthusiast Pricing

Elusive Ruse: @JarredWaltonGPU thanks for the review, I saw that you retested all the GPUs with recent drivers which must have taken a huge effort (y)

PS: Are you planning to add or replace some older titles with new games for future benchmarks?

btmedic04: Hey look, a 1.65% performance improvement per 1% more money card when compared to the 3070. It's disappointing that's where we are at with gpus these days

healthy Pro-teen:

> PlaneInTheSky said: In Europe, price/performance of Nvidia GPU generation-generation has basically flatlined now.
>
> 3080 MSRP: €699
>
> 4070 MSRP: €669
>
> That's the state PC gaming is in now. In 2 years time, no improvement in price/performance.
>
> It is a good example of what happens in a duopoly market without competition.

A duopoly at best, it's almost a monopoly. AMD will probably undercut it by a small amount, get middling reviews and then heavily discount the GPU only when most people have already decided on the 4070. 6950XT is discounted right now and offers better value and the average gamer doesn't know it, so 4070 will sell regardless.