Update: Nvidia Titan X Pascal 12GB Review

GTA V, Hitman And Project CARS

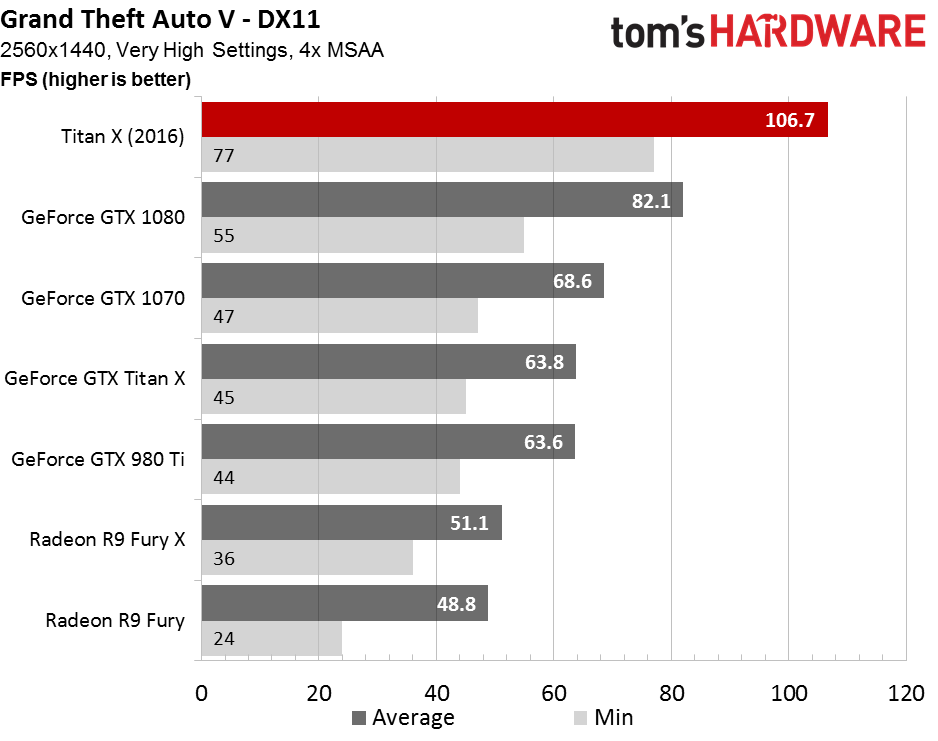

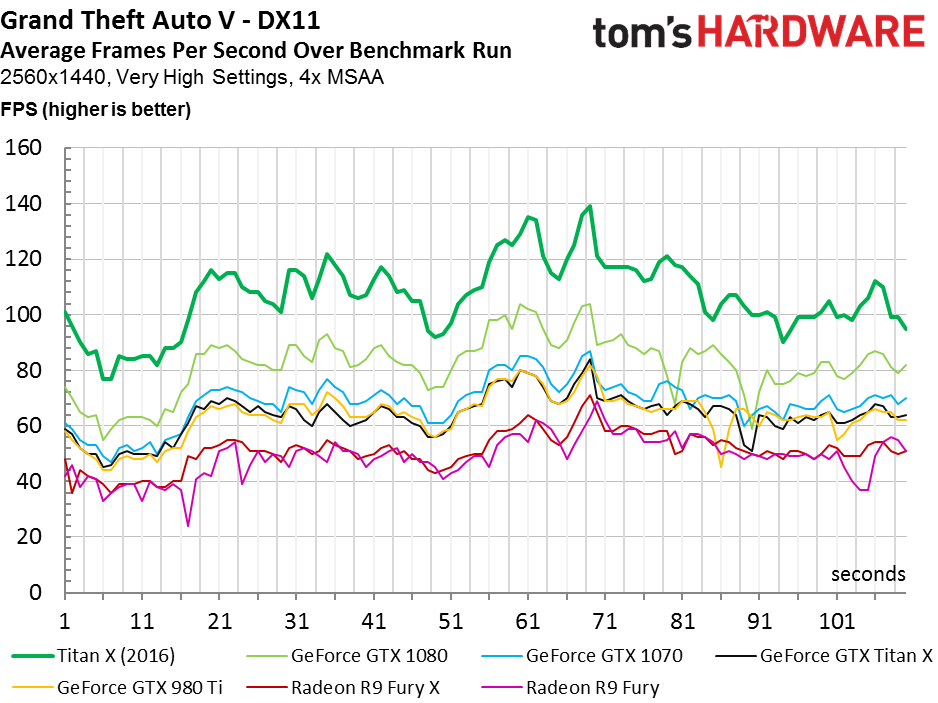

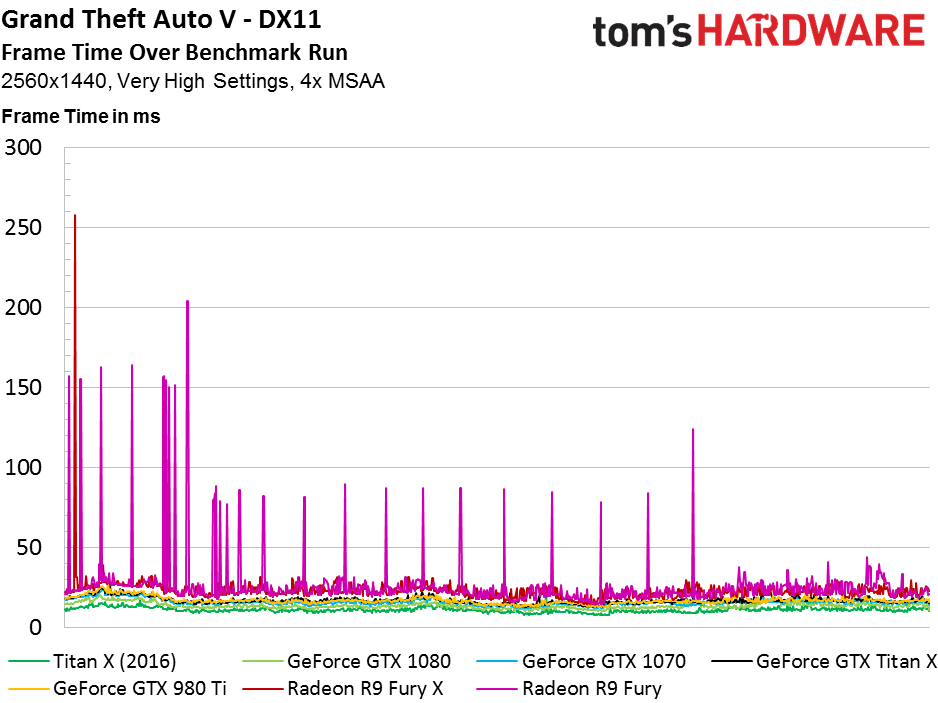

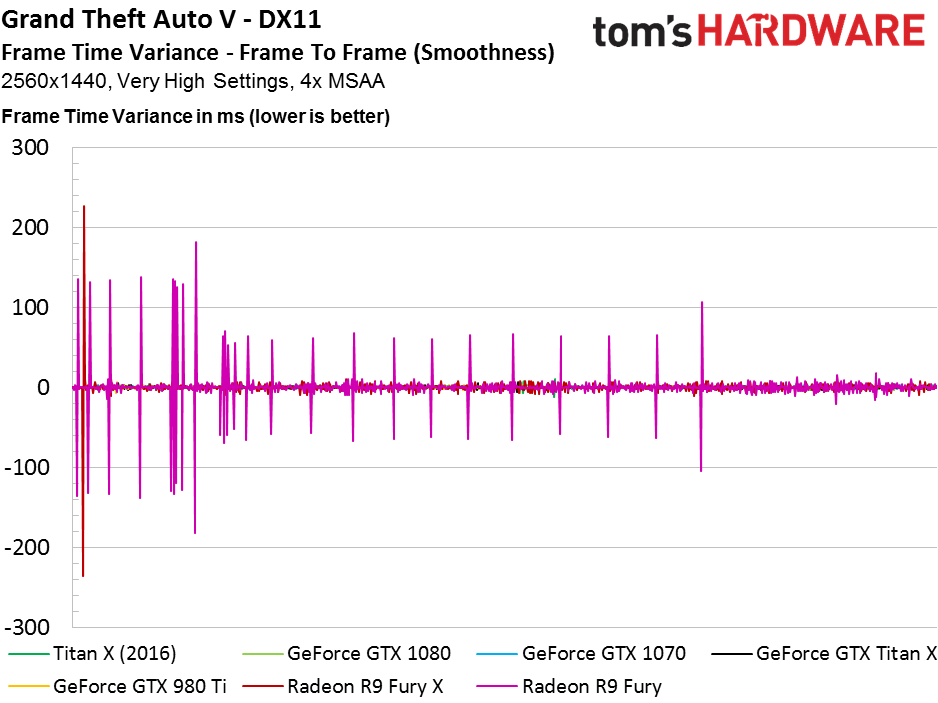

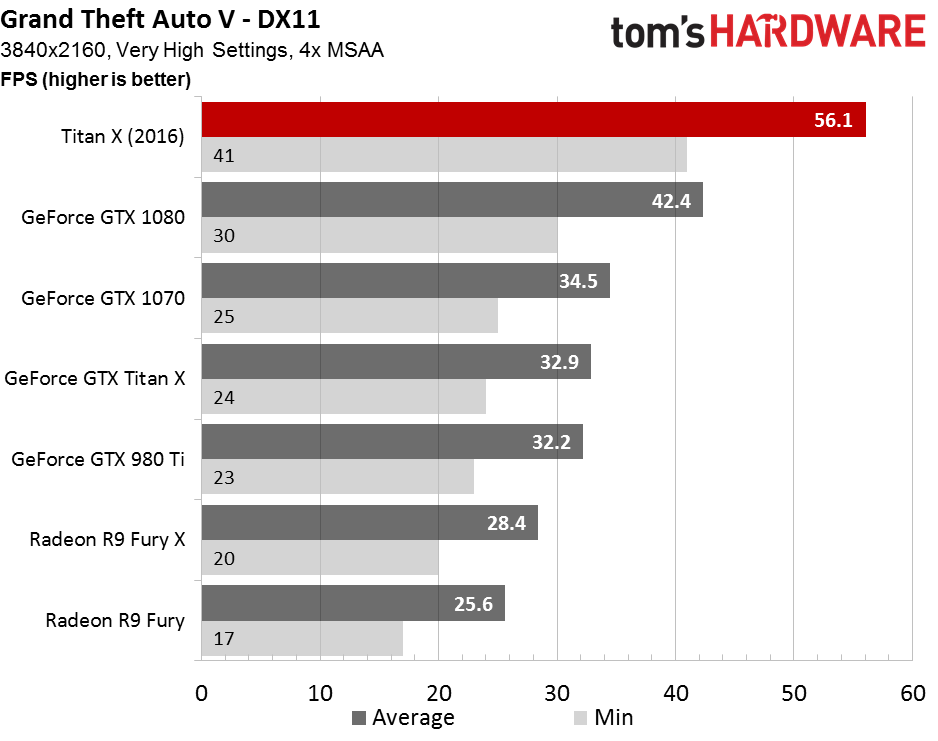

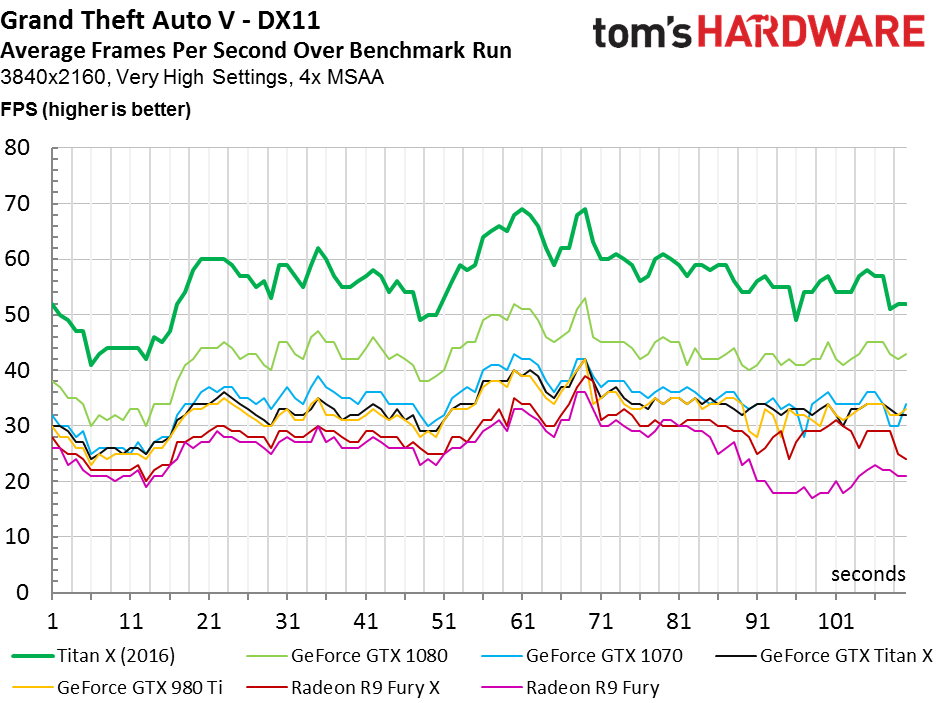

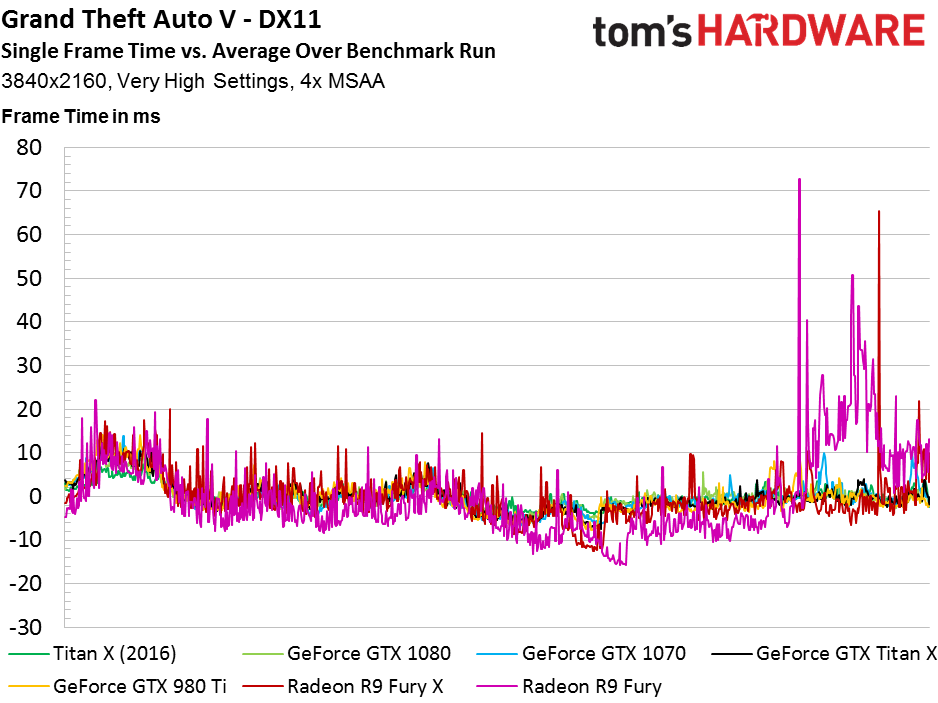

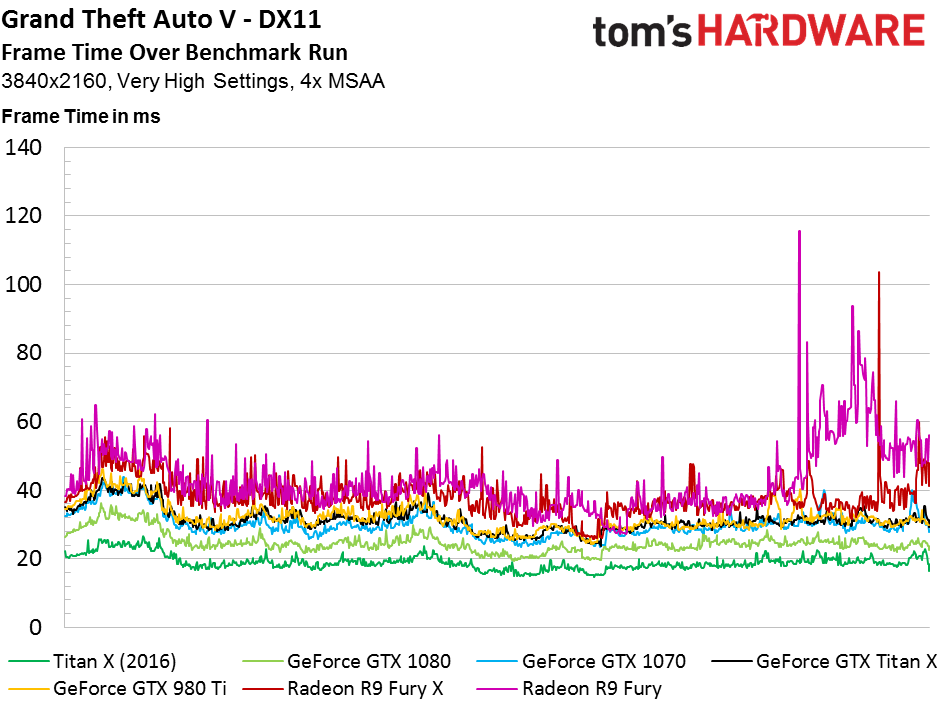

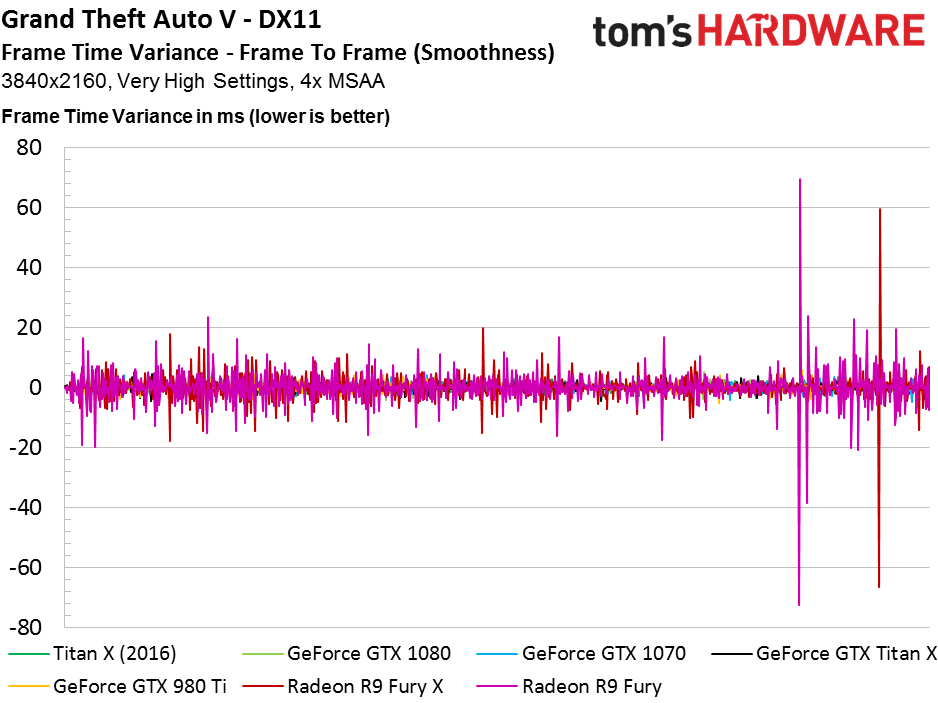

GTA V

Although Rockstar’s Advanced Game Engine uses the open source Bullet physics engine rather than PhysX, AMD’s cards trail well behind their most relevant competition. But the real reason we’re here is at the other end of the chart: Titan X turns in an average frame rate 30% higher than the GeForce GTX 1080.

The lead extends to 32% at 3840x2160, and performance remains playable at GTA V’s most taxing settings with 4x MSAA enabled.

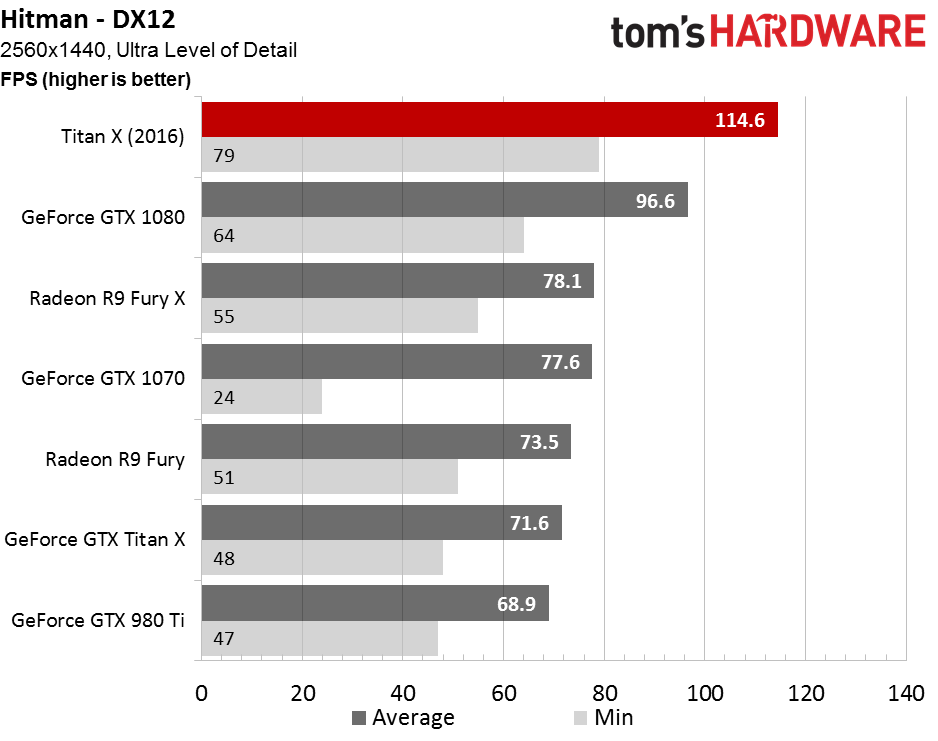

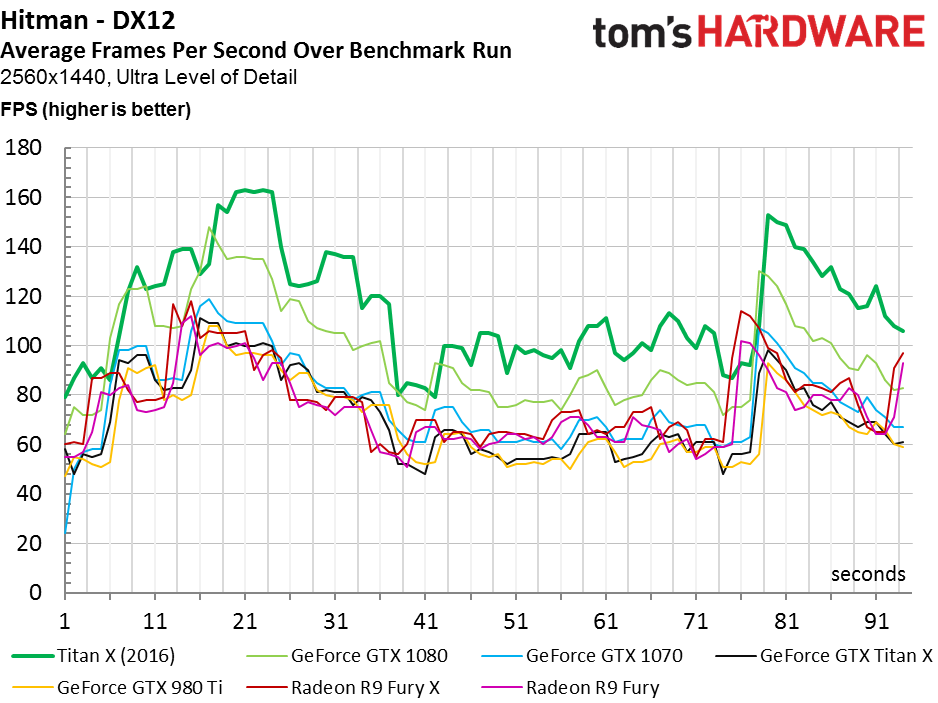

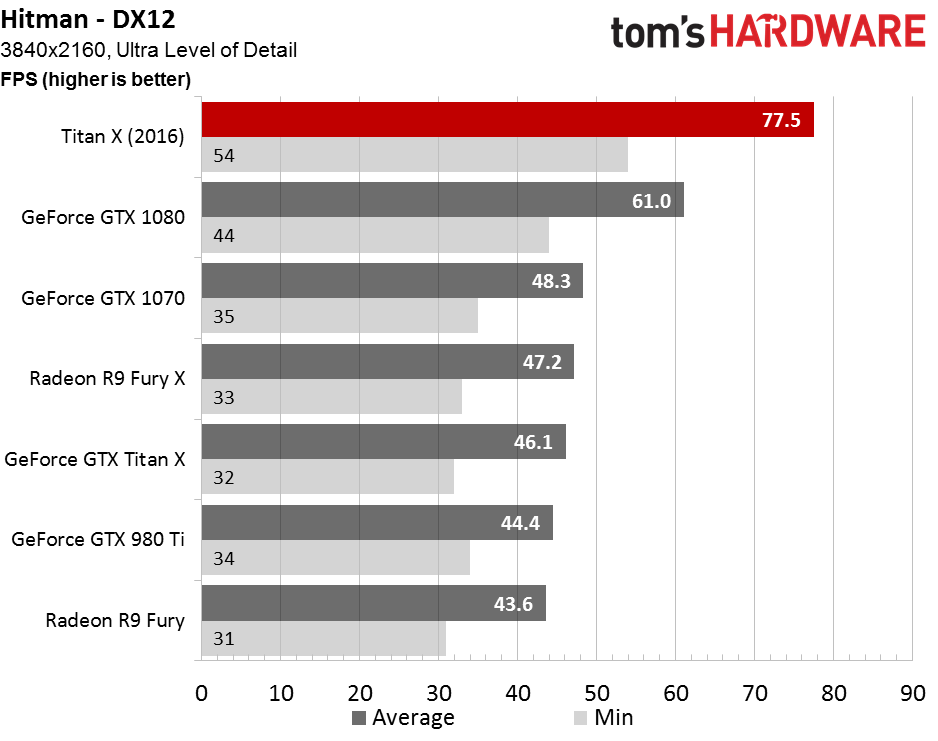

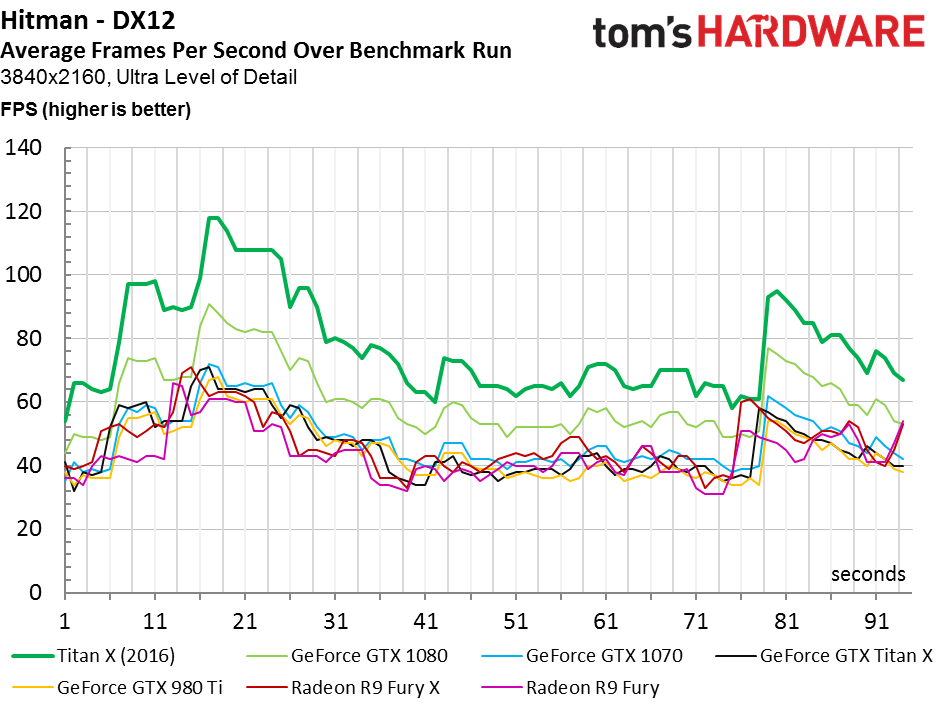

Hitman

Hitman was already a bastion for AMD, even under DirectX 11. Using DirectX 12, minimum frame rates are generally higher than what we saw in our GeForce GTX 1070 coverage. The Radeon R9 Fury X can’t catch GeForce GTX 1080, but it does eclipse the 1070. Meanwhile, Titan X is 19% faster than the 1080.


The Titan X’s advantage over GeForce GTX 1080 grows to 27% at 3840x2160, where it maintains an average of 77+ FPS.
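To make the percentages concrete, here is a minimal sketch of the arithmetic behind a relative lead. It takes the 77 FPS figure as a lower bound (the text says "77+") and the 27% lead from the paragraph above; the function names are hypothetical and only illustrate the calculation.

```python
def lead_percent(faster_fps: float, slower_fps: float) -> float:
    """Relative advantage of the faster card, in percent."""
    return (faster_fps / slower_fps - 1.0) * 100.0


def implied_baseline_fps(faster_fps: float, lead_pct: float) -> float:
    """Average FPS of the slower card implied by a stated lead."""
    return faster_fps / (1.0 + lead_pct / 100.0)


# "77+ FPS" is treated as exactly 77 here, so the result is a rough floor.
titan_x_fps = 77.0
gtx_1080_fps = implied_baseline_fps(titan_x_fps, 27.0)
print(f"Implied GTX 1080 average: about {gtx_1080_fps:.1f} FPS")
```

In other words, a 27% lead at 77 FPS implies the GTX 1080 averages a little over 60 FPS in the same run.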

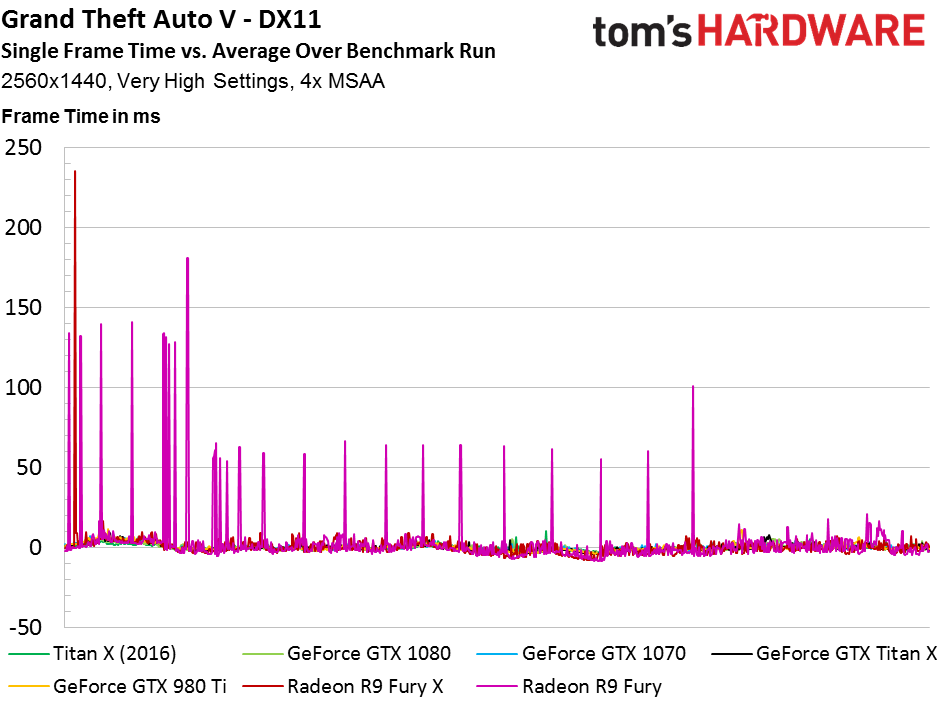

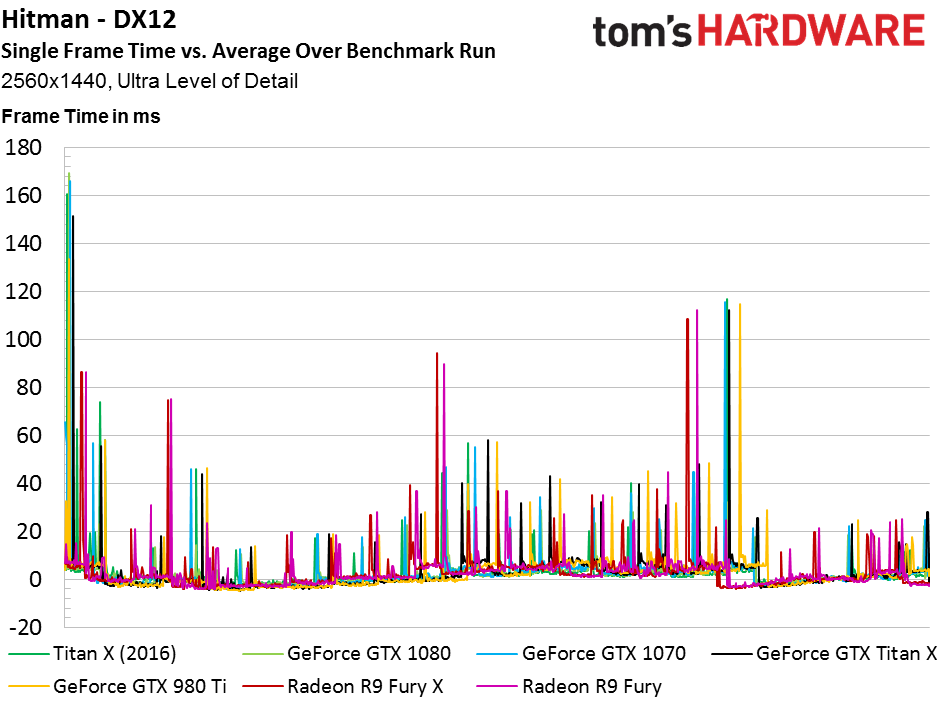

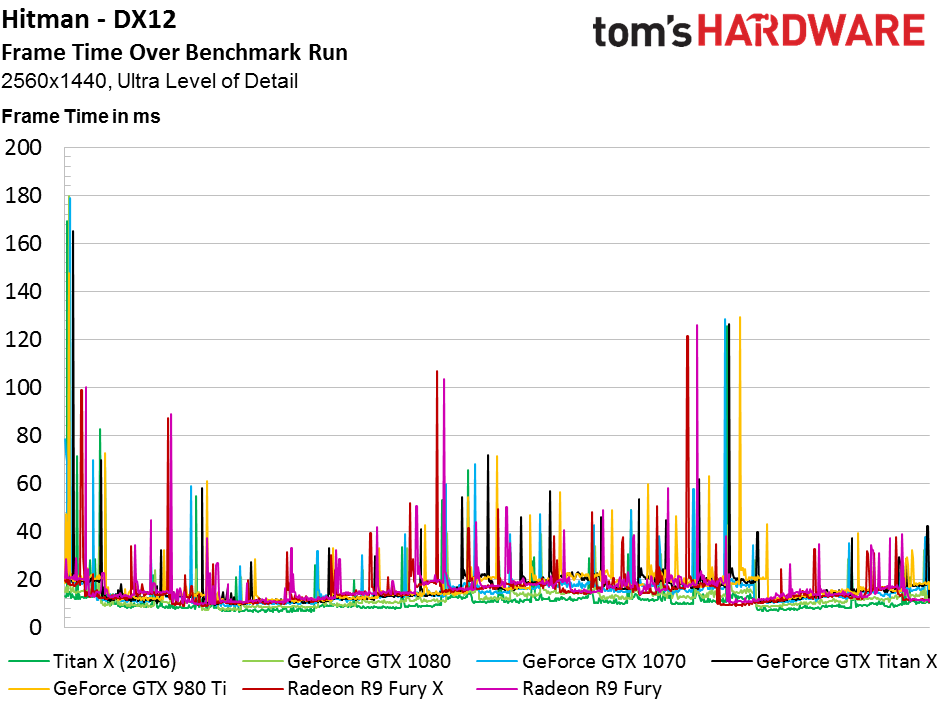

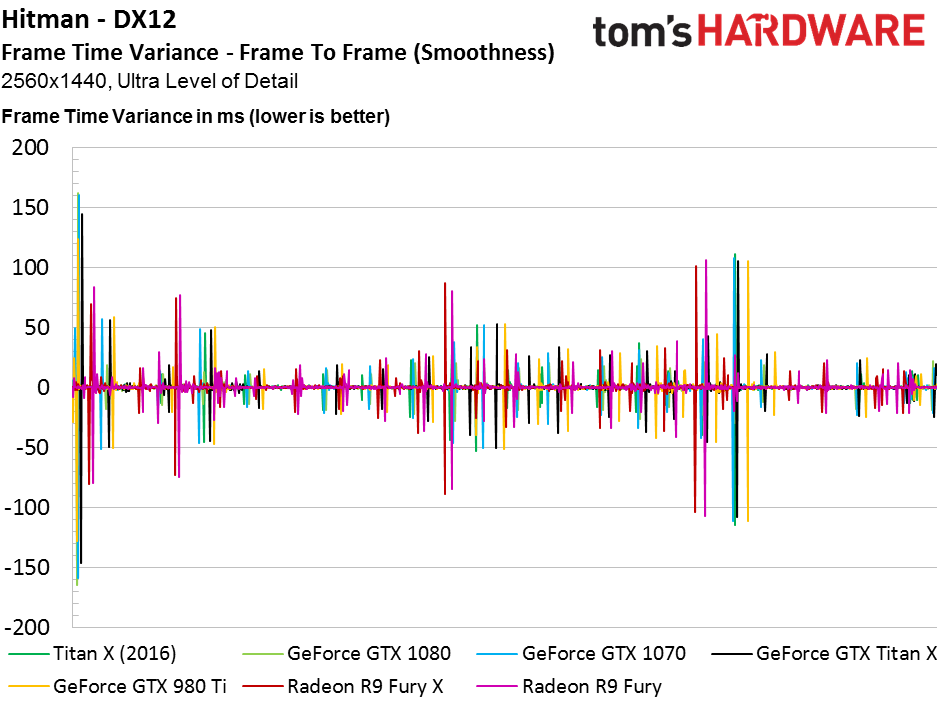

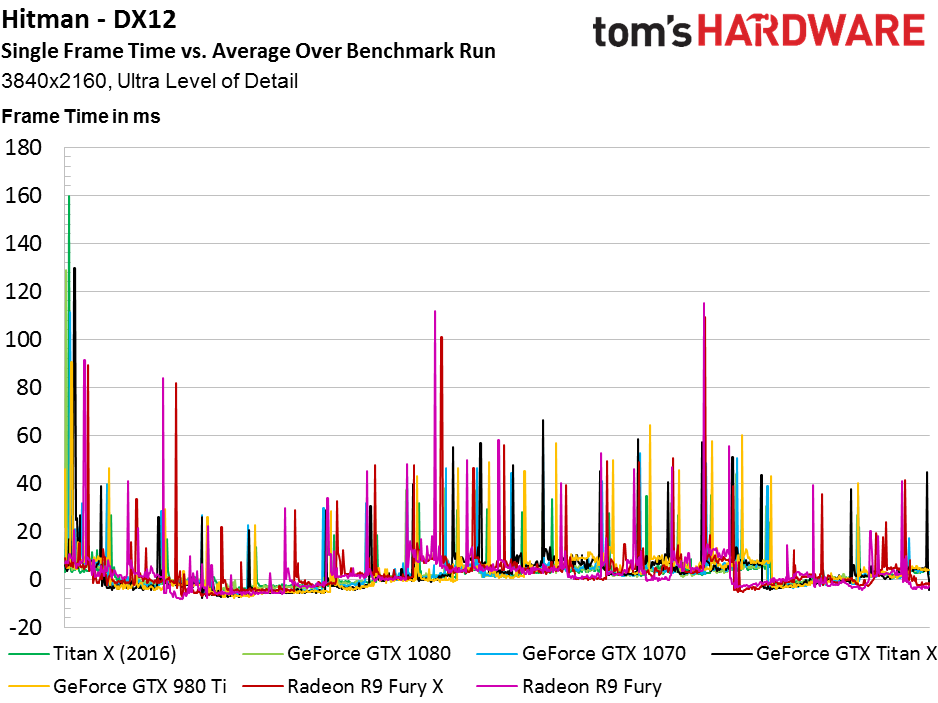

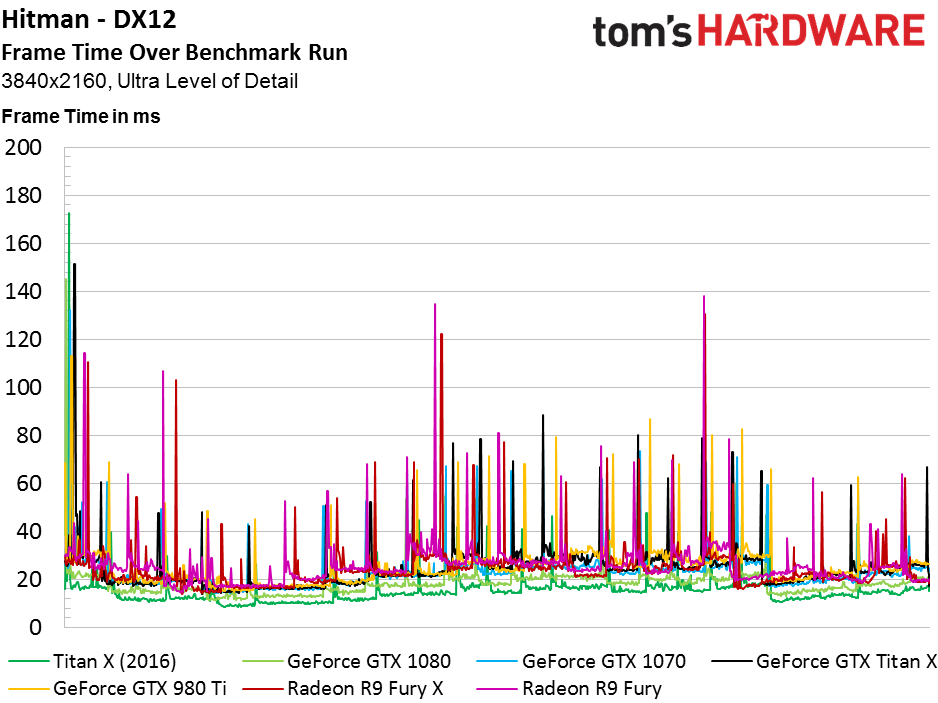

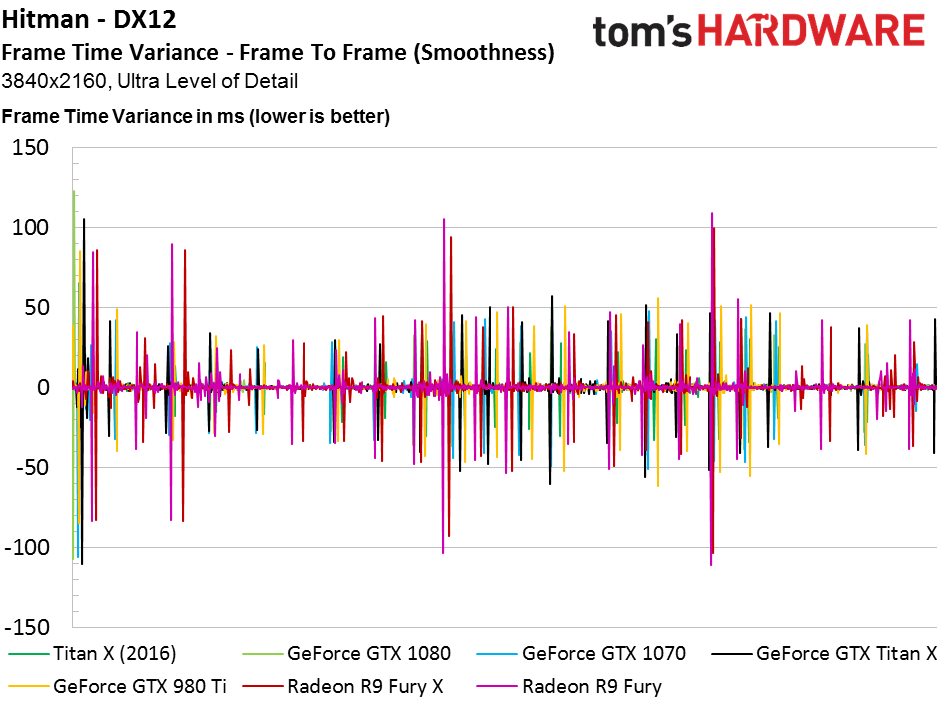

Although it appears that several cards encounter frame time variance spikes through the Hitman benchmark at both resolutions, those peaks seem to correspond with scene changes during the sequence. Had they been interruptions in movement, they would be noticeable, but Hitman plays through smoothly for the most part.

These were far less prevalent in our DX11 Fraps-based capture compared to what PresentMon records. However, this could also be caused by an adjustment to our custom interpreter software, which interpolates the run’s frame time data into a 1000-point data set. It now favors peaks, making it less likely they’ll be filtered out. We also added a low-pass filter so that big spikes don’t skew the average frame time by a large offset.
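The interpreter itself isn't published, so the following is only a rough sketch of how a peak-favoring resample and a low-pass filter might look. Everything here is an assumption for illustration: the function names, the choice of taking the maximum frame time per time bin, and the exponential moving average used as the filter.

```python
from itertools import accumulate


def resample_favoring_peaks(frame_times_ms, n_points=1000):
    """Reduce a run to n_points samples, keeping the worst frame per time bin.

    Assumption: "favoring peaks" is modeled as max-per-bin over equal slices
    of the run's duration. Frame times are assumed to be positive.
    """
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    ends = list(accumulate(frame_times_ms))  # end timestamp of each frame
    width = ends[-1] / n_points              # duration covered by one bin
    last = len(ends) - 1
    out, j = [], 0
    for i in range(n_points):
        bin_start, bin_end = i * width, (i + 1) * width
        # Skip frames that finished before this bin begins.
        while j < last and ends[j] <= bin_start:
            j += 1
        peak, k = frame_times_ms[j], j
        # Include every later frame that starts before the bin ends.
        while k < last and ends[k] < bin_end:
            k += 1
            peak = max(peak, frame_times_ms[k])
        out.append(peak)
    return out


def low_pass(values, alpha=0.2):
    """Single-pole low-pass (exponential moving average); alpha is a guess."""
    smoothed, prev = [], values[0]
    for v in values:
        prev = prev + alpha * (v - prev)
        smoothed.append(prev)
    return smoothed


# Synthetic run: steady 10 ms frames with a single 100 ms hitch at the end.
run = [10] * 99 + [100]
plotted = resample_favoring_peaks(run, n_points=10)
filtered = low_pass(plotted)
print(plotted[-1], round(sum(filtered) / len(filtered), 1))
```

Under these assumptions, the spike survives in the series used for plotting, while the filtered series used for averaging is pulled far less by a single outlier.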

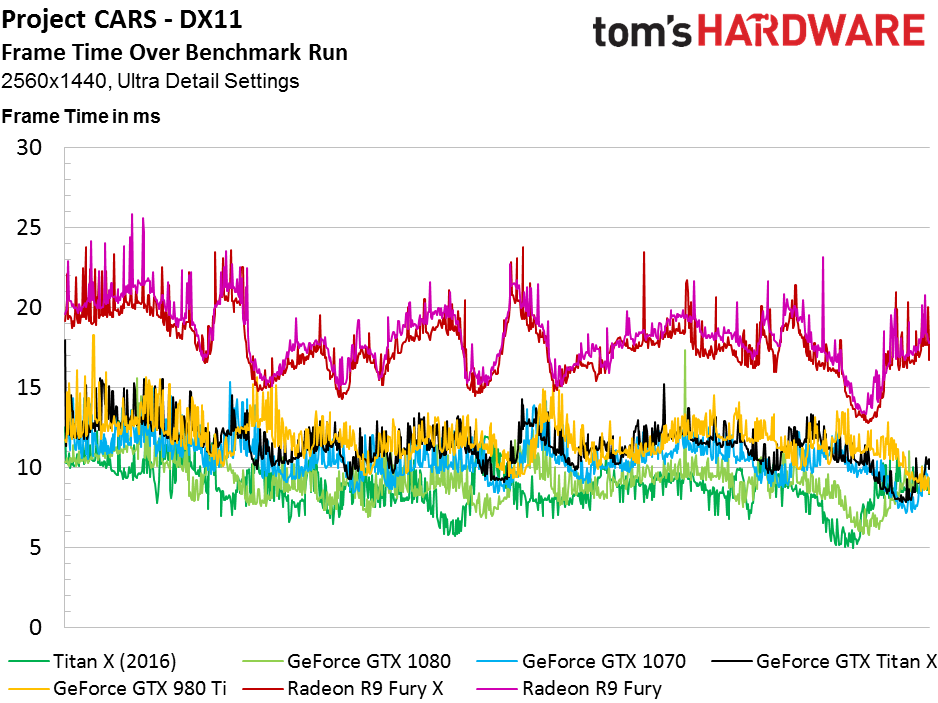

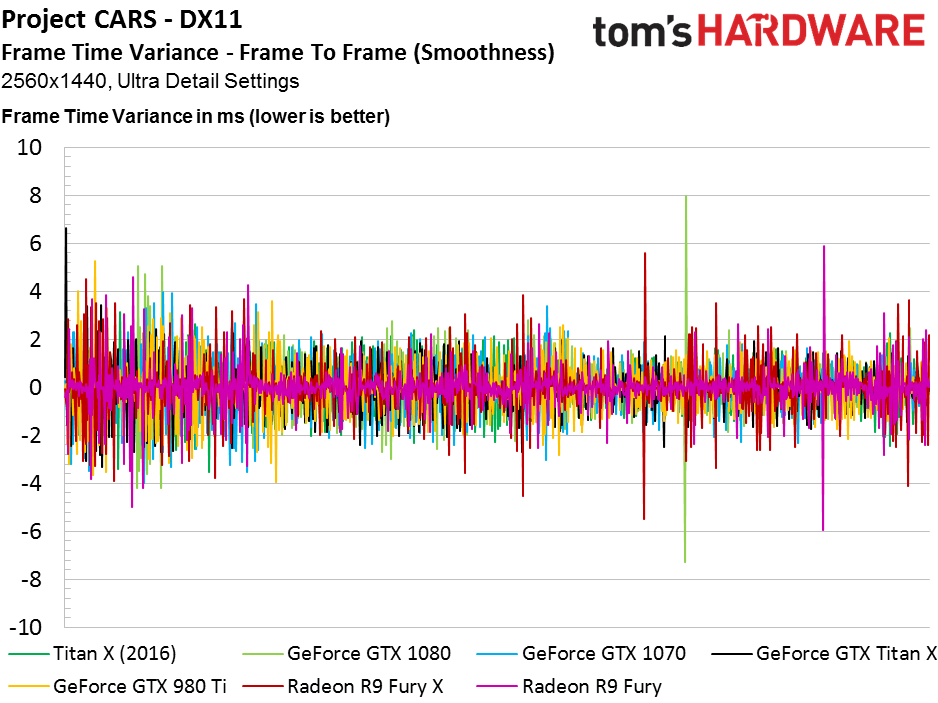

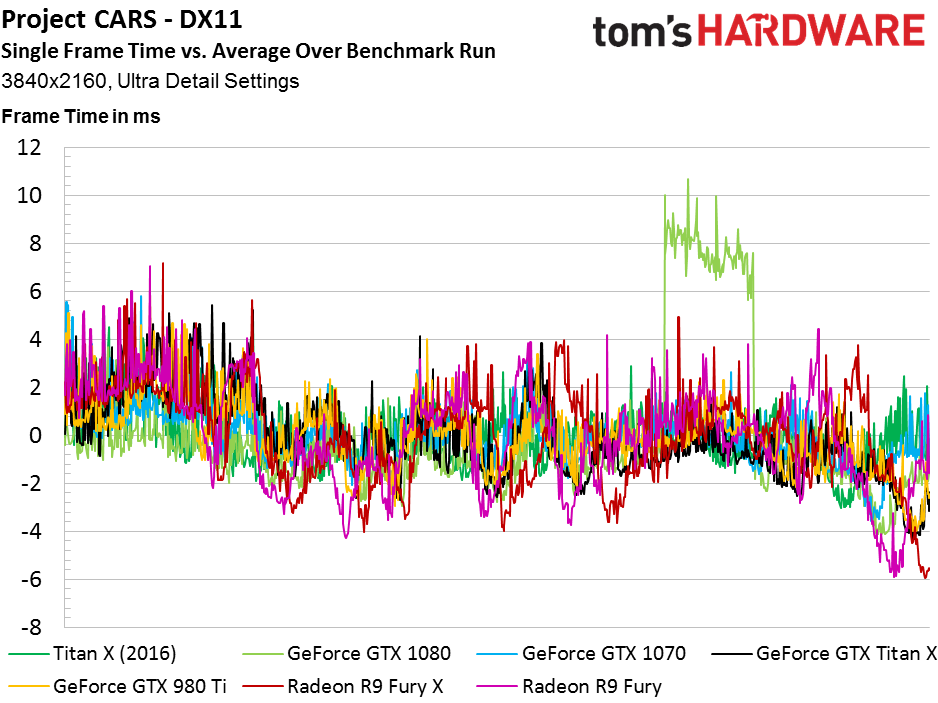

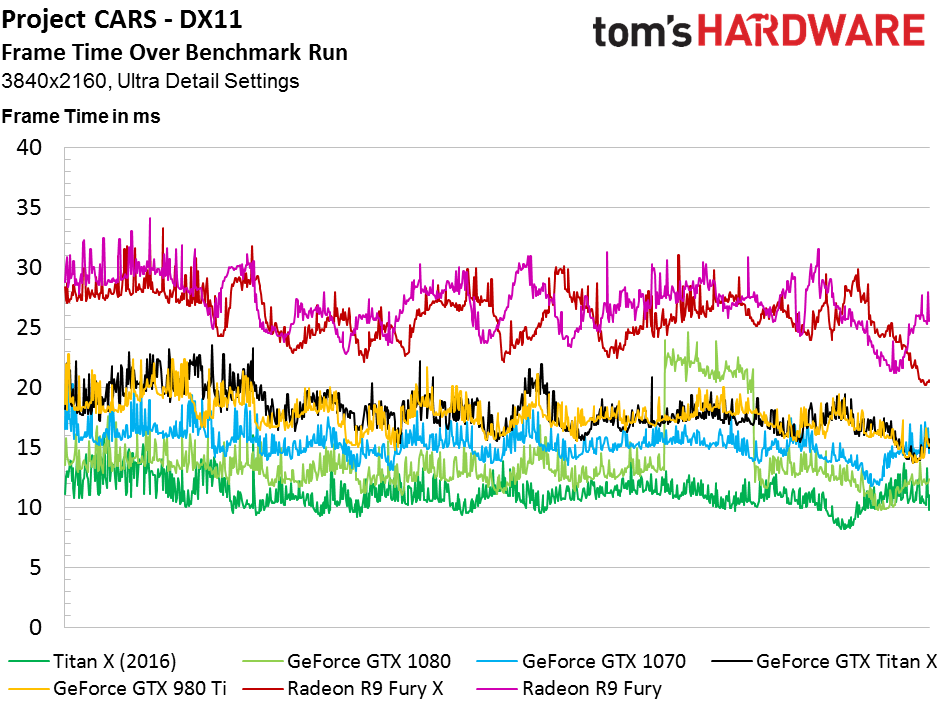

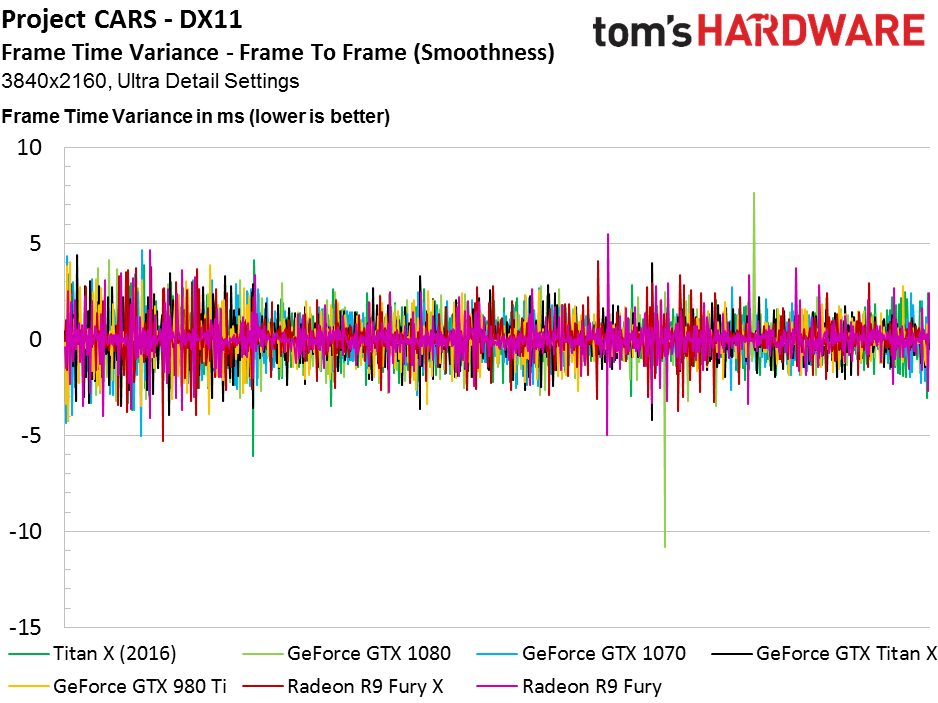

Project CARS

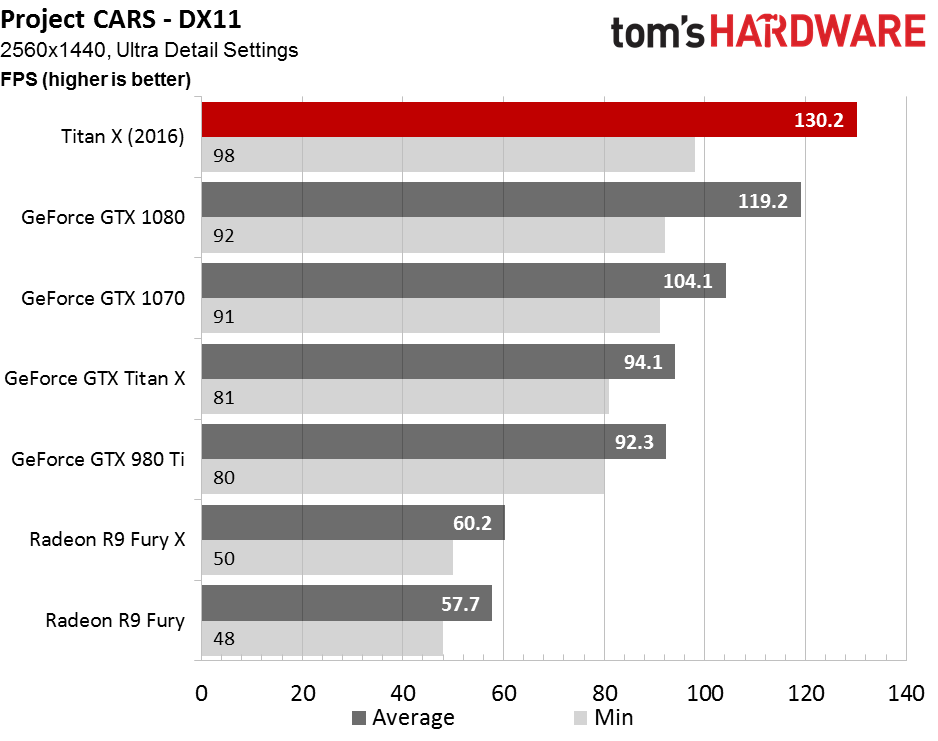

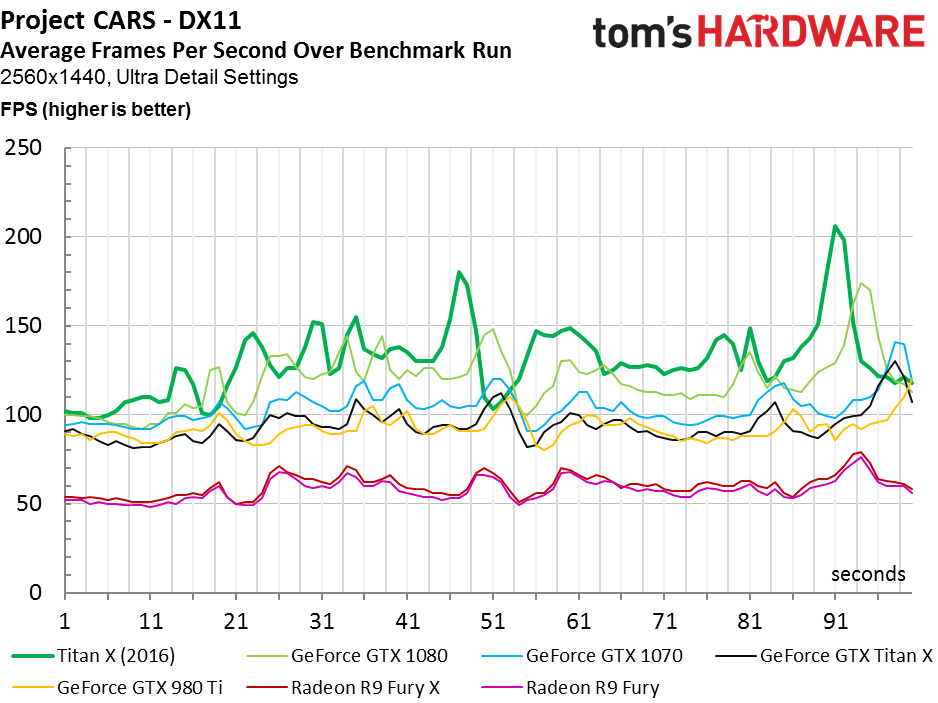

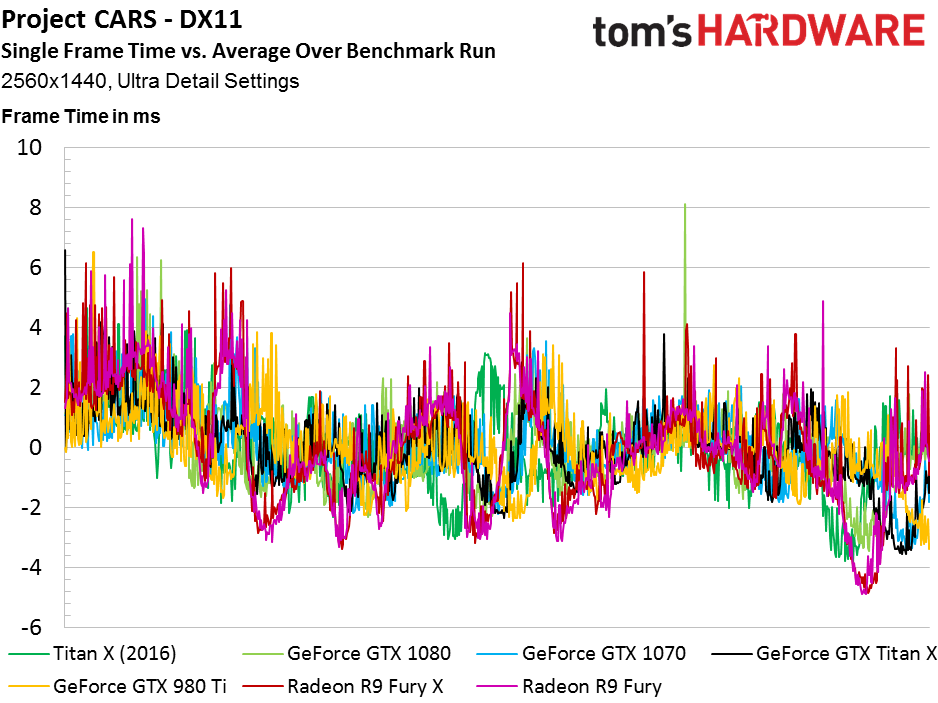

Titan X’s 9% advantage over GTX 1080 suggests that Project CARS isn’t particularly graphics-bound at 2560x1440, despite using the game’s highest detail settings. Even the previous-generation Maxwell-based cards average more than 90 FPS.


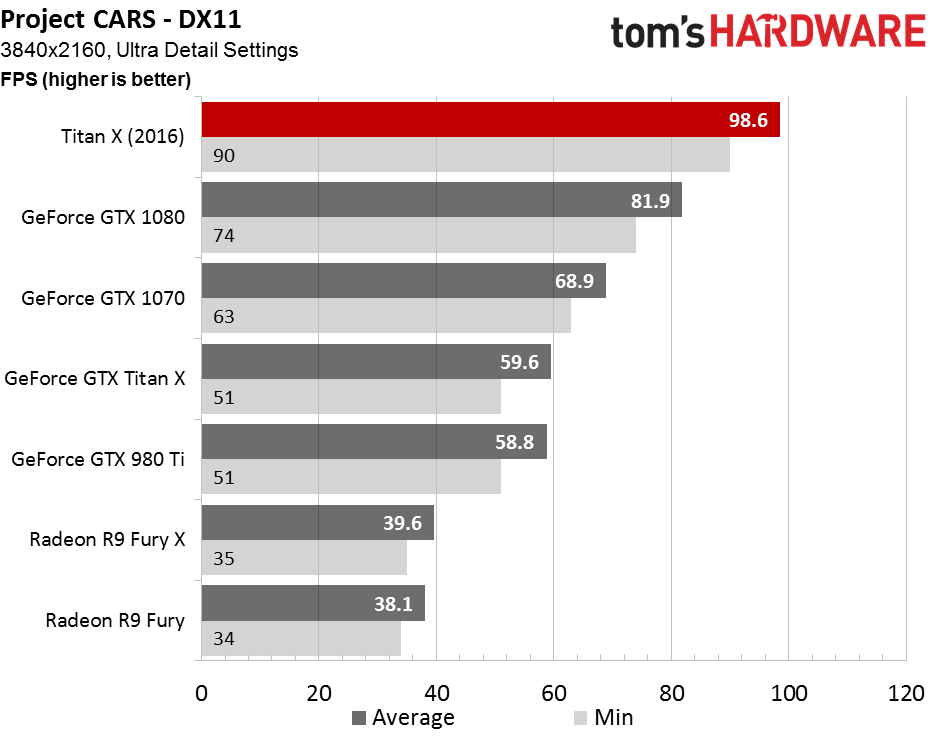

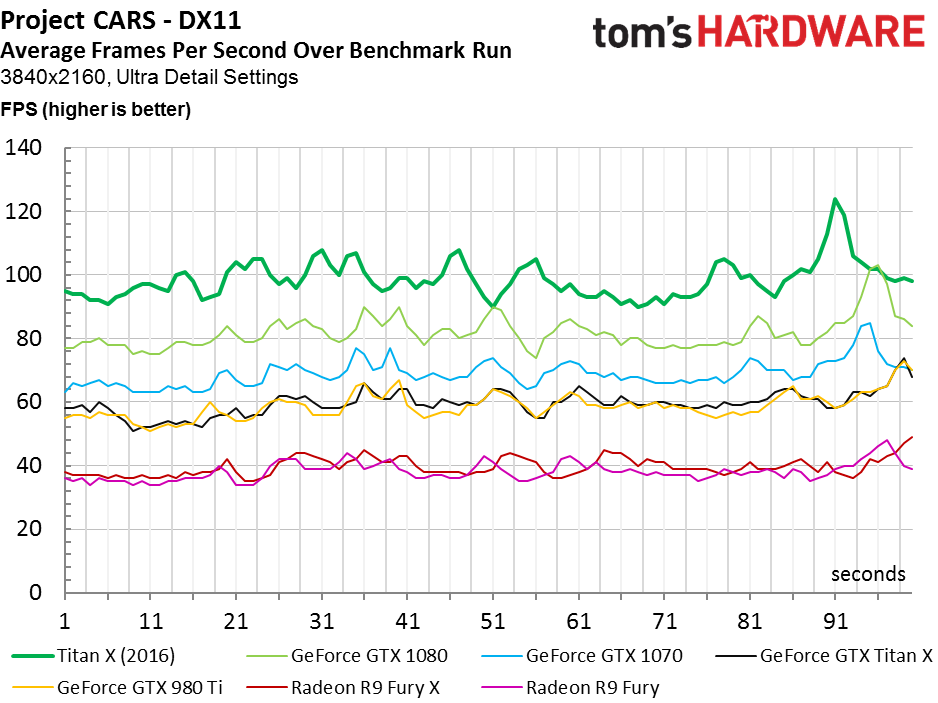

The new hotness is 20% faster than GTX 1080 at 3840x2160, reflecting the more taxing graphics workload.

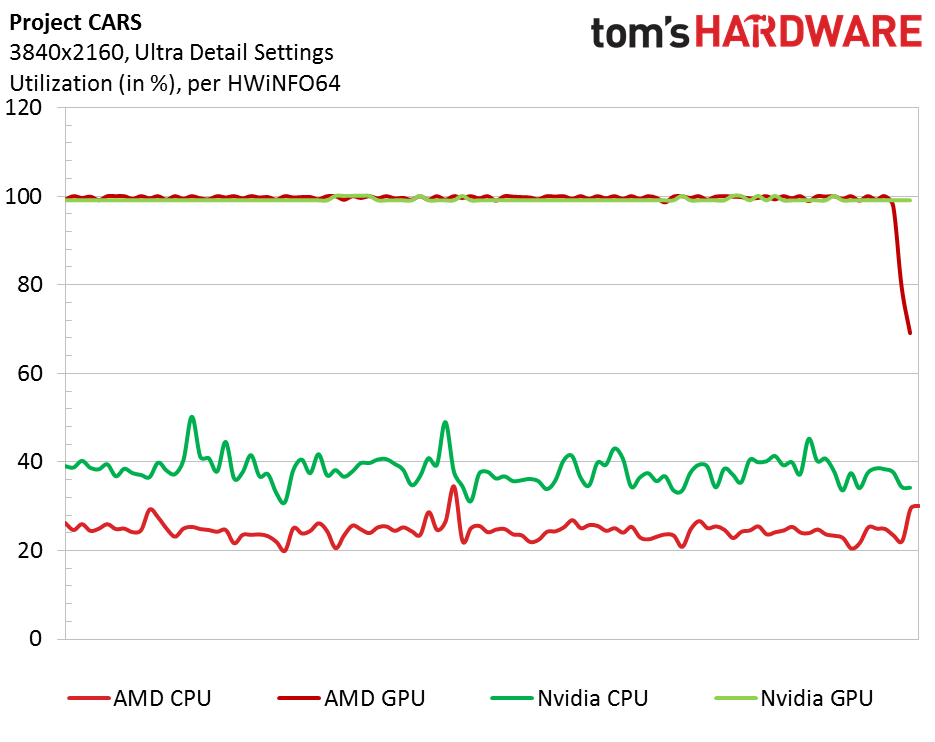

Eager to answer some of the questions relating to AMD’s poor performance in CARS, we did some digging using HWiNFO64.

Looking at total utilization numbers, it’s clear that both the GeForce GTX 980 Ti and Radeon R9 Fury are maxed-out—both cards show 100% use through our 100-second capture. CPU utilization, on the other hand, is quite a bit different. The system with the GeForce inside has our Core i7-6700K busier doing…something. Given SMS’ public statements regarding the use of PhysX in CARS, it’d be hard to imagine that difference corresponding to what the developer calls “a small part of the overall physics systems” running at 50 Hz.

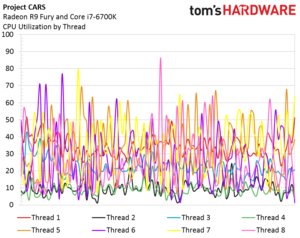

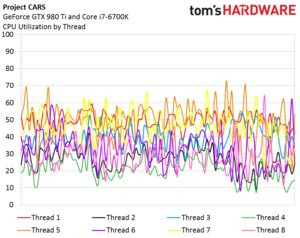

Breaking the workload down into individual threads yields the following two graphs:

Processor utilization on the machine with AMD’s Radeon R9 Fury is prone to wild variation. At least four threads spike up and down through the run. Two remain almost completely idle, and two others (presumably the rendering and game threads) are utilized fairly consistently.

Activity is concentrated in a narrower band on the Nvidia-based setup, and none of the logical cores are left completely inactive. We have an email request in to SMS for help interpreting these findings, but as we mentioned on the How We Test page, if answers aren’t provided, we’ll shift away from CARS now that DiRT Rally is available for the Oculus Rift.
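The per-thread comparison above can be approximated with a few summary statistics. This is a minimal sketch, not the analysis we ran: the thresholds, labels, and sample values are all hypothetical placeholders, and the utilization samples would have to be exported from a logging tool first.

```python
from statistics import mean, pstdev


def classify_thread(samples, idle_mean=5.0, spiky_stdev=20.0):
    """Label one logical core's utilization trace (values in percent).

    Thresholds are arbitrary assumptions chosen for illustration only.
    """
    if mean(samples) < idle_mean:
        return "idle"
    if pstdev(samples) > spiky_stdev:
        return "spiky"
    return "steady"


# Synthetic placeholder traces, NOT measured data from the review.
traces = {
    "thread0": [70, 75, 72, 68],  # consistently busy
    "thread1": [10, 90, 15, 85],  # swings up and down
    "thread2": [0, 1, 0, 2],      # almost completely idle
}
for name, samples in traces.items():
    print(name, classify_thread(samples))
```

A trace set like the Radeon system's would show a mix of all three labels, whereas a narrower band of activity would mostly come back as "steady".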


adamovera Archived comments are found here: http://www.tomshardware.com/forum/id-3142290/nvidia-titan-pascal-12gb-review.html

chuckydb Well, the thermal throttling was to be expected with such a useless cooler, but that should not be an issue. If you are spending this much on a gpu, you should water-cool it!!! Problem solved

Jeff Fx I might spend $1,200 on a Titan X, because between 4K gaming and VR I'll get a lot of use out of it, but they don't seem to be available at anything close to that price at this time.

Any word when we can get these at $1,200 or less?

I wish I was confident that we'd get good SLI support in VR, so I could just get a pair of 1080s, but I've had so many problems in the past with SLI in 3D, that getting the fastest single-card solution available seems like the best choice to me.

ingtar33 $1200 for a gpu which temp throttles under load? THG, you guys raked AMD over the coals for this type of nonsense, and that was on a $500 card at the time.

Sakkura Interesting to see how the Titan X turned into an R9 Nano in your anechoic chamber. :D

As for the Titan X, that cooler just isn't good enough. Not sure I agree that memory modules running 90 degrees C is "well below" the manufacturer's limit of 95 degrees C. What if your ambient temperature is 5 or 10 degrees higher?

hotroderx Basically the cards just one giant cash grab... I am shocked toms isn't denouncing this card! I could just see if Intel rated a CPU at 6ghz for the first 10secs it was running. Then throttled it back to something more manageable! but for those 10 secs you had the worlds fastest retail CPU.

tamalero Does this means there will be a GP101 with all core enabled later on? as in TI version?

hannibal TitanX Ti... No, 1080ti is cut down version. Most full ships will go to professinal cards and maybe we will see TitanZ later...

blazorthon An extra $200 for a gimped cooler makes for a disappointing addition to the Titan cards.