Resident Evil 5: Demo Performance Analyzed

Benchmarks: Comparing Detail Settings And Performance Impact

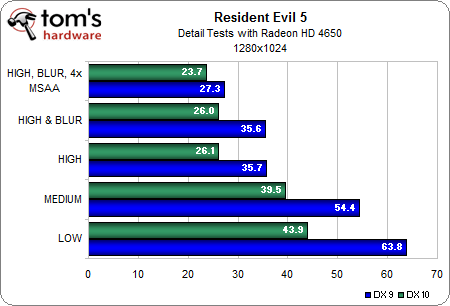

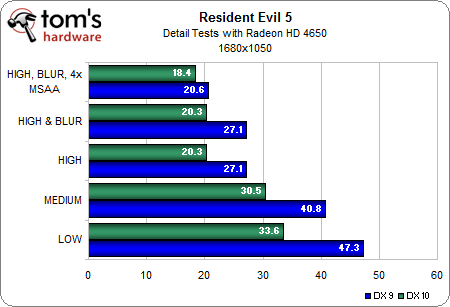

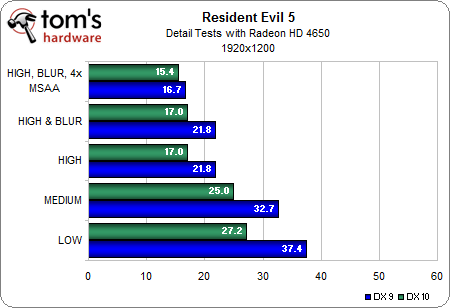

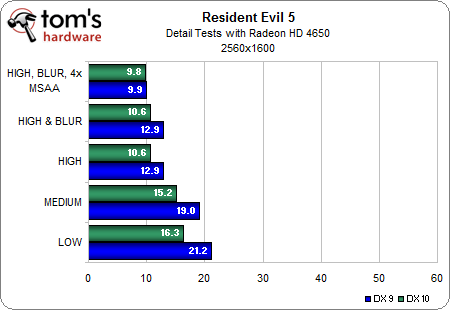

With separate DirectX 9 and DirectX 10 render paths, in addition to three levels of detail settings, motion blur, and AA, there are a lot of performance variables.
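To give a sense of how quickly these variables multiply, here is a minimal sketch that enumerates the possible combinations. The render paths, detail levels, and motion blur toggle come from the text above; the list of AA levels is an assumption for illustration (only "off" and 4x), since the game may expose more options.

```python
from itertools import product

# Variables named in the article.
render_paths = ["DirectX 9", "DirectX 10"]
detail_levels = ["low", "medium", "high"]
motion_blur = [False, True]

# Assumption: only AA off (0) and 4x are modeled here.
aa_samples = [0, 4]

# Every combination of the four variables.
configs = list(product(render_paths, detail_levels, motion_blur, aa_samples))

print(len(configs))  # 2 * 3 * 2 * 2 = 24
```

Even with only two AA levels, that is 24 configurations per card and resolution, which is why the test matrix needs to be narrowed down.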

We decided to examine the effect that multiple combinations of these variables had on the slowest card in our test pool, and to use those results to choose the configurations we were most interested in benchmarking.

We can see there's relatively little difference between low and medium settings when it comes to performance, and since the medium settings offer greatly increased texture detail in addition to shadows, we'll consider medium detail to be the lowest recommended settings for running Resident Evil 5.

It is also obvious that there is a notable performance impact when enabling DirectX 10, but since we now know there is no visual advantage with this setting, we will avoid it, except to demonstrate the performance hit that owners of Nvidia's GeForce 3D Vision LCD glasses will experience.

In addition, we have seen that the highest detail settings offer some really impressive visuals. It also appears that enabling motion blur doesn't cause much of a performance hit with this hardware, so we'll leave that feature enabled for the highest detail benchmark runs.

With these observations in mind, we've decided to benchmark DirectX 9 at medium details as a baseline, at high details with motion blur enabled to demonstrate the maximum graphical fidelity, and with high details, motion blur, and 4x AA enabled to show how AA will affect performance.
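The three chosen configurations can be written down as data, along with a small helper for expressing a performance hit relative to the baseline. This is only a sketch: the class and function names are hypothetical, the motion blur state for the medium baseline is assumed to be off (the text does not say), the high-detail runs are assumed to use DirectX 9, and the frame rates in the usage line are placeholders rather than measured results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BenchmarkConfig:
    render_path: str
    detail: str
    motion_blur: bool
    aa_samples: int  # 0 means AA is disabled


# The three runs described above (motion blur off at medium is an assumption).
SELECTED = [
    BenchmarkConfig("DirectX 9", "medium", False, 0),  # baseline
    BenchmarkConfig("DirectX 9", "high", True, 0),     # maximum fidelity
    BenchmarkConfig("DirectX 9", "high", True, 4),     # maximum fidelity + 4x AA
]


def percent_hit(baseline_fps: float, fps: float) -> float:
    """Return the frame rate drop relative to the baseline, in percent."""
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    return (baseline_fps - fps) / baseline_fps * 100.0


# Placeholder numbers, not results from this article.
print(percent_hit(60.0, 45.0))  # 25.0
```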



Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.


gkay09 Does this imply that game developers in general didn't even utilize the full potential of DirectX 9 and jumped on the DirectX 10 bandwagon, and now DirectX 11?

renz496 I've tried the benchmark before. The DX10 path produces a slightly better frame rate than DX9. This game has better performance in DX10 than in DX9 on my machine.

yellosnowman Am I going blind or is there no HD4890, or is this just a quick benchmark before the HD5*** series?

mitch074 @yellosnowman: there is a 4890, but it's been downclocked to 4870 levels (read the article) to be used as a reference for Radeon performance (tests on Radeon weren't too extensive, as the benchmark is optimized for Nvidia hardware). And yes, with HD 5xxx almost there, doing complete benchmarks here is pretty much useless: the game is playable with everything at full on a Radeon HD 4770 up to Full HD quality.

voltagetoe Gkay09, Direct X 10 was a failure because Vista was a failure. DX 9 has been thoroughly utilized - there has been no other choice.

juliom On the variable benchmark @ 1680 x 1050 2x AA my Phenom II x4 955 and Radeon 4870 pull an average of 80 fps in DirectX 9. I'm happy and have the game pre-ordered on Steam :)

amnotanoobie (quoting voltagetoe: "Gkay09, Direct X 10 was a failure because Vista was a failure. DX 9 has been thoroughly utilized - there has been no other choice.") Wasn't it more because the mainstream DX10 cards (8600GT and 2600XT) didn't really perform well, and some were even beaten by previous-generation cards? As such, pushing the detail level higher might mean fewer sales, as fewer people had the cards to play the games at decent levels (8800GTS 320MB/640MB or 2900XT).

HTDuro DX10 isn't really a failure, if programmers take the time to really work on DX10 optimisation, and more so on SM4.0. Remember Assassin's Creed? Ubisoft took the time to work on SM4.0 and the game works better in DX10 than in DX9: higher framerate with better shadows.