AMD Radeon R9 Fury Review: Sapphire Tri-X Overclocked

Quickly following the Fury X, AMD’s next graphics foray is Fury, a cut-down version of the Fiji GPU running at a 1000MHz core clock. We tested Sapphire’s Tri-X Overclocked version.



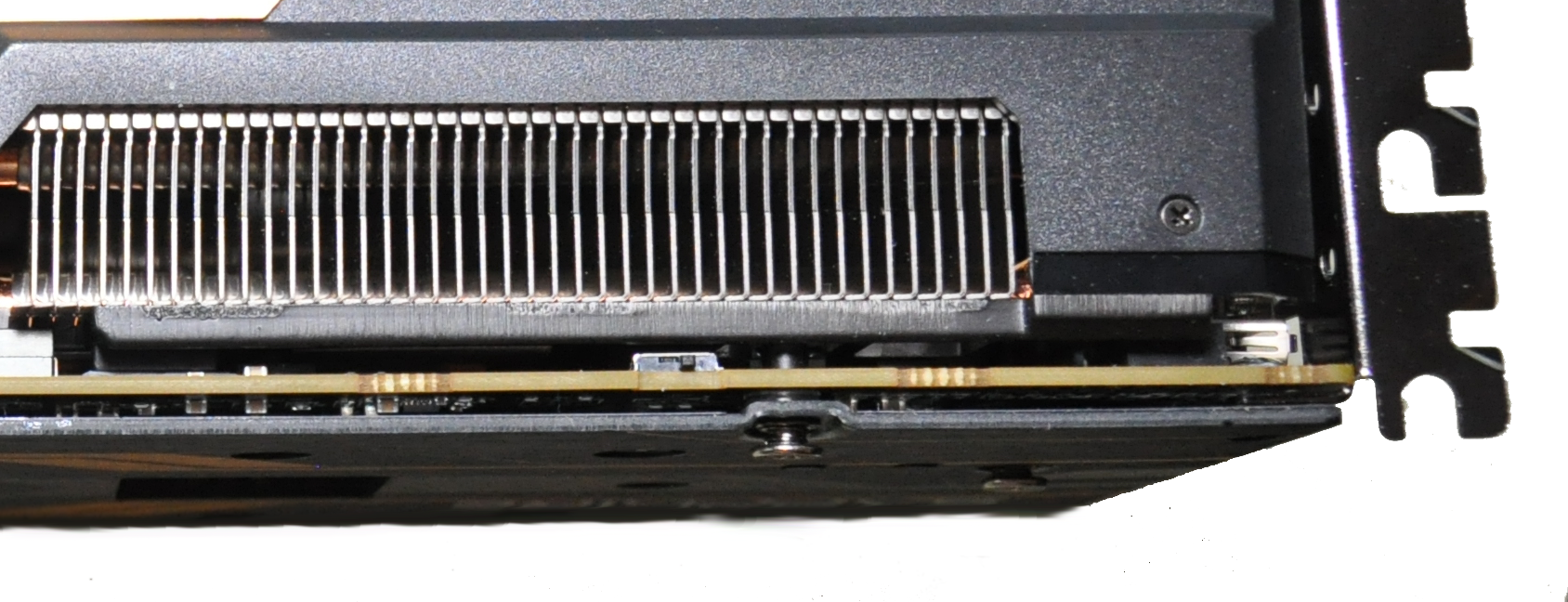

Sapphire’s Radeon R9 Fury Tri-X uses a custom heat sink with seven copper heat pipes of varying thickness and two separate sections of fins to dissipate the thermal energy generated by the GPU and HBM. The central pipe is a gargantuan 10mm thick, and two 8mm pipes flank it on each side; these five pipes span from the main section of fins over the GPU to a separate group of rear fins. The remaining two pipes are 6mm thick, and each makes a single loop back through the fins over the GPU contact block.

The card's PCB is quite short at only 12cm. However, the heat sink and shroud extend well beyond the end of the board. In fact, they nearly double its length, bringing the card to 23.5cm. The rear section of the cooler is wide open, with only a die-cast exoskeleton holding it in place. This facilitates significant airflow through the heat sink fins.

Sapphire said it was targeting a load temperature of less than 75 degrees C and modest acoustics as it designed the Tri-X cooler. The company uses three dual ball-bearing fans managed by advanced profiles, which ramp up the fans slowly in order to maintain silence when possible. Under normal load, the fans should spin at 40% or less, though they can be manually adjusted if more cooling capacity is desired.

Not only is the R9 Fury Tri-X quite long, but it is also very thick: the card measures 5cm from the shroud to the screws protruding from its back plate. Clearance may be an issue on some motherboards. The card barely fit in our reference board's first PCIe slot; had the back plate been 1mm thicker, it would not have.

Although the card is technically capable of four-way CrossFire, its heat sink blocks the neighboring PCIe slot. Short of using riser cables, you’re limited to double-spaced setups unless you replace the heat sink with a water block, which Sapphire actually cautions against.

According to Sapphire, the heat sink's design is rather intricate, and the company was adamant that we not remove it during our review. Because the HBM modules sit higher than the GPU, it is apparently very difficult to remount the cooler correctly, and a poor fit can result in inadequate cooling and possibly damage the GPU.

Along the top of the card, you’ll find two eight-pin power connectors. A row of eight LEDs sits next to those auxiliary inputs; they illuminate sequentially to indicate increasing load.


Sapphire also includes a BIOS toggle switch that selects between two slightly different profiles. One targets a 75 degree C load temperature and limits GPU power to 300W. The other allows for an 80 degree C threshold and a 350W power limit.

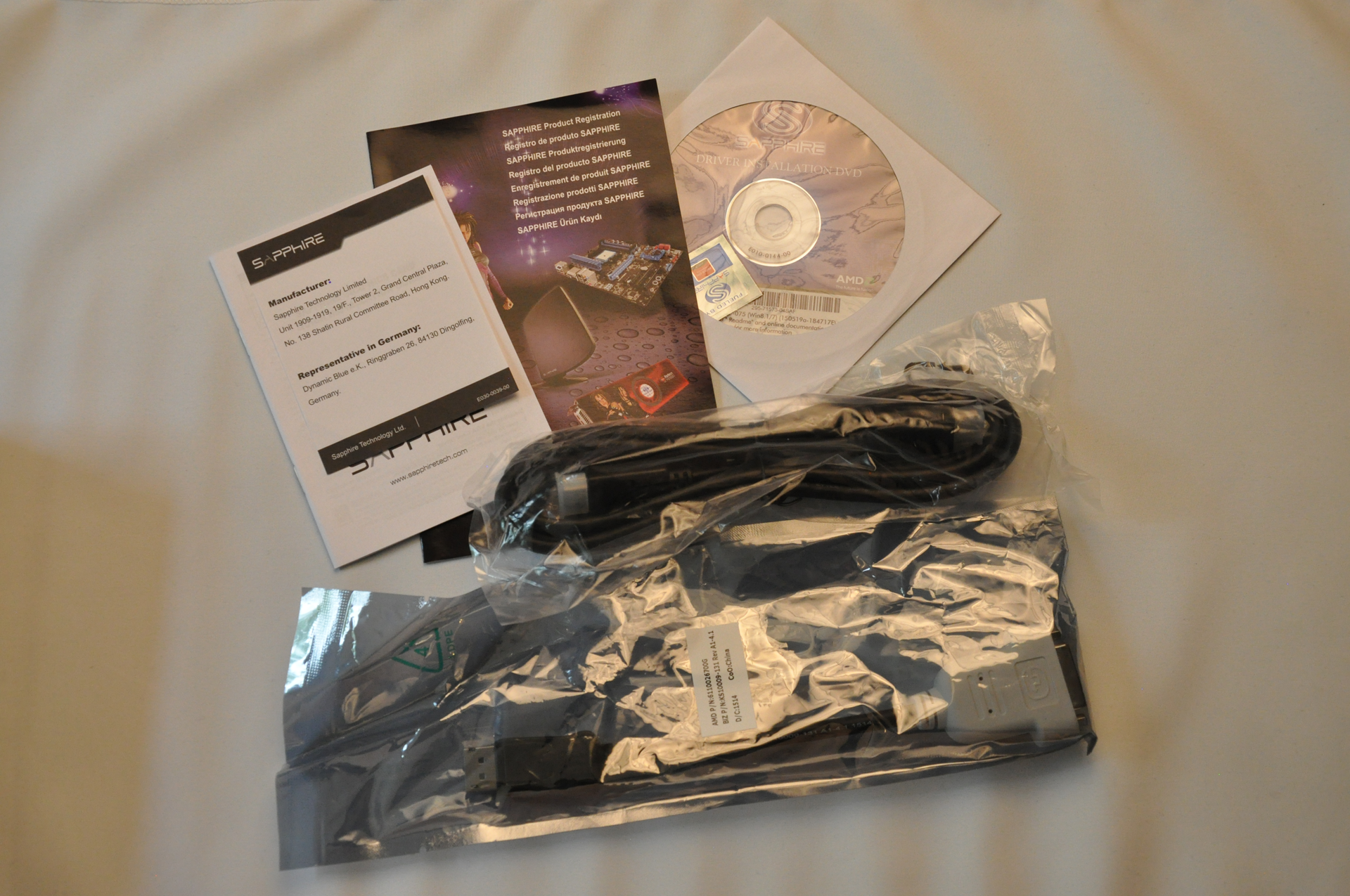

AMD’s Fury X was designed without a DVI port, and the Fury follows its lead. The I/O plate has three DisplayPort 1.2 connectors and one HDMI 1.4 interface. Up to four displays can be driven natively, and up to six are supported through MST hubs.

DVI is still supported through a DisplayPort-to-DVI adapter, which Sapphire graciously includes in its retail package. The company also adds an HDMI cable.

Kevin Carbotte is a contributing writer for Tom's Hardware who primarily covers VR and AR hardware. He has been writing for us for more than four years.


Troezar Some good news for AMD. A bonus for Nvidia users too: more competition equals better prices for us all.

AndrewJacksonZA Kevin, Igor, thank you for the review. Now the question people might want to ask themselves is: is the $80-$100 extra for the Fury X worth it? :-)

vertexx When the @#$@#$#$@#$@ are your web designers going to fix the bleeping arrows on the charts????!!!!!

ern88 I would like to get this card, but I am currently playing at 1080p. I will probably go to 1440p soon!!!!

confus3d Serious question: does 4k on medium settings look better than 1080p on ultra for desktop-sized screens (say under 30")? These cards seem to hold a lot of promise for large 4k screens or eyefinity setups.

rohitbaran This is my next card for certain. Fury X is a bit too expensive for my taste. With driver updates, I think the results will get better.

Larry Litmanen (replying to confus3d):

I was in Micro Center the other day, one of the very few places you can actually see a 4K display in person. I have to say I wasn't impressed: everything looked small, like they had just shrunk the images on the PC.

Maybe it was just that monitor, but it did not look special enough for me to spend $500 on a monitor and $650 on a new GPU.