What Does DirectCompute Really Mean For Gamers?

We've been bugging AMD for years now, literally, to show us what GPU-accelerated software can do. Finally, the company is ready to put us in touch with ISVs in nine different segments to demonstrate how its hardware can benefit optimized applications.


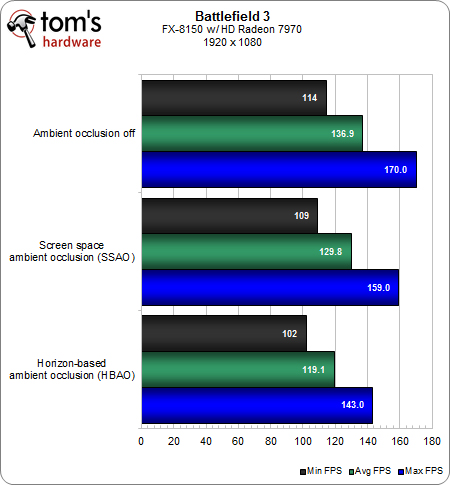

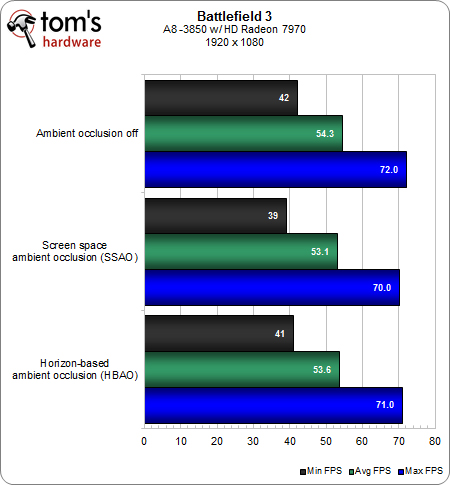

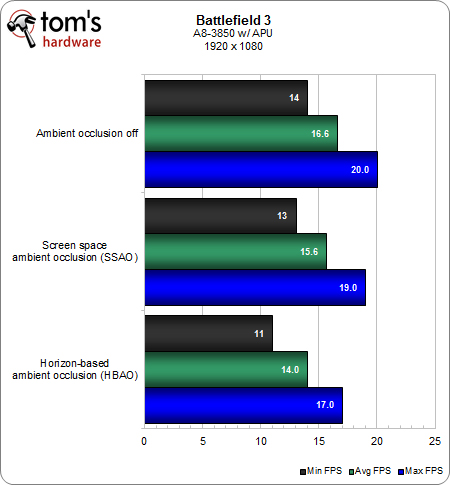

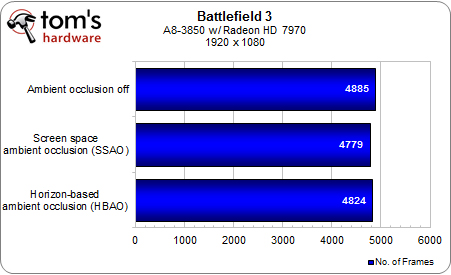

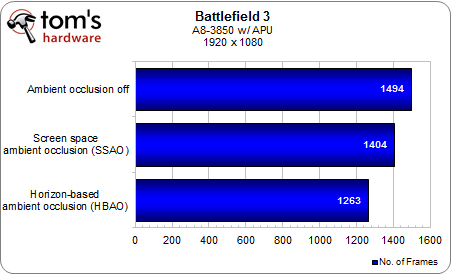

Benchmark Results: Battlefield 3 At 1920x1080

We tested Battlefield 3 by using Fraps and recording a 90-second section of the Going Hunting mission. On page four, you may have noticed our inclusion of a screen space ambient occlusion (SSAO) screen capture. SSAO is a pixel shader-based approach to ambient occlusion originally developed for the game Crysis.

In a nutshell, the SSAO pixel shader samples the depth buffer at several points around each pixel and uses those depth values to estimate how much nearby geometry occludes that pixel. Because sampling densely is very resource-intensive at high resolutions, SSAO frequently takes a small number of random samples and then blurs the result in a post-processing pass to hide the resulting noise. As you can see in the earlier screen grabs, DirectCompute-based AO yields more realistic results... but at what cost?
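
To make the "random sampling plus blur" idea concrete, here is a minimal toy sketch in Python. It is not DICE's or Crytek's shader: it assumes a plain 2D depth buffer where smaller values are closer to the camera, and the function names and parameters (`ssao`, `box_blur`, `radius`, `samples`, `bias`, `seed`) are hypothetical. Real SSAO samples a hemisphere in view space and adds range checks; this version only counts random screen-space neighbours that sit noticeably closer to the camera than the centre pixel.

```python
import random

def ssao(depth, radius=2, samples=8, bias=0.01, seed=0):
    """Toy screen-space AO over a 2D depth buffer (smaller depth = closer).

    Returns ambient visibility per pixel in [0, 1]; 1.0 means unoccluded.
    """
    rng = random.Random(seed)  # fixed seed so the noise pattern is repeatable
    h, w = len(depth), len(depth[0])
    ao = [[1.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            occluded = 0
            for _ in range(samples):
                # Pick a random neighbour within the radius, clamped to the screen.
                sx = min(max(x + rng.randint(-radius, radius), 0), w - 1)
                sy = min(max(y + rng.randint(-radius, radius), 0), h - 1)
                # A neighbour noticeably closer to the camera counts as an occluder.
                if depth[sy][sx] < depth[y][x] - bias:
                    occluded += 1
            ao[y][x] = 1.0 - occluded / samples
    return ao

def box_blur(img, r=1):
    """Post-process blur that averages each pixel with its neighbours."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, n = 0.0, 0
            for yy in range(max(0, y - r), min(h, y + r + 1)):
                for xx in range(max(0, x - r), min(w, x + r + 1)):
                    total += img[yy][xx]
                    n += 1
            out[y][x] = total / n
    return out

# Usage: a flat surface with one pixel sitting in a small pit.
depth = [[0.5] * 5 for _ in range(5)]
depth[2][2] = 1.0
visibility = box_blur(ssao(depth, radius=1, samples=16))
```

Fewer samples per pixel means a noisier raw result, which is exactly why the blur pass exists; raising `samples` trades frame rate for a cleaner image.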

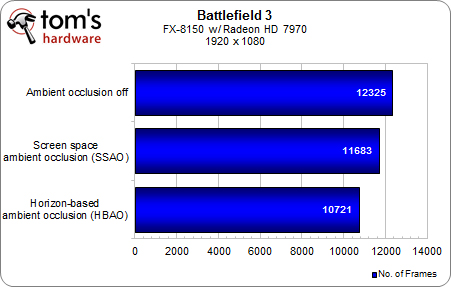

True to DICE’s word, the answer is “not much.” Even though BF3 isn’t playable at 1920x1080 on our APU configuration, and is severely bottlenecked when we drop a Radeon HD 7970 into the same platform, switching between HBAO and SSAO makes only a minor difference in frame rate. Even turning AO off gains only about 5%.

In terms of total frames generated, we see the same story from another angle.

Apart from the obvious message that a game of this caliber at this resolution needs discrete graphics, note the 2.2x performance difference between the FX and A8 platforms hosting the same Radeon HD 7970. This speaks to our continued emphasis on building balanced platforms. Even though Battlefield 3's campaign mode is regarded as graphically challenging, your choice of CPU should reflect the caliber of GPU you use; both need to be considered in tandem.
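
One simplified way to see why a faster graphics card cannot rescue a slower CPU: if the CPU prepares the next frame while the GPU renders the current one, throughput is set by whichever stage is slower. The sketch below assumes that idealized, perfectly overlapped pipeline, and the per-frame millisecond costs are hypothetical round numbers, not measurements from our FX or A8 test platforms.

```python
def pipelined_fps(cpu_ms: float, gpu_ms: float) -> float:
    """Frame rate of an idealized CPU/GPU pipeline with perfect overlap.

    Each frame costs cpu_ms of CPU work and gpu_ms of GPU work; because the
    two stages run concurrently, the slower one sets the frame time.
    """
    frame_ms = max(cpu_ms, gpu_ms)
    return 1000.0 / frame_ms

def limiting_stage(cpu_ms: float, gpu_ms: float) -> str:
    """Report which component bounds the frame rate in this simple model."""
    if cpu_ms > gpu_ms:
        return "CPU"
    if gpu_ms > cpu_ms:
        return "GPU"
    return "balanced"

# Hypothetical: a slow CPU needs 25 ms per frame, so halving GPU time changes nothing.
slow_cpu = pipelined_fps(25.0, 10.0)    # 40.0
faster_gpu = pipelined_fps(25.0, 5.0)   # still 40.0
balanced = pipelined_fps(10.0, 10.0)    # 100.0
```

Real engines never overlap perfectly, so treat this as an upper bound; the point is only that the slower component caps the frame rate regardless of how fast the other one is.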



de5_Roy: Would PCIe 3.0, and two PCIe 3.0 cards in CrossFire/SLI, improve DirectCompute performance for gaming?

hunshiki, quoting hotsacoman: "Ha. Are those HL2 screenshots on page 3 lol?"

THAT. F.... FENCE. :D

Every, single, time. With every, single Source game. HL2, CSS, MODS, CSGO. It's everywhere.

Quoting hunshiki: "THAT. F.... FENCE. Every, single, time. With every, single Source game. HL2, CSS, MODS, CSGO. It's everywhere."

Ha. Seriously! The Source engine is what I like to call a polished turd. Somehow, even though it's ugly as f%$#, they still make it look acceptable... except for the fence XD

theuniquegamer: Developers need to improve the API's compatibility with GPUs. Consoles use very low-power, outdated GPUs yet can play the latest games at good frame rates. Our PCs have top-notch hardware, but games play at almost the same quality as on the consoles. The GPUs in our PCs have a lot of horsepower, but we can't utilize even half of it (I don't know what our PC GPUs are really capable of).

marraco: I hate depth of field. Really hate it. I hate Metro 2033 with its DirectCompute-based depth of field filter.

It’s unnecessary for games to emulate camera flaws, and depth of field is a limitation of cameras. The human eye is able to focus anywhere, and is free to do so. Depth of field does not let the user focus where they want to focus, so it is just an annoyance, and worse, it costs FPS.

This chart is great. Thanks for showing it.

It shows something missing from many video card reviews: the 7970 frequently falls under 50, 40, and even 20 FPS. That ruins the user experience. While it is hard to tell the difference between 70 and 80 FPS, it is easy to spot the moments when the card falls under 20 FPS. It’s a showstopper, and an utter annoyance to spend a lot of money on the most expensive cards and then see those 20 FPS moments.

That’s why I prefer TechPowerup.com reviews. They show frame-by-frame benchmarks, not just a meaningless FPS number. TechPowerup.com is a step above Tom's Hardware because of this.

Yet that way of showing GPU performance is hard for humans to understand, so the data needs to be sorted to make it easily understandable, as this figure shows:

Both charts show the same data, but the lower one has the data sorted.

Here we see that card B has bigger lag spikes and higher FPS, while card A is more consistent even though it has lower FPS.

It shows on how many frames card B is worse than card A, and it is more intuitive and readable than bar charts, which lose a lot of information.

Unfortunately, no website offers this kind of analysis for GPUs, so here is a way to gain an advantage over the competition.
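
The sorted comparison described in this comment can be sketched in a few lines of Python. This is a minimal illustration, assuming per-frame render times in milliseconds (the kind of log a tool such as Fraps can record); the function names and the two frame-time lists are hypothetical, chosen so that card B wins on average FPS while card A is more consistent.

```python
import math

def sorted_fps(frame_times_ms):
    """Instantaneous FPS for every frame, sorted worst-first."""
    return sorted(1000.0 / t for t in frame_times_ms)

def average_fps(frame_times_ms):
    """Conventional average: frames rendered divided by elapsed seconds."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)

def frames_below(frame_times_ms, fps_threshold):
    """Count frames whose instantaneous FPS falls under the threshold."""
    return sum(1 for t in frame_times_ms if 1000.0 / t < fps_threshold)

def percentile_fps(frame_times_ms, pct):
    """Nearest-rank percentile of per-frame FPS (low pct = worst frames)."""
    ordered = sorted_fps(frame_times_ms)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]

# Hypothetical logs: card A is steady, card B is faster but stutters.
card_a = [20.0] * 10               # every frame at 50 FPS
card_b = [10.0] * 8 + [50.0] * 2   # mostly 100 FPS, two frames at 20 FPS

print(average_fps(card_a), average_fps(card_b))        # B has the higher average
print(frames_below(card_a, 30), frames_below(card_b, 30))
print(percentile_fps(card_a, 10), percentile_fps(card_b, 10))
```

Here card B's average (about 55.6 FPS) beats card A's 50 FPS, yet its worst 10% of frames run at 20 FPS versus 50 FPS for card A, which is the kind of difference a single average bar hides.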


hunshiki: I don't think you've owned a modern console, theuniquegamer. Games that run fast there would run fast (if not blazing fast) on PCs, since PCs are faster. Consoles are quite limited by their hardware. Games that are demanding and slow, or that just have awesome graphics (BF3, for example), are slow on consoles too; they usually squeeze out only 20-25 FPS. This happened with Crysis too. On PC? We benchmark at Full HD and get 91 FPS. NINETY-ONE. Not 20. Not 25. Not even 30. And at Full HD, not 1280x720 like the Xbox. (Also, on PC you have tons of other visual improvements that you can turn on or off, unlike on consoles.)

So... in short: consoles are cheap and easy to use. You pop in the CD and play your game. You won't be a professional FPS gamer (because of the stick), and it won't amaze you (because of the graphics). But it's easy and simple.
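
The resolution point in this comment is easy to quantify: per frame, Full HD has 2.25 times as many pixels as 1280x720. The tiny helper below is only an arithmetic illustration; its name is hypothetical.

```python
def pixels_per_frame(width: int, height: int) -> int:
    """Number of pixels the GPU must shade for one frame at this resolution."""
    return width * height

full_hd = pixels_per_frame(1920, 1080)  # 2,073,600 pixels
hd_720 = pixels_per_frame(1280, 720)    # 921,600 pixels
ratio = full_hd / hd_720                # 2.25
```

Pixel count is only a rough proxy for rendering load, since geometry, shader complexity, and effects such as AO also scale differently, but it shows why the same frame rate at 1920x1080 is the harder target.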

kettu, quoting marraco: "I hate depth of field. Really hate it. I hate Metro 2033 with its DirectCompute-based depth of field filter. It’s unnecessary for games to emulate camera flaws, and depth of field is a limitation of cameras. The human eye is able to focus everywhere, and free to do that. Depth of field does not allow to focus where the user wants to focus, so is just an annoyance, and worse, it costs FPS."

'Hate' is a bit of a strong word, but you do have a point there. It's much more natural to focus my eyes on certain game objects than to use my hand (i.e., turn the camera with my mouse). And you're right that it's unnecessary, because I already get the depth of field effect for free from my eyes when they're focused on a point on the screen.

npyrhone: Somehow I don't find it plausible that Tom's Hardware has *literally* been bugging AMD for years - to any end (no pun intended). Figuratively, perhaps?