GeForce RTX 4080 Emerges at U.S. Retailer Starting at $1,199

Nvidia's GeForce RTX 4080 graphics card will hit the retail market on November 16, so retailers worldwide have started listing custom Ada graphics card models from the chipmaker's AIB partners. Although it won't be as fast as the GeForce RTX 4090, the GeForce RTX 4080 still looks set to rank among the best graphics cards.

Nvidia initially announced the GeForce RTX 4080 in both 16GB and 12GB variants, but decided to "unlaunch" the GeForce RTX 4080 12GB after much criticism and user backlash. There must have been heavy pressure for Nvidia to cancel a graphics card launch, something the chipmaker has never done before. Don't be sad about the GeForce RTX 4080 12GB, though, as vendors will likely recycle it for a GeForce RTX 4070-tier SKU. Nvidia's benchmarks showed that the GeForce RTX 4080 12GB was up to 30% slower than the 16GB variant, so it would have been a hard sell. In addition, Nvidia has reportedly reimbursed partners for the packaging and rebranding costs.

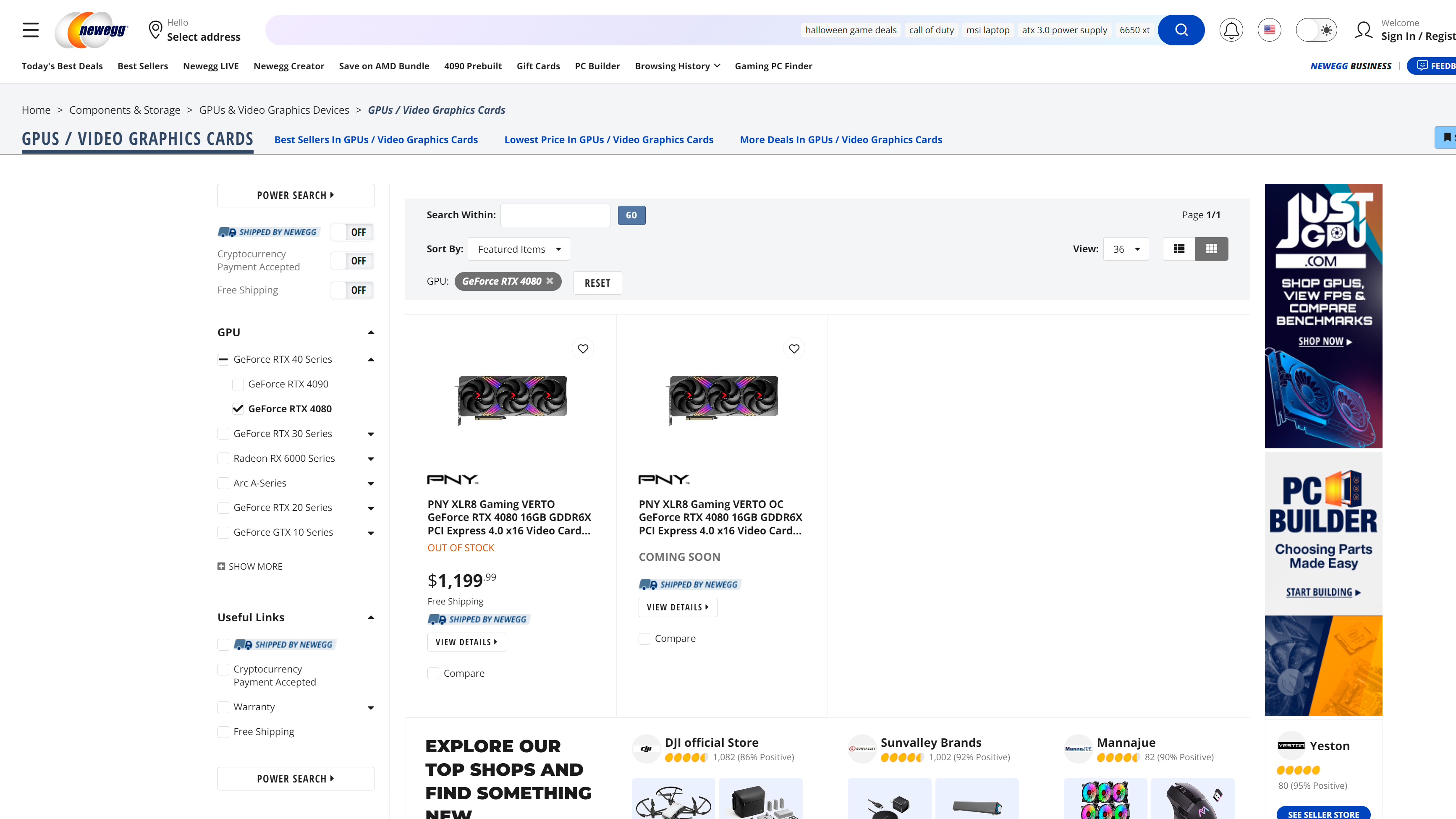

The GeForce RTX 4080 Founders Edition will retail for $1,199 in the U.S. and £1,269 in the U.K. Manufacturers will undoubtedly have some custom models that start at the same MSRP. For example, Newegg has listed the PNY XLR8 Gaming Verto GeForce RTX 4080 for $1,199.99, while pricing for the overclocked variant (PNY XLR8 Gaming Verto OC GeForce RTX 4080) remains unknown. The standard version comes with a 2,505 MHz boost clock, which is the same speed as the Founders Edition. The overclocked version, on the other hand, comes with a 2,550 MHz boost clock, only 45 MHz higher than Nvidia's specification.

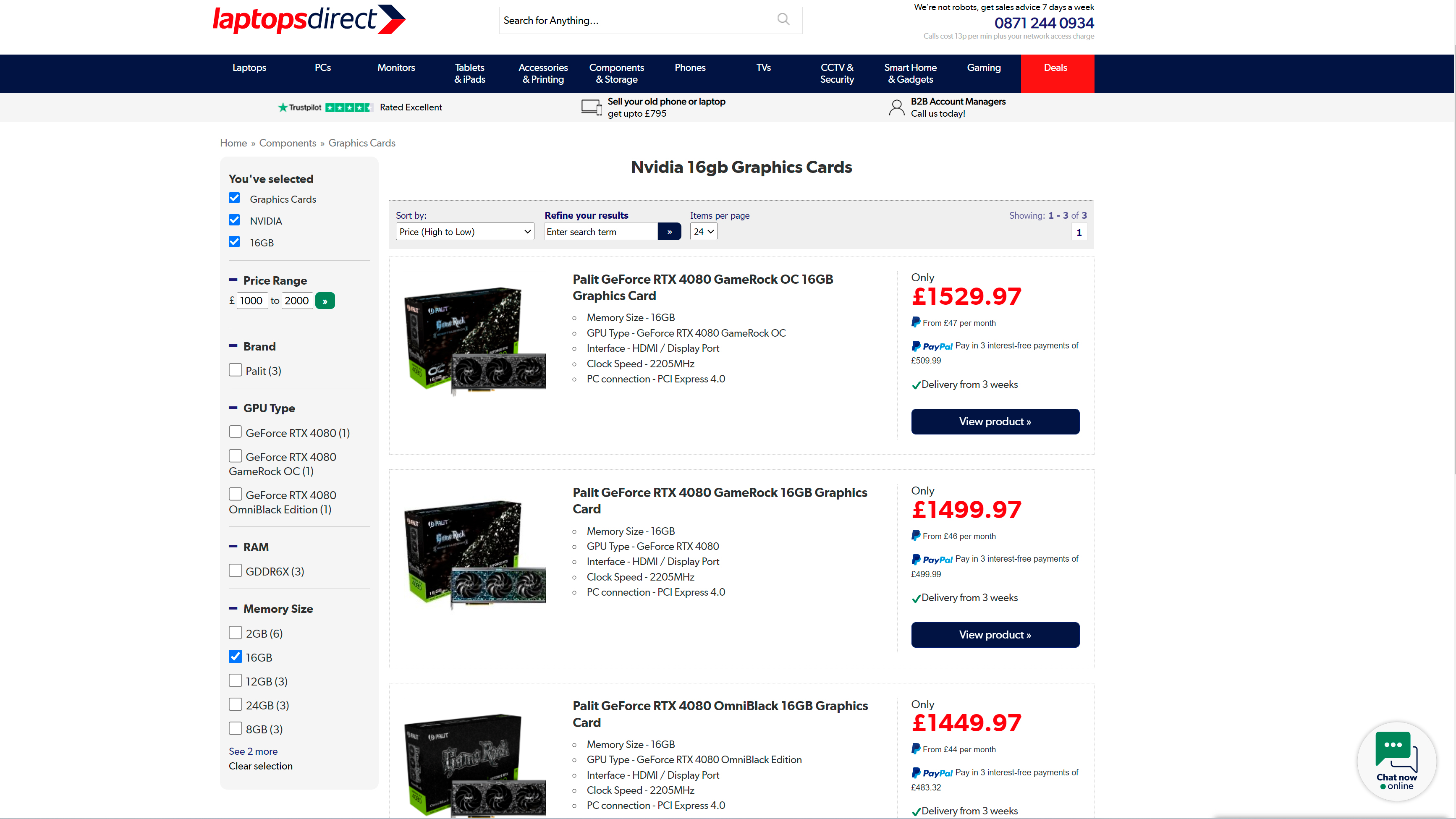

Meanwhile, a VideoCardz reader spotted three GeForce RTX 4080 listings over at LaptopsDirect, a retailer in the U.K. The Palit GeForce RTX 4080 OmniBlack and GeForce RTX 4080 GameRock models sell for £1,449.97, 14% more expensive than the GeForce RTX 4080's U.K. MSRP. The GeForce RTX 4080 GameRock OC, which is also from Palit, retails for a whopping £1,529.97, 21% over MSRP.
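For readers who want to check the markup figures, here is a minimal Python sketch of the arithmetic. It uses only the prices quoted above; the helper name `premium_pct` is made up for illustration, and the percentages are rounded to the nearest whole number.

```python
def premium_pct(price: float, msrp: float) -> float:
    """Return how far a price sits above MSRP, as a percentage of MSRP."""
    return (price - msrp) / msrp * 100


# U.K. Founders Edition price quoted in the article.
UK_MSRP = 1269.00

# LaptopsDirect listings quoted in the article (GBP).
listings = {
    "Palit GeForce RTX 4080 OmniBlack": 1449.97,
    "Palit GeForce RTX 4080 GameRock": 1449.97,
    "Palit GeForce RTX 4080 GameRock OC": 1529.97,
}

for name, price in listings.items():
    pct = premium_pct(price, UK_MSRP)
    print(f"{name}: £{price:,.2f} ({pct:.0f}% over MSRP)")
```

The same helper works for any listing: pass the listed price and the MSRP in the same currency.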

By Nvidia's numbers for 4K (3840x2160) tests, the GeForce RTX 4080 is close to 2X faster than the GeForce RTX 3080 Ti in titles such as Microsoft Flight Simulator and Warhammer 40,000: Darktide. In addition, the GeForce RTX 4080 reportedly delivers over 3X better performance than the GeForce RTX 3080 Ti in Cyberpunk 2077 (New RT Overdrive). As always, take vendor-provided benchmarks with a grain of salt.

Despite the eye-watering price tag, the GeForce RTX 4090 sold out almost instantly when the Ada flagship debuted on October 12. We expect the GeForce RTX 4080, which has a slightly better price tag, to follow suit. Although the Ethereum boom is over, consumers still have to fend off scalpers. If you're convinced that the GeForce RTX 4080 is your next graphics card, better mark November 16 on your calendar, and as Effie Trinket from the Hunger Games would say, "may the odds be ever in your favor."


Zhiye Liu is a news editor, memory reviewer, and SSD tester at Tom’s Hardware. Although he loves everything that’s hardware, he has a soft spot for CPUs, GPUs, and RAM.


PlaneInTheSky

$1,199. So that will be €1.400 + insane electric bill.

pass, I'm not a millionaire.

DavidLejdar

I suppose I am kind of lucky in that I need a new screen first anyways (instead of currently 1080p 60Hz), as it will take a bit of time until I get one, and by that time, there will hopefully be some new-gen GPU at a bit lower price for 1440p gaming.

As for the power consumption, there will certainly be an increase upgrading GTX 1050 Ti (with 75W). To off-set that a bit, I am considering to run a dual-screen setup, with one screen hooked up to the MB and using the iGPU of the CPU, while turning off the GPU when not gaming (if it takes more power i.e. for browsing than the iGPU would).

But in any case, adding some 300W per hour of gaming, if I game 40 hours a month, that's 12 kWh a month extra, which comes at around the price of one pint (and I am in a tarif for my electricity to be sourced from renewables). Of course though, if I would be playing some older MMO 50 hours a week, then that electricity consumption could get a bit excessive, especially if a weaker GPU could run pretty much the same level of graphics at a lower wattage.

sailorjeff

I will wait and see what AMD reveals in a couple of days. The more different and competing cards on the market, the more competition, and prices will start dropping. The scalpers have already dropped the price of the RTX 4090 card I was looking at by a thousand dollars since its release. By the time the holidays are over prices may be getting back down near MSRP again. My RTX 3080 will make do for me until then.

punkncat

Admittedly, I didn't NEED the 2K/144 monitor I purchased, and I didn't NEED the 3070 I upgraded to from a 1080 previously. Doing so did create what I consider a fairly balanced system for my desires now and some time well beyond now. With that said, I paid $700 for BOTH of those items which was nice.

The current price norms are absolutely the result of someone on the decision making process hitting that crack pipe too hard. I very much hope that other consumers and their wallet agree with that perception and comments made here. Our dollar (not) spent will shake them back to rehab, er I mean reason.

bit_user

-Fran- said: So this is the new normal? $1200 for the second best GPU in the market?

Nvidia must be smoking too much of something California legalized a few years ago.

Wow, I guess we really need Intel to step up, with their next gen...

bit_user

DavidLejdar said: As for the power consumption, there will certainly be an increase upgrading GTX 1050 Ti (with 75W). To off-set that a bit, I am considering to run a dual-screen setup, with one screen hooked up to the MB and using the iGPU of the CPU, while turning off the GPU when not gaming (if it takes more power i.e. for browsing than the iGPU would).

dGPUs usually don't use much power, for stuff like web browsing. Even if you have some video ads playing, it shouldn't be much at all. If your old monitor has a CFL backlight, it might actually burn more power than your GPU, during those activities.

DavidLejdar said: But in any case, adding some 300W per hour of gaming, if I game 40 hours a month, that's 12 kWh a month extra, which comes at around the price of one pint (and I am in a tarif for my electricity to be sourced from renewables). Of course though, if I would be playing some older MMO 50 hours a week, then that electricity consumption could get a bit excessive, especially if a weaker GPU could run pretty much the same level of graphics at a lower wattage.

If you're worried about power usage, you can dial back your GPU without too much impact on frame rates.

https://www.tomshardware.com/news/improving-nvidia-rtx-4090-efficiency-through-power-limiting

At 60% power, Jarred got 90% as many FPS across his 8-game benchmark.

I would also advise experimenting with limiting the PL2 setting of your CPU (or equivalent, if AMD).

hotaru251

im sorry but if nvidia's price creep is at such a steep hill anymore...imma just go to amd or do 3rd party old gpu's.

also I could see it push many gaming people to consoles (as why spend 1000$ on a gpu alone when can just buy a $400 console and enjoy games?)

BX4096

bit_user said: Nvidia must be smoking too much of something California legalized a few years ago.

The problem is not Nvidia but the suckers who keep buying these cards at such outrageous prices. I'm not even sure that it's really the rich who are buying them, and not a bunch of spoiled, fame-seeking streamers who keep maxing out their parents' credit cards in order to show off their new shiny thing.

-Fran- said: So this is the new normal? $1200 for the second best GPU in the market?

I think we both will agree: there's nothing normal about this at all.

Alvar "Miles" Udell

And knowing AMD their version of the 3080 will be priced at $1100 so...Collusion!