Anti-Aliasing Analysis, Part 2: Performance

Earlier this year, we took a detailed look at different anti-aliasing technologies in Part 1 of this series. In Part 2, we benchmark all of those modes to get an accurate picture of the performance hit each one incurs.

Coverage Sample Anti-Aliasing: 1280x1024

Coverage sampling is a method of increasing the quality of anti-aliasing without adding a lot of processing overhead. Instead of fully sampling a point in the pixel, coverage sampling simply detects whether or not a polygon is present and uses that information to weight the multisamples. You can learn more about coverage sampling in our Anti-Aliasing Analysis, Part 1 article on the Coverage Sampling Modes: Nvidia’s CSAA and AMD’s EQAA page.
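To make the weighting idea concrete, here is a minimal Python sketch of a pixel resolve. It is a simplified model we made up for illustration, not Nvidia's or AMD's actual hardware algorithm: the function name `resolve_pixel`, the surface ids, and the data layout are all assumptions. Each coverage sample only records which polygon covers it, and each fully sampled color is blended in proportion to how many coverage samples its polygon owns.

```python
from collections import Counter

def resolve_pixel(coverage_ids, surface_colors):
    """Blend stored surface colors, weighted by coverage-sample counts.

    coverage_ids: one surface id per coverage sample (which polygon covers it).
    surface_colors: dict mapping surface id -> (r, g, b) taken from a full
        color sample. Hypothetical layout, for illustration only.
    """
    counts = Counter(coverage_ids)
    # Only surfaces that also have a stored color sample can contribute.
    usable = {sid: n for sid, n in counts.items() if sid in surface_colors}
    total = sum(usable.values())
    if total == 0:
        raise ValueError("no coverage sample maps to a stored color")
    return tuple(
        sum(surface_colors[sid][c] * n for sid, n in usable.items()) / total
        for c in range(3)
    )

# 16 coverage samples: 12 hit a red polygon, 4 hit a blue one.
coverage = ["red_poly"] * 12 + ["blue_poly"] * 4
colors = {"red_poly": (1.0, 0.0, 0.0), "blue_poly": (0.0, 0.0, 1.0)}
print(resolve_pixel(coverage, colors))  # (0.75, 0.0, 0.25)
```

Only two colors are stored in this example, yet the blend has 16 levels of edge gradation, which is the reason coverage samples are cheaper than adding full multisamples.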

Nvidia's implementation is designated CSAA and AMD's is called EQAA. Since EQAA only works with the Radeon HD 6900 series, we’ll keep those results separate for now. Let’s consider the GeForce performance first:

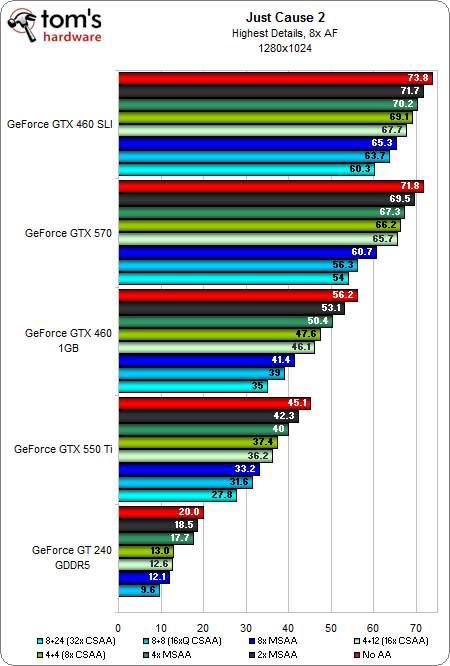

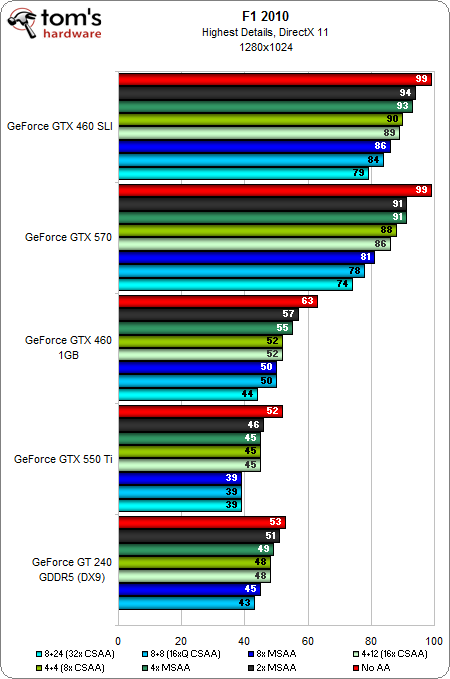

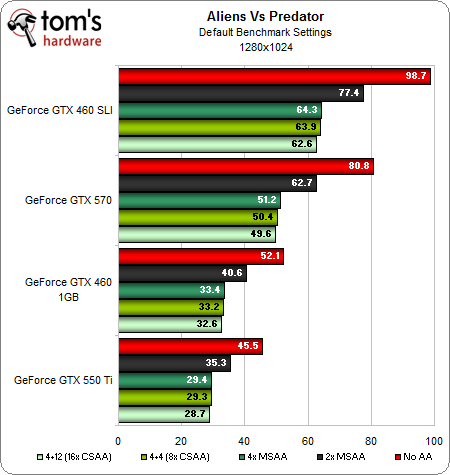

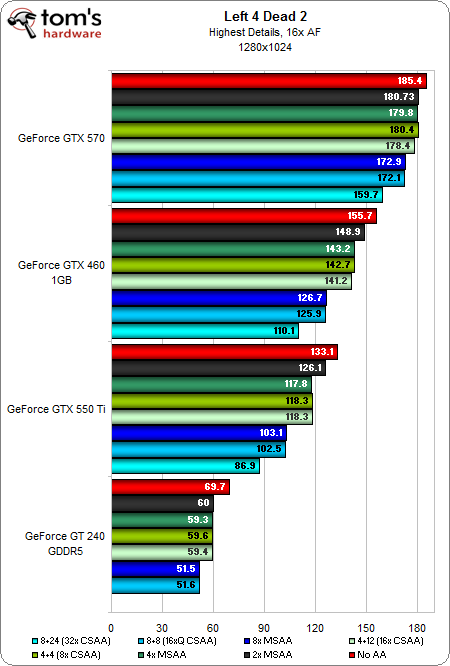

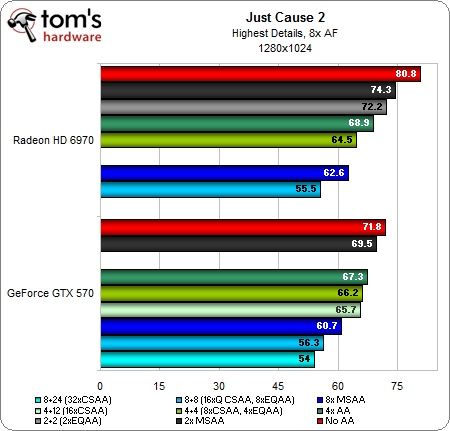

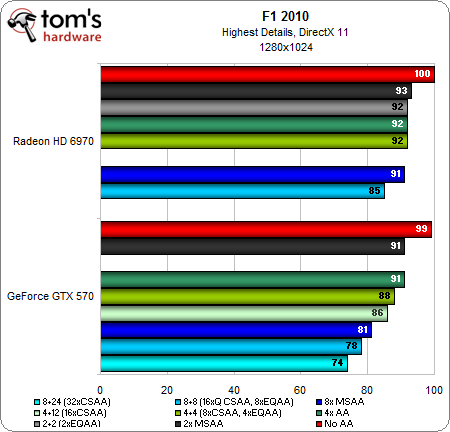

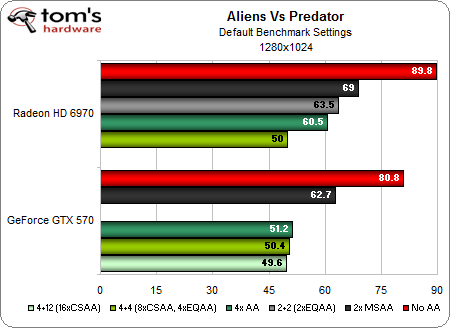

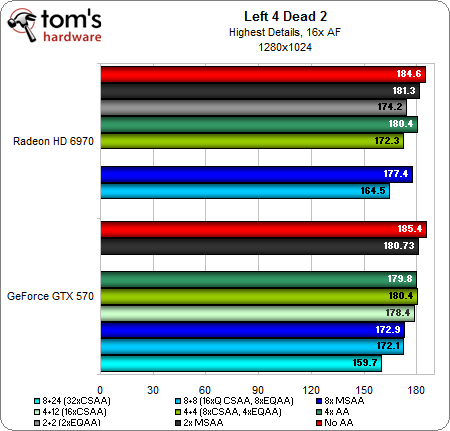

The CSAA performance falls about where we would expect it to on the GeForce cards. The 8x CSAA and 16x CSAA modes perform between 4x MSAA and 8x MSAA, although they often sit closer to the lighter 4x MSAA load. The 16xQ CSAA and 32x CSAA modes perform slightly slower than 8x MSAA.

Now, let’s consider EQAA on the Radeon HD 6970:

Note that the Radeon features a 2x MSAA + 2x coverage sample (2x EQAA) mode that the GeForce card doesn’t counter. On the other hand, Nvidia has 4x MSAA + 12x coverage sample (16x CSAA) and 8x MSAA + 24x coverage sample (32x CSAA) modes that AMD can't answer. On a per-game basis, there are certainly differences in performance. Taken together, however, CSAA and EQAA appear to have a similar impact on the hardware.
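As a quick tally of the vendor-exclusive modes named above, the short Python sketch below adds up the samples per pixel for each one. The sample counts are the ones quoted in this article; the dictionary layout and the helper name `total_samples` are our own illustrative choices.

```python
# (MSAA color samples, additional coverage samples) as quoted in the text.
modes = {
    "2x EQAA (Radeon HD 6900)": (2, 2),
    "16x CSAA (GeForce)": (4, 12),
    "32x CSAA (GeForce)": (8, 24),
}

def total_samples(color, coverage):
    """Total sample positions per pixel: color samples plus coverage-only samples."""
    return color + coverage

for name, (color, coverage) in modes.items():
    total = total_samples(color, coverage)
    print(f"{name}: {color} color + {coverage} coverage = {total} samples per pixel")
```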


Don Woligroski was a former senior hardware editor for Tom's Hardware. He has covered a wide range of PC hardware topics, including CPUs, GPUs, system building, and emerging technologies.

-

compton: This series is one of the best. The first article was most illuminating, and the second keeps it coming. Before the first article I was clueless about nVidia's AA nomenclature. Now it makes much more sense, and I applaud nVidia for not making the situation worse (though nVidia and AMD need nomenclature help in other areas still).

I'm not a huge gamer and the games I do play mostly run awesome with my 2500K + GTX460. I decided that if it's going to be a while before the next generation of GPUs drop, I'd get another 460. So that's what I did, should be here in a few days. I was worried that even at 1920x1200 I'd have problems with AA and the lack of VRAM, but it's good to see that two 460s work pretty admirably.

As an aside, I'm totally on an efficiency kick, and I don't relish the thought of needing two cards to get decent performance, but the GTX 460 is one of the most efficient cards around well over a year after its release.

-

Zeh: What happened to Morphological AA? When the 6000 series was released, Morph AA showed an impressively low demand on hardware - about 2 or 3 fps lost -, and now it's cutting frame rates in half?

Seriously, what is it?

-

This article is going to have me diving into my settings tonight. I've basically set my aged 5770 to run as poorly as possible, given I game at 1920x1200 :/ Learn something new every day ;)

-

ojas (quoting Zeh): What happened to Morphological AA? When the 6000 series was released, Morph AA showed an impressively low demand on hardware - about 2 or 3 fps lost -, and now it's cutting frame rates in half?

Was thinking the same thing... Part 1 and Part 2 are contradicting each other here, if I'm remembering Part 1 correctly.

btw there's a typo at the start of page 2:

This is because the GT 420 is not DirectX 11-capable

-

cleeve (quoting Zeh): What happened to Morphological AA? When the 6000 series was released, Morph AA showed an impressively low demand on hardware - about 2 or 3 fps lost -, and now it's cutting frame rates in half? Seriously, what is it?

On release we tested StarCraft II because that was a game that choked with MSAA on Radeons. It turns out that game is severely CPU-limited, so it wasn't the best test subject for Morphological AA.

-

MauveCloud: I don't like these animated gifs for comparing anti-aliasing modes, because 1. gifs are limited to 256 colors, 2. moving around in a game will affect how noticeable the differences in quality between different anti-aliasing modes are. (so will the physical size of the pixels, but that would probably be impractical to represent when viewed on other monitors). Would it be possible to get some animations that show antialiasing modes side-by-side (or half and half) while moving around in some of these games, instead of just fixed-position images that cycle between anti-aliasing modes?

-

cleeve (quoting MauveCloud): I don't like these animated gifs for comparing anti-aliasing modes, because 1. gifs are limited to 256 colors, 2. moving around in a game will affect how noticeable the differences in quality between different anti-aliasing modes are.

As for #2, there are no worries, as the Half-Life 2 engine in Lost Coast that we used for the majority of comparison shots doesn't move the camera during idle times. We used a save game and reloaded the scene at exactly the same position, so it's not an issue here.

As for your first concern, I was worried about that, too, at first. But I carefully scrutinize the uncompressed TIFF files before exporting them to GIF, and in these cases there's no practical difference; the GIF does an excellent job of demonstrating the result with different AA modes.

-

wolfram23: Very interesting article! Although I'm a tad confused by your nomenclature of the Radeon AA settings. There's MSAA, AMSAA, SSAA, and within those you can choose box, narrow tent, wide tent, and edge detect types (edge being the only one AFAIK to increase demand), and then on top of that you can enable Morphological. So, I'm not sure what "EQ" means as it is not at all a term used by Radeon (or at least CCC).

Also, as the first poster said, why is morphological so demanding all of a sudden? When I first tried using it, I barely saw an impact on performance and in a couple games it made everything look blurry. I just tried enabling it in Skyrim (a game that really needs better AA) and my performance plummeted - which these results confirm. What changed?

-

cleeve (quoting wolfram23): So, I'm not sure what "EQ" means as it is not at all a term used by Radeon (or at least CCC).

As it says in the article, EQAA is Radeon HD 6900-series exclusive. You probably don't have a 6900 card.

(quoting wolfram23): Also, as the first poster said, why is morphological so demanding all of a sudden?

The answer is 5 posts above this comment. :) It depends on the game; you may have been using a CPU-bottlenecked title.