GeForce GTX 295 In Quad-SLI

Test Settings

The biggest problem we’ve encountered when using high-end graphics solutions is that at the highest resolutions and settings, the cards cannot get data fast enough from the CPU and RAM to keep their graphics cores busy. In an effort to reduce this so-called “CPU bottleneck,” we overclocked our Core i7 processor to 4.00 GHz at a 200 MHz base clock.
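As a rough illustration of the arithmetic behind that overclock, the core clock is the base clock multiplied by the CPU multiplier. The sketch below assumes a multiplier of 20, which is inferred from 4000 / 200 and is not stated in the article; the function and constant names are hypothetical.

```python
# Sketch: core clock = base clock (BCLK) x CPU multiplier.
# The multiplier of 20 is an assumption inferred from 4000 / 200;
# the article states only the base clock and the final frequency.

def core_clock_mhz(bclk_mhz: float, multiplier: int) -> float:
    """Return the core clock in MHz for a given base clock and multiplier."""
    return bclk_mhz * multiplier

ASSUMED_MULTIPLIER = 20  # hypothetical, not stated in the article

overclocked = core_clock_mhz(200.0, ASSUMED_MULTIPLIER)
print(f"{overclocked / 1000:.2f} GHz")
```

Under that assumption, the whole overclock comes from raising the base clock rather than the multiplier.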

| Test System Configuration | |

|---|---|

| CPU | Intel Core i7 920 (2.66 GHz, 8.0 MB Cache) |

| Overclocked to 4.00 GHz (BCLK 200) | |

| CPU Cooler | Swiftech Liquid Cooling: Apogee GTZ water block, |

| MCP-655b pump, and 3x120mm radiator | |

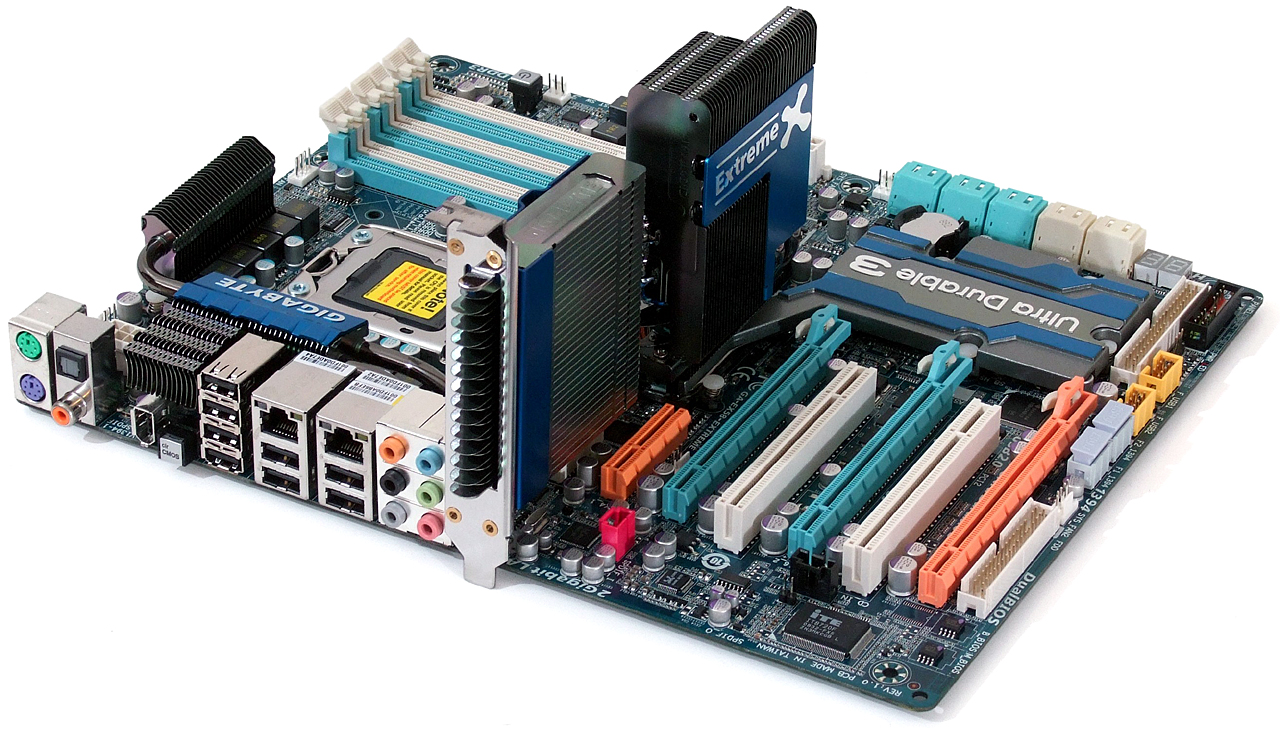

| Motherboard | Gigabyte GA-EX58-Extreme |

| Intel X58/ICH10R Chipset, LGA-1366 | |

| RAM | 6.0 GB Crucial DDR3-1600 Triple-Channel Kit |

| Overclocked to CAS 8-8-8-16 | |

| GTX 295 Graphics | 2x GeForce GTX 295 |

| 2x 576 MHz GPU, GDDR3-1998 | |

| GTX 280 Graphics | 3x EVGA GeForce GTX 280 PN: 01G-P3-1280-AR |

| 602 MHz GPU, GDDR3-2214 | |

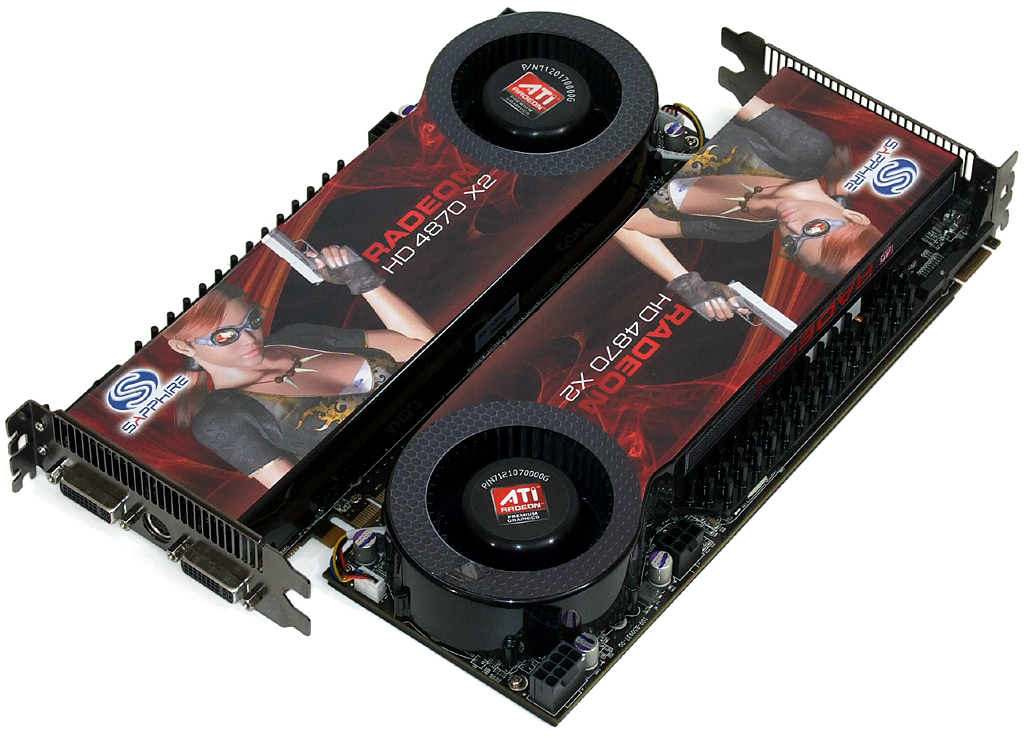

| Radeon HD 4870 X2 Graphics | 2x Sapphire HD 4870 X2 PN: 100251SR |

| 2x 750 MHz GPU, GDDR5-3600 | |

| Hard Drives | Seagate Barracuda ST3500641AS |

| 0.5 TB, 7,200 RPM, 16 MB Cache | |

| Sound | Integrated HD Audio |

| Network | Integrated Gigabit Networking |

| Power | Cooler Master RS-850-EMBA |

| ATX12V v2.2. EPS12V, 850W, 64A combined +12V | |

| Optical | LG GGC-H20LK 6X Blu-ray/HD DVD-ROM, 16X DVD±R |

| Software | |

| OS | Microsoft Windows Vista Ultimate x64 SP1 |

| Graphics | NVidia Forceware 181.20 Beta |

| ATI 8.561.3.0000 Beta | |

| Chipset | Intel INF 8.3.0.1016 |

Cooling an overclocked Core i7 CPU isn’t a task for lightweights, so we added Swiftech’s latest Apogee GTZ water block to the liquid-cooling kit we normally use for motherboard testing.

Feeding data quickly to the CPU are three 2.0 GB DDR3-1600 modules from Crucial. These particular samples are part of an upcoming High-End Triple-Channel shootout; watch for it in a few days.
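To put a number on "feeding data quickly," here is a minimal sketch of the theoretical peak bandwidth of a triple-channel DDR3-1600 setup. It assumes the standard 64-bit (8-byte) DDR3 channel width, which the article does not state, and it gives an upper bound rather than a measured figure; the function name is hypothetical.

```python
# Sketch: theoretical peak memory bandwidth.
# Assumes each DDR3 channel is 64 bits (8 bytes) wide; this width is an
# assumption here, not a figure taken from the article.

def peak_bandwidth_gbs(transfers_mts: float, channels: int, bus_bytes: int = 8) -> float:
    """Return peak bandwidth in GB/s (1 GB = 10^9 bytes)."""
    return transfers_mts * bus_bytes * channels / 1000.0

# DDR3-1600 means 1600 million transfers per second per channel.
triple_channel = peak_bandwidth_gbs(1600, channels=3)
print(f"{triple_channel:.1f} GB/s")
```

Real-world throughput will be lower, since this ignores latency and controller overhead.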

Including 3-way SLI tests required a motherboard with proper slot spacing. Gigabyte’s EX58-Extreme worked well on our open platform, though the third card does extend below the lowest slot on standard cases.

Our second card came from MSI; its N295GTX-M201792 arrived overclocked to a 655 MHz GPU clock with GDDR3-2100 memory. We had to underclock this card to the 576 MHz/GDDR3-2000 reference speeds to make it cooperate with our reference card in Quad SLI. MSI says it could be releasing an overclocked board soon, which we'll be eagerly awaiting.
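For a sense of how much headroom was given up by matching the reference card, the factory overclock can be expressed as a percentage over reference speeds. This is a small sketch using only the clock figures quoted above; the helper name is hypothetical.

```python
# Sketch: size of the MSI card's factory overclock relative to reference,
# using the GPU and memory figures quoted in the paragraph above.

def overclock_percent(actual: float, reference: float) -> float:
    """Return how far `actual` exceeds `reference`, in percent."""
    return (actual / reference - 1.0) * 100.0

gpu_oc = overclock_percent(655, 576)    # GPU clock in MHz
mem_oc = overclock_percent(2100, 2000)  # GDDR3 data rate

print(f"GPU: {gpu_oc:.1f}%  memory: {mem_oc:.1f}%")
```

That works out to roughly 13.7% on the GPU clock and 5% on the memory data rate.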

Our GTX 295 graphics cards ran at reference clock speeds, so it made sense to use reference-speed HD 4870 X2s for an apples-to-apples comparison. Our cards came from Sapphire.

Finally, the GTX 280 sets the standard for judging GTX 295 performance improvements. We tested three reference-speed cards in single, SLI, and 3-way SLI.

| Benchmark Configuration | |
|---|---|
| Call of Duty: World at War | Patch 1.1, FRAPS/saved game; Highest Quality Settings, No AA / No AF, vsync off; Highest Quality Settings, 4x AA / Max AF, vsync off |
| Crysis | Patch 1.2.1, DirectX 10, 64-bit executable, benchmark tool; Very High Quality Settings, No AA / No AF (Forced); Very High Quality Settings, 4x AA / 8x AF (Forced) |
| Far Cry 2 | DirectX 10, Steam Version, in-game benchmark; Very High Quality Settings, No AA / No AF (Forced); Very High Quality Settings, 4x AA / 8x AF (Forced) |
| Left 4 Dead | Very High Details, No AA / No AF, vsync off; Very High Details, 4x AA / 8x AF, vsync off |
| World in Conflict | Patch 1009, DirectX 10, timedemo; Very High Quality Settings, No AA / No AF, vsync off; Very High Quality Settings, 4x AA / 16x AF, vsync off |
| 3DMark Vantage | Version 1.02: 3DMARK, GPU, CPU scores; Performance, High, Extreme Presets |

JeanLuc I’m looking at page 9 on the power usage charts. I have to say the GTX295 is very impressive; its power consumption isn’t that much greater than the GTX280. And what’s very impressive is it uses 40% less power in SLI than the HD4870X2 does in Crossfire, meaning if I already owned a pretty decent PSU, say around 700-800 watts, I wouldn’t have to worry about getting it replaced if I were planning on SLIing the GTX295.

I would have liked to have seen some temperatures in there somewhere as well. With top end cards becoming hotter and hotter (at least with ATI) I wonder if cheaper cases are able to cope with the temperatures these components generate.

BTW any chance of doing some sextuple SLI GTX295 on the old Intel Skulltrail?

-

Crashman, quoting JeanLuc: "BTW any chance of doing some sextuple SLI GTX295 on the old Intel Skulltrail?"

Not a chance: The GTX 295 only has one SLI bridge connector. NVIDIA designs its products intentionally to only support a maximum of four graphics cores, and in doing so eliminates the need to make its drivers support more. -

neiroatopelcc I'd like to see a board that takes up 3 slots, and uses both the 1st and the 3rd slot's PCIe connectors to power 4 GPUs on one board. Perhaps with the second PCIe being optional, so in case of not fitting the card at all, one could fit it with reduced bandwidth. That way they'd have a basis to make some proper cooling. Perhaps a small H2O system, or a Peltier coupled with some proper fan and heatsink.

ie. a big 3x3x9" box resting on the expansion slots, dumping warm air outside. -

jameskangster "...Radeon HD 4870 X2 knocked the GeForce GTX 280 from its performance thrown." --> "throne"? or am I just misunderstanding the sentence? -

kschoche So the conclusion should read:

Congrats on quad-SLI, though for anything that doesn't already get 100+ FPS with a single GX2, you're welcome to throw in a second and get at most a 10-20% increase, unless of course you want to get an increase in a game that doesn't already have 100 FPS (Crysis), in which case you're screwed - don't even bother with it. -

duzcizgi Why test with AA and AF turned on with such high end cards? Anyone who pays +$400 * X wouldn't be playing any game with AA AF turned off or with low res. display. (If I'd pay $800 for graphics cards, I'd have of course had a display with no less than 1920x1200 resolution. Not even 1680x1050)

And I'm a little disappointed with the scaling of all solutions. They still don't scale well. -

hyteck9 The performance per watt chart is exactly what I wanted to see (it would be even better with some temps listed though). Thanks THG, this will help things along nicely. -

duzcizgi, don't forget about the real hardcore players (those who play tournaments for example), who prefer to play with the lower graphics settings and ensure > 100 FPS.