System Builder Marathon, Q2 2014: A Balanced High-End Build

Power, Heat, And Efficiency

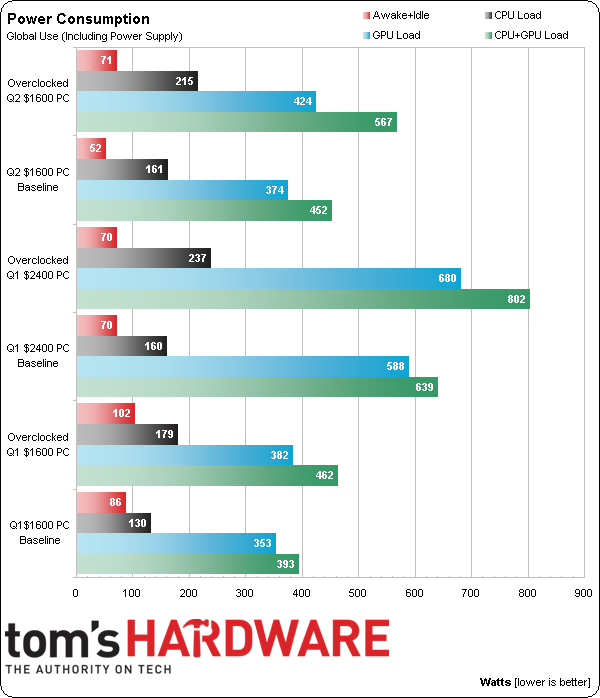

I configure my builds to maximize low-load energy savings. That doesn't appear to be Don's approach. Or maybe his motherboard didn’t idle down properly even with those settings enabled. Either way, the new $1600 build appears to draw far more energy under load, and even the 80 PLUS Gold-rated power supply in last quarter's $2400 machine couldn’t deliver power savings with GeForce GTX 780s rendering away.

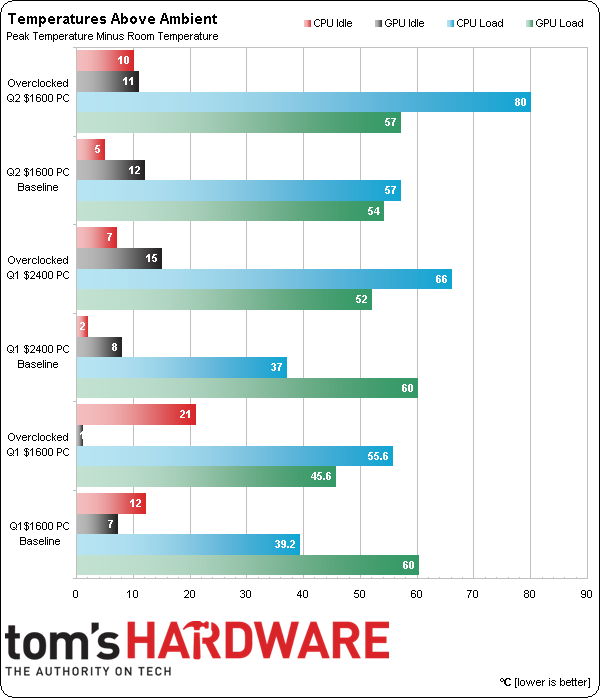

And now for the shocker: my new $1600 configuration doesn't run cool enough. But should we blame its cooler?

The above chart doesn’t tell the whole heat story, since my measurements were taken with both the GPU and CPU under duress. First, a CPU-only load would have dropped CPU temperature by up to eight degrees. Second, Haswell-based CPUs predating Devil's Canyon are extremely difficult to cool at voltage levels approaching 1.30 V. Indeed, Thermaltake's cooler produced temperatures similar to those of the old MUX 120 that I use in motherboard round-ups to push the same Core i7-4770K up to 4.7 GHz. This truly was a bum processor for overclocking.

I could have spent twice as much money on CPU cooling to save a few degrees, and the build might then have passed on a pass/fail basis. But if I really wanted to be sure the platform would stay under its thermal threshold, I would have also needed more case cooling or an externally-venting graphics card cooler (you know, the noisy blower-style reference cooler from AMD that everyone hates). To that end, perhaps I could have used a GeForce GTX 780 rather than a Radeon R9 290X, since it appears that only Nvidia knows how to make a well-behaved blower-style cooler.

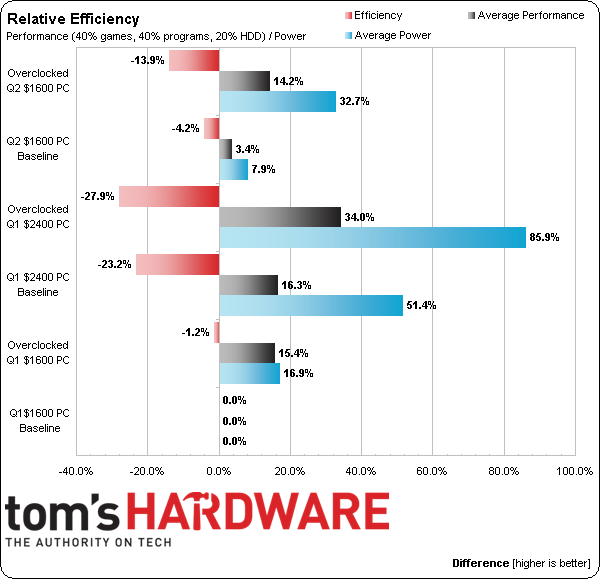

This quarter's $1600 build performs 3.4% better than Don's $1600 machine from last quarter at its stock settings, but falls a little more than 1% behind when both of us overclock. Again, it's a bummer that my CPU wouldn't stabilize above 4.2 GHz.

It’s also less efficient, and I can probably point to the graphics card’s high power consumption for most of that. The new CPU did need a bunch of extra voltage to run at its meager overclock though, which further hurt the new machine’s overclocked efficiency.
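One way to reason about the efficiency comparison is to divide relative performance by relative power draw against a baseline machine. The following is only a minimal sketch under that assumption, not necessarily the formula behind the chart above. The function name and the power ratio are hypothetical; only the 3.4% stock performance lead comes from the text.

```python
def relative_efficiency(perf_ratio: float, power_ratio: float) -> float:
    """Return efficiency relative to a baseline as a fraction (0.0 means equal).

    perf_ratio:  performance of the new build divided by the baseline's.
    power_ratio: average power draw of the new build divided by the baseline's.
    """
    if power_ratio <= 0:
        raise ValueError("power_ratio must be positive")
    return perf_ratio / power_ratio - 1.0


# 1.034 reflects the 3.4% stock performance lead quoted in the text.
STOCK_PERF_RATIO = 1.034

# HYPOTHETICAL placeholder: the real power figures live in the charts and are
# not reproduced here. 1.10 means "assume 10% more average power draw".
ASSUMED_POWER_RATIO = 1.10

stock_efficiency = relative_efficiency(STOCK_PERF_RATIO, ASSUMED_POWER_RATIO)
print(f"Relative efficiency vs. baseline: {stock_efficiency:+.1%}")
```

With these placeholder inputs the result comes out negative: a small performance lead does not offset a larger increase in power draw, which is the pattern described above.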


mrmike_49 Only 8GB RAM for a high end PC? Just plain too much money spent on graphics card. Also, too much money spent on "yuppie" power supply/case

de5_Roy thermaltake nic L32 doesn't seem well suited for the cpu for stock operations. at stock settings, the cpu's load temp is 57c over ambient according to the temp. chart. the q1 $1600 pc has a hyper 212 evo and it ran the stock i7 4770k under 40c over ambient. from the looks, the tt nic cooler seemed a better performer than the hyper 212 evo.

was multicore enhancement enabled for both the q1 $1600(asrock z87 pro3) and this quarter's high end pc(asus z97-a)? did it affect the heat output? asus keeps m.c.e. enabled by default. i can't see any other factors atm.

all 3 builds look very well-performing this quarter. looking forward to the perf-value analysis.

Taintedskittles Reading the reviews on newegg about that PowerColor 290x you chose was hilarious. So whoever wins this thing can look forward to many many rma's in the future. Apparently its plagued with artifacts, bad fans, bios issues, & performance degradation. I would have chosen another brand at the very least.

pauldh To be fair though, look at the dates of those negative Newegg reviews. All but one of the complaints appeared after this system was ordered mid-May. Prior available feedback WAS almost all positive. And a manufacturer rep jumped in to resolve that one.

Crashman

13586826 said: Only 8GB RAM for a high end PC? Just plain too much money spent on graphics card. Also, too much money spent on "yuppie" power supply/case

A yuppie power supply...OK...

13587011 said: kidding me, hdd and windows 8, pls, read up on hardware toms hardware.....and software.

The last time I checked the "Samsung 840 EVO MZ-7TE250BW" wasn't an HDD, and nobody wanted us to run OS/2 on a modern gaming system. Please read the charts, wabba

crisan_tiberiu Make this "competition" global please... :( You have "Tom's Hardware" in every major region in the world... :) Also FedEx and DHL ship everywhere in the world :) make all readers happy :) Our traffic is good for your site, but we never get something special :((

rush21hit If I had specs like this, I don't want it to be encased. I'd stick it to my wall even if it means I had to figure out how to do it.

Realist9 I think the author hit his mark for the intent of the build/article based on the budget limit and provides a good starting point for us. However, if I was actually building and buying for myself, I would make some changes to add headroom and compatibility.

I would go with 16 GB of memory for $85 more, since that’s only $85/$1600=5% more cost. I’d also go ahead and get the Asus 780 for $520. (Side note: I disagree that most would go AMD in a 780 vs 290x, but I know better than to open that can of worms). SLI was mentioned but not used, and I also would not get SLI unless I KNEW it worked with the game I was most interested in. The posts on various forums about SLI causing problems in most games, along with SLI “issues” dating back to 3dFX Voodoo2 cards, keeps me away from SLI.

I also would stay away from “generally stable, but usually not stable in the games I want to play most” (not quoting the author here) overclocking of the system/video card. It’s nice to see it in the charts, but I read about way too many problems in games caused by overclocking for me to rely on it to get my ‘value’.

Lastly, I think the pendulum has swung too far towards “value” for the high end build. I suggest tweaking that a little for future high end builds (e.g., 780Ti, 16 GB memory, 500GB SSD, but continue to stay away from $1000 CPU, $1200 SLI, etc).