Micron 9100 Max NVMe 2.4TB SSD Review

128KB Sequential Read And Write

To read more about our test methodology, visit How We Test Enterprise SSDs, which explains how to interpret our charts. Accurate and repeatable testing starts with a solid preconditioning methodology, which is covered on page three. We cover 128KB sequential performance measurements on page five, latency metrics on page seven, and QoS testing and the QoS domino effect on page nine.

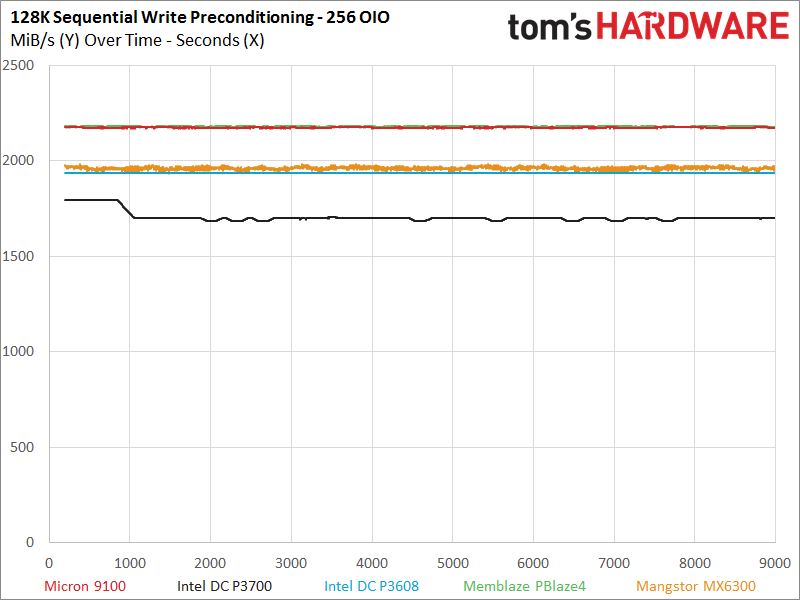

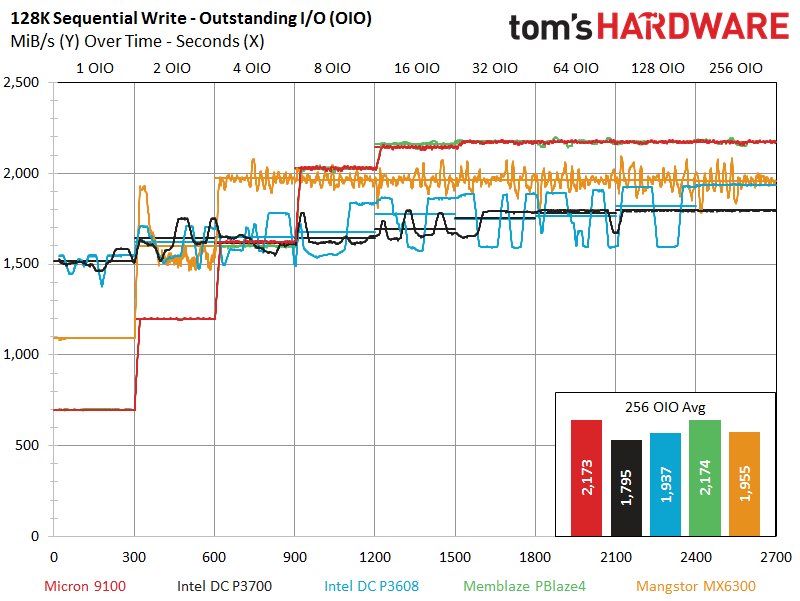

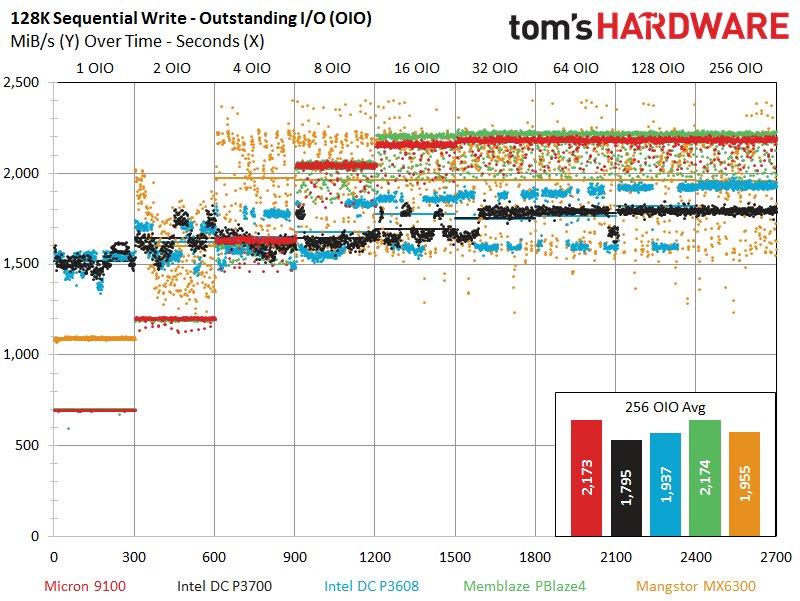

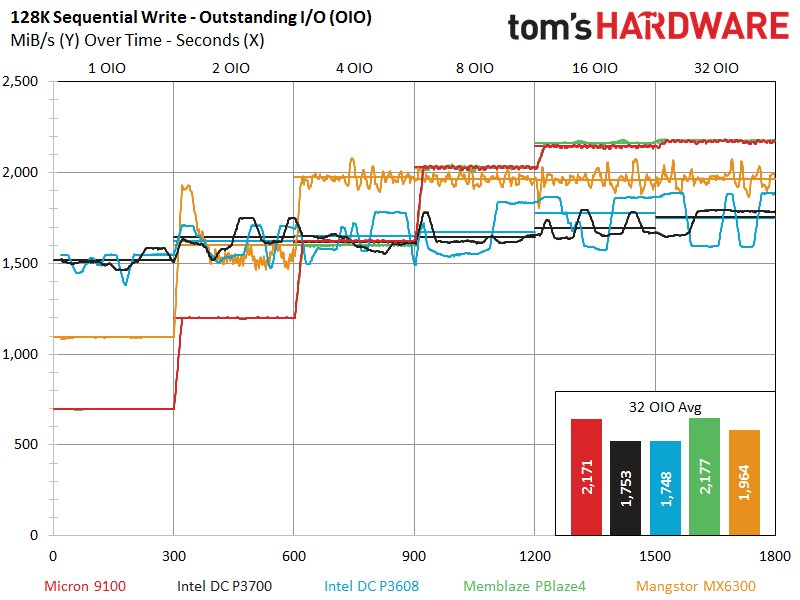

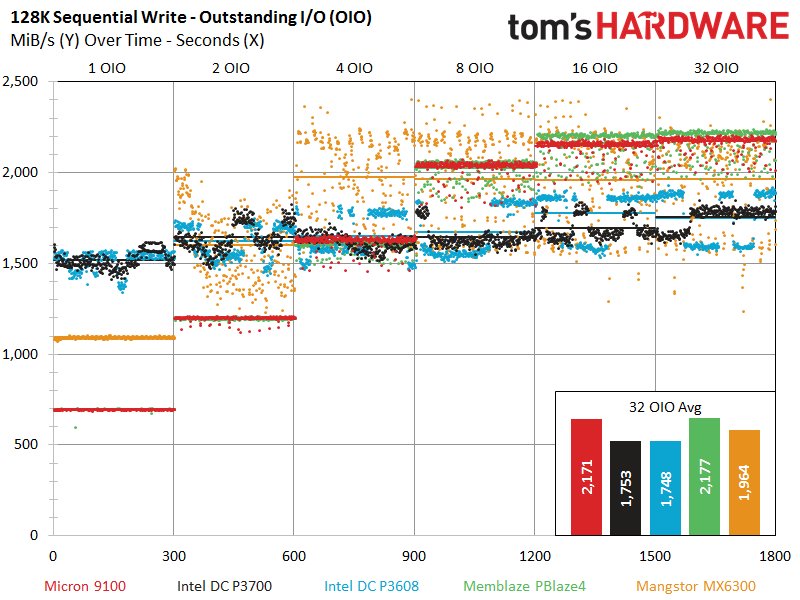

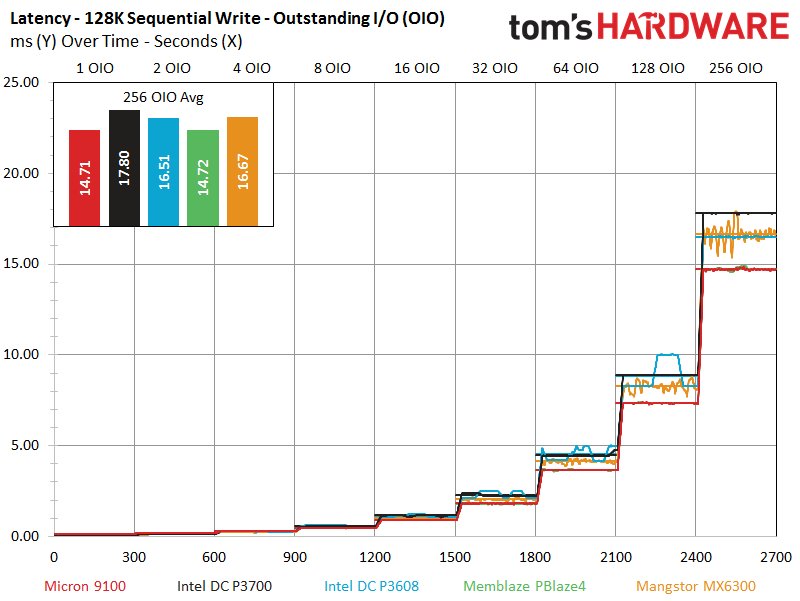

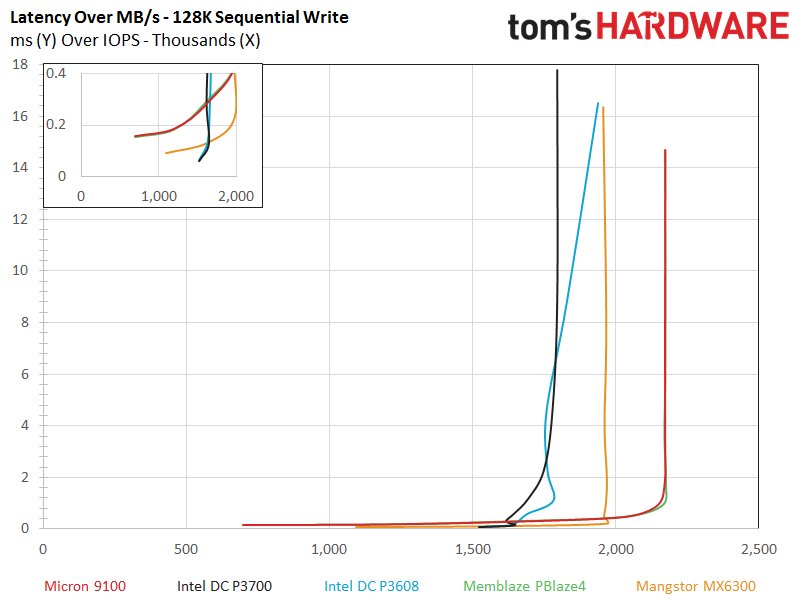

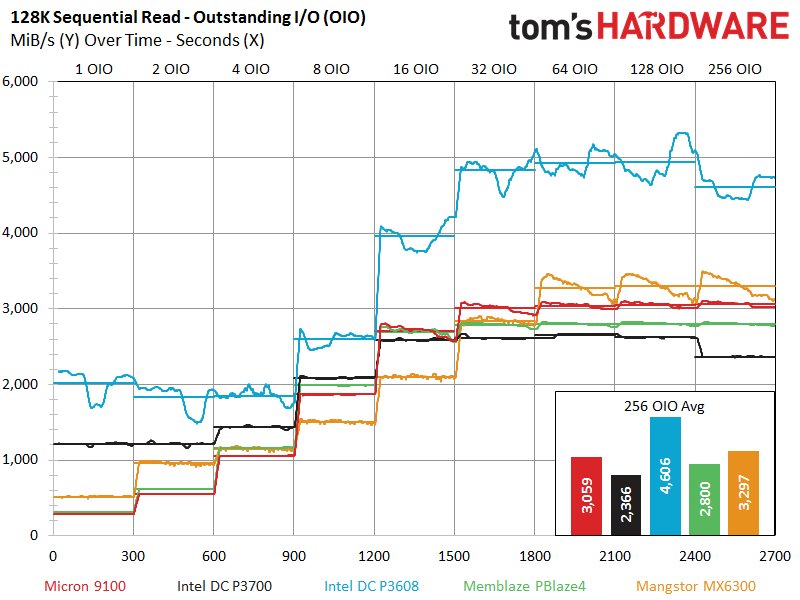

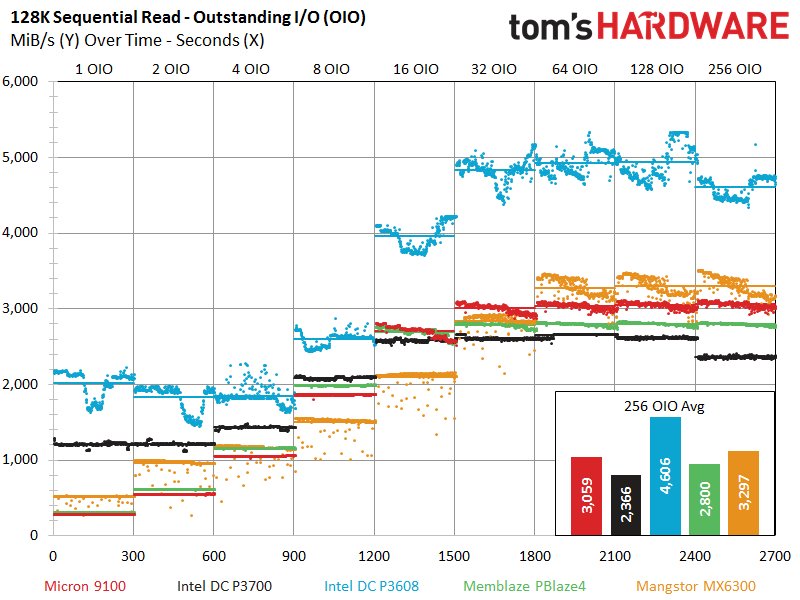

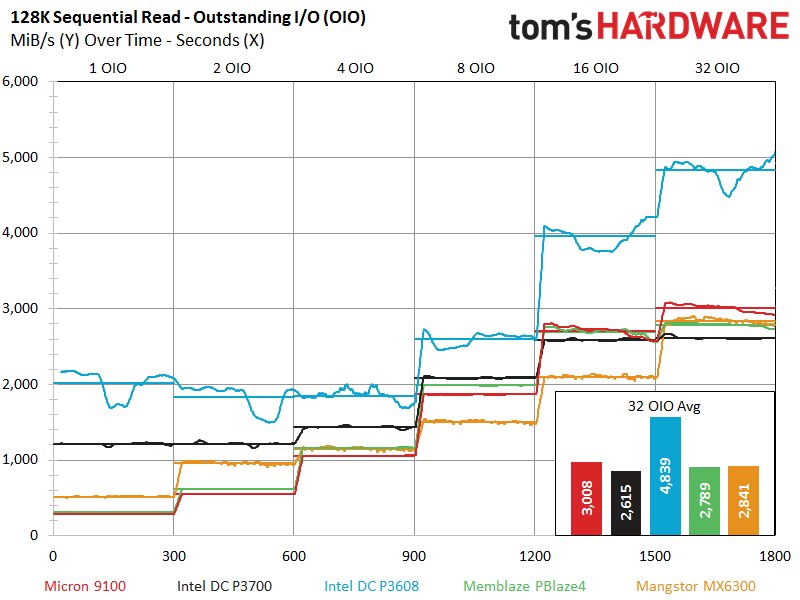

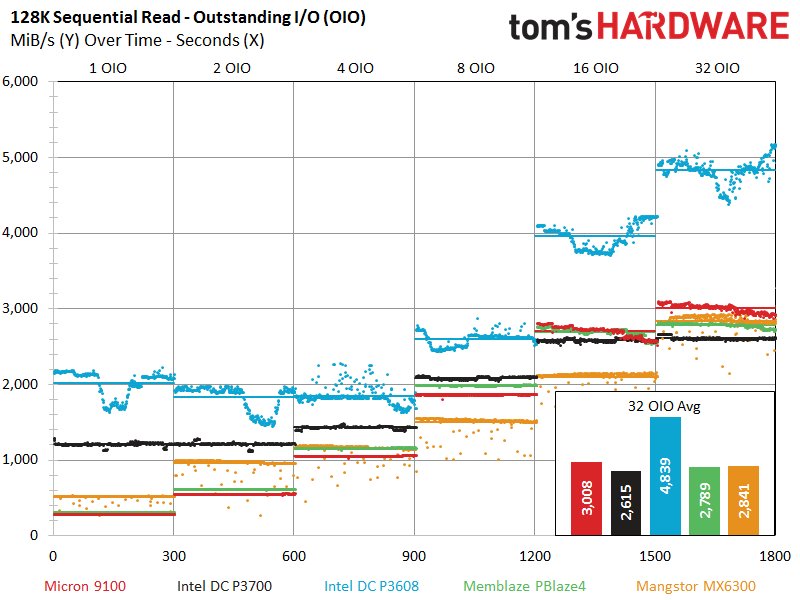

The Micron 9100 performs well with heavy write workloads, and this tendency continues as we enter the sequential portions of the test suite. The Micron 9100 exhibits the same performance profile as the PBlaze4, to the point that its results overlay the PBlaze4's for the majority of the test. Unfortunately, both of the Microsemi-powered SSDs require a bit of parallelism to get up to speed, so they fall significantly behind the other entrants at 1 and 2 outstanding I/Os (OIO). The Intel SSDs once again dominate the tests at 1 OIO, and the Mangstor MX6300 also joins in at 2 OIO.

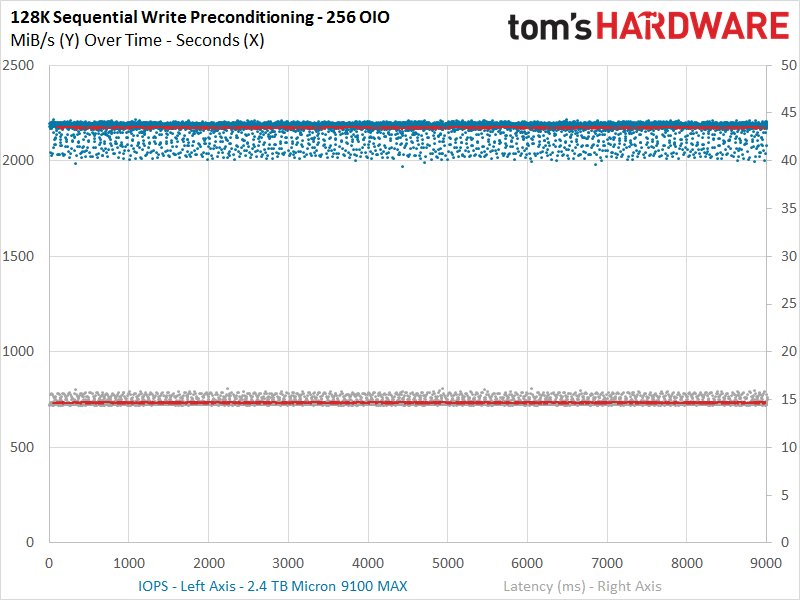

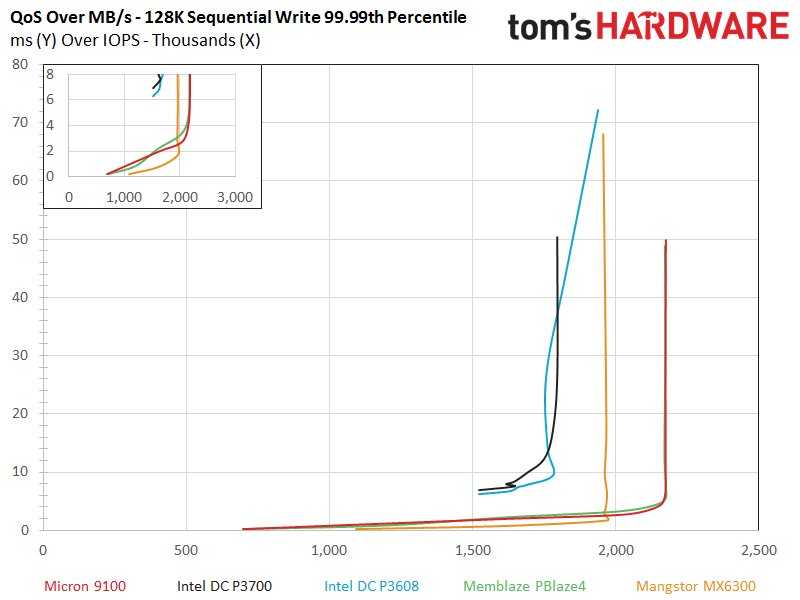

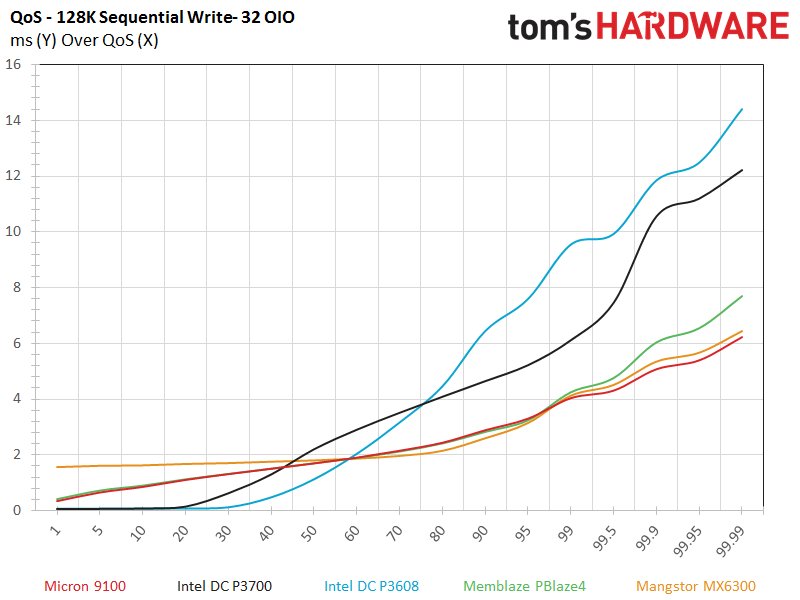

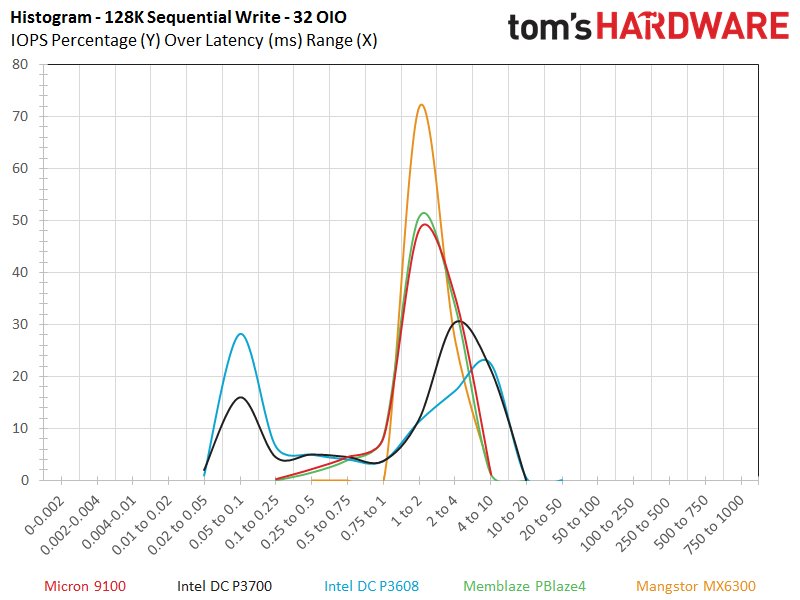

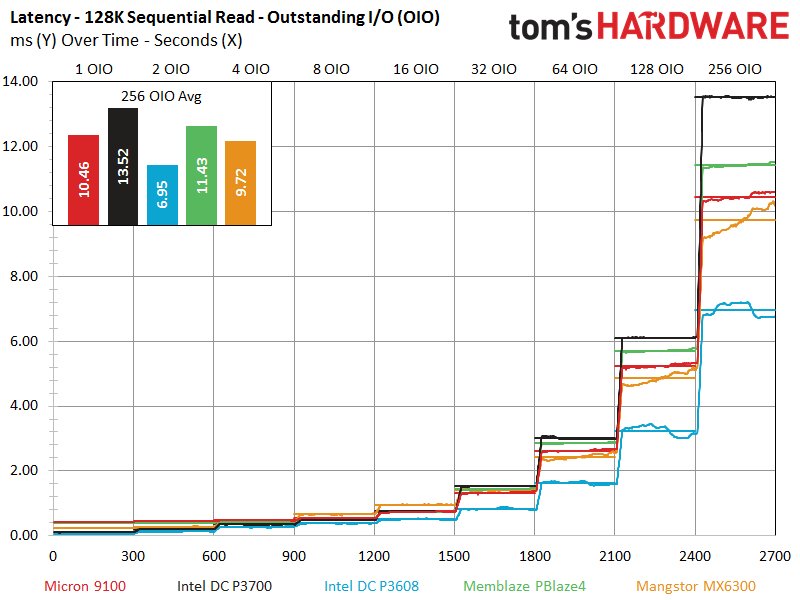

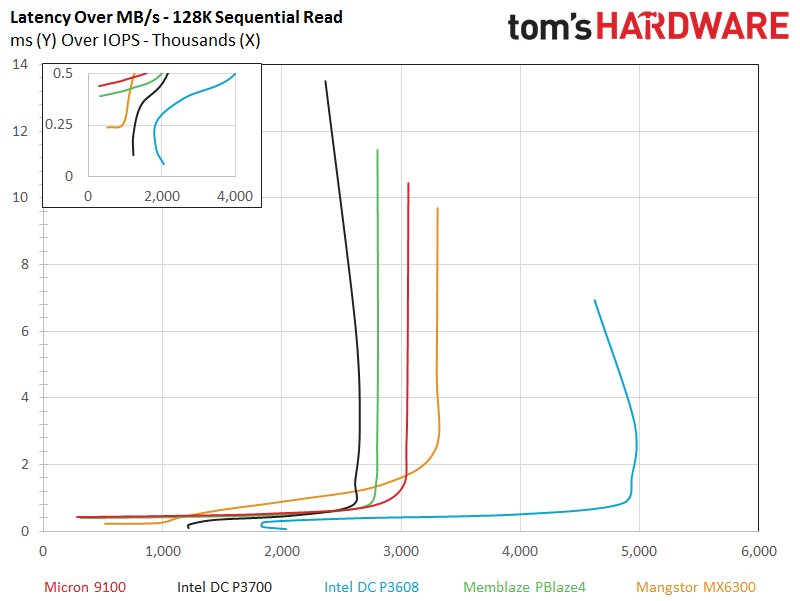

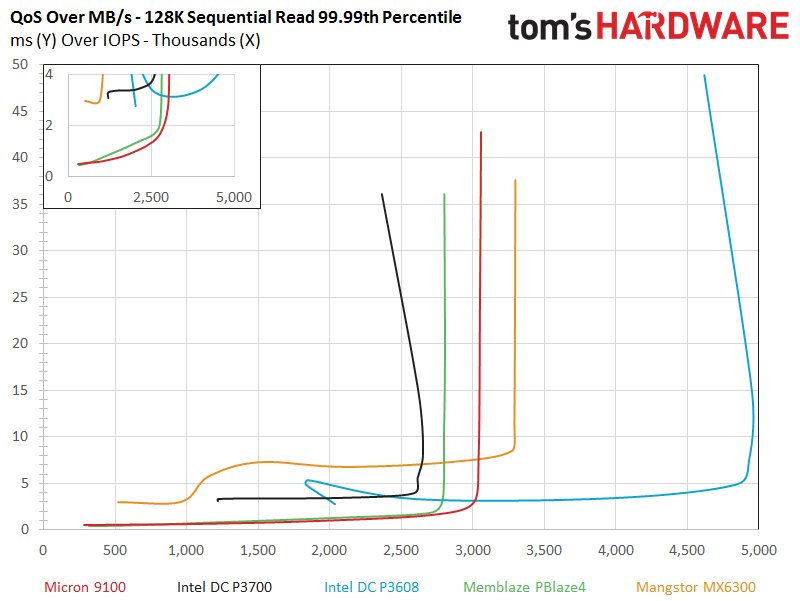

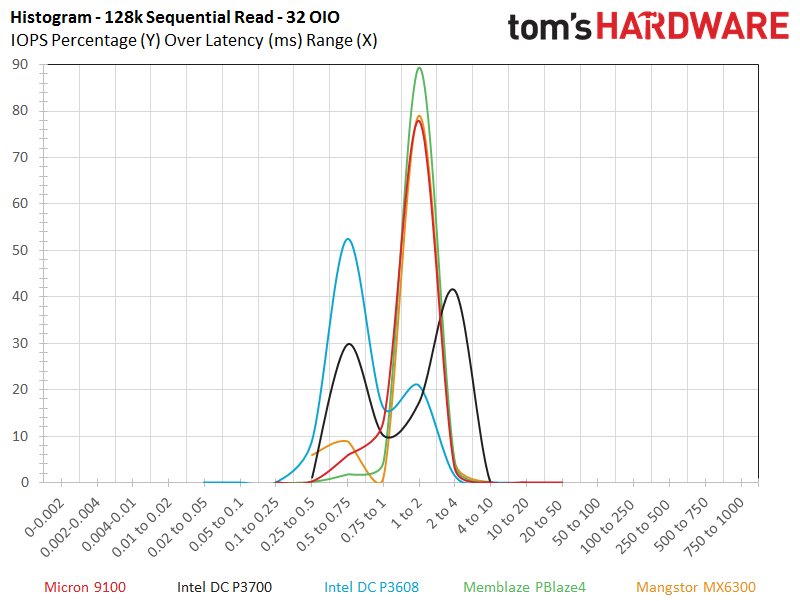

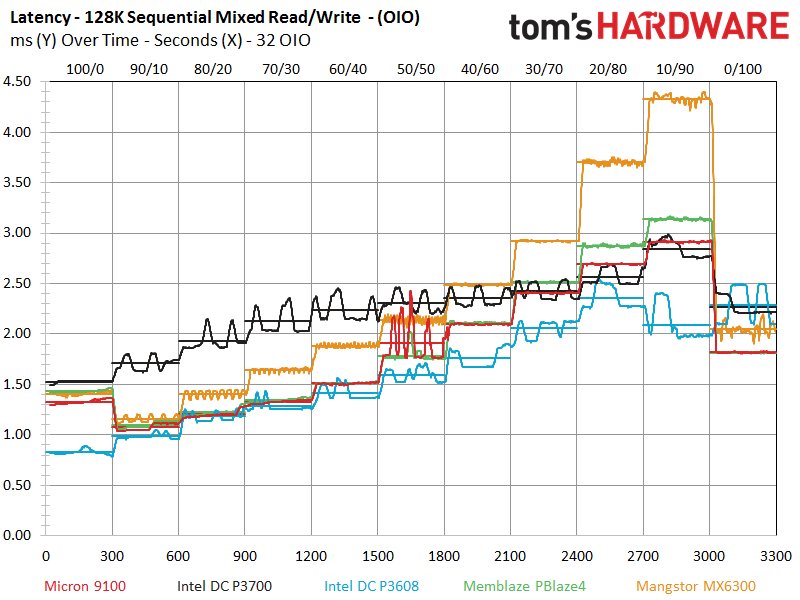

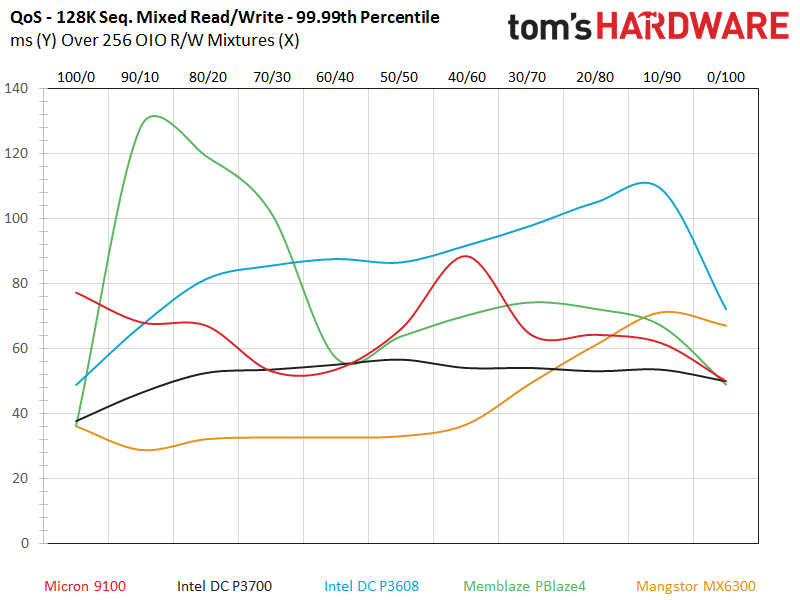

The DC P3608 offers a bit of a scattered performance profile during the workload, but nothing on the order of the Mangstor MX6300, which experiences severe turbulence. The 9100 and PBlaze4 offer an excellent tight performance distribution during the test, which carries over to the QoS measurements.
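To illustrate why a tight performance distribution matters for QoS, here is a minimal Python sketch. It is not our test tooling: the `percentile` helper, the nearest-rank method, and both latency sets are hypothetical, chosen only to show that two drives with the same average latency can differ sharply once you look at the tail.

```python
import math
from statistics import mean


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least
    pct percent of all samples at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical latency samples in microseconds (not measured data).
# Both sets average 100 us, but one hides a small number of slow I/Os.
tight_latencies = [100] * 1000
spiky_latencies = [90] * 990 + [1090] * 10

for label, data in (("tight", tight_latencies), ("spiky", spiky_latencies)):
    print(
        f"{label}: mean={mean(data):.0f} us, "
        f"p99={percentile(data, 99)} us, "
        f"p99.9={percentile(data, 99.9)} us"
    )
```

In this made-up data the averages match, yet the high percentiles expose the outliers in the scattered set, which is the kind of behavior a QoS chart is meant to surface.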

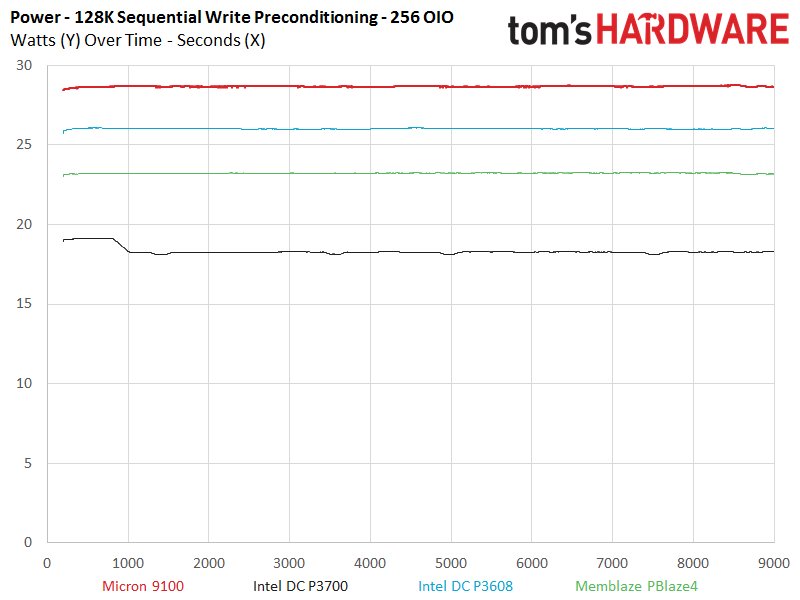

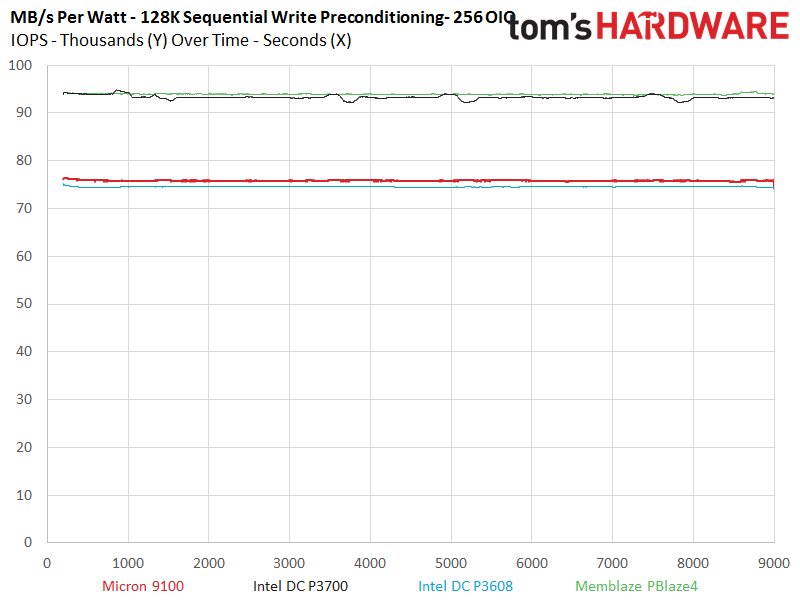

Sequential write tests tend to tease out the highest power consumption, and we observe the 9100 peaking at 28W, while the PBlaze4 (which offers similar performance) only requires 23W. The Intel DC P3700 is also miserly during the test, which ends up in a near tie in the MB/s-per-Watt metrics.
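As a rough illustration of the MB/s-per-Watt arithmetic, the sketch below divides throughput by power draw. The 28W and 23W figures are the peak values quoted above; the throughput value is a hypothetical placeholder (not a measured result), and the `mb_per_watt` helper is our own naming. The charts may be based on different power readings than these peaks, so treat the output as an example of the calculation only.

```python
def mb_per_watt(throughput_mb_s: float, power_w: float) -> float:
    """Efficiency as throughput (MB/s) divided by power draw (watts)."""
    if power_w <= 0:
        raise ValueError("power_w must be positive")
    return throughput_mb_s / power_w


# Hypothetical placeholder: assume both drives sustain the same
# sequential write throughput, since the text says they perform similarly.
HYPOTHETICAL_WRITE_MB_S = 2000.0

# Peak power figures quoted in the review text.
peak_power_w = {"Micron 9100": 28.0, "PBlaze4": 23.0}

efficiency = {
    name: mb_per_watt(HYPOTHETICAL_WRITE_MB_S, watts)
    for name, watts in peak_power_w.items()
}

for name, value in efficiency.items():
    print(f"{name}: {value:.1f} MB/s per watt")
```

At equal throughput, the drive that draws less power scores higher on this metric, which is why similar performance at 23W versus 28W translates into an efficiency advantage.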

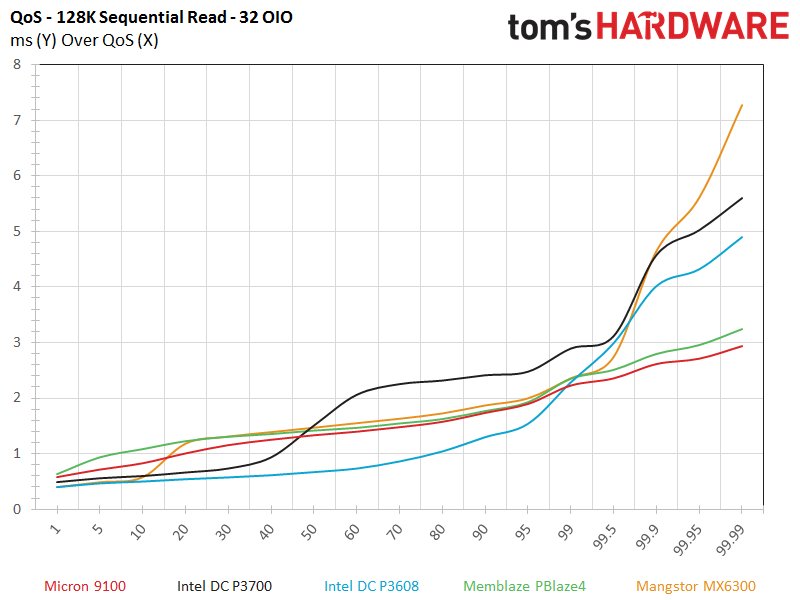

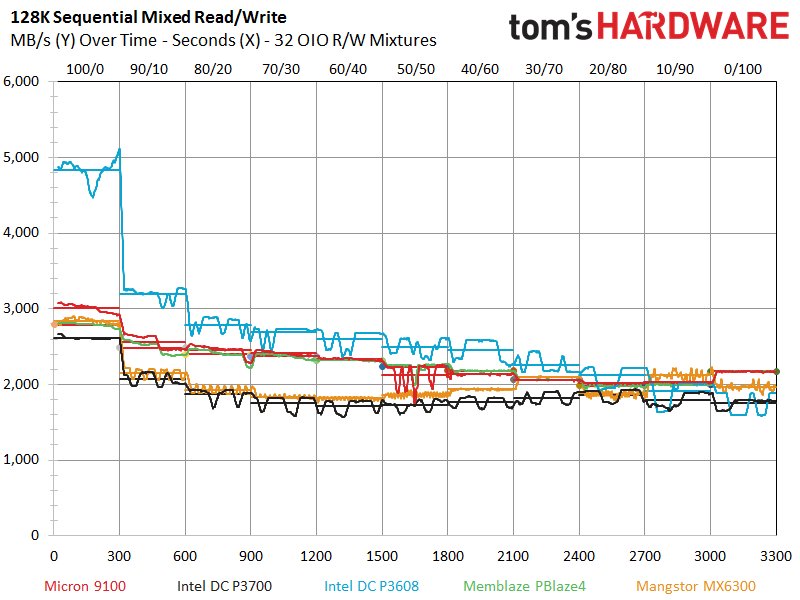

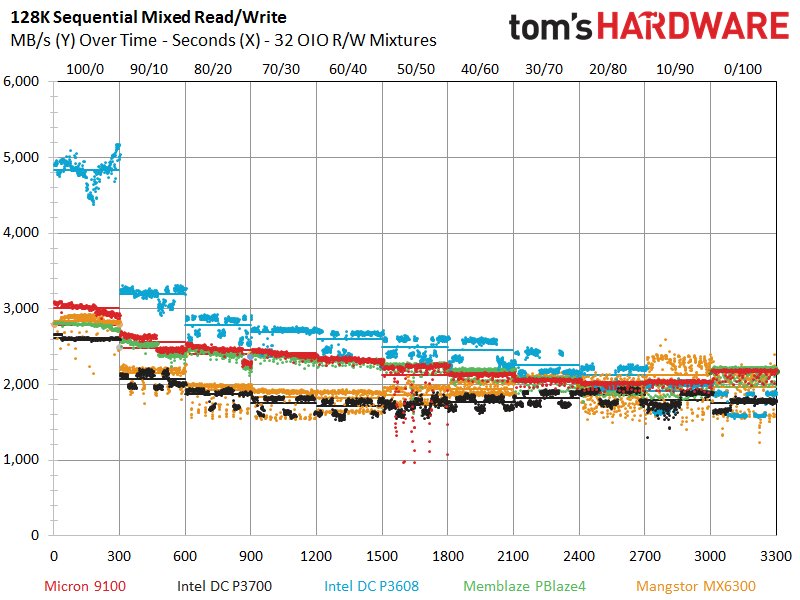

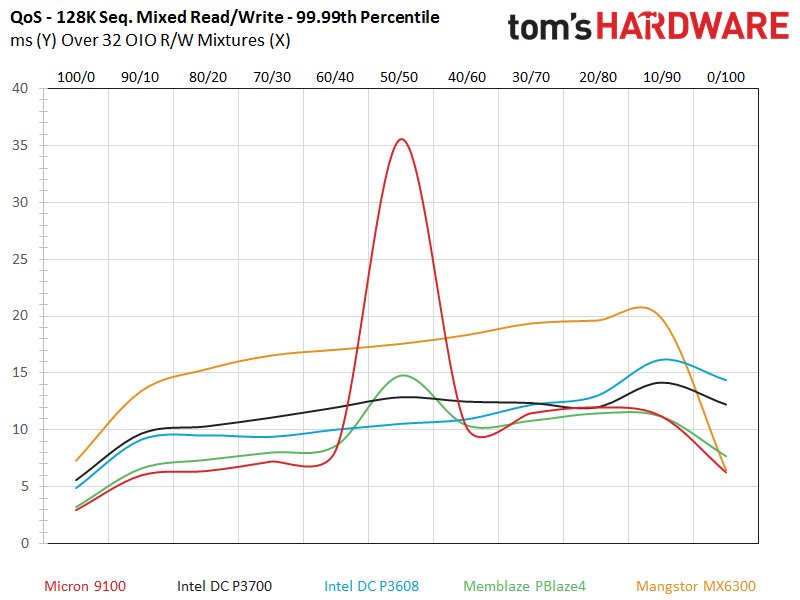

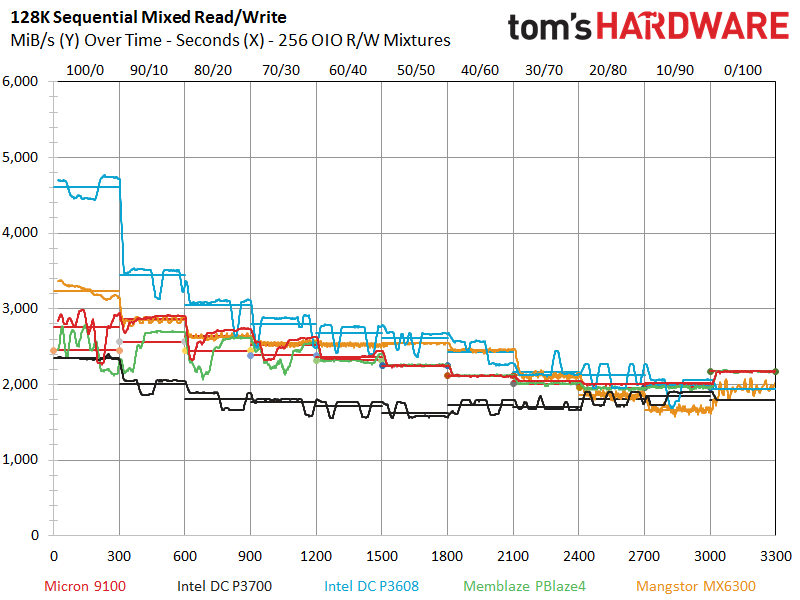

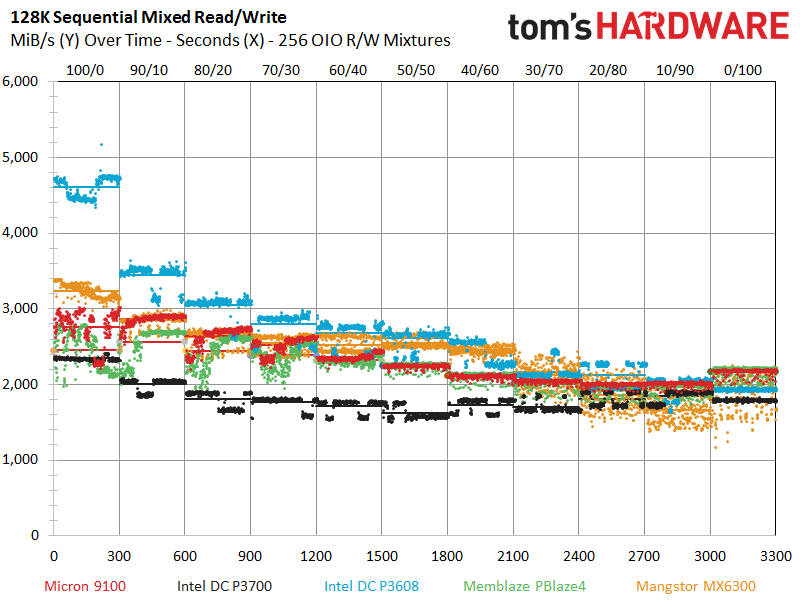

The Intel DC P3608 reveals its real purpose in life, which is to humiliate everyone else in sequential read speed tests. The DC P3608 peaks at nearly 5GB/s. It doesn't get much better than that, at least for the time being. The MX6300 is content to take second place with roughly 3.3GB/s, while the 9100 once again leads the single-ASIC pack with a more-than-respectable 3GB/s of read throughput. The DC P3608 dominates the latency and QoS testing due to its considerable performance advantage, but the 9100 takes the edge in the 32 OIO QoS test.

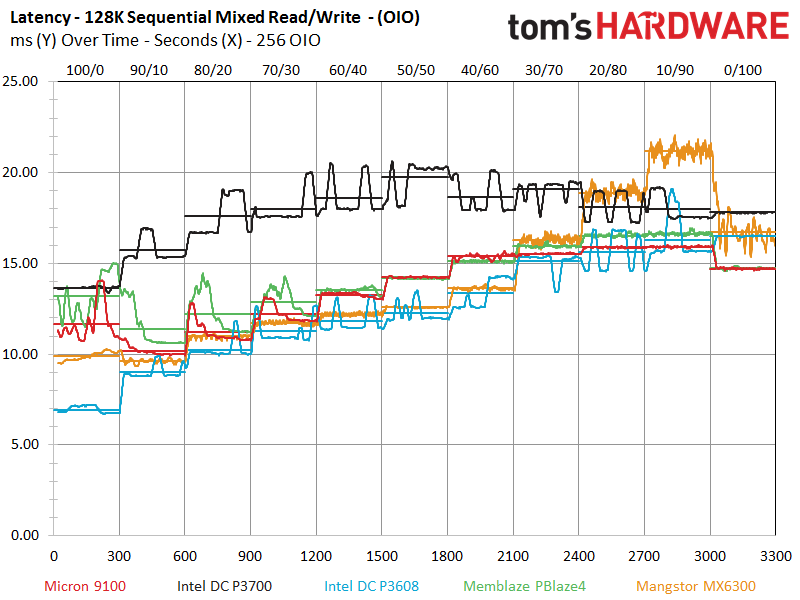

The DC P3608 looms over the rest of the test pool during the read-centric portions of the test on the left of the chart but experiences a precipitous decline as we add in more write activity. The Micron 9100 provides a nice tight performance profile as it cuts a clean swath across the chart, but we note some variability at the 50/50 read/write mix in the center of the graph. This problem manifests itself as a significant spike in the middle of the QoS measurements, which sullies an otherwise impressive round of tests for the Micron SSD. The problem is only present in the 32 OIO workloads.

We have encountered and reported this issue before. Several Microsemi-powered SSDs (but not all) have come to our lab with this same uncharacteristic variability during 50/50 mixed read/write sequential workloads. The root of the problem likely lies in a reference firmware that Microsemi provides to each company. The companies take the reference firmware and modify it to their respective needs. However, these firmware modifications are likely proprietary to each company involved, so the issue keeps reappearing in our lab.

We have reported the issue to the companies affected, and every company has corrected the issue before publication. We often encounter bugs, since being on the cutting edge means that we regularly get early silicon, but if the company remedies the problem, there is no reason to go about thumping one's chest; at that point it becomes irrelevant.

This Micron review is a bit time sensitive, but the company is aware of the issue and is working to correct it. We await new firmware and will append updated test results if necessary.


Paul Alcorn is the Editor-in-Chief for Tom's Hardware US. He also writes news and reviews on CPUs, storage, and enterprise hardware.


Flying-Q: If the flash packages are producing so much heat that they need such a massive heatsink, why is there only one? Surely the flash on the rear of the card would need heatsinking too; even just a flat plate would suffice?

Unolocogringo: It appears to me that the heatsink is more for the voltage converters and controller chip.

Paul Alcorn (replying to Flying-Q): This is a standard configuration, though there are a few SSDs that have rear plates. Thermal pads were more common with larger-lithography NAND (20nm, 25nm, etc.) because it generated more heat. Newer, smaller NAND, such as the 16nm here, draws less power and generates less heat. In fact, it was very common for old client SSDs to have a thermal pad on the NAND, whereas now they are relatively rare. I think that they may be relying upon reducing the heat enough on one side to help wick heat from the other side, but the heatsink is primarily for the controller and DRAM with the latest SSDs. Also, it may just be convenient to add additional thermal pads to the NAND to keep the spacing for the heatsink even across the board.