ATI Radeon HD 4890: Playing To Win Or Played Again?

The Relevance Of DirectX 10.1

The performance differences between ATI and Nvidia graphics cards are currently fairly tight and well-segmented—in other words, it’s easy for an enthusiast to hit a site like newegg.com with a budget in mind and, all else being equal, find the best card for the money. Case in point: you tell me you have $160, I’ll tell you to get a GeForce GTX 260 Core 216 and cash in on the rebates. You tell me you can spend $180, I could tell you to grab a Radeon HD 4870 with 1 GB.

But all else is not equal, so recommendations aren’t quite that cut and dried. Nvidia preaches the gospels of PhysX and CUDA, with an occasional verse about GeForce 3D Vision. ATI sings the hymns of DirectX 10.1 and Stream. Depending on which company you put your faith in, one of those two messages is going to sound a little sweeter.

Right now, the two are in full-scale conversion mode, trying to get everyone they can to pitch in their tithing for a little gaming salvation. Just as PhysX and CUDA are starting to take hold with high-profile titles enabling support, so too are software developers paying more attention to DirectX 10.1.

As a superset of DirectX 10, DirectX 10.1 includes a handful of quality-enhancing features that, in some cases, will run on DirectX 10 hardware, but at a performance hit. For instance, the Gather4 function fetches four samples (2x2) where a DirectX 10 part would only be able to fetch one. The result should be more realistic shadow maps and better performance. The Stalker: Clear Sky demo lets us test that theory with a toggled check-box to enable or disable DirectX 10.1.
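To make the Gather4 point concrete, here is a minimal CPU-side sketch in Python. It is a toy model, not HLSL or anything from Stalker: Clear Sky. The `CountingDepthMap` class, the function names, the bias value, and the sample ordering are all assumptions made for illustration. It filters a 2x2 block of shadow-map texels with percentage-closer filtering, once with four single fetches and once with one gathered fetch, and counts the fetches issued.

```python
from typing import List, Sequence, Tuple


class CountingDepthMap:
    """Toy shadow map that counts how many fetch operations were issued."""

    def __init__(self, texels: List[List[float]]) -> None:
        self.texels = texels
        self.fetches = 0

    def _clamped(self, x: int, y: int) -> float:
        # Clamp coordinates to the edge of the map (hypothetical addressing mode).
        h, w = len(self.texels), len(self.texels[0])
        return self.texels[min(max(y, 0), h - 1)][min(max(x, 0), w - 1)]

    def fetch_one(self, x: int, y: int) -> float:
        # One fetch returns one texel (the DirectX 10-style path in this model).
        self.fetches += 1
        return self._clamped(x, y)

    def gather4(self, x: int, y: int) -> Tuple[float, float, float, float]:
        # One fetch returns the whole 2x2 footprint (the Gather4-style path).
        # The ordering of the four values here is arbitrary.
        self.fetches += 1
        return (
            self._clamped(x, y),
            self._clamped(x + 1, y),
            self._clamped(x, y + 1),
            self._clamped(x + 1, y + 1),
        )


def shadow_factor(samples: Sequence[float], receiver_depth: float,
                  bias: float = 0.005) -> float:
    """Percentage-closer filtering: fraction of samples that leave the point lit."""
    lit = sum(1 for occluder in samples if receiver_depth - bias <= occluder)
    return lit / len(samples)


def pcf_single_fetches(dm: CountingDepthMap, x: int, y: int,
                       receiver_depth: float) -> float:
    samples = [dm.fetch_one(x + dx, y + dy) for dy in (0, 1) for dx in (0, 1)]
    return shadow_factor(samples, receiver_depth)


def pcf_gather(dm: CountingDepthMap, x: int, y: int,
               receiver_depth: float) -> float:
    return shadow_factor(dm.gather4(x, y), receiver_depth)
```

In this model both paths produce the same soft-shadow value; the gathered path simply gets there with a quarter of the fetches, which is the kind of saving that lets a filter take more samples for the same cost.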

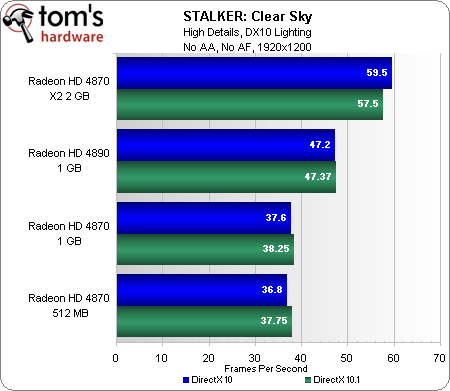

Our first test pits all of the Radeon HD 4800-series cards in this story against each other, with MSAA for alpha-tested objects disabled. At 1920x1200, performance is fairly similar across the board, with all test cases except the Radeon HD 4870 X2 demonstrating small gains by moving to DirectX 10.1.

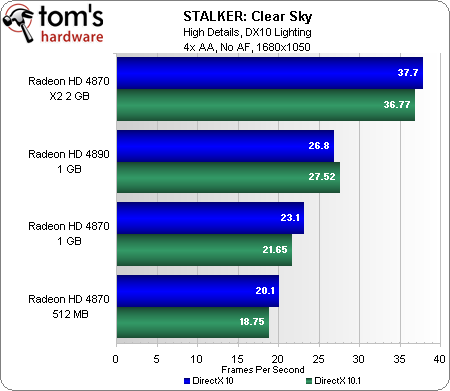

We then turned on 4x MSAA for alpha-tested objects and re-ran the numbers, this time at 1680x1050, in an attempt to maintain somewhat reasonable frame rates. This time, a majority of the test cases show DirectX 10.1 incurring a small performance hit. Is the performance tradeoff worthwhile? For that, we’ll need to make an image quality comparison from within the game itself.

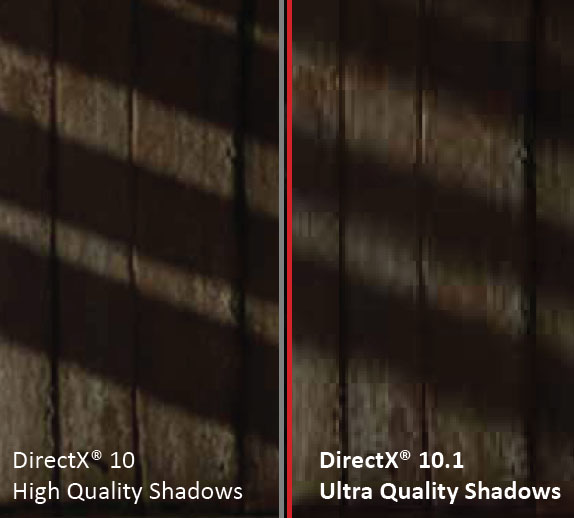

Up top you’ll find the screen capture provided by ATI, right up against a wall, demonstrating that the DirectX 10.1 shadows are softer and arguably more realistic. I ran all over the opening area of the game trying to find a clear example of the difference made by DirectX 10.1 shadows and just couldn’t come up with an indisputable best-case scenario. Even by reloading saved points, it was impossible to generate the exact same scene twice. Nevertheless, given the option of enabling DirectX 10.1 and not seeing a significant performance hit, you might as well turn the feature on.

With the Game Developers Conference recently past, ATI had a handful of DX 10.1 titles to discuss during its briefing, besides Stalker. Tom Clancy’s HAWX looks like a fun title with DX 10.1 screen space ambient occlusion and accelerated Gaussian shadows. There were two other lesser-known titles, plus the UNiGiNE game engine, on which several upcoming titles are purportedly based.

As of right now, DirectX 10.1 isn’t making a huge impact, but because it will become a subset of DirectX 11, you can expect the extra features being enabled right now to work moving forward in Windows 7. The same couldn’t be said for the disruptive shift from DirectX 9/XP to DirectX 10/Vista.

eklipz330 i usually don't bitch and moan about them not having enough test gpu's, but i'd really like to see that sapphire 2gb 4870 up there, seeing how its in the same price range as the 4890...

any of these cards would suffice for me, 1680*1050 does save you a pretty penny

ravenware http://firingsquad.com/hardware/ati_radeon_4890_nvidia_geforce_gtx_275/page4.asp

It seems to overclock well and outpaces the 275.

The stalker results seem odd from both review sites. But stalker is glitchy.

If priced right this should be a decent addition to the 4x series.

It holds its own against the 275 and in certain games the 285.

Perhaps sapphire will release a dual card.

The 4850x2 they released performed extremely well.

megamanx00 The 4850X2 is absent to compare to I see. Still nice to finally see a review of this thing. Nice gains over the 4870.

eklipz330 "Stalker: Clear Sky benchmarks are fairly new in our graphics card reviews, even if the game itself isn’t particularly fresh. Let us know what you think of this one in the comments section. At the very least, it’s a beautiful looking game."

any benchmark is welcome i suppose

too bad price goes up exponentially for minimal improvements... the 4890 will be about 50% more than the 4870

cangelini Both the 2 GB card and the 4850 X2 are exclusive to Sapphire, and neither has been sent over. Nevertheless, we'll be following up with SLI/CrossFire scores in the near future and I'll see if either of those two solutions might be lined up for that story.

eklipz330 cangelini, you are the man.

just thought i'd let you know. this article was very well written, and you said everything i was thinking including the pricing. too bad you have to go to the other article to refer to gtx275 comparisons. regardless of that, gj

cangelini Thanks Ek. Truth be told, both companies pulled their launches in, allowing about a week to get the testing/writing done. Usually that's pretty tight for one new launch. Two is a little rougher. But hopefully there was enough cross-linking between the pair to convey the right messages.

ifko_pifko I'll post it here as well as in the GTX275 review:

Summing all the framerates is just nonsense. ;-) The games with higher fps will weigh more than the others.
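The weighting objection can be sketched in a few lines of Python. The frame rates below are made-up numbers for two hypothetical cards and two hypothetical games, not results from this review, and the geometric mean of per-game ratios is just one common alternative to a raw sum, offered here as an assumption rather than anything the commenter proposed.

```python
import math
from typing import Dict


def summed_fps(results: Dict[str, float]) -> float:
    """Raw sum of frame rates: high-fps games dominate the total."""
    return sum(results.values())


def geometric_mean_ratio(card: Dict[str, float],
                         baseline: Dict[str, float]) -> float:
    """Geometric mean of per-game ratios: each game carries equal weight."""
    ratios = [card[game] / baseline[game] for game in baseline]
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))


# Hypothetical data: card_b gains 10% in the fast game, loses 25% in the slow one.
card_a = {"fast_game": 200.0, "slow_game": 20.0}
card_b = {"fast_game": 220.0, "slow_game": 15.0}

print(summed_fps(card_a), summed_fps(card_b))   # the sum favors card_b
print(geometric_mean_ratio(card_b, card_a))     # below 1.0, so card_a is ahead per game
```

With these numbers the summed score puts card_b ahead even though it falls from 20 to 15 fps in the game where frame rate matters most, while the per-game ratio puts card_a ahead.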